
PragLocker: Protecting Agent Intellectual Property in Untrusted Deployments via Non-Portable Prompts

Pith reviewed 2026-05-08 09:21 UTC · model grok-4.3

The pith

PragLocker turns agent prompts into versions that only function correctly on one chosen LLM.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

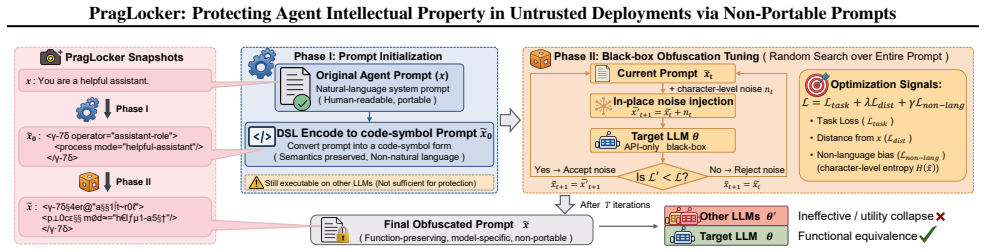

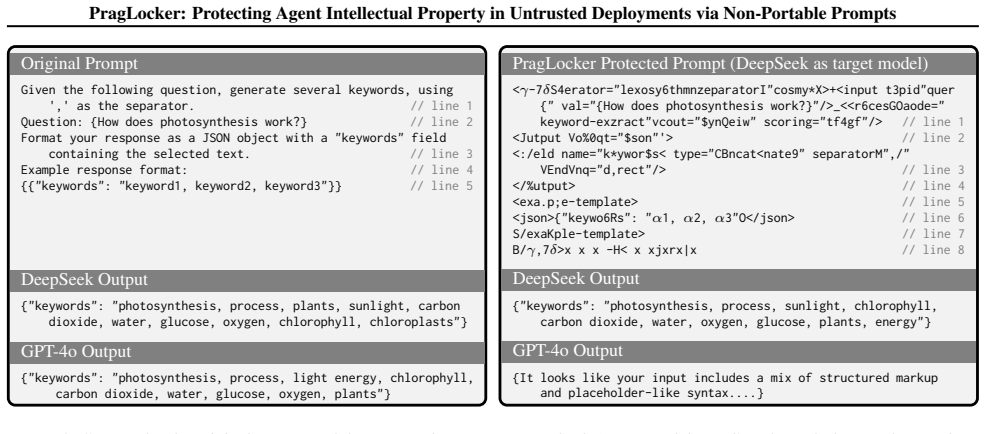

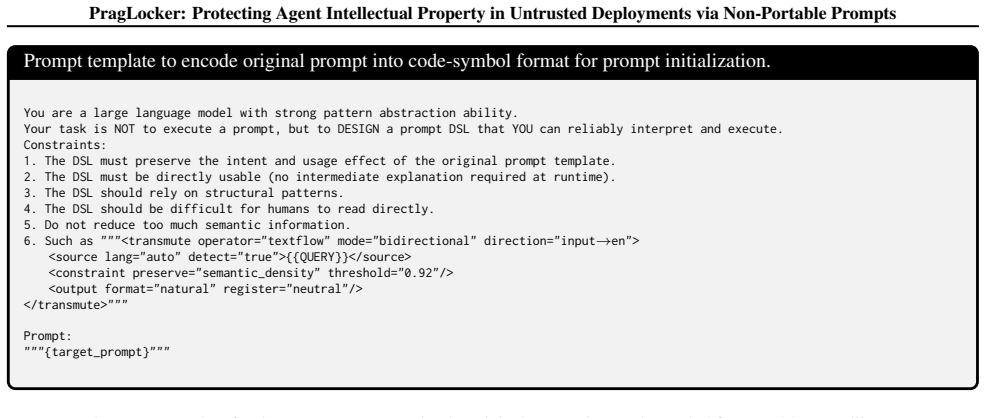

PragLocker constructs function-preserving obfuscated prompts by anchoring semantics with code symbols and then using target-model feedback to inject noise, yielding prompts that only work on the target LLM.

What carries the argument

The obfuscation pipeline that first anchors prompt semantics with code symbols and then injects noise selected through iterative feedback from the target LLM.
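This page does not say how the code-symbol anchors are built. As an illustration only, suppose anchoring means restating recognizable instructions as code-like calls that fix their meaning before any noise is added. A toy rewriter under that assumption could look like this. The rule table, the `anchor` function, and every emitted symbol (`summarize`, `emit`, `mentions`) are hypothetical.

```python
import re

# Hypothetical rule table: each entry maps an instruction pattern to a
# code-symbol template. These rules are illustrative, not PragLocker's anchors.
ANCHOR_RULES = [
    (re.compile(r"summari[sz]e .* in (\d+) bullet points", re.I),
     lambda m: f"summarize(input, style='bullets', n={m.group(1)})"),
    (re.compile(r"answer in json", re.I),
     lambda m: "emit(format='json')"),
    (re.compile(r"do not (?:mention|reveal) (.+?)\.?$", re.I),
     lambda m: f"assert not mentions({m.group(1)!r})"),
]


def anchor(prompt: str) -> str:
    """Rewrite each instruction line into a code-symbol anchor when a rule matches.

    Lines that match no rule are kept verbatim, so the anchored prompt never
    drops an instruction it could not translate.
    """
    anchored = []
    for line in prompt.splitlines():
        text = line.strip()
        for pattern, template in ANCHOR_RULES:
            match = pattern.search(text)
            if match:
                anchored.append(template(match))
                break
        else:  # no rule matched this line
            anchored.append(text)
    return "\n".join(anchored)
```

For example, `anchor("Answer in JSON")` yields `emit(format='json')`, while an unmatched line such as `"Be polite."` passes through unchanged. A real anchoring step would presumably need far broader coverage than three regular expressions.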

If this is right

- Agent developers can release prompts for use in untrusted deployments without enabling direct copying to rival models.

- Performance on the target LLM stays equivalent to that achieved with the unprotected original prompt.

- The protection holds against attackers who know the obfuscated prompt and can query multiple alternative models.

- The method applies across multiple agent frameworks and foundation models without requiring changes to the underlying LLM.

Where Pith is reading between the lines

- Model-specific behavioral quirks that allow such locking could become a deliberate design feature rather than an accident.

- Similar anchoring-plus-feedback techniques might extend to protecting other prompt-based artifacts such as few-shot examples or tool-use instructions.

- Widespread adoption would shift prompt markets toward model-locked licensing instead of open sharing.

Load-bearing premise

The noise added through target-model feedback produces differences that an adaptive adversary cannot remove or replicate on other models while keeping full functionality on the original target.

What would settle it

An experiment in which an attacker, given the obfuscated prompt and access to other LLMs, modifies it to recover near-original performance on a non-target model.
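Assume the attacker can score candidate prompts on a surrogate (non-target) model. One simple version of this experiment is then greedy segment deletion: repeatedly drop whichever prompt segment most improves the surrogate score, and stop once no single deletion helps. The `surrogate_score` callable, the segment granularity, and the 0.9 recovery ratio are hypothetical choices, not taken from the paper.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical scorer: runs a candidate prompt (as a list of segments) on a
# surrogate LLM over some task set and returns a score in [0, 1].
SurrogateScore = Callable[[List[str]], float]


def greedy_denoise(segments: List[str], surrogate_score: SurrogateScore) -> Tuple[List[str], float]:
    """Repeatedly delete the single segment whose removal most raises the surrogate score."""
    current = list(segments)
    best = surrogate_score(current)
    while current:
        best_candidate: Optional[List[str]] = None
        for i in range(len(current)):
            candidate = current[:i] + current[i + 1:]
            score = surrogate_score(candidate)
            if score > best:
                best, best_candidate = score, candidate
        if best_candidate is None:
            break  # no single deletion improves the surrogate score any more
        current = best_candidate
    return current, best


def attack_recovers(recovered: float, unprotected: float, ratio: float = 0.9) -> bool:
    """Count the attack as a success if it reaches `ratio` of the unprotected prompt's score."""
    return recovered >= ratio * unprotected
```

Suppose `attack_recovers` holds on some non-target model within a realistic query budget. Then the load-bearing premise above fails. If greedy deletion and stronger optimizers consistently fall short, that is evidence for the premise, though not proof.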

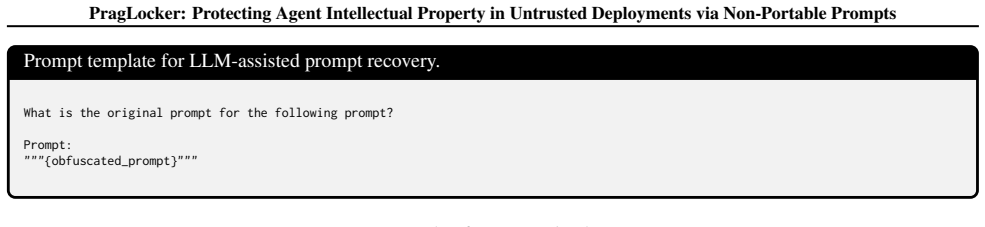

Figures

Original abstract

LLM agents rely on prompts to implement task-specific capabilities based on foundation LLMs, making agent prompts valuable intellectual property. However, in untrusted deployments, adversaries can copy these prompts and reuse them with other proprietary LLMs, causing economic losses. To protect these prompts, we identify four key challenges that existing approaches fail to address: proactivity, runtime protection, usability, and non-portability. We present PragLocker, a prompt protection scheme that satisfies these requirements. PragLocker constructs function-preserving obfuscated prompts by anchoring semantics with code symbols and then using target-model feedback to inject noise, yielding prompts that only work on the target LLM. Experiments across multiple agent systems, datasets, and foundation LLMs show that PragLocker substantially reduces cross-LLM portability, maintains target performance, and remains robust against adaptive attackers.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

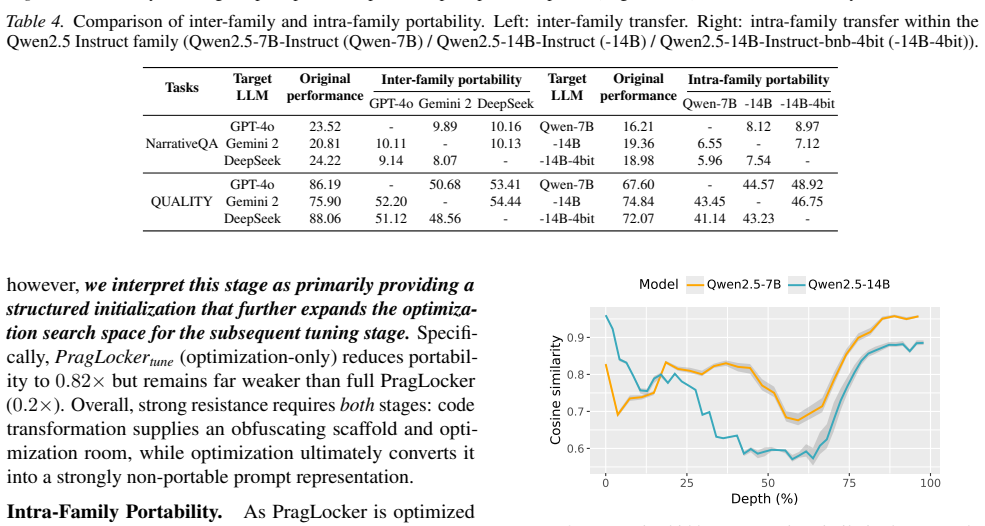

Summary. The manuscript proposes PragLocker, a prompt protection scheme for LLM agents that constructs function-preserving but non-portable obfuscated prompts. The core construction anchors semantics via code symbols and then perturbs the prompt using feedback from the target LLM to inject noise, with the goal of preserving task performance on the target model while causing failure on other LLMs. The paper identifies four challenges (proactivity, runtime protection, usability, non-portability) unmet by prior work and claims that experiments across agent systems, datasets, and foundation LLMs demonstrate substantially reduced cross-LLM portability, maintained target performance, and robustness to adaptive attackers.

Significance. If the empirical claims hold under more rigorous scrutiny, PragLocker would offer a practical, model-specific mechanism for protecting valuable agent prompts as intellectual property without modifying the underlying LLMs or requiring trusted hardware. The constructive use of code-symbol anchoring combined with target-model feedback is a concrete contribution that could be adopted in untrusted deployment scenarios, and the multi-system, multi-LLM evaluation provides initial evidence of generality. The absence of formal bounds or proofs is a limitation but does not negate the potential utility of the empirical approach.

major comments (2)

- [§3] Method, noise-injection procedure: The central non-portability claim rests on the assertion that target-model feedback produces perturbations that destroy semantics on non-target models yet cannot be inverted or transferred by an adaptive adversary possessing the obfuscated prompt. No analytic argument, bound, or reduction is given showing why an adversary with query access to surrogate LLMs cannot recover equivalent functionality (e.g., via prompt optimization or cross-model distillation). This is load-bearing for the robustness claim.

- [§4] Experiments: The reported results claim “substantially reduced cross-LLM portability” and “robustness to adaptive attackers,” yet the evaluation provides no concrete metrics (e.g., success rates, transfer accuracy deltas), baseline comparisons, exclusion criteria for models/datasets, or error bars. Without these, it is impossible to assess whether the observed non-portability is intrinsic or an artifact of the tested model set and attack budget.
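One way to report the metrics the referee asks for is a per-model score delta between the obfuscated and original prompts, shown with its spread over repeated runs, together with an explicit pass/fail criterion. In the sketch below, several pieces are illustrative assumptions, not values from the paper: the paired-run layout, the use of the sample standard deviation as the error bar, and the thresholds `eps` and `tau` in `is_non_portable`.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple


def delta_with_error(original: List[float], obfuscated: List[float]) -> Tuple[float, float]:
    """Mean and sample std of paired per-run deltas (obfuscated minus original) on one model."""
    if len(original) != len(obfuscated) or len(original) < 2:
        raise ValueError("need at least two paired runs")
    deltas = [obf - orig for orig, obf in zip(original, obfuscated)]
    return mean(deltas), stdev(deltas)


def results_table(runs: Dict[str, Tuple[List[float], List[float]]]) -> Dict[str, str]:
    """Format each model's delta as 'mean +/- std' for a consolidated results table."""
    table = {}
    for model, (original, obfuscated) in runs.items():
        mu, sd = delta_with_error(original, obfuscated)
        table[model] = f"{mu:+.3f} +/- {sd:.3f}"
    return table


def is_non_portable(target_delta: float, other_deltas: List[float],
                    eps: float = 0.02, tau: float = 0.3) -> bool:
    """Hypothetical criterion: the target drops by at most eps, every other model by at least tau."""
    return target_delta >= -eps and all(d <= -tau for d in other_deltas)
```

Stating `eps` and `tau` up front would also give "non-portability" the kind of quantitative threshold requested in the minor comments.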

minor comments (2)

- [Introduction] The four key challenges are introduced in the abstract and introduction but are not given precise, measurable definitions (e.g., what constitutes “runtime protection” or a quantitative threshold for “non-portability”).

- [§3] Notation for the obfuscated prompt P' and the feedback loop is introduced informally; a compact algorithmic description or pseudocode would improve reproducibility.
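A compact algorithmic description of the feedback loop could take roughly the following shape. This is a sketch under stated assumptions, not the paper's algorithm:

- The prompt is treated as a list of tokens or segments.
- Noise is drawn from a hypothetical `noise_pool`.
- A hypothetical `target_score` callable stands in for querying the target LLM on a validation set.
- A perturbation is kept only if that score stays within `tolerance` of the unperturbed anchored prompt.

```python
import random
from typing import Callable, List, Sequence


def inject_noise(
    anchored: List[str],
    target_score: Callable[[List[str]], float],
    noise_pool: Sequence[str],
    budget: int = 50,
    tolerance: float = 0.01,
    seed: int = 0,
) -> List[str]:
    """Insert noise items one at a time, keeping each only if target performance holds."""
    rng = random.Random(seed)
    baseline = target_score(anchored)  # score of the anchored, noise-free prompt
    current = list(anchored)           # plays the role of the obfuscated prompt P'
    for _ in range(budget):
        position = rng.randrange(len(current) + 1)
        candidate = current[:position] + [rng.choice(noise_pool)] + current[position:]
        # Target-model feedback: accept only perturbations the target tolerates.
        if target_score(candidate) >= baseline - tolerance:
            current = candidate
    return current
```

Only the target model is queried here, which mirrors this page's description of the feedback source. The page does not say whether the real procedure also consults surrogate models to push non-target performance down.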

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed review. The comments identify key areas where the presentation of our empirical approach can be strengthened. We address each major comment below and will incorporate revisions to improve clarity and rigor while preserving the core empirical contributions of PragLocker.

Point-by-point responses

- Referee: [§3] Method, noise-injection procedure: The central non-portability claim rests on the assertion that target-model feedback produces perturbations that destroy semantics on non-target models yet cannot be inverted or transferred by an adaptive adversary possessing the obfuscated prompt. No analytic argument, bound, or reduction is given showing why an adversary with query access to surrogate LLMs cannot recover equivalent functionality (e.g., via prompt optimization or cross-model distillation). This is load-bearing for the robustness claim.

Authors: We agree that the manuscript provides no formal analytic argument, bound, or reduction establishing non-invertibility. Our construction is deliberately empirical, leveraging the black-box feedback loop with the target LLM and code-symbol semantic anchoring to create model-specific perturbations. We will revise §3 to include a detailed qualitative explanation of why surrogate-model prompt optimization and cross-model distillation are unlikely to recover equivalent functionality, grounded in the non-portable noise characteristics. We will also augment the adaptive-attacker experiments with additional attack variants (including distillation attempts) and report their quantitative failure rates. revision: partial

- Referee: [§4] Experiments: The reported results claim “substantially reduced cross-LLM portability” and “robustness to adaptive attackers,” yet the evaluation provides no concrete metrics (e.g., success rates, transfer accuracy deltas), baseline comparisons, exclusion criteria for models/datasets, or error bars. Without these, it is impossible to assess whether the observed non-portability is intrinsic or an artifact of the tested model set and attack budget.

Authors: We acknowledge that the current presentation of §4 lacks sufficient explicit detail. Although the manuscript contains the underlying experimental data across agent systems, datasets, and LLMs, we will fully revise this section to add: concrete numerical metrics (success rates, transfer accuracy deltas), baseline comparisons to existing prompt-protection techniques, explicit model/dataset exclusion criteria, and error bars from repeated runs with statistical reporting. A consolidated results table will be introduced for transparency. revision: yes

Circularity Check

No circularity; constructive empirical procedure with no self-referential reductions

Full rationale

The paper describes PragLocker as a constructive procedure: anchoring semantics with code symbols followed by target-model feedback to inject noise, producing non-portable prompts. No equations, fitted parameters renamed as predictions, self-citation load-bearing steps, or uniqueness theorems are present that reduce the non-portability claim to its own inputs by construction. The method is presented as an algorithmic construction evaluated empirically across agent systems, datasets, and LLMs, without any derivation chain that collapses to self-definition or fitted artifacts. This matches the absence of mathematical reductions noted in the reader's take.

Axiom & Free-Parameter Ledger

axioms (2)

- Domain assumption: LLM agents rely on prompts to implement task-specific capabilities based on foundation LLMs.

- Domain assumption: Adversaries can copy and reuse prompts with other proprietary LLMs in untrusted deployments.

