Recognition: 2 theorem links

· Lean TheoremCARMEN: CORDIC-Accelerated Resource-Efficient Multi-Precision Inference Engine for Deep Learning

Pith reviewed 2026-05-11 00:47 UTC · model grok-4.3

The pith

CORDIC iteration depth allows runtime switching between approximate and precise modes in a multi-precision deep learning inference engine.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that CORDIC iteration depth directly controls both accuracy and computational cost, so a single iterative MAC unit can be switched between approximate and accurate execution modes without any hardware modification or model retraining. This adaptive behavior is packaged into a time-multiplexed multi-precision vector engine that improves hardware utilization and delivers measurable reductions in cycles and power for deep learning inference.

What carries the argument

The iterative CORDIC-based multiply-accumulate unit, where the number of shift-and-add steps sets both numerical accuracy and the number of clock cycles per operation.

If this is right

- Up to 33 percent fewer computation cycles per MAC stage.

- 21 percent power savings per MAC stage.

- A 256-PE configuration reaches 4.83 TOPS per square millimeter compute density.

- Energy efficiency reaches 11.67 TOPS per watt.

- FPGA prototype runs real-time object detection at 154.6 ms latency while consuming 0.43 W.

Where Pith is reading between the lines

- The same variable-iteration principle could let future edge chips scale power draw to match the difficulty of the current inference task.

- Extending variable-depth CORDIC to other vector operations might further reduce energy use in battery-powered AI devices.

- Designers of general-purpose accelerators could adopt similar runtime accuracy knobs to avoid building separate low-precision and high-precision datapaths.

Load-bearing premise

That lowering the CORDIC iteration count still leaves enough numerical accuracy for typical deep learning inference tasks without model retraining or added error-correction circuits.

What would settle it

Run a standard image-classification network on the fabricated chip using reduced CORDIC iterations and compare top-1 accuracy against a full-precision software reference on the same test set.

Figures

read the original abstract

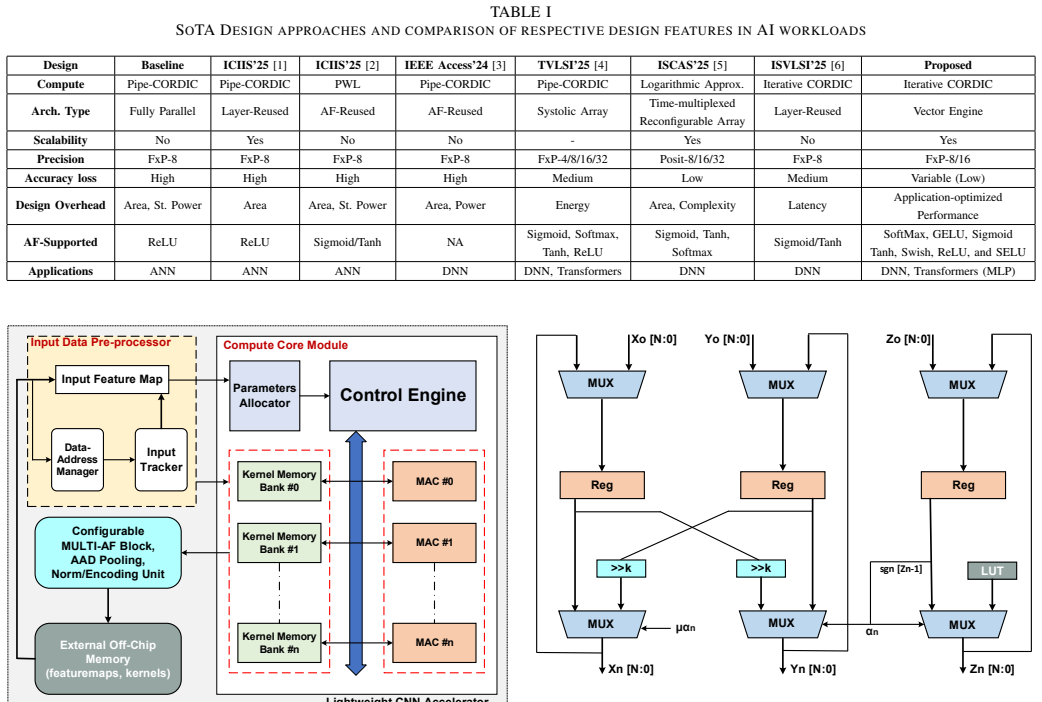

This paper presents CARMEN, a runtime-adaptive, CORDIC-accelerated multi-precision vector engine for resource-efficient deep learning inference. The key insight is that CORDIC iteration depth directly governs computational accuracy, enabling dynamic switching between approximate and accurate execution modes without hardware modification. The architecture integrates a low-resource iterative CORDIC-based MAC unit with a time-multiplexed multi-activation function block, supporting flexible 8/16-bit precision and high hardware utilization. ASIC implementation in 28 nm CMOS achieves up to 33% reduction in computation cycles and 21% power savings per MAC stage; a 256-PE configuration delivers 4.83 TOPS/mm2 compute density and 11.67 TOPS/W energy efficiency. FPGA deployment on PynqZ2 validates 154.6 ms latency at 0.43 W for real-time object detection.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents CARMEN, a runtime-adaptive CORDIC-accelerated multi-precision vector engine for deep learning inference. The core idea is that CORDIC iteration depth can be varied dynamically to trade accuracy for efficiency without hardware changes, integrating iterative CORDIC MAC units with time-multiplexed activation functions. ASIC results in 28 nm CMOS report up to 33% reduction in computation cycles and 21% power savings per MAC, with a 256-PE design achieving 4.83 TOPS/mm² density and 11.67 TOPS/W efficiency; FPGA deployment on PynqZ2 shows 154.6 ms latency at 0.43 W for object detection.

Significance. If the accuracy assumption holds, the approach offers a hardware-efficient mechanism for multi-precision inference by exploiting the iterative nature of CORDIC for runtime adaptation. The reported ASIC and FPGA metrics indicate competitive resource utilization and energy efficiency for edge accelerators, with potential applicability to resource-constrained DL deployments.

major comments (2)

- [Abstract] Abstract and architecture description: the headline efficiency claims (33% cycle reduction, 21% power savings per MAC, 4.83 TOPS/mm², 11.67 TOPS/W) rest on the unverified premise that runtime-varying CORDIC iteration depth preserves end-to-end inference accuracy comparable to fixed 8/16-bit baselines. No per-layer error bounds, iteration-depth histograms during inference, or measured top-1/top-5 accuracy on standard models (ResNet, MobileNet, etc.) are supplied, so the central resource-saving claims cannot be evaluated.

- [Architecture] The assumption that variable-iteration CORDIC MACs require no model retraining or error-correction hardware is load-bearing for all performance numbers, yet the manuscript provides neither analytical error propagation analysis across layers nor empirical validation that accumulated approximation error remains tolerable in deep networks.

minor comments (2)

- [Abstract] The abstract states 'up to' savings without specifying the exact baseline configurations, operating points, or conditions under which the 33% cycle and 21% power figures were obtained.

- [FPGA Results] FPGA latency and power numbers are given for object detection but without model name, input resolution, or comparison to a fixed-precision reference implementation on the same platform.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We acknowledge the need for stronger validation of accuracy preservation under variable CORDIC iteration depths and will incorporate the requested analyses and measurements in the revised manuscript.

read point-by-point responses

-

Referee: [Abstract] Abstract and architecture description: the headline efficiency claims (33% cycle reduction, 21% power savings per MAC, 4.83 TOPS/mm², 11.67 TOPS/W) rest on the unverified premise that runtime-varying CORDIC iteration depth preserves end-to-end inference accuracy comparable to fixed 8/16-bit baselines. No per-layer error bounds, iteration-depth histograms during inference, or measured top-1/top-5 accuracy on standard models (ResNet, MobileNet, etc.) are supplied, so the central resource-saving claims cannot be evaluated.

Authors: We agree that the current manuscript does not supply the requested end-to-end accuracy data or error bounds. The efficiency numbers are obtained from post-layout ASIC measurements of the hardware engine itself; the underlying premise is that CORDIC iteration count can be chosen at runtime to meet a target precision. In the revision we will add (i) analytical per-layer error bounds based on the known CORDIC approximation formula, (ii) iteration-depth histograms collected during inference, and (iii) top-1/top-5 accuracy results for ResNet-50 and MobileNet on ImageNet under the variable-precision modes, allowing direct comparison with fixed 8/16-bit baselines. revision: yes

-

Referee: [Architecture] The assumption that variable-iteration CORDIC MACs require no model retraining or error-correction hardware is load-bearing for all performance numbers, yet the manuscript provides neither analytical error propagation analysis across layers nor empirical validation that accumulated approximation error remains tolerable in deep networks.

Authors: The design intentionally avoids retraining or extra correction hardware by treating iteration depth as a controllable runtime parameter whose error reduction is deterministic. We recognize, however, that an explicit propagation analysis and network-level validation are absent. The revised manuscript will include a dedicated error-analysis section with (a) a closed-form model of how per-operation CORDIC errors accumulate through successive layers and (b) empirical simulation results on representative deep networks confirming that the accumulated error stays within acceptable inference tolerances when iteration depths are selected appropriately. revision: yes

Circularity Check

No circularity: performance metrics derive from ASIC/FPGA measurements, not self-referential equations

full rationale

The paper presents a CORDIC-based hardware architecture for multi-precision DL inference and supports its claims (33% cycle reduction, 21% power savings, 4.83 TOPS/mm², 11.67 TOPS/W) exclusively with post-synthesis ASIC results in 28 nm CMOS and FPGA benchmarks on PynqZ2. No mathematical derivation chain, fitted parameters renamed as predictions, or load-bearing self-citations appear in the provided text. The architecture description and efficiency numbers are grounded in physical implementation data rather than any equation that reduces to its own inputs by construction. This is the expected non-finding for an engineering implementation paper whose central results are externally falsifiable via synthesis tools and measurement.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption CORDIC iteration count directly and monotonically controls computational accuracy for MAC operations

- domain assumption Time-multiplexed activation functions incur negligible overhead relative to the MAC savings

invented entities (1)

-

CARMEN architecture

no independent evidence

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

The key insight is that CORDIC iteration depth directly governs computational accuracy, enabling dynamic switching between approximate and accurate execution modes... iterative CORDIC-based MAC unit... up to 33% reduction in computation cycles and 21% power savings per MAC stage

-

IndisputableMonolith/Foundation/AlphaCoordinateFixation.leancostAlphaLog_high_calibrated_iff unclear?

unclearRelation between the paper passage and the cited Recognition theorem.

unified CORDIC algorithm... hyperbolic rotation mode to compute sigmoid and tanh

What do these tags mean?

- matches

- The paper's claim is directly supported by a theorem in the formal canon.

- supports

- The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends

- The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses

- The paper appears to rely on the theorem as machinery.

- contradicts

- The paper's claim conflicts with a theorem or certificate in the canon.

- unclear

- Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.

Reference graph

Works this paper leans on

-

[1]

HYDRA: Hybrid data multiplexing and run-time layer configurable dnn accelerator,

S. Kumar, K. Gupta, I. S. Dasanayake, M. Lokhande, and S. K. Vishvakarma, “HYDRA: Hybrid data multiplexing and run-time layer configurable dnn accelerator,” inProceedings of the 19th International Conference on Industrial and Information Systems (ICIIS), (Sri Lanka), Dec. 2025

2025

-

[2]

Data multiplexed and hardware reused architecture for dnn accelerators,

G. Raut, A. Biasizzo, N. Dhakad, N. Gupta, G. Papa, and S. K. Vishvakarma, “Data multiplexed and hardware reused architecture for dnn accelerators,”Neurocomputing, vol. 486, pp. 147–159, May 2022

2022

-

[3]

QuantMAC: Enhancing Hardware Performance in DNNs With Quantize Enabled Multiply-Accumulate Unit,

N. Ashar, G. Raut, V . Treevedi, S. K. Vishvakarma, and A. Ku- mar, “QuantMAC: Enhancing Hardware Performance in DNNs With Quantize Enabled Multiply-Accumulate Unit,”IEEE Access, vol. 12, pp. 43600–43614, 2024

2024

-

[4]

Flex-PE: Flexible and SIMD Multiprecision PE for AI Workloads,

M. Lokhande, G. Raut, and S. K. Vishvakarma, “Flex-PE: Flexible and SIMD Multiprecision PE for AI Workloads,”IEEE Trans. Very Large Scale Integr. (VLSI) Syst., vol. 33, pp. 1610–1623, June 2025

2025

-

[5]

LPRE: Logarithmic Posit-enabled Reconfigurable edge-AI Engine,

O. Kokane, M. Lokhande, G. Raut, A. Teman, and S. K. Vishvakarma, “LPRE: Logarithmic Posit-enabled Reconfigurable edge-AI Engine,” IEEE International Symposium on Circuits and Systems, 2025

2025

-

[6]

Retrospective: A CORDIC-Based Configurable Activation Function for NN Applications,

O. Kokane, G. Raut, S. Ullah, M. Lokhande, A. Teman, A. Kumar, and S. K. Vishvakarma, “Retrospective: A CORDIC-Based Configurable Activation Function for NN Applications,” inIEEE Computer Society Annual Symposium on VLSI (ISVLSI), pp. 1–6, 2025

2025

-

[7]

Efficient processing of deep neural networks: A tutorial and survey,

V . Sze, Y .-H. Chen, T.-J. Yang, and J. S. Emer, “Efficient processing of deep neural networks: A tutorial and survey,”Proceedings of the IEEE, vol. 105, no. 12, pp. 2295–2329, 2017

2017

-

[8]

Raman: Resource- efficient approximate posit processing for algorithm-hardware co- design,

M. F. Khan, M. Lokhande, and S. K. Vishvakarma, “Raman: Resource- efficient approximate posit processing for algorithm-hardware co- design,” in2026 39th International Conference on VLSI Design & 25th International Conference on Embedded Systems (VLSID), pp. 43–48, 2026

2026

-

[9]

A Unified Parallel CORDIC- Based Hardware Architecture for LSTM Network Acceleration,

N. A. Mohamed and J. R. Cavallaro, “A Unified Parallel CORDIC- Based Hardware Architecture for LSTM Network Acceleration,”IEEE Transactions on Computers, vol. 72, pp. 2752–2766, Oct. 2023

2023

-

[10]

TPU v4: An Optically Reconfigurable Supercomputer for Machine Learning with Hardware Support for Embeddings,

N. Jouppi, G. Kurian, S. Li, P. Ma, R. Nagarajan,et al., “TPU v4: An Optically Reconfigurable Supercomputer for Machine Learning with Hardware Support for Embeddings,” inProceedings of the 50th Annual International Symposium on Computer Architecture, ISCA ’23, (New York, NY , USA), Association for Computing Machinery, 2023

2023

-

[11]

QForce- RL: Quantized FPGA-Optimized Reinforcement Learning Compute En- gine,

A. Jha, T. Dewangan, M. Lokhande, and S. K. Vishvakarma, “QForce- RL: Quantized FPGA-Optimized Reinforcement Learning Compute En- gine,”29th International Symposium on VLSI Design and Test, July 2025

2025

-

[12]

Xr-npe: High-throughput mixed-precision simd neural processing en- gine for extended reality perception workloads,

T. Chaudhari, A. J, T. Dewangan, M. Lokhande, and S. K. Vishvakarma, “Xr-npe: High-throughput mixed-precision simd neural processing en- gine for extended reality perception workloads,” in2026 39th Interna- tional Conference on VLSI Design & 25th International Conference on Embedded Systems (VLSID), pp. 37–42, 2026

2026

-

[13]

Precision-aware On- device Learning and Adaptive Runtime-cONfigurable AI acceleration,

M. Lokhande, A. Jain, and S. K. Vishvakarma, “Precision-aware On- device Learning and Adaptive Runtime-cONfigurable AI acceleration,” IEEE International Symposium on VLSI Design and Test, Aug. 2025

2025

-

[14]

Designing Novel AAD Pooling in Hardware for a Convolutional Neural Network Ac- celerator,

K. Khalil, O. Eldash, A. Kumar, and M. Bayoumi, “Designing Novel AAD Pooling in Hardware for a Convolutional Neural Network Ac- celerator,”IEEE Trans. Very Large Scale Integr. (VLSI) Syst., vol. 30, pp. 303–314, Mar. 2022

2022

-

[15]

A Unified Algorithm for Elementary Functions,

J. S. Walther, “A Unified Algorithm for Elementary Functions,”in Proc. Spring Joint Comput. Conf., pp. 379–385, 1971

1971

-

[16]

RECON: Resource- Efficient CORDIC-Based Neuron Architecture,

G. Raut, S. Rai, S. K. Vishvakarma, and A. Kumar, “RECON: Resource- Efficient CORDIC-Based Neuron Architecture,”IEEE Open Journal of Circuits and Systems, vol. 2, pp. 170–181, 2021

2021

-

[17]

A Reconfig- urable Processing Element for Multiple-Precision Floating/Fixed-Point HPC,

B. Li, K. Li, J. Zhou, Y . Ren, W. Mao, H. Yu, and N. Wong, “A Reconfig- urable Processing Element for Multiple-Precision Floating/Fixed-Point HPC,”IEEE Trans. Circuits Syst. II, Exp. Briefs, vol. 71, pp. 1401–1405, Mar. 2024

2024

-

[18]

MSDF-Based MAC for Energy-Efficient Neural Networks,

S. M. Cherati, M. Barzegar, and L. Sousa, “MSDF-Based MAC for Energy-Efficient Neural Networks,”IEEE Trans. Very Large Scale Integr. (VLSI) Syst., pp. 1–12, 2025

2025

-

[19]

High-Performance Accurate and Approximate Multipliers for FPGA-Based Hardware Ac- celerators,

S. Ullah, S. Rehman, M. Shafique, and A. Kumar, “High-Performance Accurate and Approximate Multipliers for FPGA-Based Hardware Ac- celerators,”IEEE Trans. Comput.-Aided Design Integr. Circuits Syst., vol. 41, pp. 211–224, Feb. 2022

2022

-

[20]

Exploring Hardware Ac- tivation Function Design: CORDIC Architecture in Diverse Floating Formats,

M. Basavaraju, V . Rayapati, and M. Rao, “Exploring Hardware Ac- tivation Function Design: CORDIC Architecture in Diverse Floating Formats,” in25th International Symposium on Quality Electronic Design (ISQED), pp. 1–8, 2024

2024

-

[21]

Efficient Precision- Adjustable Architecture for Softmax Function in DL,

D. Zhu, S. Lu, M. Wang, J. Lin, and Z. Wang, “Efficient Precision- Adjustable Architecture for Softmax Function in DL,”IEEE Trans. Circuits Syst. II, Exp. Briefs, vol. 67, pp. 3382–3386, Dec. 2020

2020

-

[22]

Approximate Softmax Functions for Energy-Efficient DNNs,

K. Chen, Y . Gao, H. Waris, W. Liu, and F. Lombardi, “Approximate Softmax Functions for Energy-Efficient DNNs,”IEEE Trans. Very Large Scale Integr. (VLSI) Syst., vol. 31, pp. 4–16, Jan. 2023

2023

-

[23]

A Two-Stage Operand Trimming Approximate Logarithmic Multiplier,

R. Pilipovi ´c, P. Buli´c, and U. Lotri ˇc, “A Two-Stage Operand Trimming Approximate Logarithmic Multiplier,”IEEE Trans. Circuits Syst. I, Reg. Papers, vol. 68, pp. 2535–2545, June 2022

2022

-

[24]

Edge-Side Fine-Grained Sparse CNN Accelerator With Efficient Dynamic Pruning Scheme,

B. Wu, T. Yu, K. Chen, and W. Liu, “Edge-Side Fine-Grained Sparse CNN Accelerator With Efficient Dynamic Pruning Scheme,”IEEE Trans. Circuits Syst. I, Reg. Papers, vol. 71, pp. 1285–1298, Mar. 2024

2024

-

[25]

Dedicated FPGA Implementation of the Gaussian TinyYOLOv3 Accelerator,

S. Ki, J. Park, and H. Kim, “Dedicated FPGA Implementation of the Gaussian TinyYOLOv3 Accelerator,”IEEE Trans. Circuits Syst. II, Exp. Briefs, vol. 70, pp. 3882–3886, Oct. 2023

2023

-

[26]

A Real-Time Object Detection Processor With XNOR-based Variable-Precision Computing Unit,

W. Lee, K. Kim, W. Ahn, J. Kim, and D. Jeon, “A Real-Time Object Detection Processor With XNOR-based Variable-Precision Computing Unit,”IEEE Trans. Very Large Scale Integr. (VLSI) Syst., vol. 31, pp. 749–761, June 2023

2023

-

[27]

An Empirical Approach to Enhance Performance for Scalable CORDIC-Based DNNs,

G. Raut, S. Karkun, and S. K. Vishvakarma, “An Empirical Approach to Enhance Performance for Scalable CORDIC-Based DNNs,”ACM Trans. Reconfigurable Technol. Syst., vol. 16, June 2023

2023

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.