Recognition: 2 theorem links

BGM-IV: an AI-powered Bayesian generative modeling approach for instrumental variable analysis

Pith reviewed 2026-05-11 00:50 UTC · model grok-4.3

The pith

A Bayesian generative model with structured latent components enables accurate nonlinear instrumental variable estimation from high-dimensional covariates.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

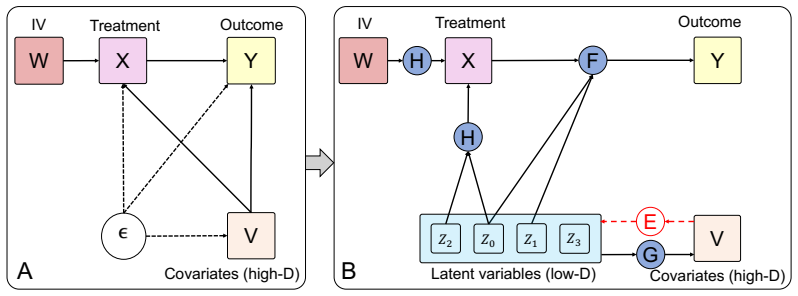

BGM-IV reframes nonlinear IV regression as posterior inference in a causally structured latent space. BGM-IV infers latent components that separately capture shared confounding structure, outcome-specific variation, treatment-specific variation, and covariate-only nuisance information. To account for endogeneity, BGM-IV replaces the confounded outcome likelihood with an IV-integrated pseudo-likelihood that averages over instrument-induced treatment values within the latent model. Across various benchmark datasets, BGM-IV remains competitive in the classical low-dimensional regime and performs best in high-dimensional covariate regimes.
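The averaging over instrument-induced treatment values described above can be approximated by Monte Carlo. Draw several treatments from the treatment model given the instrument and the latent. Evaluate the outcome density at each draw, then take a numerically stable log-mean-exp. The sketch below is minimal and assumes Gaussian treatment and outcome models with user-supplied mean functions. The names `g` (treatment mean given instrument and latent) and `f` (outcome mean given treatment and latent), the noise scales `sd_x` and `sd_y`, and `n_draws` are hypothetical choices for illustration. They are not BGM-IV's actual model specification.

```python
import numpy as np


def gaussian_logpdf(y, mean, sd):
    """Elementwise log-density of N(mean, sd**2) evaluated at y."""
    return -0.5 * np.log(2.0 * np.pi * sd**2) - 0.5 * ((y - mean) / sd) ** 2


def log_mean_exp(a, axis):
    """Numerically stable log(mean(exp(a))) along `axis`."""
    m = np.max(a, axis=axis, keepdims=True)
    return np.squeeze(m, axis=axis) + np.log(np.mean(np.exp(a - m), axis=axis))


def iv_integrated_loglik(y, z, c, f, g, sd_x, sd_y, n_draws=200, rng=None):
    """Per-unit Monte Carlo estimate of
    log E_{x ~ N(g(z, c), sd_x^2)} [ N(y; f(x, c), sd_y^2) ].

    Hypothetical setup (not the paper's specification): `c` holds one scalar
    latent value per unit, `g` maps (instrument, latent) to the treatment
    mean, and `f` maps (treatment, latent) to the outcome mean. The observed
    treatment is deliberately not used. The outcome density is averaged over
    instrument-induced treatment draws instead.
    """
    rng = np.random.default_rng() if rng is None else rng
    n = len(y)
    # Instrument-induced treatment draws, shape (n, n_draws).
    x_draws = g(z, c)[:, None] + sd_x * rng.standard_normal((n, n_draws))
    # Outcome log-density at every draw. The latent is broadcast across draws.
    logp = gaussian_logpdf(y[:, None], f(x_draws, c[:, None]), sd_y)
    # Average densities (not log-densities) over draws, in log space.
    return log_mean_exp(logp, axis=1)
```

As `sd_x` shrinks toward zero, the draws collapse to `g(z, c)`. The estimate then reduces to the ordinary outcome log-likelihood at that point. For a finite number of draws, the log of a Monte Carlo mean is biased downward by Jensen's inequality. Increasing `n_draws` reduces both this bias and the variance, at a cost linear in `n_draws`.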

What carries the argument

The structured latent generative model whose components separately encode confounding, outcome, treatment, and nuisance variation, together with the IV-integrated pseudo-likelihood that averages over instrument-induced treatments to correct for endogeneity during posterior inference.
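One way to read the four-way separation is as a factorization in which each latent block feeds only the observed variables its name suggests. The following form is an assumption written for illustration, not the paper's specification. Here $z$ is the instrument, $v$ the covariates, $x$ the treatment, and $y$ the outcome. The latent $\ell = (\ell_c, \ell_y, \ell_x, \ell_v)$ collects the shared-confounding, outcome-specific, treatment-specific, and nuisance blocks.

$$
p(v, x, y, \ell \mid z) = p(\ell_c)\,p(\ell_y)\,p(\ell_x)\,p(\ell_v)\;p(v \mid \ell_c, \ell_y, \ell_x, \ell_v)\;p(x \mid z, \ell_c, \ell_x)\;p(y \mid x, \ell_c, \ell_y)
$$

Under this reading, the IV-integrated pseudo-likelihood would replace the last factor, evaluated at the observed treatment, with its average over treatments drawn from the instrument-driven treatment model:

$$
\tilde p(y \mid z, \ell) = \int p(y \mid \tilde x, \ell_c, \ell_y)\, p(\tilde x \mid z, \ell_c, \ell_x)\, d\tilde x
$$

In the factorization, $\ell_y$ enters only the covariate and outcome factors, and $\ell_x$ enters only the covariate and treatment factors. This is what the labels "outcome-specific" and "treatment-specific" would mean.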

If this is right

- Nonlinear IV estimation becomes feasible without directly learning the causal map in the raw high-dimensional feature space.

- Endogeneity is handled inside a single generative model rather than through separate two-stage or moment-based procedures.

- The same latent separation that isolates confounding also supports competitive performance on standard low-dimensional IV tasks.

- Generative modeling supplies a principled route to causal estimation when the causal signal is embedded in rich covariate representations.

Where Pith is reading between the lines

- The explicit separation of latent components may make the sources of estimated causal effects easier to inspect than in black-box representation learners.

- The same generative structure could be tested on problems with multiple weak instruments or with continuous instruments.

- Replacing the current inference procedure with more scalable variational methods would test whether the performance gains survive at larger scale.
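Testing on weak instruments requires a way to quantify instrument strength. A standard diagnostic is the first-stage F statistic for the joint null that all instrument coefficients are zero when the treatment is regressed on the instruments. A common rule of thumb treats values below about 10 as a sign of weak instruments. The sketch below is minimal and uses NumPy. The function name is hypothetical, and for simplicity the sketch omits covariates from the first stage. With covariates, both regressions would include them, and only the instrument columns would be tested.

```python
import numpy as np


def first_stage_f(x, Z):
    """F statistic for H0: all instrument coefficients are zero in the
    first-stage regression x = a + Z b + e.

    The restricted model contains only the intercept. `Z` may be a 1-D array
    (one instrument) or an (n, k) array (k instruments).
    """
    x = np.asarray(x, dtype=float)
    Z = np.asarray(Z, dtype=float)
    if Z.ndim == 1:
        Z = Z[:, None]
    n, k = Z.shape
    design = np.column_stack([np.ones(n), Z])
    coef, *_ = np.linalg.lstsq(design, x, rcond=None)
    rss_unrestricted = np.sum((x - design @ coef) ** 2)
    rss_restricted = np.sum((x - x.mean()) ** 2)  # intercept-only fit
    return ((rss_restricted - rss_unrestricted) / k) / (rss_unrestricted / (n - k - 1))
```

With a single instrument, this reduces to $F = \frac{R^2}{1 - R^2}(n - 2)$, where $R^2$ is the first-stage coefficient of determination.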

Load-bearing premise

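The assumed latent components can be inferred to capture distinct sources of variation without residual mixing. In addition, the pseudo-likelihood, built from instrument-induced treatment values, correctly removes the endogeneity bias that unobserved treatment-outcome confounding would otherwise introduce.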

What would settle it

Consider a high-dimensional IV benchmark dataset with known ground-truth causal effects. If BGM-IV recovered those effects less accurately than existing nonlinear methods on such a dataset, that result would falsify the claim that the structured latent approach performs best in high-dimensional covariate regimes.
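Such a test depends on data where the causal effect is known by construction. A minimal linear sketch shows why ground truth matters. It uses a hypothetical data-generating process, not one of the paper's benchmarks. Ordinary regression is biased by an unobserved confounder, whereas a just-identified IV (Wald) estimate recovers the known slope. A nonlinear, high-dimensional benchmark would replace the slope `beta` with a structural function and score each estimator by its error against that function on held-out treatment values. The names `simulate_confounded_iv`, `ols_slope`, and `iv_slope` are illustrative.

```python
import numpy as np


def simulate_confounded_iv(n, beta=2.0, confounding=3.0, seed=0):
    """Linear IV data with known causal slope `beta` and unobserved confounder u."""
    rng = np.random.default_rng(seed)
    z = rng.standard_normal(n)          # instrument: affects y only through x
    u = rng.standard_normal(n)          # unobserved confounder of x and y
    x = z + u + rng.standard_normal(n)  # endogenous treatment
    y = beta * x + confounding * u + rng.standard_normal(n)
    return z, x, y


def ols_slope(x, y):
    """Naive regression slope Cov(x, y) / Var(x); biased when u is omitted."""
    return np.cov(x, y)[0, 1] / np.var(x, ddof=1)


def iv_slope(z, x, y):
    """Just-identified IV (Wald) estimator Cov(z, y) / Cov(z, x)."""
    return np.cov(z, y)[0, 1] / np.cov(z, x)[0, 1]
```

Under the default settings, the population value of the naive slope is $2 + 3\,\mathrm{Cov}(x, u)/\mathrm{Var}(x) = 2 + 3 \cdot \tfrac{1}{3} = 3$. The population value of the IV slope is the true $\beta = 2$. With large `n`, the two estimates should land close to these values.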

Original abstract

Instrumental-variable (IV) regression enables causal estimation under endogeneity, but modern IV problems often involve nonlinear structural effects and high-dimensional covariates. Existing nonlinear IV methods directly learn the causal relation in observed feature space or rely on learned representations within two-stage or moment-based procedures, which can struggle when the causal information is embedded in a high-dimensional representation. We propose BGM-IV, a latent Bayesian generative modeling approach that reframes nonlinear IV regression as posterior inference in a causally structured latent space. BGM-IV infers latent components that separately capture shared confounding structure, outcome-specific variation, treatment-specific variation, and covariate-only nuisance information. To account for endogeneity, BGM-IV replaces the confounded outcome likelihood with an IV-integrated pseudo-likelihood that averages over instrument-induced treatment values within the latent model. Across various benchmark datasets, BGM-IV remains competitive in the classical low-dimensional regime and performs best in high-dimensional covariate regimes. Together, these results show that structured latent generative modeling provides a principled and effective strategy for nonlinear IV estimation with rich covariates. The code of BGM-IV is available at https://github.com/liuq-lab/BGM-IV.
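The setting the abstract refers to is usually formalized as a conditional moment restriction (Newey and Powell, 2003). The notation below is illustrative and not necessarily the paper's. Let $Y$ be the outcome, $X$ the treatment, $V$ the covariates, $Z$ the instrument, and $h$ the structural function:

$$
Y = h(X, V) + \varepsilon, \qquad \mathbb{E}[\varepsilon \mid Z, V] = 0
\quad\Longrightarrow\quad
\mathbb{E}[Y \mid Z, V] = \int h(x, V)\, dF(x \mid Z, V).
$$

The right-hand side averages the structural function over the treatment values induced by the instrument. This is the kind of averaging the abstract attributes to the IV-integrated pseudo-likelihood. Recovering $h$ from this integral equation is an ill-posed inverse problem, and uniqueness requires a completeness condition on $F(x \mid Z, V)$.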

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes BGM-IV, a Bayesian generative modeling approach for nonlinear instrumental variable (IV) regression with high-dimensional covariates. It reframes the problem as posterior inference over a structured latent space whose components separately capture shared confounding, outcome-specific variation, treatment-specific variation, and covariate-only nuisance. Endogeneity is addressed via an IV-integrated pseudo-likelihood that averages over instrument-induced treatment values. The abstract reports that the method is competitive on classical low-dimensional benchmarks and outperforms existing approaches in high-dimensional regimes, concluding that structured latent generative modeling supplies a principled strategy for such IV problems.

Significance. If the modeling assumptions, identifiability arguments, and inference procedure are sound, the work could offer a flexible generative framework for disentangling latent structure in nonlinear IV settings where direct representation learning or two-stage methods struggle. The emphasis on explicit separation of confounding, treatment, and outcome latents, together with the pseudo-likelihood construction, addresses a practically relevant gap in high-dimensional causal estimation.

major comments (2)

- [Abstract] Abstract: the central modeling claim (that the four latent components can be inferred to separately capture shared confounding, outcome-specific, treatment-specific, and nuisance variation) is stated without any equations, identifiability conditions, or derivation of the IV-integrated pseudo-likelihood. This absence makes it impossible to evaluate whether the separation is independently grounded or reduces to a fitted decomposition by construction.

- [Empirical Evaluation] Empirical section (implied by benchmark claims): the abstract states competitive or superior performance but supplies no error bars, ablation studies, details on pseudo-likelihood implementation, or description of how the latent posterior is approximated. Without these, the reported gains cannot be assessed for statistical significance or sensitivity to modeling choices.
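The error-bar request can be made concrete. Suppose each method is run on the same set of random seeds. Report the mean out-of-sample error with its standard error. For method comparisons, also report the standard error of the per-seed difference, which removes variation shared across seeds. The sketch below is minimal, and its function names are hypothetical. Its inputs are per-replicate error values (for example, the MSE of an estimated structural function), not numbers from the paper. The interval uses a normal approximation. With only a handful of seeds, a Student-t quantile would be more appropriate than 1.96.

```python
import numpy as np


def mean_and_se(values):
    """Mean and standard error (sample SD / sqrt(R)) over R replicates."""
    v = np.asarray(values, dtype=float)
    return v.mean(), v.std(ddof=1) / np.sqrt(v.size)


def paired_difference(errors_a, errors_b):
    """Mean and SE of per-replicate differences (a - b).

    Assumes methods a and b were evaluated on the same seeds, in the same order.
    """
    d = np.asarray(errors_a, dtype=float) - np.asarray(errors_b, dtype=float)
    return mean_and_se(d)


def normal_interval(mean, se, z=1.96):
    """Approximate 95% interval mean +/- z * se (normal approximation)."""
    return mean - z * se, mean + z * se
```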

minor comments (1)

- The GitHub link for code is a positive feature; ensure the released repository contains the exact model specification, inference algorithm, and scripts that reproduce the benchmark numbers.

Simulated Author's Rebuttal

We thank the referee for their positive assessment of BGM-IV's potential and for the constructive major comments. We address each point below, clarifying the role of the abstract as a summary while offering targeted revisions for improved transparency.


- Referee: [Abstract] Abstract: the central modeling claim (that the four latent components can be inferred to separately capture shared confounding, outcome-specific, treatment-specific, and nuisance variation) is stated without any equations, identifiability conditions, or derivation of the IV-integrated pseudo-likelihood. This absence makes it impossible to evaluate whether the separation is independently grounded or reduces to a fitted decomposition by construction.

  Authors: The abstract is a concise high-level summary, as is conventional. The manuscript provides the full generative model equations defining the four latent components (Section 2), the identifiability conditions and causal separation arguments (Section 3.1), and the derivation of the IV-integrated pseudo-likelihood that averages over instrument-induced treatments (Section 3.2). The separation is grounded in the explicit causal roles assigned to each latent and the pseudo-likelihood's enforcement of the IV exclusion restriction, rather than arising solely from fitting. We will revise the abstract to include a brief reference to these sections for readers evaluating the claims from the summary alone.

  Revision: partial.

- Referee: [Empirical Evaluation] Empirical section (implied by benchmark claims): the abstract states competitive or superior performance but supplies no error bars, ablation studies, details on pseudo-likelihood implementation, or description of how the latent posterior is approximated. Without these, the reported gains cannot be assessed for statistical significance or sensitivity to modeling choices.

  Authors: The abstract summarizes benchmark outcomes without technical reporting details. Section 4 of the manuscript presents the empirical results with error bars from repeated runs, ablation studies isolating the latent components and pseudo-likelihood, implementation specifics for the instrument-averaged pseudo-likelihood, and the variational inference scheme used to approximate the latent posterior. These elements support evaluation of significance and robustness. We will revise the abstract to note that the performance claims are backed by such analyses in the main text.

  Revision: partial.

Circularity Check

No significant circularity detected


The abstract and summary describe BGM-IV as a latent Bayesian generative model that infers separate components for shared confounding, outcome-specific variation, treatment-specific variation, and covariate nuisance, then replaces the outcome likelihood with an IV-integrated pseudo-likelihood. No equations, model specifications, identifiability proofs, or derivation steps appear in the provided text. Without visible mathematical structure, no step can be exhibited that reduces by construction to a fitted input, self-definition, or self-citation chain. The central modeling claim therefore cannot be flagged as circular under the required criterion of explicit reduction. This verdict rests on the absence of checkable derivations rather than on a positive verification.

Axiom & Free-Parameter Ledger

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, theorem washburn_uniqueness_aczel (unclear). Linked passage: BGM-IV infers latent components that separately capture shared confounding structure, outcome-specific variation, treatment-specific variation, and covariate-only nuisance information. ... replaces the confounded outcome likelihood with an IV-integrated pseudo-likelihood that averages over instrument-induced treatment values

- IndisputableMonolith/Foundation/RealityFromDistinction.lean, theorem reality_from_one_distinction (unclear). Linked passage: structured latent generative modeling provides a principled and effective strategy for nonlinear IV estimation

