Recognition: no theorem link

Causal EpiNets: Precision-corrected Bounds on Individual Treatment Effects using Epistemic Neural Networks

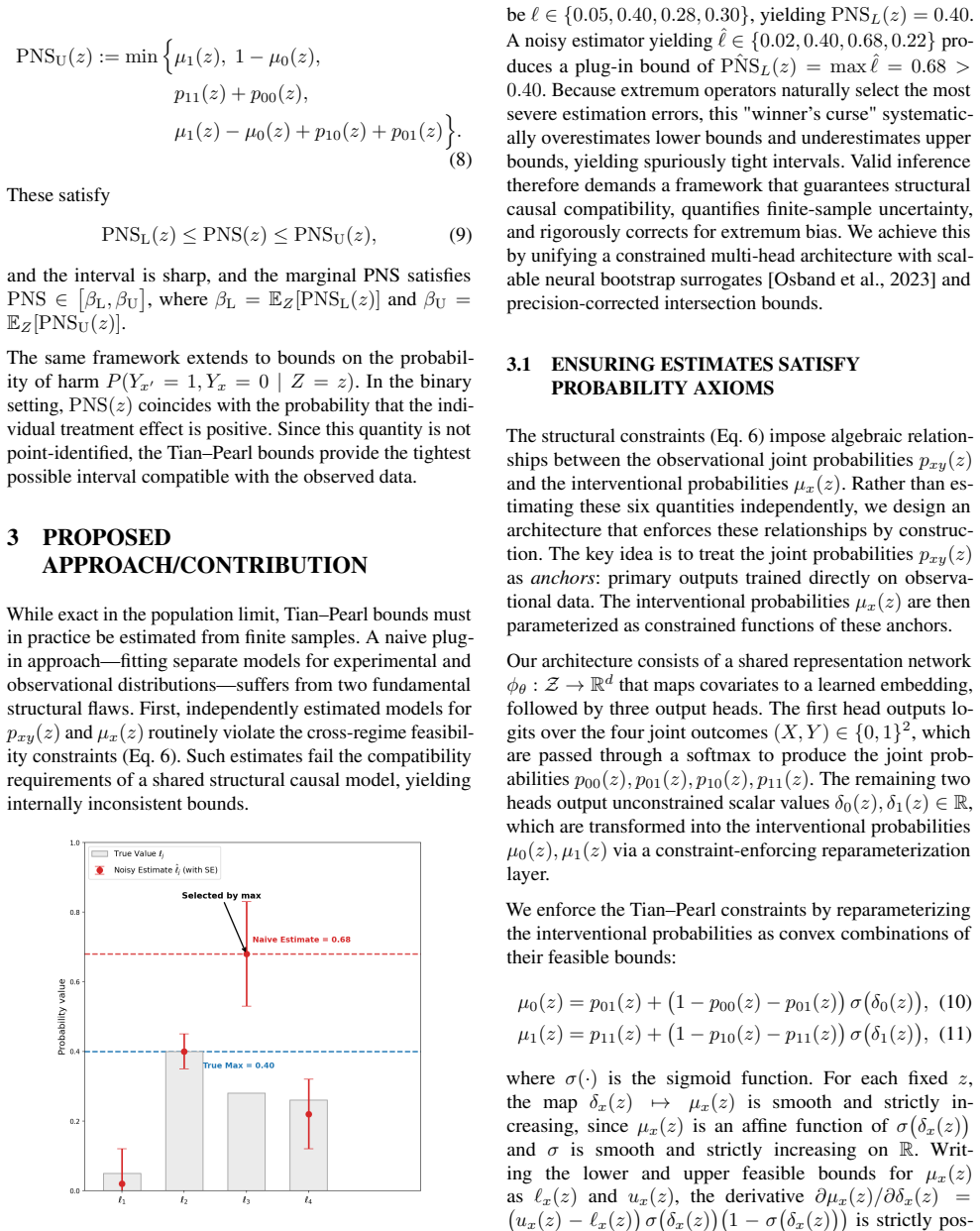

Pith reviewed 2026-05-11 01:36 UTC · model grok-4.3

The pith

A neural framework corrects finite-sample bias and constraint violations in bounds on individual treatment effects.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Standard plug-in estimators for the Probability of Necessity and Sufficiency violate structural constraints and suffer from extremum bias in finite samples. The proposed neural framework resolves these issues through an anchored architecture that enforces constraints by design and precision-corrected inference with Epistemic Neural Networks. Empirical evaluations confirm that this approach maintains nominal coverage and exact constraint validity in high-dimensional regimes where standard estimators systematically undercover.

What carries the argument

Anchored neural architecture that guarantees structural constraint satisfaction by construction, combined with precision-corrected intersection-bound inference using Epistemic Neural Networks for scalable uncertainty quantification.

Load-bearing premise

The anchored neural architecture guarantees structural constraint satisfaction by construction and that epistemic neural networks deliver accurate uncertainty quantification for the intersection bounds without introducing new biases.

What would settle it

High-dimensional simulation experiments showing that the proposed intervals fail to achieve nominal coverage or violate probability constraints.

Figures

read the original abstract

Individual treatment effects are not point-identified from data. The Probability of Necessity and Sufficiency (PNS) circumvents this limitation by characterizing individual-level causality through intersection bounds derived from combined experimental and observational data. In finite samples, however, standard plug-in estimators systematically fail: they violate structural probability constraints and suffer from extremum bias induced by max-min operators, yielding spuriously narrow intervals. We propose a neural framework for finite-sample PNS estimation that resolves both pathologies. We introduce an anchored neural architecture that guarantees structural constraint satisfaction by construction. To correct extremum bias, we employ precision-corrected intersection-bound inference, leveraging Epistemic Neural Networks for scalable, high-dimensional uncertainty quantification. Empirical evaluations confirm that this approach maintains nominal coverage and exact constraint validity in high-dimensional regimes where standard estimators systematically undercover.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims to introduce Causal EpiNets, a neural framework for finite-sample PNS estimation that resolves constraint violations and extremum bias in standard plug-in estimators. It proposes an anchored neural architecture to enforce structural probability constraints exactly by construction and employs epistemic neural networks for precision-corrected intersection-bound inference, with empirical results purportedly showing nominal coverage and exact validity in high-dimensional regimes.

Significance. If the central technical guarantees hold, the work would provide a scalable approach to valid individual treatment effect bounds in high dimensions, addressing a practical limitation of existing causal estimators. The combination of architectural constraints with epistemic uncertainty quantification represents a potentially useful methodological advance for finite-sample causal inference.

major comments (3)

- [Abstract and §3] Abstract and §3 (Anchored Neural Architecture): The claim that the anchored architecture 'guarantees structural constraint satisfaction by construction' is load-bearing for the finite-sample validity result, yet no derivation is provided showing that the anchoring mechanism remains invariant under gradient-based optimization in high dimensions; neural anchoring is typically parameterization-dependent and can drift.

- [§4] §4 (Empirical Evaluations): The reported nominal coverage and exact constraint validity lack sufficient experimental details (data splits, hyperparameter selection, controls for post-hoc adjustments during NN training), making it impossible to rule out that the results are sensitive to implementation choices that could affect the central claims.

- [§3.2] §3.2 (Precision-corrected inference): The assertion that epistemic neural networks deliver calibrated uncertainty for the max-min PNS bounds without introducing new finite-sample biases requires explicit analysis or bounds; extremum operators can amplify tail miscalibration in posterior approximations.

minor comments (2)

- [Notation] Clarify notation for the precision-correction term and its exact relationship to the intersection bounds in the main text.

- [Experiments] Add a table or figure comparing constraint violation rates and interval widths against baselines with explicit sample sizes.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed comments on the manuscript. We address each major comment point by point below, indicating where revisions will be made to strengthen the paper.

read point-by-point responses

-

Referee: [Abstract and §3] Abstract and §3 (Anchored Neural Architecture): The claim that the anchored architecture 'guarantees structural constraint satisfaction by construction' is load-bearing for the finite-sample validity result, yet no derivation is provided showing that the anchoring mechanism remains invariant under gradient-based optimization in high dimensions; neural anchoring is typically parameterization-dependent and can drift.

Authors: We agree that an explicit derivation of invariance under gradient-based optimization would improve the rigor of the claim. The anchored architecture is constructed so that the network outputs lie in the valid probability simplex for any parameter values (via a softmax-like normalization anchored to the structural constraints), and the loss function is defined only over this constrained set. Gradient updates therefore cannot violate the constraints by design. In the revised manuscript we will add a short formal argument and proof sketch in §3 clarifying this invariance, including a note on why drift does not occur under standard optimizers. revision: yes

-

Referee: [§4] §4 (Empirical Evaluations): The reported nominal coverage and exact constraint validity lack sufficient experimental details (data splits, hyperparameter selection, controls for post-hoc adjustments during NN training), making it impossible to rule out that the results are sensitive to implementation choices that could affect the central claims.

Authors: We acknowledge that the current experimental section provides insufficient detail for full reproducibility and robustness assessment. In the revision we will expand §4 with explicit descriptions of train/validation/test splits, hyperparameter selection (including grid search or Bayesian optimization ranges and criteria), random seed reporting, and any post-hoc checks or adjustments performed during or after training. We will also add sensitivity analyses to demonstrate that the nominal coverage and constraint satisfaction are not artifacts of particular implementation choices. revision: yes

-

Referee: [§3.2] §3.2 (Precision-corrected inference): The assertion that epistemic neural networks deliver calibrated uncertainty for the max-min PNS bounds without introducing new finite-sample biases requires explicit analysis or bounds; extremum operators can amplify tail miscalibration in posterior approximations.

Authors: This concern is well-taken: max-min operators can indeed magnify miscalibration in approximate posteriors. Our precision-correction procedure widens the intersection bounds using the epistemic uncertainty estimates precisely to counteract extremum bias, and the reported experiments show that the resulting intervals achieve nominal coverage. However, we do not currently provide a rigorous finite-sample bound quantifying residual bias after correction. In the revision we will expand §3.2 with additional discussion of the correction mechanism, references to related work on epistemic uncertainty under optimization, and an explicit statement that while empirical validation supports the approach, a complete theoretical guarantee on bias amplification remains an open question. revision: partial

- A complete theoretical finite-sample bound establishing that the precision-correction fully neutralizes any new biases introduced by the max-min operators under the epistemic neural network posterior approximation.

Circularity Check

No significant circularity in the proposed neural framework for finite-sample PNS estimation.

full rationale

The paper introduces an anchored neural architecture explicitly designed to enforce structural constraints by construction and employs epistemic neural networks for uncertainty quantification on precision-corrected intersection bounds. These are presented as novel components that address identified pathologies in plug-in estimators, without any quoted reduction of the output bounds or coverage guarantees back to fitted parameters or prior self-citations by the paper's own equations. The derivation chain relies on the new architecture's parameterization and the properties of epistemic NNs as independent tools, remaining self-contained against external benchmarks rather than tautological. No self-definitional loops, renamed predictions, or load-bearing self-citations appear in the provided abstract or description.

Axiom & Free-Parameter Ledger

free parameters (1)

- neural network hyperparameters and training parameters

axioms (1)

- domain assumption Combined experimental and observational data are available and satisfy the conditions for PNS identification

invented entities (2)

-

Anchored neural architecture

no independent evidence

-

Precision-corrected intersection-bound inference

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Annals of Mathematics and Artificial Intelligence , volume =

Tian, Jin and Pearl, Judea , title =. Annals of Mathematics and Artificial Intelligence , volume =. 2000 , doi =

work page 2000

- [2]

-

[3]

Annals of Statistics , volume =

Chernozhukov, Victor and Chetverikov, Denis and Kato, Kengo , title =. Annals of Statistics , volume =. 2013 , doi =

work page 2013

- [4]

-

[5]

Weak Convergence and Empirical Processes: With Applications to Statistics , series =. 1996 , doi =

work page 1996

-

[6]

Bickel, Peter J. and Klaassen, Chris A. J. and Ritov, Ya'acov and Wellner, Jon A. , title =

-

[7]

and McFadden, Daniel , title =

Newey, Whitney K. and McFadden, Daniel , title =. Handbook of Econometrics , editor =. 1994 , doi =

work page 1994

-

[8]

Journal of the American Statistical Association , volume =

White, Halbert , title =. Journal of the American Statistical Association , volume =. 1989 , doi =

work page 1989

- [9]

-

[10]

Journal of Nonparametric Statistics , volume =

Shen, Xiaoxi and Jiang, Chao and Sakhanenko, Lyudmila and Lu, Qing , title =. Journal of Nonparametric Statistics , volume =. 2023 , doi =

work page 2023

-

[11]

White, Halbert , title =. Econometrica , volume =. 1982 , doi =

work page 1982

- [12]

-

[13]

Proceedings of the 34th International Conference on Machine Learning , pages =

Koh, Pang Wei and Liang, Percy , title =. Proceedings of the 34th International Conference on Machine Learning , pages =

-

[14]

Proceedings of the 27th International Conference on Machine Learning , pages =

Martens, James , title =. Proceedings of the 27th International Conference on Machine Learning , pages =

- [15]

-

[16]

International Conference on Learning Representations (ICLR) , year =

A Neural Framework for Generalized Causal Sensitivity Analysis , author =. International Conference on Learning Representations (ICLR) , year =

-

[17]

International Conference on Machine Learning (ICML) , year =

Doubly Robust Causal Effect Estimation under Networked Interference via Targeted Learning , author =. International Conference on Machine Learning (ICML) , year =

-

[18]

Conference on Learning Theory (COLT) , year =

Implicit regularization for deep neural networks driven by an Ornstein-Uhlenbeck like process , author =. Conference on Learning Theory (COLT) , year =

-

[19]

Conference on Learning Theory (COLT) , year =

Algorithmic Regularization in Over-parameterized Matrix Sensing and Neural Networks with Quadratic Activations , author =. Conference on Learning Theory (COLT) , year =

-

[20]

arXiv preprint arXiv:2205.10327 , year =

What's the Harm? Sharp Bounds on the Fraction Negatively Affected by Treatment , author =. arXiv preprint arXiv:2205.10327 , year =. doi:10.48550/arXiv.2205.10327 , url =

-

[21]

Journal of Causal Inference , volume =

Personalized decision making – A conceptual introduction , author =. Journal of Causal Inference , volume =. 2023 , publisher =

work page 2023

-

[22]

arXiv preprint arXiv:2210.05027 , year =

Probabilities of Causation: Adequate Size of Experimental and Observational Samples , author =. arXiv preprint arXiv:2210.05027 , year =

-

[23]

Learning Probabilities of Causation from Finite Population Data , author =. 2024 , url =

work page 2024

-

[24]

Twin Research and Human Genetics , year =

Causal Inference and Observational Research: The Utility of Twins , author =. Twin Research and Human Genetics , year =

-

[25]

Advances in Neural Information Processing Systems (NeurIPS 2017) , pages =

Causal Effect Inference with Deep Latent-Variable Models , author =. Advances in Neural Information Processing Systems (NeurIPS 2017) , pages =. 2017 , publisher =

work page 2017

-

[26]

Advances in Neural Information Processing Systems , volume =

Adapting Neural Networks for the Estimation of Treatment Effects , author =. Advances in Neural Information Processing Systems , volume =

-

[27]

Proceedings of the 34th International Conference on Machine Learning , pages =

Estimating Individual Treatment Effect: Generalization Bounds and Algorithms , author =. Proceedings of the 34th International Conference on Machine Learning , pages =. 2017 , volume =

work page 2017

-

[28]

arXiv preprint arXiv:2111.10106 , year=

A Large Scale Benchmark for Individual Treatment Effect Prediction and Uplift Modeling , author =. arXiv preprint arXiv:2111.10106 , year =

-

[29]

Karavani, Ehud and ACIC Organizing Committee , title =. 2018 , howpublished =

work page 2018

-

[30]

ACIC 2019 Data Challenge Datasets , year =

work page 2019

-

[31]

Journal of Computational and Graphical Statistics , volume =

Bayesian nonparametric modeling for causal inference , author =. Journal of Computational and Graphical Statistics , volume =. 2011 , publisher =

work page 2011

-

[32]

Advances in Neural Information Processing Systems (NeurIPS 2023) , year =

Epistemic Neural Networks , author =. Advances in Neural Information Processing Systems (NeurIPS 2023) , year =

work page 2023

-

[33]

Advances in Neural Information Processing Systems , volume =

Randomized Prior Functions for Deep Reinforcement Learning , author =. Advances in Neural Information Processing Systems , volume =

-

[34]

Proceedings of the 32nd International Conference on Machine Learning , series =

Weight Uncertainty in Neural Network , author =. Proceedings of the 32nd International Conference on Machine Learning , series =. 2015 , publisher =

work page 2015

-

[35]

Gal, Yarin and Ghahramani, Zoubin , booktitle =. Dropout as a. 2016 , publisher =

work page 2016

-

[36]

Advances in Neural Information Processing Systems , volume =

Simple and Scalable Predictive Uncertainty Estimation Using Deep Ensembles , author =. Advances in Neural Information Processing Systems , volume =. 2017 , publisher =

work page 2017

-

[37]

Foong, Andrew Y. K. and Burt, David R. and Li, Yingzhen and Turner, Richard E. , booktitle =. Between. 2019 , publisher =

work page 2019

-

[38]

Kleijn, Bas J. K. and van der Vaart, Aad W. , journal=. The. 2012 , publisher=

work page 2012

-

[39]

Advances in Neural Information Processing Systems , year =

Neural Tangent Kernel: Convergence and Generalization in Neural Networks , author =. Advances in Neural Information Processing Systems , year =

-

[40]

Advances in Neural Information Processing Systems , year =

Posterior Network: Uncertainty Estimation without OOD Samples via Density-Based Pseudo-Counts , author =. Advances in Neural Information Processing Systems , year =

-

[41]

Probabilities of Causation: Adequate Size of Experimental and Observational Samples , author=. 2022 , eprint=

work page 2022

-

[42]

Metalearners for Estimating Heterogeneous Treatment Effects Using Machine Learning , journal =

K\". Metalearners for Estimating Heterogeneous Treatment Effects Using Machine Learning , journal =. 2019 , doi =

work page 2019

-

[43]

Journal of the American Statistical Association , volume=

A decision-theoretic approach to interval estimation , author=. Journal of the American Statistical Association , volume=. 1972 , publisher=

work page 1972

-

[44]

James Bradbury and Roy Frostig and Peter Hawkins and Matthew James Johnson and Chris Leary and Dougal Maclaurin and George Necula and Adam Paszke and Jake Vander

-

[45]

Differentiable Programming workshop at Neural Information Processing Systems 2021 , year =

Patrick Kidger and Cristian Garcia , title =. Differentiable Programming workshop at Neural Information Processing Systems 2021 , year =

work page 2021

-

[46]

Journal of Educational and Behavioral Statistics , volume =

Jiannan Lu and Peng Ding and Tirthankar Dasgupta , title =. Journal of Educational and Behavioral Statistics , volume =

-

[47]

Journal of Econometrics , volume =

Vira Semenova , title =. Journal of Econometrics , volume =

-

[48]

Alaa and Zaid Ahmad and Mark van der Laan , title =

Ahmed M. Alaa and Zaid Ahmad and Mark van der Laan , title =. Advances in Neural Information Processing Systems , volume =

-

[49]

arXiv preprint arXiv:2502.08858 , year =

Shuai Wang and Ang Li , title =. arXiv preprint arXiv:2502.08858 , year =

-

[50]

Proceedings of the Fortieth Conference on Uncertainty in Artificial Intelligence , pages =

Probabilities of Causation for Continuous and Vector Variables , author =. Proceedings of the Fortieth Conference on Uncertainty in Artificial Intelligence , pages =. 2024 , editor =

work page 2024

-

[51]

Proceedings of the Thirty-Eighth AAAI Conference on Artificial Intelligence , series =

Li, Ang and Pearl, Judea , title =. Proceedings of the Thirty-Eighth AAAI Conference on Artificial Intelligence , series =. 2024 , month =. doi:10.1609/aaai.v38i18.30030 , url =

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.