TopoFisher: Learning Topological Summary Statistics by Maximizing Fisher Information

Pith reviewed 2026-05-11 02:45 UTC · model grok-4.3

The pith

Optimizing persistent homology to maximize local Fisher information yields topological summaries that carry more parameter information than standard cosmological statistics.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

By training a differentiable persistent-homology pipeline to maximize the log-determinant of the local Gaussian Fisher information matrix, the resulting topological summaries recover substantially more information about cosmological parameters than hand-chosen vectorizations, approach the performance of full information-maximizing networks with far fewer parameters, and remain effective under changes in the underlying simulator.
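For orientation, the objective named in the core claim can be written out as follows. This is the textbook Gaussian Fisher matrix for a summary with parameter-independent covariance, not a formula confirmed from the paper beyond the loss it quotes; the symbols $\mu_\phi$ (mean summary) and $C_\phi$ (summary covariance) are assumptions introduced here, and the hats denote estimates from a batch $B$ of simulations.

```latex
F^{G}_{\phi}(\theta_{\mathrm{fid}})
  = \left.\left(\frac{\partial \mu_\phi}{\partial \theta}\right)^{\!\top}
    C_\phi^{-1}
    \left(\frac{\partial \mu_\phi}{\partial \theta}\right)\right|_{\theta = \theta_{\mathrm{fid}}},
\qquad
\widehat{L}_B(\phi) = -\log\left|\widehat{F}^{G}_{\phi,B}(\theta_{\mathrm{fid}})\right|
```

Minimizing $\widehat{L}_B$ over the trainable parameters $\phi$ maximizes the log-determinant of the Fisher estimate, which shrinks the volume of the forecast Gaussian error ellipsoid around $\theta_{\mathrm{fid}}$.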

What carries the argument

Trainable persistent homology pipeline that adjusts filtrations, vectorizations, and compressors to maximize the log-determinant Fisher loss.
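A minimal sketch of how such a log-determinant Fisher loss could be estimated from simulations is given below. It is not the paper's implementation: the persistent-homology pipeline is replaced by a hypothetical `simulate` callable that returns summary vectors directly, derivatives are taken by central finite differences rather than by backpropagation, and the toy linear-Gaussian simulator exists only so the estimate can be compared with a known Fisher matrix.

```python
import numpy as np

def gaussian_fisher(simulate, theta_fid, delta, n_sims, seed=0):
    """Estimate the local Gaussian Fisher matrix at theta_fid.

    simulate(theta, rng, n) is a hypothetical callable returning an
    (n, d) array of summary vectors; it stands in for the full pipeline.
    """
    theta_fid = np.asarray(theta_fid, dtype=float)
    p = theta_fid.size
    # Covariance of the summaries at the fiducial point.
    s_fid = simulate(theta_fid, np.random.default_rng(seed), n_sims)
    cov = np.atleast_2d(np.cov(s_fid, rowvar=False))
    # Central finite differences of the mean summary. The +/- runs share
    # one seed so that simulator noise cancels where the simulator allows it.
    dmu = []
    for i in range(p):
        step = np.zeros(p)
        step[i] = delta[i]
        plus = simulate(theta_fid + step, np.random.default_rng(seed + 1), n_sims)
        minus = simulate(theta_fid - step, np.random.default_rng(seed + 1), n_sims)
        dmu.append((plus.mean(axis=0) - minus.mean(axis=0)) / (2.0 * delta[i]))
    dmu = np.stack(dmu)                       # shape (p, d)
    return dmu @ np.linalg.solve(cov, dmu.T)  # shape (p, p)

def neg_logdet(fisher):
    """Loss to minimize: negative log-determinant of the Fisher estimate."""
    sign, logdet = np.linalg.slogdet(fisher)
    if sign <= 0:
        raise ValueError("Fisher estimate is not positive definite")
    return -logdet

# Toy linear-Gaussian simulator (assumption): mean A @ theta, unit covariance,
# so the exact Fisher matrix is A.T @ A.
A = np.array([[1.0, 0.5], [0.0, 2.0], [1.5, -1.0]])

def toy_simulate(theta, rng, n):
    return theta @ A.T + rng.standard_normal((n, A.shape[0]))

F_hat = gaussian_fisher(toy_simulate, [0.3, 0.8], delta=[0.05, 0.05], n_sims=4000)
F_true = A.T @ A
loss = neg_logdet(F_hat)
```

In a trainable pipeline the same quantity would be differentiated with respect to the filtration, vectorization, and compressor parameters; here `F_hat` can simply be compared with `F_true` to see how much sampling noise in the covariance estimate perturbs the loss.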

If this is right

- Learned summaries achieve higher Fisher information than state-of-the-art cosmological summaries on weak lensing data.

- The summaries approach the information content of an unconstrained neural baseline using up to 80 times fewer parameters.

- Under simulator shift from lognormal to LPT-based maps, the learned summaries retain most Fisher information while neural baselines lose performance.

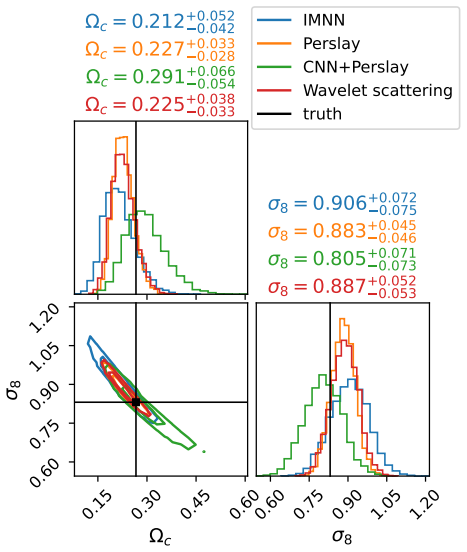

- In neural posterior estimation the summaries produce tighter parameter constraints than both the neural baseline and fixed cosmological summaries.

Where Pith is reading between the lines

- Fisher-maximization training could adapt topological summaries to other high-dimensional inference problems where hand-designed features are incomplete.

- Efficient learned topological summaries could serve as compact inputs that reduce the size of downstream neural inference models.

- Extending the optimization to global rather than local Fisher information might improve robustness when parameters vary widely.

Load-bearing premise

That Fisher information measured near one fiducial parameter value produces summaries that remain informative across the full range of parameters of interest.

What would settle it

The claim would be undermined if summaries learned at one fiducial point delivered lower Fisher information or wider posteriors than standard methods when tested on simulations at distant parameter values.
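The shape of such a check can be sketched on a toy problem: hold a summary fixed, recompute the local Fisher matrix at points displaced from the fiducial, and track the log-determinant. Everything in the block is hypothetical, including the mean function `toy_mean`, which is built to be informative near the fiducial and to saturate away from it, and the fixed covariance. It illustrates the diagnostic only and reproduces no result from the paper.

```python
import numpy as np

def fisher_logdet(mean_fn, cov, theta, eps=1e-5):
    """log det of J^T C^{-1} J, with J from central differences of mean_fn."""
    theta = np.asarray(theta, dtype=float)
    cols = []
    for i in range(theta.size):
        step = np.zeros_like(theta)
        step[i] = eps
        cols.append((mean_fn(theta + step) - mean_fn(theta - step)) / (2.0 * eps))
    jac = np.stack(cols, axis=1)               # shape (d, p)
    fisher = jac.T @ np.linalg.solve(cov, jac)
    sign, logdet = np.linalg.slogdet(fisher)
    return logdet if sign > 0 else -np.inf

# Hypothetical mean summary: sensitive to theta[0] near 1, saturating elsewhere.
def toy_mean(theta):
    return np.array([np.tanh(3.0 * (theta[0] - 1.0)), theta[1], theta[0] * theta[1]])

cov = np.eye(3) * 0.01                 # assumed parameter-independent covariance
fiducial = np.array([1.0, 0.5])
offsets = (0.0, 0.25, 0.5, 1.0)        # displacement along the first parameter
displaced = [fiducial + np.array([d, 0.0]) for d in offsets]
curve = [fisher_logdet(toy_mean, cov, th) for th in displaced]
```

For this toy summary the log-determinant falls steadily as the evaluation point moves away from the fiducial, which is the failure pattern the test above is meant to detect. A summary that stays informative across the parameter range would give a flatter `curve`.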

Original abstract

Persistence diagrams provide stable, interpretable summaries of geometric and topological structure and are useful for simulation-based inference when low-order statistics miss key information. Yet persistence-based pipelines require hand-chosen filtrations, vectorizations, and compressors, typically without an objective tied to parameter uncertainty. We introduce **TopoFisher**, a differentiable persistent-homology pipeline that learns topological summaries by maximizing local Gaussian Fisher information. Using simulations near a fiducial parameter, TopoFisher optimizes trainable filtrations, diagram vectorizations, and compressors without posterior samples or supervised regression targets, while retaining stable topological inductive bias. We also give sufficient regularity conditions for the log-determinant Fisher loss to be locally Lipschitz in trainable parameters. Controlled experiments on noisy spirals and Gaussian random fields, where total Fisher information is known, show that TopoFisher recovers much of the available information and outperforms fixed topological vectorizations. Our main results are on weak gravitational lensing, a high-dimensional non-Gaussian cosmological field-inference problem. Learned topological summaries reach higher Fisher information than state-of-the-art cosmological summaries and approach an unconstrained Information Maximising Neural Network baseline with up to $\sim80\times$ fewer parameters. The learned filtrations also generalize better: under simulator shift from lognormal to LPT-based maps they retain most Fisher information, while the neural baseline drops, and in neural posterior estimation they give tighter constraints than both the neural baseline and state-of-the-art cosmological summaries. These results support Fisher-based topological optimization as a robust, parameter-efficient front end for simulation-based inference.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces TopoFisher, a fully differentiable persistent-homology pipeline that learns filtrations, diagram vectorizations, and compressors by directly maximizing the log-determinant of the local Gaussian Fisher information matrix evaluated at a single fiducial parameter point. Controlled experiments on spirals and Gaussian random fields (where ground-truth Fisher information is known) demonstrate recovery of substantial information and outperformance of fixed topological baselines. On weak-lensing maps the learned summaries are reported to yield higher Fisher information than state-of-the-art cosmological statistics, to approach an unconstrained Information Maximising Neural Network (IMNN) baseline while using up to ~80× fewer parameters, and to generalize better under simulator shift from lognormal to LPT-based maps; the same summaries also produce tighter constraints in neural posterior estimation.

Significance. If the local-Gaussian proxy is shown to be reliable, the method supplies a label-free, posterior-free objective for optimizing topological summaries that retains the stability and inductive bias of persistent homology while remaining parameter-efficient. The controlled experiments with known ground-truth information and the reported robustness under simulator shift are concrete strengths that would make the approach attractive as a front-end for simulation-based inference on high-dimensional non-Gaussian fields.

Major comments (2)

- [§3, Eq. (4)] The training objective maximizes the log-determinant of the local Gaussian Fisher matrix at a single fiducial cosmology. The manuscript supplies sufficient regularity conditions for local Lipschitz continuity of the loss (Section 3.3) but does not report the parameter range over which the local Gaussian approximation remains accurate, nor the Kullback-Leibler divergence between the local Gaussian and the empirical distribution of the learned summaries. This quantification is required to support the claim that the resulting summaries are near-optimal for downstream simulation-based inference.

- [Section 5] Weak-lensing experiments (Section 5): All Fisher-information comparisons and the reported generalization under simulator shift are evaluated only near the original fiducial point. The paper does not show how the learned summaries perform when the local Fisher matrix is recomputed at displaced parameter values or under stronger model misspecification, which directly affects the interpretation of the ~80× parameter-efficiency advantage over the unconstrained IMNN baseline.

Minor comments (2)

- [Abstract and Section 5] The abstract states that the learned summaries 'approach' the IMNN baseline; the main text should explicitly tabulate the exact parameter counts of both models and confirm that the IMNN architecture is unconstrained in the same manner as the topological pipeline.

- [Section 3 and Section 5] Notation for the trainable filtration and vectorization parameters is introduced in Section 3, but the precise functional form of the persistence diagram vectorization (e.g., which kernel or binning is used) is not restated in the experimental sections, making it difficult to reproduce the exact pipeline.

Simulated Author's Rebuttal

We thank the referee for their thoughtful and constructive feedback on our manuscript. We address each of the major comments below and outline the revisions we will make to strengthen the paper.

- Referee: [§3, Eq. (4)] The training objective maximizes the log-determinant of the local Gaussian Fisher matrix at a single fiducial cosmology. The manuscript supplies sufficient regularity conditions for local Lipschitz continuity of the loss (Section 3.3) but does not report the parameter range over which the local Gaussian approximation remains accurate, nor the Kullback-Leibler divergence between the local Gaussian and the empirical distribution of the learned summaries. This quantification is required to support the claim that the resulting summaries are near-optimal for downstream simulation-based inference.

  Authors: We agree that providing explicit quantification of the validity of the local Gaussian approximation would enhance the manuscript's support for the near-optimality claim. Although the controlled experiments with known ground-truth Fisher information already indicate substantial information recovery, we will revise the paper to include an analysis of the approximation's accuracy. Specifically, we will compute the Fisher matrix at multiple displaced parameter points and report the KL divergence between the local Gaussian and the empirical distribution of the summaries using additional simulations. This addition will be placed in Section 3 or an appendix.

  Revision: yes

- Referee: [Section 5] Weak-lensing experiments (Section 5): All Fisher-information comparisons and the reported generalization under simulator shift are evaluated only near the original fiducial point. The paper does not show how the learned summaries perform when the local Fisher matrix is recomputed at displaced parameter values or under stronger model misspecification, which directly affects the interpretation of the ~80× parameter-efficiency advantage over the unconstrained IMNN baseline.

  Authors: We acknowledge that the primary evaluations are performed near the fiducial point. The existing simulator-shift experiment from lognormal to LPT-based maps does provide some evidence of generalization under model change. To more directly address the referee's concern, we will add new experiments in the revised manuscript that recompute the Fisher information at displaced cosmological parameters and under stronger misspecification scenarios. These results will help contextualize the parameter-efficiency advantage over the IMNN baseline.

  Revision: yes

Circularity Check

No circularity: objective is external Fisher information; comparisons and generalization tests are independent of the optimization.

The paper defines a differentiable pipeline whose trainable components (filtrations, vectorizations, compressors) are optimized by maximizing the log-determinant of the local Gaussian Fisher matrix computed from simulations at a single fiducial point. This objective is an independently defined information measure, not a self-referential quantity derived from the summaries themselves. Reported performance gains over fixed state-of-the-art cosmological summaries and an unconstrained IMNN baseline follow directly from the fact that those baselines are not optimized under the same objective; the outperformance is therefore a genuine empirical comparison rather than a tautology. Generalization results under simulator shift (lognormal to LPT) and in downstream neural posterior estimation are evaluated on held-out data and different simulators, supplying independent evidence. No self-citations, uniqueness theorems, ansatzes, or renamings appear in the provided text that would reduce any central claim to an input by construction. The derivation is therefore self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

Axioms (1)

- Domain assumption: Sufficient regularity conditions exist such that the log-determinant Fisher loss is locally Lipschitz in the trainable parameters.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear. Quoted passage: "We define the TopoFisher loss as $\widehat{L}_B(\phi) = -\log\left|\widehat{F}^{G}_{\phi,B}(\theta_{\mathrm{fid}})\right|$ ... under boundedness, Lipschitz, and non-degeneracy assumptions, the negative log-determinant Gaussian-Fisher loss is locally Lipschitz in the trainable summary parameters (Theorem 3.2)."

- IndisputableMonolith/Foundation/AlphaCoordinateFixation.lean · alpha_pin_under_high_calibration · unclear. Quoted passage: "Learned topological summaries reach higher Fisher information than state-of-the-art cosmological summaries ... with up to ∼80× fewer parameters."

