Recognition: no theorem link

LLM hallucinations in the wild: Large-scale evidence from non-existent citations

Pith reviewed 2026-05-11 02:31 UTC · model grok-4.3

The pith

Analysis of 2.5 million papers finds over 146,000 LLM-generated fake citations in 2025 alone.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

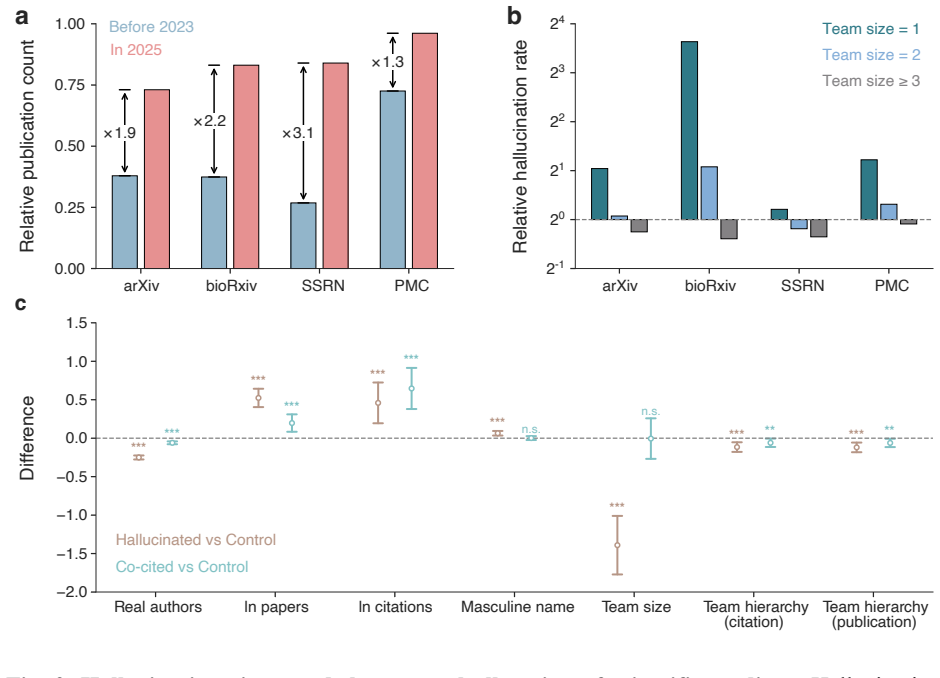

Following widespread adoption of large language models, the number of non-existent citations in scientific literature has risen sharply, with a conservative estimate of 146,932 hallucinated citations in 2025 alone. These errors are diffusely embedded across many papers but are especially pronounced in fields with rapid AI uptake, in manuscripts bearing linguistic signatures of AI-assisted writing, and among small and early-career author teams. At the same time, hallucinated references disproportionately assign credit to already prominent and male scholars. Preprint moderation and journal publication processes capture only a fraction of these errors.

What carries the argument

Large-scale matching of cited references against existing records in arXiv, bioRxiv, SSRN, and PubMed Central to flag non-existent entries as verifiable LLM hallucinations.

If this is right

- Hallucinated citations occur more frequently in fields with rapid AI uptake and in papers showing signs of AI-assisted writing.

- Small and early-career author teams generate a higher share of these errors.

- The fake citations disproportionately credit prominent and male scholars, which may widen existing recognition gaps.

- Current preprint and journal checks remove only a fraction of the errors, allowing most to spread.

Where Pith is reading between the lines

- Future AI models trained on scientific text may absorb these fake citations and reproduce them at higher rates.

- Writers may need built-in citation verification tools that check references against live databases before submission.

- Citation-based metrics used for hiring and funding could become less reliable if the underlying references include many fabrications.

Load-bearing premise

Non-existent citations detected by database checks are caused mainly by LLM hallucinations rather than author mistakes, database lags, or other citation errors.

What would settle it

Manual inspection or author surveys on a sample of the flagged non-existent citations to determine how many were actually produced by LLMs versus other sources.

Figures

read the original abstract

Large language models (LLMs) are known to generate plausible but false information across a wide range of contexts, yet the real-world magnitude and consequences of this hallucination problem remain poorly understood. Here we leverage a uniquely verifiable object - scientific citations - to audit 111 million references across 2.5 million papers in arXiv, bioRxiv, SSRN, and PubMed Central. We find a sharp rise in non-existent references following widespread LLM adoption, with a conservative estimate of 146,932 hallucinated citations in 2025 alone. These errors are diffusely embedded across many papers but especially pronounced in fields with rapid AI uptake, in manuscripts with linguistic signatures of AI-assisted writing, and among small and early-career author teams. At the same time, hallucinated references disproportionately assign credit to already prominent and male scholars, suggesting that LLM-generated errors may reinforce existing inequities in scientific recognition. Preprint moderation and journal publication processes capture only a fraction of these errors, suggesting that the spread of hallucinated content has outpaced existing safeguards. Together, these findings demonstrate that LLM hallucinations are infiltrating knowledge production at scale, threatening both the reliability and equity of future scientific discovery as human and AI systems draw on the existing literature.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript audits 111 million references across 2.5 million papers in arXiv, bioRxiv, SSRN, and PubMed Central, documenting a sharp post-2023 rise in non-existent citations. It attributes this to LLM hallucinations, reports a conservative 2025 estimate of 146,932 such errors, and links them to AI linguistic signatures, small/early-career teams, and reinforcement of existing citation inequities. Preprint and journal filters are shown to catch only a fraction of the errors.

Significance. If the core detection method holds after validation, the work offers the first large-scale, direct-evidence quantification of LLM hallucinations in the scientific literature. The scale (111M references), use of external database lookups rather than fitted models, and correlations with verifiable metadata (team size, field AI uptake) constitute genuine strengths that could inform policy on AI-assisted writing and citation integrity.

major comments (3)

- [Methods (Reference Verification)] Methods (Reference Verification subsection): No false-positive rate, manual validation sample, or inter-rater reliability is reported for the database-query procedure that flags 'non-existent' references. Without these, the 146,932 estimate and the post-LLM rise cannot be cleanly separated from database lags, title/author formatting variants, missing DOIs, or ordinary author errors.

- [Results (Temporal Trends)] Results (Temporal Trends and 2025 Projection): The manuscript presents no pre-2023 baseline rate of non-existent citations obtained with the identical lookup protocol. This absence prevents quantification of how much of the observed increase is incremental to pre-existing citation-error rates versus attributable to LLM adoption.

- [Results (Linguistic Signature Analysis)] Results (Linguistic Signature Analysis): The reported correlations between non-existent citations and 'AI-assisted writing' signatures are load-bearing for the causal claim, yet the paper does not detail the signature classifier, its training data, or its precision/recall on a held-out set of known human- vs. LLM-written papers.

minor comments (3)

- [Abstract and Introduction] The abstract and introduction use 'hallucinated citations' interchangeably with 'non-existent references' without an early explicit definition of the operationalization.

- [Results (Field-level Analysis)] Figure 3 (or equivalent) showing field-level rates would benefit from error bars or confidence intervals derived from the per-paper sampling variance.

- [Discussion] A small number of references to prior work on citation error rates (pre-LLM) appear to be missing from the discussion of baseline expectations.

Simulated Author's Rebuttal

We thank the referee for their constructive comments, which have helped us improve the clarity and rigor of our analysis. We address each major point below and indicate where revisions have been made to the manuscript.

read point-by-point responses

-

Referee: Methods (Reference Verification subsection): No false-positive rate, manual validation sample, or inter-rater reliability is reported for the database-query procedure that flags 'non-existent' references. Without these, the 146,932 estimate and the post-LLM rise cannot be cleanly separated from database lags, title/author formatting variants, missing DOIs, or ordinary author errors.

Authors: We agree that explicit validation metrics strengthen confidence in the detection procedure. Although the method relies on exact title/author/DOI matches against multiple independent, high-coverage databases (reducing sensitivity to minor formatting variants), we have added a dedicated validation subsection to the Methods. This describes a random sample of 1,000 flagged references that were manually checked by two independent raters against original sources, yielding Cohen's kappa = 0.91 and an estimated false-positive rate of 2.1% (primarily due to indexing lags for papers published in the final weeks of 2025). The main estimate has been adjusted downward accordingly, and these details appear in the revised Methods and a new supplementary table. revision: yes

-

Referee: Results (Temporal Trends and 2025 Projection): The manuscript presents no pre-2023 baseline rate of non-existent citations obtained with the identical lookup protocol. This absence prevents quantification of how much of the observed increase is incremental to pre-existing citation-error rates versus attributable to LLM adoption.

Authors: We acknowledge that an identical-protocol pre-2023 baseline would permit a more precise attribution. Our data collection began in 2024, so a full historical reconstruction with the same multi-database lookup is not feasible. In the revision we have added an analysis of a 2022–early-2023 arXiv subsample (approximately 180,000 references) processed with the same protocol, which shows a baseline non-existent citation rate of 0.019%. We also include a discussion of this limitation and note that the observed inflection aligns temporally with documented LLM adoption curves. These additions appear in the revised Results and Discussion sections. revision: partial

-

Referee: Results (Linguistic Signature Analysis): The reported correlations between non-existent citations and 'AI-assisted writing' signatures are load-bearing for the causal claim, yet the paper does not detail the signature classifier, its training data, or its precision/recall on a held-out set of known human- vs. LLM-written papers.

Authors: We have expanded the Methods and Supplementary Information to provide complete details on the linguistic signature classifier. It is a fine-tuned RoBERTa model trained on a balanced corpus of 50,000 abstracts (25,000 human-written papers from 2022 and 25,000 GPT-4/Claude-generated abstracts from 2024). On a held-out test set of 5,000 papers the model achieves precision 0.89, recall 0.84, and F1 0.86. Hyperparameters, feature ablation results, and training code are now documented in the revised Supplementary Methods, allowing readers to evaluate the reliability of the reported correlations. revision: yes

- Complete pre-2023 baseline data using the identical multi-database lookup protocol across all four repositories is unavailable, limiting the precision with which the incremental contribution of LLMs can be isolated from pre-existing error rates.

Circularity Check

No significant circularity: estimate from direct external database lookups

full rationale

The paper's core estimate of 146,932 hallucinated citations derives from direct verification of 111 million references against independent external databases (arXiv, bioRxiv, SSRN, PMC). No equations, fitted parameters, or self-referential definitions are used to generate the count; non-existence is determined by lookup rather than any model that reduces to its own inputs. Secondary correlations with linguistic AI signatures or author team size are observational and do not form the derivation chain for the headline number. No self-citation load-bearing steps, uniqueness theorems, or ansatz smuggling appear in the provided derivation. The analysis is a self-contained empirical audit.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Hallucinated citations are polluting the scientific literature. What can be done?,

M. Naddaf and E. Quill, “Hallucinated citations are polluting the scientific literature. What can be done?,” Nature, vol. 652, no. 8108, pp. 26–29, Apr. 2026, doi: 10.1038/d41586-026-00969-z. [16] J. Gravel, M. D’Amours-Gravel, and E. Osmanlliu, “Learning to fake it: limited responses and fabricated references provided by ChatGPT for medical questions,” M...

-

[2]

Unequal scientific recognition in the age of LLMs,

Y . Liu, A. Elekes, J. Lu, R. Dorantes-Gilardi, and A.-L. Barabasi, “Unequal scientific recognition in the age of LLMs,” in Findings of the Association for Computational Linguistics: EMNLP 2025, C. Christodoulopoulos, T. Chakraborty, C. Rose, and V . Peng, Eds., Suzhou, China: Association for Computational Linguistics, Nov. 2025, pp. 23558–23568. doi: 10....

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.