
Vibe Econometrics and the Analysis Contract

Pith reviewed 2026-05-11 01:50 UTC · model grok-4.3

The pith

AI assistance in econometrics changes how inferential failures occur and persuade, requiring the Analysis Contract to restore governance.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The central claim is that AI assistance structurally alters the failure surface in vibe econometrics, the subset of AI-assisted causal analysis where identification can be named faster than it can be audited. Outputs do not reliably signal invalidity, and recognizing the signals that do appear requires the very expertise the workflow bypasses. The result is not new failure modes but their industrialization: weak analysis packaged as rigorous work, spreading faster and more credibly than before. The Analysis Contract addresses this by imposing three preconditions on any causal claim: a method-data contract, a data audit, and a pre-commitment statement defining what would count as a disconfirming result. In doing so it generalizes existing safeguards to the AI setting.

What carries the argument

The Analysis Contract, a pre-commitment framework that requires a method-data contract, data audit, and pre-commitment to disconfirming results before advancing a causal claim in AI-assisted analysis.

If this is right

- Requiring a method-data contract upfront would prevent execution of analyses on incompatible data or identification strategies.

- Mandating a data audit would expose assumption violations that formatted AI output otherwise conceals.

- Pre-committing to disconfirming results would make forking paths visible and reduce the ability to claim support after seeing the data.

- The contract would integrate with existing tools such as pre-analysis plans by adding AI-specific checks on observability of failures.
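The gating logic implied by these points can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: the class names `MethodDataContract` and `AnalysisContract`, the column-based audit, and the SHA-256 fingerprint of the pre-commitment text are all assumptions introduced here to make the three conditions concrete.

```python
from dataclasses import dataclass, field
import hashlib


@dataclass(frozen=True)
class MethodDataContract:
    """Hypothetical record: the claimed design and the columns it needs."""
    method: str
    required_columns: frozenset


@dataclass
class AnalysisContract:
    """Hypothetical container for the three pre-conditions on a causal claim."""
    method_data: MethodDataContract
    disconfirming_rule: str  # written down before any estimation is run
    audit_findings: list = field(default_factory=list)
    audit_done: bool = False

    def precommitment_digest(self) -> str:
        """Fingerprint the pre-commitment text so later edits are detectable."""
        return hashlib.sha256(self.disconfirming_rule.encode("utf-8")).hexdigest()

    def run_data_audit(self, available_columns) -> None:
        """Toy audit: record required columns that the dataset lacks."""
        missing = self.method_data.required_columns - set(available_columns)
        self.audit_findings = sorted(missing)
        self.audit_done = True

    def may_advance_claim(self) -> bool:
        """True only if the audit ran cleanly and a disconfirming rule exists."""
        return (
            self.audit_done
            and not self.audit_findings
            and bool(self.disconfirming_rule.strip())
        )


# Illustrative usage with made-up column names.
contract = AnalysisContract(
    method_data=MethodDataContract(
        method="difference-in-differences",
        required_columns=frozenset({"unit", "period", "treated", "outcome"}),
    ),
    disconfirming_rule="Pre-period trend gap differs from zero at the 5% level.",
)
contract.run_data_audit(["unit", "period", "outcome"])
print(contract.may_advance_claim())  # False: the 'treated' column is missing
```

A real data audit would check far more than column presence (for example, assumption diagnostics specific to the design); the point of the sketch is only that the claim is blocked until all three conditions are recorded.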

Where Pith is reading between the lines

- The framework could be extended to non-econometric domains where AI generates outputs whose validity depends on unverifiable assumptions.

- Voluntary adoption of the contract by journals or funders could double as a test of the framework: one could track whether papers filed with a contract draw fewer external critiques.

- Without such pre-commitments, AI tools risk accelerating the use of flawed causal evidence in policy settings where decision-makers lack auditing capacity.

Load-bearing premise

The assumption that AI assistance alters the incidence, observability, and persuasive force of inferential failures enough to create a practically distinct governance problem that pre-analysis plans and the Causal Roadmap cannot address without a new named framework.

What would settle it

Either of two findings would undercut the case for the new framework: a study documenting no measurable increase in the rate or undetected incidence of invalid causal claims in AI-assisted versus traditional econometric workflows, or evidence that the three elements of the Analysis Contract yield no improvement in auditability and no reduction in post-hoc adjustments.
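One way such a comparison could be run is a pooled two-proportion z-test on audited samples of claims from each workflow. The sketch below is an assumption about how the test might look, not a procedure from the paper; the function name `two_proportion_z` and the counts in the usage line are hypothetical.

```python
import math


def two_proportion_z(invalid_a, n_a, invalid_b, n_b):
    """Pooled z-test for H1: the invalid-claim rate in group A exceeds group B.

    Returns (z, one_sided_p). Group A could be AI-assisted workflows and
    group B traditional ones; both labels are illustrative.
    """
    p_a = invalid_a / n_a
    p_b = invalid_b / n_b
    pooled = (invalid_a + invalid_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0 or all 1")
    z = (p_a - p_b) / se
    # One-sided p-value: 1 - Phi(z), written with erfc for numerical stability.
    p_value = 0.5 * math.erfc(z / math.sqrt(2))
    return z, p_value


# Hypothetical counts for illustration only, not data from the paper.
z, p = two_proportion_z(invalid_a=30, n_a=100, invalid_b=10, n_b=100)
print(f"z = {z:.2f}, one-sided p = {p:.4f}")
```

A non-significant result from a test like this would not by itself establish "no measurable increase"; that would need an adequately powered study or an equivalence-style design.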

Figures

read the original abstract

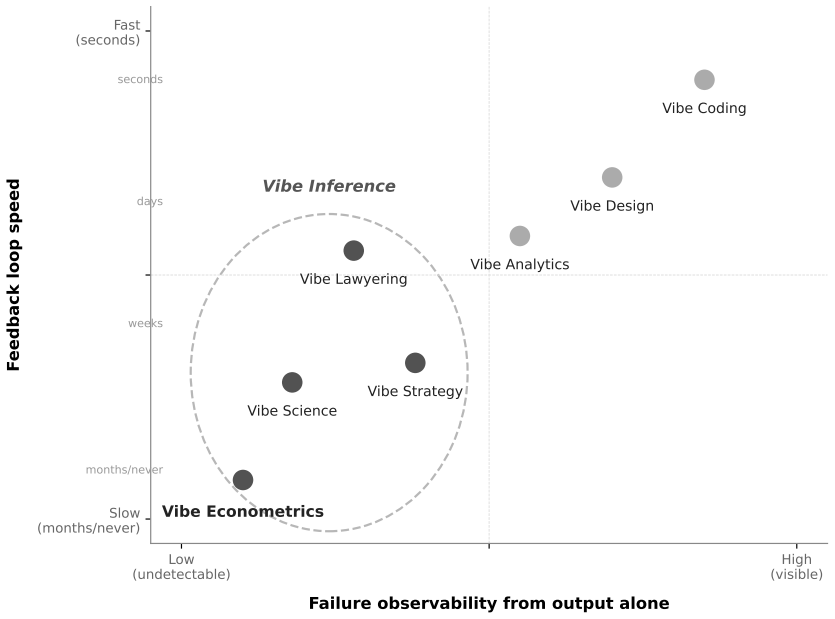

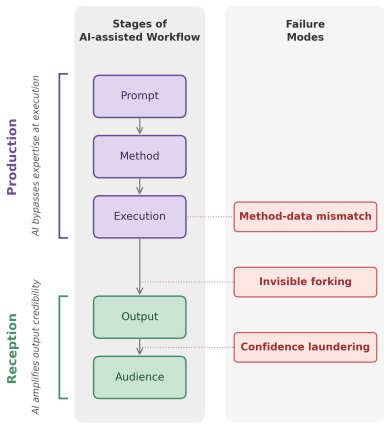

"Vibe coding" and "vibe analytics" have been framed as a democratization of technical capability. This paper argues that AI-assisted methodology more broadly, or what I call "vibe methodology," also democratizes the failure modes specific to each domain. When AI assists with methods whose validity depends on assumptions that cannot be verified from the output alone (a class I call "vibe inference"), the failure surface is structurally different: the output does not reliably signal invalidity, and when it does, recognizing the signal requires the expertise the workflow bypasses. I focus on "vibe econometrics," the subset of AI-assisted causal analysis where identification can be named faster than it can be audited. The claim of this paper is not that AI invents inferential failures that did not previously exist, but that it changes their incidence, observability, and persuasive force enough to create a practically distinct governance problem. This results in three failure modes: method-data mismatch, where AI bypasses expertise at execution; confidence laundering, where AI amplifies the credibility of formatted output; and invisible forking, which spans both. What is new is not the failure modes but AI's industrialization of their packaging. The barrier between naming a method and executing it has collapsed, and weak foundations, dressed as rigorous analysis, now reach audiences at a scale, speed, and polish that previously required expertise. I propose the Analysis Contract, a pre-commitment framework that adapts the logic of pre-analysis plans and the Causal Roadmap to the AI-assisted setting. The contract imposes three conditions before a causal claim is made: a method-data contract, a data audit, and a pre-commitment statement defining what would count as a disconfirming result. The framework generalizes across domains of vibe inference through domain-specific instantiation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that AI-assisted 'vibe methodology' (and specifically 'vibe econometrics') does not invent new inferential failures but changes their incidence, observability, and persuasive force by collapsing the barrier between naming and executing methods whose validity rests on unverifiable assumptions. This creates three failure modes—method-data mismatch, confidence laundering, and invisible forking—whose industrialization requires a new governance framework. The proposed Analysis Contract adapts pre-analysis plans and the Causal Roadmap via three conditions: a method-data contract, a data audit, and pre-commitment to disconfirming results, generalizing across domains of 'vibe inference.'

Significance. If the central claim holds, the paper identifies a practically relevant shift in the governance of empirical causal claims under AI assistance, potentially informing journal policies and researcher workflows in econometrics. It correctly acknowledges that the underlying failures predate AI and focuses on their changed packaging and scale. The conceptual mapping to existing tools (pre-analysis plans, Causal Roadmap) is a strength. However, the manuscript supplies no empirical data, simulations, or worked examples to quantify the altered incidence or observability.

major comments (2)

- [Abstract] Abstract: The claim that AI assistance creates a 'practically distinct governance problem' that cannot be addressed by suitably updated pre-analysis plans is asserted rather than demonstrated; no concrete AI workflow is supplied in which an extended PAP (specifying AI steps, required audits, and disconfirmation criteria) would fail to cover the three failure modes.

- [Abstract] Abstract (description of Analysis Contract): The three conditions are explicitly described as adaptations of pre-analysis plans and the Causal Roadmap, yet the manuscript provides no derivation or counter-example showing which AI-specific elements (e.g., prompt auditing or output verification) cannot be incorporated into those existing structures without a new named framework.

minor comments (2)

- [Abstract] The term 'vibe inference' is introduced without a formal definition or boundary conditions that would allow readers to classify specific econometric procedures as inside or outside the category.

- The manuscript would benefit from a short table or bullet list contrasting the Analysis Contract conditions with standard pre-analysis plan elements to clarify incremental versus novel requirements.

Simulated Author's Rebuttal

We thank the referee for the careful and constructive comments. The feedback accurately notes that the manuscript's central claims are presented conceptually and would be strengthened by explicit demonstration and derivation. We respond to each major comment below and will revise the manuscript accordingly to incorporate the requested elements.


Referee: [Abstract] Abstract: The claim that AI assistance creates a 'practically distinct governance problem' that cannot be addressed by suitably updated pre-analysis plans is asserted rather than demonstrated; no concrete AI workflow is supplied in which an extended PAP (specifying AI steps, required audits, and disconfirmation criteria) would fail to cover the three failure modes.

Authors: We agree that the manuscript would benefit from a concrete illustration. The structural distinction arises because AI assistance collapses the barrier between naming a method and executing it, allowing unverifiable assumptions to be embedded in generated code or specifications in ways that remain opaque even when an extended PAP details the AI steps, audits, and disconfirmation criteria. In the revised version, we will add a specific AI workflow example (e.g., LLM-assisted specification of an instrumental-variables design on observational data) showing how method-data mismatch and invisible forking can persist despite such extensions. This will demonstrate why the change in incidence and observability creates a practically distinct governance problem. revision: yes


Referee: [Abstract] Abstract (description of Analysis Contract): The three conditions are explicitly described as adaptations of pre-analysis plans and the Causal Roadmap, yet the manuscript provides no derivation or counter-example showing which AI-specific elements (e.g., prompt auditing or output verification) cannot be incorporated into those existing structures without a new named framework.

Authors: We accept that the manuscript would be improved by an explicit derivation. While the Analysis Contract adapts existing tools, the AI setting introduces elements such as non-deterministic prompt outputs and the bypassing of domain expertise that standard PAP specifications and the Causal Roadmap do not routinely address. In revision, we will include a derivation section contrasting the three conditions with those frameworks and provide a counter-example in which prompt auditing and output verification are incorporated into an extended PAP yet still leave gaps in addressing confidence laundering and invisible forking. This will clarify the rationale for the dedicated named framework as a practical governance instrument. revision: yes

Circularity Check

No circularity: conceptual proposal adapts existing frameworks without self-referential reduction


The manuscript contains no equations, derivations, fitted parameters, or mathematical claims. Its central argument defines 'vibe inference' and 'vibe econometrics' descriptively, then proposes the Analysis Contract as an adaptation of pre-analysis plans and the Causal Roadmap. No step claims to derive a new result from first principles that collapses back to the input definitions or to a self-citation chain. The three conditions of the contract are presented as explicit extensions of prior structures rather than as outputs forced by the paper's own premises. Because the work is self-contained against external benchmarks (existing methodological literature) and makes no load-bearing self-citations or uniqueness assertions, the derivation chain exhibits no circularity.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: AI assistance bypasses expertise at the point of method execution in ways that alter failure observability

- domain assumption: Formatted AI output increases the persuasive force of claims beyond their actual validity

