SceneFactory: GPU-Accelerated Multi-Agent Driving Simulation with Physics-Based Vehicle Dynamics

Pith reviewed 2026-05-12 01:13 UTC · model grok-4.3

The pith

GPU vectorization lets physics-based multi-agent driving simulation run up to 127 times faster than a non-vectorized baseline on the same GPU and physics solver.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

By expressing multiple independent worlds and their articulated vehicles as GPU tensors, the system runs physics steps, control application, and data collection concurrently across 256 worlds with 16 agents each, reaching 19,250 controlled-agent simulation steps per second. The reported gain is up to 127-fold over the same physics solver run without vectorization. Transfer tests show that policies trained under full physics reach 99.5 percent success when moved to a kinematic bicycle model, while the reverse direction falls to 47.3 percent. Under wet-road friction, friction-aware policies cut the mean peak deceleration rate to avoid a crash (DRAC) from 58.7 to 27.8 m/s².

What carries the argument

Batched tensor representation of worlds, agents, controls, observations, and rewards that allows the physics solver to advance all scenarios simultaneously as GPU operations.
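The batching idea can be illustrated with a minimal sketch, assuming NumPy and a kinematic bicycle model as a stand-in for the physics step. This is not SceneFactory's PhysX path or the Isaac Lab tensor API: the function name `bicycle_step`, the state layout, and the time step `dt` are hypothetical choices made for illustration, and only the 256 × 16 shape and the 2.6 m wheelbase are taken from the paper excerpts.

```python
import numpy as np

def bicycle_step(state, control, wheelbase=2.6, dt=0.05):
    """Advance every agent in every world by one kinematic-bicycle step.

    state:   (worlds, agents, 4) array of [x, y, heading, speed]
    control: (worlds, agents, 2) array of [acceleration, steering angle]
    dt is a hypothetical step size, not a value from the paper.
    """
    # Split the trailing feature axis; each piece keeps shape (worlds, agents).
    x, y, psi, v = np.moveaxis(state, -1, 0)
    accel, steer = np.moveaxis(control, -1, 0)
    x_next = x + v * np.cos(psi) * dt
    y_next = y + v * np.sin(psi) * dt
    psi_next = psi + v / wheelbase * np.tan(steer) * dt
    v_next = v + accel * dt
    return np.stack([x_next, y_next, psi_next, v_next], axis=-1)

worlds, agents = 256, 16
state = np.zeros((worlds, agents, 4))
state[..., 3] = 10.0                      # every agent starts at 10 m/s along +x
control = np.zeros((worlds, agents, 2))   # no acceleration, no steering
next_state = bicycle_step(state, control)
```

No Python loop runs over worlds or agents; one array expression updates all 4,096 vehicles, which is the property the batched representation relies on.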

If this is right

- Reinforcement learning policies trained with full articulated dynamics transfer successfully to simpler kinematic models.

- The reverse transfer from kinematic to physics-based models succeeds at much lower rates.

- Friction-aware policies measurably reduce peak deceleration demands when road conditions change.

- Large numbers of concurrent physics-accurate worlds become practical for training without discarding contact or suspension effects.

Where Pith is reading between the lines

- Training loops could incorporate real road geometry and weather-dependent friction at scales that previously required simplified models.

- The same tensor batching pattern could be tested in other multi-body domains where both parallelism and physical fidelity matter.

- Further increases in world or agent count per GPU might be feasible if memory and kernel launch overhead remain manageable.

Load-bearing premise

The batched tensor operations on the physics solver produce vehicle dynamics, contacts, and friction responses that match those of the non-batched solver within acceptable numerical tolerance.

What would settle it

Run identical control sequences and initial conditions in both the batched vectorized mode and the standard single-world mode, then compare resulting positions, velocities, tire forces, and contact impulses for any divergence larger than floating-point error.
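One way such a comparison could be scripted is sketched below, assuming each run has already been logged as a list of per-step state vectors. The helper names, the tolerance, and the toy trajectories are hypothetical; real logs would come from the batched and single-world simulator runs.

```python
import math

def max_state_l2_error(traj_a, traj_b):
    """Largest per-step Euclidean distance between two equally long trajectories.

    Each trajectory is a list of state vectors (lists of floats).
    """
    if len(traj_a) != len(traj_b):
        raise ValueError("trajectories must have the same number of steps")
    return max(math.dist(a, b) for a, b in zip(traj_a, traj_b))

def within_tolerance(traj_a, traj_b, tol=1e-5):
    """True when the two runs never diverge by more than tol (hypothetical threshold)."""
    return max_state_l2_error(traj_a, traj_b) <= tol

# Toy [x, y, speed] logs standing in for simulator output.
single = [[0.0, 0.0, 10.0], [0.5, 0.0, 10.0], [1.0, 0.0, 10.0]]
batched = [[0.0, 0.0, 10.0], [0.5, 0.0, 10.0], [1.0, 3e-6, 10.0]]
drifted = [[0.0, 0.0, 10.0], [0.5, 0.1, 10.0], [1.0, 0.3, 10.0]]

print(within_tolerance(single, batched))  # small numerical noise
print(within_tolerance(single, drifted))  # visible divergence
```

The same pattern extends to tire forces and contact impulses by logging those quantities alongside the state.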

Figures

read the original abstract

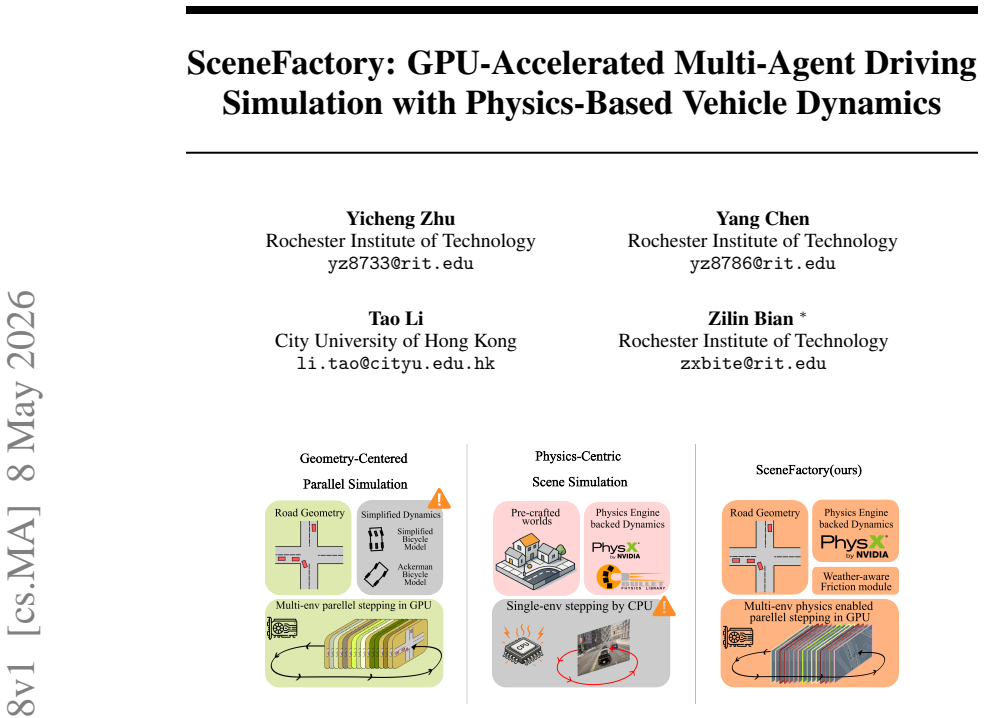

Autonomous-driving simulators typically trade physical fidelity for scalable parallelism. Physics-based platforms such as CARLA and MetaDrive provide articulated vehicle dynamics and contact, but their non-vectorized interfaces make batched training difficult. GPU-batched systems such as Waymax and GPUDrive scale to hundreds of scenarios by replacing rigid-body physics with simplified kinematic models, omitting tire--road interaction, suspension, contact dynamics, and road-condition-dependent friction. We introduce SceneFactory, a GPU-vectorized platform for procedural scene construction, physics-based multi-agent simulation, and RL in autonomous-driving environments. Built on NVIDIA Isaac Sim + Isaac Lab, SceneFactory represents worlds and agents as batched tensors: control, observations, rewards, resets, and policy inference run as GPU tensor operations over the Isaac Lab tensor API. SceneFactory converts Waymo Open Motion Dataset road topologies into simulation-ready USD worlds, runs many worlds concurrently on one GPU, populates each with multiple articulated PhysX vehicles, and maps precipitation and road-surface type to PhysX material friction coefficients. With GPU vectorization, SceneFactory achieves up to 127$\times$ higher throughput than a non-vectorized PhysX baseline on the same GPU and physics solver, reaching 19,250 controlled-agent simulation steps per second at 256 worlds $\times$ 16 agents. Cross-simulator transfer reveals an asymmetric dynamics gap: physics-grounded RL policies transfer to a simplified kinematic bicycle model with 99.5% success, whereas reverse transfer drops to 47.3%. Under wet-road friction, friction-aware policies reduce mean peak DRAC from 58.7 to 27.8,m/s$^2$ without sacrificing goal reach. SceneFactory shows that scalable autonomous-driving training need not discard articulated rigid-body dynamics or physically grounded road-condition variation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. SceneFactory is a GPU-vectorized multi-agent driving simulator built on NVIDIA Isaac Sim and Isaac Lab. It converts Waymo Open Motion Dataset road topologies into USD worlds, populates them with multiple articulated PhysX vehicles, maps road conditions to friction coefficients, and runs batched tensor operations for control, observation, reward, and reset over the Isaac Lab tensor API. The central empirical claims are a 127× throughput increase over a non-vectorized PhysX baseline (reaching 19,250 controlled-agent steps/s at 256 worlds × 16 agents), asymmetric cross-simulator policy transfer (99.5% physics-to-kinematic vs. 47.3% reverse), and reduced peak DRAC (58.7 to 27.8 m/s²) for friction-aware policies on wet roads.

Significance. If the batched PhysX dynamics remain equivalent to the single-instance baseline, the work is significant because it demonstrates that articulated rigid-body physics and road-condition variation can be retained at the scale needed for parallel RL training, rather than defaulting to kinematic approximations as in Waymax or GPUDrive. The concrete throughput numbers, the transfer asymmetry result, and the friction-policy experiment provide direct, falsifiable support for the thesis that scalable autonomous-driving training need not discard physically grounded dynamics.

major comments (2)

- [§5.1] §5.1 (throughput evaluation): The 127× speedup and the claim of preserving full physics-based dynamics both rest on the assumption that the GPU-batched Isaac Lab / PhysX implementation produces numerically identical trajectories and contact forces to the non-vectorized baseline. No quantitative equivalence check (state L2 error, friction-force histograms, or collision-outcome agreement under matched initial conditions and controls) is reported; batching can alter solver convergence or floating-point accumulation even with the same underlying solver.

- [§5.3] §5.3 (cross-simulator transfer): The reported success rates of 99.5% (physics-to-kinematic) and 47.3% (reverse) are presented without the number of evaluation episodes, random seeds, or variance; this makes it impossible to judge whether the asymmetric gap is statistically robust and therefore weakens the supporting evidence for the central claim that physics-grounded policies are meaningfully different.

minor comments (3)

- [Abstract] Abstract: the string '27.8,m/s$^2$' contains a formatting error (missing space and incorrect comma); it should read '27.8 m/s²'.

- [Abstract and §3] The abstract and §3 do not cite the Isaac Lab or PhysX documentation/papers; adding these references would clarify the exact tensor API and solver version used.

- [§5.1 and §5.3] Throughput and transfer tables/figures should report standard deviations or confidence intervals across random seeds to allow readers to assess variability of the 19,250 steps/s and 99.5%/47.3% figures.
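To show why the episode count matters for the transfer results, here is a minimal sketch of a Wilson score interval for a success rate. The episode count of 1,000 per direction is a hypothetical placeholder, since the paper as summarized here does not report it; only the 99.5% and 47.3% rates come from the source.

```python
import math

def wilson_interval(successes, episodes, z=1.96):
    """Wilson score interval for a binomial success rate (z=1.96 is roughly 95%)."""
    if episodes <= 0:
        raise ValueError("episodes must be positive")
    p = successes / episodes
    denom = 1 + z * z / episodes
    center = (p + z * z / (2 * episodes)) / denom
    half = z * math.sqrt(p * (1 - p) / episodes + z * z / (4 * episodes ** 2)) / denom
    return center - half, center + half

# Hypothetical: 1,000 evaluation episodes per transfer direction.
episodes = 1000
physics_to_kinematic = wilson_interval(995, episodes)
kinematic_to_physics = wilson_interval(473, episodes)
print(physics_to_kinematic, kinematic_to_physics)
```

With a much smaller episode count the two intervals widen, which is why the referee asks for the number of episodes and seeds before judging the asymmetry.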

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. The comments identify areas where additional evidence and statistical details will strengthen the manuscript. We address each major comment below and commit to the corresponding revisions.

Referee: [§5.1] §5.1 (throughput evaluation): The 127× speedup and the claim of preserving full physics-based dynamics both rest on the assumption that the GPU-batched Isaac Lab / PhysX implementation produces numerically identical trajectories and contact forces to the non-vectorized baseline. No quantitative equivalence check (state L2 error, friction-force histograms, or collision-outcome agreement under matched initial conditions and controls) is reported; batching can alter solver convergence or floating-point accumulation even with the same underlying solver.

Authors: We agree that a direct quantitative verification of numerical equivalence is necessary to rigorously support the claim that the batched implementation preserves full physics fidelity. Although the underlying PhysX solver, material parameters, and integration settings are identical between the single-instance and batched versions, parallel execution could in principle affect solver convergence or floating-point accumulation. In the revised manuscript we will add an equivalence analysis in §5.1 that reports state L2 errors, contact-force histograms, and collision-outcome agreement under matched initial conditions and controls. This will be performed on a representative set of scenarios drawn from the Waymo Open Motion Dataset.

Revision: yes

Referee: [§5.3] §5.3 (cross-simulator transfer): The reported success rates of 99.5% (physics-to-kinematic) and 47.3% (reverse) are presented without the number of evaluation episodes, random seeds, or variance; this makes it impossible to judge whether the asymmetric gap is statistically robust and therefore weakens the supporting evidence for the central claim that physics-grounded policies are meaningfully different.

Authors: We concur that the absence of evaluation details prevents readers from assessing statistical robustness. The transfer experiments were conducted over a substantial number of episodes and multiple random seeds, yet these quantities and the associated variance were not reported. In the revised manuscript we will explicitly state the number of evaluation episodes, the number of random seeds, and the observed variance (or standard deviation) for both transfer directions, together with any confidence intervals. This information will be added to §5.3.

Revision: yes

Circularity Check

No circularity; all performance and transfer claims are direct empirical measurements against external baselines

The paper is a systems contribution introducing SceneFactory, a GPU-vectorized multi-agent simulator. Its central claims (a 127× throughput improvement, 19,250 steps/s at 256 worlds × 16 agents, asymmetric cross-simulator transfer of 99.5% vs. 47.3%, and DRAC reduction on wet roads) are reported as measured outcomes from running the new system against non-vectorized PhysX and kinematic bicycle baselines. No equations, fitted parameters, self-definitional loops, or load-bearing self-citations appear in the provided text. The numerical-equivalence assumption for batched PhysX is an unverified premise rather than a derivation that reduces to the paper's own inputs by construction. The work is therefore self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (2)

- Domain assumption: PhysX rigid-body dynamics and contact model remain accurate when executed in batched tensor form via Isaac Lab.

- Domain assumption: Precipitation and road-surface labels from Waymo can be mapped to PhysX friction coefficients in a way that produces realistic vehicle behavior.

Reference graph

Works this paper leans on

- [1] FHWA. How Do Weather Events Affect Roads? - FHWA Road Weather Management. URL https://ops.fhwa.dot.gov/weather/roadimpact.htm

- [2] Alexey Dosovitskiy, German Ros, Felipe Codevilla, Antonio Lopez, and Vladlen Koltun. CARLA: An open urban driving simulator. In Proceedings of the 1st Annual Conference on Robot Learning, pages 1–16, 2017

- [3] Quanyi Li, Zhenghao Peng, Lan Feng, Qihang Zhang, Zhenghai Xue, and Bolei Zhou. Metadrive: Composing diverse driving scenarios for generalizable reinforcement learning. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2022

- [4] Cole Gulino, Justin Fu, Wenjie Luo, George Tucker, Eli Bronstein, Yiren Lu, Jean Harb, Xinlei Pan, Yan Wang, Xiangyu Chen, John Co-Reyes, Rishabh Agarwal, Rebecca Roelofs, Yao Lu, Nico Montali, Paul Mougin, Zoey Yang, Brandyn White, Aleksandra Faust, Rowan McAllister, Dragomir Anguelov, and Benjamin Sapp. Waymax: An Accelerated, Data-Driven Simulator for...

- [5] Saman Kazemkhani, Aarav Pandya, Daphne Cornelisse, Brennan Shacklett, and Eugene Vinitsky. GPUDrive: Data-driven, multi-agent driving simulation at 1 million FPS, February 2025. URL http://arxiv.org/abs/2408.01584. arXiv:2408.01584 [cs]

- [6] NVIDIA Corporation. NVIDIA Isaac Sim and Isaac Lab. 2025. https://developer.nvidia.com/isaac-sim

- [7] Kan Chen, Runzhou Ge, Hang Qiu, Rami Al-Rfou, Charles R. Qi, Xuanyu Zhou, Zoey Yang, Scott Ettinger, Pei Sun, Zhaoqi Leng, Mustafa Mustafa, Ivan Bogun, Weiyue Wang, Mingxing Tan, and Dragomir Anguelov. WOMD-LiDAR: Raw sensor dataset benchmark for motion forecasting. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2024

- [8] Lanruo Zhao, Hongduo Zhao, and Juewei Cai. Tire-pavement friction modeling considering pavement texture and water film. International Journal of Transportation Science and Technology, 14:99–109, June 2024. ISSN 20460430. doi: 10.1016/j.ijtst.2023.04.001. URL https://linkinghub.elsevier.com/retrieve/pii/S204604302300031X

- [9] Brennan Shacklett, Luc Guy Rosenzweig, Zhiqiang Xie, Bidipta Sarkar, Andrew Szot, Erik Wijmans, Vladlen Koltun, Dhruv Batra, and Kayvon Fatahalian. An extensible, data-oriented architecture for high-performance, many-world simulation. In ACM Transactions on Graphics (SIGGRAPH), 2023

- [10] Panpan Cai, Yiyuan Lee, Yuanfu Luo, and David Hsu. SUMMIT: A Simulator for Urban Driving in Massive Mixed Traffic, March 2020. URL http://arxiv.org/abs/1911.04074. arXiv:1911.04074 [cs]

- [11] Ming Zhou, Jun Luo, Julian Villella, Yaodong Yang, David Rusu, Jiayu Miao, Weinan Zhang, Montgomery Alban, Iman Fadakar, Zheng Chen, Chongxi Huang, Ying Wen, Kimia Hassanzadeh, Daniel Graves, Zhengbang Zhu, Yihan Ni, Nhat Nguyen, Mohamed Elsayed, Haitham Ammar, Alexander Cowen-Rivers, Sanjeevan Ahilan, Zheng Tian, Daniel Palenicek, Kasra Rezaee, Peyman ...

- [12] Holger Caesar, Juraj Kabzan, Kok Seang Tan, Whye Kit Fong, Eric Wolff, Alex Lang, Luke Fletcher, Oscar Beijbom, and Sammy Omari. NuPlan: A closed-loop ML-based planning benchmark for autonomous vehicles, February 2022. URL http://arxiv.org/abs/2106.11810. arXiv:2106.11810 [cs]

- [13] Eugene Vinitsky, Nathan Lichtlé, Xiaomeng Yang, Brandon Amos, and Jakob Foerster. Nocturne: a scalable driving benchmark for bringing multi-agent learning one step closer to the real world. In S. Koyejo, S. Mohamed, A. Agarwal, D. Belgrave, K. Cho, and A. Oh, editors, Advances in Neural Information Processing Systems, volume 35, pages 3962–3974. Cu...

- [14] URL https://proceedings.neurips.cc/paper_files/paper/2022/file/191e9e721a2748a860714fb23aaf7c5d-Paper-Datasets_and_Benchmarks.pdf

- [15] Jonathan Wilder Lavington, Ke Zhang, Vasileios Lioutas, Matthew Niedoba, Yunpeng Liu, Dylan Green, Saeid Naderiparizi, Xiaoxuan Liang, Setareh Dabiri, Adam Ścibior, Berend Zwartsenberg, and Frank Wood. TorchDriveEnv: A Reinforcement Learning Benchmark for Autonomous Driving with Reactive, Realistic, and Diverse Non-Playable Characters, May 2024. URL http:...

- [16] Chen Chen, Xiaohua Zhao, Hao Liu, Guichao Ren, and Xiaoming Liu. Influence of adverse weather on drivers’ perceived risk during car following based on driving simulations. Journal of Modern Transportation, 27(4):282–292, December 2019. ISSN 2095-087X, 2196-

- [17] doi: 10.1007/s40534-019-00197-4. URL http://link.springer.com/10.1007/s40534-019-00197-4

- [18] Mohamed M. Ahmed and Ali Ghasemzadeh. The impacts of heavy rain on speed and headway Behaviors: An investigation using the SHRP2 naturalistic driving study data. Transportation Research Part C: Emerging Technologies, 91:371–384, June 2018. ISSN 0968-090X. doi: 10.1016/j.trc.2018.04.012. URL https://www.sciencedirect.com/science/article/pii/S0968090X1830487X

- [19] Yichuan Peng, Yuming Jiang, Jian Lu, and Yajie Zou. Examining the effect of adverse weather on road transportation using weather and traffic sensors. PLoS ONE, 13(10):e0205409, October

- [20] ISSN 1932-6203. doi: 10.1371/journal.pone.0205409. URL https://pmc.ncbi.nlm.nih.gov/articles/PMC6191113/

- [21] Zhengqing Li and Baljit Singh Bhathal Singh. Autonomous Driving in Adverse Weather: A Multi-Modal Fusion Framework with Uncertainty-Aware Learning for Robust Obstacle Detection. International Journal of Advanced Computer Science and Applications, 16(8), 2025

- [22] Rui Song, Jon Wetherall, Simon Maskell, and Jason Ralph. Weather Effects on Obstacle Detection for Autonomous Car. volume 2, pages 331–341. SCITEPRESS, May 2020. ISBN 978-989-758-419-0. doi: 10.5220/0009354503310341. URL https://www.scitepress.org/PublishedPapers/2020/93545

- [23] Tao Li, Haozhe Lei, and Quanyan Zhu. Self-adaptive driving in nonstationary environments through conjectural online lookahead adaptation. In 2023 IEEE International Conference on Robotics and Automation (ICRA), pages 7205–7211, 2023. doi: 10.1109/ICRA48891.2023.10161368

- [24] Ivan Fursa, Elias Fandi, Valentina Musat, Jacob Culley, Enric Gil, Izzeddin Teeti, Louise Bilous, Isaac Vander Sluis, Alexander Rast, and Andrew Bradley. Worsening Perception: Real-time Degradation of Autonomous Vehicle Perception Performance for Simulation of Adverse Weather Conditions, July 2021. URL http://arxiv.org/abs/2103.02760. arXiv:2103.02760 [cs]

- [25] Milad Rahmati. Edge AI-Powered Real-Time Decision-Making for Autonomous Vehicles in Adverse Weather Conditions, March 2025. URL http://arxiv.org/abs/2503.09638. arXiv:2503.09638 [cs]

- [26] Amin Jalal Aghdasian, Farzaneh Abdollahi, and Ali Kamali Iglie. Tackling Snow-Induced Challenges: Safe Autonomous Lane-Keeping with Robust Reinforcement Learning, December

- [27]

- [28] C. Daniel Freeman, Erik Frey, Anton Raichuk, Sertan Girgin, Igor Mordatch, and Olivier Bachem. Brax - a differentiable physics engine for large scale rigid body simulation. In NeurIPS Datasets and Benchmarks Track, 2021

- [29] Mayank Mittal, Calvin Yu, Qinxi Yu, Jingzhou Liu, Nikita Rudin, David Hoeller, Jia Lin Yuan, Ritvik Singh, Yunrong Guo, Hammad Mazhar, et al. Orbit: A unified simulation framework for interactive robot learning environments. In IEEE Robotics and Automation Letters (RA-L), 2023

- [30] Chuanyun Fu and Tarek Sayed. Comparison of threshold determination methods for the deceleration rate to avoid a crash (DRAC)-based crash estimation. Accident Analysis & Prevention, 153:106051, April 2021. ISSN 0001-4575. doi: 10.1016/j.aap.2021.106051. URL https://www.sciencedirect.com/science/article/pii/S0001457521000828

- [31] NVIDIA, Niket Agarwal, Arslan Ali, Maciej Bala, Yogesh Balaji, Erik Barker, Tiffany Cai, Prithvijit Chattopadhyay, Yongxin Chen, Yin Cui, Yifan Ding, Daniel Dworakowski, Jiaojiao Fan, Michele Fenzi, Francesco Ferroni, Sanja Fidler, Dieter Fox, Songwei Ge, Yunhao Ge, Jinwei Gu, Siddharth Gururani, Ethan He, Jiahui Huang, Jacob Huffman, Pooya Jannaty, Jingy... Cosmos World Foundation Model Platform for Physical AI, 2025

- [32] Re-centering. A scene center is computed as the mean of all road polyline points. All coordinates (road and agent) are shifted so that each scenario is locally centered at the origin.

- [33] Z-flattening. Waymo road elevation data is collapsed to a constant ground height ($z = 0$). Scenes with significant 3D structure (bridges, ramps) are filtered during curation (Appendix I).

- [34] Segment generation. Each consecutive pair of polyline points defines an oriented box segment placed at the midpoint and rotated to align with the point-to-point direction (width 0.10 m, height 0.10 m). Pairs separated by more than 3.0 m are treated as discontinuities and skipped. Both endpoints must fall within $\pm B/2$ of the scene center ($B = 200$ m).

- [35] USD prim creation. Segments are grouped by polyline type and rendered as a PointInstancer with a single unit-cube prototype; per-segment position, orientation, and scale arrays encode the geometry compactly. The reconstructed Waymo Open Motion Dataset scenes are illustrated in Figure 5.

- [36] Metadata baking. Five arrays are written to the world root prim’s USD customData: segment midpoint positions, direction vectors, polyline type codes, half-lengths, and half-widths. This flat, GPU-friendly format enables constant-time runtime access without re-parsing the JSON or traversing the USD prim hierarchy. Multi-world grid assembly. SceneFactory arran...

- [37] Goal position $(g^b_x, g^b_y)$, each divided by the scene bounding-box half-size ($B/2 = 100$ m).

- [38] Heading error to the goal, encoded as $(\sin \Delta\psi, \cos \Delta\psi)$.

- [39] Euclidean goal distance $\lVert g^b_{xy} \rVert$, divided by $B/2$.

- [40] Body-frame velocity $(v^b_x, v^b_y)$, each divided by 10 m/s. When the weather-to-friction module is active, the 4-dim weather context (Equation 2) is appended to the ego group, yielding an 11-dim input to the ego encoder. Road geometry context ($K_r \times 5 = 1{,}750$-dim). Each agent selects $K_r = 350$ road-segment midpoints from the pre-loaded GPU metadata tensors (§...

- [41] Relative position $(\Delta x^b, \Delta y^b)$ in ego body frame, each divided by $r$.

- [42] Polyline type code, divided by a normalizer (= 20).

- [43] Road direction unit vector $(d^b_x, d^b_y)$ in ego body frame. Neighbor vehicles ($K_v \times 7 = 168$-dim). The $K_v = 24$ nearest alive agents (by Euclidean distance in world XY) are selected per ego agent. Self-distance and dead-agent distances are set to infinity before the argsort. Each neighbor contributes seven features:

- [44] Relative position $(\Delta x^b, \Delta y^b)$ in ego body frame, each divided by $B/2$.

- [45] Chassis length and width, each divided by $B/2$.

- [46] Relative heading $\Delta\psi$, divided by $\pi$.

- [47] Speed $\lVert v_{xy} \rVert$, divided by 10 m/s.

- [48] Pairwise time-to-collision (TTC), computed via a swept-circle test. Each vehicle is approximated by three equal-radius circles placed along the longitudinal axis at offsets $\{-d, 0, +d\}$ from the chassis centre, where $r = \max(0.45,\, 0.55\,W)$ and $d = \min\!\left(\tfrac{\mathrm{WB}}{2},\, \max\!\left(0,\, \tfrac{L}{2} - 0.8\,r\right)\right)$. For the default vehicle ($L = 4.0$ m, $W = 2.0$ m, $\mathrm{WB} = 2.6$ m) this gives $r \approx 1.10$ m and $d \approx 1.12$ m....

- [49] Collision ($-6.0$). Triggered when the maximum contact-sensor force exceeds 25 N. A warm-up window of 24 steps after spawn suppresses false positives from initial settling.

- [50] Crash ($-10.0$). Triggered when the vehicle falls below the ground plane ($z_{\mathrm{rel}} < 0$), drifts more than 100 m from its start position, or tilts beyond a gravity-vector threshold ($g^b_z > -0.15$, indicating a rollover or severe pitch).

- [51] Lane-forbidden ($-20.0$). Triggered when any of the vehicle’s three bounding circles overlaps a road-edge segment (Waymo types 15–16), detected via an oriented-bounding-box overlap test. After termination, the agent is teleported off-stage and all subsequent dense rewards are masked to zero. Dense shaping rewards. Six terms provide per-step signal for active agents:

- [52] Route progress (weight $w = 2.0$). The displacement between consecutive steps is projected onto the tangent of the nearest driving-lane segment (Waymo types 1–2), oriented toward the goal: $r_{\mathrm{prog}} = \mathrm{clamp}\!\left((p_t - p_{t-1}) \cdot \hat{t}_{\mathrm{lane}},\, -2,\, +2\right) \cdot w$. This rewards forward progress along the route and penalizes backwards motion.

- [53] Geometric lane-keeping (weight $w = 0.08$). A continuous quality score combines lateral offset and heading alignment with the nearest driving lane: $q = \exp\!\left(-(\ell/\sigma)^2\right) \cdot (1 - w_h) + w_h \cdot \max\!\left(0, \cos(\psi - \psi_{\mathrm{lane}})\right)$, where $\ell$ is the signed lateral offset, $\sigma = 1.75$ m is the tolerance, and $w_h = 0.8$ is the heading weight. The reward is $r_{\mathrm{lane}} = q \cdot w$.

- [54] Off-road penalty (weight $w = -0.5$). Applied when the vehicle’s lateral offset exceeds 3.25 m or its distance to the nearest lane exceeds 6.0 m.

- [55] Idle penalty (weight $w = -0.05$). Applied when the vehicle speed drops below 1.0 m/s, discouraging the policy from learning to stop and wait.

- [56] Inter-vehicle TTC penalty. The minimum pairwise TTC across all neighbors is converted to a penalty: $p(\tau) = \min\!\left(\alpha_v / \max(\tau, \tau_{\mathrm{floor}}),\, p_{\max,v}\right)$, with $\alpha_v = 0.10$, $p_{\max,v} = 0.35$, and $\tau_{\mathrm{floor}} = 0.5$ s. The reward is $r_{\mathrm{ttc}} = -p(\tau)$.

  Table 7: Reward components used in all experiments. Sparse rewards are delivered once and trigger agent termination; dense rewards are ap...

- [57] Road-edge TTC penalty. The same $\alpha/\tau$ penalty form is applied to the closest road-edge segment within 40 m ahead: $\alpha_e = 0.07$, $p_{\max,e} = 0.40$, $\tau_{\mathrm{floor}} = 0.5$ s. This encourages the policy to slow down when approaching road boundaries. Termination and time-out. An agent terminates upon any sparse event (goal, collision, crash, or lane-forbidden). If no event occurs...

- [58] Refinement: tunes all 20 parameters jointly on all rollouts with a narrowed search window (18% of the full range).

- [59] Brake preservation: re-tunes longitudinal parameters with a tight window (10%) to prevent regression. Each stage inherits the best configuration from the previous stage. The CEM uses a population of 24 candidates, an elite fraction of 25%, an initial standard deviation of 25% of each parameter’s range, and a minimum standard deviation of 5% to prevent pre...

- [60] Batched joint-state tensors. The custom URDF articulated vehicle (§3.2) exposes positions, velocities, and orientations as GPU-resident tensors of shape (num_envs, num_agents, d) via Isaac Lab’s Articulation API. Observation construction reduces to a handful of batched torch operations with no Python per-agent loop.

  Table 10: Per-step timing breakdown at 6...

- [61] Cloned PhysX scenes. Isaac Lab’s environment cloning creates N independent PhysX solver islands, enabling the GPU broad-phase and narrow-phase to dispatch them in parallel. The Baseline places all worlds in a single PhysX scene, limiting solver parallelism.

- [62] GPU-resident training loop. RSL-RL reads observations, rewards, and dones as CUDA tensors directly from the environment; no CPU round-trip occurs during training. The Baseline converts observations to NumPy for Stable-Baselines3’s rollout buffer, incurring O(agents × obs_dim) bytes of device-to-host transfer every step.

  K Friction Awareness Ablation

  We compa...
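The swept-circle geometry and the inter-vehicle TTC penalty quoted in the appendix excerpts above can be checked with a short sketch. The function names are hypothetical and the formulas are transcribed from the excerpts as read here, so this is an illustration under those assumptions and not the authors' implementation.

```python
def collision_circles(length, width, wheelbase):
    """Radius r and longitudinal offset d of the three bounding circles.

    Transcribes r = max(0.45, 0.55 W) and d = min(WB / 2, max(0, L / 2 - 0.8 r)).
    """
    r = max(0.45, 0.55 * width)
    d = min(wheelbase / 2, max(0.0, length / 2 - 0.8 * r))
    return r, d

def ttc_penalty(ttc, alpha=0.10, p_max=0.35, ttc_floor=0.5):
    """Penalty p(tau) = min(alpha / max(tau, tau_floor), p_max); the reward is -p(tau)."""
    return min(alpha / max(ttc, ttc_floor), p_max)

# Default vehicle from the excerpt: L = 4.0 m, W = 2.0 m, WB = 2.6 m.
r, d = collision_circles(length=4.0, width=2.0, wheelbase=2.6)
print(f"r = {r:.2f} m, d = {d:.2f} m")  # r = 1.10 m, d = 1.12 m
print(f"TTC reward at 2.0 s: {-ttc_penalty(2.0):.3f}")
```

The printed radius and offset agree with the approximate values stated for the default vehicle, which supports the reconstruction of the garbled formula for d.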
