Thin-Client Interactive Gaussian Adaptive Streaming over HTTP/3

Pith reviewed 2026-05-12 01:12 UTC · model grok-4.3

The pith

TIGAS offloads 3D Gaussian Splatting rendering to a backend and streams adapted 2D views over HTTP/3 to enable interactive experiences on thin clients.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

TIGAS is a thin-client remote rendering framework for 3D Gaussian Splatting (3DGS). It streams view-dependent 2D projections over QUIC to minimize head-of-line blocking, and a dedicated adaptive bitrate (ABR) algorithm adapts rendering quality to fluctuating network conditions so that motion-to-photon latency stays within strict 6DoF interactive constraints. The backend renders each frame in under 10 milliseconds, and the system achieves an average SSIM of 0.88 across 14 models driven by real movement traces. An experimental WebGPU super-resolution pipeline is included to study the trade-off between perceptual quality and thin-client processing load.
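The head-of-line blocking argument can be illustrated with a toy model. The sketch below is not taken from the paper: the function name `delivery_times`, the packet trace, and the timings are all hypothetical, and the model ignores congestion control, flow control, and framing. It only shows why a single retransmission stalls every stream when the whole connection shares one ordered byte stream, but stalls only the affected stream when ordering is per stream, as in QUIC.

```python
def delivery_times(packets, per_stream_ordering):
    """Return the time each packet becomes readable by the application.

    packets: list of (stream_id, arrival_ms) tuples in send order.
    per_stream_ordering: False models one ordered byte stream for the whole
    connection (TCP-like); True models independent ordered streams (QUIC-like).
    """
    latest_arrival = {}  # ordering domain -> latest arrival seen so far
    delivered = []
    for stream_id, arrival_ms in packets:
        domain = stream_id if per_stream_ordering else "connection"
        # A packet cannot be delivered before everything sent ahead of it
        # in the same ordering domain has arrived.
        latest_arrival[domain] = max(latest_arrival.get(domain, 0.0), arrival_ms)
        delivered.append(latest_arrival[domain])
    return delivered


# Hypothetical trace: the third packet (stream "A") is lost and retransmitted,
# so it arrives late at 60 ms.
trace = [("A", 10.0), ("B", 11.0), ("A", 60.0), ("B", 13.0), ("B", 14.0)]
tcp_like = delivery_times(trace, per_stream_ordering=False)
quic_like = delivery_times(trace, per_stream_ordering=True)
```

In this toy trace the late packet on stream "A" delays the later packets of stream "B" only in the connection-ordered case.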

What carries the argument

The ABR algorithm that dynamically adjusts rendering quality based on network conditions while enforcing 6DoF latency limits.
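A minimal sketch of this kind of latency-constrained quality selection follows. It is not the TIGAS algorithm, whose details are not given on this page. The quality ladder, the frame sizes, the function name `select_quality`, and the latency model (round-trip time plus render time plus transfer time of one frame) are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualityLevel:
    name: str
    frame_bytes: int  # hypothetical encoded size of one frame at this level


# Hypothetical ladder, ordered from lowest to highest quality.
LADDER = [
    QualityLevel("low", 20_000),
    QualityLevel("medium", 60_000),
    QualityLevel("high", 150_000),
]


def select_quality(bandwidth_bps, rtt_ms, render_ms, budget_ms, ladder=LADDER):
    """Return the highest level whose estimated latency fits the budget.

    Estimated latency = RTT + render time + transfer time of one frame.
    Falls back to the lowest level when no level fits.
    """
    best = ladder[0]
    for level in ladder:
        transfer_ms = level.frame_bytes * 8 / bandwidth_bps * 1000
        if rtt_ms + render_ms + transfer_ms <= budget_ms:
            best = level
    return best
```

For example, with an assumed 100 Mbit/s link, 5 ms round-trip time, 8 ms render time, and a 20 ms budget, the sketch picks the "medium" level, because the "high" frame alone would take 12 ms to transfer.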

If this is right

- Resource-constrained devices can access photorealistic 3D scenes without local high-end GPUs.

- Interactive 6DoF navigation stays responsive even with varying network quality.

- Perceptual quality remains high with an average SSIM of 0.88 in tested scenarios.

- Client-side super-resolution trades perceptual quality gains against added processing load on the thin client.

- The system provides a practical platform for experimenting with 3DGS delivery methods.
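For readers unfamiliar with the SSIM figure quoted above, the sketch below computes a simplified, single-window SSIM between two grayscale images given as flat pixel lists. This is an illustration of the metric's standard formula, not the paper's evaluation code: practical SSIM implementations average the statistic over local windows, and the function name `global_ssim` is hypothetical. The constants `k1 = 0.01` and `k2 = 0.03` are the values commonly used with the metric.

```python
from statistics import fmean


def global_ssim(x, y, dynamic_range=255.0, k1=0.01, k2=0.03):
    """Single-window SSIM over two equal-length grayscale pixel sequences."""
    x, y = list(x), list(y)
    if len(x) != len(y) or not x:
        raise ValueError("inputs must be non-empty and the same length")
    # Stabilizing constants derived from the pixel dynamic range.
    c1 = (k1 * dynamic_range) ** 2
    c2 = (k2 * dynamic_range) ** 2
    mu_x, mu_y = fmean(x), fmean(y)
    var_x = fmean([(a - mu_x) ** 2 for a in x])
    var_y = fmean([(b - mu_y) ** 2 for b in y])
    cov = fmean([(a - mu_x) * (b - mu_y) for a, b in zip(x, y)])
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator
```

Identical inputs score 1, and the score drops as luminance, contrast, or structure diverge.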

Where Pith is reading between the lines

- Extending the approach to other volumetric rendering techniques could broaden its applicability.

- Lowering client-side demands might enable longer battery life in mobile XR use cases.

- Consistent performance across continents suggests robustness for global applications.

- Combining with emerging web standards could further reduce deployment barriers.

Load-bearing premise

The assumption that an adaptive bitrate algorithm can reliably match rendering quality to network changes without pushing latency beyond interactive 6DoF limits or burdening the thin client.

What would settle it

A scenario with sudden network drops where either the end-to-end latency exceeds interactive thresholds or the structural similarity index falls well below the reported average.

Figures

read the original abstract

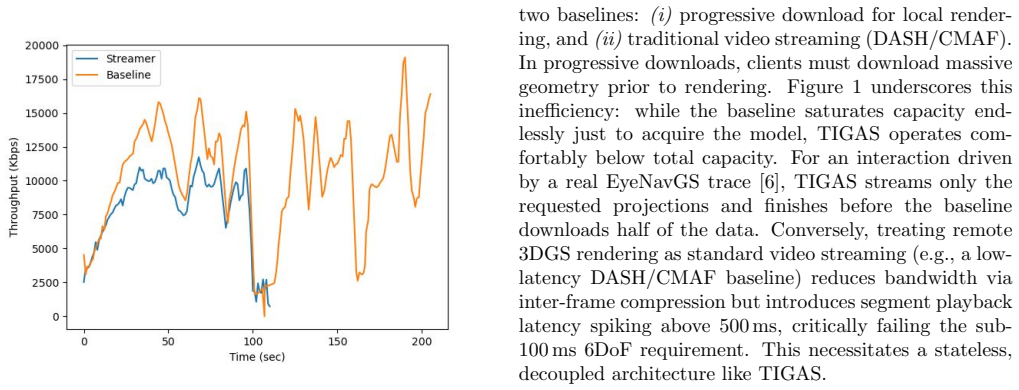

Recent advancements in 3D Gaussian Splatting (3DGS) have enabled photorealistic rendering of complex scenes, yet widespread adoption on mobile and Extended Reality (XR) devices is hindered by substantial computational and bandwidth requirements. While existing solutions often focus on model compression for client-side rendering, they still demand significant GPU power, limiting applicability on resource-constrained hardware. We propose TIGAS (Thin-client Interactive Gaussian Adaptive Streaming), a remote rendering framework offloading rasterization to a backend. To bypass the prohibitive latencies connected to fluctuating network conditions, TIGAS streams view-dependent 2D projections to a lightweight web client over QUIC, minimizing head-of-line (HoL) blocking. A dedicated ABR algorithm adapts rendering quality to fluctuating network conditions, maintaining motion-to-photon latency within strict 6DoF interactive constraints. Furthermore, we discuss the integration of an experimental WebGPU super-resolution pipeline to analyze the trade-offs between perceptual quality enhancements and thin-client processing bottlenecks. We extensively evaluate TIGAS across multi-continental environments using 14 3DGS models and real 6DoF EyeNavGS movement traces. Powered by a backend rendering frames in under 10 milliseconds, TIGAS maintains latency within interactive thresholds while achieving an average SSIM of 0.88, serving both as a robust testbed for 3DGS streaming research and a capable delivery system. The source code is available at: https://github.com/Rekenar/GaussianAdaptiveStreamer.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces TIGAS, a thin-client remote rendering framework for 3D Gaussian Splatting (3DGS) models that offloads rasterization to a backend server and streams view-dependent 2D projections to a lightweight web client over QUIC (HTTP/3) to minimize head-of-line blocking. A custom ABR algorithm adapts rendering quality to network conditions while targeting motion-to-photon latency suitable for 6DoF interactive use; an optional WebGPU super-resolution stage is also evaluated for quality-latency trade-offs. The system is tested across 14 3DGS models and real EyeNavGS 6DoF traces in multi-continental settings, reporting backend render times under 10 ms, average SSIM of 0.88, and latency remaining within interactive thresholds.

Significance. If the latency and adaptation claims are substantiated, TIGAS would provide a practical path for deploying photorealistic 3DGS on mobile/XR hardware that cannot run full models locally, while the open-source release (https://github.com/Rekenar/GaussianAdaptiveStreamer) supplies a reproducible testbed for future 3DGS streaming research. The use of real movement traces and multi-continental paths strengthens external validity relative to synthetic evaluations.

major comments (2)

- [§4] §4 (Evaluation) and associated figures/tables: the central claim that TIGAS 'maintains latency within interactive thresholds' (abstract and §1) is supported only by aggregate latency and SSIM figures. No 95th/99th-percentile end-to-end motion-to-photon latencies, no fraction of frames exceeding the stated threshold, and no per-trace adaptation reaction times under controlled bandwidth variance are reported. These omissions make it impossible to verify that the ABR never violates the 15–20 ms 6DoF budget under realistic fluctuations.

- [§3.2] §3.2 (ABR algorithm): the description of how the ABR selects quality levels and reacts to network changes lacks quantitative characterization of its decision latency and stability. Without measured round-trip times from pose capture to displayed frame (or at least the distribution of adaptation intervals) when bandwidth is artificially varied, the weakest assumption identified in the review—that ABR consistently respects 6DoF constraints—remains untested.
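The tail statistics requested in the major comments can be computed from a list of per-frame motion-to-photon latencies. The sketch below is a hedged illustration, not the authors' tooling: the function names `percentile` and `tail_report` are hypothetical, the nearest-rank percentile definition is one of several in common use, and the threshold is a caller-supplied assumption.

```python
import math


def percentile(values, p):
    """Nearest-rank percentile of a non-empty sequence, for p in (0, 100]."""
    if not values:
        raise ValueError("values must be non-empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def tail_report(latencies_ms, threshold_ms):
    """Summarize tail latency and the share of frames over the threshold."""
    over = sum(1 for v in latencies_ms if v > threshold_ms)
    return {
        "p95_ms": percentile(latencies_ms, 95),
        "p99_ms": percentile(latencies_ms, 99),
        "fraction_over": over / len(latencies_ms),
    }
```

Reporting `fraction_over` alongside the percentiles shows how often the budget is missed as well as how badly.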

minor comments (2)

- [Abstract] Abstract: the phrase 'maintaining motion-to-photon latency within strict 6DoF interactive constraints' should be accompanied by the numerical threshold used (e.g., 20 ms) for clarity.

- [§5] §5 (WebGPU super-resolution): the trade-off analysis would benefit from explicit per-frame client-side processing times rather than qualitative discussion of 'lightweight' bottlenecks.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback on our manuscript. The comments on the evaluation section and ABR characterization are well-taken, and we will revise the paper to provide stronger quantitative support for the latency claims while preserving the existing results from real 6DoF traces and multi-continental tests.

Referee: [§4] §4 (Evaluation) and associated figures/tables: the central claim that TIGAS 'maintains latency within interactive thresholds' (abstract and §1) is supported only by aggregate latency and SSIM figures. No 95th/99th-percentile end-to-end motion-to-photon latencies, no fraction of frames exceeding the stated threshold, and no per-trace adaptation reaction times under controlled bandwidth variance are reported. These omissions make it impossible to verify that the ABR never violates the 15–20 ms 6DoF budget under realistic fluctuations.

Authors: We agree that aggregate statistics alone leave room for stronger verification of tail behavior under network fluctuations. The current evaluation already demonstrates average end-to-end latencies well below the 15–20 ms budget across 14 models and real EyeNavGS traces, with backend rendering consistently under 10 ms. To directly address the concern, the revised manuscript will add 95th- and 99th-percentile motion-to-photon latencies, the fraction of frames exceeding the threshold, and per-trace adaptation reaction times. We will also include new controlled-bandwidth-variance experiments that replay the traces while injecting realistic fluctuations, allowing explicit measurement of ABR reaction intervals.

Revision: yes

Referee: [§3.2] §3.2 (ABR algorithm): the description of how the ABR selects quality levels and reacts to network changes lacks quantitative characterization of its decision latency and stability. Without measured round-trip times from pose capture to displayed frame (or at least the distribution of adaptation intervals) when bandwidth is artificially varied, the weakest assumption identified in the review—that ABR consistently respects 6DoF constraints—remains untested.

Authors: The ABR in §3.2 selects quality levels using estimated available bandwidth and target latency, with the overall system evaluation showing that motion-to-photon latency remains interactive. We acknowledge that explicit per-decision timing and stability metrics under controlled variance would strengthen the presentation. In the revision we will add (i) measured decision latency of the ABR, (ii) distributions of adaptation intervals, and (iii) round-trip time statistics from pose capture to displayed frame, all obtained from the same real traces augmented with artificial bandwidth variation experiments. These additions will be placed in an expanded §4.

Revision: yes
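One way to quantify the adaptation reaction time discussed in this exchange is to log the quality level chosen over time and measure how long the controller takes to step down after an injected bandwidth drop. The sketch below assumes a hypothetical log format of `(timestamp_ms, quality_index)` pairs and a known drop time. The function name `reaction_time_ms` is hypothetical, and nothing here reflects the authors' actual instrumentation.

```python
def reaction_time_ms(samples, drop_time_ms):
    """Delay between a bandwidth drop and the first quality step-down.

    samples: list of (timestamp_ms, quality_index) sorted by time, where a
    larger index means higher quality.
    Returns None if the controller never selects a level below the one in
    effect just before the drop.
    """
    before = [q for t, q in samples if t < drop_time_ms]
    if not before:
        raise ValueError("no sample precedes the drop")
    baseline = before[-1]  # quality in effect when the drop happens
    for t, q in samples:
        if t >= drop_time_ms and q < baseline:
            return t - drop_time_ms
    return None
```

Collecting this value over many injected drops would give the distribution of adaptation intervals mentioned in the response.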

Circularity Check

No circularity: TIGAS is an empirical system evaluated on external traces and models.

The paper proposes TIGAS as a remote rendering framework with an ABR algorithm for 3DGS streaming over QUIC, evaluated directly on 14 models and real EyeNavGS 6DoF traces. Reported results (backend render <10 ms, average SSIM 0.88, latency within thresholds) are implementation measurements, not predictions or derivations that reduce to fitted inputs or self-citations by construction. No equations, uniqueness theorems, or ansatzes are presented that loop back to the paper's own data or prior self-work in a load-bearing way. The evaluation is externally falsifiable via the released code and traces.

Axiom & Free-Parameter Ledger

free parameters (1)

- ABR parameters
