Mutual Information Optimal Density Control of Linear Systems and Generalized Schrödinger Bridges with Reference Refinement

Pith reviewed 2026-05-12 04:45 UTC · model grok-4.3

The pith

Alternating optimization of mutual information optimal density control for discrete-time linear systems coincides with generalized Schrödinger bridge optimization.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

For a discrete-time linear system, the alternating optimization algorithm that solves the mutual information regularized optimal density control problem with Gaussian density constraints at specified times is exactly the same as the alternating optimization algorithm for the generalized Schrödinger bridge problem associated with the same linear system.

What carries the argument

Alternating optimization with closed-form steps derived from the linear dynamics, mutual information objective, and Gaussian marginal constraints.
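One standard identity helps explain why an alternating scheme is natural for a mutual information objective. The notation here is assumed for illustration and is not taken from the paper: $p$ is the state density, $\pi(u \mid x)$ a stochastic policy, and $\rho$ a prior over inputs.

```latex
I(x;u) \;=\; \min_{\rho}\; \mathbb{E}_{x\sim p}\!\left[ D_{\mathrm{KL}}\big(\pi(\cdot\mid x)\,\big\|\,\rho\big) \right],
\qquad
\rho^{\star}(u) \;=\; \int \pi(u\mid x)\,p(x)\,\mathrm{d}x .
```

With the policy fixed, the minimizing prior is the input marginal; with the prior fixed, what remains is a KL-regularized control problem in the policy. Whether the paper organizes its two steps exactly this way is not stated in this summary.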

If this is right

- Each iteration of the algorithm admits an explicit closed-form solution.

- Gaussian constraints directly bound the uncertainty of the state trajectory.

- Methods developed for generalized Schrödinger bridges can be transferred to mutual information optimal control.

- Reference measure refinement becomes available inside the density control loop.

Where Pith is reading between the lines

- The same equivalence may hold after time discretization of continuous-time linear systems.

- Numerical schemes from Schrödinger bridge literature could accelerate convergence for the density control problem.

- Safety specifications could be encoded by tightening the Gaussian covariance bounds at critical times.
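The covariance-tightening idea in the last item can be made concrete as a positive-semidefinite check. This is a minimal sketch: the function name `satisfies_cov_bound`, the tolerance, and the example matrices are all hypothetical, and the paper itself imposes Gaussian marginals rather than inequality bounds.

```python
import numpy as np

def satisfies_cov_bound(cov, cov_max, tol=1e-10):
    """Return True if cov_max - cov is positive semidefinite (Loewner order)."""
    gap = cov_max - cov
    gap = 0.5 * (gap + gap.T)  # symmetrize to guard against round-off
    return bool(np.linalg.eigvalsh(gap).min() >= -tol)

# Placeholder state covariance at a "critical" time (illustrative values only).
cov = np.array([[0.5, 0.1],
                [0.1, 0.3]])

loose_bound = np.eye(2)          # a permissive bound
tight_bound = 0.25 * np.eye(2)   # a tightened bound

print(satisfies_cov_bound(cov, loose_bound))
print(satisfies_cov_bound(cov, tight_bound))
```

Checking the smallest eigenvalue of the symmetrized gap is one common way to test the matrix inequality; a Cholesky attempt would be an alternative.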

Load-bearing premise

The underlying dynamics must be discrete-time and linear, and the density constraints must be Gaussian at fixed times.
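The premise matters because a Gaussian state stays Gaussian under linear dynamics with a linear-Gaussian policy, so only a mean and a covariance need to be tracked. The sketch below illustrates that standard fact; the system matrices, the gain `K`, and the input noise covariance `Sigma_w` are placeholder values, not quantities from the paper.

```python
import numpy as np

def propagate_gaussian(A, B, K, Sigma_w, mean, cov):
    """One step of x_next = A x + B u with u = K x + w, w ~ N(0, Sigma_w).

    If x ~ N(mean, cov), then x_next is Gaussian with the returned moments.
    """
    A_cl = A + B @ K                       # closed-loop matrix
    next_mean = A_cl @ mean
    next_cov = A_cl @ cov @ A_cl.T + B @ Sigma_w @ B.T
    return next_mean, next_cov

# Hypothetical two-dimensional example (double-integrator-like system).
A = np.array([[1.0, 0.1],
              [0.0, 1.0]])
B = np.array([[0.0],
              [0.1]])
K = np.array([[-1.0, -1.5]])
Sigma_w = np.array([[0.2]])

mean = np.array([1.0, 0.0])
cov = np.eye(2)
for _ in range(10):
    mean, cov = propagate_gaussian(A, B, K, Sigma_w, mean, cov)

print(mean)
print(cov)
```

With nonlinear dynamics or non-Gaussian constraints, this two-moment recursion would no longer describe the full state density.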

What would settle it

For a two-dimensional linear system with two specified time instants and given Gaussian marginals, compute one full cycle of iterates from both the MI density control formulation and the generalized Schrödinger bridge formulation and check whether the control inputs and density parameters match to machine precision.
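A comparison of this kind could be scripted roughly as follows. This is a sketch under stated assumptions: the helper names, the dictionary layout of an iterate, and the absolute tolerance are hypothetical, and the arrays are placeholders rather than outputs of either algorithm.

```python
import numpy as np

def max_param_gap(iterates_a, iterates_b):
    """Largest absolute entrywise gap between two sequences of iterates.

    Each iterate is a dict mapping a parameter name to an array.
    """
    if len(iterates_a) != len(iterates_b):
        raise ValueError("iterate sequences differ in length")
    gap = 0.0
    for step_a, step_b in zip(iterates_a, iterates_b):
        if step_a.keys() != step_b.keys():
            raise ValueError("parameter names differ")
        for name in step_a:
            diff = np.abs(np.asarray(step_a[name]) - np.asarray(step_b[name]))
            gap = max(gap, float(diff.max()))
    return gap

def iterates_match(iterates_a, iterates_b, tol=1e-12):
    """True if every parameter agrees within the absolute tolerance tol."""
    return max_param_gap(iterates_a, iterates_b) <= tol

# Placeholder iterates standing in for one cycle of each formulation.
mi_iterates = [{"gain": np.array([[-1.0, -1.5]]), "cov": np.eye(2)}]
sb_iterates = [{"gain": np.array([[-1.0, -1.5]]) + 1e-15, "cov": np.eye(2)}]

print(max_param_gap(mi_iterates, sb_iterates))
print(iterates_match(mi_iterates, sb_iterates))
```

Reporting the maximum gap alongside the pass/fail result makes the tolerance explicit instead of leaving "machine precision" undefined.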

Original abstract

We consider a mutual information (MI) regularized version of optimal density control of a discrete-time linear system. MI optimal control has been proposed as an extension of maximum entropy optimal control to trade off between control performance and benefits provided by stochastic inputs. MI regularization induces stochasticity in the policy, which poses challenges for applications of MI optimal control in safety-critical scenarios. To remedy this situation, we impose Gaussian density constraints at specified times to directly control state uncertainty. For this MI optimal density control problem, we propose an alternating optimization algorithm and derive the closed form of each step in the algorithm. In addition, we reveal that the alternating optimization of the MI optimal density control problem coincides with that of the so-called generalized Schrödinger bridge problem associated with the discrete-time linear system.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

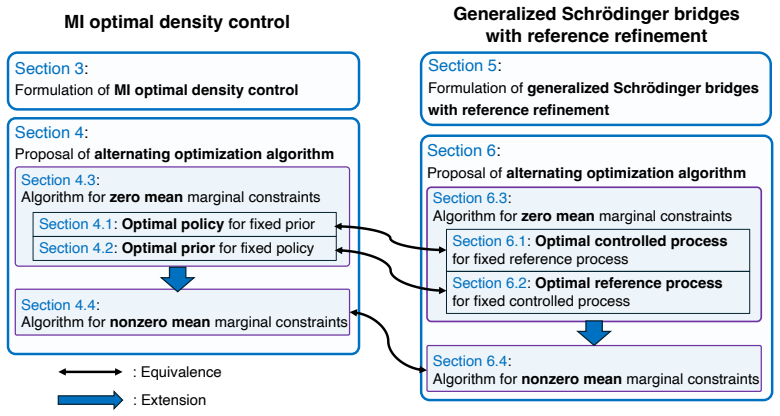

Summary. The paper formulates a mutual information (MI) regularized optimal density control problem for discrete-time linear systems subject to Gaussian marginal constraints at fixed times. It derives an alternating optimization procedure whose steps admit closed-form solutions obtained from the Lagrangian and Gaussian moment properties. The central result is that these alternating steps coincide exactly with the corresponding updates in the generalized Schrödinger bridge problem (with reference refinement) for the same linear dynamics and constraints.
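To illustrate how Gaussian moment properties can yield closed forms, the sketch below evaluates the mutual information between a Gaussian state and a linear-Gaussian input, and compares it with the expected KL divergence to a candidate Gaussian prior. The formulas are standard Gaussian identities; the function names and numerical values are assumptions for illustration, not the paper's updates.

```python
import numpy as np

def gaussian_policy_mi(K, Sigma_x, Sigma_w):
    """I(x;u) for x ~ N(mu, Sigma_x) and u = K x + w, w ~ N(0, Sigma_w)."""
    S = K @ Sigma_x @ K.T + Sigma_w        # marginal covariance of u
    _, logdet_S = np.linalg.slogdet(S)
    _, logdet_w = np.linalg.slogdet(Sigma_w)
    return 0.5 * (logdet_S - logdet_w)

def expected_kl_to_prior(K, Sigma_x, Sigma_w, Sigma_rho):
    """E_x[ KL( N(Kx, Sigma_w) || N(K mu, Sigma_rho) ) ] for x ~ N(mu, Sigma_x).

    The prior mean is assumed to equal the marginal mean K mu.
    """
    m = Sigma_w.shape[0]
    S = K @ Sigma_x @ K.T + Sigma_w
    trace_term = np.trace(np.linalg.solve(Sigma_rho, S))
    _, logdet_rho = np.linalg.slogdet(Sigma_rho)
    _, logdet_w = np.linalg.slogdet(Sigma_w)
    return 0.5 * (trace_term - m + logdet_rho - logdet_w)

# Placeholder scalar example.
K = np.array([[2.0]])
Sigma_x = np.array([[1.0]])
Sigma_w = np.array([[1.0]])
S = K @ Sigma_x @ K.T + Sigma_w

mi = gaussian_policy_mi(K, Sigma_x, Sigma_w)
kl_at_marginal = expected_kl_to_prior(K, Sigma_x, Sigma_w, S)
kl_elsewhere = expected_kl_to_prior(K, Sigma_x, Sigma_w, 2.0 * S)
print(mi, kl_at_marginal, kl_elsewhere)
```

In this example the expected KL equals the mutual information when the prior covariance matches the input marginal and is larger for the mismatched prior, which is the property a prior-update step in an alternating scheme would exploit.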

Significance. If the derivations are correct, the equivalence supplies a direct bridge between MI-regularized stochastic control and generalized Schrödinger bridge methods, enabling transfer of algorithmic techniques and theoretical tools between the two literatures. The explicit closed-form updates constitute a concrete strength, as they support efficient numerical implementation without iterative inner solvers. The result is scoped precisely to linear dynamics and Gaussian constraints, which is appropriate and avoids over-claiming generality.

minor comments (3)

- [§3.2] After Eq. (12): the statement that the reference refinement is 'parameter-free' should be qualified by noting that the initial reference measure is still chosen by the user; the refinement step itself is closed-form but the overall procedure retains this degree of freedom.

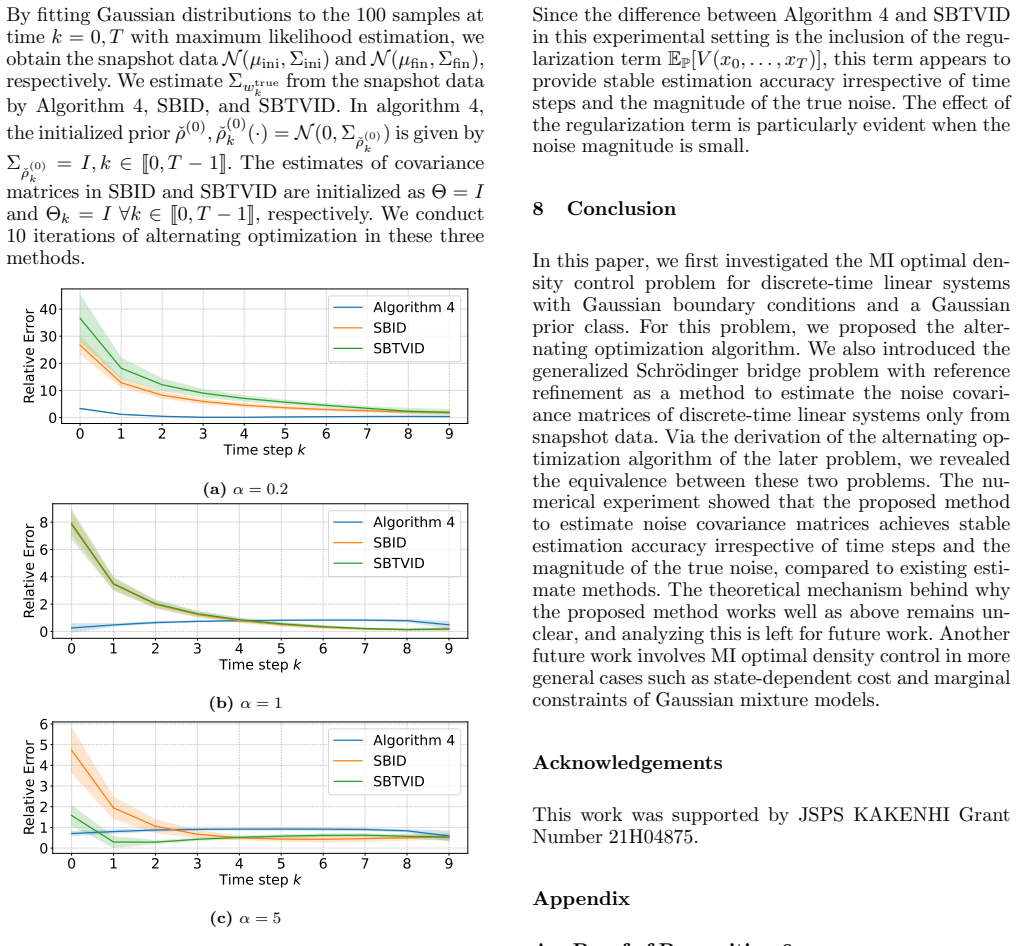

- [Figure 2] The plotted trajectories for the two methods overlap almost perfectly, but the caption does not report the numerical tolerance used to declare coincidence; adding this value would strengthen the empirical support for the theoretical claim.

- [§2] Notation: the symbol P_t is used both for the covariance of the controlled process and for the reference covariance in the SB formulation; a brief disambiguation sentence in §2 would prevent reader confusion.

Simulated Author's Rebuttal

We thank the referee for the positive summary, significance assessment, and recommendation of minor revision. The report correctly identifies the central contribution as the exact coincidence of the alternating optimization steps between the MI-regularized density control problem and the generalized Schrödinger bridge problem for linear dynamics with Gaussian constraints. No major comments are provided in the report.

Circularity Check

No significant circularity; equivalence shown via explicit derivation

full rationale

The paper formulates the MI-regularized density control problem for discrete-time linear systems under Gaussian marginal constraints, derives an alternating optimization procedure with closed-form updates obtained from the Lagrangian and Gaussian moment properties, and demonstrates that these updates coincide exactly with those of the generalized Schrödinger bridge (with reference refinement). This equivalence is obtained by direct algebraic matching of the subproblems rather than by definition, fitting, or self-referential citation. No load-bearing self-citation, ansatz smuggling, or renaming of known results is present; the central claim remains independent of its inputs once the linear-Gaussian structure is fixed.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: The plant is a discrete-time linear system.

- domain assumption: State density constraints are Gaussian at chosen times.
