
Quantitative Local Convergence of Mean-Field Stein Variational Gradient Flow

Pith reviewed 2026-05-12 05:04 UTC · model grok-4.3

The pith

The mean-field Stein variational gradient flow converges locally in L2 norm at explicit polynomial rates for Riesz kernels on the torus.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

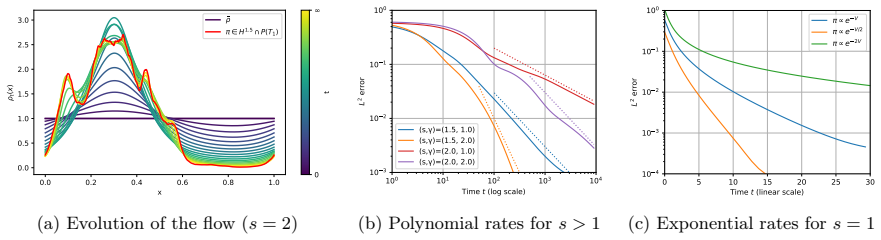

Assuming the initial density and the target are smooth and close in L2 norm, the mean-field SVGD dynamics on the torus with a Riesz kernel converges to the target in L2 norm at an explicit polynomial rate that depends on the dimension and on the regularity parameters of the kernel, the initialization and the target. These rates are sharp in certain regimes. In the edge case of kernels with a Coulomb singularity, the analysis recovers the global exponential convergence established in prior work.

What carries the argument

The argument rests on the mean-field Stein variational gradient flow, the continuous-time, infinite-particle limit of the SVGD particle system. It transports the density along a velocity field obtained by smoothing, with the kernel, the gradient of the log-ratio between the current density and the target.
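For orientation, the standard form of this flow can be written as below. This is the textbook mean-field SVGD equation, not a transcription from the paper: the symbols $\mu_t$ (density at time $t$), $\pi$ (target), $k$ (kernel) and $v_t$ (velocity field) are notation assumed here, and the paper's sign and normalization conventions may differ.

```latex
\begin{align*}
  \partial_t \mu_t + \nabla \cdot (\mu_t\, v_t) &= 0, \\
  v_t(x) &= \int_{\mathbb{T}^d} \bigl[\, k(x,y)\, \nabla \log \pi(y) + \nabla_y k(x,y) \,\bigr]\, \mu_t(y)\, \mathrm{d}y \\
         &= -\int_{\mathbb{T}^d} k(x,y)\, \mu_t(y)\, \nabla \log \frac{\mu_t}{\pi}(y)\, \mathrm{d}y .
\end{align*}
```

The second expression for $v_t$ follows from the first by integrating the $\nabla_y k$ term by parts, which produces no boundary terms on the torus. It shows that $v_t$ vanishes when $\mu_t = \pi$, so the target is a stationary point of the flow.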

Load-bearing premise

The initial density and target must be smooth and close enough in L2 norm, with the kernel of Riesz type on the d-dimensional torus.

What would settle it

A numerical computation of the L2 distance over time, started from a specific smooth initial density close to the target, whose decay deviates from the predicted polynomial rate.
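One way to run such a check, sketched under assumptions: given sampled times and L2 distances from a simulation, estimate the empirical decay exponent by a least-squares fit in log-log coordinates and compare it with the predicted one. The function name `fit_decay_exponent` and the model `dist ≈ C * t**(-gamma)` are illustrative choices, not taken from the paper.

```python
import numpy as np

def fit_decay_exponent(times, dists):
    """Fit dists ~ C * times**(-gamma) by least squares in log-log scale.

    Hypothetical helper: returns (gamma, C). Inputs must be strictly positive.
    """
    t = np.asarray(times, dtype=float)
    d = np.asarray(dists, dtype=float)
    # A power law is a straight line in log-log coordinates:
    # log d = log C - gamma * log t
    slope, intercept = np.polyfit(np.log(t), np.log(d), 1)
    return -slope, np.exp(intercept)

# Synthetic data with a known exponent, only to illustrate the call.
t = np.linspace(1.0, 100.0, 200)
d = 3.0 * t ** (-1.5)
gamma, C = fit_decay_exponent(t, d)
```

In practice the fit should be restricted to late times, since a polynomial rate is an asymptotic statement and early transients can bias the slope.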

Original abstract

Stein Variational Gradient Descent (SVGD) is a deterministic interacting-particle method for sampling from a target probability measure given access to its score function. In the mean-field and continuous-time limit, it is known that the flow converges weakly toward the target, but no quantitative rate is known for the last iterate. In this paper, we establish quantitative local convergence in strong norms for this dynamics, when the interaction kernel is of Riesz type on the $d$-dimensional torus. Specifically, assuming that the initial density and the target are smooth and close in $L^2$-norm, we obtain explicit polynomial convergence rates in $L^2$-norm that depend on the dimension and on the regularity parameters of the kernel, the initialization and the target. We further show that these rates are sharp in certain regimes, and support the theory with numerical experiments. In the edge case of kernels with a Coulomb singularity, we recover the global exponential convergence result established in prior work. Our analysis is inspired by recent results on Wasserstein gradient flows of kernel mean discrepancies.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript establishes quantitative local convergence rates in the L² norm for the mean-field continuous-time limit of Stein Variational Gradient Descent (SVGD) when the interaction kernel is of Riesz type on the d-dimensional torus. Under the assumptions that the initial density and target are smooth and close in L² norm, the authors derive explicit polynomial convergence rates that depend on dimension and the regularity parameters of the kernel, initialization, and target. These rates are shown to be sharp in certain regimes, supported by numerical experiments, and the analysis recovers the known global exponential convergence for the Coulomb singularity case. The approach is inspired by recent results on Wasserstein gradient flows of kernel mean discrepancies.

Significance. If the central derivations hold, this work supplies the first explicit quantitative rates for last-iterate convergence of the mean-field SVGD flow in strong norms under local assumptions. The polynomial rates, their sharpness, and the internal consistency check via recovery of the Coulomb exponential rate constitute a meaningful advance for the theoretical analysis of deterministic particle sampling methods. The local L²-closeness hypothesis is a natural and practically relevant regime.

minor comments (3)

- [§3.2] Statement of the main local convergence theorem: the dependence of the exponent of the polynomial rate on the Sobolev regularity indices of the kernel and target could be made fully explicit in the theorem statement rather than deferred to the proof.

- [Numerical experiments] Figure 2 and the accompanying numerical discussion: the discretization of the continuous-time flow (time-stepping scheme and particle number) is not described in sufficient detail to allow direct reproduction of the observed rates.

- [Notation and preliminaries] The notation for the Riesz kernel singularity parameter α and the torus dimension d is introduced late; an early consolidated table of parameters would improve readability.
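To illustrate the second comment, here is a minimal sketch of the choices a reproducible description would have to fix: the time-stepping rule, the step size, the particle number and the kernel truncation. It is not the authors' scheme. It assumes a one-dimensional torus, an explicit Euler step, and a translation-invariant kernel given by a truncated Fourier series with polynomially decaying coefficients. The names `kernel_and_grad`, `svgd_step`, `decay` and `n_modes` are hypothetical.

```python
import numpy as np

def kernel_and_grad(z, decay=2.0, n_modes=32):
    """Periodic kernel kappa(z) = 1 + 2 * sum_n n**(-decay) * cos(2 pi n z)
    and its derivative kappa'(z), truncated at n_modes (an assumption)."""
    n = np.arange(1, n_modes + 1, dtype=float)
    w = n ** (-decay)
    phase = 2.0 * np.pi * z[..., None] * n
    k = 1.0 + 2.0 * np.sum(w * np.cos(phase), axis=-1)
    dk = -4.0 * np.pi * np.sum(w * n * np.sin(phase), axis=-1)
    return k, dk

def svgd_step(x, score, step, **kernel_args):
    """One explicit Euler step of particle SVGD on the torus [0, 1)."""
    z = x[:, None] - x[None, :]              # z[j, i] = x_j - x_i
    k, dk = kernel_and_grad(z, **kernel_args)
    # drift_i = mean_j [ k(x_j, x_i) * score(x_j) + d/dx_j k(x_j, x_i) ]
    drift = (k * score(x)[:, None] + dk).mean(axis=0)
    return (x + step * drift) % 1.0          # wrap back onto the torus

# Illustrative target pi(x) proportional to exp(0.5 * cos(2 pi x)).
score = lambda x: -np.pi * np.sin(2.0 * np.pi * x)
rng = np.random.default_rng(0)
particles = rng.random(50)
for _ in range(10):
    particles = svgd_step(particles, score, step=1e-3)
```

Reporting the analogues of `step`, the particle count, `decay` and `n_modes`, together with how the L2 distance is estimated from the particles, would be enough for a reader to rerun the experiment.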

Simulated Author's Rebuttal

We thank the referee for the positive assessment of our work, the recognition of its significance, and the recommendation for minor revision. We are pleased that the local L² convergence rates, their sharpness, and the recovery of the Coulomb case are viewed as a meaningful advance.

Circularity Check

No significant circularity in derivation chain


The central result derives explicit polynomial L2 convergence rates from smoothness and L2-closeness assumptions on the initial density and target (with Riesz kernel on the torus) via independent analysis of the mean-field SVGD flow. The abstract notes inspiration from prior Wasserstein gradient flow results and recovers a known global exponential rate in the Coulomb edge case as an internal check, but neither reduces the new local rates to a self-citation chain, fitted parameter, or definitional equivalence. No load-bearing step is shown to collapse by the paper's own equations to its inputs.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: Interaction kernel is of Riesz type on the d-dimensional torus

- domain assumption: Initial density and target are smooth and close in L2-norm
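For readers unfamiliar with the first assumption, a common way to formalize a Riesz-type kernel on the torus is through the decay of its Fourier coefficients. The block below is a sketch in notation assumed here: the singularity exponent $s$ and the profile $\kappa$ are not the paper's symbols, and its parameter $\alpha$ may be defined differently.

```latex
\begin{align*}
  k(x,y) &= \kappa(x-y), \qquad x, y \in \mathbb{T}^d = \mathbb{R}^d / \mathbb{Z}^d, \\
  \hat{\kappa}(n) &\asymp |n|^{-(d-s)} \quad \text{for } n \in \mathbb{Z}^d \setminus \{0\}, \qquad 0 < s < d, \\
  \kappa(x) &\sim |x|^{-s} \quad \text{as } x \to 0 .
\end{align*}
```

In this convention the Coulomb case corresponds to $\hat{\kappa}(n) \propto |n|^{-2}$, that is, $\kappa$ is the Green's function of $-\Delta$ on the torus (which gives $s = d-2$ when $d \ge 3$).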

