
A Real-Calibrated Synthetic-First Data Engine

Pith reviewed 2026-05-12 04:10 UTC · model grok-4.3

The pith

A modular data engine curates diffusion-generated images to augment real datasets for human pose estimation at near-zero added labeling cost.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The Real-Calibrated Synthetic-First Data Engine combines controllable diffusion generation with multi-stage curation and filtering inside a configurable CLI pipeline. Synthetic images that pass this pipeline can be added to real anchor images as augmentation at near-zero human annotation cost, yielding higher human pose estimation performance than real data alone, while synthetic-only training remains substantially below real-only performance.

What carries the argument

The modular CLI pipeline that chains controllable diffusion generation, multi-stage curation and filtering, optional uncertainty-driven selection, and human verification.
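A minimal sketch of the stage-chaining idea, assuming each stage is a function from a list of samples to a list of samples and that the pipeline is driven by an ordered config. The registry, stage names, and scores are hypothetical stand-ins rather than the paper's CLI. Diffusion generation is replaced by a stub, top-k selection stands in for uncertainty-driven selection, and human verification is omitted.

```python
from typing import Any, Callable, Dict, List, Tuple

Sample = Dict[str, Any]
Stage = Callable[..., List[Sample]]

# Hypothetical registry: stage name (as it might appear in a config) -> callable.
STAGES: Dict[str, Stage] = {}


def register(name: str) -> Callable[[Stage], Stage]:
    """Decorator that adds a stage function to the registry under `name`."""
    def wrap(fn: Stage) -> Stage:
        STAGES[name] = fn
        return fn
    return wrap


@register("generate_stub")
def generate_stub(samples: List[Sample], n: int = 10) -> List[Sample]:
    # Stub for controllable diffusion generation: emits IDs with a fake score.
    return samples + [{"id": f"syn-{i}", "score": i / n} for i in range(n)]


@register("quality_filter")
def quality_filter(samples: List[Sample], threshold: float = 0.5) -> List[Sample]:
    # Drop samples whose (hypothetical) quality score falls below the threshold.
    return [s for s in samples if s["score"] >= threshold]


@register("top_k_select")
def top_k_select(samples: List[Sample], k: int = 3) -> List[Sample]:
    # Stand-in for a selection stage: keep the k highest-scoring samples.
    return sorted(samples, key=lambda s: s["score"], reverse=True)[:k]


def run_pipeline(config: List[Tuple[str, Dict[str, Any]]],
                 samples: List[Sample]) -> List[Sample]:
    """Apply each configured stage in order; swapping a stage only edits `config`."""
    for name, params in config:
        samples = STAGES[name](samples, **params)
    return samples


config = [
    ("generate_stub", {"n": 10}),
    ("quality_filter", {"threshold": 0.5}),
    ("top_k_select", {"k": 3}),
]
print([s["id"] for s in run_pipeline(config, [])])  # ['syn-9', 'syn-8', 'syn-7']
```

Keeping every stage behind the same list-in, list-out signature is what lets a generator or filter be replaced by registering a new function and changing one config entry, without touching the orchestration loop.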

If this is right

- Synthetic images become usable as low-cost supplements once filtered and mixed with real anchors.

- Synthetic-only training cannot yet substitute for real data in pose estimation.

- The same domain-gap pattern (synthetic data helps as augmentation but falls short on its own) appears in the supplementary segmentation diagnostics.

- The pipeline design allows swapping of generation or filtering modules without rewriting the workflow.

Where Pith is reading between the lines

- The curation approach could be tested on other vision tasks that suffer from data scarcity.

- Reducing remaining human verification steps would increase the automation benefit.

- Scaling the real anchor set size might change the magnitude of the observed augmentation gains.

- Connecting the engine to newer generative models could further narrow the residual domain gap.

Load-bearing premise

The multi-stage curation and filtering steps are assumed to close the domain gap between synthetic and real images without introducing selection biases or requiring substantial hidden human effort.

What would settle it

An experiment in which adding the curated synthetic images to the real training set produces no accuracy gain or a drop in pose estimation performance on a held-out real test set.
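One way to make this falsification test concrete is a paired comparison across training seeds. A minimal sketch, assuming both regimes (real-only and real plus curated synthetic) are trained with the same seeds and scored on the same held-out real test set with a pose metric such as PCK or mAP. The function name is hypothetical, and the scores in the usage lines are illustrative inputs, not results from the paper. The test shown is a two-sided sign-flip permutation test on the per-seed differences.

```python
import itertools
import random
from statistics import mean
from typing import Iterable, Sequence


def paired_permutation_pvalue(augmented: Sequence[float], real_only: Sequence[float],
                              n_resamples: int = 10_000, seed: int = 0) -> float:
    """Two-sided sign-flip permutation test on per-seed differences.

    Under the null hypothesis (adding synthetic data has no systematic effect),
    each paired difference is equally likely to be positive or negative.
    Small samples are enumerated exactly; larger ones use Monte Carlo.
    """
    if len(augmented) != len(real_only) or not augmented:
        raise ValueError("need equal-length, non-empty paired scores")
    diffs = [a - r for a, r in zip(augmented, real_only)]
    observed = abs(mean(diffs))
    n = len(diffs)

    def is_extreme(signs: Iterable[int]) -> bool:
        # Small tolerance so float noise cannot exclude the observed labelling.
        return abs(mean(s * d for s, d in zip(signs, diffs))) >= observed - 1e-12

    if n <= 16:
        patterns = itertools.product((1, -1), repeat=n)
        return sum(is_extreme(p) for p in patterns) / 2 ** n
    rng = random.Random(seed)
    hits = sum(is_extreme(rng.choices((1, -1), k=n)) for _ in range(n_resamples))
    # +1 correction keeps the Monte Carlo estimate strictly positive.
    return (hits + 1) / (n_resamples + 1)


# Illustrative per-seed scores only (not results from the paper).
real_only = [0.65, 0.66, 0.67, 0.66, 0.68]
augmented = [0.70, 0.72, 0.71, 0.73, 0.74]
print(paired_permutation_pvalue(augmented, real_only))  # 0.0625
```

With five paired seeds the smallest attainable two-sided p-value is 2/32 = 0.0625, so even a gain on every seed cannot reach p < 0.05 with fewer than six seeds under this test. That is one reason the number of training seeds matters as much as the size of the gain.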

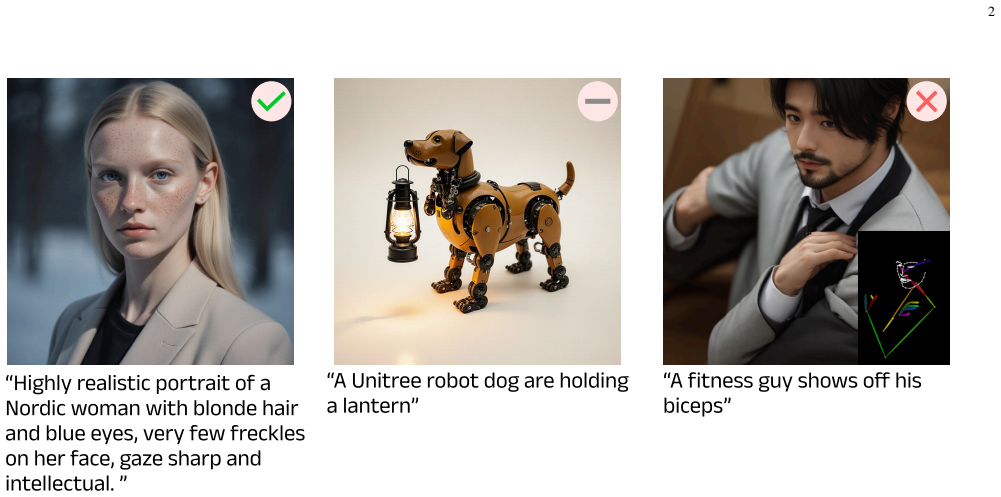

Figures

Original abstract

Modern computer vision systems increasingly encounter performance limitations in data-scarce domains, where collecting large-scale, high-quality labeled data is costly or impractical. While controllable diffusion models enable scalable synthetic image generation, directly applying synthetic augmentation often leads to unstable performance gains due to dataset-level quality issues and insufficient feedback mechanisms. In this work, we present a Real-Calibrated Synthetic-First Data Engine, a modular data engineering framework that combines controllable diffusion generation and multi-stage curation/filtering within a unified pipeline, with optional support for uncertainty-driven selection and human verification. Instead of introducing new generative algorithms, our approach focuses on systematic dataset construction for improving the practical reliability of synthetic augmentation in low-data regimes. The framework is implemented as a modular CLI-based pipeline, where generation, filtering, selection, and validation components can be independently configured and replaced. This design emphasizes reproducibility, flexibility, and practical deployment in real-world data workflows. Through empirical evaluation centered on human pose estimation, we show that synthetic data improves a real-data baseline when used as near-zero-human-annotation-cost augmentation alongside real anchors, while synthetic-only training remains substantially below real-only performance. Supplementary segmentation diagnostics show the same domain-gap pattern. These results highlight the practical value of data-centric orchestration for low-data augmentation.
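The abstract does not say how uncertainty-driven selection scores candidates. One common choice for pose estimation, shown here purely as an assumption, is ensemble disagreement (query by committee): run K pose models, or K test-time augmentations, on each candidate image and rank images by the variance of their predicted keypoint positions. The function names and toy predictions are hypothetical.

```python
from statistics import pvariance
from typing import Dict, List, Tuple

Keypoints = List[Tuple[float, float]]  # one prediction: J (x, y) keypoints


def keypoint_disagreement(preds: List[Keypoints]) -> float:
    """Mean over keypoints of the x- plus y-variance across K predictions."""
    n_kpts = len(preds[0])
    total = 0.0
    for j in range(n_kpts):
        total += pvariance([p[j][0] for p in preds])
        total += pvariance([p[j][1] for p in preds])
    return total / n_kpts


def select_most_uncertain(pool: Dict[str, List[Keypoints]], budget: int) -> List[str]:
    """IDs of the `budget` candidates on which the K predictors disagree most."""
    ranked = sorted(pool, key=lambda i: keypoint_disagreement(pool[i]), reverse=True)
    return ranked[:budget]


# Two predictions per image, two keypoints each (toy values).
pool = {
    "syn-a": [[(0, 0), (1, 1)], [(0, 0), (1, 1)]],  # full agreement
    "syn-b": [[(0, 0), (1, 1)], [(2, 2), (1, 1)]],  # mild disagreement
    "syn-c": [[(0, 0), (0, 0)], [(4, 4), (0, 0)]],  # strong disagreement
}
print(select_most_uncertain(pool, budget=2))  # ['syn-c', 'syn-b']
```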

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents a modular 'Real-Calibrated Synthetic-First Data Engine' as a CLI-based pipeline that combines controllable diffusion-based synthetic image generation with multi-stage curation, filtering, optional uncertainty-driven selection, and human verification. It claims that augmenting real-data anchors with these curated synthetic samples yields performance gains on human pose estimation (and similar patterns for segmentation) relative to real-only baselines, while synthetic-only training remains substantially weaker; the emphasis is on practical, reproducible data engineering rather than new generative algorithms.

Significance. If the reported gains prove robust and the curation pipeline is shown to operate with truly low hidden cost and without unmeasured selection bias, the work would offer a useful, deployable framework for reliable synthetic augmentation in data-scarce computer-vision settings, underscoring the value of systematic dataset orchestration over isolated generative advances.

major comments (2)

- [Empirical evaluation (centered on pose estimation)] The central empirical claim (synthetic augmentation improves real baselines while synthetic-only lags) is presented without reported metrics, statistical tests, baseline details, or ablation studies that isolate the multi-stage curation/filtering from simple volume increases or random sampling; this is load-bearing for the 'real-calibrated' and 'near-zero annotation cost' assertions.

- [Framework description and modular pipeline] The framework description states that curation/filtering closes the domain gap, yet the pipeline includes optional human verification and no quantification of annotation hours, bias analysis, or comparison against unfiltered synthetic samples; without these, the claim that gains arise automatically from the engine rather than hidden effort or easy-sample selection cannot be evaluated.
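The ablation requested in both comments holds the number of added synthetic images fixed, so that the random and curated arms differ only in which images are chosen. A minimal sketch of building those arms, assuming images are referenced by ID and that `curated` is the subset of the synthetic pool the pipeline keeps. The function and arm names are hypothetical.

```python
import random
from typing import Dict, List, Sequence


def build_ablation_arms(real: Sequence[str], synthetic_pool: Sequence[str],
                        curated: Sequence[str], seed: int = 0) -> Dict[str, List[str]]:
    """Build training-set arms for a matched-volume curation ablation.

    The random and curated arms add exactly len(curated) synthetic images to
    the same real anchors, so a gap between them cannot come from volume alone.
    """
    if not set(curated) <= set(synthetic_pool):
        raise ValueError("curated IDs must come from the synthetic pool")
    rng = random.Random(seed)
    n_syn = len(curated)
    return {
        "real_only": list(real),
        "real+random_synthetic": list(real) + rng.sample(list(synthetic_pool), n_syn),
        "real+curated_synthetic": list(real) + list(curated),
        "synthetic_only_curated": list(curated),
    }


real = [f"real-{i}" for i in range(100)]
pool = [f"syn-{i}" for i in range(1000)]
curated = pool[::10]  # stand-in for pipeline output: 100 IDs
arms = build_ablation_arms(real, pool, curated)
print({name: len(ids) for name, ids in arms.items()})
```

Repeating the construction with the outputs of truncated pipelines (generation only, generation plus the first filter, and so on) would attribute any gain to individual curation stages, and a variant with the human-verification step disabled addresses the hidden-effort concern.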

minor comments (2)

- [Abstract] The abstract references 'supplementary segmentation diagnostics' but supplies no figure, table, or metric details to support the stated domain-gap pattern.

- [Implementation and pipeline] Reproducibility would benefit from explicit listing of configuration parameters, random seeds, and exact filtering thresholds used in the reported experiments.
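A minimal sketch of the kind of per-run manifest this comment asks for, assuming it is written next to each experiment's outputs. The class, field names, and values are hypothetical placeholders, not the paper's settings.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict


@dataclass
class RunManifest:
    """Hypothetical record of everything needed to rerun one experiment."""
    generator: str                        # generation backend identifier
    num_generated: int                    # size of the raw synthetic pool
    filter_thresholds: Dict[str, float]   # one entry per filtering stage
    selection: str                        # e.g. "none", "random", "uncertainty"
    human_verification: bool              # whether the optional manual pass ran
    seeds: Dict[str, int] = field(default_factory=dict)

    def to_json(self) -> str:
        # sort_keys gives a stable byte layout, so manifests diff cleanly.
        return json.dumps(asdict(self), sort_keys=True, indent=2)

    @classmethod
    def from_json(cls, text: str) -> "RunManifest":
        return cls(**json.loads(text))


manifest = RunManifest(
    generator="pose-conditioned-diffusion",  # placeholder name
    num_generated=5000,
    filter_thresholds={"image_text_similarity": 0.25, "keypoint_agreement": 0.5},
    selection="uncertainty",
    human_verification=False,
    seeds={"generation": 0, "selection": 1, "training": 2},
)
assert RunManifest.from_json(manifest.to_json()) == manifest
```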

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment below, clarifying our position and outlining revisions where appropriate to improve the manuscript's transparency and rigor.

Point-by-point responses

- Referee: [Empirical evaluation (centered on pose estimation)] The central empirical claim (synthetic augmentation improves real baselines while synthetic-only lags) is presented without reported metrics, statistical tests, baseline details, or ablation studies that isolate the multi-stage curation/filtering from simple volume increases or random sampling; this is load-bearing for the 'real-calibrated' and 'near-zero annotation cost' assertions.

Authors: We agree that additional quantitative detail would strengthen the presentation. The manuscript reports comparative performance trends for pose estimation (and segmentation) under real-only, synthetic-only, and augmented regimes, but we will revise to include explicit numerical results (e.g., PCK or mAP values), statistical significance tests with p-values, fuller baseline specifications, and targeted ablations that hold data volume constant while varying curation stages versus random sampling. These additions will better isolate the contribution of the multi-stage pipeline. (Revision: yes.)

- Referee: [Framework description and modular pipeline] The framework description states that curation/filtering closes the domain gap, yet the pipeline includes optional human verification and no quantification of annotation hours, bias analysis, or comparison against unfiltered synthetic samples; without these, the claim that gains arise automatically from the engine rather than hidden effort or easy-sample selection cannot be evaluated.

Authors: We acknowledge the need for greater transparency on the optional human verification component. In revision we will add direct performance comparisons between the full pipeline and versions without human verification, as well as against unfiltered synthetic data at matched scale. We will also report diversity and bias-related metrics on the selected samples. However, exact annotation hours were not systematically logged during the original experiments, limiting our ability to provide precise quantification; we will instead emphasize the design goal of minimizing human effort and note this as a limitation. (Revision: partial.)
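One simple form the promised bias analysis could take is to compare a per-sample difficulty proxy between the full synthetic pool and the subset the curation stages keep, which speaks directly to the easy-sample-selection concern. A minimal sketch; the difficulty proxy (for instance, the fraction of occluded keypoints per image), the threshold, and the function name are assumptions, not quantities the paper defines.

```python
from statistics import mean
from typing import Dict, Sequence


def easy_sample_bias(pool_difficulty: Dict[str, float], kept_ids: Sequence[str],
                     hard_threshold: float) -> Dict[str, float]:
    """Compare a difficulty proxy between the synthetic pool and the kept subset.

    A kept hard-sample share far below the pool's suggests the filters
    preferentially keep easy images.
    """
    def hard_share(values: Sequence[float]) -> float:
        return sum(v >= hard_threshold for v in values) / len(values)

    pool = list(pool_difficulty.values())
    kept = [pool_difficulty[i] for i in kept_ids]
    return {
        "pool_mean": mean(pool),
        "kept_mean": mean(kept),
        "pool_hard_share": hard_share(pool),
        "kept_hard_share": hard_share(kept),
    }


# Hypothetical difficulty scores, e.g. fraction of occluded keypoints per image.
pool_difficulty = {"syn-0": 0.1, "syn-1": 0.2, "syn-2": 0.8, "syn-3": 0.9}
report = easy_sample_bias(pool_difficulty, kept_ids=["syn-0", "syn-1"], hard_threshold=0.5)
print(report["pool_hard_share"], report["kept_hard_share"])  # 0.5 0.0
```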

- Not resolved in revision: precise quantification of human annotation hours for the optional verification step, because these hours were not recorded in the original experimental logs.

Circularity Check

No circularity in empirical framework


The paper presents a modular CLI-based data engineering pipeline for synthetic image generation, curation, and augmentation, evaluated via direct experimental comparisons on human pose estimation (and segmentation diagnostics). No equations, fitted parameters, predictions, or derivation chains appear in the abstract or described structure. Central claims rest on reported performance deltas between real-only, synthetic-only, and mixed regimes rather than any self-definitional, fitted-input, or self-citation load-bearing reductions. The work is self-contained as an engineering and empirical contribution with no mathematical ansatz or uniqueness theorem invoked.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: controllable diffusion models plus multi-stage filtering can produce synthetic images that usefully augment real data in low-data regimes.

invented entities (1)

- Real-Calibrated Synthetic-First Data Engine (no independent evidence)

