Insight: Enhancing Mobile Accessibility for Blind and Visually Impaired Users with LLMs

Pith reviewed 2026-05-12 02:06 UTC · model grok-4.3

The pith

Insight uses large language models to let blind users interact with phones through natural dialogue instead of sequential gestures.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Insight is an Android accessibility service that provides natural language interaction and real-time screen summarization using large language models. In a within-subject study, it reduced mental effort and task completion time compared to TalkBack and was preferred for its dialogue interface, though users wanted better interruption management. The results indicate that LLM-based interfaces can significantly improve mobile accessibility for blind and visually impaired (BVI) users and point to hybrid gesture-dialogue solutions for more inclusive design.

What carries the argument

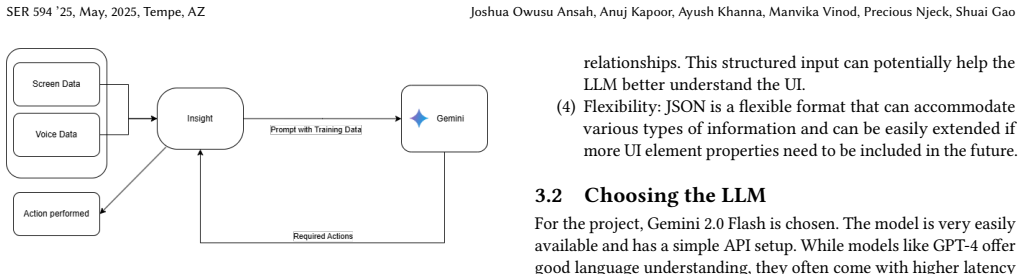

Insight, an LLM-powered Android accessibility service that enables natural language queries and screen summarization in place of sequential gesture feedback.

If this is right

- Users complete mobile tasks faster with less mental effort when using dialogue-based access.

- Natural language interfaces are preferred over traditional gesture systems by BVI users.

- Hybrid designs combining gestures and dialogue could address current limitations like interruption handling.

- LLM technology opens paths to more inclusive mobile design for visually impaired users.

Where Pith is reading between the lines

- Future work could test Insight in real-world daily use scenarios beyond controlled tasks.

- Combining this with voice assistants might further reduce cognitive load for users.

- Developers of accessibility tools should consider integrating LLM summarization to complement existing screen readers.

Load-bearing premise

The user study reliably demonstrates better performance with Insight and is not distorted by a small sample size, learning effects from repeated use, or inaccuracies in the LLM summaries.

What would settle it

A follow-up study with more participants, full statistics on task times and errors, and controls for practice effects that finds no improvement or higher error rates with Insight would disprove the main result.
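The paired comparison such a follow-up would report can be sketched as below. This is a minimal illustration rather than the paper's analysis: the function name `paired_summary` and the timing values are hypothetical placeholders, not data from the study, and a real analysis would also report a p-value from the t distribution with the stated degrees of freedom.

```python
import math
import statistics


def paired_summary(baseline, treatment):
    """Summarize a within-subject comparison of two conditions.

    Both lists hold one measurement per participant, in the same
    participant order. Negative differences mean the treatment was lower.
    """
    if len(baseline) != len(treatment) or len(baseline) < 2:
        raise ValueError("need two equal-length samples with at least 2 participants")
    diffs = [t - b for b, t in zip(baseline, treatment)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation of the differences
    if sd_diff == 0:
        raise ValueError("all differences are identical; effect size is undefined")
    d_z = mean_diff / sd_diff              # Cohen's d for paired samples
    t_stat = d_z * math.sqrt(len(diffs))   # paired t statistic
    return {
        "n": len(diffs),
        "mean_diff": mean_diff,
        "d_z": d_z,
        "t": t_stat,
        "df": len(diffs) - 1,
    }


# Hypothetical placeholder task times in seconds (NOT results from the paper).
talkback_secs = [60.0, 75.0, 58.0, 90.0]
insight_secs = [50.0, 70.0, 60.0, 70.0]

result = paired_summary(talkback_secs, insight_secs)
print(result)
```

Reporting the effect size alongside the test statistic matters here because a small sample can produce a large mean difference that is still statistically fragile.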

Original abstract

This research paper addresses the limitations of current mobile accessibility services like TalkBack, which provide manual gesture-based sequential feedback to BVI users. Motivated by the promise of large language models (LLMs), this paper introduces Insight, an Android accessibility service that provides natural language interaction and real-time summarization of the screen. The paper performs a within-subject experimental study with users to compare Insight and TalkBack on usability factors. Results show Insight reduced mental effort and task time, and was preferred because of its dialogue interface, but users felt the need for interruption management. Results show LLM-based interfaces can significantly improve mobile accessibility, and describe the potential of hybrid solutions combining gesture and dialogue modalities towards more inclusive design.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces Insight, an Android accessibility service that integrates LLMs to enable natural language dialogue and real-time screen summarization for blind and visually impaired (BVI) users, addressing limitations of gesture-based tools like TalkBack. It reports results from a within-subject user study comparing Insight to TalkBack, claiming reductions in mental effort and task time, higher user preference for the dialogue interface, and a need for improved interruption management. The authors conclude that LLM-based interfaces can significantly enhance mobile accessibility and propose hybrid gesture-dialogue designs for more inclusive interfaces.

Significance. If the within-subject study results hold with adequate controls and reporting, this work could meaningfully advance HCI and accessibility research by providing empirical evidence for LLM integration in mobile tools, potentially reducing cognitive load for BVI users and inspiring multimodal designs. The hybrid modality suggestion identifies a practical direction for future systems, though its value depends on the robustness of the presented evidence.

major comments (2)

- The description of the within-subject experimental study provides no participant count, task details, counterbalancing procedure, statistical tests, effect sizes, or LLM summarization accuracy/failure rates. These omissions are load-bearing for the central claim that Insight 'significantly' reduces mental effort and task time versus TalkBack, as order effects, learning, or interface novelty cannot be ruled out without them.

- No quantitative results (e.g., mean task times, mental effort scores, preference percentages, or p-values) appear in the study summary or abstract. This prevents evaluation of the practical magnitude of the reported improvements and undermines the assertion of significant benefits.
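The counterbalancing procedure the first major comment asks about could look like the following sketch. It assumes a simple two-condition alternation; the helper names `assign_orders` and `order_counts` and the participant IDs are hypothetical, and the paper does not state which scheme, if any, was used. In practice the participant list would typically be shuffled before assignment.

```python
from collections import Counter
from itertools import cycle


def assign_orders(participant_ids, conditions=("TalkBack", "Insight")):
    """Alternate the two possible condition orders across participants."""
    orders = [tuple(conditions), tuple(reversed(conditions))]
    return {pid: order for pid, order in zip(participant_ids, cycle(orders))}


def order_counts(assignment):
    """Count how many participants received each condition order."""
    return dict(Counter(assignment.values()))


# Hypothetical participant IDs for illustration only.
participants = ["P1", "P2", "P3", "P4", "P5", "P6"]
assignment = assign_orders(participants)

for pid, order in assignment.items():
    print(pid, "->", " then ".join(order))
print(order_counts(assignment))
```

With an even number of participants, each order is used equally often, so practice effects are spread across both conditions instead of favoring whichever interface comes second.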

minor comments (2)

- The abstract states results without including any supporting metrics or error analysis; adding a sentence with key quantitative outcomes would improve clarity and allow readers to assess claims immediately.

- The hybrid gesture-dialogue suggestion is presented as a forward-looking idea but lacks any supporting observations or data from the user study; consider moving it to a dedicated future-work subsection with explicit caveats.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed review of our manuscript. The comments highlight important gaps in the reporting of our user study, and we have revised the paper to address them directly. Below we respond point by point to the major comments.

- Referee: The description of the within-subject experimental study provides no participant count, task details, counterbalancing procedure, statistical tests, effect sizes, or LLM summarization accuracy/failure rates. These omissions are load-bearing for the central claim that Insight 'significantly' reduces mental effort and task time versus TalkBack, as order effects, learning, or interface novelty cannot be ruled out without them.

  Authors: We agree that the original manuscript omitted critical methodological details required to evaluate the study’s internal validity. In the revised version we have substantially expanded the User Study section to report the participant count, the specific tasks performed by users, the counterbalancing procedure employed, the statistical tests conducted (including p-values and effect sizes), and quantitative measures of LLM summarization accuracy together with documented failure cases. These additions directly address concerns about order effects, learning, and novelty and allow readers to assess the robustness of the reported reductions in mental effort and task time.

  Revision: yes

- Referee: No quantitative results (e.g., mean task times, mental effort scores, preference percentages, or p-values) appear in the study summary or abstract. This prevents evaluation of the practical magnitude of the reported improvements and undermines the assertion of significant benefits.

  Authors: We accept that the absence of numerical results in the abstract and study summary limits assessment of effect magnitude. We have updated both the abstract and the study summary in the revised manuscript to include the key quantitative outcomes: mean task completion times, NASA-TLX mental effort scores, user preference percentages, and associated p-values. These changes provide a clearer indication of the practical benefits observed while preserving the original qualitative conclusions.

  Revision: yes

Circularity Check

No circularity: purely empirical user-study paper with no derivations or self-referential chains

The paper introduces an Android accessibility service (Insight) and reports outcomes from a within-subject user study comparing it to TalkBack on usability metrics. No equations, parameters, predictions, ansatzes, or uniqueness theorems appear in the provided text. The central claims rest on observed study results (reduced mental effort, task time, user preference) rather than any derivation that could reduce to its own inputs by construction. Self-citations are absent from the load-bearing sections. This is the expected non-finding for an empirical HCI paper whose evidence is external to any internal loop.

Reference graph

Works this paper leans on

- [1] World Health Organization. (2023, August 10). Vision impairment and blindness. https://www.who.int/news-room/fact-sheets/detail/blindness-and-visual-impairment

- [2] Kuber, R., Hastings, A., Tretter, M. (2012). Determining the accessibility of mobile screen readers for blind users. UMBC Faculty Collection. https://www.researchgate.net/profile/Ravi-Kuber/publication/266630063_Determining_the_Accessibility_of_Mobile_Screen_Readers_for_Blind_Users/links/55428f810cf23ff71683604b/Determining-the-Accessibility-of-Mobile-...

- [3] Wall, S. A., Brewster, S. A. (2006). Tac-tiles: Multimodal pie charts for visually impaired users. In Proceedings of the 4th Nordic Conference on Human-Computer Interaction: Changing Roles, 9–18. https://doi.org/10.1145/1182475.1182477

- [4] Khan, A., Khusro, S. (2019). Blind-friendly user interfaces – a pilot study on improving the accessibility of touchscreen interfaces. Multimedia Tools and Applications, 78(13), 17495–17519. https://doi.org/10.1007/s11042-018-7094-y

- [5] Lister, K., Coughlan, T., Iniesto, F., Freear, N., Devine, P. (2020). Accessible conversational user interfaces: Considerations for design. In Proceedings of Web4All

- [6] https://doi.org/10.1145/3371300.3383343

- [7]

- [8] Wang, B., Li, G., Zhou, X., Chen, Z., Grossman, T., Li, Y. (2021). Screen2Words: Automatic mobile UI summarization with multimodal learning. UIST’21. https://doi.org/10.1145/3472749.3474765

- [9] Zhe Liu, Chunyang Chen, Junjie Wang, Mengzhuo Chen, Boyu Wu, Yuekai Huang, Jun Hu, and Qing Wang. 2024. Unblind Text Inputs: Predicting Hint-text of Text Input in Mobile Apps via LLM. In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems (CHI ’24). Association for Computing Machinery, New York, NY, USA, Article 51, 1–20. https://d...

- [10] Hakobyan, L., Lumsden, J., O’Sullivan, D., Bartlett, H. (2013). Mobile assistive technologies for the visually impaired. Survey of Ophthalmology, 58(6), 513–528. https://www.sciencedirect.com/science/article/pii/S0039625712002512

- [11] Ghosh, A., Uckun, U., Reddy, M. P., Ashok, V., Ramakrishnan, I. V., Kodandaram, S. R., Bi, X. (2024). Screen Reading Enabled by Large Language Models. In Proceedings of the 26th International ACM SIGACCESS Conference on Computers and Accessibility. https://dl.acm.org/doi/10.1145/3663548.3688491

- [12] Kodandaram, S. R., Uckun, U., Bi, X., Ramakrishnan, I. V., Ashok, V. (2024). Enabling Uniform Computer Interaction Experience for Blind Users through Large Language Models. In Proceedings of the 26th International ACM SIGACCESS Conference on Computers and Accessibility. https://dl.acm.org/doi/10.1145/3663548.3675605

- [14] Costabile, M. F., Lanzilotti, R., Matera, M., Piccinno, A., Pinto, N., Piro, L., Pucci, E., Ragone, G. (2024). Participatory Design for Creating Conversational Agents to Improve Web Accessibility. https://ceur-ws.org/Vol-3778/short7.pdf

- [15] Zaina, L. A. M., Fortes, R. P. M., Casadei, V., Nozaki, L. S., Paiva, D. M. B. (2022). Preventing accessibility barriers: Guidelines for using user interface design patterns in mobile applications. Journal of Systems and Software, 186, 111213. https://doi.org/10.1016/j.jss.2021.111213

- [16] Chang, Y., Wang, X., Wang, J., Wu, Y., Yang, L., Zhu, K., Chen, H., Yi, X., Wang, C., Wang, Y., Ye, W., Zhang, Y., Chang, Y., Yu, P. S., Yang, Q., Xie, X. (2024). A Survey on Evaluation of Large Language Models. ACM Trans. Intell. Syst. Technol., 15(3), 39:1–39:45. https://doi.org/10.1145/3641289

- [17] IBM. (2023, November 2). What Are Large Language Models (LLMs)? | IBM. https://www.ibm.com/think/topics/large-language-models

- [18] Li, Y., He, J., Zhou, X., Zhang, Y., Baldridge, J. (2020). Mapping natural language instructions to mobile UI action sequences. In Proceedings of ACL 2020, 8198–8210. https://doi.org/10.18653/v1/2020.acl-main.729

SER 594 ’25, May, 2025, Tempe, AZ. Joshua Owusu Ansah, Anuj Kapoor, Ayush Khanna, Manvika Vinod, Precious Njeck, Shuai Gao

- [19] Oelen, A., and Auer, S. (2024). Leveraging Large Language Models for Realizing Truly Intelligent User Interfaces. In Extended Abstracts of the CHI Conference on Human Factors in Computing Systems, 1–8. https://doi.org/10.1145/3613905.3650949

- [20] Planas, E., Daniel, G., Brambilla, M., Cabot, J. (2021). Towards a model-driven approach for multiexperience AI-based user interfaces. Software and Systems Modeling. https://doi.org/10.1007/s10270-021-00904-y

- [21] You, K., Zhang, H., Schoop, E., Weers, F., Swearngin, A., Nichols, J., Yang, Y., and Gan, Z. (2024). Ferret-UI: Grounded Mobile UI Understanding with Multimodal LLMs. In Computer Vision – ECCV 2024, 240–55. https://doi.org/10.1007/978-3-031-73039-9_14

- [22] Hao Wen, Yuanchun Li, Guohong Liu, Shanhui Zhao, Tao Yu, Toby Jia-Jun Li, Shiqi Jiang, Yunhao Liu, Yaqin Zhang, Yunxin Liu. 2024. AutoDroid: LLM-powered Task Automation in Android. In International Conference On Mobile Computing And Networking (ACM MobiCom ’24), September 30–October 4, 2024, Washington D.C., DC, USA. ACM, New York, NY, USA, 15 pages. htt...

- [23]

- [24] Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77–101. https://doi.org/10.1191/1478088706qp063oa

- [25] Greenwald, A. G. (1976). Within-subjects designs: To use or not to use? Psychological Bulletin, 83(2), 314

- [26] Laubheimer, P. (2025, March 3). Beyond the NPS: Measuring Perceived Usability with the SUS, NASA-TLX, and the Single Ease Question After Tasks and Usability Tests. Nielsen Norman Group. https://www.nngroup.com/articles/measuring-perceived-usability/

- [27] Yunpeng Song, Yiheng Bian, Yongtao Tang, Guiyu Ma, and Zhongmin Cai. 2024. VisionTasker: Mobile Task Automation Using Vision Based UI Understanding and LLM Task Planning. In Proceedings of the 37th Annual ACM Symposium on User Interface Software and Technology (UIST ’24). Association for Computing Machinery, New York, NY, USA, Article 49, 1–17. https://doi...

[28]

An Android service to improve accessibility for BVI users

Anuj K, Ayush K. An Android service to improve accessibility for BVI users. https://github.com/anujkap/AccessibilityService/tree/dev Received 5 May 2025; revised 5 May 2025

work page 2025
