
A Statistical Framework for Learning Preferences from the Past

Pith reviewed 2026-05-12 02:10 UTC · model grok-4.3

The pith

A new statistical method estimates future user preferences from past choices by assuming more frequent selections indicate stronger preferences.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We introduce a novel statistical framework for predicting user preferences based on their past choices, under a natural monotonicity assumption: options that were chosen more frequently or more intensely in the past are more likely to be chosen again in the future. Our approach builds on a parametric model and proposes a non-parametric generalization. We develop a method of maximum likelihood estimation of the user preference probabilities under the above-mentioned monotonicity constraint and derive theoretical guarantees for our estimator.

What carries the argument

A non-parametric generalization of the Le Goff and Soulier (2017) choice model, with the user preference probabilities estimated by maximum likelihood under the monotonicity constraint.
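The reference list points to isotonic regression (Ayer et al. 1955; Brunk 1970; Robertson, Wright and Dykstra 1988) as the standard tool for maximum likelihood under a monotonicity constraint. Here is a minimal sketch for the simplest binary setting, which is an assumption and not taken from the paper. Observations are grouped into cells sorted by a covariate such as the past choice proportion. Each cell holds Bernoulli trials (the option was chosen or not), and the success probability must be nondecreasing across cells. Under these assumptions the order-restricted MLE is the weighted isotonic regression of the cell proportions, which the pool-adjacent-violators algorithm (PAVA) computes. This is the textbook binomial isotonic MLE, not necessarily the paper's estimator ĥ_N. The function name is hypothetical.

```python
from typing import List, Sequence


def isotonic_bernoulli_mle(successes: Sequence[int], trials: Sequence[int]) -> List[float]:
    """Nondecreasing MLE of per-cell success probabilities via PAVA.

    Cells are assumed sorted by the covariate (e.g. past choice proportion);
    cell i contributes trials[i] Bernoulli trials with successes[i] successes.
    """
    if len(successes) != len(trials):
        raise ValueError("successes and trials must have the same length")
    # Each block holds [pooled successes, pooled trials, number of cells pooled].
    blocks: List[List[int]] = []
    for s, n in zip(successes, trials):
        if n <= 0 or not 0 <= s <= n:
            raise ValueError("each cell needs 0 <= successes <= trials and trials > 0")
        blocks.append([s, n, 1])
        # Pool backwards while the previous block's mean exceeds the last one's;
        # s_a/n_a > s_b/n_b is tested as s_a*n_b > s_b*n_a to stay in integers.
        while len(blocks) > 1 and blocks[-2][0] * blocks[-1][1] > blocks[-1][0] * blocks[-2][1]:
            s_last, n_last, c_last = blocks.pop()
            blocks[-1][0] += s_last
            blocks[-1][1] += n_last
            blocks[-1][2] += c_last
    # Each pooled block's fitted probability is its pooled success rate.
    fitted: List[float] = []
    for s, n, count in blocks:
        fitted.extend([s / n] * count)
    return fitted


# Hypothetical counts for four cells; raw rates 0.5, 0.0, 0.75, 0.5 violate monotonicity.
print(isotonic_bernoulli_mle([1, 0, 3, 2], [2, 2, 4, 4]))  # [0.25, 0.25, 0.625, 0.625]
```

Pooling adjacent violators until none remain gives the same fitted values as taking slopes of the greatest convex minorant of the cumulative sum diagram. This construction is the one usually abbreviated "slogcm" in the monotone-estimation literature.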

Load-bearing premise

The assumption that options chosen more frequently or more intensely in the past are more likely to be chosen again in the future.

What would settle it

If the maximum likelihood estimator under this monotonicity fails to outperform baseline methods in predicting held-out choices on real user data, the practical value of the framework would be called into question.
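The criterion above needs a concrete scoring rule. One common choice for predicted choice probabilities is mean held-out log-loss (negative log-likelihood), where lower is better. The sketch assumes binary held-out outcomes and a constant-probability baseline. The metric, the function name and all numbers are illustrative assumptions, not the paper's protocol or results.

```python
import math
from typing import Sequence


def mean_log_loss(probs: Sequence[float], outcomes: Sequence[int], eps: float = 1e-12) -> float:
    """Mean negative log-likelihood of binary outcomes (1 = option chosen)."""
    if len(probs) != len(outcomes) or not probs:
        raise ValueError("need equally long, non-empty inputs")
    total = 0.0
    for p, y in zip(probs, outcomes):
        p = min(max(p, eps), 1.0 - eps)  # clip so log(0) cannot occur
        total -= math.log(p) if y == 1 else math.log(1.0 - p)
    return total / len(probs)


# Hypothetical held-out choices and predictions (not data from the paper).
held_out = [1, 1, 0, 1]
model_probs = [0.8, 0.7, 0.3, 0.9]   # e.g. from a monotone-constrained fit
baseline_probs = [0.5] * 4           # uninformative constant baseline
print(mean_log_loss(model_probs, held_out) < mean_log_loss(baseline_probs, held_out))  # True
```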

Original abstract

In many real-world settings such as online recommendation or consumer choice modeling, individuals make repeated choices from a fixed set of options. Accurately estimating their underlying preferences is essential for generating personalized future recommendations. Probabilistic models for understanding user choice behavior from past decisions can serve as a valuable addition to existing recommender systems and choice prediction methods. To this end, in this article, we introduce a novel statistical framework for predicting user preferences based on their past choices, under a natural monotonicity assumption: options that were chosen more frequently or more intensely in the past are more likely to be chosen again in the future. Our approach builds on a parametric model proposed by Le Goff and Soulier (2017), originally used to describe how ants in an ant colony select a path among many pre-existing paths. We propose a non-parametric generalization of this model, drawing inspiration from the generalized elephant random walk introduced by Maulik et al. (2024). We develop a method of maximum likelihood estimation of the user preference probabilities under the above-mentioned monotonicity constraint. We also derive theoretical guarantees for our estimator and demonstrate the effectiveness of our method through both simulated experiments and real-world datasets.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces a novel statistical framework for predicting user preferences from repeated choices among a fixed set of options. It assumes a natural monotonicity property (options chosen more frequently or intensely in the past are more likely to be chosen again) and generalizes the parametric model of Le Goff and Soulier (2017) to a non-parametric version inspired by generalized elephant random walks. The paper develops maximum likelihood estimation of preference probabilities under the monotonicity constraint, derives theoretical guarantees for the estimator, and evaluates the approach on simulated experiments and real-world datasets.

Significance. If the claimed MLE procedure and its theoretical guarantees are valid, the framework would supply a flexible non-parametric tool for preference estimation in recommender systems and consumer choice modeling. The explicit incorporation of monotonicity and the cross-disciplinary link to elephant random walks constitute a potentially useful extension of existing parametric models, though the magnitude of the advance depends on the concrete form of the estimator, the strength of the guarantees, and the empirical gains demonstrated.

major comments (1)

- [Abstract] Abstract: the central claims rest on the development of an MLE under the monotonicity constraint together with derived theoretical guarantees, yet the abstract supplies only a high-level description; without the full derivation, the explicit form of the estimator, the precise statement of the guarantees (consistency, rates, etc.), and any supporting lemmas or proofs, it is impossible to verify whether the mathematics supports the claims or to identify potential gaps.

minor comments (1)

- The abstract refers to validation on 'real-world datasets' and 'simulated experiments' but provides no information on the specific datasets, choice of metrics, or baseline comparators used.

Simulated Author's Rebuttal

We thank the referee for their thoughtful review and for highlighting the need for clarity on how the abstract relates to the technical contributions. We address the single major comment below.


-

Referee: [Abstract] Abstract: the central claims rest on the development of an MLE under the monotonicity constraint together with derived theoretical guarantees, yet the abstract supplies only a high-level description; without the full derivation, the explicit form of the estimator, the precise statement of the guarantees (consistency, rates, etc.), and any supporting lemmas or proofs, it is impossible to verify whether the mathematics supports the claims or to identify potential gaps.

Authors: We agree that the abstract is deliberately high-level, as is conventional for the format. The explicit MLE under the monotonicity constraint is derived in Section 3 via a constrained optimization that generalizes the parametric ant-colony model of Le Goff and Soulier (2017) using the generalized elephant random walk framework of Maulik et al. (2024). The consistency and convergence-rate guarantees are stated as Theorem 4.1 and Theorem 4.2 in Section 4, with the supporting lemmas and proofs collected in the appendix. To keep the abstract concise, we elected to direct readers to these sections for verification. If the editor prefers, we can add one sentence to the abstract that names the estimator and the main theorems, but we believe the current structure is standard and sufficient. revision: no

Circularity Check

No significant circularity detected from abstract alone


The abstract describes a novel non-parametric generalization of an external parametric model (Le Goff and Soulier 2017) inspired by a cited random walk construction (Maulik et al. 2024), with MLE under an explicit monotonicity constraint on choice frequencies and derived theoretical guarantees. No equations, derivation steps, or fitted quantities are presented that reduce by construction to inputs or self-citations. The monotonicity assumption is stated as an external modeling choice rather than derived internally, and the estimator is positioned as standard constrained MLE without renaming or self-referential prediction. With only the abstract available and no load-bearing self-citation chain or definitional loop exhibited, the derivation chain cannot be shown to collapse; this is the expected honest non-finding when full text and specific reductions are unavailable.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption. Monotonicity: options chosen more frequently or more intensely in the past are more likely to be chosen again in the future.

Lean theorems connected to this paper

-

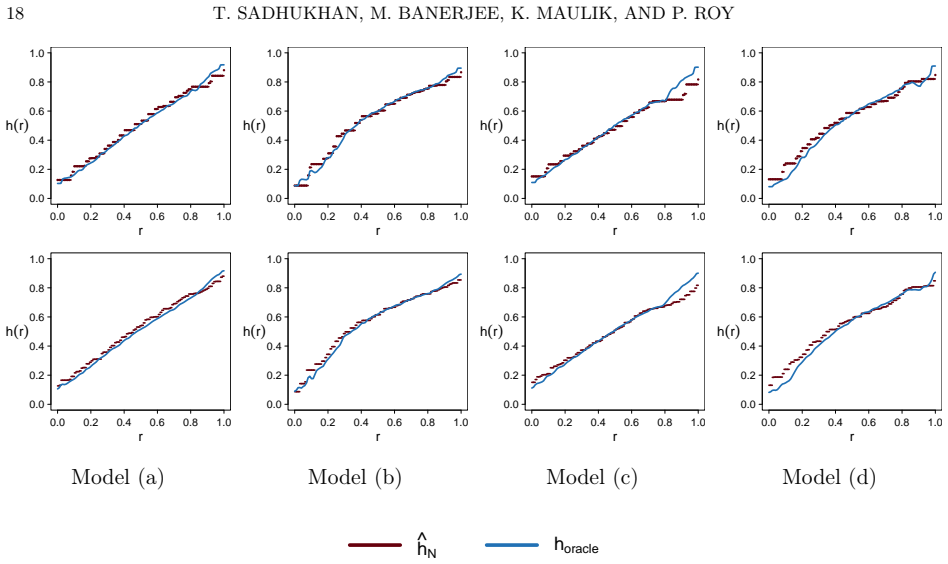

IndisputableMonolith/Cost/FunctionalEquation.lean · washburn_uniqueness_aczel · unclear · "options that were chosen more frequently or more intensely in the past are more likely to be chosen again in the future... nonparametric maximum likelihood estimator ĥ_N ... slogcm ... Chernoff’s distribution"

-

IndisputableMonolith/Foundation/AbsoluteFloorClosure.lean · absolute_floor_iff_bare_distinguishability · unclear · "generalized elephant random walk... h(x) = p f(x) + (1-p)(1-f(x))"
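The second snippet quotes the step rule h(x) = p f(x) + (1-p)(1-f(x)) of a generalized elephant random walk. The simulation sketch below rests on assumptions not stated on this page: x is the running fraction of past steps equal to +1, f maps [0, 1] into [0, 1], and h(x) is the probability that the next step is +1. Under that reading, f(x) = x gives the classic elephant random walk, in which the walker repeats a uniformly chosen past step with probability p and reverses it otherwise. The function names are hypothetical.

```python
import random
from typing import Callable, List


def h(x: float, p: float, f: Callable[[float], float]) -> float:
    """Step rule h(x) = p f(x) + (1 - p)(1 - f(x)), read here as P(next step = +1)."""
    fx = f(x)
    return p * fx + (1.0 - p) * (1.0 - fx)


def simulate_gerw(n_steps: int, p: float, f: Callable[[float], float],
                  first_step: int = 1, seed: int = 0) -> List[int]:
    """Simulate +/-1 steps, assuming x is the fraction of past steps equal to +1."""
    if n_steps < 1 or first_step not in (1, -1):
        raise ValueError("need n_steps >= 1 and first_step in {1, -1}")
    rng = random.Random(seed)
    steps = [first_step]
    n_plus = 1 if first_step == 1 else 0
    for n in range(1, n_steps):
        x = n_plus / n  # fraction of the n past steps that were +1
        step = 1 if rng.random() < h(x, p, f) else -1
        steps.append(step)
        if step == 1:
            n_plus += 1
    return steps


# f(x) = x gives the classic elephant random walk; p = 0.75 is arbitrary.
walk = simulate_gerw(1000, 0.75, lambda x: x, seed=42)
print(sum(walk))  # final position of the walk
```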

Reference graph

Works this paper leans on

[1] Charu C. Aggarwal. Recommender Systems: The Textbook. Springer Cham, 2016.

[2] Miriam Ayer, H. D. Brunk, G. M. Ewing, W. T. Reid, and Edward Silverman. An empirical distribution function for sampling with incomplete information. Ann. Math. Statist., 26:641–647, 1955.

[3] Moulinath Banerjee and Jon A. Wellner. Likelihood ratio tests for monotone functions. Ann. Statist., 29(6):1699–1731, 2001.

[4] Moulinath Banerjee and Jon A. Wellner. Confidence intervals for current status data. Scand. J. Statist., 32(3):405–424, 2005.

[5] H. D. Brunk. Estimation of isotonic regression. In Nonparametric Techniques in Statistical Inference (Proc. Sympos., Indiana Univ., Bloomington, Ind., 1969), pages 177–197. Cambridge Univ. Press, London-New York, 1970.

[6] Xin Chen, Alex Reibman, and Sanjay Arora. Sequential recommendation model for next purchase prediction. In Proceedings of the 5th International Conference on Machine Learning & Applications, volume 13 of Computer Science & Information Technology (CS & IT), pages 141–158. Academy & Industry Research Collaboration Center, 2023.

[7] Line Chloé Le Goff and Philippe Soulier. Parameter estimation of a two-colored urn model class. Int. J. Biostat., 13(1):20160029, 24, 2017.

[8] Paul Covington, Jay Adams, and Emre Sargin. Deep neural networks for YouTube recommendations. In Proceedings of the 10th ACM Conference on Recommender Systems, RecSys ’16, pages 191–198, New York, NY, USA, 2016. Association for Computing Machinery.

[9] J.-L. Deneubourg, S. Aron, S. Goss, and J. M. Pasteels. The self-organizing exploratory pattern of the argentine ant. J. Insect Behav., 3(2):159–168, 1990.

[10] Ulf Grenander. On the theory of mortality measurement. II. Skand. Aktuarietidskr., 39:125–153 (1957), 1956.

[11] P. Groeneboom. Estimating a monotone density. In Proceedings of the Berkeley conference in honor of Jerzy Neyman and Jack Kiefer, Vol. II (Berkeley, Calif., 1983), Wadsworth Statist./Probab. Ser., pages 539–555. Wadsworth, Belmont, CA, 1985.

[12] Piet Groeneboom and Geurt Jongbloed. Nonparametric estimation under shape constraints, volume 38 of Cambridge Series in Statistical and Probabilistic Mathematics. Cambridge University Press, New York,

[13] Estimators, algorithms and asymptotics

[14] Piet Groeneboom and Jon A. Wellner. Information bounds and nonparametric maximum likelihood estimation, volume 19 of DMV Seminar. Birkhäuser Verlag, Basel, 1992.

[15] F. Maxwell Harper and Joseph A. Konstan. The MovieLens datasets: History and context. ACM Trans. Interact. Intell. Syst., 5(4), December 2015.

[16] Youping Huang and Cun-Hui Zhang. Estimating a monotone density from censored observations. Ann. Statist., 22(3):1256–1274, 1994.

[17] Krishanu Maulik, Parthanil Roy, and Tamojit Sadhukhan. Asymptotic properties of generalized elephant random walks, 2024. Preprint.

[18] Mahdi Pakdaman Naeini, Gregory Cooper, and Milos Hauskrecht. Obtaining well calibrated probabilities using Bayesian binning. Proceedings of the AAAI Conference on Artificial Intelligence, 29(1), Feb. 2015.

[19] B. L. S. Prakasa Rao. Estimation of a unimodal density. Sankhyā Ser. A, 31:23–36, 1969.

[20] Tim Robertson, F. T. Wright, and R. L. Dykstra. Order restricted statistical inference. Wiley Series in Probability and Mathematical Statistics: Probability and Mathematical Statistics. John Wiley & Sons, Ltd., Chichester, 1988.

[21] Anton Schick and Qiqing Yu. Consistency of the GMLE with mixed case interval-censored data. Scand. J. Statist., 27(1):45–55, 2000.

[22] Shuguang Song. Estimation with univariate “mixed case” interval censored data. Statist. Sinica, 14(1):269–282, 2004.

[23] Aad W. van der Vaart and Jon A. Wellner. Weak convergence and empirical processes: With applications to statistics. Springer Series in Statistics. Springer-Verlag, New York, 1996.

[24] Jon A. Wellner and Ying Zhang. Two estimators of the mean of a counting process with panel count data. Technical report, University of Washington, Department of Statistics, November 1998. Preprint.

[25] Jon A. Wellner and Ying Zhang. Two estimators of the mean of a counting process with panel count data. Ann. Statist., 28(3):779–814, 2000.

[26] Shuai Zhang, Lina Yao, Aixin Sun, and Yi Tay. Deep learning based recommender system: A survey and new perspectives. ACM Comput. Surv., 52(1), February 2019.

Theoretical Statistics and Mathematics Unit, Indian Statistical Institute, 203 B. T. Road, Kolkata 700108, West Bengal, India. Email address: tamojit96sadhukhan@gmail.com. Department of Statistics, Unive...
