
Learning to Focus Synthetic Aperture Radar On-line with State-Space Models

Pith reviewed 2026-05-12 03:31 UTC · model grok-4.3

The pith

The first online SAR processor forms focused images line by line using a distilled state-space model.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

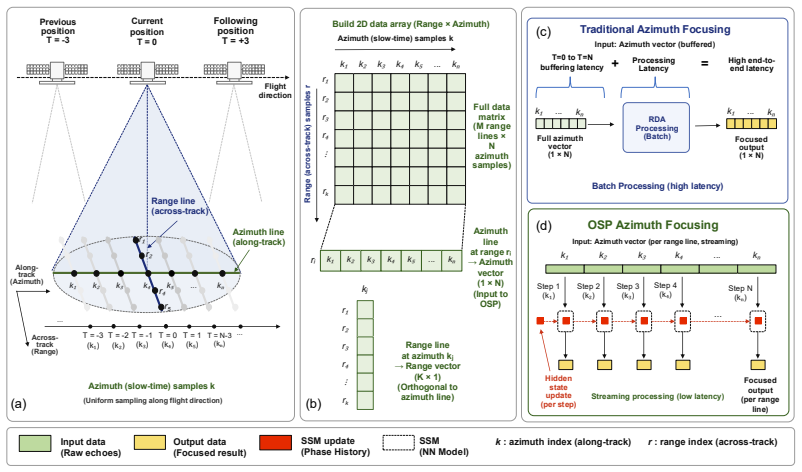

We present the first Online SAR Processor (OSP), an online image-formation framework that treats SAR sensing as a stream and produces focused SAR image output line by line during acquisition. OSP uses a tiny state-space surrogate model trained with teacher-student distillation and multi-stage losses. We evaluate the method on 300GB of SAR data from Maya4, a Sentinel-1-derived dataset containing raw, range-compressed, range-cell-migration-corrected, and azimuth-compressed products. Relative to a linewise digital-signal-processing baseline, OSP delivers approximately 70× lower latency and 130× lower memory use; on a single AMD CPU core it processes one row in 16 ms with a memory footprint of 6 MB.

What carries the argument

A tiny state-space surrogate model trained with teacher-student distillation and multi-stage losses that learns to replicate the sequential focusing steps of conventional SAR processors.
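To make the streaming idea concrete, here is a minimal sketch of one state-space layer that consumes one range line per call and keeps a fixed-size state for every range cell. It assumes the recurrence runs along azimuth with the range cells treated as a batch. The class name, pole placement, `d_state`, `dt` and the zero-order-hold discretisation are illustrative assumptions, not the paper's architecture or trained weights.

```python
import numpy as np


class StreamingDiagonalSSM:
    """Hypothetical diagonal complex SSM layer, stepped once per azimuth line.

    Every range cell carries its own state vector of length d_state, so the
    memory held between calls is n_range * d_state complex numbers no matter
    how many lines have already been processed.
    """

    def __init__(self, n_range: int, d_state: int, dt: float = 0.1, seed: int = 0):
        rng = np.random.default_rng(seed)
        # Continuous-time poles with negative real part give a stable recurrence.
        a = -0.5 + 1j * np.pi * np.arange(d_state)
        # Zero-order-hold discretisation of a diagonal system with B = 1.
        self.a_bar = np.exp(dt * a)
        self.b_bar = (self.a_bar - 1.0) / a
        # Readout weights (random here; they would be learned in a trained model).
        self.c = rng.standard_normal(d_state) + 1j * rng.standard_normal(d_state)
        self.d = 1.0
        self.h = np.zeros((n_range, d_state), dtype=complex)

    def step(self, line: np.ndarray) -> np.ndarray:
        """Consume one complex range line of shape (n_range,) and emit one output line."""
        self.h = self.a_bar * self.h + self.b_bar * line[:, None]
        return self.h @ self.c + self.d * line


# Usage: feed lines one at a time, as they would arrive from the sensor.
layer = StreamingDiagonalSSM(n_range=8, d_state=4)
rng = np.random.default_rng(1)
for _ in range(5):
    raw_line = rng.standard_normal(8) + 1j * rng.standard_normal(8)
    out_line = layer.step(raw_line)
print(out_line.shape, layer.h.shape)
```

The design point is that latency and memory per line do not grow with the number of lines already received, which is what distinguishes this kind of recurrence from block processing.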

If this is right

- SAR data can be focused and passed to analysis tasks incrementally as acquisition proceeds instead of waiting for a full scene.

- Processing runs at 16 ms per row with a 6 MB memory footprint on a single CPU core.

- Memory use drops by a factor of roughly 130 and latency by a factor of roughly 70 compared with linewise digital-signal-processing baselines.

- The resulting images remain clear enough to support downstream tasks such as vessel detection and flood mapping.
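The headline ratios can be turned back into approximate absolute figures for the linewise DSP baseline. The sketch below only multiplies the reported numbers (16 ms per row, 6 MB, ~70× and ~130×); because the ratios are quoted as approximate, the derived baseline values are approximate too and are not figures reported in the paper.

```python
OSP_MS_PER_ROW = 16.0   # reported: one row on a single AMD CPU core
OSP_MEMORY_MB = 6.0     # reported memory footprint
LATENCY_RATIO = 70.0    # reported: ~70x lower latency than the linewise DSP baseline
MEMORY_RATIO = 130.0    # reported: ~130x lower memory than the linewise DSP baseline


def implied_figures() -> dict:
    """Back out approximate baseline figures from the reported ratios."""
    return {
        "osp_rows_per_second": 1000.0 / OSP_MS_PER_ROW,
        "baseline_ms_per_row": OSP_MS_PER_ROW * LATENCY_RATIO,
        "baseline_memory_mb": OSP_MEMORY_MB * MEMORY_RATIO,
    }


for name, value in implied_figures().items():
    print(f"{name}: {value:g}")
```

Read this way, the baseline would need roughly 1.1 s per row and about 780 MB of memory, against about 62 rows per second for OSP on one core.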

Where Pith is reading between the lines

- The approach could enable closed-loop SAR systems that adjust transmission parameters or flight paths on the basis of partially formed images.

- Similar distillation of state-space models might reduce compute in other sequential radar or sonar pipelines that currently rely on batch processing.

- Onboard deployment could cut the volume of raw data that must be downlinked from satellites by sending only focused results or detections.

Load-bearing premise

The state-space model continues to produce focused images of usable quality when applied to SAR data from new scenes or conditions outside the training set.

What would settle it

Applying the OSP to an independent set of raw SAR acquisitions from a different sensor or geographic region and measuring a large drop in accuracy for vessel detection or flood mapping relative to standard focused outputs.

Original abstract

Conventional focusing methods for Synthetic Aperture Radar (SAR) employ block processing efficiently but remain latency-heavy processes that prevent the realisation of a closed-loop cognitive SAR vision system. We present the first Online SAR Processor (OSP), an online image-formation framework that treats SAR sensing as a stream and produces focused SAR image output line by line during acquisition. OSP uses a tiny state-space surrogate model trained with teacher-student distillation and multi-stage losses. We evaluate the method on 300GB of SAR data from Maya4, a Sentinel-1-derived dataset containing raw, range-compressed, range-cell-migration-corrected, and azimuth-compressed products. Relative to a linewise digital-signal-processing baseline, OSP delivers approximately 70× lower latency and 130× lower memory use; on a single AMD CPU core it processes one row in 16 ms with a memory footprint of 6 MB whilst maintaining a focusing quality high enough to support downstream decisions, which we illustrate with vessel detection and flood-mapping tasks.
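The abstract does not spell out the multi-stage losses. One plausible reading, given that Maya4 supplies range-compressed, range-cell-migration-corrected and azimuth-compressed products and that the teacher is a conventional DSP chain, is a weighted sum of per-stage errors between student outputs and those teacher products. The sketch below assumes exactly that; the stage keys, the complex mean-squared error and the weights are hypothetical, not the paper's loss.

```python
from typing import Dict, Optional

import numpy as np

# Hypothetical stage keys mirroring the intermediate Maya4 products.
STAGES = ("rc", "rcmc", "ac")


def complex_mse(pred: np.ndarray, target: np.ndarray) -> float:
    """Mean squared magnitude of the complex error."""
    return float(np.mean(np.abs(pred - target) ** 2))


def multi_stage_loss(student: Dict[str, np.ndarray],
                     teacher: Dict[str, np.ndarray],
                     weights: Optional[Dict[str, float]] = None) -> float:
    """Weighted sum of per-stage errors between student outputs and DSP teacher products."""
    if weights is None:
        weights = {stage: 1.0 for stage in STAGES}
    return sum(weights[s] * complex_mse(student[s], teacher[s]) for s in STAGES)


rng = np.random.default_rng(0)
teacher = {s: rng.standard_normal((4, 16)) + 1j * rng.standard_normal((4, 16)) for s in STAGES}
student = {s: v + 0.1 for s, v in teacher.items()}  # constant error of 0.1 at every stage
print(multi_stage_loss(student, teacher))  # about 0.03 (three stages, 0.1**2 each)
```

Supervising intermediate stages, rather than only the final azimuth-compressed image, gives the student a target at each point of the DSP chain it is meant to imitate.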

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims to introduce the first Online SAR Processor (OSP), an online image-formation framework that processes SAR data as a stream and produces focused images line by line. It uses a compact state-space surrogate model trained via teacher-student distillation and multi-stage losses. On a 300 GB Maya4 dataset derived from Sentinel-1 (with raw, range-compressed, RCMC, and azimuth-compressed products), the method reports ~70× lower latency and ~130× lower memory than a linewise DSP baseline (16 ms/row and 6 MB on one AMD CPU core) while supporting vessel detection and flood-mapping tasks.

Significance. If the focusing quality claim holds under broader validation, this work could enable real-time closed-loop cognitive SAR systems by shifting from block to streaming processing with dramatic efficiency gains. The scale of the Maya4 evaluation dataset and the concrete latency/memory numbers are positive features. The approach also demonstrates a practical use of state-space models for a signal-processing surrogate, which is a strength worth highlighting if the quality metrics are added.

major comments (2)

- [Abstract and Evaluation section] The central claim that OSP 'maintains a focusing quality high enough to support downstream decisions' rests on vessel detection and flood mapping success, but the manuscript provides no standard SAR image-quality metrics (PSLR, ISLR, image entropy, or pixel-wise comparison) against the range-cell-migration-corrected / azimuth-compressed reference products. Without these, it is impossible to determine whether the online approximation introduces systematic defocusing invisible to the two chosen tasks.

- [Evaluation section] No error bars, multiple random seeds, or ablation studies on the multi-stage losses and distillation procedure are reported. This makes the reported 16 ms / 6 MB figures difficult to interpret as robust and leaves open whether the performance depends on dataset-specific tuning.
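The metrics the referee asks for are straightforward to compute. The sketch below uses common textbook definitions: PSLR and ISLR from a 1-D cut through a point-target response, with the mainlobe taken to end at the first nulls; entropy of the normalised image intensity; and a pixel-wise normalised RMSE in dB against a reference product. The function names are hypothetical, and conventions (for example, where the mainlobe ends) vary between processors.

```python
import numpy as np


def pslr_islr_db(profile: np.ndarray) -> tuple:
    """PSLR and ISLR (dB) of a 1-D cut through a point-target response."""
    p = np.abs(np.asarray(profile)) ** 2
    peak = int(np.argmax(p))
    # Walk outwards from the peak while power keeps falling: these are the first nulls.
    lo = peak
    while lo > 0 and p[lo - 1] < p[lo]:
        lo -= 1
    hi = peak
    while hi < p.size - 1 and p[hi + 1] < p[hi]:
        hi += 1
    mainlobe = p[lo:hi + 1]
    sidelobes = np.concatenate([p[:lo], p[hi + 1:]])
    pslr = 10 * np.log10(sidelobes.max() / p[peak])
    islr = 10 * np.log10(sidelobes.sum() / mainlobe.sum())
    return float(pslr), float(islr)


def image_entropy(img: np.ndarray) -> float:
    """Entropy of the normalised intensity; lower values usually mean sharper focus."""
    intensity = np.abs(img) ** 2
    q = intensity / intensity.sum()
    q = q[q > 0]
    return float(-(q * np.log(q)).sum())


def nrmse_db(output: np.ndarray, reference: np.ndarray) -> float:
    """Pixel-wise error energy relative to the reference product, in dB."""
    return float(20 * np.log10(np.linalg.norm(output - reference) / np.linalg.norm(reference)))


# Usage: an ideal, finely sampled sinc response has a PSLR close to -13.26 dB.
x = np.linspace(-20, 20, 4001)
pslr, islr = pslr_islr_db(np.sinc(x))
print(round(pslr, 2), round(islr, 2))
```

Applied to point targets in the OSP output and in the azimuth-compressed reference, these numbers would expose a widened mainlobe or raised sidelobes even when a downstream detector still succeeds.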

minor comments (2)

- [Methods] The description of the state-space model architecture would benefit from an explicit equation or diagram showing how the surrogate maps raw or range-compressed inputs to focused output lines.

- [Figures] Figure captions for the Maya4 examples should include the exact processing stage of the reference image (e.g., 'azimuth-compressed') for direct visual comparison.
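For orientation, an explicit equation of the kind requested could take the standard form of a discretised linear state-space layer as used by S4-style models. It is written here under the assumption that the recurrence index k runs over azimuth lines and each range cell is processed independently; it is a generic template, not the paper's stated architecture.

```latex
\begin{aligned}
\mathbf{h}_k &= \bar{A}\,\mathbf{h}_{k-1} + \bar{B}\,u_k, \qquad
y_k = C\,\mathbf{h}_k + D\,u_k, \\
\bar{A} &= \exp(\Delta A), \qquad
\bar{B} = (\Delta A)^{-1}\bigl(\exp(\Delta A) - I\bigr)\,\Delta B,
\end{aligned}
```

Here u_k is the input sample of one range cell at azimuth line k, h_k is the hidden state carried to the next line, Δ is the step size, and Ā, B̄ are the zero-order-hold discretisations of the continuous-time matrices A and B.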

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment point by point below, indicating the revisions we will incorporate to strengthen the manuscript.


- Referee: [Abstract and Evaluation section] The central claim that OSP 'maintains a focusing quality high enough to support downstream decisions' rests on vessel detection and flood mapping success, but the manuscript provides no standard SAR image-quality metrics (PSLR, ISLR, image entropy, or pixel-wise comparison) against the range-cell-migration-corrected / azimuth-compressed reference products. Without these, it is impossible to determine whether the online approximation introduces systematic defocusing invisible to the two chosen tasks.

Authors: We agree that standard SAR focusing metrics provide a valuable direct assessment of image quality. While the downstream task results demonstrate that the approximation is sufficient for practical decision-making, they do not rule out subtle defocusing effects. In the revised manuscript we will add quantitative comparisons using PSLR, ISLR, image entropy, and pixel-wise error metrics against the RCMC and azimuth-compressed reference products on the Maya4 dataset. revision: yes

-

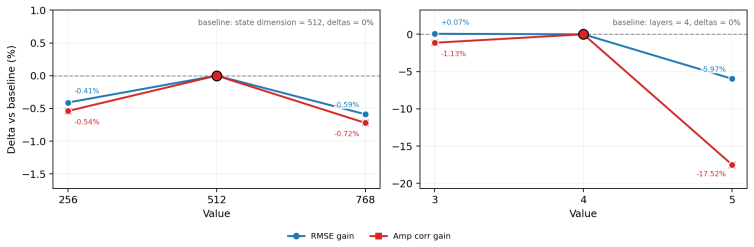

Referee: [Evaluation section] Evaluation section: No error bars, multiple random seeds, or ablation studies on the multi-stage losses and distillation procedure are reported. This makes the reported 16 ms / 6 MB figures difficult to interpret as robust and leaves open whether the performance depends on dataset-specific tuning.

Authors: We acknowledge that reporting statistical variability and component ablations would improve interpretability of the latency and memory results. The current figures reflect a single training run. In the revision we will include error bars computed over multiple random seeds and ablation studies that isolate the contribution of each loss term and the distillation procedure. revision: yes
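A minimal way to attach error bars to per-row latency, or to any quality figure obtained from several training seeds, is to repeat the measurement and report a mean with a sample standard deviation. The sketch below assumes a hypothetical `process_row` callable standing in for one OSP step; it times each row with `time.perf_counter` after a short untimed warm-up. Memory footprint needs a separate measurement and is not covered here.

```python
import statistics
import time
from typing import Callable, List, Sequence, Tuple


def per_row_latency_ms(process_row: Callable, rows: Sequence, warmup: int = 3) -> List[float]:
    """Time each call of `process_row` in milliseconds, after `warmup` untimed calls."""
    for row in rows[:warmup]:
        process_row(row)
    timings = []
    for row in rows:
        start = time.perf_counter()
        process_row(row)
        timings.append((time.perf_counter() - start) * 1e3)
    return timings


def mean_and_std(values: Sequence[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation (needs at least two values)."""
    return statistics.mean(values), statistics.stdev(values)


# Usage with a stand-in workload; in practice `process_row` would be one OSP step.
rows = [list(range(10_000))] * 20
latencies = per_row_latency_ms(sum, rows)
mean_ms, std_ms = mean_and_std(latencies)
print(f"{mean_ms:.3f} ± {std_ms:.3f} ms per row over {len(latencies)} rows")
```

The same `mean_and_std` helper applies unchanged to a list of per-seed PSLR, entropy or detection scores.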

Circularity Check

No significant circularity in the claimed derivation


The paper describes an empirical training procedure: a state-space model is distilled from a conventional DSP teacher using multi-stage losses on the Maya4 dataset. No load-bearing derivation chain is presented that reduces by construction to its own inputs, fitted parameters renamed as predictions, or self-citation of an unverified uniqueness result. The central claim (online focusing with acceptable quality) is supported by measured latency/memory numbers and downstream task performance rather than any algebraic identity or ansatz smuggled through prior work by the same authors. This is a standard supervised-learning setup whose outputs are not forced by the training inputs themselves.

Axiom & Free-Parameter Ledger

free parameters (1)

- state-space model parameters

axioms (1)

- Domain assumption: state-space models can approximate the sequential dependencies in the SAR range-cell-migration-correction and azimuth-compression operations.
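The axiom is easiest to see in an idealised case. If azimuth compression for one range cell were a plain convolution with a fixed, finite reference (ignoring range-cell migration and range-dependent references), it would be a causal FIR filter, and any FIR filter is exactly a linear state-space model whose state is a shift register holding the last L inputs. The sketch below checks that equivalence numerically with a toy chirp; it illustrates the assumption only, is not the paper's model, and its function names are hypothetical.

```python
import numpy as np


def fir_as_ssm(taps: np.ndarray):
    """State-space (A, B, C) realisation of a causal FIR filter with the given taps."""
    n = len(taps)
    A = np.eye(n, k=-1)          # shift register: state[i] <- state[i - 1]
    B = np.zeros(n)
    B[0] = 1.0                   # the newest sample enters state[0]
    C = np.asarray(taps, dtype=complex)
    return A, B, C


def run_ssm(A, B, C, u):
    """Run x_k = A x_{k-1} + B u_k, y_k = C x_k over the input sequence u."""
    x = np.zeros(A.shape[0], dtype=complex)
    y = np.empty(len(u), dtype=complex)
    for k, u_k in enumerate(u):
        x = A @ x + B * u_k
        y[k] = C @ x
    return y


rng = np.random.default_rng(0)
reference = np.exp(1j * np.pi * 0.01 * np.arange(-16, 16) ** 2)  # toy azimuth chirp
taps = np.conj(reference[::-1])                                  # matched filter
signal = rng.standard_normal(200) + 1j * rng.standard_normal(200)
y_ssm = run_ssm(*fir_as_ssm(taps), signal)
y_conv = np.convolve(signal, taps)[: len(signal)]
print(np.allclose(y_ssm, y_conv))
```

This exact realisation needs one state entry per aperture sample, which is the motivation for a compact learned state that only approximates the behaviour; being causal, it also delivers a target's focused response only once the whole aperture has been received.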

Lean theorems connected to this paper

- IndisputableMonolith/Foundation/AlexanderDuality.lean · alexander_duality_circle_linking · unclear · "We re-formulate SAR image formation as online inference problem under the linear synthetic aperture assumption"


Appendix excerpts

A Reproducibility Details and Loss Implementation. Compute environment: training was run on PBS gpu4_std CUDA nodes with one G...

Batched range-Doppler algorithm (RDA) on a 20,000 × 20,000 block:

1. Row-wise FFT, column-wise FFT
2. Three element-wise complex multiplies (RC, RCMC and AC filters)
3. Row-wise IFFT, column-wise IFFT

D.2.1 FLOPs

Table 11: FLOP breakdown for batched RDA (20,000 × 20,000).

| Operation | Expression | GFLOPs |
|---|---|---|
| FFT dim=1 | 20,000 × 5 × 20,000 × log2(20,000) | 28.6 |
| FFT dim=0 | 20,000 × 5 × 20,000 × log2(20,000) | 28.6 |
| Multiply (RC) | 20,000² × 6 | 2.4 |
| Multiply (RCMC) | 20,000² × 6 | 2.4 |
| Multiply (AC) | 20,000² × 6 | 2.4 |
| IFFT dim=1 | (same as FFT dim=1) | 28.6 |
| IFFT dim=0 | (same as... | |

Linewise RDA iteration on a 972 × 20,000 buffer:

1. Row-wise FFT (dim=1, N = 20,000, applied to 1 row)
2. Column-wise FFT (dim=0, N = 972, applied to 20,000 columns)
3. Element-wise complex multiply with the range-compression filter
4. Element-wise complex multiply with the range-cell-migration-correction filter
5. Element-wise complex multiply with the azimuth-compression filter
6. Row-wise IFFT (dim=1, N = 20,000, applied to 972 rows)
7. Column-wise IFFT (same cost as step 2)

Table 12: FLOP breakdown per linewise RDA iteration (972 × 20,000).

| Operation | Expression | GFLOPs |
|---|---|---|
| FFT dim=1 | 5 × 20,000 × log2(20,000) | 0.000143 |
| FFT dim=0 | 20,000 × 5 × 972 × log2(972) | 0.965 |
| Multiply (RC filter) | 972 × 20,000 × 6 | 0.0194 |
| Multiply (RCMC filter) | 972 × 20,000 × 6 | 0.0194 |
| Multiply (AC filter) | 972 × 20,000 × 6 | 0.0194 |
| IFFT dim=1 | 972 × 20,000 × log... | |
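The expressions in Table 11 and Table 12 follow two common counting conventions: about 5 N log2 N real FLOPs per length-N complex FFT and 6 real FLOPs per complex multiply. The short script below reproduces the batched RDA rows of Table 11 and the column-FFT row of Table 12 under those conventions. The function names are hypothetical, and the IFFT dim=0 cost is assumed equal to the FFT dim=0 cost because that table row is cut off.

```python
import math


def fft_flops(n: int, count: int) -> float:
    """Approximate real FLOPs for `count` complex FFTs of length n (5 N log2 N each)."""
    return 5.0 * n * math.log2(n) * count


def complex_multiply_flops(elements: int) -> float:
    """Real FLOPs for element-wise complex multiplies (4 multiplies + 2 adds each)."""
    return 6.0 * elements


def batched_rda_gflops(n_az: int = 20_000, n_rg: int = 20_000) -> dict:
    """Per-operation GFLOPs for a batched range-Doppler pass over an n_az x n_rg block."""
    rows = {
        "FFT dim=1": fft_flops(n_rg, n_az),      # row-wise: n_az FFTs of length n_rg
        "FFT dim=0": fft_flops(n_az, n_rg),      # column-wise: n_rg FFTs of length n_az
        "Multiply (RC)": complex_multiply_flops(n_az * n_rg),
        "Multiply (RCMC)": complex_multiply_flops(n_az * n_rg),
        "Multiply (AC)": complex_multiply_flops(n_az * n_rg),
        "IFFT dim=1": fft_flops(n_rg, n_az),
        "IFFT dim=0": fft_flops(n_az, n_rg),     # assumed: same cost as FFT dim=0
    }
    return {name: flops / 1e9 for name, flops in rows.items()}


table11 = batched_rda_gflops()
for name, gflops in table11.items():
    print(f"{name:16s} {gflops:6.1f} GFLOPs")
print(f"{'Total':16s} {sum(table11.values()):6.1f} GFLOPs")

# Linewise buffer of 972 azimuth lines: column-wise FFT over 20,000 range cells.
print(f"Linewise FFT dim=0: {fft_flops(972, 20_000) / 1e9:.3f} GFLOPs")
```

Under these conventions the four full-block transforms dominate the batched cost, while the three filter multiplies add comparatively little.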

Online processor, per input range line:

1. Row-wise FFT, complex multiply with the RC filter, row-wise IFFT (range compression)
2. Tiny neural-network forward pass on all N_r = 20,000 range cells

This process is then repeated linewise on each input range line of raw SAR data. The Tiny Model consists of the following layers, applied sequentially: fc1 → ssm2 → act → fc3 → ssm4 → act → fc5 → ssm6 → act → fc7 → ssm8 → act → fc9 → fc10.

Table 13: Online Processor FLOPs estimate. Operation, Expression, FLOP...
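The first step of the online processor, range compression of a single line, is ordinary frequency-domain matched filtering: FFT the line, multiply by the conjugate spectrum of the transmitted chirp replica, then inverse FFT. The sketch below shows that step on a synthetic line containing one point echo. The chirp parameters and the function name are hypothetical, and the tiny-model forward pass that follows in OSP is not reproduced.

```python
import numpy as np


def range_compress_line(line: np.ndarray, replica: np.ndarray) -> np.ndarray:
    """Matched-filter one range line: FFT, multiply by conj(replica spectrum), IFFT."""
    n = line.size
    rc_filter = np.conj(np.fft.fft(replica, n))   # zero-padded replica spectrum
    return np.fft.ifft(np.fft.fft(line) * rc_filter)


# Hypothetical linear-FM chirp sweeping the full normalised band.
chirp_len = 256
t = np.arange(chirp_len) - chirp_len / 2
replica = np.exp(1j * np.pi * t ** 2 / chirp_len)

# Synthetic raw range line (2,048 samples) with a single echo starting at sample 700.
line = np.zeros(2048, dtype=complex)
line[700:700 + chirp_len] = replica
compressed = range_compress_line(line, replica)
print(int(np.argmax(np.abs(compressed))))
```

Run once per incoming line, this costs one forward and one inverse FFT of length N_r plus one complex multiply per range cell, independent of how many lines have already been received.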
