Multi-Fidelity Quantile Regression

Pith reviewed 2026-05-12 05:09 UTC · model grok-4.3

The pith

The high-fidelity quantile equals the low-fidelity quantile evaluated at a covariate-dependent level.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

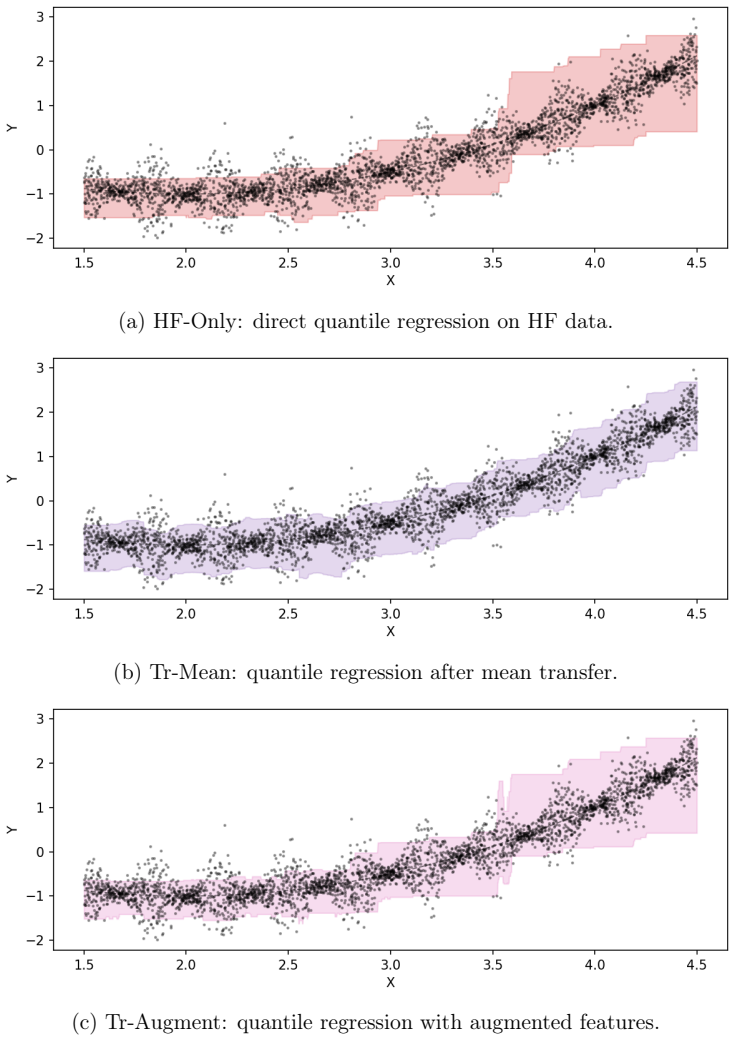

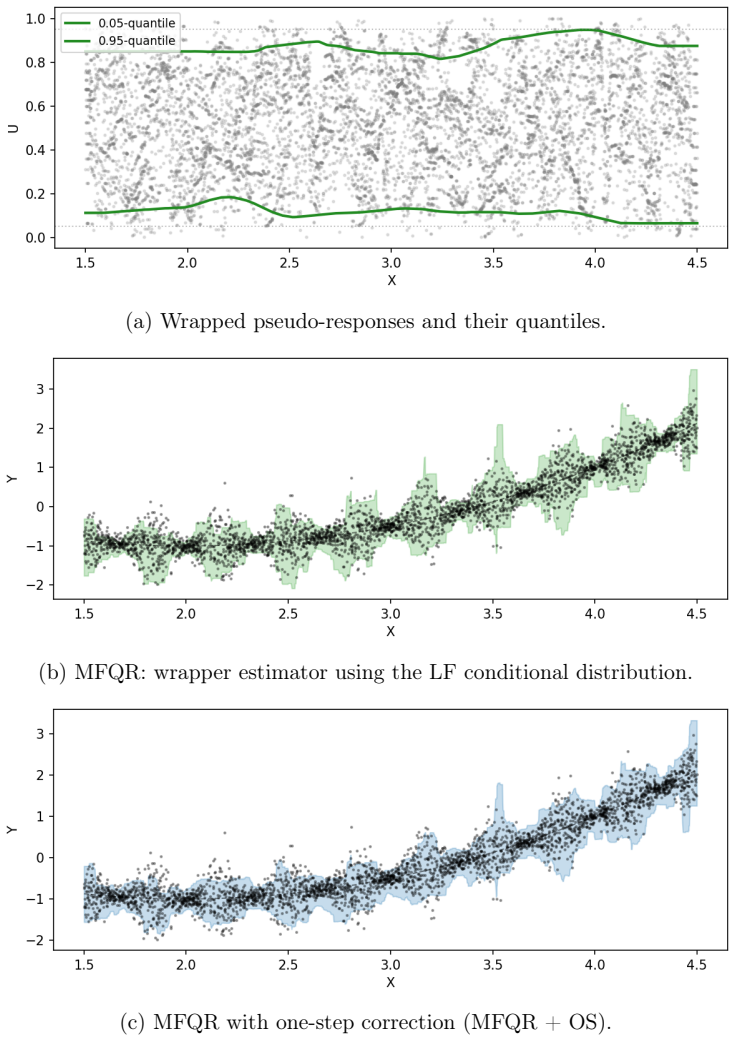

The central claim is that a local quantile link exists under which the high-fidelity conditional quantile equals the low-fidelity conditional quantile evaluated at a covariate-dependent level function. This reformulation converts multi-fidelity quantile regression into the simpler task of estimating the level function, which converges faster than the original quantile when the low- and high-fidelity conditional distributions share similar shapes; a correction step restores robustness when that similarity weakens.

What carries the argument

The local quantile link, which expresses each high-fidelity quantile as the low-fidelity quantile at a covariate-dependent level.
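As a minimal illustration of the link, consider a toy model that is an assumption of this sketch and not taken from the paper: both conditional distributions are Gaussian, and the HF distribution is a shifted and rescaled copy of the LF one. The names `lf_dist`, `hf_dist`, and `level` are hypothetical. Because the LF conditional CDF is continuous and strictly increasing here, the level can be computed as u_τ(x) = F_L(Q_H(τ|x) | x).

```python
import math
from statistics import NormalDist

# Assumed toy model (not from the paper): Gaussian conditionals where the
# HF distribution is a shifted and rescaled copy of the LF one.
def lf_dist(x):
    return NormalDist(mu=math.sin(3 * x), sigma=0.5 + x * x)

def hf_dist(x):
    lf = lf_dist(x)
    return NormalDist(mu=lf.mean + 0.2 * lf.stdev, sigma=1.1 * lf.stdev)

def level(tau, x):
    """Level u with Q_L(u | x) = Q_H(tau | x), i.e. u = F_L(Q_H(tau | x) | x)."""
    return lf_dist(x).cdf(hf_dist(x).inv_cdf(tau))

tau = 0.9
xs = [0.0, 0.25, 0.5, 0.75, 1.0]
levels = [level(tau, x) for x in xs]
hf_quantiles = [hf_dist(x).inv_cdf(tau) for x in xs]
lf_at_level = [lf_dist(x).inv_cdf(u) for x, u in zip(xs, levels)]

for x, u, q in zip(xs, levels, hf_quantiles):
    print(f"x={x:.2f}  HF quantile={q:+.3f}  level={u:.4f}")
```

In this toy case the HF quantile moves with x while the level stays constant, which is the extreme form of the "similar shapes" situation the summary describes. If the shift or scale ratio depended on x, the level would vary as well.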

If this is right

- When the level function is smoother, the estimator converges faster than standard quantile regression that uses only high-fidelity data.

- The correction step improves accuracy in regimes where distributional shapes differ more strongly.

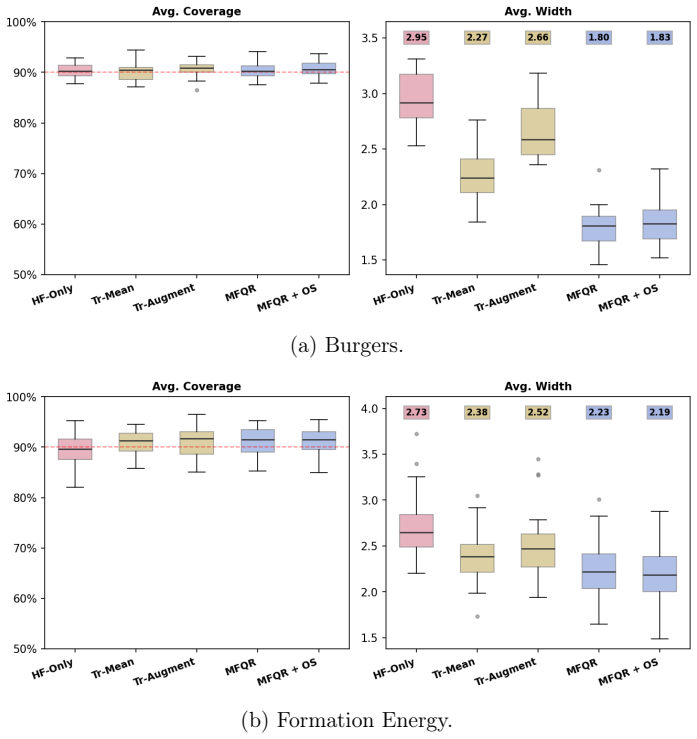

- Quantile estimates obtained this way are more accurate on both synthetic and real datasets.

- Conformal prediction intervals constructed from the estimates are tighter while preserving valid coverage.
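The page does not say how the paper builds its conformal intervals. One standard recipe that accepts any pair of lower and upper quantile estimates is split conformalized quantile regression, sketched below. The function names and the calibration data are hypothetical and chosen only for illustration.

```python
import math

def cqr_margin(lo, hi, y, alpha):
    """Split-conformal margin from calibration predictions and outcomes."""
    n = len(y)
    # Conformity score: how far each outcome falls outside [lo, hi]
    # (negative when it lies strictly inside).
    scores = sorted(max(l - v, v - h) for l, h, v in zip(lo, hi, y))
    k = math.ceil((n + 1) * (1 - alpha))  # finite-sample corrected rank
    return math.inf if k > n else scores[k - 1]

def conformalize(lo, hi, margin):
    """Widen (or shrink, if margin < 0) each interval by the margin."""
    return [(l - margin, h + margin) for l, h in zip(lo, hi)]

# Hypothetical calibration set: constant band [0, 10], outcomes 1..9.
cal_lo, cal_hi = [0.0] * 9, [10.0] * 9
cal_y = [float(v) for v in range(1, 10)]
margin = cqr_margin(cal_lo, cal_hi, cal_y, alpha=0.25)
intervals = conformalize([0.0], [10.0], margin)
```

A sharper pair of quantile estimates produces smaller conformity scores and therefore a smaller margin, which is one plausible route to tighter intervals at the same nominal coverage.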

Where Pith is reading between the lines

- This link representation could be applied to other conditional functionals such as means or tail probabilities if comparable fidelity relations hold.

- Chaining the link across a hierarchy of fidelity levels might produce cumulative savings in data collection cost.

- Performance will depend on having low-fidelity coverage over the full covariate domain, suggesting tests in settings with sparse low-fidelity observations.

- The approach is model-agnostic, so it can be paired with any base quantile estimator.

Load-bearing premise

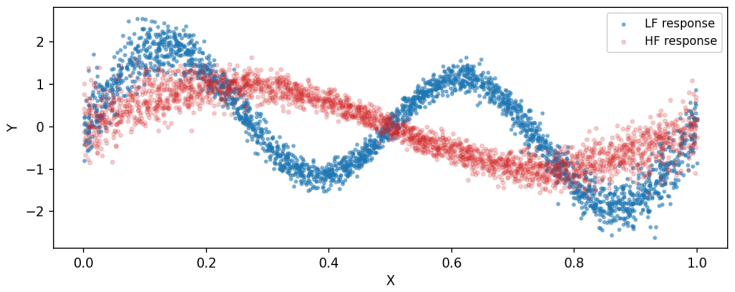

The low-fidelity and high-fidelity conditional distributions have similar shapes, so the level function varies more smoothly than the target high-fidelity quantile.

What would settle it

On synthetic data with deliberately dissimilar low- and high-fidelity conditional shapes, the multi-fidelity estimator shows no reduction in error or no faster convergence rate compared with direct high-fidelity quantile regression.

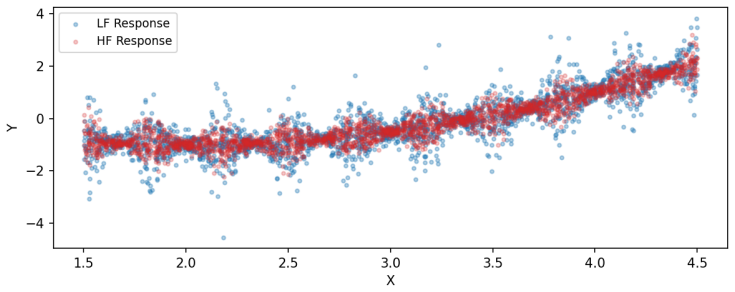

Original abstract

High-fidelity (HF) data are often expensive to collect and therefore scarce, making conditional quantiles difficult to estimate accurately. We propose a two-stage, model-agnostic method for multi-fidelity quantile regression. The central idea is a local quantile link: at each covariate value, the HF quantile is represented as a low-fidelity (LF) quantile evaluated at a covariate-dependent level. This reformulation reduces the problem to estimating the level function, which can be smoother than the HF quantile itself when the LF and HF conditional distributions have similar shapes. We also study the complementary regime in which this advantage weakens and introduce a correction step to improve robustness. Our theory characterizes when the proposed estimator converges faster than direct quantile regression using HF data alone and when the correction step provides further improvement. Experiments on synthetic and real data show that our method yields more accurate quantile estimates and tighter conformal prediction intervals.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes a two-stage, model-agnostic multi-fidelity quantile regression method. The central idea is a local quantile link reformulation in which the high-fidelity (HF) conditional quantile at covariate x is expressed as the low-fidelity (LF) quantile evaluated at a covariate-dependent level α(x). This reduces the problem to estimating the level function α(x), which is claimed to be smoother than the direct HF quantile surface when the LF and HF conditional distributions have similar shapes. The work provides theoretical results characterizing convergence rates under this setup and in complementary regimes, introduces a correction step for robustness, and reports improved accuracy and tighter conformal prediction intervals on synthetic and real data.
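The two-stage idea can be sketched in a deliberately simplified form. Everything below is an assumption made for illustration and is not the authors' estimator: the LF conditional law is treated as known (standing in for a stage-one fit on plentiful LF data), the HF data come from a Gaussian toy model, and the level is modelled as constant in x. The sketch relies on the fact that, when the LF conditional CDF is continuous and strictly increasing, u_τ(x) is the conditional τ-quantile of V = F_L(Y_H | X) given X = x.

```python
import math
import random
from statistics import NormalDist

# Assumed toy setup (not the paper's experiments or estimator).
def lf_dist(x):
    # Stage-one stand-in: the LF conditional law is treated as already
    # learned from plentiful LF data; here it is known in closed form.
    return NormalDist(mu=math.sin(3 * x), sigma=0.5 + x * x)

def sample_hf(x, rng):
    # HF outcome: shifted and rescaled copy of the LF law at the same x.
    lf = lf_dist(x)
    return rng.gauss(lf.mean + 0.2 * lf.stdev, 1.1 * lf.stdev)

def fit_constant_level(xs, ys, tau):
    # Stage two: u_tau is the tau-quantile of V = F_L(Y_H | X), so with a
    # constant level it can be read off the sorted PIT values.
    pit = sorted(lf_dist(x).cdf(y) for x, y in zip(xs, ys))
    k = max(1, math.ceil(tau * len(pit)))
    return pit[k - 1]

rng = random.Random(0)
tau = 0.9
xs = [rng.random() for _ in range(2000)]
ys = [sample_hf(x, rng) for x in xs]
u_hat = fit_constant_level(xs, ys, tau)

def hf_quantile_hat(x):
    # Plug the estimated level back into the LF quantile function.
    return lf_dist(x).inv_cdf(u_hat)

# Closed-form level for this toy model, used only as a sanity check.
u_true = NormalDist().cdf(0.2 + 1.1 * NormalDist().inv_cdf(tau))
```

With a covariate-dependent level, the constant fit would be replaced by a quantile regression of V on x. The point of the sketch is only that the stage-two target is a single number (or a smooth function), while the HF quantile surface itself is recovered through the LF quantile function.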

Significance. If the similarity assumption holds with sufficient strength, the method offers a practical way to improve quantile estimation accuracy when HF data are scarce but LF data are plentiful. The model-agnostic two-stage structure and the correction step for robustness are clear strengths. The potential for tighter conformal intervals adds applied value. The absence of quantitative conditions on the similarity regime, however, limits the strength of the theoretical guarantees.

major comments (1)

- [Theory section] The theory section characterizing faster convergence: the claimed rate improvement requires that α(x) be smoother than the HF quantile surface, which occurs 'when the LF and HF conditional distributions have similar shapes.' No explicit quantitative bound is supplied on the deviation between the conditional distributions (e.g., a bound on sup_x |F_HF(x,·) - F_LF(x,·)| or on the difference in conditional densities) that would guarantee the smoothness ordering or the rate gain. Without such a condition the advantage is not assured even inside the regime the method targets.
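The first deviation measure named in this comment can be approximated numerically once both conditional CDFs are available. The sketch below is an assumption-laden illustration: the helper `sup_cdf_gap` and the two Gaussian CDFs are hypothetical, and a finite grid yields a lower bound on the true supremum.

```python
from statistics import NormalDist

def sup_cdf_gap(cdf_hf, cdf_lf, xs, ys):
    """Grid approximation of sup over x and y of |F_HF(x, y) - F_LF(x, y)|."""
    return max(abs(cdf_hf(x, y) - cdf_lf(x, y)) for x in xs for y in ys)

# Hypothetical conditional CDFs: unit-variance Gaussians whose means
# differ by a constant 0.5 at every covariate value.
def cdf_lf(x, y):
    return NormalDist(mu=x, sigma=1.0).cdf(y)

def cdf_hf(x, y):
    return NormalDist(mu=x + 0.5, sigma=1.0).cdf(y)

xs = [i / 10 for i in range(11)]           # covariate grid on [0, 1]
ys = [-5 + i / 100 for i in range(1101)]   # outcome grid on [-5, 6]
delta = sup_cdf_gap(cdf_hf, cdf_lf, xs, ys)
print(f"approximate sup-norm gap: {delta:.4f}")
```

For this pair the exact value is 2Φ(0.25) − 1, attained midway between the two means, so the grid result can be checked against a closed form.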

minor comments (2)

- [Experiments] The experimental section would benefit from explicit reporting of the HF and LF sample sizes used in each synthetic example and from a quantitative metric (or diagnostic plot) confirming that the 'similar shapes' condition holds in the cases where improvement is observed.

- [Introduction / Methods] The definition of the level function α(x) and its estimation procedure should be stated more explicitly in the introduction or early methods section to improve accessibility for readers new to the reformulation.

Simulated Author's Rebuttal

We thank the referee for the careful reading of our manuscript and the constructive feedback. We address the major comment below and have revised the manuscript to incorporate a quantitative condition strengthening the theoretical guarantees.

-

Referee: [Theory section] The theory section characterizing faster convergence: the claimed rate improvement requires that α(x) be smoother than the HF quantile surface, which occurs 'when the LF and HF conditional distributions have similar shapes.' No explicit quantitative bound is supplied on the deviation between the conditional distributions (e.g., a bound on sup_x |F_HF(x,·) - F_LF(x,·)| or on the difference in conditional densities) that would guarantee the smoothness ordering or the rate gain. Without such a condition the advantage is not assured even inside the regime the method targets.

Authors: We appreciate the referee's observation. The main theoretical results are formulated directly in terms of the relative smoothness of the level function α(·) and the HF quantile surface, with the similarity of conditional distribution shapes provided as motivation for when the rate improvement is expected. We agree, however, that an explicit quantitative link between the deviation of the conditional distributions and the smoothness ordering would make the conditions more precise. In the revised version we have added a lemma (now Lemma 3.3) that supplies such a bound: under the assumption that the conditional densities are bounded away from zero and Lipschitz continuous, if sup_x ||F_HF(x,·) − F_LF(x,·)||_∞ ≤ δ, then the Hölder exponent of α exceeds that of the HF quantile by an amount controlled by δ. This yields an explicit regime (δ sufficiently small relative to the sample sizes) in which the faster convergence rate is guaranteed. We have also expanded the discussion of the complementary regime to clarify when the advantage does not hold.

Revision: yes

Circularity Check

No circularity: reformulation is an independent modeling step with separate estimation

The paper's central step is a modeling reformulation that represents the HF quantile as an LF quantile evaluated at a covariate-dependent level α(x), reducing the task to estimating this level function. This is presented as a choice that can yield smoother targets under the assumption of similar conditional distribution shapes, followed by a two-stage estimation procedure and theoretical characterization of rates. No equations reduce a claimed prediction or result back to fitted inputs by construction, no self-citations are load-bearing for the core claim, and the level-function estimation is treated as a distinct, model-agnostic step rather than a tautology. The derivation chain remains self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: LF and HF conditional distributions have similar shapes, making the level function smoother than the HF quantile.

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, washburn_uniqueness_aczel (unclear). Assumption 1 (Local Quantile Link). For a fixed target level τ and any covariate value x, the HF and LF quantile functions satisfy Q_H(τ|x) = Q_L(u_τ(x)|x) for some covariate-dependent level function u_τ(x) ∈ (0,1).

- IndisputableMonolith/Foundation/AlphaCoordinateFixation.lean, J_uniquely_calibrated_via_higher_derivative (unclear). Proposition 2. Under Assumptions 3–4, q_τ ∈ H(min{β_μ, β_σ}, C_q), r_τ ∈ H(β_σ, …), u_τ ∈ H(β_ρ, C_τ C_ρ).

