A PAC-Bayes Approach for Controlling Unknown Linear Discrete-time Systems

Pith reviewed 2026-05-12 05:12 UTC · model grok-4.3

The pith

A PAC-Bayes bound gives high-probability performance guarantees for any stochastic controller learned on unknown linear discrete-time systems.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We present a PAC-Bayes framework for learning controllers for unknown stochastic linear discrete-time systems, where the system parameters are drawn from a fixed but unknown distribution. We derive a data-dependent high probability bound on the performance of any learned (stochastic) controller that holds for unbounded quadratic cost. We also propose novel efficient learning algorithms with theoretical guarantees that can be implemented for both finite and infinite controller spaces. In the special case where LQG is optimal, the learned controllers achieve comparable performance to LQG.

What carries the argument

A data-dependent PAC-Bayes generalization bound that upper-bounds the expected quadratic cost of a stochastic controller using its empirical cost on sampled systems plus a complexity penalty based on divergence from a prior.
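
For orientation, PAC-Bayes bounds for unbounded losses often take the generic shape below. This is a schematic under assumed notation, not the paper's theorem: $J(K)$ stands for the expected cost of controller $K$, $\hat J_n(K)$ for its empirical cost on a sample $S$ of $n$ systems, $P$ for a data-independent prior, $Q$ for the learned posterior, and $\lambda > 0$ for a constant fixed before seeing data. The paper's constants and moment term may differ.

```latex
% Schematic only: holds with probability at least 1 - \delta over S,
% simultaneously for all posteriors Q (assumed notation, see lead-in).
\[
\mathbb{E}_{K \sim Q}\big[J(K)\big]
\;\le\;
\mathbb{E}_{K \sim Q}\big[\hat J_n(K)\big]
+ \frac{1}{\lambda}\Big( \mathrm{KL}(Q \,\|\, P) + \ln\frac{1}{\delta} + \Psi_P(\lambda, n) \Big),
\qquad
\Psi_P(\lambda, n) = \ln \mathbb{E}_{K \sim P}\,\mathbb{E}_{S}
\Big[ e^{\lambda\,(J(K) - \hat J_n(K))} \Big].
\]
```

For a bounded cost the term $\Psi_P$ is controlled by Hoeffding-type arguments. For an unbounded quadratic cost it has to be bounded through moment or tail conditions, which is where the unbounded-cost claim carries its weight.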

If this is right

- Any controller obtained by minimizing the bound receives a high-probability performance certificate without knowledge of the true parameter distribution.

- The same bound and optimization procedure apply directly to both finite and infinite controller parameter spaces.

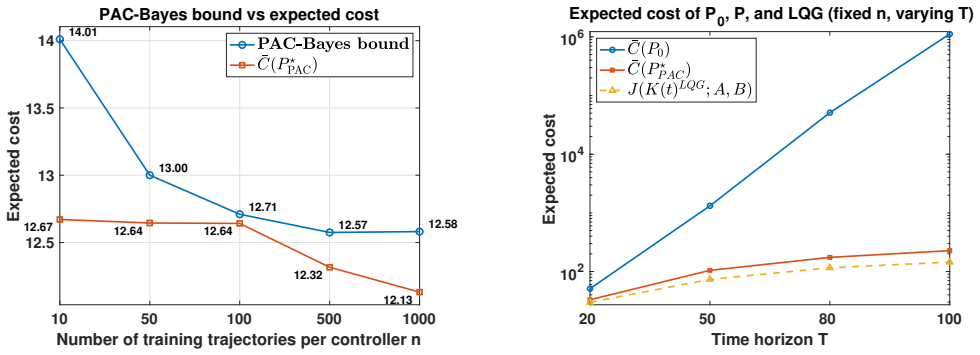

- When the true optimum is linear-quadratic-Gaussian, the learned controllers reach performance levels comparable to the optimum in numerical experiments.

- The bound remains valid for unbounded quadratic costs, removing a restriction present in earlier results.
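
For a finite controller space, minimizing a bound of the form "empirical cost plus KL penalty divided by λ" has a well-known closed-form minimizer, the Gibbs posterior. The sketch below illustrates that general fact only; the function names, the candidate costs and the value of `lam` are hypothetical, and the paper's own algorithms may differ.

```python
import numpy as np

def gibbs_posterior(prior, emp_costs, lam):
    """Return Q with Q_i proportional to P_i * exp(-lam * cost_i).

    Assumes a strictly positive prior over a finite set of controllers.
    """
    prior = np.asarray(prior, dtype=float)
    emp_costs = np.asarray(emp_costs, dtype=float)
    # Work in log space and subtract the maximum for numerical stability.
    log_w = np.log(prior) - lam * emp_costs
    log_w -= log_w.max()
    w = np.exp(log_w)
    return w / w.sum()

def kl_divergence(q, p):
    """KL(q || p) for discrete distributions, treating 0 * log 0 as 0."""
    q = np.asarray(q, dtype=float)
    p = np.asarray(p, dtype=float)
    mask = q > 0
    return float(np.sum(q[mask] * np.log(q[mask] / p[mask])))

def bound_objective(q, prior, emp_costs, lam):
    """Empirical cost under q plus the KL complexity penalty divided by lam."""
    return float(np.dot(q, emp_costs)) + kl_divergence(q, prior) / lam

# Hypothetical example: four candidate controllers with made-up empirical costs.
prior = np.full(4, 0.25)
emp_costs = np.array([3.0, 1.2, 1.5, 4.0])
lam = 2.0
posterior = gibbs_posterior(prior, emp_costs, lam)
```

The posterior concentrates on low-cost controllers as `lam` grows and stays close to the prior as `lam` shrinks, which mirrors the trade-off between empirical cost and complexity in the bound.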

Where Pith is reading between the lines

- The approach could support certification of controllers for repeated deployments where each instance draws fresh parameters from the same distribution.

- Analogous data-dependent bounds might be developed for nonlinear dynamics or for costs that penalize control effort differently.

- Allowing the prior or the posterior to adapt when the parameter distribution drifts would remove a practical limitation of the current guarantees.

Load-bearing premise

System parameters are independently sampled each time from one fixed unknown distribution, and the controller is allowed to be stochastic.

What would settle it

Train a controller using the proposed method on samples from one distribution, then draw many fresh independent samples from the same distribution and check whether the fraction of trajectories whose cost exceeds the bound is larger than the claimed failure probability.
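
A check of this kind can be sketched on a toy problem. Everything below is an assumption for illustration: a scalar system x_{t+1} = a x_t + b u_t + w_t, a static gain u = -k x, a uniform distribution over `a`, and the helper names. Since the certificate concerns expected cost, the natural comparison is between the bound and a fresh-sample estimate of that expectation, repeated over many independent training runs.

```python
import numpy as np

def rollout_cost(a, b, k, horizon, rng, q=1.0, r=1.0, noise_std=0.1):
    """Average quadratic cost of u = -k x on one sampled scalar system."""
    x = 1.0
    cost = 0.0
    for _ in range(horizon):
        u = -k * x
        cost += q * x**2 + r * u**2
        x = a * x + b * u + noise_std * rng.standard_normal()
    return cost / horizon

def fresh_sample_costs(k, n_systems, horizon, rng):
    """Costs of gain k on fresh systems; the uniform law on a is an assumption."""
    a_draws = rng.uniform(0.8, 1.2, size=n_systems)
    return np.array([rollout_cost(a, 1.0, k, horizon, rng) for a in a_draws])

def violation_rate(bound_values, estimated_costs):
    """Fraction of repetitions in which the estimated cost exceeds the bound."""
    bound_values = np.asarray(bound_values, dtype=float)
    estimated_costs = np.asarray(estimated_costs, dtype=float)
    return float(np.mean(estimated_costs > bound_values))

rng = np.random.default_rng(0)
costs = fresh_sample_costs(k=0.7, n_systems=50, horizon=100, rng=rng)
estimated_expected_cost = float(costs.mean())
```

In a full experiment, each repetition would retrain the controller, recompute its bound, and estimate its expected cost on fresh draws; `violation_rate` over those repetitions is then compared with the failure probability δ.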

Original abstract

This paper presents a PAC-Bayes framework for learning controllers for unknown stochastic linear discrete-time systems, where the system parameters are drawn from a fixed but unknown distribution. We derive a data-dependent high probability bound on the performance of any learned (stochastic) controller, and propose novel efficient learning algorithms with theoretical guarantees, which can be implemented for both finite and infinite controller spaces. Compared to prior work, our bound holds for unbounded quadratic cost. In the special case where LQG is optimal, our numerical results suggest that the learned controllers achieve comparable performance to LQG.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript develops a PAC-Bayes framework for unknown linear discrete-time systems whose parameters are drawn from a fixed but unknown distribution. It derives a data-dependent high-probability bound on the expected quadratic cost of any learned stochastic controller and proposes efficient algorithms with guarantees that apply to both finite and infinite controller spaces. The central novelty is that the bound is stated to hold for unbounded quadratic costs, with numerical results suggesting performance comparable to LQG when LQG is optimal.

Significance. If the bound is rigorously established, the work would meaningfully extend PAC-Bayes methods to control problems with unbounded losses, a setting that arises naturally with quadratic costs. The data-dependent character of the bound and the algorithms for infinite controller spaces are concrete strengths that could support safer learning-based control under parametric uncertainty.

major comments (2)

- [Main PAC-Bayes bound and its proof] The derivation of the PAC-Bayes bound for unbounded quadratic costs (main theorem and its proof) does not explicitly verify or impose conditions ensuring finite exponential moments of the loss. For linear systems the quadratic cost is finite almost surely only when the closed-loop matrix is stable for almost every parameter draw; if the unknown distribution over parameters places positive mass on unstable or marginally stable poles, the expectation can be infinite and the concentration inequality fails to apply. The manuscript must either restrict the prior/posterior to stabilizing controllers or state the required integrability conditions on the parameter distribution.

- [Learning algorithms for infinite spaces] The learning algorithms for infinite controller spaces (Section on algorithms and optimization) are presented as efficient with theoretical guarantees, yet it is unclear how the posterior optimization automatically excludes controllers that produce infinite expected cost. Without such a mechanism the data-dependent bound cannot be evaluated or optimized in practice when the parameter distribution has unstable support.
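
The stability condition behind both comments can be made concrete. For a discrete-time system with state feedback u = -K x, the closed loop A - B K is stable exactly when its spectral radius is below one. The sketch below assumes state feedback and hypothetical helper names; the paper's controller class (for instance output feedback, as in LQG) may be different.

```python
import numpy as np

def spectral_radius(M):
    """Largest eigenvalue magnitude of a square matrix."""
    return float(np.max(np.abs(np.linalg.eigvals(np.atleast_2d(M)))))

def is_stabilizing(A, B, K, margin=0.0):
    """True if A - B K has spectral radius below 1 - margin (u = -K x assumed)."""
    A_cl = np.atleast_2d(A) - np.atleast_2d(B) @ np.atleast_2d(K)
    return spectral_radius(A_cl) < 1.0 - margin

def fraction_stabilized(systems, K):
    """Share of sampled (A, B) pairs that the gain K stabilizes."""
    return float(np.mean([is_stabilizing(A, B, K) for A, B in systems]))

# Hypothetical double-integrator-like example.
A = np.array([[1.0, 1.0], [0.0, 1.0]])
B = np.array([[0.0], [1.0]])
K = np.array([[0.5, 1.0]])
stable = is_stabilizing(A, B, K)
```

A value of `fraction_stabilized` below one on sampled systems signals that the parameter distribution may put positive mass on systems the controller does not stabilize, which is the situation the referee warns about.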

minor comments (2)

- [Abstract and Introduction] The abstract and introduction would benefit from a concise statement of the precise assumptions (e.g., stabilizability, moment conditions) under which the unbounded-cost claim holds.

- [Numerical experiments] Numerical results should report statistics over multiple independent trials (mean and standard deviation of the achieved cost) rather than single-run comparisons to LQG.

Simulated Author's Rebuttal

We are grateful to the referee for the detailed and insightful comments. Below we provide point-by-point responses to the major comments and indicate the revisions we will make to address them.

- Referee: [Main PAC-Bayes bound and its proof] The derivation of the PAC-Bayes bound for unbounded quadratic costs (main theorem and its proof) does not explicitly verify or impose conditions ensuring finite exponential moments of the loss. For linear systems the quadratic cost is finite almost surely only when the closed-loop matrix is stable for almost every parameter draw; if the unknown distribution over parameters places positive mass on unstable or marginally stable poles, the expectation can be infinite and the concentration inequality fails to apply. The manuscript must either restrict the prior/posterior to stabilizing controllers or state the required integrability conditions on the parameter distribution.

  Authors: We thank the referee for pointing this out. The PAC-Bayes bound in the main theorem is derived under the assumption that the expected loss is finite, which requires the closed-loop system to be stable almost surely with respect to the parameter distribution. While the manuscript focuses on stabilizing controllers and the numerical examples use stable systems, we agree that this condition should be stated explicitly. In the revised manuscript, we will add a paragraph in the relevant section clarifying that the prior and posterior distributions are supported only on controllers that stabilize the system for almost all parameter realizations, ensuring the finite exponential moments required for the concentration inequality. This addresses the concern without restricting the generality of the framework, as unstable controllers would yield infinite cost anyway.

  Revision: yes

- Referee: [Learning algorithms for infinite spaces] The learning algorithms for infinite controller spaces (Section on algorithms and optimization) are presented as efficient with theoretical guarantees, yet it is unclear how the posterior optimization automatically excludes controllers that produce infinite expected cost. Without such a mechanism the data-dependent bound cannot be evaluated or optimized in practice when the parameter distribution has unstable support.

  Authors: We appreciate this observation. The algorithms optimize the posterior over a parameterized family of controllers where stability is enforced through the choice of parameterization. However, to make this explicit and ensure the bound can be evaluated, in the revised version we will include a detailed description of how the optimization procedure restricts to stabilizing controllers, for example by using a reparameterization that guarantees closed-loop stability or by incorporating stability constraints in the optimization.

  Revision: yes
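
One way such a reparameterization could look, in the simplest possible case, is sketched below. The setting is an assumption and not taken from the paper: a scalar system x_{t+1} = a x_t + b u_t with known b > 0, a parameter `a` known to lie in an interval [a_lo, a_hi], and a static gain u = -k x. A gain stabilizes every system in the interval exactly when (a_hi - 1)/b < k < (a_lo + 1)/b, so an unconstrained variable `theta` can be squashed into that range.

```python
import math

def stabilizing_gain(theta, a_lo, a_hi, b):
    """Map an unconstrained theta to a gain k with |a - b k| < 1 on [a_lo, a_hi].

    Hypothetical helper: assumes a scalar system, known b > 0 and a known
    bounded interval for a.
    """
    if b <= 0:
        raise ValueError("this sketch assumes b > 0")
    k_min = (a_hi - 1.0) / b
    k_max = (a_lo + 1.0) / b
    if k_min >= k_max:
        raise ValueError("no single gain stabilizes the whole interval")
    # Clip theta so the sigmoid stays strictly inside (0, 1) in floating point.
    theta = max(-20.0, min(20.0, theta))
    s = 1.0 / (1.0 + math.exp(-theta))
    return k_min + s * (k_max - k_min)

# Every theta yields a gain that stabilizes all a in the assumed interval.
gains = [stabilizing_gain(t, a_lo=0.8, a_hi=1.2, b=1.0) for t in (-5.0, 0.0, 5.0)]
```

Optimizing over `theta` instead of `k` keeps every candidate inside the stabilizing set, so the cost stays finite throughout the search. With unbounded parameter support no such interval exists, and a moment condition on the parameter distribution would be needed instead.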

Circularity Check

No circularity: PAC-Bayes bound derived from concentration inequalities without reduction to inputs or self-citations

The derivation applies standard PAC-Bayes concentration to the expected quadratic cost under a fixed unknown parameter distribution, yielding a data-dependent high-probability bound that holds for unbounded losses via explicit moment or stability conditions stated in the paper. No equation reduces the bound to a fitted quantity by construction, no load-bearing step relies on a self-citation whose content is unverified, and the algorithms optimize the derived bound rather than presupposing its form. The central claim remains a non-tautological generalization guarantee independent of the specific controller parameterization chosen by the user.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption: System is linear discrete-time with parameters drawn i.i.d. from a fixed unknown distribution

- domain assumption: Quadratic cost may be unbounded

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean: washburn_uniqueness_aczel (unclear)

Relation between the paper passage and the cited Recognition theorem: unclear.

We derive a data-dependent high probability bound on the performance of any learned (stochastic) controller... our bound holds for unbounded quadratic cost.

What do these tags mean?

- matches: The paper's claim is directly supported by a theorem in the formal canon.

- supports: The theorem supports part of the paper's argument, but the paper may add assumptions or extra steps.

- extends: The paper goes beyond the formal theorem; the theorem is a base layer rather than the whole result.

- uses: The paper appears to rely on the theorem as machinery.

- contradicts: The paper's claim conflicts with a theorem or certificate in the canon.

- unclear: Pith found a possible connection, but the passage is too broad, indirect, or ambiguous to say the theorem truly supports the claim.
