When Should Teachers Control AI Generation for Mathematics Visuals?

Pith reviewed 2026-05-12 04:34 UTC · model grok-4.3

The pith

Post-generation control earns higher teacher ratings for predictability and correctness when AI is used to create mathematics visuals.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

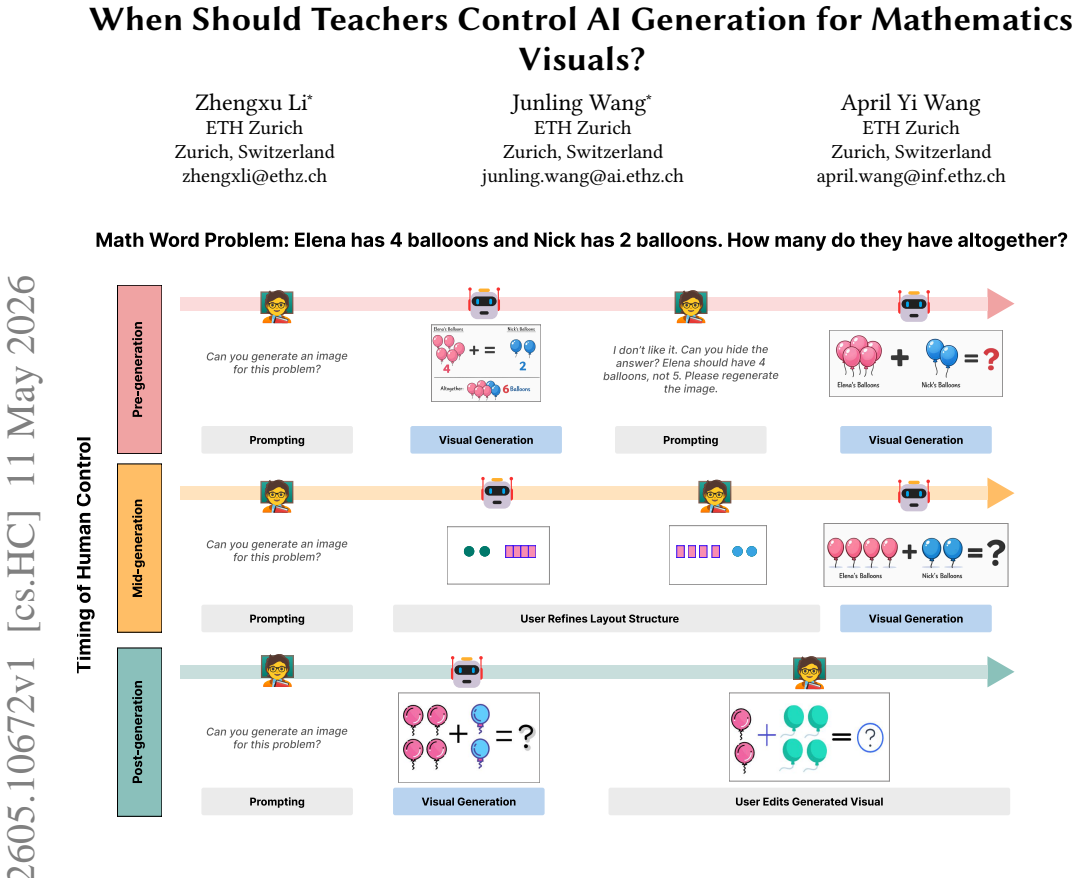

In correctness-sensitive educational tasks, post-generation control, in which teachers directly modify AI-generated visuals through object-level edits, receives higher ratings for predictability and correctness than either pre-generation control (natural-language prompts) or mid-generation control (layout confirmation). Qualitative data indicate that post-generation control preserves user agency through low-cost verification.

What carries the argument

A design space of three stages of human control in the generative AI pipeline: pre-generation (intent via prompts only), mid-generation (inspect and confirm explicit layout structure), and post-generation (object-level edits after full generation).
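The Python sketch below models the three control points as one pipeline with optional checkpoints. It is a hypothetical illustration, not the paper's system. `plan_layout` and `render` stand in for model calls, and the fraction-bar example and the `Layout`/`VisualObject` types are assumptions chosen for brevity. The stand-in planner deliberately proposes the wrong fraction, so each stage's chance to catch the mistake is visible.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class VisualObject:
    """One editable object in a rendered visual (here: one cell of a fraction bar)."""
    kind: str
    shaded: bool


@dataclass
class Layout:
    """Explicit intermediate structure a teacher can inspect before rendering."""
    parts: int
    shaded_parts: int


@dataclass
class Visual:
    objects: List[VisualObject] = field(default_factory=list)


def plan_layout(prompt: str) -> Layout:
    # Hypothetical stand-in for a model call. It ignores the prompt and always
    # proposes a 1/4 bar, so the example shows where each stage can catch the error.
    return Layout(parts=4, shaded_parts=1)


def render(layout: Layout) -> Visual:
    # Hypothetical stand-in for completing generation from a layout.
    return Visual([VisualObject("cell", i < layout.shaded_parts) for i in range(layout.parts)])


def generate(
    prompt: str,
    confirm_layout: Optional[Callable[[Layout], Layout]] = None,
    edit_visual: Optional[Callable[[Visual], Visual]] = None,
) -> Visual:
    """Pre-generation control only: pass just a prompt.
    Mid-generation control: pass confirm_layout to inspect or fix the layout before rendering.
    Post-generation control: pass edit_visual to apply object-level edits afterwards."""
    layout = plan_layout(prompt)
    if confirm_layout is not None:
        layout = confirm_layout(layout)
    visual = render(layout)
    if edit_visual is not None:
        visual = edit_visual(visual)
    return visual


def shaded_fraction(visual: Visual) -> Tuple[int, int]:
    """(shaded cells, total cells): the fraction the visual actually shows."""
    return sum(o.shaded for o in visual.objects), len(visual.objects)


def add_cell_and_shade_three(visual: Visual) -> Visual:
    # Object-level edits: add one cell, then shade the first three cells.
    visual.objects.append(VisualObject("cell", False))
    for obj in visual.objects[:3]:
        obj.shaded = True
    return visual


prompt = "A fraction bar showing 3/5"
pre = generate(prompt)
mid = generate(prompt, confirm_layout=lambda _: Layout(parts=5, shaded_parts=3))
post = generate(prompt, edit_visual=add_cell_and_shade_three)
print(shaded_fraction(pre), shaded_fraction(mid), shaded_fraction(post))
```

Running it prints `(1, 4) (3, 5) (3, 5)`. Prompting alone keeps the planner's mistake, while either the mid-generation confirmation or the post-generation object edits recover the intended 3/5. The sketch says nothing about effort or ratings, which are what the study actually measured.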

If this is right

- Generative tools for education should offer stage-dependent workflows that combine automation with direct manipulation rather than forcing one control point.

- Pre-generation prompting supports fast ideation but lowers perceived agency and predictability for accuracy-sensitive work.

- Mid-generation layout checks improve structural fit but add user effort without raising correctness ratings.

- Post-generation object edits let teachers verify and fix content directly, raising perceived predictability while keeping part of the speed advantage of automated generation.

Where Pith is reading between the lines

- Similar control-stage preferences may appear in other subjects where diagrams must match precise rules, such as science illustrations or language concept maps.

- Tool builders could test hybrid interfaces that default to post-generation editing but allow optional mid-generation checks for users who want early structure.

- Classroom studies could measure whether students learn more when visuals are created under post-generation control versus prompt-only methods.

Load-bearing premise

That ratings from 24 primary mathematics teachers on a specific set of visual tasks reflect how teachers in general ensure instructional correctness in real classrooms.

What would settle it

A follow-up test in which teachers use the three control stages to prepare actual lesson materials and independent raters check whether the final visuals contain fewer pedagogical errors under post-generation control.
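Such a follow-up would first need to show that the independent raters agree on what counts as a pedagogical error. A common statistic for two raters is Cohen's kappa, κ = (p_o − p_e) / (1 − p_e). Here p_o is the observed agreement and p_e is the chance agreement implied by each rater's code frequencies. The sketch below uses the Python standard library only and assumes binary error codes per visual. The codes are invented toy values, not study data.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two raters who each assign one code to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same non-empty list of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same code by both raters.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement implied by each rater's own code frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_chance = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / (n * n)
    if p_chance == 1.0:
        # Both raters used one identical code for every item; kappa is undefined,
        # so this sketch reports perfect agreement by convention.
        return 1.0
    return (p_observed - p_chance) / (1 - p_chance)


# Toy codes (1 = visual contains a pedagogical error), invented for illustration.
rater_1 = [1, 1, 0, 0, 1, 0, 0, 0]
rater_2 = [1, 0, 0, 0, 1, 0, 0, 1]
print(round(cohens_kappa(rater_1, rater_2), 3))
```

For the toy codes, p_o = 0.75 and p_e = 0.53125, so the script prints `0.467`. Error rates per control stage would only be compared after agreement reaches an acceptable level.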

Original abstract

Generative AI has the potential to help teachers rapidly create classroom-ready visual materials, particularly in mathematics where diagrams and visual representations must be pedagogically meaningful and instructionally correct. However, current generative tools primarily support prompting and post-hoc editing, leaving open a key question for correctness-sensitive educational authoring: when in the generation pipeline should teachers exert control? In this paper, we investigate how the timing of human control in AI-assisted generation shapes teachers' visual authoring practices in correctness-sensitive tasks. We introduce a design space of three stages of control: pre-generation control, where users specify intent solely through natural language prompts before generation; mid-generation control, where users inspect and confirm an explicit layout structure before the system completes generation; and post-generation control, where users directly modify AI-generated visuals after generation through object-level edits. In a within-subject, mixed-methods study with 24 primary mathematics teachers, post-generation control received higher ratings on predictability and correctness, while other subjective measures showed no reliable differences. Qualitative findings explain these differences by revealing workflow trade-offs: highly automated, pre-generation control supports rapid ideation but reduces perceived agency and predictability; mid-generation control improves structural alignment at the cost of additional effort; and post-generation control preserves user agency through low-cost, direct verification and correction. Together, these results suggest that in correctness-sensitive educational tasks, effective generative tools should align system behavior with teacher intent and support stage-dependent workflows that combine automation with direct manipulation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper reports a within-subject mixed-methods study with 24 primary mathematics teachers comparing three stages of human control in AI-assisted generation of mathematics visuals: pre-generation (prompt-only), mid-generation (layout confirmation), and post-generation (object-level edits). It claims that post-generation control yields higher ratings on predictability and correctness with no reliable differences on other subjective measures, supported by qualitative insights into workflow trade-offs between automation, agency, and effort, leading to recommendations for stage-dependent tool designs in correctness-sensitive educational tasks.

Significance. If the central findings hold after addressing validation gaps, the work provides concrete empirical guidance for HCI researchers and tool designers on aligning generative AI with teacher needs in mathematics education. The mixed-methods approach, explicit mapping of control stages to perceived trade-offs, and focus on a high-stakes domain (pedagogically correct diagrams) add value beyond generic prompting studies.

major comments (2)

- [Results] The claim that post-generation control improves perceived correctness (abstract and results) is load-bearing for the design recommendations, yet correctness is operationalized solely via unvalidated teacher Likert ratings with no reported objective measures such as expert-coded mathematical accuracy, error counts, inter-rater reliability on artifacts, or curriculum alignment checks. Without triangulation to actual output quality, the ratings may reflect editing workflow preference rather than instructional soundness.

- [Study Design] The abstract and study description provide no details on statistical tests, effect sizes, confidence intervals, task selection criteria, or counterbalancing procedure despite the within-subject design with n=24; this weakens confidence in the directional claims for predictability and correctness and makes it difficult to assess whether null results on other measures reflect true equivalence or underpowering.
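The second comment distinguishes true equivalence from underpowering. A confidence interval on within-participant differences between two control stages illustrates the distinction. The Python sketch makes three assumptions. It uses 24 paired ratings, hard-codes the two-sided 95% t critical value for 23 degrees of freedom (about 2.069), and applies a hypothetical equivalence bound of 1 scale point. The ratings are toy values.

```python
import math
import statistics
from typing import Sequence, Tuple

# Two-sided 95% critical value of Student's t with 23 degrees of freedom
# (n = 24 paired ratings). Change it if the number of pairs differs.
T_CRIT_95_DF23 = 2.069


def paired_ci(x: Sequence[float], y: Sequence[float],
              t_crit: float = T_CRIT_95_DF23) -> Tuple[float, float]:
    """95% confidence interval for the mean within-participant difference x - y."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need at least two paired observations")
    diffs = [a - b for a, b in zip(x, y)]
    mean = statistics.mean(diffs)
    se = statistics.stdev(diffs) / math.sqrt(len(diffs))
    return mean - t_crit * se, mean + t_crit * se


def interpret(ci: Tuple[float, float], bound: float) -> str:
    """Classify a CI against a pre-chosen equivalence bound (same units as the ratings)."""
    lo, hi = ci
    if lo > 0 or hi < 0:
        return "reliable difference"
    if -bound < lo and hi < bound:
        # A 95% CI inside the bounds is a conservative equivalence check
        # (stricter than TOST, which uses a 90% CI at alpha = .05).
        return "equivalent within bound"
    return "inconclusive"


# Toy ratings for 24 teachers under two conditions, invented for illustration.
baseline = [4.0] * 24
small_spread = [4.0 + d for d in [-1, 1] * 12]
large_spread = [4.0 + d for d in [-3, 3] * 12]
print(interpret(paired_ci(small_spread, baseline), bound=1.0))  # equivalent within bound
print(interpret(paired_ci(large_spread, baseline), bound=1.0))  # inconclusive
```

Both toy comparisons have a mean difference of zero, and both would be reported as non-significant. Only the low-variance comparison supports a claim of equivalence; the other is simply inconclusive. A formal analysis would use a two one-sided tests (TOST) procedure with a bound fixed before data collection.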

minor comments (1)

- [Abstract] The abstract could briefly note the specific types of mathematics tasks used (e.g., geometry diagrams, fraction representations) to clarify the scope of 'correctness-sensitive' content.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed feedback. The comments highlight key opportunities to strengthen the transparency of our reporting and to more carefully qualify our claims regarding perceived correctness. We address each major comment below and indicate the revisions we will make to the manuscript.

Point-by-point responses

Referee: [Results] The claim that post-generation control improves perceived correctness (abstract and results) is load-bearing for the design recommendations, yet correctness is operationalized solely via unvalidated teacher Likert ratings with no reported objective measures such as expert-coded mathematical accuracy, error counts, inter-rater reliability on artifacts, or curriculum alignment checks. Without triangulation to actual output quality, the ratings may reflect editing workflow preference rather than instructional soundness.

Authors: We agree that the study relies exclusively on teachers' subjective Likert ratings of correctness and does not include objective validation such as expert-coded accuracy or error counts. This is a genuine limitation: the higher ratings for post-generation control could partly reflect satisfaction with the direct-editing workflow rather than superior instructional quality of the final artifacts. In the revised manuscript we will (1) explicitly state this limitation in a dedicated Limitations subsection, (2) qualify the abstract and results claims to emphasize that the findings concern perceived rather than objectively verified correctness, and (3) add a forward-looking paragraph recommending future studies that triangulate teacher ratings with expert review or automated curriculum-alignment checks. We retain the perceptual results as valuable for HCI tool design but will not overstate their implications for pedagogical soundness.

Revision: partial.
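One way to carry out the proposed triangulation is to correlate teachers' perceived-correctness ratings with expert-coded error counts for the same artifacts. If perceptions track output quality, the rank correlation should be clearly negative. The Python sketch below implements Spearman's rho with average ranks for ties. The layout of one rating and one error count per artifact is an assumption, and the numbers are toy values, not data from the paper.

```python
import statistics
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1  # mean of 1-based positions start+1 .. end+1
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared
        start = end + 1
    return ranks


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rank correlation: the Pearson correlation of the average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need at least two paired observations")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = statistics.mean(rx), statistics.mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    if sx == 0 or sy == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / (sx * sy)


# Toy data, invented for illustration: one teacher rating and one expert error count per artifact.
teacher_correctness = [5, 4, 4, 3, 2]
expert_error_count = [0, 1, 0, 2, 3]
print(round(spearman_rho(teacher_correctness, expert_error_count), 3))
```

For the toy data the script prints `-0.921`. A weak or positive correlation in real data would support the referee's concern that the ratings reflect workflow preference rather than output quality.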

Referee: [Study Design] The abstract and study description provide no details on statistical tests, effect sizes, confidence intervals, task selection criteria, or counterbalancing procedure despite the within-subject design with n=24; this weakens confidence in the directional claims for predictability and correctness and makes it difficult to assess whether null results on other measures reflect true equivalence or underpowering.

Authors: We acknowledge that the abstract and the high-level study overview omit these methodological specifics. The full Methods section does describe the within-subjects design, a Latin-square counterbalancing procedure to control order effects, task selection criteria (standard primary-school topics in fractions, geometry, and measurement drawn from the national curriculum), and the statistical approach (repeated-measures ANOVA with Greenhouse-Geisser correction, partial eta-squared effect sizes, and 95% confidence intervals). To address the referee's concern, we will expand the abstract to include the key statistical tests, effect sizes, and sample size, and we will insert a concise “Study Design and Analysis” paragraph immediately after the participant description that explicitly lists task criteria, counterbalancing, power considerations, and the handling of null results. These additions will improve transparency without altering the underlying data or conclusions.

Revision: yes.
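The authors name a one-way repeated-measures ANOVA with a Greenhouse-Geisser correction and partial eta-squared. That analysis can be sketched from first principles for a participants × conditions matrix of ratings. This is an illustrative standard-library Python implementation, not the authors' analysis code. It computes three quantities:

- the F statistic;
- partial η² = SS_cond / (SS_cond + SS_error);
- ε = tr(C)² / ((k − 1) · tr(C²)), where C is the double-centred covariance matrix of the k conditions.

P-values are omitted because they need an F-distribution CDF from a statistics library. The ratings are toy values.

```python
import statistics
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class RMAnovaResult:
    f_stat: float
    df_cond: int
    df_err: int
    partial_eta_sq: float
    gg_epsilon: float

    def gg_df(self) -> Tuple[float, float]:
        """Greenhouse-Geisser corrected (numerator, denominator) degrees of freedom."""
        return self.gg_epsilon * self.df_cond, self.gg_epsilon * self.df_err


def rm_anova(data: Sequence[Sequence[float]]) -> RMAnovaResult:
    """One-way repeated-measures ANOVA on data[participant][condition]."""
    if len(data) < 2 or len(data[0]) < 2 or any(len(row) != len(data[0]) for row in data):
        raise ValueError("need a rectangular matrix with >= 2 participants and >= 2 conditions")
    n, k = len(data), len(data[0])
    grand = statistics.mean(v for row in data for v in row)
    cond_means = [statistics.mean(row[j] for row in data) for j in range(k)]
    subj_means = [statistics.mean(row) for row in data]

    # Partition the total sum of squares; the residual is the condition-by-participant interaction.
    ss_total = sum((v - grand) ** 2 for row in data for v in row)
    ss_cond = n * sum((m - grand) ** 2 for m in cond_means)
    ss_subj = k * sum((m - grand) ** 2 for m in subj_means)
    ss_err = ss_total - ss_cond - ss_subj
    if ss_err <= 1e-12:
        raise ValueError("no residual variance, so F is undefined")

    df_cond, df_err = k - 1, (n - 1) * (k - 1)
    f_stat = (ss_cond / df_cond) / (ss_err / df_err)
    partial_eta_sq = ss_cond / (ss_cond + ss_err)

    # Greenhouse-Geisser epsilon from the double-centred k x k covariance matrix C.
    cov = [[sum((row[a] - cond_means[a]) * (row[b] - cond_means[b]) for row in data) / (n - 1)
            for b in range(k)] for a in range(k)]
    row_means = [statistics.mean(r) for r in cov]  # equal to column means: cov is symmetric
    cov_mean = statistics.mean(row_means)
    centred = [[cov[a][b] - row_means[a] - row_means[b] + cov_mean for b in range(k)]
               for a in range(k)]
    trace = sum(centred[a][a] for a in range(k))
    trace_sq = sum(x * x for r in centred for x in r)  # equals tr(C @ C) because C is symmetric
    gg_epsilon = trace ** 2 / ((k - 1) * trace_sq)

    return RMAnovaResult(f_stat, df_cond, df_err, partial_eta_sq, gg_epsilon)


# Toy 4-participant x 3-condition ratings, invented for illustration.
ratings = [[1, 2, 3], [2, 3, 5], [3, 3, 4], [2, 4, 6]]
result = rm_anova(ratings)
print(round(result.f_stat, 2), round(result.partial_eta_sq, 3), round(result.gg_epsilon, 3))
```

For the toy matrix the script prints `14.25 0.826 0.552`. With the study's 24 participants and 3 conditions, the uncorrected degrees of freedom would be 2 and 46. The Greenhouse-Geisser correction multiplies both by ε, which lies between 1/(k − 1) and 1.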

Circularity Check

No circularity: direct empirical user study with no derivations or self-referential predictions

Full rationale

The paper describes a within-subject mixed-methods study with 24 primary mathematics teachers, collecting subjective Likert ratings and qualitative feedback on three control stages in AI-assisted visual generation. No equations, fitted parameters, model predictions, or derivation chains appear in the abstract or described methods. Central claims rest on direct data collection rather than any reduction to inputs by construction, self-citation load-bearing, or ansatz smuggling. Methodological concerns such as lack of objective validation for correctness ratings fall under study validity, not circularity per the analysis rules.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

- [1] Mahmoud Mohammad Sayed Abdallah. 2011. Web-based new literacies and EFL curriculum design in teacher education: A design study for expanding EFL student teachers’ language-related literacy practices in an Egyptian pre-service teacher education programme. University of Exeter (United Kingdom).

- [2] Safinah Ali, Prerna Ravi, Katherine Moore, Hal Abelson, and Cynthia Breazeal. A picture is worth a thousand words: Co-designing text-to-image generation learning materials for K-12 with educators. In Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 38. 23260–23267.

- [4] James E Allen, Curry I Guinn, and Eric Horvitz. 1999. Mixed-initiative interaction. IEEE Intelligent Systems and their Applications 14, 5 (1999), 14–23.

- [5] Shm Garanganao Almeda, JD Zamfirescu-Pereira, Kyu Won Kim, Pradeep Mani Rathnam, and Bjoern Hartmann. 2024. Prompting for discovery: Flexible sense-making for ai art-making with dreamsheets. In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems. 1–17.

- [6] Manal A Almuhanna. 2025. Teachers’ perspectives of integrating AI-powered technologies in K-12 education for creating customized learning materials and resources. Education and Information Technologies 30, 8 (2025), 10343–10371.

- [7] Saleema Amershi, Daniel Weld, Mihaela Vorvoreanu, Adam Fourney, Besmira Nushi, Penny Collisson, Jina Suh, Shamsi Iqbal, Paul N Bennett, Kori Inkpen, et al. 2019. Guidelines for human-AI interaction. In Proceedings of the 2019 CHI conference on human factors in computing systems. 1–13. doi:...

- [8] Yehudit Aperstein, Yuval Cohen, and Alexander Apartsin. 2025. Generative ai-based platform for deliberate teaching practice: A review and a suggested framework. Education Sciences 15, 4 (2025), 405.

- [9] Yuliya Ardasheva, Zhe Wang, Anna Karin Roo, Olusola O Adesope, and Judith A Morrison. 2018. Representation visuals’ impacts on science interest and reading comprehension of adolescent English learners. The Journal of Educational Research 111, 5 (2018), 631–643.

- [10] Eliza Bobek and Barbara Tversky. 2016. Creating visual explanations improves learning. Cognitive Research: Principles and Implications 1 (12 2016). doi:10.1186/s41235-016-0031-6

- [11] Rishi Bommasani, Drew A Hudson, Ehsan Adeli, Russ Altman, Simran Arora, Sydney von Arx, Michael S Bernstein, Jeannette Bohg, Antoine Bosselut, Emma Brunskill, et al. 2021. On the opportunities and risks of foundation models. arXiv preprint arXiv:2108.07258 (2021).

- [12] John Brooke et al. 1996. SUS: A quick and dirty usability scale. Usability evaluation in industry 189, 194 (1996), 4–7.

- [14] Monjoy Narayan Choudhury, Junling Wang, Yifan Hou, and Mrinmaya Sachan. Can Vision-Language Models Solve Visual Math Equations? In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, Christos Christodoulopoulos, Tanmoy Chakraborty, Carolyn Rose, and Violet Peng (Eds.). Association for Computational Linguistics, Suzhou, China, 10799–10808. doi:10.18653/v1/2025.emnlp-main.547

- [16] John Joon Young Chung and Eytan Adar. 2023. Promptpaint: Steering text-to-image generation through paint medium-like interactions. In Proceedings of the 36th Annual ACM Symposium on User Interface Software and Technology. 1–17.

- [17] James M Clark and Allan Paivio. 1991. Dual coding theory and education. Educational psychology review 3, 3 (1991), 149–210.

- [18] Ruth C Clark and Chopeta Lyons. 2010. Graphics for learning: Proven guidelines for planning, designing, and evaluating visuals in training materials. John Wiley & Sons.

- [19] Richard Cox. 1996. Analytical reasoning with multiple external representations. Ph. D. Dissertation. University of Edinburgh UK.

- [20] Berkeley J Dietvorst, Joseph P Simmons, and Cade Massey. 2018. Overcoming algorithm aversion: People will use imperfect algorithms if they can (even slightly) modify them. Management science 64, 3 (2018), 1155–1170.

- [21] Raymond Duval. 1999. Representation, Vision and Visualization: Cognitive Functions in Mathematical Thinking. Basic Issues for Learning. (1999).

- [22] Billie Eilam. 2012. Teaching, learning, and visual literacy: The dual role of visual representation. Cambridge University Press.

- [23] Franz Faul, Edgar Erdfelder, Axel Buchner, and Albert-Georg Lang. 2009. Statistical power analyses using G*Power 3.1: Tests for correlation and regression analyses. Behavior research methods 41, 4 (2009), 1149–1160.

- [24] Emily R Fyfe, Nicole M McNeil, Ji Y Son, and Robert L Goldstone. 2014. Concreteness fading in mathematics and science instruction: A systematic review. Educational psychology review 26, 1 (2014), 9–25.

- [25] Vladimir Geroimenko. 2025. The Essential Guide to Prompt Engineering: Key Principles, Techniques, Challenges, and Security Risks. Springer Nature.

- [26] Daibao Guo, Erin M McTigue, Sharon D Matthews, and Wendi Zimmer. 2020. The impact of visual displays on learning across the disciplines: A systematic review. Educational Psychology Review 32, 3 (2020), 627–656.

- [27] Sandra G Hart and Lowell E Staveland. 1988. Development of NASA-TLX (Task Load Index): Results of empirical and theoretical research. In Advances in psychology. Vol. 52. Elsevier, 139–183.

- [28] Derek Haylock. 2007. Key concepts in teaching primary mathematics. (2007).

- [29] James Hollan, Edwin Hutchins, and David Kirsh. 2000. Distributed cognition: toward a new foundation for human-computer interaction research. ACM Transactions on Computer-Human Interaction (TOCHI) 7, 2 (2000), 174–196.

- [30] Wayne Holmes, Maya Bialik, and Charles Fadel. 2019. Artificial intelligence in education promises and implications for teaching and learning. Center for Curriculum Redesign.

- [31] Wayne Holmes, Kaśka Porayska-Pomsta, Kenneth Holstein, Elaine Sutherland, Toby Baker, Simon Buckingham Shum, Octavio C. Santos, Ma. Mercedes T. Rodrigo, Mutlu Cukurova, Ig Ibert Bittencourt, and Kenneth R. Koedinger. 2021. Ethics of AI in Education: Towards a Community-Wide Framework. International Journal of Artificial Intelligence in Education (2021). htt...

- [32] William Horton and Katherine Horton. 2003. E-learning Tools and Technologies: A consumer’s guide for trainers, teachers, educators, and instructional designers. John Wiley & Sons.

- [33] Eric Horvitz. 1999. Principles of mixed-initiative user interfaces. In Proceedings of the SIGCHI conference on Human Factors in Computing Systems. 159–166.

- [34] Eric Horvitz, Paul Koch, and Johnson Apacible. 2004. BusyBody: creating and fielding personalized models of the cost of interruption. In Proceedings of the 2004 ACM conference on Computer supported cooperative work. 507–510.

- [35] Yifan Hou, Buse Giledereli, Yilei Tu, and Mrinmaya Sachan. 2025. Do vision-language models really understand visual language? In Proceedings of the 42nd International Conference on Machine Learning (Vancouver, Canada) (ICML’25). JMLR.org, Article 938, 50 pages.

- [36] Noha Hussen, Ahmed Samir, Aliaa Adel, Abdelrahman Gaber, Mommen Attaia, and Ahmed Mohamed. 2025. Advancing Creativity: A Comprehensive Review of AI-Driven Text-to-Image Generation and Its Applications. Advanced Sciences and Technology Journal 2, 2 (2025), 1–17.

- [37] Ivana Kajić, Olivia Wiles, Isabela Albuquerque, Matthias Bauer, Su Wang, Jordi Pont-Tuset, and Aida Nematzadeh. 2024. Evaluating numerical reasoning in text-to-image models. Advances in Neural Information Processing Systems 37 (2024), 42211–42224.

- [38] Ian Khan. 2024. The quick guide to prompt engineering: Generative AI tips and tricks for ChatGPT, Bard, Dall-E, and Midjourney. John Wiley & Sons.

- [39] Hyung-Kwon Ko, Gwanmo Park, Hyeon Jeon, Jaemin Jo, Juho Kim, and Jinwook Seo. 2023. Large-scale text-to-image generation models for visual artists’ creative works. In Proceedings of the 28th international conference on intelligent user interfaces. 919–933.

- [40] Kenneth R Koedinger, Jihee Kim, Julianna Zhuxin Jia, Elizabeth A McLaughlin, and Norman L Bier. 2015. Learning is not a spectator sport: Doing is better than watching for learning from a MOOC. In Proceedings of the second (2015) ACM conference on learning@scale. 111–120.

- [41] Florian Lehmann. 2023. Mixed-Initiative Interaction with Computational Generative Systems. In Extended Abstracts of the 2023 CHI Conference on Human Factors in Computing Systems. 1–6.

- [42] Haichuan Lin, Yilin Ye, Jiazhi Xia, and Wei Zeng. 2025. SketchFlex: Facilitating Spatial-Semantic Coherence in Text-to-Image Generation with Region-Based Sketches. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems. 1–19.

- [43] Jiawei Lin, Jiaqi Guo, Shizhao Sun, Zijiang Yang, Jian-Guang Lou, and Dongmei Zhang. 2023. Layoutprompter: Awaken the design ability of large language models. Advances in Neural Information Processing Systems 36 (2023), 43852–43879.

- [44] Vivian Liu and Lydia B Chilton. 2022. Design guidelines for prompt engineering text-to-image generative models. In Proceedings of the 2022 CHI conference on human factors in computing systems. 1–23.

- [45] Jennifer M Logg, Julia A Minson, and Don A Moore. 2019. Algorithm appreciation: People prefer algorithmic to human judgment. Organizational Behavior and Human Decision Processes 151 (2019), 90–103.

- [46] Duri Long and Brian Magerko. 2020. What is AI literacy? Competencies and design considerations. In Proceedings of the 2020 CHI conference on human factors in computing systems. 1–16.

- [47] Michael Macdonald-Ross. 1977. How numbers are shown: A review of research on the presentation of quantitative data in texts. AV communication review 25, 4 (1977), 359–409.

- [48] James G March. 1991. Exploration and exploitation in organizational learning. Organization science 2, 1 (1991), 71–87.

- [49] Richard E Mayer. 2005. Cognitive theory of multimedia learning. The Cambridge handbook of multimedia learning 41, 1 (2005), 31–48.

- [50] Midjourney, Inc. 2022. Midjourney – The Generative AI Service. https://www.midjourney.com. Accessed: 2025-12-14.

- [51] Emily C Miller, Samuel Severance, and Joseph Krajcik. 2021. Motivating teaching, sustaining change in practice: Design principles for teacher learning in project-based learning contexts. Journal of Science Teacher Education 32, 7 (2021), 757–779.

- [52] Reza Hadi Mogavi, Chao Deng, Justin Juho Kim, Pengyuan Zhou, Young D Kwon, Ahmed Hosny Saleh Metwally, Ahmed Tlili, Simone Bassanelli, Antonio Bucchiarone, Sujit Gujar, et al. 2024. ChatGPT in education: A blessing or a curse? A qualitative study exploring early adopters’ utilization and perceptions. Computers in Human Behavior: Artificial Humans 2, 1 (20...

- [53] Don Norman. 2013. The design of everyday things: Revised and expanded edition. Basic books.

- [54] Donald A Norman. 1986. Cognitive engineering. In User centered system design. CRC Press, 31–62.

- [55] Stephen J Pape and Mourat A Tchoshanov. 2001. The role of representation(s) in developing mathematical understanding. Theory into practice 40, 2 (2001), 118–127.

- [56] Xiaohan Peng, Janin Koch, and Wendy E Mackay. 2024. Designprompt: Using multimodal interaction for design exploration with generative ai. In Proceedings of the 2024 ACM Designing Interactive Systems Conference. 804–818.

- [57] Peter Pirolli and Stuart Card. 2005. The sensemaking process and leverage points for analyst technology as identified through cognitive task analysis. In Proceedings of international conference on intelligence analysis, Vol. 5. McLean, VA, USA, 2–4.

- [58] B Mairéad Pratschke. 2024. Generative AI and education: Digital pedagogies, teaching innovation and learning design. Springer.

- [59] Norma C Presmeg. 2006. Research on visualization in learning and teaching mathematics. Handbook of research on the psychology of mathematics education: Past, present and future (2006), 205–235.

- [60] Aditya Ramesh, Mikhail Pavlov, Gabriel Goh, Scott Gray, Chelsea Voss, Alec Radford, Mark Chen, and Ilya Sutskever. 2021. Zero-shot text-to-image generation. In International conference on machine learning. PMLR, 8821–8831.

- [61] Martina A Rau. 2017. Conditions for the effectiveness of multiple visual representations in enhancing STEM learning. Educational Psychology Review 29, 4 (2017), 717–761.

- [62] Arijit Ray, Filip Radenovic, Abhimanyu Dubey, Bryan Plummer, Ranjay Krishna, and Kate Saenko. 2023. Cola: A benchmark for compositional text-to-image retrieval. Advances in Neural Information Processing Systems 36 (2023), 46433–46445.

- [63] Alexander Renkl and Katharina Scheiter. 2017. Studying visual displays: How to instructionally support learning. Educational Psychology Review 29, 3 (2017), 599–621.

- [64] Kathryn M Rich, Aman Yadav, and Rachel A Larimore. 2020. Teacher implementation profiles for integrating computational thinking into elementary mathematics and science instruction. Education and Information Technologies 25, 4 (2020), 3161–3188.

- [65] Ferdinand Rivera. 2011. Toward a visually-oriented school mathematics curriculum: Research, theory, practice, and issues. Vol. 49. Springer Science & Business Media.

- [66] Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Björn Ommer. 2022. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 10684–10695.

- [67] Daniel M Russell, Mark J Stefik, Peter Pirolli, and Stuart K Card. 1993. The cost structure of sensemaking. In Proceedings of the INTERACT’93 and CHI’93 conference on Human factors in computing systems. 269–276.

- [68] Johnny Saldaña. 2021. The coding manual for qualitative researchers. (2021).

- [69] Ben Shneiderman. 1983. Direct manipulation: A step beyond programming languages. Computer 16, 08 (1983), 57–69.

- [70] Ben Shneiderman. 2022. Human-centered AI. Oxford University Press.

- [71] Sangho Suh, Meng Chen, Bryan Min, Toby Jia-Jun Li, and Haijun Xia. 2024. Luminate: Structured generation and exploration of design space with large language models for human-ai co-creation. In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems. 1–26.

- [72] Hou In Ivan Tam, Hou In Derek Pun, Austin T Wang, Angel X Chang, and Manolis Savva. 2025. Scenemotifcoder: Example-driven visual program learning for generating 3d object arrangements. In 2025 International Conference on 3D Vision (3DV). IEEE, 179–188.

- [74] Can Wang, Hongliang Zhong, Menglei Chai, Mingming He, Dongdong Chen, and Jing Liao. 2025. Chat2Layout: Interactive 3D furniture layout with a multimodal LLM. IEEE transactions on visualization and computer graphics (2025).

- [75] Junling Wang, Anna Rutkiewicz, April Wang, and Mrinmaya Sachan. 2025. Generating pedagogically meaningful visuals for math word problems: A new benchmark and analysis of text-to-image models. In Findings of the Association for Computational Linguistics: ACL 2025. 11229–11257.

- [76] Zhijie Wang, Yuheng Huang, Da Song, Lei Ma, and Tianyi Zhang. 2024. Promptcharm: Text-to-image generation through multi-modal prompting and refinement. In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems. 1–21.

- [77] Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. 2022. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems 35 (2022), 24824–24837.

- [78] Hsin-Kai Wu and Priti Shah. 2004. Exploring visuospatial thinking in chemistry learning. Science Education 88, 3 (2004), 465–492.

- [79] Xindi Wu, Dingli Yu, Yangsibo Huang, Olga Russakovsky, and Sanjeev Arora. Conceptmix: A compositional image generation benchmark with controllable difficulty. Advances in Neural Information Processing Systems 37 (2024), 86004–86047.
