Variational predictive resampling

Pith reviewed 2026-05-13 02:02 UTC · model grok-4.3

The pith

Variational predictive resampling with mean-field predictives converges to the exact Bayesian posterior in Gaussian location models.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

VPR repeatedly imputes future observations from the current variational predictive, updates the variational approximation after each imputation, and records the parameter implied by the completed sample. The paper establishes conditions under which the law of the returned parameter is well-defined and shows that finite-horizon approximations converge to this limit. In a tractable Gaussian location model, VPR with mean-field variational predictives converges to the exact Bayesian posterior, whereas the optimal mean-field VI approximation retains a non-vanishing asymptotic gap.

What carries the argument

Variational predictive resampling (VPR): iteratively impute future observations from the current variational predictive, update the variational approximation after each imputation, and record the parameter implied by the completed sample as one posterior draw.
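The loop described above can be sketched as follows. This is a minimal illustration of the control flow only, not the paper's implementation: the names `vpr_draw`, `sample_predictive`, `update`, `extract`, and `initial_state` are hypothetical, and the toy instance assumes a one-dimensional model y ~ N(theta, 1) with prior theta ~ N(0, prior_var), where the Gaussian "variational" family happens to contain the exact posterior.

```python
import numpy as np

def vpr_draw(state, sample_predictive, update, extract, horizon, rng):
    """Return one parameter draw by forward-imputing `horizon` observations."""
    for _ in range(horizon):
        y_new = sample_predictive(state, rng)  # impute from the current predictive
        state = update(state, y_new)           # refit after the imputation
    return extract(state)                      # parameter implied by the completed sample

# Toy instance (assumption, not from the paper): y ~ N(theta, 1), theta ~ N(0, prior_var).
# `state` is the (mean, variance) of a Gaussian approximation to theta.
def sample_predictive(state, rng):
    mean, var = state
    return rng.normal(mean, np.sqrt(var + 1.0))  # parameter variance + unit noise variance

def update(state, y_new):
    mean, var = state
    new_var = 1.0 / (1.0 / var + 1.0)            # precision grows by one observation
    new_mean = new_var * (mean / var + y_new)
    return (new_mean, new_var)

def extract(state):
    return state[0]                              # record the mean at the horizon

def initial_state(data, prior_var=1.0):
    var = 1.0 / (1.0 / prior_var + len(data))
    return (var * float(np.sum(data)), var)

rng = np.random.default_rng(0)
data = rng.normal(0.5, 1.0, size=20)
start = initial_state(data)
draws = np.array([
    vpr_draw(start, sample_predictive, update, extract, horizon=300, rng=rng)
    for _ in range(400)
])
print(draws.mean(), draws.var(), start)
```

In this toy case the spread of `draws` should approach the posterior variance `start[1]` as `horizon` grows, because each imputation step adds an independent increment to the recorded mean. With a restricted family such as mean-field, only `sample_predictive` and `update` would change.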

If this is right

- VPR recovers posterior dependence missed by mean-field VI in linear regression, logistic regression, and hierarchical linear mixed-effects models.

- VPR substantially improves posterior uncertainty quantification compared with mean-field VI while remaining computationally competitive with MCMC.

- The finite-horizon approximation to VPR converges to the limiting distribution under the stated conditions.

- The method exploits VI's predictive strength to produce better posterior approximations than direct application of the same variational family.

Where Pith is reading between the lines

- The resampling step may offer a general way to correct under-dispersion in other variational families beyond mean-field.

- If the convergence holds more broadly, VPR could serve as an intermediate step between cheap VI and expensive MCMC in high-dimensional or structured models.

- The approach highlights that predictive distributions from VI can be used to bootstrap improved posterior sampling without altering the variational family itself.

Load-bearing premise

The results hold only under the paper's stated conditions, which guarantee that the law of the parameter returned by VPR is well defined and that the finite-horizon approximation converges to it.

What would settle it

In the Gaussian location model, generate many VPR samples with mean-field predictives and compare their empirical distribution directly to the known exact posterior. A persistent mismatch would falsify convergence, while a VPR gap that vanishes where the MF-VI gap persists would confirm the claimed improvement.
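A check along these lines might look like the sketch below. It is one possible reading of the setup, not the paper's construction. It assumes a multivariate model y_i ~ N(theta, noise_cov) with known, correlated `noise_cov` and a Gaussian prior with covariance `prior_cov`. It uses the standard result that the KL(q || p)-optimal mean-field Gaussian matches the posterior mean and has variances 1 / precision_jj. It also assumes the recorded parameter is the variational mean at the horizon. Under these assumptions the VPR output is a sum of independent Gaussian increments, so its covariance can be accumulated in closed form rather than estimated from samples. All function names are hypothetical.

```python
import numpy as np

def mf_vi_cov(precision):
    """Covariance of the KL(q || p)-optimal mean-field Gaussian: diag(1 / precision_jj)."""
    return np.diag(1.0 / np.diag(precision))

def vpr_cov(prior_cov, noise_cov, n, horizon):
    """Covariance of the mean recorded after `horizon` mean-field imputations."""
    noise_prec = np.linalg.inv(noise_cov)
    prec = np.linalg.inv(prior_cov) + n * noise_prec   # exact precision after n points
    cov = np.zeros_like(noise_cov)
    for _ in range(horizon):
        pred_cov = noise_cov + mf_vi_cov(prec)         # mean-field predictive covariance
        prec = prec + noise_prec                       # absorb the imputed point
        gain = np.linalg.solve(prec, noise_prec)       # weight on (y_new - current mean)
        cov += gain @ pred_cov @ gain.T                # independent increment of the mean
    return cov

noise_cov = np.array([[1.0, 0.9], [0.9, 1.0]])         # strongly correlated noise (assumed)
prior_cov = 10.0 * np.eye(2)
n = 50

prec_n = np.linalg.inv(prior_cov) + n * np.linalg.inv(noise_cov)
exact = np.linalg.inv(prec_n)                          # exact posterior covariance
mf = mf_vi_cov(prec_n)                                 # static mean-field VI covariance
vpr = vpr_cov(prior_cov, noise_cov, n, horizon=5000)

print("exact:\n", exact)
print("MF-VI:\n", mf)
print("VPR:\n", vpr)
```

In this assumed setting the VPR covariance should sit close to the exact one, including the off-diagonal term, while the mean-field variances stay much smaller. Repeating the comparison for increasing `n` would show whether the VPR mismatch shrinks as claimed.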

Figures

read the original abstract

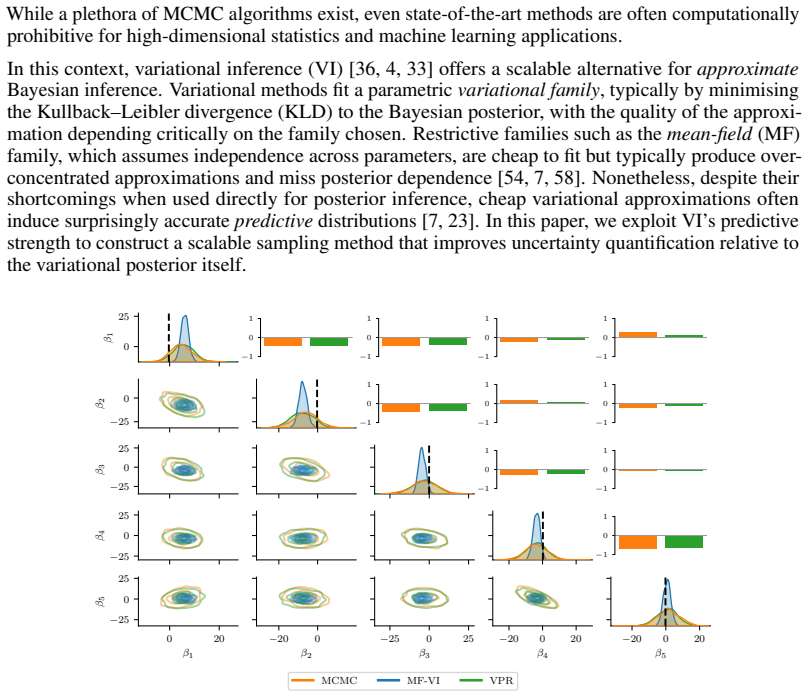

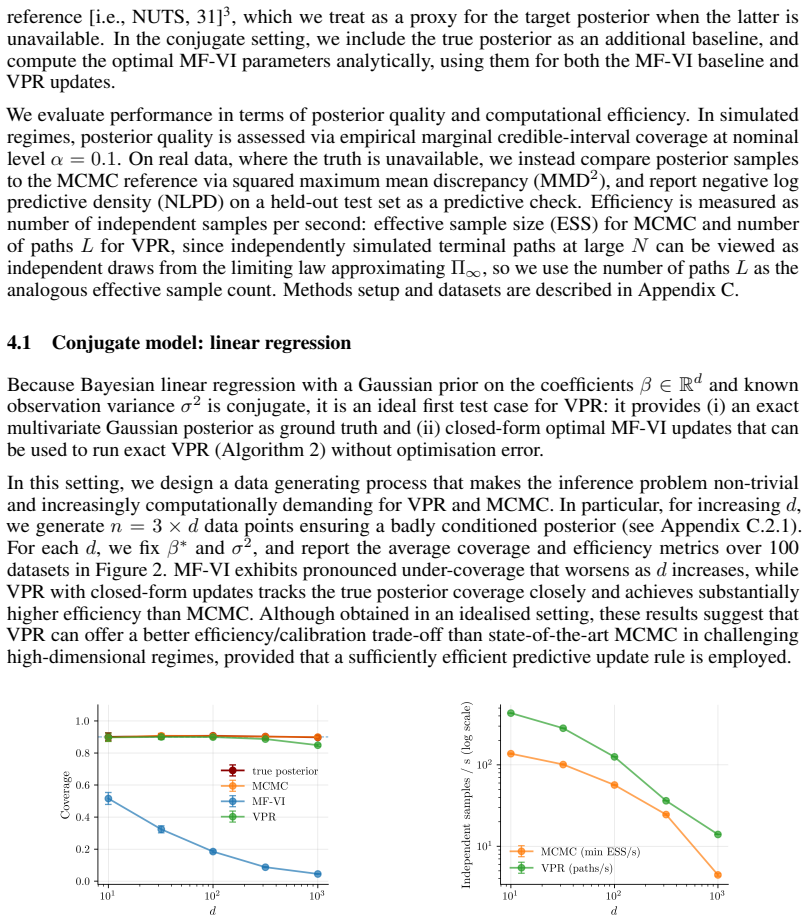

Bayesian inference provides principled uncertainty quantification, but accurate posterior sampling with MCMC can be computationally prohibitive for modern applications. Variational inference (VI) offers a scalable alternative and often yields accurate predictive distributions, but cheap variational families such as mean-field (MF) can produce over-concentrated approximations that miss posterior dependence. We propose variational predictive resampling (VPR), a scalable posterior sampling method that exploits VI's predictive strength within a predictive-resampling framework to better approximate the Bayesian posterior. Given a prior-likelihood pair, VPR repeatedly imputes future observations from the current variational predictive, updates the variational approximation after each imputation, and records the parameter value implied by the completed sample. We establish conditions under which the law of the parameter returned by VPR is well defined and show that its finite-horizon approximation converges to this limit. In a tractable Gaussian location model, we show that VPR with MF variational predictives converges to the exact Bayesian posterior, whereas the optimal MF-VI approximation retains a non-vanishing asymptotic gap. Experiments on linear regression, logistic regression, and hierarchical linear mixed-effects models demonstrate that VPR substantially improves posterior uncertainty quantification and recovers posterior dependence missed by MF-VI, while remaining computationally competitive with, and often more efficient than, MCMC.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes variational predictive resampling (VPR), a hybrid method that repeatedly imputes future observations from the current variational predictive distribution, updates the variational approximation, and records the implied parameter to generate approximate posterior samples. It establishes conditions for the limiting law of the returned parameter to be well-defined via a fixed-point argument and proves convergence of the finite-horizon procedure to this limit. In a Gaussian location model, mean-field VPR is shown to recover the exact Bayesian posterior while the optimal mean-field VI approximation retains a positive KL gap. Experiments on linear regression, logistic regression, and hierarchical linear mixed-effects models indicate that VPR improves posterior uncertainty quantification and recovers dependence missed by MF-VI, while remaining competitive with MCMC in runtime.

Significance. If the results hold, VPR offers a scalable route to better posterior approximations by leveraging the predictive accuracy of VI within a resampling framework, addressing a known weakness of mean-field families. The explicit conditions, finite-horizon convergence proof, and closed-form demonstration in the Gaussian case are notable strengths, as is the empirical evidence across multiple model classes. The work could inform hybrid inference methods that combine variational efficiency with improved uncertainty calibration.

major comments (2)

- [Theory section] Theory section on well-definedness: the fixed-point argument establishing that the law of the VPR parameter is well-defined should explicitly verify the contraction condition (e.g., via the metric on the space of probability measures) for the mean-field family; without this, it is unclear whether the argument applies beyond the Gaussian location model where closed forms are available.

- [Gaussian location model] Gaussian location model derivation: while the manuscript derives that MF-VPR iterates recover the exact posterior, the quantification of the non-vanishing asymptotic KL gap for static MF-VI should include the explicit limiting value of the gap as a function of the prior variance and sample size to make the comparison fully parameter-free and falsifiable.

minor comments (3)

- [Method] The description of recording the 'parameter value implied by the completed sample' is concise but would benefit from an explicit algorithmic step or pseudocode line showing how the final variational parameters are converted to a posterior draw.

- [Experiments] Experiments: the runtime comparisons to MCMC would be strengthened by reporting effective sample sizes or Gelman-Rubin statistics for the MCMC baselines to substantiate the claim of computational competitiveness.

- [Notation] Notation: the distinction between the variational predictive used for imputation and the final variational approximation after updates should be denoted with distinct symbols to avoid reader confusion in the recursive definitions.

Simulated Author's Rebuttal

We thank the referee for their thoughtful review and positive recommendation for minor revision. We address each major comment below.

- Referee: [Theory section] Theory section on well-definedness: the fixed-point argument establishing that the law of the VPR parameter is well-defined should explicitly verify the contraction condition (e.g., via the metric on the space of probability measures) for the mean-field family; without this, it is unclear whether the argument applies beyond the Gaussian location model where closed forms are available.

  Authors: The fixed-point argument in Section 3 is stated under general conditions on the variational family that ensure the update map is a contraction on the space of probability measures. We acknowledge that an explicit verification for the mean-field family would strengthen the presentation and clarify applicability beyond the Gaussian case. In the revision we will add a short paragraph (or appendix entry) confirming that the mean-field family satisfies the contraction condition with respect to the metric employed in the fixed-point argument, at least for the exponential-family models considered in the paper.

  revision: yes

- Referee: [Gaussian location model] Gaussian location model derivation: while the manuscript derives that MF-VPR iterates recover the exact posterior, the quantification of the non-vanishing asymptotic KL gap for static MF-VI should include the explicit limiting value of the gap as a function of the prior variance and sample size to make the comparison fully parameter-free and falsifiable.

  Authors: We agree that an explicit expression for the limiting KL gap improves clarity and falsifiability. Because both the optimal mean-field VI solution and the true posterior are Gaussian in this model, the KL divergence admits a closed form. In the revision we will derive and state the exact limiting value of the gap (as n → ∞) as a function of the prior variance and the data-generating parameter, thereby making the comparison fully explicit.

  revision: yes
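For reference, the closed form the authors allude to is the standard KL divergence between two d-dimensional Gaussians. The symbols below (q for the mean-field approximation, p for the exact posterior) and the direction KL(q ‖ p) are assumptions made for illustration; the paper's own expression and limiting value may be stated differently.

```latex
\mathrm{KL}\bigl(\mathcal{N}(m_q, S_q)\,\|\,\mathcal{N}(m_p, S_p)\bigr)
  = \tfrac{1}{2}\Bigl[
      \operatorname{tr}\bigl(S_p^{-1} S_q\bigr)
      + (m_p - m_q)^{\top} S_p^{-1} (m_p - m_q)
      - d
      + \ln\frac{\det S_p}{\det S_q}
    \Bigr]
```

When the two means coincide, the quadratic term drops out and the gap depends only on the two covariance matrices.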

Circularity Check

No significant circularity; derivation is self-contained

The paper establishes the VPR parameter law via an explicit fixed-point argument on the predictive process, proves finite-horizon convergence to the limit, and in the Gaussian location model derives closed-form recursions showing MF-VPR recovers the exact posterior while static MF-VI retains a KL gap. These steps use the model's tractability for independent verification and do not reduce to fitted inputs, self-citations, or definitions by construction. No load-bearing step matches the enumerated circular patterns.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption: A prior-likelihood pair exists that admits a variational family whose predictive can be used for imputation.
