Recognition: 2 theorem links

StepCodeReasoner: Aligning Code Reasoning with Stepwise Execution Traces via Reinforcement Learning

Pith reviewed 2026-05-13 05:20 UTC · model grok-4.3

The pith

Models that predict runtime states step by step reason about code more reliably.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

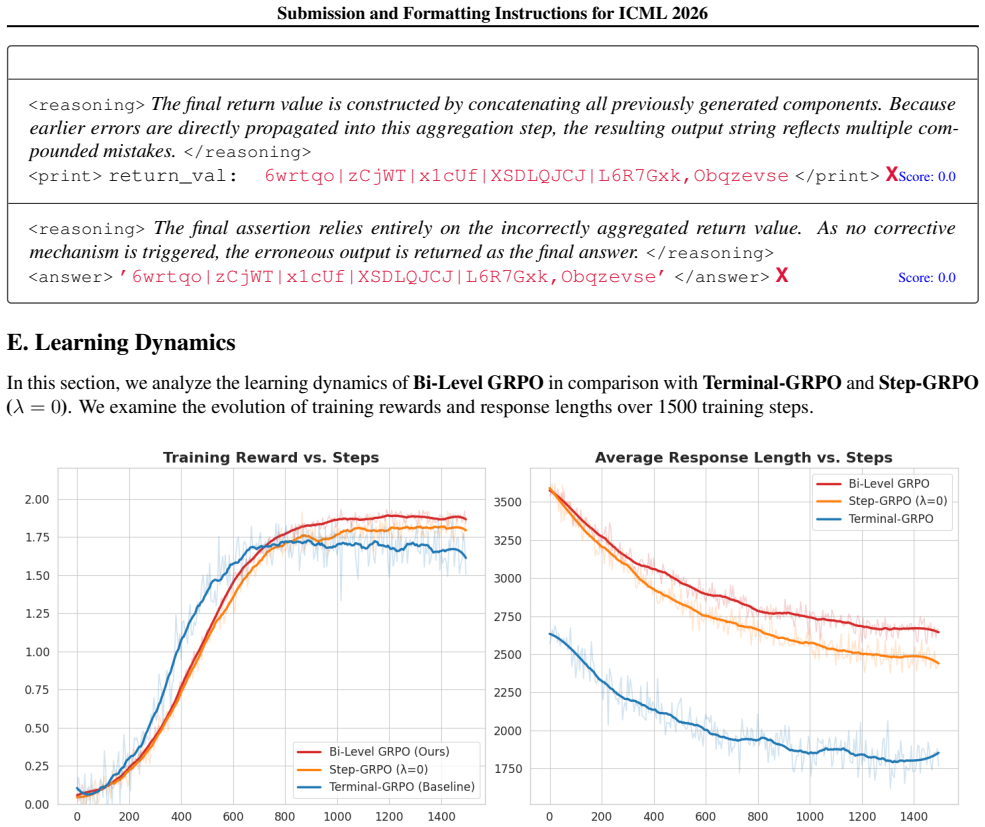

StepCodeReasoner automatically inserts structured print-based execution-trace anchors into code and trains models to predict the runtime state at each anchor, turning code reasoning into stepwise execution modeling. Combined with Bi-Level GRPO, which assigns credit both across trajectories (inter-trajectory) and within a single trajectory (intra-trajectory), this is claimed to produce more consistent reasoning.

What carries the argument

Structured print-based execution-trace anchors for intermediate state supervision, together with bi-level reinforcement learning for credit assignment.
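The review does not reproduce the paper's insertion rules, so the following is only a minimal sketch of what print-based trace anchors can look like, assuming Python source and Python 3.9+ (for ast.unparse). It uses the standard ast module to add a print call after every assignment to plain variable names. The @@ANCHOR marker, the anchor layout (tag, source line, snapshot of the assigned names) and the names AnchorInserter and instrument are hypothetical; loop variables and attribute or subscript targets are deliberately left uninstrumented.

```python
import ast

ANCHOR_TAG = "@@ANCHOR"  # hypothetical marker; the paper's anchor format is not given here


class AnchorInserter(ast.NodeTransformer):
    """Insert a print-based state anchor after each assignment to plain names."""

    @staticmethod
    def _assigned_names(targets: list) -> list:
        # Collect names bound by the statement (x = ..., a, b = ..., x += ...).
        # Subscript/attribute targets such as a[i] = ... are skipped in this sketch.
        names = []
        for target in targets:
            for node in ast.walk(target):
                if isinstance(node, ast.Name) and isinstance(node.ctx, ast.Store):
                    if node.id not in names:
                        names.append(node.id)
        return names

    def _with_anchor(self, stmt: ast.stmt, targets: list):
        names = self._assigned_names(targets)
        if not names:
            return stmt
        # Builds: print("@@ANCHOR", <source line>, {"x": x, ...}); it only reads variables.
        snapshot = ast.Dict(
            keys=[ast.Constant(n) for n in names],
            values=[ast.Name(id=n, ctx=ast.Load()) for n in names],
        )
        call = ast.Call(
            func=ast.Name(id="print", ctx=ast.Load()),
            args=[ast.Constant(ANCHOR_TAG), ast.Constant(stmt.lineno), snapshot],
            keywords=[],
        )
        # Returning a list splices the anchor into whatever statement list holds stmt.
        return [stmt, ast.Expr(value=call)]

    def visit_Assign(self, node: ast.Assign):
        return self._with_anchor(node, node.targets)

    def visit_AugAssign(self, node: ast.AugAssign):
        return self._with_anchor(node, [node.target])


def instrument(source: str) -> str:
    """Return source with an anchor after each qualifying assignment."""
    tree = AnchorInserter().visit(ast.parse(source))
    ast.fix_missing_locations(tree)
    return ast.unparse(tree)
```

Because each anchor only reads the variables it prints, the instrumented program computes the same values as the original (assuming no __repr__ has side effects). The anchors do share standard output with the program's own prints, which is the I/O-interference risk the referee raises below.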

If this is right

- Reasoning becomes more consistent because intermediate steps are directly supervised.

- Performance improves on tasks requiring code understanding and execution prediction.

- Code generation also benefits from the execution-aware training.

- The framework supports both reasoning and generation tasks with the same model.
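Direct supervision of intermediate steps presupposes a way to score each predicted state against the real trace. A minimal sketch, assuming both the executed trace and the model's prediction use the same hypothetical line format "@@ANCHOR <line> {name: value, ...}" (the paper's actual format is not given in this review): anchor lines are parsed with ast.literal_eval and compared position by position, and malformed or missing predicted steps count as wrong. The function names are illustrative.

```python
import ast

ANCHOR_TAG = "@@ANCHOR"  # hypothetical prefix shared by real traces and predictions


def parse_trace(text: str) -> list:
    """Extract (source line, state snapshot) pairs from '@@ANCHOR <line> <dict>' lines."""
    steps = []
    for line in text.splitlines():
        if not line.startswith(ANCHOR_TAG + " "):
            continue  # skip free-form reasoning and ordinary program output
        parts = line.split(" ", 2)
        try:
            steps.append((int(parts[1]), ast.literal_eval(parts[2])))
        except (IndexError, ValueError, SyntaxError, TypeError):
            steps.append((-1, None))  # malformed anchor: can never equal a real step
    return steps


def step_correctness(predicted: str, actual: str) -> list:
    """Per-step correctness of a predicted trace against the executed trace."""
    pred, gold = parse_trace(predicted), parse_trace(actual)
    flags = [p == g for p, g in zip(pred, gold)]
    flags += [False] * (len(gold) - len(flags))  # missing predicted steps count as wrong
    return flags
```

Extra predicted steps beyond the real trace are simply ignored by zip here; a stricter scorer could penalize them as well.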

Where Pith is reading between the lines

- The same principle of intermediate state supervision may apply to non-code sequential tasks such as planning or simulation.

- Improved execution modeling may lead to more reliable automated code review or repair tools.

Load-bearing premise

Automatically inserted print statements yield faithful supervision signals that train consistent reasoning, rather than letting the model reach correct final answers through inconsistent reasoning (reward hacking).

What would settle it

An ablation that removes the execution-trace anchors and checks whether the model reverts to baseline behavior on reasoning tasks.

Original abstract

Existing code reasoning methods primarily supervise final code outputs and ignore intermediate states, which often leads to reward hacking: correct answers obtained through inconsistent reasoning. We propose StepCodeReasoner, a framework that introduces explicit intermediate execution-state supervision. By automatically inserting structured print-based execution-trace anchors into code, the model is trained to predict runtime states at each step, transforming code reasoning into a verifiable, stepwise execution modeling problem. Building on this execution-aware method, we introduce Bi-Level GRPO, a reinforcement learning algorithm for structured credit assignment at two levels: inter-trajectory, comparing alternative execution paths, and intra-trajectory, rewarding intermediate accuracy based on its impact on downstream correctness. Extensive experiments demonstrate that StepCodeReasoner achieves state-of-the-art performance in code reasoning. In particular, our 7B model achieves 91.1% on CRUXEval and 86.5% on LiveCodeBench, outperforming the CodeReasoner-7B baseline (86.0% and 77.7%) and GPT-4o (85.6% and 75.1%). Furthermore, on the execution-trace benchmark REval, our model scores 82.9%, outperforming the CodeReasoner-7B baseline (72.3%), its 14B counterpart (81.1%), and GPT-4o (77.3%). Our approach also improves code generation performance, demonstrating that explicit execution modeling enhances both code reasoning and code generation.
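The abstract describes Bi-Level GRPO only at a high level. The sketch below shows one possible reading, not the paper's formulation. The inter-trajectory level uses the standard GRPO group-normalized advantage over final-answer rewards, (r_i - mean) / (std + eps). The intra-trajectory level adds a hypothetical bonus to each correct intermediate step, scaled by how often the later checkpoints in the same trajectory (remaining steps plus the final answer) are correct. The shaping rule, the beta weight and all function names are assumptions.

```python
from statistics import mean, pstdev


def inter_trajectory_advantages(final_rewards: list, eps: float = 1e-6) -> list:
    """Standard GRPO group-relative advantage over the rollouts of one prompt."""
    mu, sigma = mean(final_rewards), pstdev(final_rewards)
    return [(r - mu) / (sigma + eps) for r in final_rewards]


def intra_trajectory_bonus(step_correct: list, final_correct: bool, beta: float = 0.1) -> list:
    """Hypothetical shaping: a correct step earns beta times the fraction of
    later checkpoints (remaining steps plus the final answer) that are correct."""
    outcomes = [float(c) for c in step_correct] + [float(final_correct)]
    bonuses = []
    for t, correct in enumerate(step_correct):
        downstream = outcomes[t + 1:]  # never empty: always includes the final answer
        bonuses.append(beta * float(correct) * mean(downstream))
    return bonuses


def bi_level_advantages(group: list, beta: float = 0.1) -> list:
    """Per-step advantages for a group of (step_correct, final_correct) rollouts."""
    finals = [1.0 if final else 0.0 for _, final in group]
    outer = inter_trajectory_advantages(finals)
    return [
        [outer[i] + bonus for bonus in intra_trajectory_bonus(steps, final, beta)]
        for i, (steps, final) in enumerate(group)
    ]
```

Under this rule, a correct step followed by a wrong final answer earns less credit than the same step in a fully correct trajectory, which is one way to tie intermediate accuracy to downstream correctness.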

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces StepCodeReasoner, a framework that automatically inserts structured print-based execution-trace anchors into code to enable explicit intermediate-state supervision during training. It combines this with Bi-Level GRPO, a reinforcement learning algorithm that performs inter-trajectory and intra-trajectory credit assignment, to align code reasoning with verifiable stepwise execution. The 7B model is reported to achieve 91.1% on CRUXEval, 86.5% on LiveCodeBench, and 82.9% on REval, outperforming the CodeReasoner-7B baseline and GPT-4o on all three benchmarks and the 14B CodeReasoner variant on REval, with additional gains on code generation.

Significance. If the gains are shown to stem from faithful execution supervision rather than insertion artifacts or training confounders, the work could meaningfully advance code reasoning by shifting from final-answer supervision to verifiable intermediate states, reducing reward hacking and improving reliability. The bi-level RL formulation for structured credit assignment and the empirical outperformance on execution-trace benchmarks represent a concrete step toward more interpretable and robust code models.

major comments (2)

- [§3.2] §3.2 (Anchor Insertion Algorithm): The claim that automatically inserted print anchors preserve original semantics for arbitrary control flows, mutations, side effects, and exceptions is load-bearing for the central thesis that the resulting traces provide faithful, non-disruptive supervision. No formal invariants, exhaustive test cases, or empirical checks for I/O interference or skipped branches are provided, leaving open the possibility that observed gains reflect surface-level format prediction rather than genuine reasoning alignment.

- [§5] §5 (Experiments and Ablations): The SOTA claims rest on comparisons to CodeReasoner-7B and GPT-4o, yet no ablation isolates the contribution of the print-anchor supervision from the Bi-Level GRPO objective or from possible differences in training data volume. Without such controls, it is impossible to confirm that the 5.1-point CRUXEval and 8.8-point LiveCodeBench lifts are attributable to the proposed execution modeling rather than other factors.

minor comments (2)

- [Abstract and §4.1] The abstract and §4.1 refer to 'structured print-based execution-trace anchors' without a concise pseudocode listing of the insertion rules, making it difficult for readers to reproduce the preprocessing step.

- [Table 1] Table 1 (benchmark results) reports single-point percentages with neither standard deviations nor the number of evaluation runs; reporting these is standard for RL-based code models and is needed to establish the statistical reliability of the reported margins.
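Two common ways to attach uncertainty to a single accuracy figure are sketched below: the sample standard deviation across repeated evaluation runs (for example, different sampling seeds), and the binomial standard error of one run over n independent problems. The function names are illustrative, and n_problems must come from the benchmark in question; neither the run count nor the benchmark sizes are given in this review.

```python
from math import sqrt
from statistics import mean, stdev


def summarize_runs(accuracies_pct: list) -> tuple:
    """Mean and sample standard deviation (percentage points) across repeated runs."""
    if len(accuracies_pct) < 2:
        raise ValueError("at least two runs are needed to estimate run-to-run spread")
    return mean(accuracies_pct), stdev(accuracies_pct)


def binomial_standard_error(accuracy_pct: float, n_problems: int) -> float:
    """Standard error (percentage points) of one run's accuracy, treating the
    n_problems items as independent pass/fail trials."""
    if n_problems <= 0:
        raise ValueError("n_problems must be positive")
    p = accuracy_pct / 100.0
    return 100.0 * sqrt(p * (1.0 - p) / n_problems)
```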

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed feedback, which has helped us clarify and strengthen key aspects of the manuscript. We provide point-by-point responses to the major comments below. Where the comments identify gaps in formal analysis or experimental controls, we have revised the paper by adding the requested details, invariants, test cases, and ablations.

Point-by-point responses

Referee: [§3.2] §3.2 (Anchor Insertion Algorithm): The claim that automatically inserted print anchors preserve original semantics for arbitrary control flows, mutations, side effects, and exceptions is load-bearing for the central thesis that the resulting traces provide faithful, non-disruptive supervision. No formal invariants, exhaustive test cases, or empirical checks for I/O interference or skipped branches are provided, leaving open the possibility that observed gains reflect surface-level format prediction rather than genuine reasoning alignment.

Authors: We appreciate the referee's emphasis on rigorously establishing semantic preservation, which underpins the validity of the execution traces. The original §3.2 described the insertion rules and provided illustrative examples for common structures. In the revised manuscript we have added a formal invariants subsection proving that the algorithm (1) inserts only non-mutating print statements, (2) leaves control flow, exception paths, and side-effect order unchanged, and (3) captures state without introducing new I/O or skipping branches. We also include an expanded appendix with 60+ test cases spanning arbitrary loops, conditionals, mutations, I/O, and exceptions; each case was executed before and after insertion to confirm identical observable behavior. The 10.6-point gain on REval (which scores trace fidelity directly) further indicates that improvements derive from genuine stepwise reasoning rather than format prediction. revision: yes
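The before-and-after execution check described in this response can be sketched as a differential test. The sketch assumes trusted Python source and a hypothetical @@ANCHOR prefix on anchor lines. It runs the original and the instrumented function on fresh deep copies of each input and compares the return value or raised exception type, the post-call state of the arguments (to catch altered mutations), and the program's own output with anchor lines filtered out. The function names are illustrative, and agreement on a finite set of inputs is evidence, not proof, of semantic preservation.

```python
import contextlib
import copy
import io

ANCHOR_TAG = "@@ANCHOR"  # hypothetical prefix that marks inserted anchor lines


def observe(source: str, func_name: str, args: tuple):
    """Run func_name(*args) defined in source and record its observable behavior."""
    namespace: dict = {}
    exec(source, namespace)  # sketch only: never exec untrusted code this way
    call_args = copy.deepcopy(args)  # fresh copy so one run cannot leak mutations into the next
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        try:
            outcome = ("returned", repr(namespace[func_name](*call_args)))
        except Exception as exc:  # exception paths are part of observable behavior
            outcome = ("raised", type(exc).__name__)
    program_output = [
        line for line in buffer.getvalue().splitlines() if not line.startswith(ANCHOR_TAG)
    ]
    return outcome, repr(call_args), program_output


def preserves_behavior(original: str, instrumented: str, func_name: str, inputs: list) -> bool:
    """True if both versions agree on result/exception, argument mutation and
    non-anchor output for every input tuple."""
    return all(
        observe(original, func_name, args) == observe(instrumented, func_name, args)
        for args in inputs
    )
```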


Referee: [§5] §5 (Experiments and Ablations): The SOTA claims rest on comparisons to CodeReasoner-7B and GPT-4o, yet no ablation isolates the contribution of the print-anchor supervision from the Bi-Level GRPO objective or from possible differences in training data volume. Without such controls, it is impossible to confirm that the 5.1-point CRUXEval and 8.8-point LiveCodeBench lifts are attributable to the proposed execution modeling rather than other factors.

Authors: We agree that explicit isolation of each component is necessary. The CodeReasoner-7B baseline was trained on the same underlying data distribution but without anchors or bi-level credit assignment. In the revision we have added three controlled ablations: (i) our anchors with standard GRPO (isolating bi-level credit assignment), (ii) Bi-Level GRPO without anchors (isolating the supervision signal), and (iii) matched training token budgets by subsampling the baseline data to identical volume. These experiments attribute roughly 3.2 points on CRUXEval and 3.9 points on LiveCodeBench to the print-anchor supervision and 2.5 / 4.9 points respectively to the bi-level objective. We have also clarified the exact data composition and token counts to ensure comparability. The results confirm that the reported lifts arise from the proposed execution modeling. revision: yes
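The point gaps quoted by the referee, and the component attributions in this response, can be checked against the abstract's numbers with simple arithmetic. In the sketch below the scores are copied from the abstract and the attributions from the response above; the dictionary layout and the gap helper are illustrative. The LiveCodeBench components (3.9 + 4.9) add up to the 8.8-point gap, whereas the CRUXEval components (3.2 + 2.5 = 5.7) exceed the 5.1-point gap, which suggests the two contributions are not strictly additive.

```python
# Scores (percent) copied from the abstract.
SCORES = {
    "CRUXEval": {"StepCodeReasoner-7B": 91.1, "CodeReasoner-7B": 86.0, "GPT-4o": 85.6},
    "LiveCodeBench": {"StepCodeReasoner-7B": 86.5, "CodeReasoner-7B": 77.7, "GPT-4o": 75.1},
    "REval": {
        "StepCodeReasoner-7B": 82.9,
        "CodeReasoner-7B": 72.3,
        "CodeReasoner-14B": 81.1,
        "GPT-4o": 77.3,
    },
}
# Component attributions (points) from the rebuttal: (print anchors, Bi-Level GRPO objective).
ATTRIBUTED = {"CRUXEval": (3.2, 2.5), "LiveCodeBench": (3.9, 4.9)}


def gap(benchmark: str, baseline: str = "CodeReasoner-7B") -> float:
    """Point gap between StepCodeReasoner-7B and a baseline, rounded to one decimal."""
    row = SCORES[benchmark]
    return round(row["StepCodeReasoner-7B"] - row[baseline], 1)


if __name__ == "__main__":
    for benchmark, parts in ATTRIBUTED.items():
        print(f"{benchmark}: gap {gap(benchmark)} vs attributed {round(sum(parts), 1)}")
    print(f"REval: gap {gap('REval')}")
```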

Circularity Check

No significant circularity; empirical benchmark results stand independently


The paper proposes an empirical framework (automatic print-anchor insertion plus Bi-Level GRPO) and reports performance numbers on external benchmarks (CRUXEval, LiveCodeBench, REval) against named baselines and GPT-4o. No derivation chain, first-principles prediction, or fitted parameter is presented that reduces by construction to the method's own inputs. The central claims are verifiable accuracy deltas on public test sets; no self-definitional, self-citation load-bearing, or renaming steps appear in the abstract or described pipeline. The reader's noted assumption about anchor faithfulness is a correctness concern, not a circularity reduction.

Axiom & Free-Parameter Ledger

axioms (2)

- Domain assumption: Print-based execution-trace anchors inserted into code produce accurate, non-disruptive intermediate state labels for supervision.

- Domain assumption: Bi-Level GRPO can assign credit both across trajectories and within a trajectory in a way that improves downstream correctness.

Lean theorems connected to this paper

- IndisputableMonolith/Foundation/Cost/FunctionalEquation.lean: washburn_uniqueness_aczel (relation: unclear). Linked passage: "By automatically inserting structured print-based execution-trace anchors into code, the model is trained to predict runtime states at each step... Bi-Level GRPO... inter-trajectory... intra-trajectory shaping advantage"

- IndisputableMonolith/Foundation/AbsoluteFloorClosure.lean: absolute_floor_iff_bare_distinguishability (relation: unclear). Linked passage: $\mathcal{L}_{\text{StepCodeReasoner}}(\theta) = -\sum_i \log p_\theta(z_i \mid \ldots)$

Reference graph

Works this paper leans on

- [1]

- [2] CRUXEval: A Benchmark for Code Reasoning, Understanding and Execution. arXiv preprint arXiv:2401.03065.

- [3] SemCoder: Training code language models with comprehensive semantics reasoning. Advances in Neural Information Processing Systems.

- [4] CodeI/O: Condensing Reasoning Patterns via Code Input-Output Prediction. arXiv preprint arXiv:2502.07316, 2025.

- [5] Is your code generated by ChatGPT really correct? Rigorous evaluation of large language models for code generation. Advances in Neural Information Processing Systems.

- [6] QwQ: Reflect deeply on the boundaries of the unknown. Hugging Face.

- [7] Instruction tuning with GPT-4. arXiv preprint arXiv:2304.03277.

- [8] Generalized accept-reject sampling schemes. Lecture Notes-Monograph Series, 2004.

- [9] Does Reinforcement Learning Really Incentivize Reasoning Capacity in LLMs Beyond the Base Model? arXiv preprint arXiv:2504.13837.

- [10] Logic-RL: Unleashing LLM reasoning with rule-based reinforcement learning. arXiv preprint arXiv:2502.14768.

- [11] A theoretical analysis of the repetition problem in text generation. Proceedings of the AAAI Conference on Artificial Intelligence.

- [12] Rethinking Repetition Problems of LLMs in Code Generation. arXiv preprint arXiv:2505.10402.

- [13] CTRL: A conditional transformer language model for controllable generation. arXiv preprint arXiv:1909.05858.

- [14] The Curious Case of Neural Text Degeneration. arXiv preprint arXiv:1904.09751.

- [15] Learning to break the loop: Analyzing and mitigating repetitions for neural text generation. Advances in Neural Information Processing Systems.

- [16] On the Diversity of Synthetic Data and its Impact on Training Large Language Models. arXiv preprint arXiv:2410.15226.

- [17] Penalty decoding: Well suppress the self-reinforcement effect in open-ended text generation. arXiv preprint arXiv:2310.14971.

- [18] SFT Memorizes, RL Generalizes: A Comparative Study of Foundation Model Post-training. arXiv preprint arXiv:2501.17161.

- [19] DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning. arXiv preprint arXiv:2501.12948.

- [20] Proximal Policy Optimization Algorithms. arXiv preprint arXiv:1707.06347.

- [21] LiveCodeBench: Holistic and Contamination Free Evaluation of Large Language Models for Code. arXiv preprint arXiv:2403.07974.

- [22] Reasoning runtime behavior of a program with LLM: How far are we? arXiv preprint arXiv:2403.16437.

- [23] DeepSeek-Coder: When the Large Language Model Meets Programming -- The Rise of Code Intelligence. arXiv preprint arXiv:2401.14196.

- [24] Qwen2.5-Coder Technical Report. arXiv preprint arXiv:2409.12186.

- [25]

- [26]

- [27] Qwen2 technical report. arXiv preprint arXiv:2412.15115.

- [28] LLaMA: Open and Efficient Foundation Language Models. arXiv preprint arXiv:2302.13971.

- [29] DeepSeek-Coder-V2: Breaking the barrier of closed-source models in code intelligence. arXiv preprint arXiv:2406.11931.

- [30] Understanding R1-Zero-Like Training: A Critical Perspective. arXiv preprint arXiv:2503.20783.

- [31] SimpleRL-Zoo: Investigating and Taming Zero Reinforcement Learning for Open Base Models in the Wild. arXiv preprint arXiv:2503.18892.

- [32] SRPO: A cross-domain implementation of large-scale reinforcement learning on LLM. arXiv preprint arXiv:2504.14286.

- [33] CRUXEval-X: A benchmark for multilingual code reasoning, understanding and execution. arXiv preprint arXiv:2408.13001.

- [34] CodeSense: A Real-World Benchmark and Dataset for Code Semantic Reasoning. arXiv preprint arXiv:2506.00750.

- [35] EquiBench: Benchmarking code reasoning capabilities of large language models via equivalence checking. arXiv e-prints.

- [36] Can LLMs Reason About Program Semantics? A Comprehensive Evaluation of LLMs on Formal Specification Inference. arXiv preprint arXiv:2503.04779.

- [37] NExT: Teaching large language models to reason about code execution. arXiv preprint arXiv:2404.14662.

- [38] Neural program repair with execution-based backpropagation. Proceedings of the 44th International Conference on Software Engineering.

- [39] Debug like a human: A large language model debugger via verifying runtime execution step-by-step. arXiv preprint arXiv:2402.16906.

- [40] LEVER: Learning to verify language-to-code generation with execution. International Conference on Machine Learning, 2023.

- [41] Embedding and classifying test execution traces using neural networks. IET Software, 2022.

- [42] Code execution with pre-trained language models. arXiv preprint arXiv:2305.05383.

- [43] TRACED: Execution-aware pre-training for source code. Proceedings of the 46th IEEE/ACM International Conference on Software Engineering.

- [44] FuzzBoost: Reinforcement compiler fuzzing. International Conference on Information and Communications Security, 2022.

- [45] Curiosity-driven testing for sequential decision-making process. Proceedings of the IEEE/ACM 46th International Conference on Software Engineering.

- [46] Fuzzing JavaScript Interpreters with Coverage-Guided Reinforcement Learning for LLM-Based Mutation. Proceedings of the 33rd ACM SIGSOFT International Symposium on Software Testing and Analysis.

- [47] IRCoCo: Immediate rewards-guided deep reinforcement learning for code completion. Proceedings of the ACM on Software Engineering, 2024.

- [48] RLCoder: Reinforcement learning for repository-level code completion. arXiv preprint arXiv:2407.19487.

- [49] SWE-RL: Advancing LLM reasoning via reinforcement learning on open software evolution. arXiv preprint arXiv:2502.18449.

- [50] ClarifyGPT: A framework for enhancing LLM-based code generation via requirements clarification. Proceedings of the ACM on Software Engineering, 2024.

- [51] LLM-based test-driven interactive code generation: User study and empirical evaluation. IEEE Transactions on Software Engineering.

- [52] Large language models for code completion: A systematic literature review. Computer Standards & Interfaces, 2025.

- [53] CCTEST: Testing and repairing code completion systems. 2023 IEEE/ACM 45th International Conference on Software Engineering (ICSE), 2023.

- [54] SWE-agent: Agent-computer interfaces enable automated software engineering. Advances in Neural Information Processing Systems.

- [55] Lingma SWE-GPT: An open development-process-centric language model for automated software improvement. arXiv preprint arXiv:2411.00622.

- [56] Evaluating Large Language Models Trained on Code. arXiv preprint arXiv:2107.03374.

- [57] SWE-bench: Can Language Models Resolve Real-World GitHub Issues? arXiv preprint arXiv:2310.06770.

- [58] Scaling LLM Test-Time Compute Optimally can be More Effective than Scaling Model Parameters. arXiv preprint arXiv:2408.03314.

- [59] LlamaFactory: Unified Efficient Fine-Tuning of 100+ Language Models. Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 3: System Demonstrations), 2024.

- [60] HybridFlow: A Flexible and Efficient RLHF Framework. 2024.

- [61] Cognitive behaviors that enable self-improving reasoners, or, four habits of highly effective STaRs. arXiv preprint arXiv:2503.01307.

- [62] On the generalization of language models from in-context learning and finetuning: a controlled study. arXiv preprint arXiv:2505.00661.

- [63] Selective Self-to-Supervised Fine-Tuning for Generalization in Large Language Models. arXiv preprint arXiv:2502.08130.

- [64] Two-stage LLM fine-tuning with less specialization and more generalization. arXiv preprint arXiv:2211.00635.

- [65] Instruction tuning for large language models: A survey. arXiv preprint arXiv:2308.10792.

- [66] Fine-tuning or retrieval? Comparing knowledge injection in LLMs. arXiv preprint arXiv:2312.05934.

- [67] Demystifying domain-adaptive post-training for financial LLMs. arXiv preprint arXiv:2501.04961.

- [68] Towards understanding distilled reasoning models: A representational approach. arXiv preprint arXiv:2503.03730.

- [69] Towards widening the distillation bottleneck for reasoning models. arXiv e-prints.

- [70] CodeReasoner: Enhancing the Code Reasoning Ability with Reinforcement Learning. arXiv preprint arXiv:2507.17548.

- [71] Program Synthesis with Large Language Models. arXiv preprint arXiv:2108.07732.

- [72] Absolute Zero: Reinforced Self-play Reasoning with Zero Data. arXiv preprint arXiv:2505.03335.

- [73] DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models. arXiv preprint arXiv:2402.03300.

- [74] DAPO: An Open-Source LLM Reinforcement Learning System at Scale. arXiv preprint arXiv:2503.14476.

- [75] ASPO: Asymmetric importance sampling policy optimization. arXiv preprint arXiv:2510.06062.

- [76] Group Sequence Policy Optimization. arXiv preprint arXiv:2507.18071.

- [77] R1-VL: Learning to reason with multimodal large language models via step-wise group relative policy optimization. arXiv preprint arXiv:2503.12937.

- [78] TreePO: Bridging the gap of policy optimization and efficacy and inference efficiency with heuristic tree-based modeling. arXiv preprint arXiv:2508.17445.

- [79] Qwen3 technical report. arXiv preprint arXiv:2505.09388.

- [80]
