Recognition: 2 theorem links

· Lean TheoremMetaphor Is Not All Attention Needs

Pith reviewed 2026-05-13 05:56 UTC · model grok-4.3

The pith

Poetic jailbreaks succeed because they trigger distinct attention patterns in LLMs that stay separate from safety detection.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

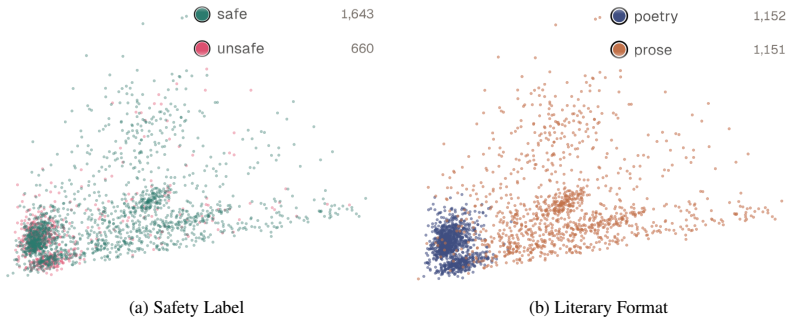

Models distinguish poetic from prose formats with high accuracy, yet struggle to predict jailbreak success within each format. Clustering of attention representations shows clear separation by literary format but not by safety label. These results indicate that jailbreak success is not caused by a failure to recognize poetic formatting; rather, poetic prompts induce distinct processing patterns that remain largely independent of harmful-content detection. Literary jailbreaks appear to misalign models not through any single poetic device but through accumulated stylistic irregularities that alter prompt processing and avoid lexical triggers considered during post-training.

What carries the argument

Vectorized attention-map representations, input-level ablation of poetic devices, clustering of those vectors, and linear probes trained to predict both safety outcome and literary format.

If this is right

- Safety mechanisms must account for style-induced shifts in model behavior rather than relying only on lexical triggers.

- No single poetic device drives the effect; success comes from accumulated stylistic irregularities.

- Post-training needs to address processing changes that arise from irregular formatting.

- Interpretability tools such as attention clustering can expose processing paths that operate independently of safety labels.

Where Pith is reading between the lines

- The same separation of processing paths may occur with other non-poetic stylistic jailbreaks such as code snippets or formal registers.

- Style-aware safety training could improve robustness across a wider range of models and prompt styles.

- Attention-based probes might be run at inference time to flag prompts that induce risky processing shifts.

Load-bearing premise

Vectorized attention maps and clustering faithfully capture the processing differences that matter for safety decisions, and results on Qwen3-14B generalize to other models.

What would settle it

Finding a strong correlation between attention-cluster membership and jailbreak success inside the poetic format group, or showing that removing one specific poetic device consistently restores safety alignment.

Figures

read the original abstract

Large language models are increasingly deployed in safety-critical applications, where their ability to resist harmful instructions is essential. Although post-training aims to make models robust against many jailbreak strategies, recent evidence shows that stylistic reformulations, such as poetic transformation, can still bypass safety mechanisms with alarming effectiveness. This raises a central question: why do literary jailbreaks succeed? In this work, we investigate whether their effectiveness depends on specific poetic devices, on a failure to recognize literary formatting, or on deeper changes in how models process stylistically irregular prompts. We address this problem through an interpretability analysis of attention patterns. We perform input-level ablation studies to assess the contribution of individual and combinations of poetic devices; construct an interpretable vector representation of attention maps; cluster these representations and train linear probes to predict safety outcomes and literary format. Our results show that models distinguish poetic from prose formats with high accuracy, yet struggle to predict jailbreak success within each format. Clustering further reveals clear separation by literary format, but not by safety label. These findings indicate that jailbreak success is not caused by a failure to recognize poetic formatting; rather, poetic prompts induce distinct processing patterns that remain largely independent of harmful-content detection. Overall, literary jailbreaks appear to misalign large language models not through any single poetic device, but through accumulated stylistic irregularities that alter prompt processing and avoid lexical triggers considered during post-training. This suggests that robustness requires safety mechanisms that account for style-induced shifts in model behavior. We use Qwen3-14B as a representative open-weight case study.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper investigates why poetic reformulations of harmful prompts (literary jailbreaks) succeed against safety-aligned LLMs. Using Qwen3-14B as a case study, it performs ablation on poetic devices, constructs vectorized representations of attention maps, applies clustering, and trains linear probes to predict literary format versus jailbreak success. The central claim is that models reliably distinguish poetic from prose formats, yet format and safety labels are largely independent in the learned representations; jailbreak success arises from accumulated stylistic irregularities that shift processing patterns away from lexical triggers rather than from any failure to detect the literary format itself.

Significance. If the empirical separation holds under more expressive probes and across models, the work supplies a concrete interpretability argument that style-induced attention shifts can decouple from content-based safety filters. This would directly motivate safety training regimes that incorporate stylistic variation rather than relying solely on post-training lexical or semantic guards, and it offers a reusable vectorization-plus-probe pipeline for studying format effects in other alignment settings.

major comments (2)

- [interpretability analysis and probe experiments] The independence conclusion rests on linear probes achieving high accuracy for format but low accuracy for jailbreak success within each format, plus clustering separating only by format. Because the vectorized attention representation is fixed and only linear classifiers are reported, non-linear interactions between heads, layers, or token attentions that could still link style to safety decisions are not ruled out; the paper should either add non-linear probes (e.g., small MLPs) or provide a justification that linearity is sufficient for the relevant decision boundary.

- [experimental setup and conclusion] All quantitative results are obtained on a single model (Qwen3-14B). While the manuscript presents this as a representative case study, the claim that poetic prompts induce processing patterns independent of harmful-content detection is stated in general terms; without at least one additional model or an explicit discussion of architectural differences that might modulate the effect, the scope of the independence finding remains unclear.

minor comments (2)

- [clustering results] The abstract states that clustering reveals 'clear separation by literary format, but not by safety label,' yet no quantitative cluster metrics (e.g., silhouette scores, adjusted Rand index against safety labels) are referenced; adding these numbers would strengthen the visual claim.

- [methods] The vectorization procedure for attention maps is described only at a high level; a short appendix or paragraph specifying how multi-head, multi-layer maps are flattened or aggregated (mean, concatenation, etc.) would improve reproducibility.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our paper investigating the attention mechanisms behind poetic jailbreaks. We provide point-by-point responses to the major comments and indicate the planned revisions.

read point-by-point responses

-

Referee: The independence conclusion rests on linear probes achieving high accuracy for format but low accuracy for jailbreak success within each format, plus clustering separating only by format. Because the vectorized attention representation is fixed and only linear classifiers are reported, non-linear interactions between heads, layers, or token attentions that could still link style to safety decisions are not ruled out; the paper should either add non-linear probes (e.g., small MLPs) or provide a justification that linearity is sufficient for the relevant decision boundary.

Authors: We appreciate the referee's suggestion regarding the potential for non-linear interactions. Our linear probes were chosen for their interpretability in showing that format is separable while safety is not within formats. To strengthen this, we will add experiments with small MLPs as non-linear probes in the revised manuscript to rule out more complex dependencies. revision: yes

-

Referee: All quantitative results are obtained on a single model (Qwen3-14B). While the manuscript presents this as a representative case study, the claim that poetic prompts induce processing patterns independent of harmful-content detection is stated in general terms; without at least one additional model or an explicit discussion of architectural differences that might modulate the effect, the scope of the independence finding remains unclear.

Authors: We agree that broadening the scope would be valuable. Since the work is presented as a case study on Qwen3-14B, we will revise the manuscript to include a dedicated discussion on how the findings relate to common architectural elements in LLMs, such as attention mechanisms, and clarify the generalizability limits. We believe this addresses the concern without requiring additional model experiments at this stage. revision: partial

Circularity Check

No circularity: purely empirical interpretability study

full rationale

The paper performs input ablation, constructs vectorized attention-map representations, runs clustering, and trains linear probes to predict format vs. safety labels on Qwen3-14B. All claims rest on observed accuracies, cluster separations, and probe performance rather than any derivation, fitted parameter renamed as prediction, or self-citation chain. No equations, uniqueness theorems, or ansatzes appear; the central claim follows directly from the experimental outcomes without reduction to inputs by construction.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Attention maps can be meaningfully vectorized and clustered to reveal processing differences.

Lean theorems connected to this paper

-

IndisputableMonolith/Foundation/RealityFromDistinction.leanreality_from_one_distinction unclearOur results show that models distinguish poetic from prose formats with high accuracy, yet struggle to predict jailbreak success within each format.

Reference graph

Works this paper leans on

-

[1]

A General Language Assistant as a Laboratory for Alignment

A general language assistant as a laboratory for alignment , author=. arXiv preprint arXiv:2112.00861 , year=

work page internal anchor Pith review Pith/arXiv arXiv

-

[2]

Advances in neural information processing systems , volume=

Training language models to follow instructions with human feedback , author=. Advances in neural information processing systems , volume=

-

[3]

Constitutional AI: Harmlessness from AI Feedback

Constitutional ai: Harmlessness from ai feedback , author=. arXiv preprint arXiv:2212.08073 , year=

work page internal anchor Pith review Pith/arXiv arXiv

-

[4]

Jailbreak- ing chatgpt via prompt engineering: An empirical study

Jailbreaking chatgpt via prompt engineering: An empirical study , author=. arXiv preprint arXiv:2305.13860 , year=

-

[5]

33rd USENIX Security Symposium (USENIX Security 24) , pages=

Don't listen to me: Understanding and exploring jailbreak prompts of large language models , author=. 33rd USENIX Security Symposium (USENIX Security 24) , pages=

-

[6]

Tricking llms into disobedience: Formalizing, analyzing, and detecting jailbreaks , author=. Proceedings of the 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING 2024) , pages=

work page 2024

-

[7]

How johnny can persuade llms to jailbreak them: Rethinking persuasion to challenge ai safety by humanizing llms , author=. Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers) , pages=

-

[8]

arXiv preprint arXiv:2511.15304 , year=

Adversarial poetry as a universal single-turn jailbreak mechanism in large language models , author=. arXiv preprint arXiv:2511.15304 , year=

-

[9]

arXiv preprint arXiv:2601.08837 , year=

From Adversarial Poetry to Adversarial Tales: An Interpretability Research Agenda , author=. arXiv preprint arXiv:2601.08837 , year=

-

[10]

Neural Machine Translation by Jointly Learning to Align and Translate , author=. 2016 , eprint=

work page 2016

- [11]

- [12]

- [13]

-

[14]

Attention Sinks: A 'Catch, Tag, Release' Mechanism for Embeddings , author=. 2025 , eprint=

work page 2025

-

[15]

Efficient Streaming Language Models with Attention Sinks , author=. 2024 , eprint=

work page 2024

-

[16]

Punctuation and Predicates in Language Models , author=. 2025 , eprint=

work page 2025

-

[17]

Analyzing Multi-Head Self-Attention: Specialized Heads Do the Heavy Lifting, the Rest Can Be Pruned , author=. 2019 , eprint=

work page 2019

-

[18]

AttentionDefense: Leveraging System Prompt Attention for Explainable Defense Against Novel Jailbreaks , author=. 2025 , eprint=

work page 2025

-

[19]

Artificial Text Detection via Examining the Topology of Attention Maps , url=

Kushnareva, Laida and Cherniavskii, Daniil and Mikhailov, Vladislav and Artemova, Ekaterina and Barannikov, Serguei and Bernstein, Alexander and Piontkovskaya, Irina and Piontkovski, Dmitri and Burnaev, Evgeny , year=. Artificial Text Detection via Examining the Topology of Attention Maps , url=. doi:10.18653/v1/2021.emnlp-main.50 , booktitle=

-

[20]

DHCP: Detecting Hallucinations by Cross-modal Attention Pattern in Large Vision-Language Models , author=. 2025 , eprint=

work page 2025

-

[21]

Guess or Recall? Training CNNs to Classify and Localize Memorization in LLMs , author=. 2025 , eprint=

work page 2025

-

[22]

What Does BERT Look At? An Analysis of BERT's Attention , author=. 2019 , eprint=

work page 2019

-

[23]

Lookback Lens: Detecting and Mitigating Contextual Hallucinations in Large Language Models Using Only Attention Maps , author=. 2024 , eprint=

work page 2024

-

[24]

The Map of Misbelief: Tracing Intrinsic and Extrinsic Hallucinations Through Attention Patterns , author=. 2025 , eprint=

work page 2025

-

[25]

Jailbreaking Leaves a Trace: Understanding and Detecting Jailbreak Attacks from Internal Representations of Large Language Models , author=. 2026 , eprint=

work page 2026

-

[26]

Safety Alignment Should Be Made More Than Just A Few Attention Heads , author=. 2025 , eprint=

work page 2025

-

[27]

Attention Slipping: A Mechanistic Understanding of Jailbreak Attacks and Defenses in LLMs , author=. 2025 , eprint=

work page 2025

-

[28]

Universal Jailbreak Suffixes Are Strong Attention Hijackers , author=. 2025 , eprint=

work page 2025

-

[29]

Introducing v0.5 of the AI Safety Benchmark from MLCommons , author=. 2024 , eprint=

work page 2024

-

[30]

AILuminate: Introducing v1.0 of the AI Risk and Reliability Benchmark from MLCommons , author=. 2025 , eprint=

work page 2025

-

[31]

Fine-Tuning Language Models from Human Preferences , author=. 2020 , eprint=

work page 2020

-

[32]

Open Problems in Mechanistic Interpretability

Open problems in mechanistic interpretability , author=. arXiv preprint arXiv:2501.16496 , year=

work page internal anchor Pith review arXiv

- [33]

- [34]

-

[35]

arXiv preprint arXiv:2403.19851 , year=

Localizing paragraph memorization in language models , author=. arXiv preprint arXiv:2403.19851 , year=

-

[36]

GPT-4 Is Too Smart To Be Safe: Stealthy Chat with LLMs via Cipher , author=. 2024 , eprint=

work page 2024

-

[37]

ArtPrompt: ASCII Art-based Jailbreak Attacks against Aligned LLMs , author=. 2024 , eprint=

work page 2024

-

[38]

LLMs are Vulnerable to Malicious Prompts Disguised as Scientific Language , author=. 2025 , eprint=

work page 2025

- [39]

-

[40]

Analyzing memorization in large language models through the lens of model attribution , author=. Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers) , pages=

work page 2025

-

[41]

The assistant axis: Situating and stabilizing the default persona of language models , author=. arXiv preprint arXiv:2601.10387 , year=

-

[42]

Linda R. Waugh , journal =. The Poetic Function in the Theory of Roman Jakobson , urldate =

- [43]

-

[44]

2025 , howpublished =

work page 2025

-

[45]

DeepSeek-R1 incentivizes reasoning in LLMs through reinforcement learning , volume=

Guo, Daya and Yang, Dejian and Zhang, Haowei and Song, Junxiao and others , year=. DeepSeek-R1 incentivizes reasoning in LLMs through reinforcement learning , volume=. Nature , publisher=. doi:10.1038/s41586-025-09422-z , number=

-

[46]

Qwen3 Technical Report , author=. arXiv preprint arXiv:2505.09388 , year=

work page internal anchor Pith review Pith/arXiv arXiv

-

[47]

Jakobson, Roman , title =. Style in Language , editor =. 1960 , pages =

work page 1960

-

[48]

arXiv preprint arXiv:2512.05117 (2025)

The Universal Weight Subspace Hypothesis , author=. arXiv preprint arXiv:2512.05117 , year=

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.