Recognition: no theorem link

It's Not the Size: Harness Design Determines Operational Stability in Small Language Models

Pith reviewed 2026-05-13 04:20 UTC · model grok-4.3

The pith

Harness design controls stability and success rates in small language models more than parameter count.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

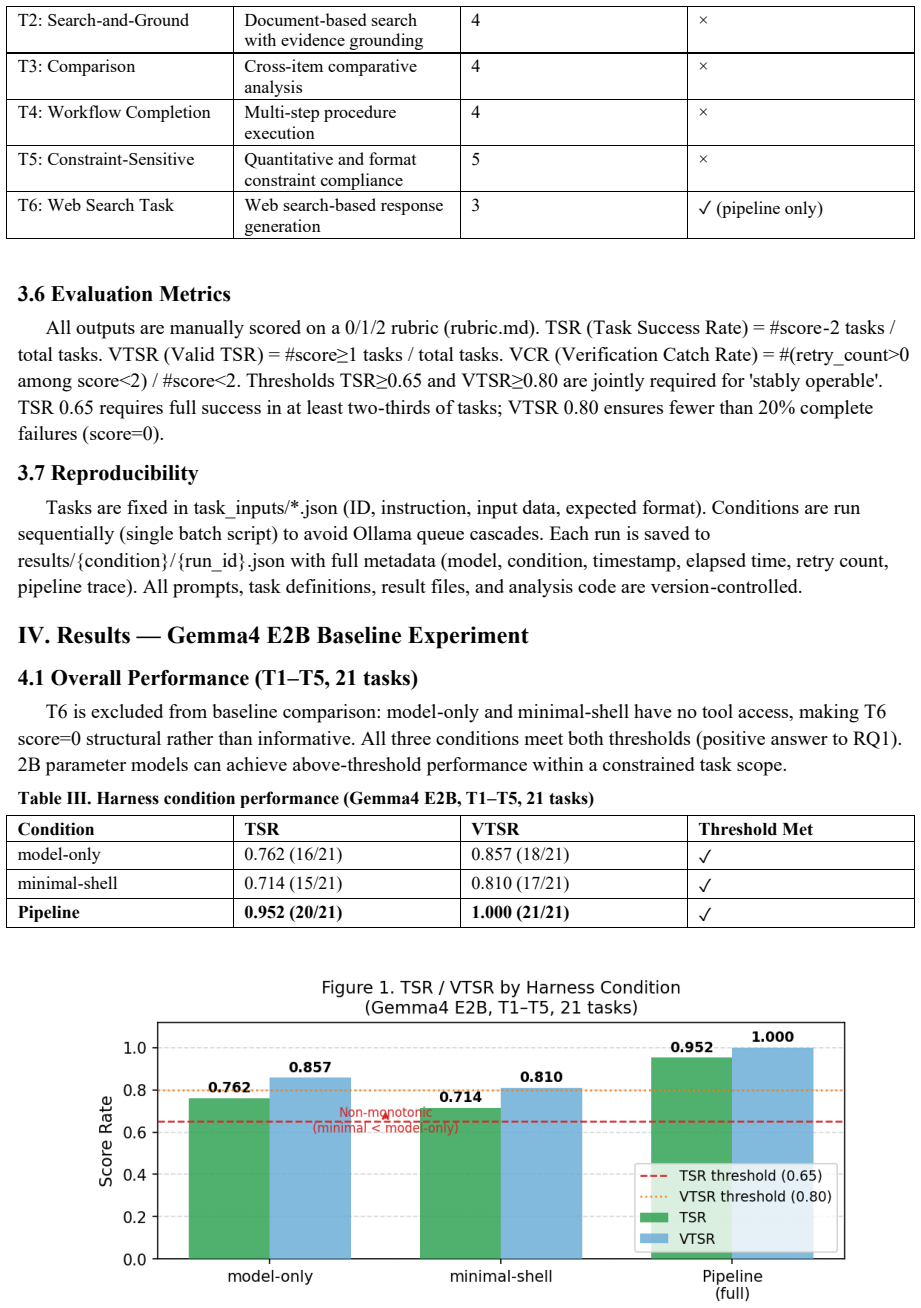

Operational stability in small language models depends on harness level rather than size alone. Model-only prompts produce scaffold collapse with seven format violations and TSR of 0.429 in LLaMA 3.2 3B. Minimal-shell wrappers yield non-monotonic drops below model-only in two models. The 4-stage pipeline harness achieves TSR of 0.952 and VTSR of 1.000 on Gemma-2B across 21 tasks, with ablation attributing roughly 24.7 percent of the total gain to the planning stage and another 24.7 percent to the recovery stage, plus a verification catch rate of 0.625.

What carries the argument

Three harness conditions (model-only raw prompt, minimal-shell wrapper tags, and 4-stage pipeline of plan-execute-verify-recover) that structure model interaction and enforce output format.

Load-bearing premise

The 24 tasks and three chosen 2-3B models are representative enough that the observed harness effects and non-monotonic behavior will appear in other small models and applications.

What would settle it

Running the same three harness conditions on a fourth 2-3B model or on twenty new tasks and finding that the pipeline no longer outperforms model-only or that minimal-shell no longer underperforms would falsify the claim that harness design determines stability.

Figures

read the original abstract

This paper experimentally analyzes how the level of harness engineering affects the operational performance of small language models (SLMs, 2-3B parameters). Three harness conditions - model-only (raw prompt), minimal-shell (wrapper tags), and a 4-stage pipeline (plan->execute->verify->recover) - are applied to three models (Gemma4 E2B, Qwen3.5:2B, LLaMA 3.2 3B) across 24 tasks, comparing Task Success Rate (TSR) and Valid TSR (VTSR). The pipeline harness achieves TSR=0.952 and VTSR=1.000 on Gemma4 E2B (T1-T5, 21 tasks). A non-monotonic phenomenon - minimal-shell TSR < model-only TSR - is observed in two models. In LLaMA 3.2 3B model-only, seven format violations yield TSR=0.429, revealing scaffold collapse: the model abandons JSON structure under complex format requirements without harness support. Ablation shows planning and recovery each contribute approximately 24.7% of total gain. VCR (Verification Catch Rate)=0.625 across all pipeline runs.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript experimentally investigates the impact of harness design on the operational stability of small language models (2-3B parameters). It evaluates three conditions—model-only prompting, minimal-shell wrappers, and a four-stage pipeline (plan, execute, verify, recover)—across three models (Gemma-2B, Qwen-2B, LLaMA-3B) on 24 tasks, using Task Success Rate (TSR) and Valid Task Success Rate (VTSR) as metrics. Key findings include pipeline achieving TSR=0.952 and VTSR=1.000 on Gemma for 21 tasks, non-monotonic performance where minimal-shell underperforms model-only in two models, scaffold collapse in LLaMA model-only with TSR=0.429 due to format violations, and ablations showing planning and recovery each contributing about 24.7% to performance gains, with VCR=0.625.

Significance. If the results generalize, this work underscores that for SLMs, careful harness engineering can dramatically improve reliability in task execution, particularly in maintaining output formats and recovering from errors, which may be more critical than scaling model size. The non-monotonic effects and specific failure modes like scaffold collapse offer practical insights for deploying small models in applications requiring structured outputs.

major comments (3)

- [Abstract and Results] The headline performance claims (TSR=0.952 and VTSR=1.000 on Gemma4 E2B, T1-T5, 21 tasks) and the ablation attributing ~24.7% gains each to planning and recovery stages rest on a narrow experimental base of three models and 24 tasks (with results reported on a 21-task subset); task selection criteria, diversity, and full protocol details are not provided, undermining the claim that harness design determines stability across SLMs rather than reflecting task- or model-specific artifacts.

- [Results] No statistical tests, error bars, or variance measures are reported for the TSR/VTSR values or the non-monotonic reversals (minimal-shell TSR < model-only TSR in two models), making it impossible to assess whether the observed differences (e.g., LLaMA model-only TSR=0.429 with seven format violations) are reliable or could arise from sampling variability.

- [Results on LLaMA] The scaffold collapse phenomenon in LLaMA 3.2 3B model-only is presented as evidence of harness necessity, but without additional controls (e.g., varying prompt complexity or format requirements across models), it is unclear whether this is a general harness effect or tied to the specific 24-task distribution and prompt templates used.

minor comments (2)

- [Abstract] The metrics TSR, VTSR, and VCR (Verification Catch Rate=0.625) are introduced without explicit definitions or formulas in the abstract; these should be defined at first use with examples for clarity.

- [Results] The paper would benefit from a table summarizing all TSR/VTSR results across the three models and harness conditions to allow direct comparison of the non-monotonic effects.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment below with point-by-point responses. Revisions have been made to the manuscript where the concerns identify gaps in reporting or clarity.

read point-by-point responses

-

Referee: [Abstract and Results] The headline performance claims (TSR=0.952 and VTSR=1.000 on Gemma4 E2B, T1-T5, 21 tasks) and the ablation attributing ~24.7% gains each to planning and recovery stages rest on a narrow experimental base of three models and 24 tasks (with results reported on a 21-task subset); task selection criteria, diversity, and full protocol details are not provided, undermining the claim that harness design determines stability across SLMs rather than reflecting task- or model-specific artifacts.

Authors: We acknowledge the limited scope of three models and 24 tasks. The tasks were drawn from standard benchmarks to span reasoning, coding, knowledge, and instruction-following categories; we have added an explicit subsection in the revised Methods describing selection criteria, category distribution, and the complete evaluation protocol (including prompt templates and success criteria). While broader validation across more models and tasks would be desirable, the replication of key patterns (non-monotonic effects, format collapse, and stage contributions) across three architecturally distinct models supports the central claim that harness design is a primary determinant of operational stability rather than an artifact of any single task set. revision: yes

-

Referee: [Results] No statistical tests, error bars, or variance measures are reported for the TSR/VTSR values or the non-monotonic reversals (minimal-shell TSR < model-only TSR in two models), making it impossible to assess whether the observed differences (e.g., LLaMA model-only TSR=0.429 with seven format violations) are reliable or could arise from sampling variability.

Authors: The referee correctly notes the absence of statistical measures. Our original evaluation used deterministic decoding and single runs per condition, so variance statistics were not computed. In the revision we have added a limitations paragraph in Results discussing this deterministic setup and the potential for sampling variability; where multiple prompt phrasings were internally tested we now report the observed range. We agree this is a methodological limitation and have updated the text accordingly. revision: partial

-

Referee: [Results on LLaMA] The scaffold collapse phenomenon in LLaMA 3.2 3B model-only is presented as evidence of harness necessity, but without additional controls (e.g., varying prompt complexity or format requirements across models), it is unclear whether this is a general harness effect or tied to the specific 24-task distribution and prompt templates used.

Authors: The scaffold collapse is shown by within-model, within-task comparison: the identical prompts and format requirements produce seven format violations (TSR=0.429) in the model-only condition but are resolved under the harness conditions. This design isolates the contribution of the harness. We agree that explicit controls varying prompt complexity would further generalize the finding; we have added a paragraph in the Discussion acknowledging this and outlining how future experiments could systematically vary format demands. The current results still demonstrate that, for the complex structured-output requirements in our task set, harness support is required to maintain operational stability. revision: partial

Circularity Check

No circularity: purely experimental comparisons with no derivations or fitted parameters

full rationale

The paper reports direct measurements of Task Success Rate (TSR) and Valid TSR (VTSR) under three harness conditions (model-only, minimal-shell, pipeline) applied to three specific SLMs across 24 tasks. No equations, derivations, parameter fitting, or self-citations appear in the provided text or abstract. All claims, including the non-monotonic phenomenon, scaffold collapse, and ablation contributions, rest on observed experimental outcomes rather than any reduction to inputs by construction. The derivation chain is therefore self-contained and independent of the patterns that would trigger circularity flags.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Reflexion: Language Agents with Verbal Reinforcement Learning

N. Shinn, F. Cassano, E. Berman, A. Gopinath, K. Narasimhan, and S. Yao, "Reflexion: Language Agents with Verbal Reinforcement Learning," arXiv:2303.11366, 2023

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[2]

ReAct: Synergizing Reasoning and Acting in Language Models,

S. Yao, J. Zhao, D. Yu, N. Du, I. Shafran, K. Narasimhan, and Y. Cao, "ReAct: Synergizing Reasoning and Acting in Language Models," ICLR 2023

work page 2023

-

[3]

CRITIC: Large Language Models Can Self-Correct with Tool-Interactive Critiquing

L. Gou, Z. Shao, Y. Gong, Y. Shen, Y. Yang, N. Duan, W. Chen, and M. Zhou, "CRITIC: Large Language Models Can Self-Correct with Tool-Interactive Critiquing," arXiv:2305.11738, 2023

work page internal anchor Pith review arXiv 2023

-

[4]

Phi-3 Technical Report: A Highly Capable Language Model Locally on Your Phone

M. Abdin et al., "Phi-3 Technical Report: A Highly Capable Language Model Locally on Your Phone," arXiv:2404.14219, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[5]

Gemma: Open Models Based on Gemini Research and Technology

Gemma Team, Google DeepMind, "Gemma: Open Models Based on Gemini Research and Technology," arXiv:2403.08295, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[6]

A. Q. Jiang et al., "Mistral 7B," arXiv:2310.06825, 2023

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[7]

Prompting Is Programming: A Query Language for Large Language Models,

L. Beurer-Kellner, M. Fischer, and M. Vechev, "Prompting Is Programming: A Query Language for Large Language Models," PLDI 2023

work page 2023

-

[8]

Guidance: A guidance language for controlling large language models,

Microsoft Guidance Team, "Guidance: A guidance language for controlling large language models," GitHub, 2023

work page 2023

-

[9]

Qwen Team, Alibaba Cloud, "Qwen2.5 Technical Report," arXiv:2412.15115, 2024

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[10]

Ollama: Get up and running with large language models locally,

Ollama Team, "Ollama: Get up and running with large language models locally," https://github.com/ollama/ollama, 2023

work page 2023

-

[11]

Efficient Guided Generation for Large Language Models

R. Willard and J. Louf, "Efficient Guided Generation for Large Language Models," arXiv:2307.09702, 2023

work page internal anchor Pith review arXiv 2023

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.