Unveiling Hidden Lyman Alpha Emitters in the DESI DR1 Data

Pith reviewed 2026-05-13 03:00 UTC · model grok-4.3

The pith

A convolutional neural network trained on inspected spectra detects 19,685 hidden Lyman alpha emitters in DESI DR1 with 95 percent purity.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

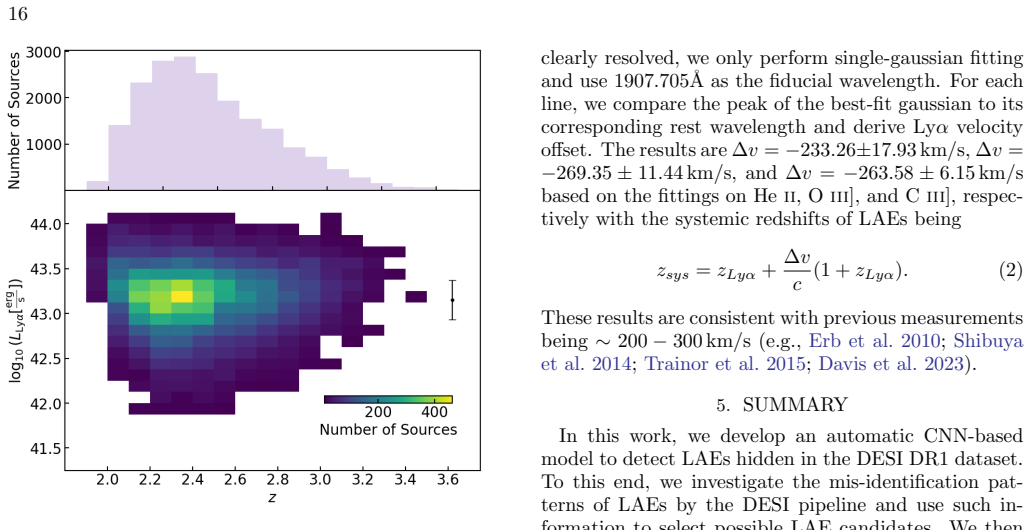

The final CNN model achieves 95.2 percent purity and 95.9 percent completeness for LAE detection. Applied to roughly two million DESI DR1 spectra, it identifies 19,685 LAEs at z ≈ 2 to 3.5, most with Lyα luminosities above 10^43 erg/s. The high signal-to-noise composite spectrum of the sample shows P-Cygni profiles of metal lines together with Mg II emission.
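As a minimal sketch of how the two headline metrics are defined, the snippet below computes purity (the fraction of predicted LAEs that are real) and completeness (the fraction of real LAEs that are recovered) from confusion-matrix counts. The counts are hypothetical, chosen only so that the output matches the quoted 95.2 and 95.9 percent; they are not the paper's test-set counts, and `purity_completeness` is an illustrative helper name.

```python
def purity_completeness(tp, fp, fn):
    """Return (purity, completeness) from true-positive, false-positive
    and false-negative counts."""
    if tp + fp == 0 or tp + fn == 0:
        raise ValueError("need at least one predicted and one true positive")
    purity = tp / (tp + fp)        # also called precision
    completeness = tp / (tp + fn)  # also called recall
    return purity, completeness


# Hypothetical counts for illustration only (not taken from the paper).
purity, completeness = purity_completeness(tp=940, fp=47, fn=40)
print(f"purity = {purity:.3f}, completeness = {completeness:.3f}")

# If the test-set purity carried over unchanged to the full catalog
# (an assumption), this many of the 19,685 candidates would be contaminants.
expected_contaminants = round(19_685 * (1 - 0.952))
print(f"expected contaminants ~ {expected_contaminants}")
```

Whether the test-set purity really transfers to the full catalog is exactly the generalization question raised elsewhere in this review, so the contaminant count should be read as a best case.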

What carries the argument

A convolutional neural network trained on visually classified DESI spectra that both classifies sources as LAEs and measures their Lyα redshifts.

Load-bearing premise

The small visually inspected training set of LAEs and non-LAEs is representative of the full DESI dataset and free of selection biases that would prevent the CNN from generalizing to unseen spectra.

What would settle it

Human re-inspection of a random subset of the 19,685 candidates returns a purity well below 95 percent.
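A minimal sketch of how such a re-inspection could be scored, assuming a simple random subset and a Wilson score interval for the measured purity. The subset size (500) and the number of confirmed LAEs (450) are hypothetical values for illustration, and `wilson_interval` is an illustrative helper, not something described in the paper.

```python
import math
import random


def wilson_interval(k, n, z=1.96):
    """Wilson score interval for a binomial proportion k/n.
    z=1.96 corresponds to roughly 95% coverage."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = k / n
    denom = 1.0 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


# Draw a reproducible random subset of candidate indices to re-inspect.
rng = random.Random(42)
subset = rng.sample(range(19_685), 500)

# Hypothetical outcome: inspectors confirm 450 of the 500 as LAEs.
confirmed = 450
low, high = wilson_interval(confirmed, len(subset))

claimed_purity = 0.952
consistent = low <= claimed_purity <= high
print(f"measured purity interval: [{low:.3f}, {high:.3f}]")
print(f"consistent with claimed {claimed_purity}: {consistent}")
```

With these made-up numbers the interval excludes 0.952, which is the kind of outcome that would count against the claim; a larger subset narrows the interval and makes the check more decisive.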

Figures

read the original abstract

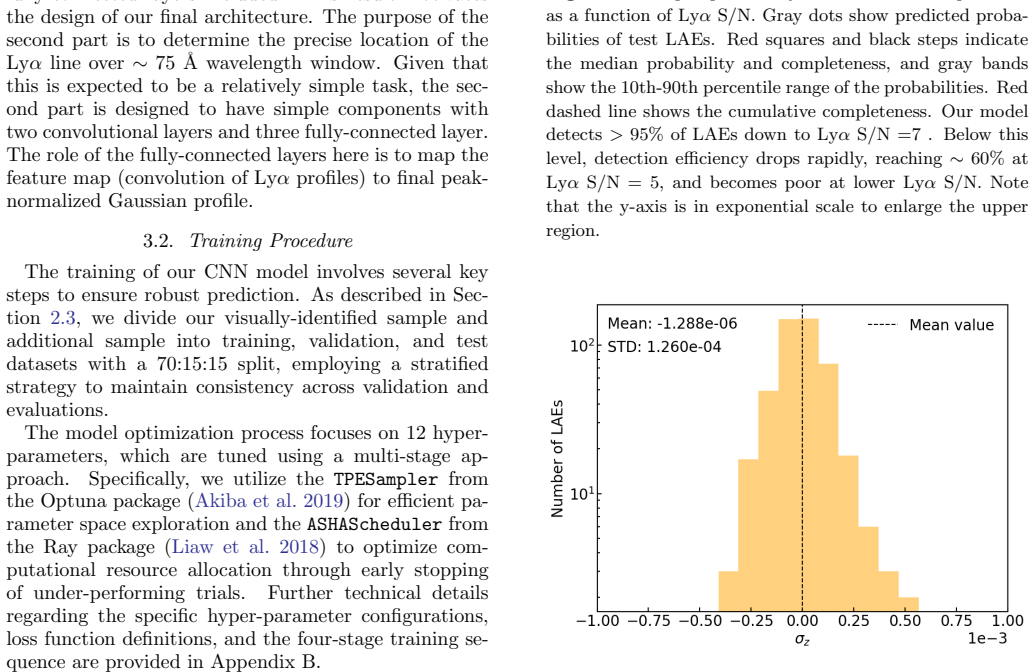

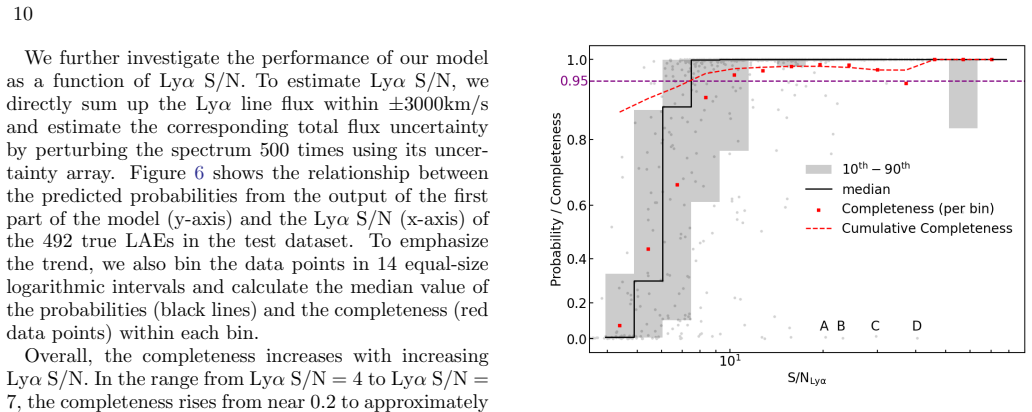

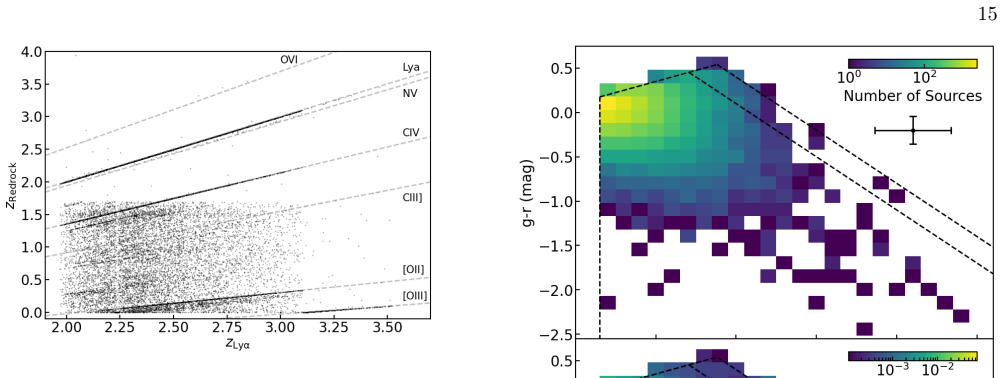

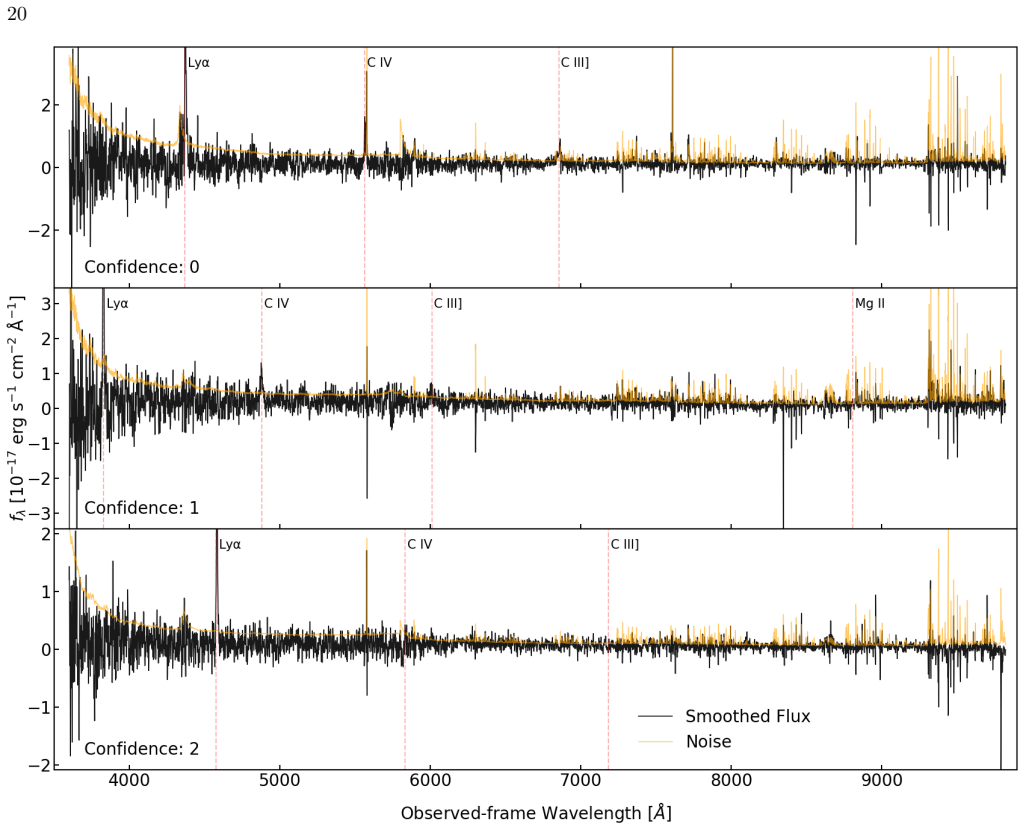

We present an automatic method based on machine-learning convolutional neural network (CNN) architecture to detect Lyman alpha emitters (LAE) hidden in the Data Release 1 spectroscopic dataset of the Dark Energy Spectroscopic Instrument (DESI). Those LAEs mostly have incorrect redshift estimations because the current DESI pipeline is not designed to detect and measure the redshifts of galaxies at $z>2$. To uncover those sources, we first visually inspect thousands of DESI spectra and construct a sample, consisting of both LAEs and non-LAEs, for training and testing the CNN-based model to (1) detect LAEs in DESI spectra and (2) determine their Ly$\alpha$ redshifts. The final model yields $95.2\%$ purity and $95.9\%$ completeness for detecting LAEs. We apply this model to approximately $2\times10^{6}$ spectra of sources targeted as emission-line galaxies and detect 19,685 LAEs from $z\sim2$ to $3.5$ within 12 minutes with a single GPU, illustrating the high efficiency of this model for identifying LAEs. The detected LAEs are mostly at the bright end of the luminosity function with Ly$\alpha$ luminosity $L_{\rm Ly\alpha} \gtrsim 10^{43}$ erg/s. The high signal-to-noise composite spectrum of the detected LAEs further shows various spectral features, including P-Cygni profiles of metal lines and MgII emission lines, possible indicators of Lyman continuum escape fraction, revealing the rich astrophysical information in this LAE sample. Finally, this sample can be used to train and validate the pipelines for redshift determination of LAEs for the preparation of the DESI-II survey.
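The numbers in the abstract can be cross-checked with a little arithmetic. The sketch below computes where Lyα lands in the observed frame for the quoted redshift range, plus the implied throughput and LAE fraction. The rest wavelength of 1215.67 Å is standard; the approximate DESI wavelength coverage of 3600 to 9800 Å is an assumption brought in from outside the text above.

```python
LYA_REST_ANGSTROM = 1215.67  # rest-frame Lyα wavelength

# Assumed approximate DESI spectrograph coverage (not stated in the abstract).
DESI_MIN_ANGSTROM, DESI_MAX_ANGSTROM = 3600.0, 9800.0


def lya_observed(z):
    """Observed-frame Lyα wavelength in Angstrom at redshift z."""
    return LYA_REST_ANGSTROM * (1.0 + z)


lya_low, lya_high = lya_observed(2.0), lya_observed(3.5)
in_coverage = DESI_MIN_ANGSTROM <= lya_low and lya_high <= DESI_MAX_ANGSTROM
print(f"Lyα observed at {lya_low:.1f} to {lya_high:.1f} Å, in coverage: {in_coverage}")

# Throughput and detection fraction from the figures quoted in the abstract.
n_spectra = 2_000_000      # "approximately 2e6" spectra
n_lae = 19_685
runtime_s = 12 * 60        # "within 12 minutes"
throughput = n_spectra / runtime_s
lae_fraction = n_lae / n_spectra
print(f"~{throughput:.0f} spectra per second, LAE fraction ~{100 * lae_fraction:.2f}%")
```

Under these assumptions Lyα at z ≈ 2 sits close to the blue edge of the coverage, and roughly one spectrum in a hundred is flagged as an LAE.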

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript presents a CNN-based method to detect Lyman-alpha emitters (LAEs) in DESI DR1 spectra, sources to which the standard pipeline mostly assigns incorrect redshifts because it is not designed for galaxies at z>2. The authors construct a training set by visually inspecting thousands of spectra (LAEs and non-LAEs) and train the model both to detect LAEs and to estimate Lyα redshifts, reporting 95.2% purity and 95.9% completeness. Applied to ~2 million emission-line galaxy spectra, the model identifies 19,685 LAEs at z~2-3.5. The authors then characterize the sample's Lyα luminosities and its composite spectrum, which shows P-Cygni profiles and MgII emission.

Significance. If the generalization holds, the work delivers a scalable, GPU-efficient tool for mining large spectroscopic surveys for high-z LAEs, yielding a sizable sample at the bright end of the luminosity function (L_Lyα ≳ 10^43 erg/s) that can inform studies of Lyman continuum escape and serve as training data for DESI-II pipelines. The rapid runtime on 2M spectra and the astrophysical content of the composite spectrum are clear strengths.

major comments (3)

- [Abstract] The headline 95.2% purity and 95.9% completeness are stated without any information on the total number of visually inspected spectra, the train/test split sizes or ratios, the validation strategy (e.g., k-fold cross-validation), or uncertainty estimates on the metrics. These omissions make it impossible to judge whether the quoted performance is robust or sensitive to small-sample effects.

- [Results (application to full catalog)] The detection of 19,685 LAEs in the ~2×10^6 ELG spectra rests on the assumption that the visually inspected training set is statistically representative in S/N, magnitude, redshift, and line-strength distributions. No quantitative comparison (e.g., histograms or statistical tests) between the training sample and the parent DESI ELG catalog is described, leaving the risk of domain shift unaddressed and undermining the claimed completeness on the full dataset.

- [Methods (CNN training)] The model is said to determine Lyα redshifts in addition to detection, yet no separate accuracy or bias metrics (e.g., redshift error distribution or outlier fraction) are provided for the redshift task, which is central to the claim that the pipeline can be used to prepare for DESI-II.
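The representativeness check requested in the second major comment could take the form of a two-sample Kolmogorov-Smirnov comparison. The sketch below is a pure-Python illustration under stated assumptions: the S/N values are synthetic draws, the helper names `ks_statistic` and `ks_critical` are hypothetical, and the threshold uses the standard large-sample coefficient of about 1.358 for a 5% significance level.

```python
import bisect
import math
import random


def ks_statistic(a, b):
    """Two-sample KS statistic: the largest gap between the empirical
    cumulative distributions of samples a and b."""
    a, b = sorted(a), sorted(b)
    n, m = len(a), len(b)
    d = 0.0
    for x in a + b:  # the maximum gap is attained at a data point
        fa = bisect.bisect_right(a, x) / n
        fb = bisect.bisect_right(b, x) / m
        d = max(d, abs(fa - fb))
    return d


def ks_critical(n, m, c_alpha=1.358):
    """Large-sample critical value; c_alpha=1.358 corresponds to ~5%."""
    return c_alpha * math.sqrt((n + m) / (n * m))


# Synthetic S/N values standing in for the parent catalog and training set.
rng = random.Random(0)
parent_snr = [rng.lognormvariate(1.0, 0.5) for _ in range(2000)]
training_snr = [rng.lognormvariate(1.2, 0.5) for _ in range(300)]

d = ks_statistic(parent_snr, training_snr)
threshold = ks_critical(len(parent_snr), len(training_snr))
print(f"D = {d:.3f}, 5% critical value = {threshold:.3f}, shifted = {d > threshold}")
```

The same comparison would be repeated for magnitude, redshift, and line strength. A statistic above the threshold flags a distribution mismatch but does not by itself say how much the completeness estimate is affected.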

minor comments (3)

- [Abstract] 'redshift estimations' should read 'redshift estimates' for grammatical correctness.

- [Introduction] The manuscript would benefit from a brief comparison to prior ML or template-based LAE finders in the literature to contextualize the CNN performance.

- [Results] Figure captions or text should explicitly state whether the reported purity/completeness include or exclude the redshift estimation step.

Simulated Author's Rebuttal

We thank the referee for their careful and constructive review of our manuscript. Their comments identify opportunities to improve clarity, robustness, and completeness of the presentation. We address each major comment point by point below and will revise the manuscript accordingly.

-

Referee: [Abstract] The headline 95.2% purity and 95.9% completeness are stated without any information on the total number of visually inspected spectra, the train/test split sizes or ratios, the validation strategy (e.g., k-fold cross-validation), or uncertainty estimates on the metrics. These omissions make it impossible to judge whether the quoted performance is robust or sensitive to small-sample effects.

Authors: We agree that the abstract would benefit from additional context on the training procedure. While abstracts are space-limited, we will revise the abstract to note that thousands of spectra were visually inspected and that cross-validation was used. We will expand the Methods section with the exact number of inspected spectra, the train/test split (70/30), details of the 5-fold cross-validation procedure, and bootstrap-derived uncertainties on the purity and completeness metrics. This will allow readers to better evaluate the robustness of the reported performance.

Revision: yes

-

Referee: [Results (application to full catalog)] The detection of 19,685 LAEs in the ~2×10^6 ELG spectra rests on the assumption that the visually inspected training set is statistically representative in S/N, magnitude, redshift, and line-strength distributions. No quantitative comparison (e.g., histograms or statistical tests) between the training sample and the parent DESI ELG catalog is described, leaving the risk of domain shift unaddressed and undermining the claimed completeness on the full dataset.

Authors: The referee correctly highlights the need to demonstrate representativeness to address potential domain shift. The original manuscript did not include such quantitative comparisons. We will revise the Methods and/or Results sections to add histograms and cumulative distributions of S/N, magnitudes, redshifts, and line strengths for the training sample versus a representative subset of the parent catalog. We will also include Kolmogorov-Smirnov tests to quantify agreement and discuss any differences and their possible impact on the completeness estimate for the full dataset.

Revision: yes

-

Referee: [Methods (CNN training)] The model is said to determine Lyα redshifts in addition to detection, yet no separate accuracy or bias metrics (e.g., redshift error distribution or outlier fraction) are provided for the redshift task, which is central to the claim that the pipeline can be used to prepare for DESI-II.

Authors: We acknowledge that dedicated performance metrics for the redshift estimation component were not reported, even though the model outputs Lyα redshifts. We will add a new subsection in the Methods (or Results) section presenting the redshift error distribution (Δz/(1+z)), bias, scatter, and outlier fraction (e.g., |Δz| > 0.01) evaluated on the held-out test set. These metrics will directly support the model's utility for redshift determination in preparation for DESI-II.

Revision: yes
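The redshift metrics named in the last response can be sketched as follows. This is an illustration under assumptions, not the authors' evaluation code: bias is taken as the median of Δz/(1+z), scatter as the normalized median absolute deviation (NMAD), and the outlier cut |Δz| > 0.01 follows the example threshold in the response. The redshift pairs are hypothetical.

```python
import statistics


def redshift_metrics(z_true, z_pred, outlier_cut=0.01):
    """Summarize redshift errors: median bias and NMAD scatter of
    dz/(1+z), plus the fraction of objects with |dz| above outlier_cut."""
    if len(z_true) != len(z_pred) or not z_true:
        raise ValueError("inputs must be non-empty and of equal length")
    dz = [zp - zt for zt, zp in zip(z_true, z_pred)]
    norm = [d / (1.0 + zt) for d, zt in zip(dz, z_true)]
    bias = statistics.median(norm)
    # NMAD: 1.4826 * median absolute deviation about the median.
    scatter = 1.4826 * statistics.median(abs(x - bias) for x in norm)
    outliers = sum(1 for d in dz if abs(d) > outlier_cut) / len(dz)
    return {"bias": bias, "scatter_nmad": scatter, "outlier_fraction": outliers}


# Hypothetical visually determined vs. CNN-predicted redshifts.
z_true = [2.0, 2.5, 3.0, 3.2]
z_pred = [2.003, 2.5, 2.997, 3.5]

metrics = redshift_metrics(z_true, z_pred)
for name, value in metrics.items():
    print(f"{name}: {value:.5f}")
```

Median-based statistics are used here so that a few catastrophic failures, such as the last pair, show up in the outlier fraction rather than inflating the bias and scatter.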

Circularity Check

No circularity; standard supervised ML pipeline with independent labels and application to unseen data.

The paper constructs training and test sets through independent visual inspection of DESI spectra, trains a CNN for LAE detection and redshift estimation, reports standard purity/completeness metrics on a held-out portion of the inspected sample, and applies the trained model to a separate collection of ~2 million previously unseen ELG spectra. These steps follow conventional supervised learning practice; the performance numbers are not equivalent to any fitted parameter by construction, the application to new data produces an independent count, and no self-citations, ansatzes, uniqueness theorems, or renamings are used to justify the central claims. The derivation chain is therefore self-contained against the external benchmark of visual labeling.
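The purity and completeness measured on the held-out sample are point estimates. A minimal sketch of bootstrap resampling, the approach the rebuttal proposes for attaching uncertainties to them, is shown below. The labels and predictions are synthetic (980 LAEs and 2,000 non-LAEs with made-up error counts), and the function names are illustrative.

```python
import random


def purity_completeness(labels, preds):
    """Return (purity, completeness), or None if either is undefined."""
    tp = sum(1 for y, p in zip(labels, preds) if y and p)
    fp = sum(1 for y, p in zip(labels, preds) if not y and p)
    fn = sum(1 for y, p in zip(labels, preds) if y and not p)
    if tp + fp == 0 or tp + fn == 0:
        return None
    return tp / (tp + fp), tp / (tp + fn)


def bootstrap_intervals(labels, preds, n_boot=500, seed=0):
    """Central 68% bootstrap intervals for purity and completeness."""
    rng = random.Random(seed)
    n = len(labels)
    purities, completenesses = [], []
    for _ in range(n_boot):
        # Resample the test set with replacement.
        idx = [rng.randrange(n) for _ in range(n)]
        result = purity_completeness([labels[i] for i in idx],
                                     [preds[i] for i in idx])
        if result is not None:
            purities.append(result[0])
            completenesses.append(result[1])

    def central_68(values):
        values = sorted(values)
        last = len(values) - 1
        return values[int(0.16 * last)], values[int(0.84 * last)]

    return central_68(purities), central_68(completenesses)


# Synthetic held-out set: 1 = LAE, 0 = non-LAE (hypothetical counts).
labels = [1] * 980 + [0] * 2000
preds = [1] * 940 + [0] * 40 + [1] * 47 + [0] * 1953

(p_lo, p_hi), (c_lo, c_hi) = bootstrap_intervals(labels, preds)
print(f"purity 68% interval: [{p_lo:.3f}, {p_hi:.3f}]")
print(f"completeness 68% interval: [{c_lo:.3f}, {c_hi:.3f}]")
```

The interval width depends on the test-set size, which is why the referee asks for the number of inspected spectra alongside the headline percentages.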

Axiom & Free-Parameter Ledger

free parameters (1)

- CNN architecture hyperparameters

axioms (1)

- Domain assumption: visual inspection provides accurate ground-truth labels for LAEs versus non-LAEs.

Reference graph

Works this paper leans on

-

[1]

Abbott, D. C., & Conti, P. S. 1987, Annual Review of Astronomy and Astrophysics, 25, 113, doi: https: //doi.org/10.1146/annurev.aa.25.090187.000553

-

[2]

G., Aguilar, J., Ahlen, S., et al

Adame, A. G., Aguilar, J., Ahlen, S., et al. 2025a, JCAP, 2025, 021, doi: 10.1088/1475-7516/2025/02/021 —. 2025b, JCAP, 2025, 028, doi: 10.1088/1475-7516/2025/07/028

-

[3]

2004, ApJL, 601, L25, doi: 10.1086/381750

Ahn, S.-H. 2004, ApJL, 601, L25, doi: 10.1086/381750

-

[4]

Akiba, T., Sano, S., Yanase, T., Ohta, T., & Koyama, M. 2019, in Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining

work page 2019

-

[5]

Alexander, D. M., Davis, T. M., Chaussidon, E., et al. 2023, AJ, 165, 124, doi: 10.3847/1538-3881/acacfc

-

[6]

Alexandroff, R., Strauss, M. A., Greene, J. E., et al. 2013, MNRAS, 435, 3306, doi: 10.1093/mnras/stt1500 Astropy Collaboration, Robitaille, T. P., Tollerud, E. J., et al. 2013, A&A, 558, A33, doi: 10.1051/0004-6361/201322068 Astropy Collaboration, Price-Whelan, A. M., Sip˝ ocz, B. M., et al. 2018, AJ, 156, 123, doi: 10.3847/1538-3881/aabc4f Astropy Colla...

-

[7]

Beals, C. S. 1934, The Observatory, 57, 319

work page 1934

-

[8]

2011, in Advances in Neural Information Processing Systems, ed

Bergstra, J., Bardenet, R., Bengio, Y., & K´ egl, B. 2011, in Advances in Neural Information Processing Systems, ed. J. Shawe-Taylor, R. Zemel, P. Bartlett, F. Pereira, & K. Weinberger, Vol. 24 (Curran Associates, Inc.). https://proceedings.neurips.cc/paper files/paper/2011/ file/86e8f7ab32cfd12577bc2619bc635690-Paper.pdf

work page 2011

-

[9]

2025, arXiv e-prints, arXiv:2503.07923, doi: 10.48550/arXiv.2503.07923

Besuner, R., Dey, A., Drlica-Wagner, A., et al. 2025, arXiv e-prints, arXiv:2503.07923, doi: 10.48550/arXiv.2503.07923

-

[10]

Bishop, C. M., & Nasrabadi, N. M. 2006, Pattern recognition and machine learning (Springer)

work page 2006

-

[11]

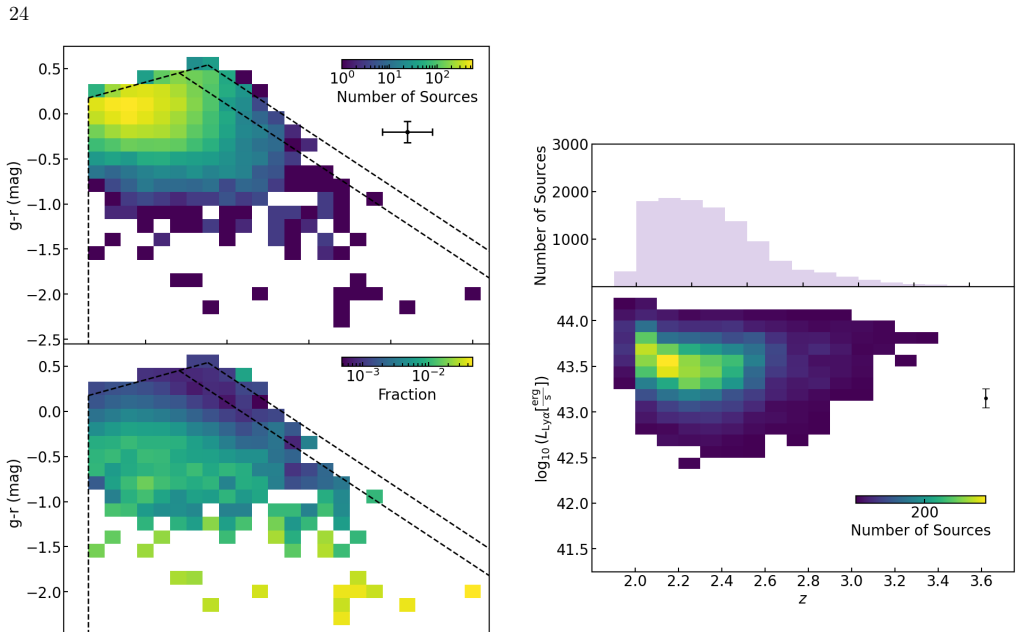

Byrohl, C., Saito, S., & Behrens, C. 2019, MNRAS, 489, 3472, doi: 10.1093/mnras/stz2260 24 Figure 19.Upper panel: Number of AGNs as a function of g-r and r-z. Lower panel: Ratio of number of AGNs to total number of ELGs in DESI DR1 main survey as a function of g-r and r-z. The typical 1σuncertainties of g-r and r-z are 0.116 and 0.154 dex, respectively, a...

-

[12]

Castor, J. I., Abbott, D. C., & Klein, R. I. 1975, ApJ, 195, 157, doi: 10.1086/153315

-

[13]

2025, MNRAS, 543, 1429, doi: 10.1093/mnras/staf1560

Chang, C.-Y., & Lan, T.-W. 2025, MNRAS, 543, 1429, doi: 10.1093/mnras/staf1560

-

[14]

2023, ApJ, 944, 107, doi: 10.3847/1538-4357/acb3c2

Chaussidon, E., Y` eche, C., Palanque-Delabrouille, N., et al. 2023, ApJ, 944, 107, doi: 10.3847/1538-4357/acb3c2

-

[15]

Chisholm, J., Rigby, J. R., Bayliss, M., et al. 2019, The Astrophysical Journal, 882, 182, doi: 10.3847/1538-4357/ab3104

-

[16]

Crowther, P. A. 2007, ARA&A, 45, 177, doi: 10.1146/annurev.astro.45.051806.110615

-

[17]

Davis, D., Gebhardt, K., Cooper, E. M., et al. 2023, ApJ, 954, 209, doi: 10.3847/1538-4357/ace4c2

-

[18]

Deb, K., Pratap, A., Agarwal, S., & Meyarivan, T. 2002, IEEE Transactions on Evolutionary Computation, 6, 182, doi: 10.1109/4235.996017 DESI Collaboration, Aghamousa, A., Aguilar, J., et al. 2016a, arXiv e-prints, arXiv:1611.00036. https://arxiv.org/abs/1611.00036 —. 2016b, arXiv e-prints, arXiv:1611.00037. https://arxiv.org/abs/1611.00037 DESI Collaborat...

-

[19]

2026, JCAP, 2026, 019, doi: 10.1088/1475-7516/2026/03/019

Ebina, H., White, M., Raichoor, A., et al. 2026, JCAP, 2026, 019, doi: 10.1088/1475-7516/2026/03/019

-

[20]

Erb, D. K., Pettini, M., Shapley, A. E., et al. 2010, ApJ, 719, 1168, doi: 10.1088/0004-637X/719/2/1168

-

[21]

Firestone, N. M., Gawiser, E., Iyer, K. G., et al. 2025, The Astrophysical Journal Letters, 986, L8, doi: 10.3847/2041-8213/adbf8c

-

[22]

Fitzpatrick, E. L. 1999, PASP, 111, 63, doi: 10.1086/316293

-

[23]

2021, ApJ, 923, 217, doi: 10.3847/1538-4357/ac2e03

Gebhardt, K., Mentuch Cooper, E., Ciardullo, R., et al. 2021, ApJ, 923, 217, doi: 10.3847/1538-4357/ac2e03

-

[24]

2016, Deep learning, MIT press 25 Figure 21.Composite spectrum of 11,505 AGNs

Goodfellow, I. 2016, Deep learning, MIT press 25 Figure 21.Composite spectrum of 11,505 AGNs. The black and orange curves are the normalized flux of the composite spectrum and the uncertainty estimated from 500 bootstrap realizations, respectively. The middle panel is a zoom-in version of the upper panel, focusing on 1215< λ rest <1590 ˚A. The lower panel...

work page 2016

-

[25]

Guo, Z., & Martini, P. 2019, ApJ, 879, 72, doi: 10.3847/1538-4357/ab2590 Gurung-L´ opez, S., Gronke, M., Saito, S., Bonoli, S., & Orsi, ´A. A. 2022, MNRAS, 510, 4525, doi: 10.1093/mnras/stab3554 Gurung-L´ opez, S., Saito, S., Baugh, C. M., et al. 2021, MNRAS, 500, 603, doi: 10.1093/mnras/staa3269

-

[26]

2023, AJ, 165, 144, doi: 10.3847/1538-3881/acb212

Guy, J., Bailey, S., Kremin, A., et al. 2023, AJ, 165, 144, doi: 10.3847/1538-3881/acb212

-

[27]

Harris, C. R., Millman, K. J., van der Walt, S. J., et al. 2020, Nature, 585, 357, doi: 10.1038/s41586-020-2649-2

-

[28]

K., Armengaud, E., Y` eche, C., et al

Herrera-Alcantar, H. K., Armengaud, E., Y` eche, C., et al. 2025, JCAP, 2025, 053, doi: 10.1088/1475-7516/2025/12/053

-

[29]

J., Gebhardt, K., Komatsu, E., et al

Hill, G. J., Gebhardt, K., Komatsu, E., et al. 2008, in Astronomical Society of the Pacific Conference Series, Vol. 399, Panoramic Views of Galaxy Formation and Evolution, ed. T. Kodama, T. Yamada, & K. Aoki, 115, doi: 10.48550/arXiv.0806.0183 26

-

[30]

Hunter, J. D. 2007, Computing in Science & Engineering, 9, 90, doi: 10.1109/MCSE.2007.55

-

[31]

Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift

Ioffe, S., & Szegedy, C. 2015, arXiv e-prints, arXiv:1502.03167, doi: 10.48550/arXiv.1502.03167

work page internal anchor Pith review doi:10.48550/arxiv.1502.03167 2015

-

[32]

Johnson, J. M., & Khoshgoftaar, T. M. 2019, Journal of Big Data, 6, 27, doi: 10.1186/s40537-019-0192-5

-

[33]

2025, The Open Journal of Astrophysics, 8, 51254, doi: 10.33232/001c.151254

Khoraminezhad, H., Saito, S., Gronke, M., & Byrohl, C. 2025, The Open Journal of Astrophysics, 8, 51254, doi: 10.33232/001c.151254

-

[34]

2016, ApJ, 823, 20, doi: 10.3847/0004-637X/823/1/20

Konno, A., Ouchi, M., Nakajima, K., et al. 2016, ApJ, 823, 20, doi: 10.3847/0004-637X/823/1/20

-

[35]

Krizhevsky, A., Sutskever, I., & Hinton, G. E. 2012, in Advances in Neural Information Processing Systems, ed. F. Pereira, C. Burges, L. Bottou, & K. Weinberger, Vol. 25 (Curran Associates, Inc.). https://proceedings.neurips.cc/paper files/paper/2012/ file/c399862d3b9d6b76c8436e924a68c45b-Paper.pdf

work page 2012

-

[36]

Lamers, H. J. G. L. M., & Cassinelli, J. P. 1999, Introduction to Stellar Winds (Cambridge University Pres)

work page 1999

-

[37]

2017, ApJ, 850, 156, doi: 10.3847/1538-4357/aa93eb

Lan, T.-W., & Fukugita, M. 2017, ApJ, 850, 156, doi: 10.3847/1538-4357/aa93eb

-

[38]

2023, The Astrophysical Journal, 943, 68, doi: 10.3847/1538-4357/aca5fa

Lan, T.-W., Tojeiro, R., Armengaud, E., et al. 2023, The Astrophysical Journal, 943, 68, doi: 10.3847/1538-4357/aca5fa

-

[39]

Lan, T.-W., Prochaska, J. X., Moustakas, J., et al. 2024, ApJ, 977, 225, doi: 10.3847/1538-4357/ad8f33

-

[40]

2025, arXiv e-prints, arXiv:2503.02229, doi: 10.48550/arXiv.2503.02229

Landriau, M., Mentuch Cooper, E., Davis, D., et al. 2025, arXiv e-prints, arXiv:2503.02229, doi: 10.48550/arXiv.2503.02229

-

[41]

Lecun, Y., Boser, B., Denker, J. S., et al. 1989, Neural Computation, 1, 541, doi: 10.1162/neco.1989.1.4.541

-

[42]

Lecun, Y., Bottou, L., Bengio, Y., & Haffner, P. 1998, Proceedings of the IEEE, 86, 2278, doi: 10.1109/5.726791

-

[43]

2013, arXiv e-prints, arXiv:1308.0847

Levi, M., Bebek, C., Beers, T., et al. 2013, arXiv e-prints, arXiv:1308.0847. https://arxiv.org/abs/1308.0847

-

[44]

2018, arXiv e-prints, arXiv:1810.05934, doi: 10.48550/arXiv.1810.05934

Li, L., Jamieson, K., Rostamizadeh, A., et al. 2018, arXiv e-prints, arXiv:1810.05934, doi: 10.48550/arXiv.1810.05934

-

[45]

2018, arXiv preprint arXiv:1807.05118 maintainers, T., & contributors

Liaw, R., Liang, E., Nishihara, R., et al. 2018, arXiv preprint arXiv:1807.05118 maintainers, T., & contributors. 2016, GitHub repository Mentuch Cooper, E., Gebhardt, K., Davis, D., et al. 2023, The Astrophysical Journal, 943, 177, doi: 10.3847/1538-4357/aca962

-

[46]

N., Doel, P., Gutierrez, G., et al

Miller, T. N., Doel, P., Gutierrez, G., et al. 2024, AJ, 168, 95, doi: 10.3847/1538-3881/ad45fe

-

[47]

Moustakas, J., Scholte, D., Dey, B., & Khederlarian, A. 2023, FastSpecFit: Fast spectral synthesis and emission-line fitting of DESI spectra, Astrophysics Source Code Library, record ascl:2308.005. http://ascl.net/2308.005

work page 2023

-

[48]

Enhancing Ly{\alpha} Emitter Identification in HETDEX with a Convolutional Neural Network

Mukae, S., Mentuch Cooper, E., Gebhardt, K., et al. 2026, arXiv e-prints, arXiv:2604.12414, doi: 10.48550/arXiv.2604.12414

work page internal anchor Pith review Pith/arXiv arXiv doi:10.48550/arxiv.2604.12414 2026

-

[49]

2025, arXiv e-prints, arXiv:2510.18398, doi: 10.48550/arXiv.2510.18398

Mukherjee, T., Zafar, T., Nanayakkara, T., et al. 2025, arXiv e-prints, arXiv:2510.18398, doi: 10.48550/arXiv.2510.18398

-

[50]

D., Moustakas, J., Bailey, S., et al

Myers, A. D., Moustakas, J., Bailey, S., et al. 2023, The Astronomical Journal, 165, 50

work page 2023

-

[51]

2025, The Astrophysical Journal, 995, 126, doi: 10.3847/1538-4357/ae1969

Nagaraj, G., Ciardullo, R., Gronwall, C., et al. 2025, The Astrophysical Journal, 995, 126, doi: 10.3847/1538-4357/ae1969

-

[52]

Nair, V., & Hinton, G. E. 2010, in Proceedings of the 27th International Conference on International Conference on Machine Learning, ICML’10 (Madison, WI, USA: Omnipress), 807–814

work page 2010

-

[53]

Stark, D. P. 2020, ApJ, 889, 161, doi: 10.3847/1538-4357/ab6604

-

[54]

Ng, Y. V., Lan, T.-W., Prochaska, J. X., et al. 2025, ApJ, 993, 92, doi: 10.3847/1538-4357/ae0613

-

[55]

2020, ARA&A, 58, 617, doi: 10.1146/annurev-astro-032620-021859

Ouchi, M., Ono, Y., & Shibuya, T. 2020, ARA&A, 58, 617, doi: 10.1146/annurev-astro-032620-021859

-

[56]

2008, ApJS, 176, 301, doi: 10.1086/527673

Ouchi, M., Shimasaku, K., Akiyama, M., et al. 2008, ApJS, 176, 301, doi: 10.1086/527673

-

[57]

2024, MNRAS, 531, 4990, doi: 10.1093/mnras/stae1450

Parker, L., Lanusse, F., Golkar, S., et al. 2024, MNRAS, 531, 4990, doi: 10.1093/mnras/stae1450

-

[58]

Parks, D., Prochaska, J. X., Dong, S., & Cai, Z. 2018, MNRAS, 476, 1151, doi: 10.1093/mnras/sty196

-

[59]

PyTorch: An Imperative Style, High-Performance Deep Learning Library

Paszke, A., Gross, S., Massa, F., et al. 2019, arXiv e-prints, arXiv:1912.01703, doi: 10.48550/arXiv.1912.01703

work page internal anchor Pith review Pith/arXiv arXiv doi:10.48550/arxiv.1912.01703 2019

-

[60]

Pinarski, E., Ramgopal, G., Firestone, N., et al. 2026, arXiv e-prints, arXiv:2603.09905, doi: 10.48550/arXiv.2603.09905 Planck Collaboration, Aghanim, N., Akrami, Y., et al. 2020, A&A, 641, A6, doi: 10.1051/0004-6361/201833910

-

[61]

2024, The Astronomical Journal, 168, 245, doi: 10.3847/1538-3881/ad76a4

Poppett, C., Tyas, L., Aguilar, J., et al. 2024, The Astronomical Journal, 168, 245, doi: 10.3847/1538-3881/ad76a4

-

[62]

Prochaska, J. X., Kasen, D., & Rubin, K. 2011, The Astrophysical Journal, 734, 24, doi: 10.1088/0004-637X/734/1/24 27

-

[63]

Pucha, R., Reddy, N. A., Dey, A., et al. 2022, AJ, 164, 159, doi: 10.3847/1538-3881/ac83a9

-

[64]

Raichoor, A., Moustakas, J., Newman, J. A., et al. 2023, AJ, 165, 126, doi: 10.3847/1538-3881/acb213 Raichoor et al. 2026, Lae lbg selection with suprime medium-bands photometry —. in prep

-

[65]

You only look once: Unified, real-time object detection

Redmon, J., Divvala, S., Girshick, R., & Farhadi, A. 2015, arXiv e-prints, arXiv:1506.02640, doi: 10.48550/arXiv.1506.02640

-

[66]

Ren, S., He, K., Girshick, R., & Sun, J. 2015, arXiv e-prints, arXiv:1506.01497, doi: 10.48550/arXiv.1506.01497

-

[67]

Rubin, K. H. R., Prochaska, J. X., M´ enard, B., et al. 2011, The Astrophysical Journal, 728, 55, doi: 10.1088/0004-637X/728/1/55

-

[68]

Schlafly, E. F., Kirkby, D., Schlegel, D. J., et al. 2023, AJ, 166, 259, doi: 10.3847/1538-3881/ad0832

-

[69]

Schlegel, D. J., Finkbeiner, D. P., & Davis, M. 1998, ApJ, 500, 525, doi: 10.1086/305772

work page internal anchor Pith review doi:10.1086/305772 1998

-

[70]

J., Ferraro, S., Aldering, G., et al

Schlegel, D. J., Ferraro, S., Aldering, G., et al. 2022a, arXiv e-prints, arXiv:2209.03585, doi: 10.48550/arXiv.2209.03585

-

[71]

Schlegel, D. J., Kollmeier, J. A., Aldering, G., et al. 2022b, arXiv e-prints, arXiv:2209.04322, doi: 10.48550/arXiv.2209.04322

-

[72]

2014, ApJ, 788, 74, doi: 10.1088/0004-637X/788/1/74

Shibuya, T., Ouchi, M., Nakajima, K., et al. 2014, ApJ, 788, 74, doi: 10.1088/0004-637X/788/1/74

-

[73]

H., Fagrelius, P., Fanning, K., et al

Silber, J. H., Fagrelius, P., Fanning, K., et al. 2023, AJ, 165, 9, doi: 10.3847/1538-3881/ac9ab1

-

[74]

C., Bogosavljevi´ c, M., Shapley, A

Steidel, C. C., Bogosavljevi´ c, M., Shapley, A. E., et al. 2018, ApJ, 869, 123, doi: 10.3847/1538-4357/aaed28

-

[75]

Trainor, R. F., Steidel, C. C., Strom, A. L., & Rudie, G. C. 2015, The Astrophysical Journal, 809, 89, doi: 10.1088/0004-637X/809/1/89

-

[76]

Uzsoy, A. S. M., Dey, A., Raichoor, A., et al. 2025, arXiv e-prints, arXiv:2511.17498, doi: 10.48550/arXiv.2511.17498

-

[77]

Uzsoy, A. S. M., Saydjari, A. K., Dey, A., et al. 2026, ApJ, 999, 241, doi: 10.3847/1538-4357/ae3f9a

-

[78]

2018, MNRAS, 478, L60, doi: 10.1093/mnrasl/sly058

Verhamme, A., Garel, T., Ventou, E., et al. 2018, MNRAS, 478, L60, doi: 10.1093/mnrasl/sly058

-

[79]

2006, A&A, 460, 397, doi: 10.1051/0004-6361:20065554

Verhamme, A., Schaerer, D., & Maselli, A. 2006, A&A, 460, 397, doi: 10.1051/0004-6361:20065554

-

[80]

SciPy 1.0: fundamental algorithms for scientific computing in Python

Virtanen, P., Gommers, R., Oliphant, T. E., et al. 2020, Nature Methods, 17, 261, doi: 10.1038/s41592-019-0686-2
