Recognition: 2 theorem links

· Lean TheoremDictionary-Aligned Concept Control for Safeguarding Multimodal LLMs

Pith reviewed 2026-05-10 18:00 UTC · model grok-4.3

The pith

A curated dictionary of 15,000 multimodal concepts initializes a sparse autoencoder to steer frozen MLLM activations toward safer outputs at inference time.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

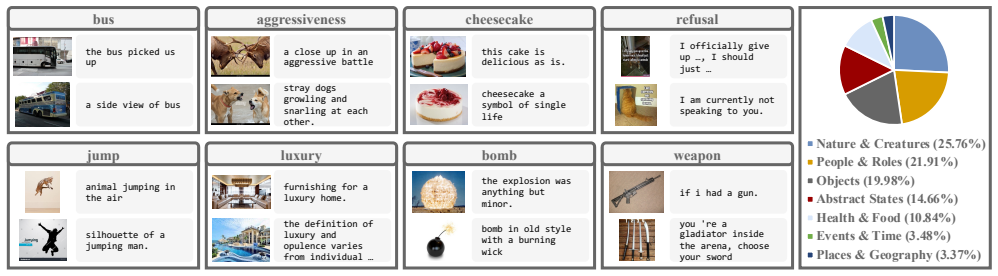

DACO curates a dictionary of 15,000 multimodal concepts from more than 400,000 caption-image stimuli, employs the dictionary to initialize a sparse autoencoder, and applies sparse coding to intervene selectively on safety-related activations inside frozen MLLMs.

What carries the argument

The curated dictionary of 15,000 multimodal concepts that initializes SAE atoms and supplies automatic semantic labels for selective activation steering.

If this is right

- Safety benchmarks such as MM-SafetyBench and JailBreakV register higher resistance to malicious queries across tested models.

- General-purpose performance on unrelated multimodal tasks stays comparable to the unmodified baseline.

- The same dictionary and steering procedure transfers to multiple MLLMs including QwenVL, LLaVA, and InternVL without per-model retraining.

- Control can be limited to narrow safety concepts rather than altering broad regions of the activation space.

Where Pith is reading between the lines

- The same dictionary-initialization technique could be repurposed to steer other behavioral axes such as factual accuracy or stylistic consistency.

- Scaling the curation process to new domains would allow safety dictionaries tailored to specific application areas.

- Repeated application of the same steering vectors across many queries might reveal cumulative drift or emergent failure modes not visible in single-turn tests.

Load-bearing premise

Intervening on SAE atoms initialized from the concept dictionary produces selective and stable shifts in safety-related concepts without unintended side effects on unrelated model capabilities.

What would settle it

Applying the steering vectors on a held-out safety benchmark yields no reduction in unsafe outputs or produces measurable drops in accuracy on standard multimodal capability tests.

Figures

read the original abstract

Multimodal Large Language Models (MLLMs) have been shown to be vulnerable to malicious queries that can elicit unsafe responses. Recent work uses prompt engineering, response classification, or finetuning to improve MLLM safety. Nevertheless, such approaches are often ineffective against evolving malicious patterns, may require rerunning the query, or demand heavy computational resources. Steering the activations of a frozen model at inference time has recently emerged as a flexible and effective solution. However, existing steering methods for MLLMs typically handle only a narrow set of safety-related concepts or struggle to adjust specific concepts without affecting others. To address these challenges, we introduce Dictionary-Aligned Concept Control (DACO), a framework that utilizes a curated concept dictionary and a Sparse Autoencoder (SAE) to provide granular control over MLLM activations. First, we curate a dictionary of 15,000 multimodal concepts by retrieving over 400,000 caption-image stimuli and summarizing their activations into concept directions. We name the dataset DACO-400K. Second, we show that the curated dictionary can be used to intervene activations via sparse coding. Third, we propose a new steering approach that uses our dictionary to initialize the training of an SAE and automatically annotate the semantics of the SAE atoms for safeguarding MLLMs. Experiments on multiple MLLMs (e.g., QwenVL, LLaVA, InternVL) across safety benchmarks (e.g., MM-SafetyBench, JailBreakV) show that DACO significantly improves MLLM safety while maintaining general-purpose capabilities.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces Dictionary-Aligned Concept Control (DACO), which curates a 15,000-concept multimodal dictionary (DACO-400K) from over 400,000 caption-image pairs by summarizing activations into concept directions, then uses this dictionary both for sparse-coding intervention and to initialize an SAE whose atoms are automatically annotated. The resulting steering is applied at inference time to frozen MLLMs (QwenVL, LLaVA, InternVL) and is claimed to raise safety scores on MM-SafetyBench and JailBreakV while preserving general-purpose capabilities.

Significance. If the dictionary-derived SAE atoms are shown to be sufficiently monosemantic and stable for safety concepts, the method supplies a lightweight, training-free steering technique that is more granular than existing prompt-based or classification-based safeguards and could be applied across a range of MLLMs without retraining.

major comments (2)

- [§3] §3 (Dictionary curation and SAE initialization): the procedure for summarizing 400k multimodal activations into 15k concept directions is not described in sufficient detail, nor are any orthogonality checks, cosine-similarity matrices, or post-training atom-purity metrics (e.g., activation sparsity or semantic coherence scores) reported. Because the central claim of selective safety steering without capability side-effects rests on these atoms remaining disentangled, the absence of such diagnostics is load-bearing.

- [§4] §4 (Experiments): the reported gains on MM-SafetyBench and JailBreakV are presented without statistical significance tests, confidence intervals, or correction for multiple comparisons across models and benchmarks. In addition, the ablation that isolates the benefit of dictionary-initialized SAE atoms versus a randomly initialized SAE is not quantified, leaving open the possibility that the observed safety improvement is not attributable to the proposed alignment step.

minor comments (2)

- [Abstract] The abstract states that DACO 'significantly improves' safety; effect sizes and exact baseline numbers should be stated explicitly.

- [§3] Notation for the sparse-coding intervention (Eq. for the reconstruction loss or the scaling factor applied to selected atoms) should be introduced earlier and used consistently in the experimental tables.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive feedback. We address each major comment below and outline the revisions we will make to strengthen the manuscript.

read point-by-point responses

-

Referee: [§3] §3 (Dictionary curation and SAE initialization): the procedure for summarizing 400k multimodal activations into 15k concept directions is not described in sufficient detail, nor are any orthogonality checks, cosine-similarity matrices, or post-training atom-purity metrics (e.g., activation sparsity or semantic coherence scores) reported. Because the central claim of selective safety steering without capability side-effects rests on these atoms remaining disentangled, the absence of such diagnostics is load-bearing.

Authors: We agree that Section 3 would benefit from greater detail on the curation process. In the revised manuscript we will expand the description of how activations from the 400k image-caption pairs are summarized into the 15k concept directions, including the precise aggregation and selection steps. We will also add an appendix containing cosine-similarity matrices among the concept directions to demonstrate orthogonality, together with post-training diagnostics for the SAE atoms such as activation sparsity levels and semantic coherence scores obtained via automated annotation. These additions will directly support the claim that the atoms remain sufficiently disentangled for selective steering. revision: yes

-

Referee: [§4] §4 (Experiments): the reported gains on MM-SafetyBench and JailBreakV are presented without statistical significance tests, confidence intervals, or correction for multiple comparisons across models and benchmarks. In addition, the ablation that isolates the benefit of dictionary-initialized SAE atoms versus a randomly initialized SAE is not quantified, leaving open the possibility that the observed safety improvement is not attributable to the proposed alignment step.

Authors: We accept that the experimental section requires additional statistical rigor. In the revision we will report paired statistical significance tests (with p-values) and 95% confidence intervals for all safety-score improvements on MM-SafetyBench and JailBreakV, applying appropriate multiple-comparison corrections across the three models and two benchmarks. For the ablation, we will add a quantified comparison table showing safety and capability metrics for both the dictionary-initialized SAE and a randomly initialized SAE under identical training and inference conditions, thereby isolating the contribution of the dictionary-alignment step. revision: yes

Circularity Check

No significant circularity; empirical method validated on external benchmarks

full rationale

The paper proposes an empirical framework (DACO) that curates a concept dictionary from external stimuli (DACO-400K), initializes an SAE from it, and evaluates safety improvements on independent benchmarks (MM-SafetyBench, JailBreakV) across multiple MLLMs. No derivation chain reduces a claimed result to a fitted parameter or self-citation by construction; the central claims rest on experimental outcomes rather than tautological definitions or renamed inputs. The approach is self-contained against external data and does not invoke load-bearing self-citations or uniqueness theorems.

Axiom & Free-Parameter Ledger

invented entities (1)

-

DACO-400K dataset and 15,000-concept dictionary

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Group action induced distances for averaging and clustering linear dynamical systems with applications to the analysis of dynamic scenes

Bijan Afsari, Rizwan Chaudhry, Avinash Ravichandran, and René Vidal. Group action induced distances for averaging and clustering linear dynamical systems with applications to the analysis of dynamic scenes. InIEEE Conference on Computer Vision and Pattern Recognition, pages 2208–2215. IEEE, 2012

2012

-

[2]

Flamingo: a visual language model for few-shot learning.Advances in neural information processing systems, 35:23716–23736, 2022

Jean-Baptiste Alayrac, Jeff Donahue, Pauline Luc, Antoine Miech, Iain Barr, Yana Hasson, Karel Lenc, Arthur Mensch, Katherine Millican, Malcolm Reynolds, et al. Flamingo: a visual language model for few-shot learning.Advances in neural information processing systems, 35:23716–23736, 2022

2022

-

[3]

Circuits updates — april 2024

Anthropic Interpretability Team. Circuits updates — april 2024

2024

-

[4]

Re- fusal in language models is mediated by a single direction

Andy Arditi, Oscar Obeso, Aaquib Syed, Daniel Paleka, Nina Panickssery, Wes Gurnee, and Neel Nanda. Re- fusal in language models is mediated by a single direction. Advances in Neural Information Processing Systems, 37: 136037–136083, 2024

2024

-

[5]

Shuai Bai, Keqin Chen, Xuejing Liu, Jialin Wang, Wenbin Ge, Sibo Song, Kai Dang, Peng Wang, Shijie Wang, Jun Tang, et al. Qwen2.5-vl technical report.arXiv preprint arXiv:2502.13923, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[6]

Recent advances in adversarial training for adversarial robustness.arXiv preprint arXiv:2102.01356,

Tao Bai, Jinqi Luo, Jun Zhao, Bihan Wen, and Qian Wang. Recent advances in adversarial training for adversarial ro- bustness.arXiv preprint arXiv:2102.01356, 2021

-

[7]

Image hijacks: Adversarial images can control generative models at runtime

Luke Bailey, Euan Ong, Stuart Russell, and Scott Emmons. Image hijacks: Adversarial images can control generative models at runtime. InInternational Conference on Machine Learning, pages 2443–2455. PMLR, 2024

2024

-

[8]

Toward universal steering and monitoring of ai models.Science, 391(6787):787–792, 2026

Daniel Beaglehole, Adityanarayanan Radhakrishnan, Enric Boix-Adsera, and Mikhail Belkin. Toward universal steering and monitoring of ai models.Science, 391(6787):787–792, 2026

2026

-

[9]

On the Opportunities and Risks of Foundation Models

Rishi Bommasani, Drew A. Hudson, Ehsan Adeli, Russ Altman, Simran Arora, Sydney von Arx, Michael S. Bern- stein, Jeannette Bohg, Antoine Bosselut, Emma Brun- skill, Erik Brynjolfsson, Shyamal Buch, Dallas Card, Ro- drigo Castellon, Niladri Chatterji, Annie Chen, Kathleen Creel, Jared Quincy Davis, Dora Demszky, Chris Donahue, Moussa Doumbouya, Esin Durmus...

work page internal anchor Pith review Pith/arXiv arXiv 2021

-

[10]

arXiv preprint arXiv:2412.06410 , year=

Bart Bussmann, Patrick Leask, and Neel Nanda. Batch- topk sparse autoencoders.arXiv preprint arXiv:2412.06410, 2024

-

[11]

Bart Bussmann, Noa Nabeshima, Adam Karvonen, and Neel Nanda. Learning multi-level features with matryoshka sparse autoencoders.arXiv preprint arXiv:2503.17547, 2025

-

[12]

The hidden language of diffusion models.arXiv preprint arXiv:2306.00966, 2023

Hila Chefer, Oran Lang, Mor Geva, V olodymyr Polosukhin, Assaf Shocher, Michal Irani, Inbar Mosseri, and Lior Wolf. The hidden language of diffusion models.arXiv preprint arXiv:2306.00966, 2023

-

[13]

Internvl: Scaling up vision foundation models and aligning for generic visual-linguistic tasks

Zhe Chen, Jiannan Wu, Wenhai Wang, Weijie Su, Guo Chen, Sen Xing, Muyan Zhong, Qinglong Zhang, Xizhou Zhu, Lewei Lu, et al. Internvl: Scaling up vision foundation models and aligning for generic visual-linguistic tasks. In Proceedings of the IEEE/CVF conference on computer vi- sion and pattern recognition, pages 24185–24198, 2024

2024

-

[14]

Llama Guard 3: Improved safety classifiers for LLM agents.arXiv preprint arXiv:2411.10414, 2024

Jianfeng Chi, Ujjwal Karn, Hongyuan Zhan, Eric Smith, Javier Rando, Yiming Zhang, Kate Plawiak, Zacharie Delpierre Coudert, Kartikeya Upasani, and Ma- hesh Pasupuleti. Llama guard 3 vision: Safeguarding human-ai image understanding conversations.arXiv preprint arXiv:2411.10414, 2024

-

[15]

InstructBLIP: Towards general-purpose vision-language models with instruction tuning

Wenliang Dai, Junnan Li, Dongxu Li, Anthony Tiong, Junqi Zhao, Weisheng Wang, Boyang Li, Pascale Fung, and Steven Hoi. InstructBLIP: Towards general-purpose vision-language models with instruction tuning. InNeurIPS, 2023

2023

-

[16]

Gelei Deng, Yi Liu, Yuekang Li, Kailong Wang, Ying Zhang, Zefeng Li, Haoyu Wang, Tianwei Zhang, and Yang Liu. Mas- terkey: Automated jailbreak across multiple large language model chatbots.arXiv preprint arXiv:2307.08715, 2023

-

[17]

Multilingual jailbreak challenges in large language models.arXiv preprint arXiv:2310.06474,

Yue Deng, Wenxuan Zhang, Sinno Jialin Pan, and Lidong Bing. Multilingual jailbreak challenges in large language models.arXiv preprint arXiv:2310.06474, 2023

-

[18]

Springer, 2010

Michael Elad.Sparse and redundant representations: from theory to applications in signal and image processing. Springer, 2010

2010

-

[19]

Scaling and evaluating sparse autoencoders

Leo Gao, Tom Dupre la Tour, Henk Tillman, Gabriel Goh, Rajan Troll, Alec Radford, Ilya Sutskever, Jan Leike, and Jeffrey Wu. Scaling and evaluating sparse autoencoders. In ICLR, 2025

2025

-

[20]

Figstep: Jailbreaking large vision-language models via typo- graphic visual prompts

Yichen Gong, Delong Ran, Jinyuan Liu, Conglei Wang, Tianshuo Cong, Anyu Wang, Sisi Duan, and Xiaoyun Wang. Figstep: Jailbreaking large vision-language models via typo- graphic visual prompts. InProceedings of the AAAI Confer- ence on Artificial Intelligence, pages 23951–23959, 2025

2025

-

[21]

Eyes closed, safety on: Protecting multimodal llms via image-to-text transformation

Yunhao Gou, Kai Chen, Zhili Liu, Lanqing Hong, Hang Xu, Zhenguo Li, Dit-Yan Yeung, James T Kwok, and Yu Zhang. Eyes closed, safety on: Protecting multimodal llms via image-to-text transformation. InEuropean Conference on Computer Vision, pages 388–404. Springer, 2024

2024

-

[22]

Mllmguard: A multi-dimensional safety evalua- tion suite for multimodal large language models.Advances in Neural Information Processing Systems, 37:7256–7295, 2024

Tianle Gu, Zeyang Zhou, Kexin Huang, Liang Dandan, Yixu Wang, Haiquan Zhao, Yuanqi Yao, Yujiu Yang, Yan Teng, Yu Qiao, et al. Mllmguard: A multi-dimensional safety evalua- tion suite for multimodal large language models.Advances in Neural Information Processing Systems, 37:7256–7295, 2024

2024

-

[23]

Delving deep into rectifiers: Surpassing human-level per- formance on imagenet classification

Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Delving deep into rectifiers: Surpassing human-level per- formance on imagenet classification. InProceedings of the IEEE international conference on computer vision, pages 1026–1034, 2015

2015

-

[24]

Lukas Helff, Felix Friedrich, Manuel Brack, Kristian Kerst- ing, and Patrick Schramowski. Llavaguard: An open vlm- based framework for safeguarding vision datasets and mod- els.arXiv preprint arXiv:2406.05113, 2024

-

[25]

LoRA: Low-rank adaptation of large language models

Edward J Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen- Zhu, Yuanzhi Li, Shean Wang, Lu Wang, and Weizhu Chen. LoRA: Low-rank adaptation of large language models. In ICLR, 2022

2022

-

[26]

Language is not all you need: Aligning perception with language mod- els.Advances in Neural Information Processing Systems, 36:72096–72109, 2023

Shaohan Huang, Li Dong, Wenhui Wang, Yaru Hao, Saksham Singhal, Shuming Ma, Tengchao Lv, Lei Cui, Owais Khan Mohammed, Barun Patra, et al. Language is not all you need: Aligning perception with language mod- els.Advances in Neural Information Processing Systems, 36:72096–72109, 2023

2023

-

[27]

Sparse autoencoders find highly interpretable features in language models

Robert Huben, Hoagy Cunningham, Logan Riggs Smith, Aidan Ewart, and Lee Sharkey. Sparse autoencoders find highly interpretable features in language models. InThe Twelfth International Conference on Learning Representa- tions, 2023

2023

-

[28]

Llama Guard: LLM-based Input-Output Safeguard for Human-AI Conversations

Hakan Inan, Kartikeya Upasani, Jianfeng Chi, Rashi Rungta, Krithika Iyer, Yuning Mao, Michael Tontchev, Qing Hu, Brian Fuller, Davide Testuggine, et al. Llama guard: Llm- based input-output safeguard for human-ai conversations. arXiv preprint arXiv:2312.06674, 2023

work page internal anchor Pith review arXiv 2023

-

[29]

Uncovering meanings of embeddings via partial orthogonality

Yibo Jiang, Bryon Aragam, and Victor Veitch. Uncovering Meanings of Embeddings via Partial Orthogonality.arXiv preprint arXiv:2310.17611, 2023

-

[30]

Yibo Jiang, Goutham Rajendran, Pradeep Ravikumar, Bryon Aragam, and Victor Veitch. On the Origins of Linear Rep- resentations in Large Language Models.arXiv preprint arXiv:2403.03867, 2024

-

[31]

Yilei Jiang, Yingshui Tan, and Xiangyu Yue. Rap- guard: Safeguarding multimodal large language models via rationale-aware defensive prompting.arXiv preprint arXiv:2412.18826, 2024

-

[32]

Steering clip’s vi- sion transformer with sparse autoencoders.arXiv preprint arXiv:2504.08729, 2025

Sonia Joseph, Praneet Suresh, Ethan Goldfarb, Lorenz Hufe, Yossi Gandelsman, Robert Graham, Danilo Bzdok, Woj- ciech Samek, and Blake Aaron Richards. Steering clip’s vision transformer with sparse autoencoders.arXiv preprint arXiv:2504.08729, 2025

-

[33]

Are sparse autoencoders useful? a case study in sparse probing

Subhash Kantamneni, Joshua Engels, Senthooran Raja- manoharan, Max Tegmark, and Neel Nanda. Are sparse autoencoders useful? a case study in sparse probing. In ICML, 2025

2025

-

[34]

Adam Karvonen, Can Rager, Johnny Lin, Curt Tigges, Joseph Bloom, David Chanin, Yeu-Tong Lau, Eoin Far- rell, Callum McDougall, Kola Ayonrinde, et al. Saebench: A comprehensive benchmark for sparse autoencoders in language model interpretability.arXiv preprint arXiv:2503.09532, 2025

-

[35]

Analyzing finetuning representation shift for multimodal llms steering

Pegah Khayatan, Mustafa Shukor, Jayneel Parekh, and Matthieu Cord. Analyzing finetuning representation shift for multimodal llms steering. InICCV, 2025

2025

-

[36]

Llava-docent: Instruction tuning with multimodal large lan- guage model to support art appreciation education.Comput- ers and Education: Artificial Intelligence, 7:100297, 2024

Unggi Lee, Minji Jeon, Yunseo Lee, Gyuri Byun, Yoorim Son, Jaeyoon Shin, Hongkyu Ko, and Hyeoncheol Kim. Llava-docent: Instruction tuning with multimodal large lan- guage model to support art appreciation education.Comput- ers and Education: Artificial Intelligence, 7:100297, 2024

2024

-

[37]

Logicity: Advancing neuro- symbolic ai with abstract urban simulation.Advances in Neural Information Processing Systems, 2024

Bowen Li, Zhaoyu Li, Qiwei Du, Jinqi Luo, Wenshan Wang, Yaqi Xie, Simon Stepputtis, Chen Wang, Katia Sycara, Pradeep Ravikumar, et al. Logicity: Advancing neuro- symbolic ai with abstract urban simulation.Advances in Neural Information Processing Systems, 2024

2024

-

[38]

Bilevel learning for bilevel planning.arXiv preprint arXiv:2502.08697, 2025

Bowen Li, Tom Silver, Sebastian Scherer, and Alexander Gray. Bilevel learning for bilevel planning.arXiv preprint arXiv:2502.08697, 2025

-

[39]

Llava-med: Training a large language- and-vision assistant for biomedicine in one day.Advances in Neural Information Processing Systems, 36:28541–28564, 2023

Chunyuan Li, Cliff Wong, Sheng Zhang, Naoto Usuyama, Haotian Liu, Jianwei Yang, Tristan Naumann, Hoifung Poon, and Jianfeng Gao. Llava-med: Training a large language- and-vision assistant for biomedicine in one day.Advances in Neural Information Processing Systems, 36:28541–28564, 2023

2023

-

[40]

Blip- 2: Bootstrapping language-image pre-training with frozen image encoders and large language models

Junnan Li, Dongxu Li, Silvio Savarese, and Steven Hoi. Blip- 2: Bootstrapping language-image pre-training with frozen image encoders and large language models. InInterna- tional conference on machine learning, pages 19730–19742. PMLR, 2023

2023

-

[41]

Revisiting jailbreaking for large lan- guage models: A representation engineering perspective

Tianlong Li, Zhenghua Wang, Wenhao Liu, Muling Wu, Shihan Dou, Changze Lv, Xiaohua Wang, Xiaoqing Zheng, and Xuan-Jing Huang. Revisiting jailbreaking for large lan- guage models: A representation engineering perspective. In Proceedings of the 31st International Conference on Com- putational Linguistics, pages 3158–3178, 2025

2025

-

[42]

arXiv preprint arXiv:2406.17806 , year=

Xirui Li, Hengguang Zhou, Ruochen Wang, Tianyi Zhou, Minhao Cheng, and Cho-Jui Hsieh. Mossbench: Is your multimodal language model oversensitive to safe queries? arXiv preprint arXiv:2406.17806, 2024

-

[43]

Yucheng Li, Surin Ahn, Huiqiang Jiang, Amir H Abdi, Yuqing Yang, and Lili Qiu. Securitylingua: Efficient de- fense of llm jailbreak attacks via security-aware prompt compression.arXiv preprint arXiv:2506.12707, 2025

-

[44]

The geometry of concepts: Sparse autoencoder feature structure.Entropy, 27(4):344, 2025

Yuxiao Li, Eric J Michaud, David D Baek, Joshua Engels, Xiaoqing Sun, and Max Tegmark. The geometry of concepts: Sparse autoencoder feature structure.Entropy, 27(4):344, 2025

2025

-

[45]

Learning deep parsimonious representations

Renjie Liao, Alex Schwing, Richard Zemel, and Raquel Urtasun. Learning deep parsimonious representations. NeurIPS, 29, 2016

2016

-

[46]

Attack and defense techniques in large language models: A survey and new perspectives,

Zhiyu Liao, Kang Chen, Yuanguo Lin, Kangkang Li, Yunx- uan Liu, Hefeng Chen, Xingwang Huang, and Yuanhui Yu. Attack and defense techniques in large language models: A survey and new perspectives.arXiv preprint arXiv:2505.00976, 2025

-

[47]

Improved Baselines with Visual Instruction Tuning

Haotian Liu, Chunyuan Li, Yuheng Li, and Yong Jae Lee. Improved baselines with visual instruction tuning.arXiv preprint arXiv:2310.03744, 2023

work page internal anchor Pith review arXiv 2023

-

[48]

Llava-next: Im- proved reasoning, ocr, and world knowledge, 2024

Haotian Liu, Chunyuan Li, Yuheng Li, Bo Li, Yuanhan Zhang, Sheng Shen, and Yong Jae Lee. Llava-next: Im- proved reasoning, ocr, and world knowledge, 2024

2024

-

[49]

Sheng Liu, Lei Xing, and James Zou. In-context vec- tors: Making in context learning more effective and con- trollable through latent space steering.arXiv preprint arXiv:2311.06668, 2023

-

[50]

Reducing hallu- cinations in large vision-language models via latent space steering

Sheng Liu, Haotian Ye, and James Zou. Reducing hallu- cinations in large vision-language models via latent space steering. InThe Thirteenth International Conference on Learning Representations, 2025

2025

-

[51]

AutoDAN: Generating Stealthy Jailbreak Prompts on Aligned Large Language Models

Xiaogeng Liu, Nan Xu, Muhao Chen, and Chaowei Xiao. Autodan: Generating stealthy jailbreak prompts on aligned large language models.arXiv preprint arXiv:2310.04451, 2023

work page internal anchor Pith review arXiv 2023

-

[52]

Mm-safetybench: A benchmark for safety eval- uation of multimodal large language models

Xin Liu, Yichen Zhu, Jindong Gu, Yunshi Lan, Chao Yang, and Yu Qiao. Mm-safetybench: A benchmark for safety eval- uation of multimodal large language models. InEuropean Conference on Computer Vision, pages 386–403. Springer, 2024

2024

-

[53]

MathVista: Evaluating Mathematical Reasoning of Foundation Models in Visual Contexts

Pan Lu, Hritik Bansal, Tony Xia, Jiacheng Liu, Chunyuan Li, Hannaneh Hajishirzi, Hao Cheng, Kai-Wei Chang, Michel Galley, and Jianfeng Gao. Mathvista: Evaluating mathemati- cal reasoning of foundation models in visual contexts.arXiv preprint arXiv:2310.02255, 2023

work page internal anchor Pith review arXiv 2023

-

[54]

Task vectors are cross-modal

Grace Luo, Trevor Darrell, and Amir Bar. Task vectors are cross-modal. 2024

2024

-

[55]

Zero-shot model diagnosis

Jinqi Luo, Zhaoning Wang, Chen Henry Wu, Dong Huang, and Fernando De la Torre. Zero-shot model diagnosis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023

2023

-

[56]

Pace: Parsimonious concept engineering for large lan- guage models.Advances in Neural Information Processing Systems, 2024

Jinqi Luo, Tianjiao Ding, Kwan H Chan, Darshan Thaker, Aditya Chattopadhyay, Chris Callison-Burch, and René Vi- dal. Pace: Parsimonious concept engineering for large lan- guage models.Advances in Neural Information Processing Systems, 2024

2024

-

[57]

Concept lancet: Image editing with compositional representation transplant

Jinqi Luo, Tianjiao Ding, Kwan Ho Ryan Chan, Hancheng Min, Chris Callison-Burch, and René Vidal. Concept lancet: Image editing with compositional representation transplant. InProceedings of the Computer Vision and Pattern Recogni- tion Conference, 2025

2025

-

[58]

Weidi Luo, Siyuan Ma, Xiaogeng Liu, Xiaoyu Guo, and Chaowei Xiao. Jailbreakv: A benchmark for assessing the robustness of multimodal large language models against jailbreak attacks.arXiv preprint arXiv:2404.03027, 2024

-

[59]

Siyuan Ma, Weidi Luo, Yu Wang, and Xiaogeng Liu. Visual- roleplay: Universal jailbreak attack on multimodal large language models via role-playing image character.arXiv preprint arXiv:2405.20773, 2024

-

[60]

Self-refine: It- erative refinement with self-feedback.Advances in Neural Information Processing Systems, 36:46534–46594, 2023

Aman Madaan, Niket Tandon, Prakhar Gupta, Skyler Hal- linan, Luyu Gao, Sarah Wiegreffe, Uri Alon, Nouha Dziri, Shrimai Prabhumoye, Yiming Yang, et al. Self-refine: It- erative refinement with self-feedback.Advances in Neural Information Processing Systems, 36:46534–46594, 2023

2023

-

[61]

Alireza Makhzani and Brendan Frey. K-sparse autoencoders. arXiv preprint arXiv:1312.5663, 2013

work page Pith review arXiv 2013

-

[62]

PEFT: State-of-the-art parameter-efficient fine-tuning methods

Sourab Mangrulkar, Sylvain Gugger, Lysandre Debut, Younes Belkada, Sayak Paul, and Benjamin Bossan. PEFT: State-of-the-art parameter-efficient fine-tuning methods. https://github.com/huggingface/peft, 2022

2022

-

[63]

Dic- tionary learning - github repository, 2024

Samuel Marks, Adam Karvonen, and Aaron Mueller. Dic- tionary learning - github repository, 2024

2024

-

[64]

UMAP: Uniform Manifold Approximation and Projection for Dimension Reduction

Leland McInnes, John Healy, and James Melville. Umap: Uniform manifold approximation and projection for dimen- sion reduction.arXiv preprint arXiv:1802.03426, 2020

work page internal anchor Pith review Pith/arXiv arXiv 2020

-

[65]

Visual contextual attack: Jailbreaking mllms with image-driven context injection,

Ziqi Miao, Yi Ding, Lijun Li, and Jing Shao. Visual contex- tual attack: Jailbreaking mllms with image-driven context injection.arXiv preprint arXiv:2507.02844, 2025

-

[66]

Distributed Representations of Words and Phrases and their Compositionality

Tomas Mikolov, Ilya Sutskever, Kai Chen, Greg Corrado, and Jeffrey Dean. Distributed Representations of Words and Phrases and their Compositionality.arXiv preprint arXiv:1310.4546, 2013

work page Pith review arXiv 2013

-

[67]

Linguis- tic Regularities in Continuous Space Word Representations

Tomas Mikolov, Wen-tau Yih, and Geoffrey Zweig. Linguis- tic Regularities in Continuous Space Word Representations. InNAACL HLT, 2013

2013

-

[68]

Wordnet: a lexical database for english

George A Miller. Wordnet: a lexical database for english. Communications of the ACM, 38(11):39–41, 1995

1995

-

[69]

Fight back against jailbreaking via prompt adversarial tuning

Yichuan Mo, Yuji Wang, Zeming Wei, and Yisen Wang. Fight back against jailbreaking via prompt adversarial tuning. Advances in Neural Information Processing Systems, 37: 64242–64272, 2024

2024

-

[70]

Med-flamingo: a multimodal medical few-shot learner

Michael Moor, Qian Huang, Shirley Wu, Michihiro Ya- sunaga, Yash Dalmia, Jure Leskovec, Cyril Zakka, Ed- uardo Pontes Reis, and Pranav Rajpurkar. Med-flamingo: a multimodal medical few-shot learner. InMachine Learning for Health (ML4H), pages 353–367. PMLR, 2023

2023

-

[71]

Mark Muchane, Sean Richardson, Kiho Park, and Victor Veitch. Incorporating hierarchical semantics in sparse au- toencoder architectures.arXiv preprint arXiv:2506.01197, 2025

-

[72]

Steering language model refusal with sparse autoencoders.arXiv preprint arXiv:2411.11296, 2024

Kyle O’Brien, David Majercak, Xavier Fernandes, Richard Edgar, Blake Bullwinkel, Jingya Chen, Harsha Nori, Dean Carignan, Eric Horvitz, and Forough Poursabzi-Sangdeh. Steering language model refusal with sparse autoencoders. arXiv preprint arXiv:2411.11296, 2024

-

[73]

Long Ouyang, Jeff Wu, Xu Jiang, Diogo Almeida, Carroll L. Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agar- wal, Katarina Slama, Alex Ray, John Schulman, Jacob Hilton, Fraser Kelton, Luke Miller, Maddie Simens, Amanda Askell, Peter Welinder, Paul Christiano, Jan Leike, and Ryan Lowe. Training language models to follow instructions with human feedbac...

work page internal anchor Pith review Pith/arXiv arXiv 2022

-

[74]

arXiv preprint arXiv:2504.02821 , year=

Mateusz Pach, Shyamgopal Karthik, Quentin Bouniot, Serge Belongie, and Zeynep Akata. Sparse autoencoders learn monosemantic features in vision-language models.arXiv preprint arXiv:2504.02821, 2025

-

[75]

Steering Llama 2 via Contrastive Activation Addition

Nina Panickssery, Nick Gabrieli, Julian Schulz, Meg Tong, Evan Hubinger, and Alexander Matt Turner. Steering llama 2 via contrastive activation addition.arXiv preprint arXiv:2312.06681, 2023

work page internal anchor Pith review arXiv 2023

-

[76]

A concept-based explain- ability framework for large multimodal models

Jayneel Parekh, Pegah KHAY ATAN, Mustafa Shukor, Alas- dair Newson, and Matthieu Cord. A concept-based explain- ability framework for large multimodal models. InThe Thirty-eighth Annual Conference on Neural Information Processing Systems, 2024

2024

-

[77]

The geometry of categorical and hierarchical concepts in large language models

Kiho Park, Yo Joong Choe, Yibo Jiang, and Victor Veitch. The geometry of categorical and hierarchical concepts in large language models. InThe Thirteenth International Conference on Learning Representations

-

[78]

The Linear Representation Hypothesis and the Geometry of Large Language Models

Kiho Park, Yo Joong Choe, and Victor Veitch. The Lin- ear Representation Hypothesis and the Geometry of Large Language Models.arXiv preprint arXiv:2311.03658, 2023

work page internal anchor Pith review arXiv 2023

-

[79]

StyleCLIP: Text-Driven Manipulation of StyleGAN Imagery

Or Patashnik, Zongze Wu, Eli Shechtman, Daniel Cohen-Or, and Dani Lischinski. StyleCLIP: Text-Driven Manipulation of StyleGAN Imagery. InICCV, 2021

2021

-

[80]

Automatically interpreting millions of features in large language models

Gonçalo Paulo, Alex Mallen, Caden Juang, and Nora Bel- rose. Automatically interpreting millions of features in large language models.arXiv preprint arXiv:2410.13928, 2024

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.