
Cognitive Pivot Points and Visual Anchoring: Unveiling and Rectifying Hallucinations in Multimodal Reasoning Models

Pith reviewed 2026-05-10 15:59 UTC · model grok-4.3

The pith

Multimodal reasoning models hallucinate when they stop querying visual evidence at high-entropy decision points.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

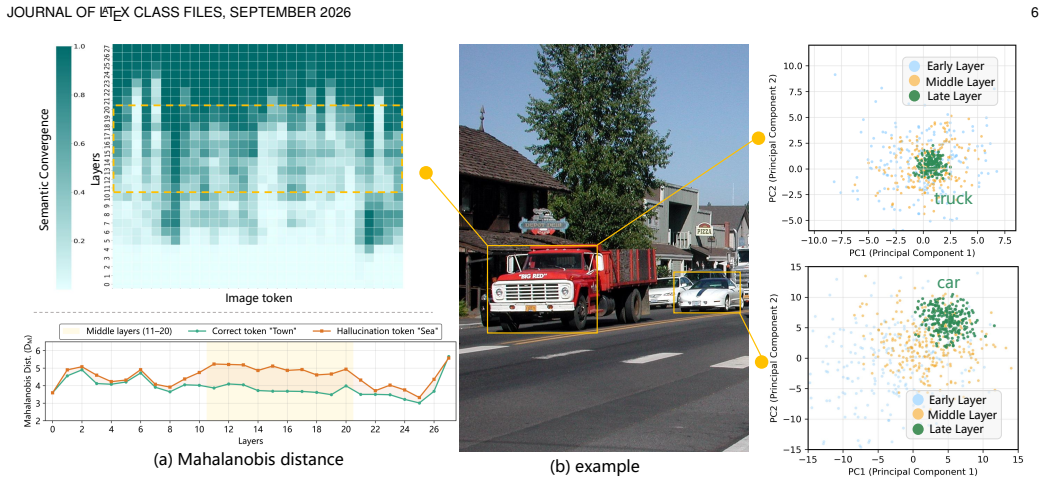

Multimodal Large Reasoning Models remain vulnerable to hallucinations during extended reasoning chains. These errors correlate strongly with cognitive bifurcation points that exhibit high-entropy states. The root cause is a localized breakdown in visual semantic anchoring within intermediate network layers: at these high-uncertainty transitions the model fails to query visual evidence and reverts to language priors. The authors therefore introduce V-STAR, a training paradigm that augments outcome supervision with fine-grained internal attention guidance. Its central components are the Hierarchical Visual Attention Reward (HVAR), which dynamically incentivizes visual attention across critical intermediate layers when high-entropy states are detected, and the Forced Reflection Mechanism (FRM), which triggers reflection around bifurcation points and encourages verification of subsequent steps against the visual input.

What carries the argument

Hierarchical Visual Attention Reward (HVAR) within the GRPO framework, which detects high-entropy states and rewards visual attention in intermediate layers to restore anchoring to the visual input.
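The entropy-gated reward described above can be sketched roughly as follows. This is an illustration under stated assumptions, not the paper's implementation: the function names, the threshold `tau`, the set of `layers`, and the choice to define the reward as the mean attention mass on image tokens are all hypothetical.

```python
import math

def token_entropy(probs):
    """Shannon entropy (in nats) of one next-token distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def visual_attention_mass(attn_row, image_positions):
    """Fraction of one attention row that lands on image-token positions."""
    total = sum(attn_row)
    if total == 0.0:
        return 0.0
    return sum(attn_row[i] for i in image_positions) / total

def hvar_style_reward(step_probs, step_attn, image_positions, layers, tau):
    """Hypothetical reward: average visual attention mass over `layers`,
    counted only at decoding steps whose entropy exceeds `tau`.

    step_probs: list over steps of next-token probability lists
    step_attn:  list over steps of {layer_index: attention row}
    """
    rewards = []
    for probs, attn_by_layer in zip(step_probs, step_attn):
        if token_entropy(probs) <= tau:
            continue  # confident step: no attention reward is computed
        masses = [visual_attention_mass(attn_by_layer[layer], image_positions)
                  for layer in layers]
        rewards.append(sum(masses) / len(masses))
    # No high-entropy step means there is nothing to reward.
    return sum(rewards) / len(rewards) if rewards else 0.0
```

Gating on entropy keeps the reward silent at confident steps, so the training signal concentrates on the uncertain transitions where the review says anchoring breaks down.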

If this is right

- Outcome-level supervision alone is insufficient; fine-grained internal attention guidance at uncertain steps measurably reduces hallucinations.

- Detecting high-entropy states allows targeted reinforcement of visual queries that overrides language priors.

- Forced reflection around bifurcation points converts external debiasing into an internalized habit of visual verification.

- The resulting capability operates without added test-time compute or performance loss on standard reasoning metrics.
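The forced-reflection idea in the list above can be illustrated with a small trajectory edit. This is a sketch under assumptions: the review does not say whether the trajectory is truncated or spliced, so truncating after the first high-entropy step and appending a cue is a choice made for the example, and `insert_reflection`, `tau`, and the cue text are hypothetical.

```python
def insert_reflection(steps, entropies, tau, cue):
    """Return a new trajectory cut after the first step whose entropy
    exceeds `tau`, with a reflection cue appended at that point.

    The model would then be asked to continue from the cue, re-checking
    later steps against the image. If no step exceeds `tau`, the
    trajectory is returned unchanged (as a copy).
    """
    for i, h in enumerate(entropies):
        if h > tau:
            return steps[: i + 1] + [cue]
    return list(steps)

# Hypothetical usage: the second step is the bifurcation point.
edited = insert_reflection(
    ["The chart has three bars.", "So the tallest is blue.", "Answer: blue."],
    [0.1, 0.9, 0.2],
    tau=0.5,
    cue="Wait, let me check this against the image.",
)
```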

Where Pith is reading between the lines

- The same entropy-triggered anchoring technique could be tested on other chain-of-thought tasks where models drift from input evidence.

- Because the failure is localized to intermediate layers, lighter interventions focused on those layers may suffice for broader multimodal models.

- If entropy detection proves reliable across architectures, it offers a general signal for inserting verification steps in any long reasoning sequence.

Load-bearing premise

That dynamically rewarding visual attention at high-entropy points during training will cause the model to maintain visual anchoring automatically in later use without reducing overall reasoning performance.

What would settle it

Train a model with the proposed method, then measure whether hallucinations and visual attention metrics at previously identified high-entropy bifurcation points differ from those of an identical baseline model on the same long-chain visual reasoning tasks.
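One way to frame that comparison is sketched below, assuming each model has been run on the same bifurcation points and each record carries a hallucination flag and a visual attention measurement. The record fields `hallucinated` and `visual_mass` are hypothetical names, and a real study would add significance testing on top of these raw differences.

```python
import statistics

def hallucination_rate(records):
    """Share of records flagged as hallucinated."""
    return sum(r["hallucinated"] for r in records) / len(records)

def compare_models(baseline, trained):
    """Differences (trained minus baseline) over matched bifurcation points."""
    return {
        "hallucination_rate_delta":
            hallucination_rate(trained) - hallucination_rate(baseline),
        "visual_mass_delta":
            statistics.fmean(r["visual_mass"] for r in trained)
            - statistics.fmean(r["visual_mass"] for r in baseline),
    }
```

A negative `hallucination_rate_delta` together with a positive `visual_mass_delta` would be consistent with the premise; a drop in hallucinations with no change in attention would suggest the improvement comes from somewhere else.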

Original abstract

Multimodal Large Reasoning Models (MLRMs) have achieved remarkable strides in visual reasoning through test-time compute scaling, yet long-chain reasoning remains prone to hallucinations. We identify a concerning phenomenon termed the Reasoning Vision Truth Disconnect (RVTD): hallucinations are strongly correlated with cognitive bifurcation points that often exhibit high-entropy states. We attribute this vulnerability to a breakdown in visual semantic anchoring, localized within the network's intermediate layers; specifically, during these high-uncertainty transitions, the model fails to query visual evidence, reverting instead to language priors. Consequently, we advocate a shift from solely outcome-level supervision to augmenting it with fine-grained internal attention guidance. To this end, we propose V-STAR (Visual Structural Training with Attention Reinforcement), a lightweight, holistic training paradigm designed to internalize visually aware reasoning capabilities. Central to our approach is the Hierarchical Visual Attention Reward (HVAR), integrated within the GRPO framework. Upon detecting high-entropy states, this mechanism dynamically incentivizes visual attention across critical intermediate layers, thereby anchoring the reasoning process back to the visual input. Furthermore, we introduce the Forced Reflection Mechanism (FRM), a trajectory-editing strategy that disrupts cognitive inertia by triggering reflection around high-entropy cognitive bifurcation points and encouraging verification of subsequent steps against the visual input, thereby translating external debiasing interventions into an intrinsic capability for hallucination mitigation.
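To make "integrated within the GRPO framework" concrete, the sketch below shows the group-relative advantage that GRPO computes over several sampled responses to one prompt, with an attention reward added to the outcome reward. The additive combination and the weight `lam` are assumptions for illustration; the abstract does not say how the two signals are combined, and implementations differ on details such as which standard deviation is used.

```python
import statistics

def group_relative_advantages(outcome_rewards, attention_rewards,
                              lam=0.5, eps=1e-8):
    """Normalize combined rewards within one group of sampled responses.

    Each total reward is outcome + lam * attention (hypothetical mix).
    The advantage is the total minus the group mean, divided by the
    group standard deviation (population form here), plus eps for safety.
    """
    totals = [o + lam * a for o, a in zip(outcome_rewards, attention_rewards)]
    mean = statistics.fmean(totals)
    std = statistics.pstdev(totals)
    return [(t - mean) / (std + eps) for t in totals]
```

Under this mix, two responses with the same final answer receive different advantages when one of them attended more to the image at uncertain steps, which is the sense in which outcome supervision is augmented rather than replaced.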

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper identifies a phenomenon called Reasoning Vision Truth Disconnect (RVTD) in Multimodal Large Reasoning Models (MLRMs), claiming that hallucinations correlate strongly with high-entropy cognitive bifurcation points, where visual semantic anchoring breaks down in the network's intermediate layers and the model reverts to language priors. It proposes V-STAR, a training paradigm that uses the Hierarchical Visual Attention Reward (HVAR) within the GRPO framework to dynamically incentivize visual attention at high-entropy states, plus the Forced Reflection Mechanism (FRM), a trajectory-editing strategy that encourages verification against the visual input, with the aim of internalizing hallucination mitigation.

Significance. If the correlation measurements, layer-localized attention breakdowns, and mitigation results hold under the reported experimental controls, this work offers a concrete internal mechanism for addressing hallucinations beyond outcome-level supervision, with the potential to improve reliability in long-chain visual reasoning without external debiasing at inference time. The attention visualizations, trajectory analyses, and integration with the existing GRPO framework strengthen the case for practical adoption.

major comments (1)

- [Experimental Evaluation] The central RVTD correlation and layer-localization claims are supported by the experimental sections, attention maps, and trajectory analyses, but the assumption that HVAR+FRM translates to intrinsic capability without performance degradation requires explicit reporting of overall reasoning accuracy metrics (e.g., on standard VQA or reasoning benchmarks) alongside hallucination rates to confirm no trade-off.

minor comments (2)

- [Introduction] The abstract and introduction introduce multiple new terms (RVTD, HVAR, FRM, V-STAR) without a consolidated notation table; adding one would improve readability.

- [Method] Clarify the precise entropy threshold and detection method used to trigger HVAR in the GRPO integration, as the high-level description leaves implementation details ambiguous for reproduction.
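The reproduction gap raised in the last comment comes down to how "high entropy" is decided. One common option, shown here purely as an assumption and not as the authors' method, is to calibrate the threshold as a quantile of entropies observed on held-out reasoning traces; `entropy_threshold` and `quantile` are hypothetical names.

```python
def entropy_threshold(entropies, quantile=0.8):
    """Pick the entropy value at the given quantile of observed entropies.

    Steps at or above the returned value would be treated as high-entropy.
    Uses a simple index into the sorted values, with no interpolation.
    """
    ordered = sorted(entropies)
    idx = min(len(ordered) - 1, int(quantile * len(ordered)))
    return ordered[idx]

def flag_high_entropy(entropies, tau):
    """Indices of steps whose entropy is at or above the threshold."""
    return [i for i, h in enumerate(entropies) if h >= tau]
```

A fixed absolute threshold is the obvious alternative; reporting which of the two was used, and its value, would address the referee's concern.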

Simulated Author's Rebuttal

We thank the referee for the positive evaluation, recognition of the RVTD phenomenon and V-STAR contributions, and recommendation for minor revision. We address the single major comment below.

- Referee: The central RVTD correlation and layer-localization claims are supported by the experimental sections, attention maps, and trajectory analyses, but the assumption that HVAR+FRM translates to intrinsic capability without performance degradation requires explicit reporting of overall reasoning accuracy metrics (e.g., on standard VQA or reasoning benchmarks) alongside hallucination rates to confirm no trade-off.

  Authors: We agree that confirming the absence of performance trade-offs is essential for validating that HVAR and FRM internalize hallucination mitigation as an intrinsic capability. The current manuscript emphasizes hallucination reduction in long-chain visual reasoning; to strengthen the claim, the revised version will include explicit accuracy results on standard benchmarks (e.g., VQA-v2 and visual reasoning tasks) reported alongside hallucination rates under identical experimental controls. This addition will directly demonstrate that V-STAR improves reliability without degrading overall reasoning performance.

  Revision: yes

Circularity Check

No significant circularity identified


The paper identifies RVTD as an empirical correlation between hallucinations and high-entropy bifurcation points, attributes it to visual anchoring failure in intermediate layers, and proposes V-STAR incorporating HVAR within the pre-existing GRPO framework plus FRM as a trajectory intervention. No equations, parameter fits, or first-principles derivations are present that reduce any claimed result to quantities defined by the paper's own outputs or self-citations. The central claims rest on experimental observations, attention visualizations, and trajectory analyses treated as independent evidence rather than self-referential constructions, rendering the argument self-contained.

Axiom & Free-Parameter Ledger

axioms (2)

- Domain assumption: High-entropy states during reasoning correspond to cognitive bifurcation points at which visual semantic anchoring fails and language priors dominate.

- Domain assumption: Fine-grained internal attention guidance can be translated into an intrinsic model capability via reward shaping and trajectory editing.

invented entities (3)

- Reasoning Vision Truth Disconnect (RVTD): no independent evidence

- Hierarchical Visual Attention Reward (HVAR): no independent evidence

- Forced Reflection Mechanism (FRM): no independent evidence

