FastGrasp: Learning-based Whole-body Control method for Fast Dexterous Grasping with Mobile Manipulators

Pith reviewed 2026-05-10 14:26 UTC · model grok-4.3

The pith

FastGrasp uses two-stage reinforcement learning to enable fast dexterous grasping on mobile manipulators by generating grasp candidates from point clouds and coordinating base, arm and hand with tactile adjustments.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

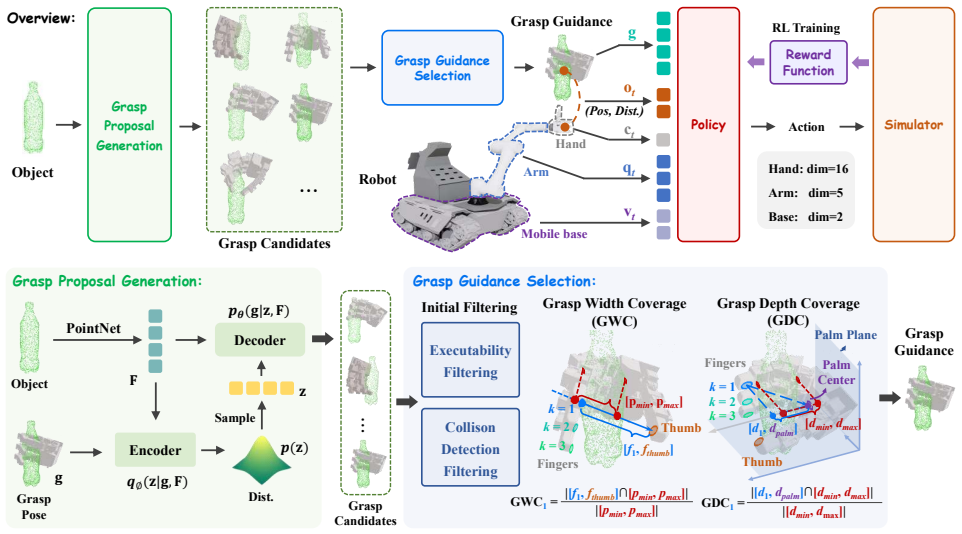

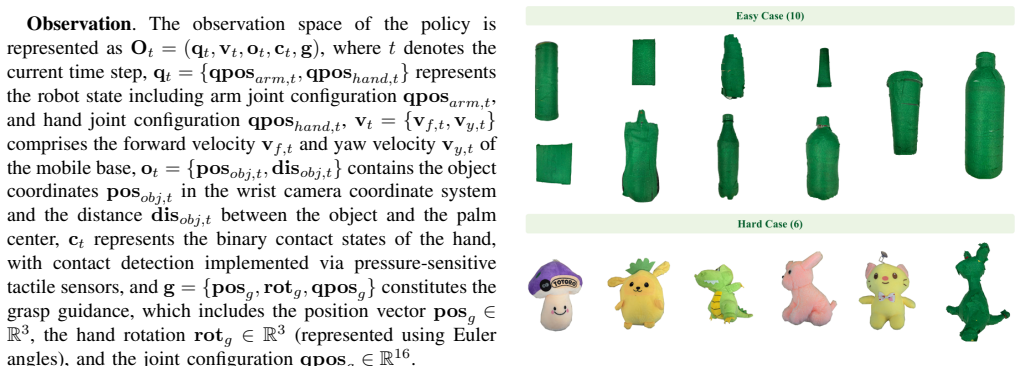

FastGrasp integrates grasp guidance, whole-body control, and tactile feedback for mobile fast grasping. Our two-stage reinforcement learning strategy first generates diverse grasp candidates via a conditional variational autoencoder conditioned on object point clouds, then executes coordinated movements of the mobile base, arm, and hand guided by optimal grasp selection. Tactile sensing enables real-time grasp adjustments to handle impact effects and object variations.
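
The claim does not state the loss used to train the grasp generator. For orientation only, a conditional variational autoencoder is usually trained by maximizing the conditional evidence lower bound shown here. The symbols are assumptions introduced for illustration: $g$ is a grasp configuration, $c$ an embedding of the object point cloud, and $z$ the latent variable.

$$
\mathcal{L}(\theta, \phi) = \mathbb{E}_{q_\phi(z \mid g, c)}\big[\log p_\theta(g \mid z, c)\big] - \mathrm{KL}\big(q_\phi(z \mid g, c) \,\|\, p(z \mid c)\big)
$$

At inference time, diverse candidates come from drawing several $z$ from the prior $p(z \mid c)$, often simplified to $\mathcal{N}(0, I)$, and decoding each one with $p_\theta(g \mid z, c)$. Whether FastGrasp uses exactly this form or adds further terms is not stated in the material summarized here.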

What carries the argument

Two-stage reinforcement learning strategy in which a conditional variational autoencoder first produces grasp candidates from object point clouds and a second policy then selects and drives coordinated base-arm-hand motion while using tactile feedback for online correction.
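
The sample-then-select flow described above can be sketched in a few lines. This is a toy illustration, not the paper's implementation: `centroid` stands in for a learned point-cloud encoder, `decode` stands in for a trained CVAE decoder, and the nearest-to-hand selection rule is an assumption chosen only to make the example concrete. All function names are hypothetical.

```python
import math
import random


def centroid(points):
    """Mean of a list of (x, y, z) points; a crude stand-in for a learned encoder."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(3))


def decode(z, condition, scale=0.05):
    """Hypothetical decoder: offsets the condition by a scaled latent sample.
    In a trained CVAE this would be a neural network."""
    return tuple(c + scale * zi for c, zi in zip(condition, z))


def sample_candidates(points, k, seed=0):
    """Draw k latent samples z ~ N(0, I) and decode each into a candidate wrist position."""
    rng = random.Random(seed)
    condition = centroid(points)
    return [
        decode([rng.gauss(0.0, 1.0) for _ in range(3)], condition)
        for _ in range(k)
    ]


def select_grasp(candidates, hand_position):
    """Assumed selection rule: pick the candidate closest to the current hand position."""
    return min(candidates, key=lambda g: math.dist(g, hand_position))


# Four points on a small object roughly one metre in front of the robot.
cloud = [(0.9, 0.1, 0.5), (1.1, -0.1, 0.5), (1.0, 0.0, 0.7), (1.0, 0.0, 0.3)]
hand = (0.0, 0.0, 0.5)
candidates = sample_candidates(cloud, k=8)
target = select_grasp(candidates, hand)
```

In the described system the selected grasp would then condition the whole-body policy, with tactile feedback correcting the hand during contact; that second stage is not modeled here.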

Load-bearing premise

That training the two-stage system with CVAE grasp generation, whole-body reinforcement learning, and tactile feedback will produce policies that generalize across object shapes and transfer from simulation to real robots even at high speeds.

What would settle it

Deploy the trained system on a physical mobile manipulator and measure grasp success rate on ten object shapes and sizes never shown during training while commanding base velocities at least 50 percent higher than any speed used in simulation; a drop below 70 percent success would falsify robust generalization.
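
One way to turn this test into a decision rule is to put a confidence interval around the measured success rate, so that a small number of trials does not produce a false verdict. The sketch below uses the Wilson score interval; the 70 percent threshold and the 1.5x speed factor come from the text above, while the 95 percent confidence level, the trial counts, and the function names are assumptions made for illustration.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1.0 + z * z / trials
    centre = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return centre - half, centre + half


def min_test_speed(max_sim_speed, factor=1.5):
    """Lowest base speed to command: 50 percent above the fastest simulated speed."""
    return factor * max_sim_speed


def verdict(successes, trials, threshold=0.70):
    """Compare the whole interval, not just the point estimate, with the threshold."""
    low, high = wilson_interval(successes, trials)
    if high < threshold:
        return "below threshold"
    if low >= threshold:
        return "at or above threshold"
    return "inconclusive"


# Hypothetical tally: 10 unseen objects, 10 attempts each.
print(verdict(82, 100))
print(verdict(70, 100))
print(verdict(55, 100))
```

With only ten objects, the interval is wide unless each object is attempted many times, so an "inconclusive" result is a realistic outcome of this protocol and would call for more trials rather than a verdict.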

Original abstract

Fast grasping is critical for mobile robots in logistics, manufacturing, and service applications. Existing methods face fundamental challenges in impact stabilization under high-speed motion, real-time whole-body coordination, and generalization across diverse objects and scenarios, limited by fixed bases, simple grippers, or slow tactile response capabilities. We propose FastGrasp, a learning-based framework that integrates grasp guidance, whole-body control, and tactile feedback for mobile fast grasping. Our two-stage reinforcement learning strategy first generates diverse grasp candidates via a conditional variational autoencoder conditioned on object point clouds, then executes coordinated movements of the mobile base, arm, and hand guided by optimal grasp selection. Tactile sensing enables real-time grasp adjustments to handle impact effects and object variations. Extensive experiments demonstrate superior grasping performance in both simulation and real-world scenarios, achieving robust manipulation across diverse object geometries through effective sim-to-real transfer.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces FastGrasp, a learning-based whole-body control framework for fast dexterous grasping with mobile manipulators. It uses a two-stage reinforcement learning pipeline: a conditional variational autoencoder (CVAE) generates diverse grasp candidates conditioned on object point clouds, followed by coordinated control of the mobile base, arm, and hand with tactile sensing for real-time impact adjustment and object variation handling. The central claim is that this approach overcomes limitations of fixed-base or slow-tactile systems and achieves superior grasping performance with robust sim-to-real transfer across diverse object geometries in both simulation and real-world experiments.

Significance. If the quantitative results hold, the work would represent a meaningful advance in mobile manipulation by jointly addressing high-speed impact stabilization, real-time whole-body coordination, and generalization via integrated CVAE grasp generation and tactile feedback. The two-stage RL design and emphasis on sim-to-real transfer could inform practical deployments in logistics and service robotics, provided the performance gains are shown to be statistically reliable against strong baselines.

major comments (1)

- Abstract: The manuscript asserts 'superior grasping performance' and 'robust manipulation across diverse object geometries through effective sim-to-real transfer' yet supplies no quantitative metrics, success rates, baseline comparisons, error bars, or experimental protocols. Without these data the central empirical claim cannot be evaluated and remains unverifiable from the presented material.

Simulated Author's Rebuttal

We thank the referee for their constructive feedback on our manuscript. We address the major comment point-by-point below and will revise the paper to strengthen the presentation of our empirical claims.

- Referee: Abstract: The manuscript asserts 'superior grasping performance' and 'robust manipulation across diverse object geometries through effective sim-to-real transfer' yet supplies no quantitative metrics, success rates, baseline comparisons, error bars, or experimental protocols. Without these data the central empirical claim cannot be evaluated and remains unverifiable from the presented material.

  Authors: We agree that the abstract would benefit from including key quantitative results to support the claims of superior performance and robust sim-to-real transfer. The full manuscript (Sections 4 and 5) already contains detailed experimental protocols, success rates with error bars, baseline comparisons, and sim-to-real results across diverse objects. In the revised version, we will update the abstract to concisely report the main quantitative highlights (e.g., grasping success rates in simulation and real-world experiments, performance gains over baselines) while remaining within length constraints. This change will make the central claims directly verifiable from the abstract without altering the underlying results or experimental design.

  Revision: yes

Circularity Check

No significant circularity detected

The manuscript presents FastGrasp as an empirical learning-based proposal: a two-stage RL pipeline (CVAE grasp generation from point clouds, followed by whole-body coordination and tactile adjustment). No equations, fitted parameters renamed as predictions, self-definitional loops, or load-bearing self-citations appear in the abstract or described framework. Claims rest on experimental validation in simulation and real-world settings rather than on any derivation that reduces to its own inputs by construction. The architecture is introduced as a novel combination, not derived from prior results by the same authors in a circular manner.
