Partition-of-Unity Gaussian Kolmogorov-Arnold Networks

Pith reviewed 2026-05-08 05:01 UTC · model grok-4.3

The pith

Normalizing each Gaussian basis by the sum over local centers in Kolmogorov-Arnold networks reduces sensitivity to the scale parameter and improves accuracy and training stability.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

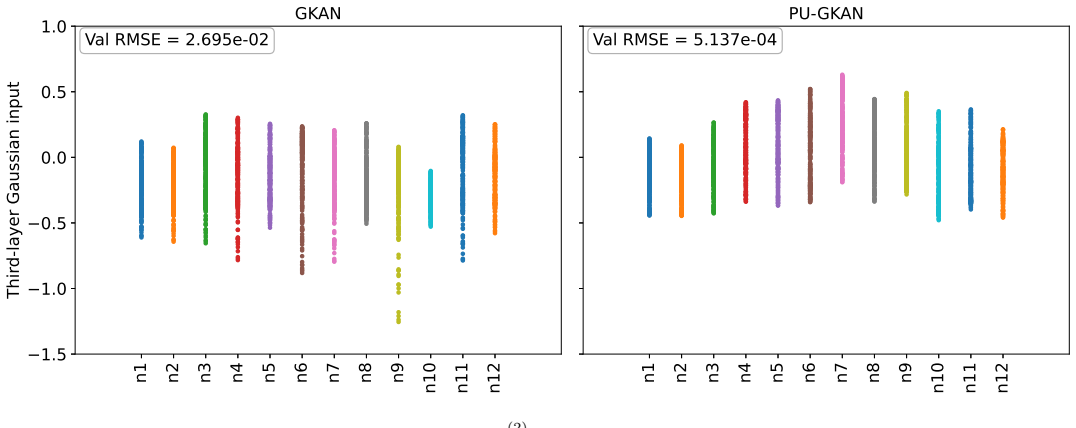

The central claim is that Shepard-type partition-of-unity normalization applied to the Gaussian bases of a Kolmogorov-Arnold network stabilizes the model by reducing its sensitivity to the scale parameter ε while preserving the standard edge-based architecture. The normalization supplies exact constant reproduction at each edge and an explicit finite-feature kernel representation of the induced layer maps; experiments confirm that these properties translate into higher validation accuracy and more reliable training across function-approximation and physics-informed tasks.

What carries the argument

The argument rests on Shepard-type partition-of-unity normalization, which divides the Gaussian value on each edge by the sum of the Gaussians evaluated at that edge's fixed centers.
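A minimal sketch of this normalization on a single edge may help fix ideas. The Gaussian form exp(-((x - c)/ε)²), with ε read as a width, is an assumption (the page does not state the paper's exact parameterization), and the function names, center grid, and evaluation point are hypothetical.

```python
import math


def gaussian_basis(x, centers, eps):
    """Unnormalized Gaussian features at x.

    Assumed form: exp(-((x - c) / eps)^2), with eps acting as a width.
    """
    return [math.exp(-((x - c) / eps) ** 2) for c in centers]


def pu_gaussian_basis(x, centers, eps):
    """Shepard-type normalization: divide each Gaussian by the local sum."""
    phi = gaussian_basis(x, centers, eps)
    total = sum(phi)
    if total == 0.0:
        # Every Gaussian underflowed, so the ratio is undefined.
        raise ValueError("all Gaussians underflowed; increase eps or add centers")
    return [p / total for p in phi]


def edge_function(x, coeffs, centers, eps):
    """One edge: trainable coefficients times the normalized features."""
    weights = pu_gaussian_basis(x, centers, eps)
    return sum(a * w for a, w in zip(coeffs, weights))


# Hypothetical setup: five equally spaced centers on [0, 1].
centers = [j / 4 for j in range(5)]
weights = pu_gaussian_basis(0.3, centers, eps=0.25)
```

Because the weights are non-negative and sum to one at every input, setting all coefficients to the same value makes `edge_function` return that value, whatever ε is.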

If this is right

- Each edge exactly reproduces constant functions without extra constraints.

- Every layer admits an explicit finite-feature kernel representation whose empirical feature matrices can be written in closed form.

- A practical interval for the scale parameter can be chosen once from overlap and conditioning considerations and then used across tasks.

- The accuracy and stability benefits remain when the architecture is deepened, when the input dimension is raised, and when the same construction is applied to physics-informed learning of the Helmholtz and wave equations.
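The first two consequences can be written out in a few lines. The notation below (φ_j, ψ_j, c_j, a_j, m, Ψ) and the Gaussian form are assumptions chosen for illustration and may differ from the paper's.

```latex
% Assumed Gaussian form and its Shepard normalization on one edge with m fixed centers c_j
\varphi_j(x) = \exp\!\left(-\left(\frac{x - c_j}{\epsilon}\right)^{2}\right),
\qquad
\psi_j(x) = \frac{\varphi_j(x)}{\sum_{k=1}^{m} \varphi_k(x)},
\qquad
\sum_{j=1}^{m} \psi_j(x) = 1 .

% Constant reproduction: equal coefficients a_j = a give back the constant a
\sum_{j=1}^{m} a\,\psi_j(x) = a \sum_{j=1}^{m} \psi_j(x) = a .

% Finite-feature kernel of one edge and its empirical matrix on samples x_1, ..., x_N
k(x, x') = \sum_{j=1}^{m} \psi_j(x)\,\psi_j(x'),
\qquad
K = \Psi \Psi^{\top},
\quad
\Psi_{ij} = \psi_j(x_i) .
```

The partition-of-unity identity holds for any ε > 0, which is why constant reproduction needs no extra constraint on the coefficients.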

Where Pith is reading between the lines

- The same local-sum normalization could be tested on other radial-basis-function networks outside the Kolmogorov-Arnold setting to check whether the stabilization effect is architecture-independent.

- Because the method reduces the need for careful scale tuning, it may lower the overall computational budget required to reach a target accuracy in repeated training runs.

- The explicit kernel view created by the normalization opens a route to combine the networks with classical kernel-analysis tools such as condition-number bounds or eigenvalue estimates.

Load-bearing premise

The observed gains in stability and accuracy arise specifically from the partition-of-unity normalization rather than from the particular rule chosen for selecting the Gaussian scale or from other details of the numerical implementation.

What would settle it

Repeating the same suite of experiments on identical targets and architectures but with the local-sum normalization removed would show whether the reductions in scale sensitivity and the accuracy improvements disappear.

Original abstract

Gaussian basis functions provide an efficient and flexible alternative to spline activations in KANs. In this work, we introduce the partition-of-unity Gaussian KAN (PU-GKAN), a Shepard-type normalized Gaussian KAN in which the Gaussian basis values on each edge are divided by their local sum over fixed centers. This produces a partition-of-unity feature map with trainable coefficients, while preserving the standard edge-based KAN structure. The normalized construction gives exact constant reproduction at the edge level and admits an explicit finite-feature kernel interpretation. We formulate both the standard Gaussian KAN (GKAN) and PU-GKAN from a finite-feature and additive-kernel viewpoint, making the induced layer kernels and empirical feature matrices explicit. Using the first-layer feature matrix as the reference object, we adopt a practical scale-selection interval for ε, with the lower endpoint determined by adjacent-center overlap and the upper endpoint determined by a conservative conditioning threshold. Numerical experiments show that PU-GKAN reduces sensitivity to ε, improves validation accuracy for most smooth and moderately non-smooth targets, and gives more stable training behavior. The benefit persists across sample-size and center-number sweeps, higher-dimensional architectures, Matérn RBF bases, and physics-informed examples involving Helmholtz and wave equations. These results indicate that Shepard-type partition-of-unity normalization is a simple and effective stabilization mechanism for RBF-based KANs.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces the partition-of-unity Gaussian KAN (PU-GKAN) by applying Shepard normalization to Gaussian basis functions on the edges of a KAN, yielding a feature map that exactly reproduces constants and admits an explicit finite-feature kernel representation. Both standard GKAN and PU-GKAN are derived from an additive-kernel viewpoint with explicit layer kernels and empirical feature matrices. A practical ε-selection interval (lower bound from center overlap, upper from conditioning) is adopted, and numerical experiments are reported to show that PU-GKAN reduces ε-sensitivity, improves validation accuracy on smooth and moderately non-smooth targets, and yields more stable training; these benefits are claimed to persist across sample-size/center-number sweeps, higher dimensions, Matérn bases, and PINN examples for Helmholtz and wave equations.

Significance. If the empirical gains are shown to arise specifically from the partition-of-unity normalization rather than from the shared ε-selection procedure or other implementation details, the construction supplies a lightweight stabilization technique for RBF-based KANs together with a transparent kernel view. The explicit finite-feature and additive-kernel derivations constitute a clear methodological strength that could aid reproducibility and theoretical analysis in scientific machine learning.

major comments (2)

- [Numerical experiments and scale-selection description] The central claim that Shepard normalization itself reduces ε-sensitivity and improves accuracy (abstract, final paragraph; also the numerical-experiments summary) rests on comparisons that employ an identical practical ε interval for both GKAN and PU-GKAN. Because normalization alters the conditioning of the first-layer feature matrix, the same numerical interval selects systematically different effective scales; without an explicit ablation that fixes ε to identical values (or removes the selection rule) while holding all other implementation choices constant, the observed gains cannot be unambiguously attributed to the partition-of-unity property rather than to the interaction between normalization and the chosen ε procedure.

- [Numerical experiments] The reported improvements in validation accuracy and training stability are stated without accompanying quantitative tables, error bars, data-split details, hyperparameter-search protocol, or statistical-significance tests (abstract and numerical-experiments summary). This absence prevents assessment of effect sizes and reproducibility, directly undermining the claim that benefits “persist across” the listed sweeps.

minor comments (1)

- [Formulation section] The notation for the normalized basis functions and the explicit kernel expressions would benefit from a single consolidated table or diagram that contrasts the un-normalized and normalized feature matrices side-by-side.

Simulated Author's Rebuttal

We thank the referee for the thoughtful and detailed comments on our manuscript. We have carefully reviewed the major concerns regarding the attribution of benefits to the partition-of-unity normalization and the reporting of experimental results. Below, we provide point-by-point responses and indicate the revisions we plan to implement.

Referee: The central claim that Shepard normalization itself reduces ε-sensitivity and improves accuracy (abstract, final paragraph; also the numerical-experiments summary) rests on comparisons that employ an identical practical ε interval for both GKAN and PU-GKAN. Because normalization alters the conditioning of the first-layer feature matrix, the same numerical interval selects systematically different effective scales; without an explicit ablation that fixes ε to identical values (or removes the selection rule) while holding all other implementation choices constant, the observed gains cannot be unambiguously attributed to the partition-of-unity property rather than to the interaction between normalization and the chosen ε procedure.

Authors: We agree that the shared ε-selection procedure interacts with normalization and that this interaction could confound direct attribution of gains to the partition-of-unity property. The practical interval is defined identically for both models using the same criteria (adjacent-center overlap for the lower bound and a conservative conditioning threshold for the upper bound), which we view as the appropriate way to compare the two constructions under the method as proposed. Nevertheless, to isolate the normalization effect more rigorously, we will add an explicit ablation study in the revised manuscript. This study will fix ε to identical numerical values across a representative range for both GKAN and PU-GKAN while holding all other implementation choices constant and will report the resulting performance differences. revision: yes
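The fixed-ε ablation described in this response could be prototyped along the following lines. This is a toy sketch, not the paper's experiment: it fits a single edge by ridge-regularized least squares instead of training a KAN, and the Gaussian form exp(-((x - c)/ε)²), the target function, the center grid, the ε values, the ridge constant, and all function names are assumptions made for illustration.

```python
import math


def features(x, centers, eps, normalize):
    """Gaussian features on one edge; Shepard-normalized when requested."""
    phi = [math.exp(-((x - c) / eps) ** 2) for c in centers]
    if not normalize:
        return phi
    total = sum(phi)
    return [p / total for p in phi]


def solve(A, b):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= factor * M[col][k]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(M[i][k] * x[k] for k in range(i + 1, n))
        x[i] = (M[i][n] - tail) / M[i][i]
    return x


def validation_rmse(target, centers, eps, normalize, x_train, x_val, ridge=1e-8):
    """Fit coefficients on x_train via ridge normal equations; score on x_val."""
    Phi = [features(x, centers, eps, normalize) for x in x_train]
    m = len(centers)
    gram = [[sum(row[i] * row[j] for row in Phi) + (ridge if i == j else 0.0)
             for j in range(m)] for i in range(m)]
    rhs = [sum(row[i] * target(x) for row, x in zip(Phi, x_train))
           for i in range(m)]
    coeffs = solve(gram, rhs)
    sq_err = 0.0
    for x in x_val:
        pred = sum(a * f for a, f in zip(coeffs, features(x, centers, eps, normalize)))
        sq_err += (pred - target(x)) ** 2
    return math.sqrt(sq_err / len(x_val))


# Hypothetical setup: identical eps values, centers, and data for both variants.
centers = [j / 7 for j in range(8)]
x_train = [i / 39 for i in range(40)]
x_val = [(i + 0.5) / 40 for i in range(40)]


def target(x):
    return math.sin(2 * math.pi * x)


results = {}
for eps in (0.1, 0.3, 1.0):
    for normalize in (False, True):
        results[(eps, normalize)] = validation_rmse(
            target, centers, eps, normalize, x_train, x_val)
```

Each key of `results` pairs an ε value with a normalization flag, so the two variants can be compared at exactly the same scale. Only the normalization differs between the two runs at a given ε, which is the isolation the referee asks for.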

Referee: The reported improvements in validation accuracy and training stability are stated without accompanying quantitative tables, error bars, data-split details, hyperparameter-search protocol, or statistical-significance tests (abstract and numerical-experiments summary). This absence prevents assessment of effect sizes and reproducibility, directly undermining the claim that benefits “persist across” the listed sweeps.

Authors: We acknowledge that the current presentation of numerical results lacks the quantitative detail needed for full assessment of effect sizes and reproducibility. In the revised manuscript we will expand the numerical-experiments section to include quantitative tables reporting mean validation accuracies together with standard deviations computed over multiple independent runs, explicit descriptions of the data-split procedures, the hyperparameter-search protocol employed, and statistical significance tests (e.g., paired t-tests) comparing GKAN and PU-GKAN. These additions will directly support the claims that the observed benefits persist across the reported sweeps. revision: yes
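As an illustration of the paired comparison mentioned here, the following sketch computes a paired t statistic from per-run validation scores of the two models. The function name and interface are hypothetical, and the sketch returns only the statistic and its degrees of freedom; converting it to a p-value needs a Student t distribution, which the Python standard library does not provide.

```python
import math
import statistics


def paired_t_statistic(scores_a, scores_b):
    """Paired t statistic for matched runs (same seed and split for both models).

    Returns (t, degrees_of_freedom). A positive t means scores_a is larger
    on average than scores_b.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("paired samples must have the same length")
    if len(scores_a) < 2:
        raise ValueError("need at least two paired runs")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_diff == 0.0:
        raise ValueError("all paired differences are identical; t is undefined")
    n = len(diffs)
    return mean_diff / (sd_diff / math.sqrt(n)), n - 1
```

Pairing by run matters here: both models should share the seed, data split, and ε value within each pair, so that the differences reflect the normalization and not run-to-run noise.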

Circularity Check

No circularity; formulation and claims are independent of inputs

The paper constructs both GKAN and PU-GKAN explicitly from finite-feature expansions and additive-kernel representations, yielding explicit layer kernels and feature matrices as direct consequences of the definitions. The ε scale-selection rule is presented as a practical heuristic derived from overlap and conditioning properties of the feature matrix, not fitted to target performance. All reported benefits are empirical outcomes from numerical experiments across multiple regimes, with no step in the derivation chain reducing a claimed result to a fitted parameter or self-referential definition. No self-citations appear as load-bearing premises.

Axiom & Free-Parameter Ledger

free parameters (1)

- ε (Gaussian scale)

axioms (1)

- standard math: Normalized basis functions form a partition of unity and therefore reproduce constants exactly at the edge level.
