Recognition: unknown

RAS: a Reliability Oriented Metric for Automatic Speech Recognition

Pith reviewed 2026-05-07 17:48 UTC · model grok-4.3

The pith

ASR models can be trained to abstain from uncertain segments using a human-calibrated reliability metric that improves trustworthiness without losing much accuracy.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

We introduce an abstention-aware transcription framework that enables ASR models to explicitly abstain from uncertain segments. RAS is a reliability-oriented metric that balances transcription informativeness and error aversion, with its trade-off parameter calibrated by human preference. Models are trained through supervised bootstrapping followed by reinforcement learning, yielding substantial improvements in transcription reliability while maintaining competitive accuracy.

What carries the argument

RAS metric, which scores ASR output by trading off informativeness against error aversion with a parameter set according to human preference.

Load-bearing premise

Human preferences used to set the RAS trade-off parameter are stable and representative enough that optimizing for them produces reliable behavior in new conditions.

What would settle it

A controlled user study in which listeners rate whether RAS-trained models produce fewer misleading outputs than standard models on the same noisy audio, or whether abstentions instead reduce overall usefulness.

Figures

read the original abstract

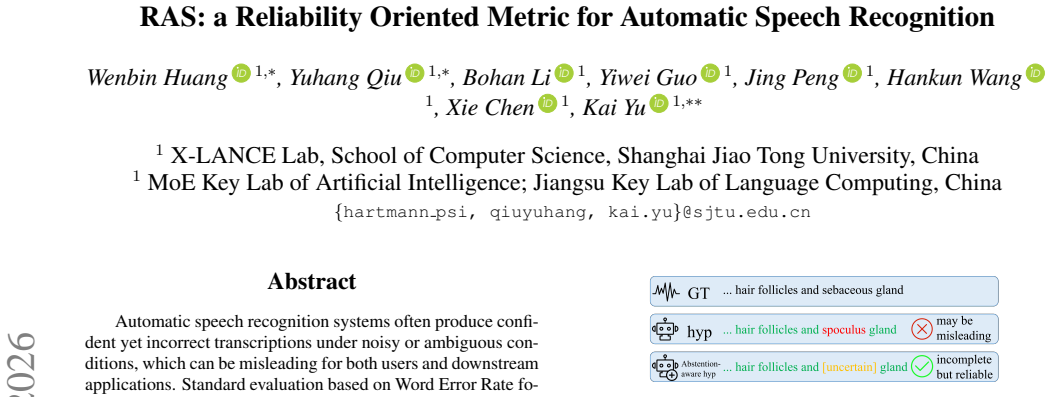

Automatic speech recognition systems often produce confident yet incorrect transcriptions under noisy or ambiguous conditions, which can be misleading for both users and downstream applications. Standard evaluation based on Word Error Rate focuses solely on accuracy and fails to capture transcription reliability. We introduce an abstention-aware transcription framework that enables ASR models to explicitly abstain from uncertain segments. To evaluate reliability under abstention, we propose RAS, a reliability-oriented metric that balances transcription informativeness and error aversion, with its trade-off parameter calibrated by human preference. We then train an abstention-aware ASR model through supervised bootstrapping followed by reinforcement learning. Our experiments demonstrate substantial improvements in transcription reliability while maintaining competitive accuracy.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces an abstention-aware transcription framework for ASR that permits models to abstain from uncertain segments rather than producing potentially erroneous output. It defines RAS as a reliability-oriented metric balancing informativeness against error aversion, with the single trade-off parameter calibrated from human preferences, and trains the model via supervised bootstrapping followed by reinforcement learning. Experiments are reported to yield substantial gains in transcription reliability while preserving competitive accuracy.

Significance. If the RAS metric proves stable and the abstention behavior generalizes, the framework could meaningfully improve ASR reliability in noisy or ambiguous settings by reducing misleading transcriptions for users and downstream tasks. The explicit incorporation of abstention and human-calibrated reliability evaluation represents a constructive direction beyond standard WER-based assessment.

major comments (3)

- [§3] §3 (RAS definition): the metric is described as balancing informativeness and error aversion via a human-calibrated trade-off parameter, yet no explicit equation or derivation is supplied that would allow verification of whether the final RAS value remains independent of the calibration data or introduces circularity.

- [§4.2] §4.2 (preference calibration): the trade-off parameter is set by human preferences, but the manuscript reports neither inter-rater agreement statistics, held-out preference validation, nor robustness checks across accents/noise conditions; this step is load-bearing for the claim that RL optimization produces generalizable abstention behavior.

- [§5] §5 (experiments): the claimed 'substantial improvements' in reliability are presented without error bars, statistical significance tests, or ablation on the effect of the RAS parameter itself, preventing assessment of whether the gains are reproducible or attributable to the proposed method.

minor comments (2)

- [Abstract] Abstract: quantitative results (e.g., RAS scores or WER deltas) and the functional form of the RAS equation would strengthen the summary of contributions.

- [Notation] Notation: define all symbols for informativeness and error-aversion terms at first use and maintain consistency across equations and text.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback on our manuscript. The comments identify important areas for improving clarity, rigor, and reproducibility. We address each major comment below and will incorporate revisions to strengthen the paper.

read point-by-point responses

-

Referee: [§3] §3 (RAS definition): the metric is described as balancing informativeness and error aversion via a human-calibrated trade-off parameter, yet no explicit equation or derivation is supplied that would allow verification of whether the final RAS value remains independent of the calibration data or introduces circularity.

Authors: We agree that an explicit equation and derivation are necessary for verification. The RAS metric is formulated as a linear combination of an informativeness term (fraction of segments transcribed) and an error-aversion term (error rate on transcribed segments), with the single scalar trade-off parameter set once via human calibration. The calibration step determines only the operating point and does not enter the per-instance RAS computation, so the metric value on any fixed test set is independent of the calibration data. In the revised manuscript we will add the precise equation together with a short derivation showing this separation. revision: yes

-

Referee: [§4.2] §4.2 (preference calibration): the trade-off parameter is set by human preferences, but the manuscript reports neither inter-rater agreement statistics, held-out preference validation, nor robustness checks across accents/noise conditions; this step is load-bearing for the claim that RL optimization produces generalizable abstention behavior.

Authors: We acknowledge that the human-preference calibration section lacks the supporting statistics the referee requests. The original study collected preferences from multiple annotators on a range of audio conditions, yet inter-rater agreement, held-out validation, and cross-condition robustness were not reported. In the revision we will add these analyses (including agreement coefficients and results on held-out preference sets) and will include additional experiments confirming that the calibrated parameter yields stable abstention behavior under varied accents and noise levels. revision: yes

-

Referee: [§5] §5 (experiments): the claimed 'substantial improvements' in reliability are presented without error bars, statistical significance tests, or ablation on the effect of the RAS parameter itself, preventing assessment of whether the gains are reproducible or attributable to the proposed method.

Authors: We agree that the experimental results would be more convincing with the requested statistical details. The reported gains were obtained from multiple training runs, but error bars, significance tests, and an explicit ablation on the RAS trade-off parameter were omitted. In the revised version we will include standard-deviation error bars, paired statistical tests, and an ablation study that varies the RAS parameter while holding other factors fixed, thereby clarifying both reproducibility and the contribution of the proposed metric. revision: yes

Circularity Check

No significant circularity detected in RAS derivation or training pipeline

full rationale

The paper defines RAS as a metric balancing informativeness and error aversion via a trade-off parameter set by external human preference data. It then applies supervised bootstrapping followed by RL to train an abstention-aware ASR model. No equations, self-citations, or steps are shown that reduce the claimed reliability improvements or metric values to the inputs by construction; human preferences function as an independent calibration source rather than a fitted internal loop. The empirical demonstrations remain falsifiable against held-out data and external benchmarks, satisfying the criteria for a non-circular proposal.

Axiom & Free-Parameter Ledger

free parameters (1)

- RAS trade-off parameter

Reference graph

Works this paper leans on

-

[1]

These outputs are frequently the result of forced decod- ing under weak acoustic evidence, yielding errors that appear confident rather than explicitly uncertain

Introduction Modern automatic speech recognition (ASR) systems achieve high accuracy in clean acoustic conditions, yet they often still produce superficially fluent transcripts in the presence of noise, overlapping speech, signal degradation, or low-resource set- tings. These outputs are frequently the result of forced decod- ing under weak acoustic evide...

-

[2]

using RAS as the reward—we significantly enhance system reliability. Experimental results demonstrate that our approach substantially improves transcription trustworthiness, particu- arXiv:2604.24278v2 [cs.SD] 28 Apr 2026 larly in low-resource and noisy conditions, while maintaining competitive accuracy. Our main contributions are summarized as follows: •...

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[3]

absorbing

Reliability-Aware Score To equip ASR models with explicit rejection capability, we ex- tend the original vocabulary with a special placeholder mark PH. Unlike ordinary lexical words, thePHrepresents ab- stention: when the model encounters low-quality or ambiguous speech and cannot reliably determine the underlying content, it outputsPHto indicate uncertai...

-

[4]

Training Abstention-Aware ASR Our training pipeline is designed in two consecutive stages to enhance the model’s uncertainty awareness and self-correction capabilities. 3.1. Stage 1: placeholder supervision (PH-Supv) The primary objective of this stage is to construct a dataset that guides the base model to identify and flag prediction errors. 3.1.1. Trai...

-

[5]

Experiments 4.1. Datasets We conduct experiments on two datasets: LibriSpeech [22], a widely used English audiobook corpus, and the TALCS Cor- pus [27], an English-Mandarin code-switching dataset. For Lib- riSpeech, we utilize train-clean-360 for training and test-clean for evaluation. To assess ASR reliability under adverse acous- tic conditions, we simu...

-

[6]

We propose the RAS, a prin- cipled metric calibrated via human preferences to balance infor- mativeness with error aversion

Conclusion This work redefines ASR reliability by introducing placeholder- based abstention, shifting the paradigm from speculative tran- scription to risk-aware reporting. We propose the RAS, a prin- cipled metric calibrated via human preferences to balance infor- mativeness with error aversion. By implementing an abstention- aware training pipeline, we ...

-

[7]

We utilize these tools to improve lin- guistic clarity and assist in technical debugging

Generative AI Use Disclosure Generative AIs are used in this work for manuscript polishing and code troubleshooting. We utilize these tools to improve lin- guistic clarity and assist in technical debugging. The conceptual framework, experimental design, and final writing are entirely conducted by human authors, who take full responsibility for the content...

-

[8]

An optimum character recognition system using de- cision functions,

C. K. Chow, “An optimum character recognition system using de- cision functions,”IRE Transactions on Electronic Computers, vol. EC-6, no. 4, pp. 247–254, 1957

1957

-

[9]

Calibrated structured prediction,

V . Kuleshov and P. Liang, “Calibrated structured prediction,” in Proceedings of the 29th International Conference on Neural In- formation Processing Systems - V olume 2, ser. NIPS’15. Cam- bridge, MA, USA: MIT Press, 2015, p. 3474–3482

2015

-

[10]

Reducing tool hallucination via reliability alignment,

H. Xu, Z. Zhu, L. Pan, Z. Wang, S. Zhu, D. Ma, R. Cao, L. Chen, and K. Yu, “Reducing tool hallucination via reliability alignment,” inProceedings of the 42nd International Conference on Machine Learning, ser. Proceedings of Machine Learning Research, vol

-

[11]

69 992–70 006

PMLR, 13–19 Jul 2025, pp. 69 992–70 006

2025

-

[12]

Re- jection improves reliability: Training LLMs to refuse unknown questions using RL from knowledge feedback,

H. Xu, Z. Zhu, S. Zhang, D. Ma, S. Fan, L. Chen, and K. Yu, “Re- jection improves reliability: Training LLMs to refuse unknown questions using RL from knowledge feedback,” inFirst Confer- ence on Language Modeling, 2024

2024

-

[13]

Abstention is all you need,

E. Sch ¨onw¨alder, C. Falkenberg, C. Hartmann, and W. Lehner, “Abstention is all you need,”2025 IEEE 12th International Con- ference on Data Science and Advanced Analytics (DSAA), pp. 1– 10, 2025

2025

-

[14]

En- hancing LLM reliability via explicit knowledge boundary model- ing,

H. Zheng, H. Xu, Y . Liu, S. Fan, L. Chen, P. Fung, and K. Yu, “En- hancing LLM reliability via explicit knowledge boundary model- ing,” inSecond Conference on Language Modeling, 2025

2025

-

[15]

Confidence measures for speech recognition: A sur- vey,

H. Jiang, “Confidence measures for speech recognition: A sur- vey,”Speech Communications, vol. 45, pp. 455–470, 2005

2005

-

[16]

An evaluation of word-level confidence estimation for end-to-end automatic speech recognition,

D. Oneat ¸˘a, A. Caranica, A. Stan, and H. Cucu, “An evaluation of word-level confidence estimation for end-to-end automatic speech recognition,”2021 IEEE Spoken Language Technology Workshop (SLT), pp. 258–265, 2021

2021

-

[17]

ASR rescoring and confidence estimation with electra,

H. Futami, H. Inaguma, M. Mimura, S. Sakai, and T. Kawahara, “ASR rescoring and confidence estimation with electra,”2021 IEEE Automatic Speech Recognition and Understanding Work- shop (ASRU), pp. 380–387, 2021

2021

-

[18]

Word- level Confidence Estimation for CTC Models,

B. Naowarat, T. Kongthaworn, and E. Chuangsuwanich, “Word- level Confidence Estimation for CTC Models,” inInterspeech 2023, 2023, pp. 3297–3301

2023

-

[19]

Identifying and calibrat- ing overconfidence in noisy speech recognition,

M. Huo, Y . Zhang, and Y . Tang, “Identifying and calibrat- ing overconfidence in noisy speech recognition,”arXiv preprint arXiv:2509.07195, 2025

-

[20]

L. R. Rabiner,A tutorial on hidden Markov models and selected applications in speech recognition. San Francisco, CA, USA: Morgan Kaufmann Publishers Inc., 1990, p. 267–296

1990

-

[21]

The 1997 hub- 5ne evaluation plan for recognition of conversational speech over the telephone,

National Institute of Standards and Technology, “The 1997 hub- 5ne evaluation plan for recognition of conversational speech over the telephone,” https://catalog.ldc.upenn.edu/docs/LDC2002S25/ hub5nev3.htm, 1997, accessed Feb 14, 2026

1997

-

[22]

Binary codes capable of correcting deletions, insertions, and reversals,

V . I. Levenshtein, “Binary codes capable of correcting deletions, insertions, and reversals,”Soviet physics. Doklady, vol. 10, pp. 707–710, 1965

1965

-

[23]

Advocating character error rate for multilingual ASR evaluation,

D. Thennal, J. James, D. P. Gopinathet al., “Advocating character error rate for multilingual ASR evaluation,” inFindings of the As- sociation for Computational Linguistics: NAACL 2025, 2025, pp. 4926–4935

2025

-

[24]

From WER and RIL to MER and WIL: improved evaluation measures for connected speech recognition,

A. C. Morris, V . Maier, and P. Green, “From WER and RIL to MER and WIL: improved evaluation measures for connected speech recognition,” inInterspeech 2004, 2004, pp. 2765–2768

2004

-

[25]

BLEU: a method for automatic evaluation of machine translation,

K. Papineni, S. Roukos, T. Ward, and W.-J. Zhu, “BLEU: a method for automatic evaluation of machine translation,” inPro- ceedings of the 40th Annual Meeting of the Association for Com- putational Linguistics. Philadelphia, Pennsylvania, USA: Asso- ciation for Computational Linguistics, Jul. 2002, pp. 311–318

2002

-

[26]

ROUGE: A package for automatic evaluation of sum- maries,

C.-Y . Lin, “ROUGE: A package for automatic evaluation of sum- maries,” inText Summarization Branches Out. Barcelona, Spain: Association for Computational Linguistics, Jul. 2004, pp. 74–81

2004

-

[27]

BERTScore: Evaluating text generation with BERT,

T. Zhang, V . Kishore, F. Wu, K. Q. Weinberger, and Y . Artzi, “BERTScore: Evaluating text generation with BERT,” inInter- national Conference on Learning Representations, 2020

2020

-

[28]

Robust speech recognition via large-scale weak su- pervision,

A. Radford, J. W. Kim, T. Xu, G. Brockman, C. Mcleavey, and I. Sutskever, “Robust speech recognition via large-scale weak su- pervision,” inProceedings of the 40th International Conference on Machine Learning, ser. Proceedings of Machine Learning Re- search, vol. 202. PMLR, 23–29 Jul 2023, pp. 28 492–28 518

2023

-

[29]

R. S. Sutton, A. G. Bartoet al.,Reinforcement learning: An intro- duction. MIT press Cambridge, 1998, vol. 1, no. 1

1998

-

[30]

Lib- rispeech: An asr corpus based on public domain audio books,

V . Panayotov, G. Chen, D. Povey, and S. Khudanpur, “Lib- rispeech: An asr corpus based on public domain audio books,” in2015 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2015, pp. 5206–5210

2015

-

[31]

Alignment for efficient tool calling of large language models,

H. Xu, Z. Wang, Z. Zhu, L. Pan, X. Chen, S. Fan, L. Chen, and K. Yu, “Alignment for efficient tool calling of large language models,” inProceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, C. Christodoulopoulos, T. Chakraborty, C. Rose, and V . Peng, Eds. Suzhou, China: As- sociation for Computational Linguistics, Nov. 2...

2025

-

[32]

Beaqlejs: Html 5 and javascript based framework for the subjective evaluation of audio quality

S. Kraft and U. Z ¨olzer, “Beaqlejs: Html 5 and javascript based framework for the subjective evaluation of audio quality.” Linux Audio Conference, 05 2014

2014

-

[33]

Rank analysis of incomplete block designs: I. the method of paired comparisons,

R. A. Bradley and M. E. Terry, “Rank analysis of incomplete block designs: I. the method of paired comparisons,”Biometrika, vol. 39, no. 3/4, pp. 324–345, 1952

1952

-

[34]

DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models

Z. Shao, P. Wang, Q. Zhu, R. Xu, J. Song, X. Bi, H. Zhang, M. Zhang, Y . Li, Y . Wuet al., “Deepseekmath: Pushing the lim- its of mathematical reasoning in open language models,”arXiv preprint arXiv:2402.03300, 2024

work page internal anchor Pith review arXiv 2024

-

[35]

TALCS: An open-source Mandarin-English code-switching cor- pus and a speech recognition baseline,

C. Li, S. Deng, Y . Wang, G. Wang, Y . Gong, C. Chen, and J. Bai, “TALCS: An open-source Mandarin-English code-switching cor- pus and a speech recognition baseline,” inInterspeech 2022, 2022, pp. 1741–1745

2022

-

[36]

Medical ASR recording dataset,

Hani89, “Medical ASR recording dataset,” 2023. [Online]. Available: https://huggingface.co/datasets/Hani89/medical asr recording dataset

2023

-

[37]

The AMI meeting corpus,

I. Mccowan, J. Carletta, W. Kraaij, S. Ashby, S. Bourban, M. Flynn, M. Guillemot, T. Hain, J. Kadlec, V . Karaiskos, M. Kro- nenthal, G. Lathoud, M. Lincoln, A. Lisowska Masson, W. Post, D. Reidsma, and P. Wellner, “The AMI meeting corpus,”Int’l. Conf. on Methods and Techniques in Behavioral Research, 01 2005

2005

-

[38]

Decoupled weight decay regulariza- tion,

I. Loshchilov and F. Hutter, “Decoupled weight decay regulariza- tion,” inInternational Conference on Learning Representations, 2019

2019

-

[39]

Proximal Policy Optimization Algorithms

J. Schulman, F. Wolski, P. Dhariwal, A. Radford, and O. Klimov, “Proximal policy optimization algorithms,”arXiv preprint arXiv:1707.06347, 2017

work page internal anchor Pith review arXiv 2017

-

[40]

Adam: A method for stochastic opti- mization,

D. P. Kingma and J. Ba, “Adam: A method for stochastic opti- mization,” inInternational Conference on Learning Representa- tions (ICLR), 2015

2015

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.