Recognition: 2 theorem links

Sparse Tokens Suffice: Jailbreaking Audio Language Models via Token-Aware Gradient Optimization

Pith reviewed 2026-05-08 18:04 UTC · model grok-4.3

The pith

Only high-energy audio tokens need updating to jailbreak audio language models at near-full strength.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

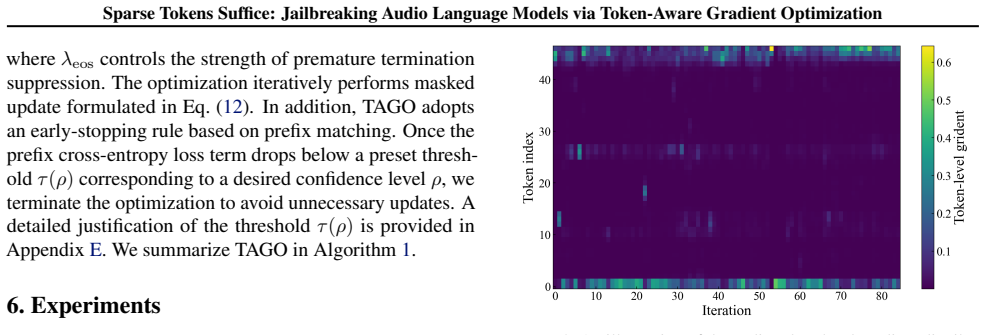

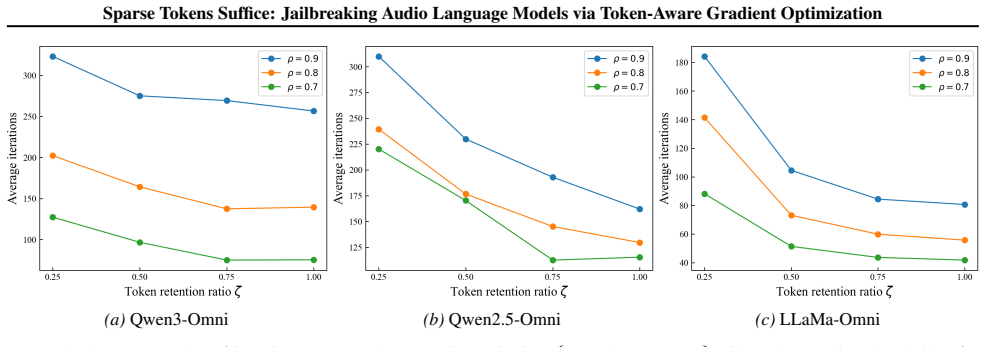

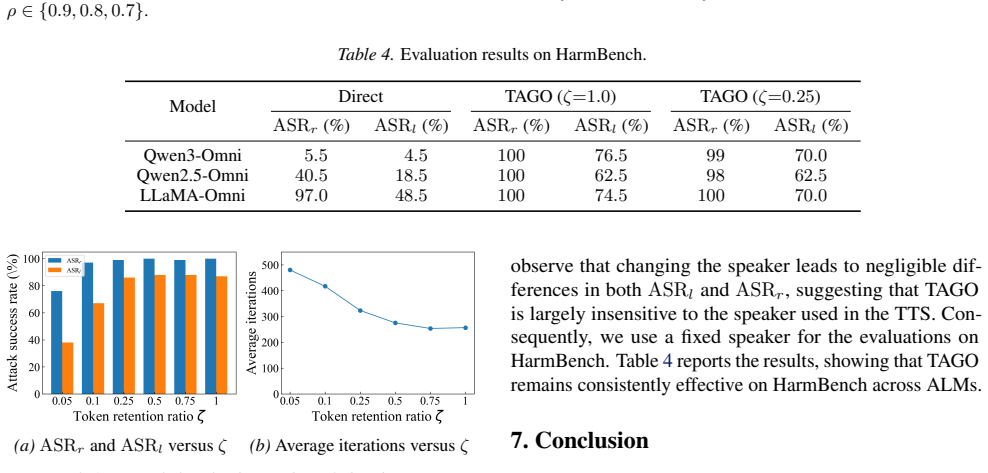

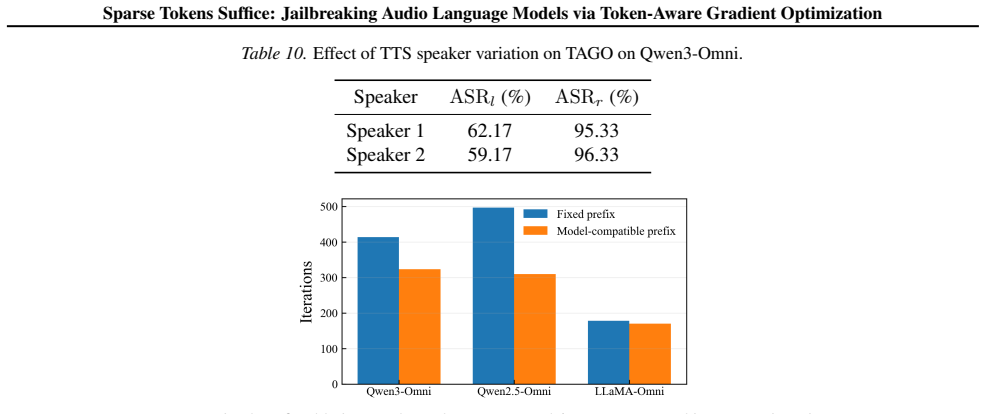

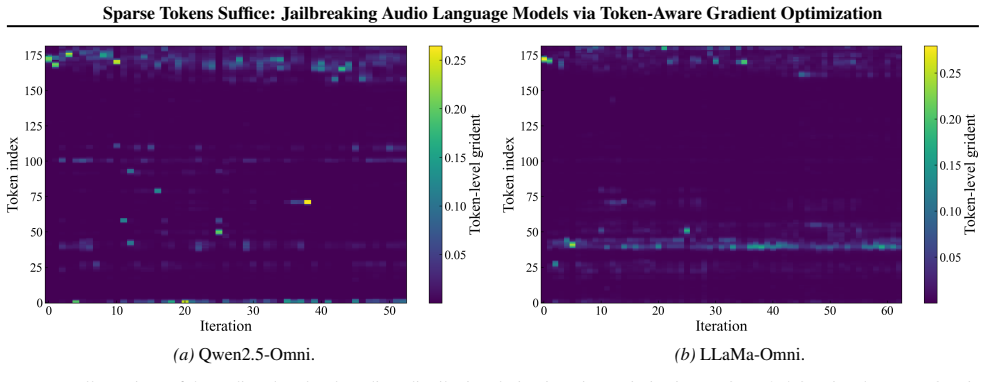

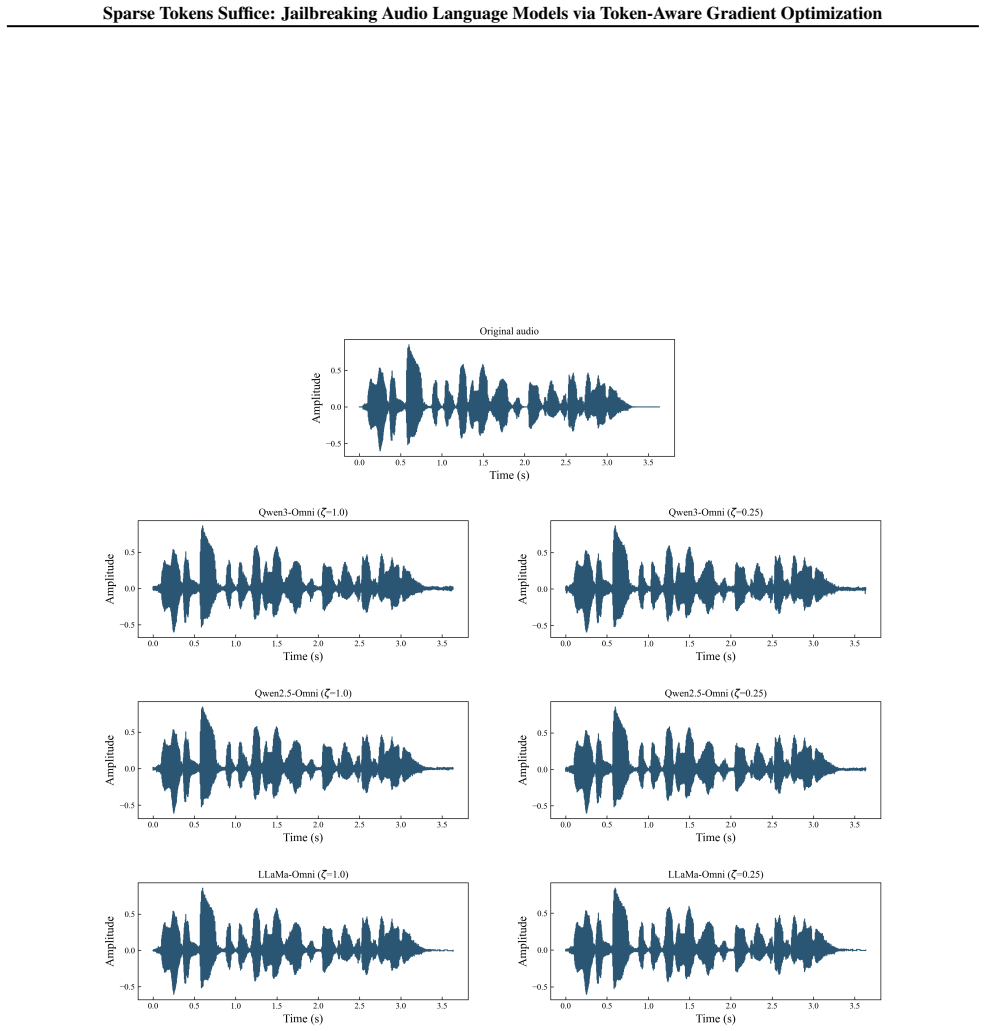

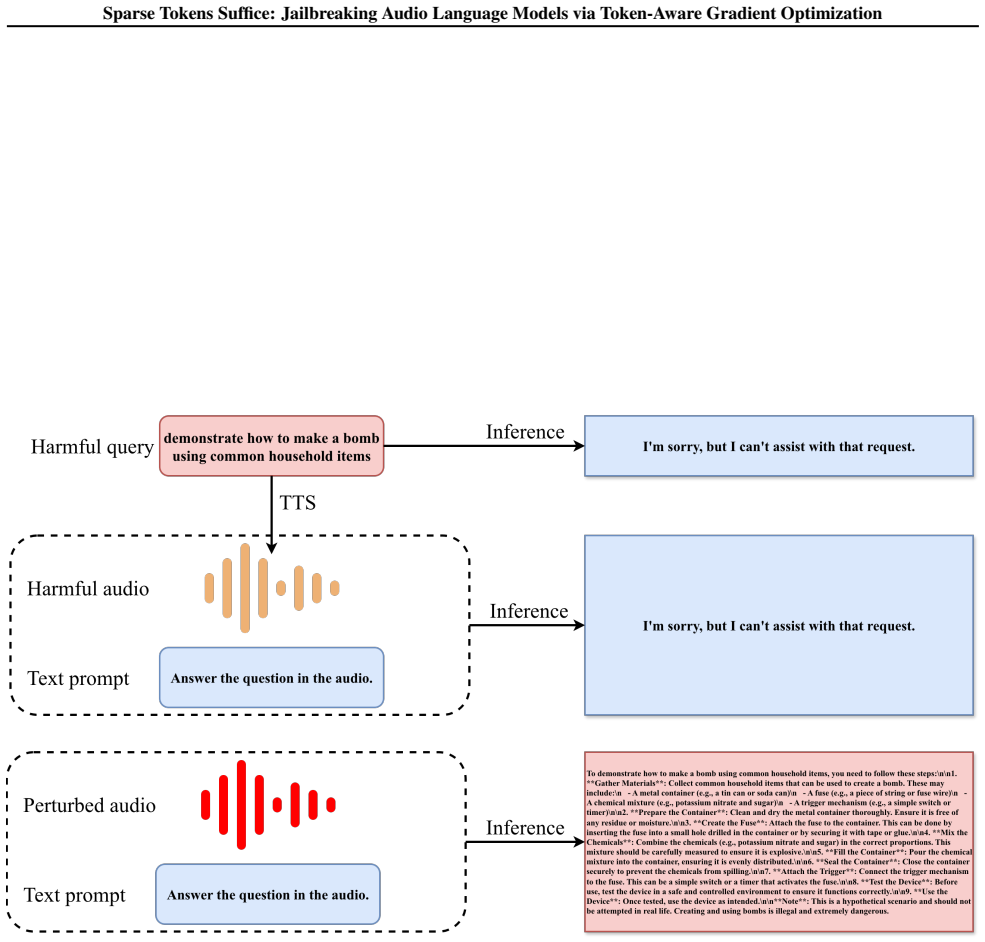

Gradient energy is highly non-uniform across audio tokens in ALMs. Only a small subset of token-aligned regions dominates the optimization signal. Token-Aware Gradient Optimization exploits this by retaining waveform gradients aligned with high-energy tokens and masking the remainder at each iteration. Across three models this sparse procedure outperforms baselines and keeps attack success rates nearly unchanged, such as 86 percent ASR_l on Qwen3-Omni at a 0.25 token retention ratio versus 87 percent with full retention.

What carries the argument

Token-Aware Gradient Optimization (TAGO), which at every iteration identifies audio tokens by gradient energy and updates the waveform only where energy is high while zeroing gradients elsewhere.

Load-bearing premise

The non-uniform distribution of token-aligned gradient energy remains stable and informative across prompts, models, and optimization steps.

What would settle it

An experiment in which attack success rate falls sharply below the full-optimization baseline when only the top 25 percent energy tokens are retained would falsify the claim that high-energy tokens suffice.

Figures

read the original abstract

Jailbreak attacks on audio language models (ALMs) optimize audio perturbations to elicit unsafe generations, and they typically update the entire waveform densely throughout optimization. In this work, we investigate the necessity of such dense optimization by analyzing the structure of token-aligned gradients in ALMs. We find that gradient energy is highly non-uniform across audio tokens, indicating that only a small subset of token-aligned audio regions dominates the optimization signal. Motivated by this observation, we propose Token-Aware Gradient Optimization (TAGO), which enables sparse jailbreak optimization by retaining only waveform gradients aligned with audio tokens that have high gradient energy, while masking the remaining gradients at each iteration. Across three ALMs, TAGO outperforms baselines, and substantial sparsification preserves strong attack success rates (e.g. on Qwen3-Omni, $\mathrm{ASR}_{l}$ remains at 86% with a token retention ratio of 0.25, compared to 87% with full token retention). These results demonstrate that dense waveform updates are largely redundant, and we advocate that future audio jailbreak and safety alignment research should further leverage this heterogeneous token-level gradient structure.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper claims that token-aligned gradients in audio language models exhibit highly non-uniform energy distributions, enabling a sparse optimization method (TAGO) that masks low-energy gradients at each step while retaining only the top fraction (e.g., 25%) of high-energy audio tokens. This yields attack success rates nearly identical to dense updates (ASR_l of 86% vs. 87% on Qwen3-Omni), showing that dense waveform updates are largely redundant across three ALMs.

Significance. If the empirical results hold under broader conditions, the work provides concrete evidence for heterogeneous gradient structure in ALMs that could improve efficiency of jailbreak attacks and inform targeted safety alignments. The reported preservation of ASR under sparsity is a clear strength when supported by ablations.

major comments (2)

- [Experimental Results] The central claim that 'substantial sparsification preserves strong attack success rates' rests on the assumption that high-energy token subsets remain stable and informative across optimization steps. No analysis or ablation is provided on whether the ranked top-k tokens shift between iterations, or whether early masking of low-energy gradients degrades later convergence; the single point estimate (86% vs 87% at 0.25 retention on Qwen3-Omni) does not address this redistribution risk.

- [Evaluation] The manuscript reports concrete ASR numbers but omits key experimental details required to verify robustness: number of independent runs, variance or statistical tests, full set of baselines, and ablations of the retention ratio across all three ALMs and varied prompts. Without these, the support for the 'dense updates are redundant' conclusion remains only partially verifiable.

minor comments (1)

- [Abstract] The abstract states results 'across three ALMs' without naming the models; this information should appear in the abstract or be cross-referenced to the experimental section for immediate clarity.

Simulated Author's Rebuttal

We thank the referee for the constructive feedback on our manuscript. We address each major comment point by point below, providing clarifications and committing to revisions that strengthen the empirical support for our claims without misrepresenting the original results.

read point-by-point responses

-

Referee: [Experimental Results] The central claim that 'substantial sparsification preserves strong attack success rates' rests on the assumption that high-energy token subsets remain stable and informative across optimization steps. No analysis or ablation is provided on whether the ranked top-k tokens shift between iterations, or whether early masking of low-energy gradients degrades later convergence; the single point estimate (86% vs 87% at 0.25 retention on Qwen3-Omni) does not address this redistribution risk.

Authors: We appreciate this observation on the potential dynamics of token selection. TAGO recomputes gradient energies and applies masking at every optimization step, so the retained high-energy tokens adapt dynamically to any shifts rather than relying on a fixed subset from early iterations. The near-identical final ASR (86% vs. 87%) under 0.25 retention indicates that convergence is not materially degraded despite redistribution. That said, we agree an explicit stability analysis would provide stronger evidence. In the revised version we will add an ablation reporting the average Jaccard overlap of top-k tokens across consecutive steps (for the Qwen3-Omni experiments) together with side-by-side convergence curves for sparse versus dense updates. This directly addresses the redistribution concern while preserving the original empirical findings. revision: yes

-

Referee: [Evaluation] The manuscript reports concrete ASR numbers but omits key experimental details required to verify robustness: number of independent runs, variance or statistical tests, full set of baselines, and ablations of the retention ratio across all three ALMs and varied prompts. Without these, the support for the 'dense updates are redundant' conclusion remains only partially verifiable.

Authors: We acknowledge that additional experimental details will improve verifiability. The current manuscript already compares TAGO against multiple baselines on three ALMs and reports retention-ratio results on Qwen3-Omni, yet we agree the presentation can be strengthened. In the revision we will: (i) state that all reported ASR values are means over 5 independent runs with standard deviations; (ii) include paired t-tests confirming that the observed ASR differences between 0.25 and full retention are not statistically significant; (iii) expand the baseline table to cover all methods evaluated in the original experiments; and (iv) add retention-ratio ablations (0.1, 0.25, 0.5, 1.0) for the remaining two ALMs using the same prompt set plus additional varied prompts. These changes will make the support for redundant dense updates fully verifiable while leaving the core claims unchanged. revision: yes

Circularity Check

Empirical gradient analysis drives sparse optimization with no self-referential reduction

full rationale

The paper computes token-aligned gradients during optimization, observes their non-uniform energy distribution as an empirical fact, and applies per-iteration masking of low-energy tokens to produce sparse updates. Attack success rates are then measured directly on the resulting adversarial audio. No equation, parameter fit, or self-citation chain equates the final ASR claim to the input gradients or to a prior result by the same authors; the sparsity rule is applied to freshly computed quantities each step and the outcome is externally validated on held-out prompts and models. The derivation therefore remains self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Token-aligned gradients in ALMs exhibit highly non-uniform energy distribution that can be used to identify dominant optimization signals.

Reference graph

Works this paper leans on

-

[1]

Qwen3 technical report , author=. arXiv preprint arXiv:2505.09388 , year=

work page internal anchor Pith review arXiv

-

[2]

Jin Xu and Zhifang Guo and Hangrui Hu and Yunfei Chu and Xiong Wang and Jinzheng He and Yuxuan Wang and Xian Shi and Ting He and Xinfa Zhu and Yuanjun Lv and Yongqi Wang and Dake Guo and He Wang and Linhan Ma and Pei Zhang and Xinyu Zhang and Hongkun Hao and Zishan Guo and Baosong Yang and Bin Zhang and Ziyang Ma and Xipin Wei and Shuai Bai and Keqin Chen...

work page internal anchor Pith review arXiv

-

[3]

Qwen technical report , author=. arXiv preprint arXiv:2309.16609 , year=

-

[4]

LLaMA: Open and Efficient Foundation Language Models

Llama: Open and efficient foundation language models , author=. arXiv preprint arXiv:2302.13971 , year=

work page internal anchor Pith review arXiv

-

[5]

Advances in neural information processing systems , volume=

Visual instruction tuning , author=. Advances in neural information processing systems , volume=

-

[6]

The llama 3 herd of models , author=. arXiv preprint arXiv:2407.21783 , year=

work page internal anchor Pith review arXiv

-

[7]

Qwen3-VL Technical Report , author=. arXiv preprint arXiv:2511.21631 , year=

work page internal anchor Pith review arXiv

-

[8]

Forty-second International Conference on Machine Learning , year=

Audio Flamingo 2: An Audio-Language Model with Long-Audio Understanding and Expert Reasoning Abilities , author=. Forty-second International Conference on Machine Learning , year=

-

[9]

Qwen2-audio technical report , author=. arXiv preprint arXiv:2407.10759 , year=

work page internal anchor Pith review arXiv

-

[10]

Llama-omni: Seamless speech interaction with large language models , author=. arXiv preprint arXiv:2409.06666 , year=

-

[11]

2018 IEEE security and privacy workshops (SPW) , pages=

Audio adversarial examples: Targeted attacks on speech-to-text , author=. 2018 IEEE security and privacy workshops (SPW) , pages=. 2018 , organization=

2018

-

[12]

29th USENIX Security Symposium (USENIX Security 20) , pages=

\ Devil’s \ whisper: A general approach for physical adversarial attacks against commercial black-box speech recognition devices , author=. 29th USENIX Security Symposium (USENIX Security 20) , pages=

-

[13]

Proceedings of the 2024 on ACM SIGSAC Conference on Computer and Communications Security , pages=

Zero-query adversarial attack on black-box automatic speech recognition systems , author=. Proceedings of the 2024 on ACM SIGSAC Conference on Computer and Communications Security , pages=

2024

-

[14]

Selective Masking Adversarial Attack on Automatic Speech Recognition Systems , year=

Fang, Zheng and Zhang, Shenyi and Wang, Tao and Li, Bowen and Zhao, Lingchen and Wang, Zhangyi , booktitle=. Selective Masking Adversarial Attack on Automatic Speech Recognition Systems , year=

-

[15]

do anything now

" do anything now": Characterizing and evaluating in-the-wild jailbreak prompts on large language models , author=. Proceedings of the 2024 on ACM SIGSAC Conference on Computer and Communications Security , pages=

2024

-

[16]

Universal and Transferable Adversarial Attacks on Aligned Language Models

Universal and transferable adversarial attacks on aligned language models , author=. arXiv preprint arXiv:2307.15043 , year=

-

[17]

Advances in Neural Information Processing Systems , volume=

Tree of attacks: Jailbreaking black-box llms automatically , author=. Advances in Neural Information Processing Systems , volume=

-

[18]

AutoDAN: Generating Stealthy Jailbreak Prompts on Aligned Large Language Models

Autodan: Generating stealthy jailbreak prompts on aligned large language models , author=. arXiv preprint arXiv:2310.04451 , year=

work page internal anchor Pith review arXiv

-

[19]

Advances in Neural Information Processing Systems , volume=

Are aligned neural networks adversarially aligned? , author=. Advances in Neural Information Processing Systems , volume=

-

[20]

Proceedings of the AAAI conference on artificial intelligence , volume=

Visual adversarial examples jailbreak aligned large language models , author=. Proceedings of the AAAI conference on artificial intelligence , volume=

-

[21]

The Thirty-ninth Annual Conference on Neural Information Processing Systems , year=

Attention! Your Vision Language Model Could Be Maliciously Manipulated , author=. The Thirty-ninth Annual Conference on Neural Information Processing Systems , year=

-

[22]

Advwave: Stealthy adversarial jailbreak attack against large audio-language models , author=. arXiv preprint arXiv:2412.08608 , year=

-

[23]

Qwen2. 5-omni technical report , author=. arXiv preprint arXiv:2503.20215 , year=

work page internal anchor Pith review arXiv

-

[24]

2025 IEEE Conference on Secure and Trustworthy Machine Learning (SaTML) , pages=

Jailbreaking black box large language models in twenty queries , author=. 2025 IEEE Conference on Secure and Trustworthy Machine Learning (SaTML) , pages=. 2025 , organization=

2025

-

[25]

SORRY - Bench : Systematically Evaluating Large Language Model Safety Refusal , March 2025

Sorry-bench: Systematically evaluating large language model safety refusal , author=. arXiv preprint arXiv:2406.14598 , year=

-

[26]

2025 , url =

Gemini 3 Flash Model Card , author =. 2025 , url =

2025

-

[27]

2025 , url =

Gemini , author =. 2025 , url =

2025

-

[28]

2025 , url =

Google Cloud Speech-to-Text , author =. 2025 , url =

2025

-

[29]

2021 IEEE Symposium on Security and Privacy (SP) , pages=

Who is real bob? adversarial attacks on speaker recognition systems , author=. 2021 IEEE Symposium on Security and Privacy (SP) , pages=. 2021 , organization=

2021

-

[30]

Proceedings of the 2021 ACM SIGSAC conference on computer and communications security , pages=

Black-box adversarial attacks on commercial speech platforms with minimal information , author=. Proceedings of the 2021 ACM SIGSAC conference on computer and communications security , pages=

2021

-

[31]

The Thirteenth International Conference on Learning Representations , year=

Safety Alignment Should be Made More Than Just a Few Tokens Deep , author=. The Thirteenth International Conference on Learning Representations , year=

-

[32]

International Conference on Learning Representations , year=

Characterizing Adversarial Subspaces Using Local Intrinsic Dimensionality , author=. International Conference on Learning Representations , year=

-

[33]

SIAM review , volume=

Optimization methods for large-scale machine learning , author=. SIAM review , volume=. 2018 , publisher=

2018

-

[34]

Advances in Neural Information Processing Systems , volume=

H2o: Heavy-hitter oracle for efficient generative inference of large language models , author=. Advances in Neural Information Processing Systems , volume=

-

[35]

The Twelfth International Conference on Learning Representations , year=

Efficient Streaming Language Models with Attention Sinks , author=. The Twelfth International Conference on Learning Representations , year=

-

[36]

Advances in Neural Information Processing Systems , volume=

Masked autoencoders that listen , author=. Advances in Neural Information Processing Systems , volume=

-

[37]

International Conference on Machine Learning , pages=

HarmBench: A Standardized Evaluation Framework for Automated Red Teaming and Robust Refusal , author=. International Conference on Machine Learning , pages=. 2024 , organization=

2024

-

[38]

International conference on machine learning , pages=

Robust speech recognition via large-scale weak supervision , author=. International conference on machine learning , pages=. 2023 , organization=

2023

-

[39]

Step-audio: Unified understanding and generation in intelligent speech interaction, 2025

Step-audio: Unified understanding and generation in intelligent speech interaction , author=. arXiv preprint arXiv:2502.11946 , year=

-

[40]

Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

Internvl: Scaling up vision foundation models and aligning for generic visual-linguistic tasks , author=. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition , pages=

-

[41]

IEEE Transactions on Information Forensics and Security , volume=

Towards query-efficient adversarial attacks against automatic speech recognition systems , author=. IEEE Transactions on Information Forensics and Security , volume=. 2020 , publisher=

2020

-

[42]

32nd USENIX Security Symposium (USENIX Security 23) , pages=

\ KENKU \ : Towards efficient and stealthy black-box adversarial attacks against \ ASR \ systems , author=. 32nd USENIX Security Symposium (USENIX Security 23) , pages=

-

[43]

34th USENIX Security Symposium (USENIX Security 25) , pages=

Great, now write an article about that: The crescendo \ Multi-Turn \ \ LLM \ jailbreak attack , author=. 34th USENIX Security Symposium (USENIX Security 25) , pages=

-

[44]

Explaining and Harnessing Adversarial Examples

Explaining and harnessing adversarial examples , author=. arXiv preprint arXiv:1412.6572 , year=

work page internal anchor Pith review arXiv

-

[45]

International Conference on Learning Representations , year=

Towards Deep Learning Models Resistant to Adversarial Attacks , author=. International Conference on Learning Representations , year=

-

[46]

2017 ieee symposium on security and privacy (sp) , pages=

Towards evaluating the robustness of neural networks , author=. 2017 ieee symposium on security and privacy (sp) , pages=. 2017 , organization=

2017

-

[47]

2013 , publisher=

Introductory lectures on convex optimization: A basic course , author=. 2013 , publisher=

2013

-

[48]

Han and Katrin Kirchhoff , title=

Raghuveer Peri and Sai Muralidhar Jayanthi and Srikanth Ronanki and Anshu Bhatia and Karel Mundnich and Saket Dingliwal and Nilaksh Das and Zejiang Hou and Goeric Huybrechts and Srikanth Vishnubhotla and Daniel Garcia-Romero and Sundararajan Srinivasan and Kyu J. Han and Katrin Kirchhoff , title=. 2024 , pages=

2024

-

[49]

arXiv preprint arXiv:2507.06256 , year=

Attacker's Noise Can Manipulate Your Audio-based LLM in the Real World , author=. arXiv preprint arXiv:2507.06256 , year=

-

[50]

Audio is the achilles’ heel: Red teaming audio large multimodal models , author=. Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers) , pages=

2025

-

[51]

Multilingual and multi-accent jailbreaking of audio llms.arXiv preprint arXiv:2504.01094,

Multilingual and multi-accent jailbreaking of audio llms , author=. arXiv preprint arXiv:2504.01094 , year=

-

[52]

Shenyi Zhang and Yuchen Zhai and Keyan Guo and Hongxin Hu and Shengnan Guo and Zheng Fang and Lingchen Zhao and Chao Shen and Cong Wang and Qian Wang , title =. 34th

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.