Dependence on Early and Late Reverberation of Single-Channel Speaker Distance Estimation

Pith reviewed 2026-05-11 02:56 UTC · model grok-4.3

The pith

Single-channel speaker distance estimation relies on early reverberation cues without time calibration but switches to propagation delay when calibration is available.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

When time calibration is absent, the model extracts reverberation-based cues from the room impulse response. Early reflections are the most informative component: accuracy improves as early energy strengthens and degrades as reverberation lengthens. When time calibration is present, the model achieves centimeter-level accuracy (an MAE of 0.14 m in the abstract) by extracting only the propagation delay, independent of reverberation content.
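To see why a time reference makes the task nearly trivial, the sketch below converts a direct-path arrival into a distance. It is an illustration only, not the paper's model: it assumes sample 0 of the impulse response coincides with the moment of emission, a speed of sound of 343 m/s, and that the strongest tap is the direct path. The function name and the 16 kHz sampling rate are hypothetical.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at room temperature


def distance_from_delay(rir, fs, c=SPEED_OF_SOUND):
    """Distance implied by the direct-path delay of a time-calibrated RIR.

    Assumes index 0 is the emission instant and the strongest tap is the
    direct path (both are assumptions of this sketch).
    """
    onset = int(np.argmax(np.abs(rir)))  # sample index of the direct path
    return c * onset / fs                # delay in seconds times speed of sound


# Hypothetical example: direct path at sample 140, one reflection later.
rir = np.zeros(1000)
rir[140] = 1.0
rir[300] = 0.4
print(distance_from_delay(rir, fs=16000))
```

Under these assumptions one sample at 16 kHz corresponds to roughly 2 cm of path length, so the delay alone can support fine-grained distance estimates without any reverberation cue.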

What carries the argument

The argument rests on decomposing simulated room impulse responses into full, direct-only, no-late, and no-early versions, with the mixing time from the echo density function as the early/late boundary, and on testing each version across four calibration scenarios that range from synchronized capture with known source level to arbitrary onset with unknown level.
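A minimal sketch of such a decomposition is given below. It is not the authors' code. It assumes the mixing time is already known and measured from the direct-path arrival, that the direct path is the strongest tap plus a short window after it (2.5 ms here, a hypothetical choice), and that the no-early variant keeps the direct path. The function and key names are illustrative.

```python
import numpy as np


def decompose_rir(rir, fs, mixing_time_s, direct_window_s=0.0025):
    """Split an RIR into full, direct-only, no-late, and no-early variants.

    Assumptions of this sketch: the strongest tap is the direct path, the
    mixing time is measured from that tap, and 'no_early' keeps the direct
    path while zeroing the early reflections.
    """
    rir = np.asarray(rir, dtype=float)
    n = np.arange(rir.size)
    onset = int(np.argmax(np.abs(rir)))
    direct_end = onset + int(round(direct_window_s * fs))
    mix = onset + int(round(mixing_time_s * fs))

    direct = np.where(n <= direct_end, rir, 0.0)                # up to end of direct window
    early = np.where((n > direct_end) & (n <= mix), rir, 0.0)   # early reflections
    late = np.where(n > mix, rir, 0.0)                          # late reverberation

    return {
        "full": direct + early + late,
        "direct_only": direct,
        "no_late": direct + early,
        "no_early": direct + late,
    }
```

Because the three segments do not overlap, the full variant reproduces the input exactly, which gives a quick sanity check on any chosen boundary.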

If this is right

- Estimation accuracy improves with stronger early energy, as measured by higher direct-to-reverberant ratio and C50.

- Performance degrades in rooms with higher reverberation time T60.

- Early reflections remain the dominant cue when no time reference is available.

- The network discards all reverberation information once propagation delay is directly observable.
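The two early-energy measures named above can be computed directly from an impulse response. The sketch below uses common textbook definitions rather than anything confirmed by the paper: DRR as the energy in a short window around the strongest tap divided by the remaining energy, and C50 as the energy in the first 50 ms after the direct path divided by the energy after that. The 2.5 ms direct window is an assumed parameter, and the function names are hypothetical.

```python
import numpy as np


def drr_db(rir, fs, direct_window_s=0.0025):
    """Direct-to-reverberant ratio in dB (direct window is an assumption)."""
    rir = np.asarray(rir, dtype=float)
    onset = int(np.argmax(np.abs(rir)))
    half = int(round(direct_window_s * fs))
    lo, hi = max(onset - half, 0), onset + half + 1
    direct = np.sum(rir[lo:hi] ** 2)
    reverb = np.sum(rir ** 2) - direct   # everything outside the direct window
    return 10.0 * np.log10(direct / reverb)


def c50_db(rir, fs):
    """Clarity index C50 in dB: energy before vs. after 50 ms from the direct path."""
    rir = np.asarray(rir, dtype=float)
    onset = int(np.argmax(np.abs(rir)))
    split = onset + int(round(0.050 * fs))
    early = np.sum(rir[onset:split] ** 2)
    late = np.sum(rir[split:] ** 2)
    return 10.0 * np.log10(early / late)
```

Both functions divide by the reverberant or late energy, so they are undefined for a purely anechoic response; a caller would need to guard against that case.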

Where Pith is reading between the lines

- The same model may lose accuracy in real rooms whose mixing times differ from the simulated echo-density estimate.

- Hybrid systems could first detect whether a recording is time-calibrated and then route to either delay-based or reflection-based estimators.

- Adding explicit early-reflection features during training might increase robustness when calibration is partial or unknown.

Load-bearing premise

The mixing time derived from the echo density function correctly separates early reflections from late reverberation in simulated impulse responses, and the network's cue selection on those decomposed signals will behave the same way on real recordings.
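For readers unfamiliar with the echo density function, the sketch below shows one simplified way to compute it and to read off a mixing time. It follows the general idea of the Abel and Huang measure (the fraction of samples in a sliding window that exceed the window's standard deviation, normalized by the value expected for Gaussian noise), but uses a rectangular window and a 20 ms window length as assumptions; the paper's exact settings are not stated here. Function names are hypothetical.

```python
import numpy as np
from math import erfc, sqrt


def echo_density_profile(rir, fs, window_s=0.02):
    """Normalized echo density per sample (rectangular window, an assumption)."""
    rir = np.asarray(rir, dtype=float)
    half = int(round(window_s * fs / 2))
    expected = erfc(1.0 / sqrt(2.0))  # fraction beyond one std for Gaussian noise
    profile = np.zeros(rir.size)
    for n in range(rir.size):
        seg = rir[max(n - half, 0): n + half + 1]
        sigma = np.sqrt(np.mean(seg ** 2))
        profile[n] = np.mean(np.abs(seg) > sigma) / expected
    return profile


def mixing_time(rir, fs, window_s=0.02, threshold=1.0):
    """Seconds after the strongest tap at which the profile first reaches threshold."""
    rir = np.asarray(rir, dtype=float)
    profile = echo_density_profile(rir, fs, window_s)
    onset = int(np.argmax(np.abs(rir)))
    above = np.nonzero(profile[onset:] >= threshold)[0]
    return float(above[0]) / fs if above.size else None
```

A sparse response keeps the profile near zero, while noise-like late reverberation pushes it toward one, which is why the first crossing of one is a natural candidate for the early/late boundary.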

What would settle it

Run the trained model on real-room recordings with known source distances, apply controlled time shifts and level changes to simulate the calibration scenarios, and check whether the observed error patterns and cue reliance match the simulated decomposition results.
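The controlled time shifts and level changes could be applied along the following lines. This is a sketch under stated assumptions, not the paper's protocol: loss of time calibration is modeled by prepending a random amount of silence, loss of level calibration by a random gain, and the maximum shift (0.5 s) and gain range (-20 to 0 dB) are hypothetical values. The two intermediate scenarios (time-only and level-only calibration) are inferred from the two flags.

```python
import numpy as np


def apply_scenario(signal, fs, time_calibrated, level_calibrated, rng,
                   max_shift_s=0.5, gain_range_db=(-20.0, 0.0)):
    """Return a copy of `signal` degraded according to the calibration flags."""
    out = np.asarray(signal, dtype=float).copy()
    if not time_calibrated:
        # Arbitrary onset: prepend a random number of silent samples.
        shift = int(rng.integers(0, int(max_shift_s * fs) + 1))
        out = np.concatenate([np.zeros(shift), out])
    if not level_calibrated:
        # Unknown source level: apply a random gain drawn in dB.
        gain_db = rng.uniform(*gain_range_db)
        out = out * 10.0 ** (gain_db / 20.0)
    return out


# The four scenarios as (time_calibrated, level_calibrated) pairs.
SCENARIOS = [(True, True), (True, False), (False, True), (False, False)]
```

Running the same recordings through all four pairs with a fixed random seed would make the error patterns comparable across scenarios.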

Original abstract

Single-channel speaker distance estimation has recently achieved centimeter-level accuracy in simulated environments, yet it remains unclear which components of the room impulse response (RIR) the model exploits and how performance depends on the recording conditions. In this work, we decompose simulated RIRs into four variants (full, direct-only, no-late, and no-early) using the mixing time estimated from the echo density function as the boundary between early reflections and late reverberation. We define four calibration scenarios, from fully calibrated (synchronised capture, known source level) to fully uncalibrated (arbitrary onset, unknown level), and evaluate all combinations on a matched dataset. Results show that without time calibration, mean absolute error (MAE) increases to $1.29$ m and the model extracts reverberation-based cues, with early reflections emerging as the most informative component. Further analysis against DRR, $C_{50}$, and $T_{60}$ confirms that estimation accuracy improves with stronger early energy and degrades in highly reverberant environments. When time calibration is available, the model achieves a MAE of $0.14$ m by extracting the propagation delay alone, regardless of the RIR content.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript investigates the acoustic cues exploited by single-channel speaker distance estimation models by decomposing simulated room impulse responses (RIRs) into four variants (full, direct-only, no-late, no-early) with the mixing time from the echo density function as the early/late boundary. It evaluates all combinations under four calibration scenarios ranging from fully calibrated to uncalibrated, reporting an MAE of 1.29 m without time calibration (with early reflections as the dominant cue) and 0.14 m with time calibration (relying on propagation delay alone). Supporting analysis correlates estimation accuracy with DRR, C50, and T60, showing better performance with stronger early energy and degradation in reverberant conditions.

Significance. If the RIR decomposition holds, the results would meaningfully advance understanding of neural model behavior in acoustic signal processing by identifying early reflections as the primary cue for distance estimation in uncalibrated settings and quantifying robustness to reverberation. The concrete MAE figures (1.29 m and 0.14 m) together with explicit correlations to standard metrics (DRR, C50, T60) constitute a clear empirical contribution that could guide data augmentation strategies and model interpretability efforts.

major comments (1)

- [RIR Decomposition] RIR Decomposition (methods section): The boundary between early reflections and late reverberation is defined solely via mixing time estimated from the echo density function, yet no validation against energy-decay curves, image-source ground truth, or alternative estimators is provided. Because the central claim that early reflections are the most informative component rests on the relative MAE performance of the no-early versus no-late variants, this unvalidated partition is load-bearing for the attribution of reverberation-based cues.

minor comments (2)

- [Abstract] Abstract: The four calibration scenarios are referenced but not enumerated; a one-sentence listing would improve immediate clarity for readers.

- [Results] Results section: Acronyms DRR, C50, and T60 should be expanded at first use (or collected in a table) to aid readers outside core acoustics.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed review, which correctly identifies a methodological point that merits strengthening. We address the single major comment below and will incorporate the suggested validation to improve the robustness of our RIR decomposition.

Point-by-point responses

Referee: RIR Decomposition (methods section): The boundary between early reflections and late reverberation is defined solely via mixing time estimated from the echo density function, yet no validation against energy-decay curves, image-source ground truth, or alternative estimators is provided. Because the central claim that early reflections are the most informative component rests on the relative MAE performance of the no-early versus no-late variants, this unvalidated partition is load-bearing for the attribution of reverberation-based cues.

Authors: We agree that the absence of explicit validation for the mixing-time boundary is a limitation that weakens the attribution of distance cues to early reflections. Although the echo-density-function estimator is a standard method in room acoustics for identifying the transition to diffuse reverberation, we did not include direct comparisons in the original manuscript. In the revised version we will add a dedicated validation subsection that (i) compares the estimated mixing times against the knee point of the Schroeder energy-decay curves on a representative subset of rooms, (ii) contrasts them with ground-truth mixing times obtained from the known image-source geometry used to generate the RIRs, and (iii) reports the sensitivity of the reported MAE values to small shifts (±5 ms) around the estimated boundary. These additions will confirm that the early/late partition is appropriate and that the performance gap between the no-early and no-late conditions is not an artifact of the chosen threshold.

revision: yes
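The Schroeder energy-decay curve mentioned in item (i) is obtained by backward integration of the squared impulse response. The sketch below computes it and, as one possible use, fits a reverberation time from the -5 to -25 dB portion of the curve. The fitting range and function names are assumptions for illustration; the rebuttal does not specify how the knee point would be located.

```python
import numpy as np


def schroeder_edc_db(rir):
    """Energy-decay curve in dB via Schroeder backward integration."""
    energy = np.asarray(rir, dtype=float) ** 2
    edc = np.cumsum(energy[::-1])[::-1]  # remaining energy from each sample onward
    return 10.0 * np.log10(np.maximum(edc / edc[0], 1e-12))  # floored at -120 dB


def t60_from_edc(edc_db, fs, lo_db=-5.0, hi_db=-25.0):
    """Reverberation time from a line fit between lo_db and hi_db (assumed range)."""
    idx = np.nonzero((edc_db <= lo_db) & (edc_db >= hi_db))[0]
    slope, _ = np.polyfit(idx / fs, edc_db[idx], 1)  # decay rate in dB per second
    return -60.0 / slope


# Hypothetical check: an ideal exponential decay with T60 = 0.5 s.
fs = 8000
t = np.arange(fs) / fs
rir = 10.0 ** (-3.0 * t / 0.5)  # amplitude falls by 60 dB at t = 0.5 s
print(t60_from_edc(schroeder_edc_db(rir), fs))
```

Comparing the time at which this curve bends with the echo-density mixing time would be one way to carry out the cross-check the authors describe.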

Circularity Check

No circularity: empirical evaluation on simulated RIR decompositions

The paper reports measured MAE outcomes from a neural model evaluated on four RIR variants (full, direct-only, no-late, no-early) created by applying an echo-density mixing-time boundary. These performance numbers are experimental results, not quantities defined in terms of themselves or forced by prior fitted parameters. No self-citation chain, ansatz smuggling, or uniqueness theorem is invoked to justify the central claims. The decomposition method is treated as an input assumption whose validity can be checked externally; it does not make the reported accuracy differences tautological. This is a standard non-circular empirical study.

Axiom & Free-Parameter Ledger

axioms (1)

- Domain assumption: the mixing time from the echo density function separates early reflections from late reverberation.

Reference graph

Works this paper leans on

-

[1]

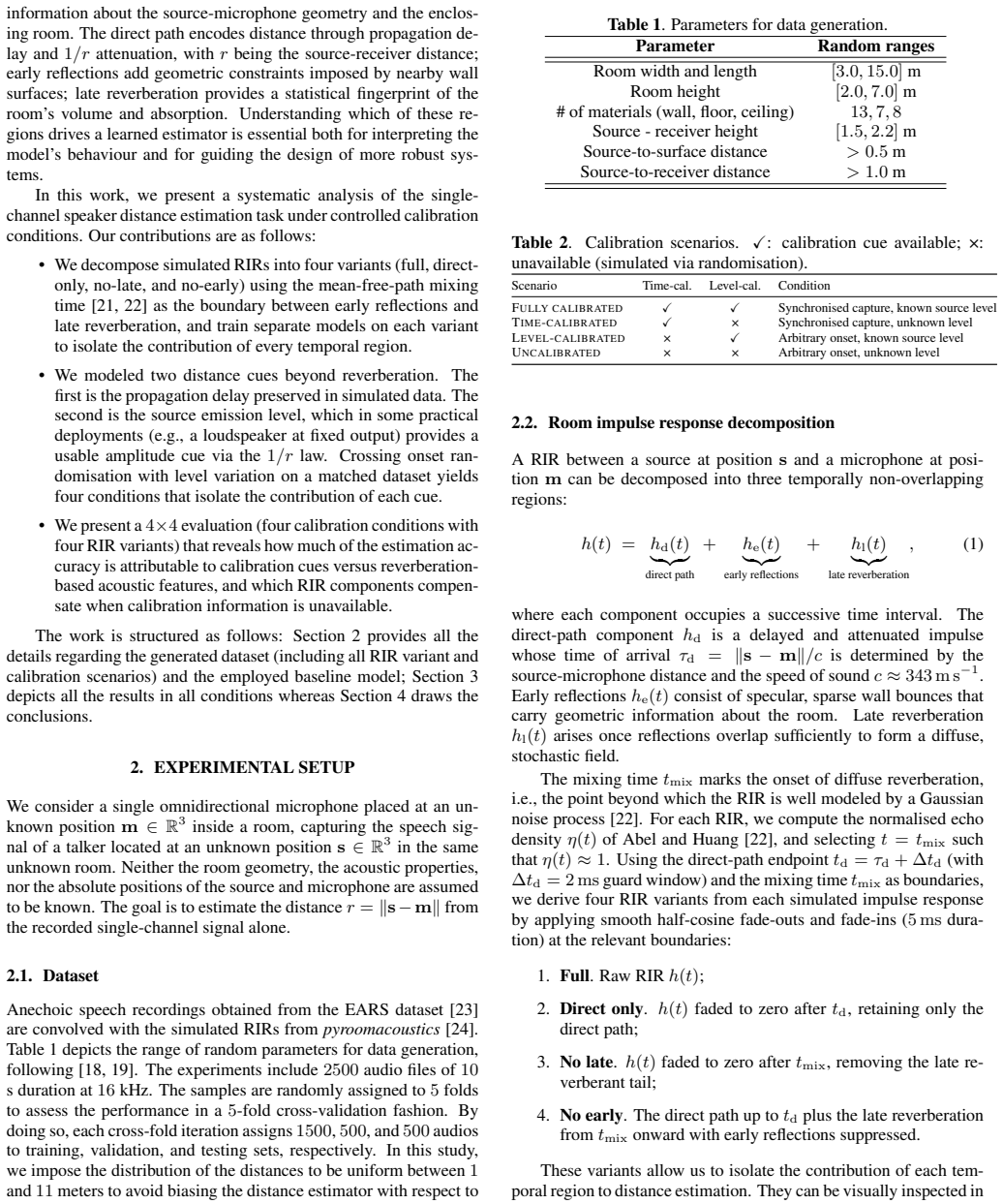

INTRODUCTION Estimating the distance of a sound source from a single-channel recording is a problem of practical interest in hearing aid devices [1], hands-free communication [2], and speech recognition [3]. Unlike direction of arrival (DoA) estimation, which humans solve reliably through binaural cues such as interaural time difference (ITD) and interaur...

-

[2]

EXPERIMENTAL SETUP We consider a single omnidirectional microphone placed at an unknown position $m \in \mathbb{R}^3$ inside a room, capturing the speech signal of a talker located at an unknown position $s \in \mathbb{R}^3$ in the same unknown room. Neither the room geometry, the acoustic properties, nor the absolute positions of the source and microphone are assumed to be known. T...

-

[3]

EXPERIMENTAL RESULTS In this section a study on which temporal component of the RIR is used in each scenario is analyzed. Further analysis is carried out on the effect of propagation delay and source level for the speaker distance estimation task. 3.1. Dependence on RIR components Figure 3 presents the performance of all four RIR variants under the uncali...

-

[4]

CONCLUSIONS This work presented a systematic analysis of how individual RIR components contribute to single-channel speaker distance estimation under different calibration conditions. When time calibration is available, the propagation delay alone yields MAE $\approx 0.14$ m and all RIR components become redundant. Level calibration provides negligible benefit in ...

-

[5]

Signal processing in high-end hearing aids: State of the art, challenges, and future trends,

V. Hamacher, J. Chalupper, J. Eggers, E. Fischer, U. Kornagel, H. Puder, and U. Rass, “Signal processing in high-end hearing aids: State of the art, challenges, and future trends,” EURASIP Journal on Advances in Signal Processing, vol. 2005, no. 18, pp. 1–15, 2005

-

[6]

Hands-free voice communication in an automobile with a microphone array,

S. Oh, V. Viswanathan, and P. Papamichalis, “Hands-free voice communication in an automobile with a microphone array,” in IEEE International Conference on Acoustics, Speech, and Signal Processing, 1992

-

[7]

Environmental conditions and acoustic transduction in hands-free speech recognition,

M. Omologo, P. Svaizer, and M. Matassoni, “Environmental conditions and acoustic transduction in hands-free speech recognition,” Speech Communication, vol. 25, no. 1-3, pp. 75–95, 1998

-

[8]

Auditory model based direction estimation of concurrent speakers from binaural signals,

M. Dietz, S. D. Ewert, and V. Hohmann, “Auditory model based direction estimation of concurrent speakers from binaural signals,” Speech Communication, vol. 53, no. 5, pp. 592–605, 2011

-

[9]

Auditory distance perception in humans: A summary of past and present research,

P. Zahorik, D. S. Brungart, and A. W. Bronkhorst, “Auditory distance perception in humans: A summary of past and present research,” Acta Acustica united with Acustica, vol. 91, no. 3, pp. 409–420, 2005

-

[10]

Modeling the perception of audiovisual distance: Bayesian causal inference and other models,

C. Mendonça, P. Mandelli, and V. Pulkki, “Modeling the perception of audiovisual distance: Bayesian causal inference and other models,” PloS one, vol. 11, no. 12, p. e0165391, 2016

-

[11]

Some experiments on the recognition of speech, with one and with two ears,

E. C. Cherry, “Some experiments on the recognition of speech, with one and with two ears,” The Journal of the Acoustical Society of America, vol. 25, no. 5, pp. 975–979, 1953

-

[12]

Speaker Distance Detection Using a Single Microphone,

E. Georganti, T. May, S. van de Par, A. Harma, and J. Mourjopoulos, “Speaker Distance Detection Using a Single Microphone,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 19, no. 7, pp. 1949–1961, 2011

-

[13]

Y. Lu and M. Cooke, “Binaural Estimation of Sound Source Distance via the Direct-to-Reverberant Energy Ratio for Static and Moving Sources,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 18, no. 7, pp. 1793–1805, 2010

-

[14]

Sound Source Distance Learning Based on Binaural Signals,

S. Vesa, “Sound Source Distance Learning Based on Binaural Signals,” in IEEE Workshop on Applications of Signal Processing to Audio and Acoustics (WASPAA), 2007

-

[15]

Binaural Sound Source Distance Learning in Rooms,

——, “Binaural Sound Source Distance Learning in Rooms,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 17, no. 8, pp. 1498–1507, 2009

-

[16]

Sound Source Distance Estimation in Rooms based on Statistical Properties of Binaural Signals,

E. Georganti, T. May, S. van de Par, and J. Mourjopoulos, “Sound Source Distance Estimation in Rooms based on Statistical Properties of Binaural Signals,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 21, no. 8, pp. 1727–1741, 2013

-

[17]

Distance-Based Sound Separation,

K. Patterson, K. Wilson, S. Wisdom, and J. R. Hershey, “Distance-Based Sound Separation,” in Interspeech, 2022

-

[18]

Joint direction and proximity classification of overlapping sound events from binaural audio,

D. A. Krause, A. Politis, and A. Mesaros, “Joint direction and proximity classification of overlapping sound events from binaural audio,” in IEEE Workshop on Applications of Signal Processing to Audio and Acoustics (WASPAA), 2021

-

[19]

Sound source distance estimation using deep learning: An image classification approach,

M. Yiwere and E. J. Rhee, “Sound source distance estimation using deep learning: An image classification approach,” Sensors, vol. 20, no. 1, p. 172, 2019

-

[20]

A Few-Shot Learning Approach for Sound Source Distance Estimation Using Relation Networks,

A. Sobhdel, R. Razavi-Far, and V. Palade, “A Few-Shot Learning Approach for Sound Source Distance Estimation Using Relation Networks,” in International Conference on Machine Learning and Applications (ICMLA), 2024

-

[21]

R. Venkatesan and A. Ganesh, “Analysis of monaural and binaural statistical properties for the estimation of distance of a target speaker,” Circuits, Systems, and Signal Processing, vol. 39, pp. 3626–3651, 2020

-

[22]

Single-Channel Speaker Distance Estimation in Reverberant Environments,

M. Neri, A. Politis, D. A. Krause, M. Carli, and T. Virtanen, “Single-Channel Speaker Distance Estimation in Reverberant Environments,” in IEEE Workshop on Applications of Signal Processing to Audio and Acoustics (WASPAA), 2023, pp. 1–5

-

[23]

Speaker Distance Estimation in Enclosures From Single-Channel Audio,

——, “Speaker Distance Estimation in Enclosures From Single-Channel Audio,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 32, pp. 2242–2254, 2024

-

[24]

Generative data augmentation challenge: Synthesis of room acoustics for speaker distance estimation,

J. Lin, G. Götz, H. S. Llopis, H. Hafsteinsson, S. Guðjónsson, D. G. Nielsen, F. Pind, P. Smaragdis, D. Manocha, J. Hershey, T. Kristjansson, and M. Kim, “Generative data augmentation challenge: Synthesis of room acoustics for speaker distance estimation,” in IEEE International Conference on Acoustics, Speech and Signal Processing Workshops (ICASSPW), 2025

- [25]

-

[26]

A simple, robust measure of reverberation echo density,

J. S. Abel and P. Huang, “A simple, robust measure of reverberation echo density,” in Audio Engineering Society Convention. Audio Engineering Society, 2006

-

[28]

EARS: An Anechoic Fullband Speech Dataset Benchmarked for Speech Enhancement and Dereverberation,

J. Richter, Y. Wu, S. Krenn, S. Welker, B. Lay, S. Watanabe, A. Richard, and T. Gerkmann, “EARS: An Anechoic Fullband Speech Dataset Benchmarked for Speech Enhancement and Dereverberation,” in Interspeech, 2024

-

[29]

Pyroomacoustics: A python package for audio room simulation and array processing algorithms,

R. Scheibler, E. Bezzam, and I. Dokmanić, “Pyroomacoustics: A python package for audio room simulation and array processing algorithms,” in IEEE ICASSP, 2018
