Modeling Decision-Making with Will for Cooperation in Social Dilemmas

Pith reviewed 2026-05-12 00:55 UTC · model grok-4.3

The pith

Agents with persistent 'will' that ignore local costs catalyze cooperation in social dilemmas where rational maximizers fail.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

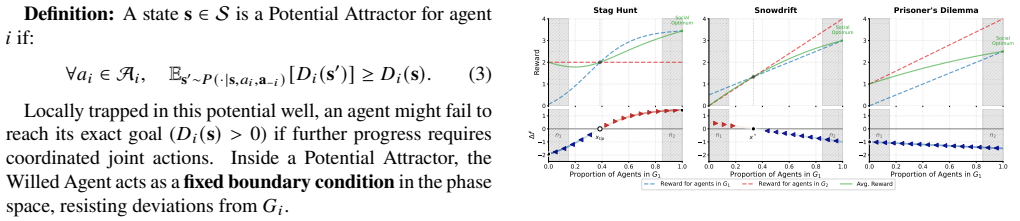

Willed agents, modeled as potential minimizers that pursue fixed goals while ignoring local utility fluctuations, shrink the feasible state space and function as boundary constraints that accelerate convergence in canonical social dilemmas. In spatiotemporal Stag Hunt simulations, they serve as cooperation catalysts that let groups surpass high-risk thresholds where continuous rational optimization collapses. Agents that autonomously suspend re-evaluation outperform continuous optimizers, and heterogeneous will strengths further increase cooperation rates.
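Why a risk threshold exists for a pure utility maximizer can be seen in a textbook two-action Stag Hunt. The following is a minimal sketch, not the paper's spatiotemporal game: the payoff values and the names `stag_threshold` and `best_response` are illustrative assumptions.

```python
from fractions import Fraction


def stag_threshold(stag_payoff, hare_payoff, sucker_payoff=0):
    """Fraction p of stag hunters at which stag and hare pay the same.

    Assumed payoffs (hypothetical, integer-valued):
      stag with a stag partner -> stag_payoff
      stag with a hare partner -> sucker_payoff
      hare, whatever the partner does -> hare_payoff
    """
    # Indifference: p * S + (1 - p) * F = H  =>  p = (H - F) / (S - F)
    return Fraction(hare_payoff - sucker_payoff, stag_payoff - sucker_payoff)


def best_response(p, stag_payoff, hare_payoff, sucker_payoff=0):
    """Action a myopic utility maximizer picks when a fraction p hunts stag."""
    expected_stag = p * stag_payoff + (1 - p) * sucker_payoff
    return "stag" if expected_stag >= hare_payoff else "hare"
```

With the assumed payoffs 4 (stag) and 3 (hare), the threshold is 3/4: an agent that re-evaluates every step and believes only half the group hunts stag switches to hare, which is the kind of collapse the core claim attributes to continuous optimization.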

What carries the argument

Will formalized as potential minimizers; this mechanism imposes persistent goal pursuit that contracts the reachable state space and supplies boundary constraints accelerating cooperative equilibria.

Load-bearing premise

That formalizing will as goal persistence while ignoring local costs will shrink the state space and produce higher cooperation rates than pure utility maximization.

What would settle it

A replication of the spatiotemporal Stag Hunt simulations in which groups composed solely of continuous utility maximizers reach cooperative equilibria at the same risk thresholds as groups that include willed agents.

Original abstract

Standard rational actor models often attribute cooperation failures in social dilemmas to insufficient incentives, overlooking the destabilizing effects of continuous utility maximization. To address this, we propose a framework of "will" defined as a mechanism that persistently pursues goals while ignoring local cost-benefit fluctuations. We formalize the Willed Agents as potential minimizers, distinguishing them from cumulative utility maximization. Dynamical analysis of infinite population demonstrates that willed agents shrink the feasible state space, acting as boundary constraints that accelerate convergence in canonical social dilemmas. Through multi-agent simulations in a spatiotemporal Stag Hunt Game, we show that willed agents function as "cooperation catalysts", enabling groups to surmount high-risk thresholds where purely utility maximization fails. We find that heterogeneous will strength promotes cooperation, and that agents who autonomously suspend rational re-evaluation can significantly outperform continuous optimizers. These findings suggest that successful cooperation relies on the cognitive capacity to strategically constrain calculation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes a framework of 'will' as a decision-making mechanism that persistently pursues goals while ignoring local cost-benefit fluctuations, formalized as potential minimization distinct from cumulative utility maximization. Dynamical analysis in infinite populations shows willed agents shrink the feasible state space and accelerate convergence in social dilemmas. Multi-agent simulations in a spatiotemporal Stag Hunt game claim that willed agents act as cooperation catalysts, enabling groups to surmount high-risk thresholds where pure utility maximization fails, with heterogeneous will strengths promoting cooperation and autonomous suspension of re-evaluation outperforming continuous optimizers.

Significance. If the formalization is shown to be goal-agnostic, the work offers a multi-scale (dynamical + simulation) approach to modeling cognitive constraints on calculation in multi-agent systems, potentially explaining cooperation beyond incentive-based rational actor models. The simulations provide concrete evidence of threshold-surmounting effects within the framework, and the heterogeneous will strength result is a falsifiable prediction worth testing.

major comments (3)

- [Formalization of Willed Agents] Formalization section (abstract and § on Willed Agents): The potential function for willed agents must be specified explicitly (e.g., via equation) to show it does not encode a pre-chosen cooperative target such as mutual stag hunting. If the potential is defined relative to an exogenous goal, the claimed state-space shrinking and catalysis reduce to properties of the definition rather than emergent from ignoring fluctuations, making the distinction from utility maximization non-load-bearing.

- [Dynamical analysis] Dynamical analysis of infinite population: The claim that potential minimizers act as boundary constraints requires the explicit dynamical equations (e.g., modified replicator or mean-field update) and a demonstration that the contraction of feasible states occurs independently of the specific cooperative equilibrium chosen.

- [Multi-agent simulations in spatiotemporal Stag Hunt Game] Multi-agent simulations section: The Stag Hunt results (catalysis at high-risk thresholds) need controls that isolate the 'ignoring local fluctuations' aspect, such as an ablation where agents minimize the same potential but re-evaluate continuously, plus reporting of will-strength parameterization, error bars, and sensitivity to initial conditions.
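The reporting requested in the last major comment (error bars per condition) could be computed along the following lines. This is a sketch under assumptions: the function names and the idea of one scalar cooperation rate per independent run are illustrative and are not taken from the paper.

```python
import math
import statistics


def mean_and_sem(values):
    """Return (mean, standard error of the mean) for one condition's runs."""
    if len(values) < 2:
        raise ValueError("need at least two runs to estimate a standard error")
    mean = statistics.fmean(values)
    # Sample standard deviation (n - 1 denominator) divided by sqrt(n).
    sem = statistics.stdev(values) / math.sqrt(len(values))
    return mean, sem


def summarize(runs_by_condition):
    """Map each condition name to its (mean, SEM) over independent runs."""
    return {name: mean_and_sem(runs) for name, runs in runs_by_condition.items()}
```

Called with a dictionary that maps each condition (for example the willed agents and the continuously re-evaluating ablation) to its list of per-run cooperation rates, `summarize` returns the pair of numbers needed for each error bar.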

minor comments (2)

- [Simulations] Ensure consistent terminology between 'will strength' (listed as a free parameter) and its implementation in the simulations; clarify whether it is fixed, evolved, or sampled.

- [Abstract] The abstract's phrase 'autonomously suspend rational re-evaluation' should be defined with reference to the potential-minimizer update rule to avoid ambiguity.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive report. The comments identify important points where the manuscript can be strengthened by greater explicitness in the formalization, dynamics, and simulation controls. We accept the recommendation for major revision and will incorporate the requested clarifications and additional analyses. Below we respond point by point.

- Referee: [Formalization of Willed Agents] Formalization section (abstract and § on Willed Agents): The potential function for willed agents must be specified explicitly (e.g., via equation) to show it does not encode a pre-chosen cooperative target such as mutual stag hunting. If the potential is defined relative to an exogenous goal, the claimed state-space shrinking and catalysis reduce to properties of the definition rather than emergent from ignoring fluctuations, making the distinction from utility maximization non-load-bearing.

  Authors: We agree that an explicit equation is required to make the formalization load-bearing. In the revised manuscript we will add the precise definition of the potential function V_i(s) for agent i, which is the squared Euclidean distance (or an analogous mismatch measure) between the current state s and the agent's fixed, exogenously chosen goal g_i. The goal g_i is selected independently of the social-dilemma payoff matrix and can be any persistent target (cooperative or otherwise). The dynamics then follow from persistent minimization of V_i while ignoring instantaneous utility fluctuations. We will also include a short proof sketch showing that the feasible-state contraction is a direct consequence of the hard constraint on re-evaluation frequency rather than of any special choice of g_i. This addresses the concern that the distinction from cumulative utility maximization is definitional rather than emergent.

  revision: yes

- Referee: [Dynamical analysis] Dynamical analysis of infinite population: The claim that potential minimizers act as boundary constraints requires the explicit dynamical equations (e.g., modified replicator or mean-field update) and a demonstration that the contraction of feasible states occurs independently of the specific cooperative equilibrium chosen.

  Authors: We will supply the missing explicit dynamical equations in the revision. The infinite-population model is a modified replicator dynamics in which the growth rate of each strategy is gated by the agent's current potential gradient: agents only update when the projected change in V_i exceeds a threshold, otherwise they remain fixed. We will derive the resulting mean-field ODE and show analytically that the feasible region contracts to a lower-dimensional manifold whose dimension depends only on the number of distinct goals, not on whether those goals coincide with the cooperative Nash equilibrium. Numerical integration of the ODE for both cooperative and non-cooperative goal choices will be added to confirm that the boundary-constraint effect is independent of the target equilibrium.

  revision: yes

- Referee: [Multi-agent simulations in spatiotemporal Stag Hunt Game] Multi-agent simulations section: The Stag Hunt results (catalysis at high-risk thresholds) need controls that isolate the 'ignoring local fluctuations' aspect, such as an ablation where agents minimize the same potential but re-evaluate continuously, plus reporting of will-strength parameterization, error bars, and sensitivity to initial conditions.

  Authors: We accept that these controls and reporting standards are necessary. In the revised version we will add: (i) an ablation condition in which agents minimize the identical potential function but re-evaluate at every time step (continuous optimizers), (ii) explicit parameterization of will strength (the re-evaluation threshold and the persistence parameter), (iii) mean and standard-error bars computed over at least 50 independent runs per condition, and (iv) a sensitivity analysis sweeping initial spatial configurations and will-strength distributions. These additions will isolate the contribution of autonomous suspension of re-evaluation and will allow readers to assess robustness directly.

  revision: yes
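The update rule described in the rebuttal (a squared-distance potential, with updates gated by a threshold on the projected change in V_i) can be sketched as follows. This is one possible reading, not the authors' implementation: the function names, the sign convention (an update must lower the potential by more than the threshold), and the use of a zero threshold to stand in for the continuously re-evaluating ablation are all assumptions.

```python
def potential(state, goal):
    """Squared Euclidean distance between a state and a fixed goal."""
    return sum((s - g) ** 2 for s, g in zip(state, goal))


def gated_step(state, candidate, goal, threshold):
    """Move to `candidate` only if it lowers the potential by more than `threshold`.

    threshold = 0 accepts any strict improvement (assumed stand-in for
    re-evaluating at every step); a larger threshold makes the agent hold
    its current state against small projected changes.
    """
    improvement = potential(state, goal) - potential(candidate, goal)
    return candidate if improvement > threshold else state
```

Under this reading, the same candidate move can be accepted by the zero-threshold agent and ignored by the gated one, which is the contrast the proposed ablation in (i) is meant to isolate.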

Circularity Check

Will as potential minimization embeds state-space shrinkage and cooperation catalysis by construction

specific steps

- self-definitional [Abstract]

  "We formalize the Willed Agents as potential minimizers, distinguishing them from cumulative utility maximization. Dynamical analysis of infinite population demonstrates that willed agents shrink the feasible state space, acting as boundary constraints that accelerate convergence in canonical social dilemmas."

  The formalization sets agents as potential minimizers that pursue goals while ignoring fluctuations; the subsequent 'demonstration' that this shrinks state space and accelerates convergence follows directly from the definition of potential minimization (trajectories are constrained to the potential's sublevel sets and end at its minima), with no additional independent mechanism introduced.

- self-definitional [Abstract]

  "Through multi-agent simulations in a spatiotemporal Stag Hunt Game, we show that willed agents function as 'cooperation catalysts', enabling groups to surmount high-risk thresholds where purely utility maximization fails."

  The simulation result is presented as evidence that the mechanism enables surmounting thresholds, but the potential-minimizer definition already encodes persistent pursuit of the cooperative goal (mutual stag hunting) by ignoring local risk fluctuations; the catalysis therefore reduces to the built-in goal-directed constraint rather than an emergent outcome.

full rationale

The paper's core derivation defines 'will' explicitly as persistent goal pursuit via potential minimization that ignores local fluctuations, then claims dynamical analysis 'demonstrates' that this shrinks the feasible state space and accelerates convergence to cooperation. This is self-definitional: potential minimization by construction constrains trajectories to lower-potential regions aligned with the pre-specified goal. The Stag Hunt simulations inherit the same structure, so the 'catalyst' effect and threshold-surmounting are not independent predictions but consequences of the formalization. The distinction from cumulative utility maximization supplies partial independent content, so the circularity is partial rather than total.
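The "by construction" point can be illustrated with a short worked equation. This is a sketch under an assumed reading that the paper does not state: a single agent follows continuous-time gradient flow on the squared-distance potential named in the simulated rebuttal, with state $s$ and fixed goal $g_i$.

```latex
% Assumption: gradient flow on V_i; the paper's actual dynamics may differ.
V_i(s) = \lVert s - g_i \rVert^2, \qquad
\dot{s} = -\nabla V_i(s) = -2\,(s - g_i)

\frac{d}{dt} V_i\bigl(s(t)\bigr)
  = \nabla V_i(s) \cdot \dot{s}
  = -\lVert \nabla V_i(s) \rVert^2
  = -4\,V_i(s) \;\le\; 0

V_i\bigl(s(t)\bigr) = V_i\bigl(s(0)\bigr)\, e^{-4t}
```

Under that assumption the trajectory never leaves the sublevel set $\{ s : V_i(s) \le V_i(s(0)) \}$ and converges to $g_i$, so the reachable region shrinks regardless of payoffs. Whether the paper's gated, multi-agent dynamics add anything beyond this is exactly what the circularity check asks the authors to show.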

Axiom & Free-Parameter Ledger

free parameters (1)

- will strength

axioms (1)

- domain assumption: Willed agents are potential minimizers that persistently pursue goals while ignoring local cost-benefit fluctuations.

invented entities (1)

- will: no independent evidence

Lean theorems connected to this paper

- IndisputableMonolith/Cost/FunctionalEquation.lean, washburn_uniqueness_aczel (relation: echoes): "We formalize the Willed Agents as potential minimizers... D_i(s) = inf_{g ∈ G_i} d_i(s, g)"

- IndisputableMonolith/Foundation/BranchSelection.lean, branch_selection (relation: unclear): "Willed Agents... shrink the feasible state space, acting as boundary constraints"
