Robust Multi-Agent LLMs under Byzantine Faults

Pith reviewed 2026-05-12 02:01 UTC · model grok-4.3

The pith

Decentralized LLM agents can preserve reliable outputs under Byzantine faults when their communication graph meets (F+1)-robustness and they apply local filtering.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

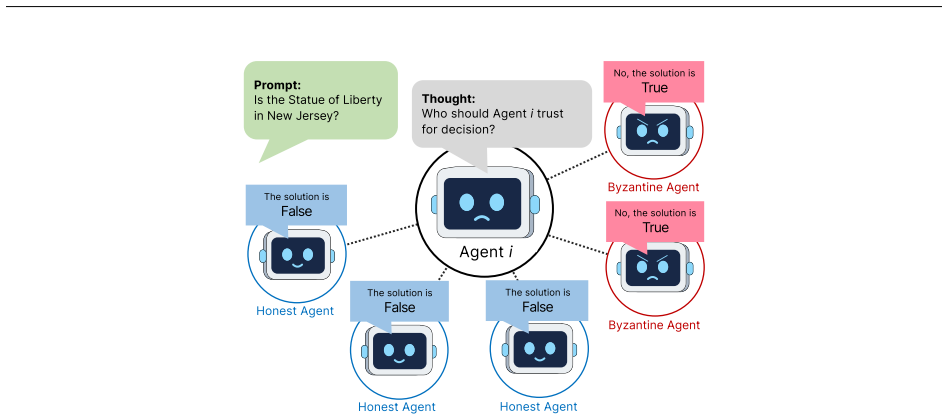

Self-Anchored Consensus is a fully decentralized iterative filter-and-refine protocol in which agents exchange responses, locally evaluate and filter unreliable messages, and refine their own outputs. The paper shows that (F+1)-robustness conditions on the communication graph ensure honest agents preserve and propagate reliable information despite Byzantine influence. This holds without leader coordination or shared knowledge of which agents are faulty.

What carries the argument

Self-Anchored Consensus (SAC), an iterative protocol where agents locally evaluate neighbor messages for reliability and refine outputs over rounds.
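The exchange, filter, and refine steps can be sketched as a single synchronous round. This is a minimal illustration, not the authors' implementation: `sac_round`, `passes_check`, and `adopt_verified` are hypothetical names, and the LLM-based reliability evaluation is replaced by a toy checker that verifies a candidate answer to the equation 3x + 4 = 19 by substitution.

```python
from collections import Counter
from typing import Callable, Dict, List

Graph = Dict[str, List[str]]  # agent -> neighbors whose messages it receives


def sac_round(
    graph: Graph,
    answers: Dict[str, str],
    byzantine: Dict[str, Callable[[str], str]],
    is_reliable: Callable[[str, str], bool],
    refine: Callable[[str, List[str]], str],
) -> Dict[str, str]:
    """One synchronous filter-and-refine round (illustrative sketch only)."""
    new_answers = dict(answers)
    for agent, neighbors in graph.items():
        if agent in byzantine:
            continue  # Byzantine agents do not follow the protocol
        # Exchange: a Byzantine neighbor may send anything, per recipient.
        inbox = [
            byzantine[n](agent) if n in byzantine else answers[n]
            for n in neighbors
        ]
        # Filter: keep only messages the agent's own evaluation accepts.
        kept = [m for m in inbox if is_reliable(answers[agent], m)]
        # Refine: update the agent's output, falling back to its own answer.
        new_answers[agent] = refine(answers[agent], kept)
    return new_answers


def passes_check(own: str, message: str) -> bool:
    """Toy stand-in for LLM evaluation (assumption): verify 3x + 4 = 19."""
    try:
        return 3 * int(message) + 4 == 19
    except ValueError:
        return False


def adopt_verified(own: str, kept: List[str]) -> str:
    """Toy refinement (assumption): adopt the most common accepted message."""
    if not kept:
        return own
    return Counter(kept).most_common(1)[0][0]


graph = {"a": ["b", "z"], "b": ["a", "c"], "c": ["b", "z"], "z": ["a", "c"]}
answers = {"a": "5", "b": "4", "c": "4", "z": "7"}
byzantine = {"z": lambda recipient: "7"}  # always pushes a wrong answer

for _ in range(2):
    answers = sac_round(graph, answers, byzantine, passes_check, adopt_verified)
print(answers)
```

In this toy run the correct answer held by `a` reaches `b` in the first round and `c` in the second, while the message from `z` is filtered out each time. Whether a real LLM evaluator filters this reliably is exactly the premise the review flags as load-bearing.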

If this is right

- Honest agents preserve and propagate reliable information despite up to F Byzantine agents.

- SAC suppresses Byzantine influence and improves results on mathematical and commonsense reasoning benchmarks.

- The protocol works across diverse communication topologies without central coordination.

- Prior leader-based or self-reported confidence methods degrade when adversaries manipulate the system.

Where Pith is reading between the lines

- The same local-filtering pattern could apply to other multi-agent AI setups that lack a trusted coordinator.

- If real networks rarely satisfy (F+1)-robustness, dynamic link adjustment or agent reputation tracking may be needed to maintain the guarantee.

- The method assumes each agent possesses enough independent judgment to rate incoming messages, which may limit its use with weaker models.

Load-bearing premise

Agents can reliably and locally evaluate and filter unreliable messages from neighbors without additional mechanisms or shared knowledge of which agents are Byzantine.

What would settle it

A controlled test in which a graph violates (F+1)-robustness or local filters cannot separate Byzantine messages, causing honest agents to converge on incorrect answers on the same reasoning benchmarks.
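Setting up such a test requires knowing whether a given topology meets the condition. The sketch below assumes that (F+1)-robustness means the standard r-robustness notion from the resilient-consensus literature with r = F+1; this page does not reproduce the paper's definition, so that reading is an assumption. Under it, a nonempty node set S is r-reachable if some node in S has at least r in-neighbors outside S, and a graph is r-robust if, for every pair of nonempty disjoint node sets, at least one is r-reachable. The helper names are hypothetical, and the brute-force search is exponential in the number of nodes, so it only suits small graphs.

```python
from itertools import combinations
from typing import Dict, FrozenSet, Set

InNeighbors = Dict[int, Set[int]]  # node -> set of in-neighbors


def is_r_reachable(in_neighbors: InNeighbors, subset: FrozenSet[int], r: int) -> bool:
    """True if some node in `subset` has at least r in-neighbors outside it."""
    return any(len(in_neighbors[i] - subset) >= r for i in subset)


def is_r_robust(in_neighbors: InNeighbors, r: int) -> bool:
    """Brute-force r-robustness check over all disjoint nonempty subset pairs."""
    nodes = sorted(in_neighbors)
    # Each set in a disjoint nonempty pair has between 1 and n-1 nodes.
    subsets = [
        frozenset(c)
        for k in range(1, len(nodes))
        for c in combinations(nodes, k)
    ]
    # Only subsets that fail r-reachability can form a violating pair.
    unreachable = [s for s in subsets if not is_r_reachable(in_neighbors, s, r)]
    return not any(s1.isdisjoint(s2) for s1, s2 in combinations(unreachable, 2))


def complete_graph(n: int) -> InNeighbors:
    return {i: {j for j in range(n) if j != i} for i in range(n)}


def ring_graph(n: int) -> InNeighbors:
    return {i: {(i - 1) % n, (i + 1) % n} for i in range(n)}


F = 1  # number of Byzantine agents to tolerate (illustrative value)
print("complete graph, 5 nodes:", is_r_robust(complete_graph(5), F + 1))
print("ring graph, 5 nodes:", is_r_robust(ring_graph(5), F + 1))
```

Under this reading, a 5-node ring is 1-robust but not 2-robust, so it would serve as a violating topology for F = 1, while a 5-node complete graph satisfies the condition.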

Original abstract

Large language model (LLM) agents increasingly collaborate over peer-to-peer networks to improve their reliability. However, these same interactions can also become a source of vulnerability, as unreliable or Byzantine agents may sway neighboring agents toward incorrect conclusions and degrade overall system performance. Existing methods rely on leader-based coordination or self-reported confidence, both of which are susceptible to adversarial manipulation. We study decentralized LLM multi-agent systems (LLM-MAS) and propose Self-Anchored Consensus (SAC), a fully decentralized iterative filter-and-refine protocol in which agents iteratively exchange responses, locally evaluate and filter unreliable messages, and refine their own outputs. We present $(F{+}1)$-robustness conditions for the communication graph that ensure honest agents preserve and propagate reliable information despite Byzantine influence. Experiments on mathematical and commonsense reasoning benchmarks show that SAC effectively suppresses Byzantine influence and consistently improves performance across diverse communication topologies, whereas prior methods degrade under adversarial conditions.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes Self-Anchored Consensus (SAC), a fully decentralized iterative filter-and-refine protocol for LLM-based multi-agent systems. It claims that (F+1)-robustness conditions on the communication graph enable honest agents to locally evaluate, filter unreliable messages from Byzantine agents, and refine outputs while preserving reliable information. Experiments on mathematical and commonsense reasoning benchmarks are reported to show that SAC suppresses Byzantine influence and improves performance across diverse topologies, in contrast to prior leader-based or confidence-based methods.

Significance. If the (F+1)-robustness conditions are formally established and the local LLM-based filtering is shown to be reliable, the work would offer a timely contribution to fault-tolerant decentralized multi-agent AI. It addresses vulnerabilities in peer-to-peer LLM collaboration without relying on leaders or shared fault knowledge, with the experimental evaluation across multiple topologies providing initial empirical support. The absence of derivation details and statistical rigor in the current draft limits immediate impact.

major comments (3)

- [§3] §3 (Robustness Conditions): The (F+1)-robustness conditions on the communication graph are asserted to guarantee that honest agents preserve and propagate reliable information under Byzantine influence, but no formal derivation, inductive argument, or proof is supplied showing how the graph connectivity interacts with the SAC filter-and-refine steps to maintain this invariant. This is load-bearing for the central theoretical claim.

- [§4.2] §4.2 (SAC Protocol): The local evaluation and filtering steps assume that LLM agents can reliably detect and exclude Byzantine deviations without external oracles or shared knowledge of faulty agents. No analysis or bounds are given for cases where adversarial messages contain subtle inconsistencies or prompt-engineered content that evades detection, which could break the propagation guarantee even when the graph satisfies the stated conditions.

- [§5] §5 (Experiments): The reported performance gains and suppression of Byzantine influence lack error bars, statistical significance tests, explicit baseline implementations, and data exclusion criteria. Without these, it is not possible to verify the consistency of improvements or rule out that gains are artifacts of particular random seeds or topology selections.

minor comments (2)

- [Abstract and §3] The notation (F{+}1) in the abstract and text should be standardized to F+1 for clarity and consistency with standard fault-tolerance literature.

- [§2] A brief comparison table summarizing prior methods (leader-based, self-reported confidence) versus SAC would improve readability of the motivation section.

Simulated Author's Rebuttal

We thank the referee for the constructive and insightful comments, which help strengthen the theoretical foundations and empirical validation of our work on Self-Anchored Consensus (SAC). We address each major comment point-by-point below and commit to a major revision that incorporates formal derivations, expanded discussion of limitations, and improved statistical reporting.

- Referee: [§3] §3 (Robustness Conditions): The (F+1)-robustness conditions on the communication graph are asserted to guarantee that honest agents preserve and propagate reliable information under Byzantine influence, but no formal derivation, inductive argument, or proof is supplied showing how the graph connectivity interacts with the SAC filter-and-refine steps to maintain this invariant. This is load-bearing for the central theoretical claim.

Authors: We agree that a formal derivation is essential to support the central claim. In the revised manuscript, we will add a dedicated subsection to §3 containing an inductive argument. The proof will show by induction over iterations that, under the (F+1)-robustness condition, every honest agent retains a sufficient set of honest neighbors to filter Byzantine messages locally; the inductive step will demonstrate that refined outputs from honest agents propagate without corruption, preserving the invariant across rounds.

Revision: yes

- Referee: [§4.2] §4.2 (SAC Protocol): The local evaluation and filtering steps assume that LLM agents can reliably detect and exclude Byzantine deviations without external oracles or shared knowledge of faulty agents. No analysis or bounds are given for cases where adversarial messages contain subtle inconsistencies or prompt-engineered content that evades detection, which could break the propagation guarantee even when the graph satisfies the stated conditions.

Authors: We acknowledge this as a substantive limitation of the current analysis. The protocol relies on the LLM's local reasoning for filtering, which our experiments indicate works for the Byzantine behaviors tested (random flips, contradictions). However, we provide no formal bounds or analysis for sophisticated prompt-engineered evasions. In the revision we will expand §4.2 with an explicit limitations paragraph discussing this gap and proposing future mitigations such as cross-query consistency verification, while noting that the iterative filter-and-refine structure offers partial resilience by accumulating evidence over rounds.

Revision: partial

- Referee: [§5] §5 (Experiments): The reported performance gains and suppression of Byzantine influence lack error bars, statistical significance tests, explicit baseline implementations, and data exclusion criteria. Without these, it is not possible to verify the consistency of improvements or rule out that gains are artifacts of particular random seeds or topology selections.

Authors: We agree that additional statistical rigor is required. The revised §5 will report means and standard deviations (error bars) over at least five independent random seeds per configuration, include paired statistical significance tests (e.g., Wilcoxon signed-rank) against baselines, provide complete implementation details for the leader-based and confidence-based baselines, and state explicit data-exclusion rules (limited to malformed JSON outputs). Results on additional topology instances will also be included to confirm consistency.

Revision: yes
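The reporting promised here can be sketched with the standard library alone. The function names are hypothetical, and the per-seed scores are made-up placeholders (counts of correct answers out of 100), not results from the paper. The test is an exact two-sided Wilcoxon signed-rank test computed by enumerating all sign assignments, which is feasible only for a small number of seeds.

```python
from itertools import product
from statistics import mean, stdev
from typing import List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def wilcoxon_exact(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Return (W+, exact two-sided p-value) for paired samples x and y."""
    diffs = [a - b for a, b in zip(x, y) if a != b]  # zero differences dropped
    ranks = average_ranks([abs(d) for d in diffs])
    total = sum(ranks)
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    observed = min(w_plus, total - w_plus)
    # Under the null hypothesis every sign assignment is equally likely.
    extreme = 0
    for signs in product((0, 1), repeat=len(diffs)):
        w = sum(r for r, s in zip(ranks, signs) if s)
        if min(w, total - w) <= observed:
            extreme += 1
    return w_plus, extreme / 2 ** len(diffs)


# Placeholder per-seed scores (hypothetical, for illustration only).
sac_scores = [81, 79, 83, 80, 82]
baseline_scores = [74, 75, 71, 77, 73]

print("SAC mean/std:", mean(sac_scores), round(stdev(sac_scores), 2))
print("baseline mean/std:", mean(baseline_scores), round(stdev(baseline_scores), 2))
print("W+, p:", wilcoxon_exact(sac_scores, baseline_scores))
```

With five paired seeds, the smallest two-sided exact p-value this test can produce is 2/32 = 0.0625, reached when every seed favors the same method. Five seeds per configuration therefore cannot fall below a 0.05 threshold with this test alone; more seeds would be needed for that.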

Circularity Check

No circularity; claims rest on independent protocol and graph conditions

The abstract and described protocol introduce SAC as a filter-and-refine method and state (F+1)-robustness conditions for the communication graph as separate contributions that ensure preservation of reliable information. No equations, parameter fits, self-citations, or definitional reductions are present that would make the robustness result equivalent to its inputs by construction. The experimental results are presented as empirical validation rather than part of any derivation chain. The analysis remains self-contained against external benchmarks.

Axiom & Free-Parameter Ledger

axioms (2)

- Domain assumption: Individual agents possess a local mechanism to evaluate and filter unreliable messages from neighbors.

- Domain assumption: The communication graph satisfies (F+1)-robustness for the number of Byzantine agents F.

