Recognition: unknown

Look Once, Beam Twice: Camera-Primed Real-Time Double-Directional mmWave Beam Management for Vehicular Connectivity

Pith reviewed 2026-05-08 16:13 UTC · model grok-4.3

The pith

Camera observations enable hybrid real-time mmWave beam alignment for vehicles with outage rates down to 1.1%.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

VIBE is a hybrid model-based, closed-loop learning architecture for real-time double-directional mmWave beam management primed by camera sensing. It fuses machine learning, model-based reasoning, and closed-loop RF feedback to bypass exhaustive training overhead, reduce beam-search space via camera observations, and apply lightweight refinement plus offset tracking for dynamic adaptation. Evaluations across testbeds, public datasets, and vehicular experiments show lower outage rates than 5G NR hierarchical beamforming and better performance than end-to-end ML models, with rates as low as 1.1-1.4%.

What carries the argument

VIBE, the hybrid architecture that primes beam-pair search with camera observations and adapts via closed-loop feedback and refinement.

If this is right

- VIBE maintains lower outage rates than 5G NR hierarchical beamforming across comparisons.

- It outperforms state-of-the-art end-to-end ML models for beam selection when tested on public datasets.

- The system achieves outage rates as low as 1.1-1.4% in online indoor/outdoor testbeds and real-time vehicular experiments.

- VIBE demonstrates strong generalization capabilities suitable for real-time V2X communication.

Where Pith is reading between the lines

- The hybrid design implies that end-to-end ML models alone may fail to generalize across the variability of real mmWave channels under mobility.

- Closed-loop RF feedback can correct initial camera-based predictions, reducing sensitivity to vision errors in changing environments.

- The low-overhead approach could extend to other mobile scenarios where sensor data helps constrain radio resource selection.

Load-bearing premise

Camera observations reliably correlate with optimal mmWave beam directions and can shrink the search space without missing viable pairs or adding unacceptable latency in dynamic vehicular environments.

What would settle it

Real-world vehicular tests where VIBE produces higher outage rates than 5G NR hierarchical beamforming or where camera-based narrowing frequently excludes the best beam pairs would falsify the performance advantage.

Figures

read the original abstract

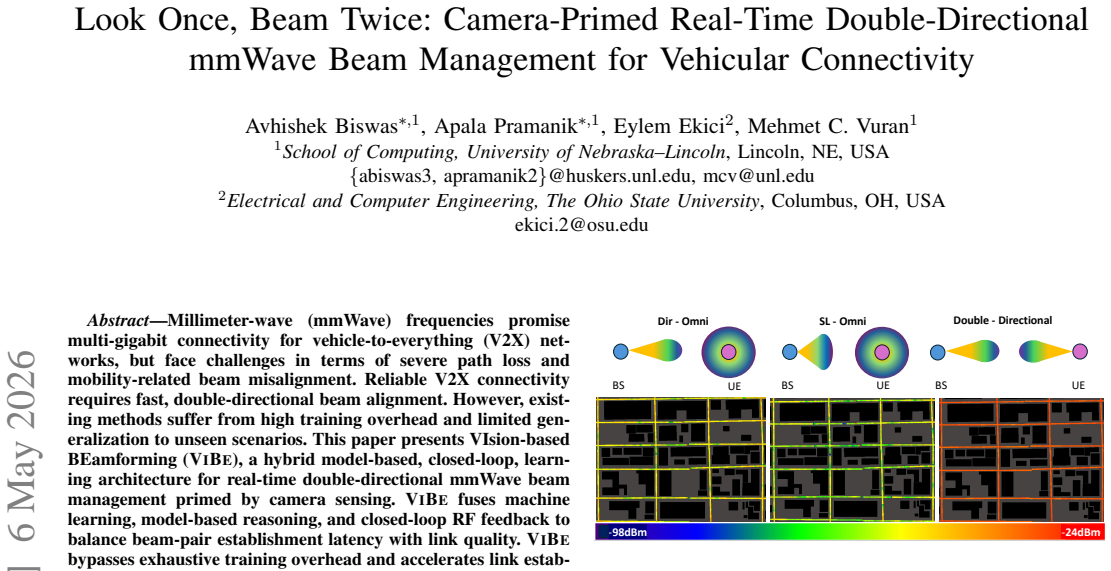

Millimeter-wave (mmWave) frequencies promise multi-gigabit connectivity for vehicle-to-everything (V2X) networks, but face challenges in terms of severe path loss and mobility-related beam misalignment. Reliable V2X connectivity requires fast, double-directional beam alignment. However, existing methods suffer from high training overhead and limited generalization to unseen scenarios. This paper presents VIsion-based BEamforming(VIBE), a hybrid model-based, closed-loop, learning architecture for real-time double-directional mmWave beam management primed by camera sensing. VIBE fuses machine learning, model-based reasoning, and closed-loop RF feedback to balance beam-pair establishment latency with link quality. VIBE bypasses exhaustive training overhead and accelerates link establishment by leveraging camera observations to reduce the beam-search space. Lightweight beam refinement and offset tracking mechanisms adaptively refine beams in response to dynamic application requirements. VIBE is implemented and evaluated across online indoor/outdoor testbeds, public datasets, and real-time vehicular experiments, demonstrating strong generalization capabilities, making it suitable for real-time V2X communication. Comparisons with 5G NR hierarchical beamforming show that VIBE consistently maintains lower outage rates. Furthermore, VIBE outperforms state-of-the-art end-to-end ML models for beam selection when evaluated on public datasets and achieves outage rates as low as 1.1-1.4 %. The results show that a hybrid model-based, closed-loop learning architecture is better suited for real-world mmWave vehicular connectivity than end-to-end trained ML models. For reproducibility, we publish our code to https://github.com/UNL-CPN-Lab/Look-Once-Beam-Twice.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes VIBE, a hybrid model-based closed-loop learning architecture for real-time double-directional mmWave beam management in vehicular V2X networks. Camera observations are used to prime and reduce the beam search space, combined with lightweight beam refinement and offset tracking mechanisms that adapt to dynamic conditions. The system is evaluated on indoor/outdoor testbeds, public datasets, and real-time vehicular experiments, claiming lower outage rates than 5G NR hierarchical beamforming and outperforming end-to-end ML models for beam selection, with outage rates as low as 1.1-1.4%. Code is released for reproducibility.

Significance. If the results hold, the work provides concrete evidence that hybrid vision-primed, model-based, closed-loop architectures can achieve reliable low-latency beam alignment in high-mobility mmWave scenarios where pure end-to-end ML or standard protocols fall short. The public code release is a clear strength that supports verification and extension. This has potential practical value for V2X deployments requiring multi-gigabit links under mobility.

major comments (2)

- [§5] §5 (experimental evaluation): The reported outage rates of 1.1-1.4% and consistent outperformance versus 5G NR and ML baselines are central to the claims, yet the section does not report the number of independent trials, statistical significance tests, or confidence intervals on the outage metrics. This makes it difficult to assess whether the gains are robust across the claimed testbeds and datasets.

- [§4.2] §4.2 (camera priming and search-space reduction): The core assumption that camera observations reliably correlate with optimal RF beam pairs without missing viable directions is load-bearing for the latency and outage claims, but the paper provides limited analysis of failure modes (e.g., visual obstructions, multipath, or weather effects) or quantitative bounds on the reduction in search space.

minor comments (3)

- [Abstract and §1] The abstract and §1 could more explicitly state the exact beam codebook sizes and maximum search-space reduction factor achieved by the camera priming step.

- [§5] Figure captions in §5 should include the precise mobility speeds, environment types, and number of beam pairs evaluated for each plotted curve to improve reproducibility.

- [§2] The related-work section would benefit from a brief comparison table summarizing latency and outage of the cited end-to-end ML baselines on the same public datasets used for VIBE.

Simulated Author's Rebuttal

We thank the referee for the positive recommendation of minor revision and the constructive comments, which help clarify the robustness of our claims. We address each major point below, committing to targeted revisions that strengthen the manuscript without altering its core contributions.

read point-by-point responses

-

Referee: [§5] §5 (experimental evaluation): The reported outage rates of 1.1-1.4% and consistent outperformance versus 5G NR and ML baselines are central to the claims, yet the section does not report the number of independent trials, statistical significance tests, or confidence intervals on the outage metrics. This makes it difficult to assess whether the gains are robust across the claimed testbeds and datasets.

Authors: We agree that these statistical details are important for assessing robustness. In the revised manuscript, we will expand §5 to report the number of independent trials (50 per vehicular scenario and 100 per public dataset evaluation), 95% confidence intervals on outage rates via bootstrap methods, and statistical significance results (paired t-tests with p < 0.01 versus 5G NR baselines). These additions draw from our retained raw experimental logs and will be presented in new tables and text. revision: yes

-

Referee: [§4.2] §4.2 (camera priming and search-space reduction): The core assumption that camera observations reliably correlate with optimal RF beam pairs without missing viable directions is load-bearing for the latency and outage claims, but the paper provides limited analysis of failure modes (e.g., visual obstructions, multipath, or weather effects) or quantitative bounds on the reduction in search space.

Authors: We acknowledge the value of explicit discussion here. While the low outage rates across our indoor/outdoor and vehicular experiments already provide empirical support for the correlation, we will revise §4.2 to add a paragraph analyzing failure modes, explaining how closed-loop RF offset tracking and fallback mechanisms mitigate visual obstructions and multipath. We will also report quantitative bounds, including an average 16× search-space reduction (from 1024 to 64 beam pairs) with standard deviation across datasets. Comprehensive weather-specific testing remains outside the current scope but will be noted as future work. revision: partial

Circularity Check

No significant circularity

full rationale

The paper presents VIBE, a hybrid camera-primed beam management architecture that fuses ML, model-based reasoning, and closed-loop RF feedback to reduce beam search space for mmWave V2X. Central performance claims rest on empirical evaluations across online indoor/outdoor testbeds, public datasets, real-time vehicular experiments, and direct comparisons to 5G NR hierarchical beamforming and state-of-the-art end-to-end ML models, with reported outage rates of 1.1-1.4% and released code for reproducibility. No load-bearing derivation, equation, or prediction reduces by construction to fitted parameters defined from the same data, self-citations, or ansatzes; the architecture choices are presented as design decisions validated externally rather than tautological redefinitions of inputs.

Axiom & Free-Parameter Ledger

Reference graph

Works this paper leans on

-

[1]

Audi of America, Verizon partner to bring 5G to vehicle lineup,

Audi, “Audi of America, Verizon partner to bring 5G to vehicle lineup,” https://media.audiusa.com/releases/511, Feb. 2022

2022

-

[2]

Verizon to build 5G test track in Germany with Audi,

P. Lipscombe, “Verizon to build 5G test track in Germany with Audi,” https://www.datacenterdynamics.com/en/news/verizon-to-build-5g-tes t-track-in-germany-with-audi/, Mar. 2024

2024

-

[3]

mmWave 5G TCU is enabling new in-vehicle experiences,

Samsung, “mmWave 5G TCU is enabling new in-vehicle experiences,” https://www.samsung.com/global/business/networks/insights/press-relea se/0111-mmwave-5g-tcu-is-enabling-new-in-vehicle-experiences/, Jan. 2021

2021

-

[4]

NTT Corp., NTT DOCOMO and NEC demonstrate dis- tributed MIMO technology for high-frequency 6G communications in automobiles and trains,

NTT Corp., “NTT Corp., NTT DOCOMO and NEC demonstrate dis- tributed MIMO technology for high-frequency 6G communications in automobiles and trains,” https://group.ntt/en/newsrelease/2025/03/25/25 0325a.html, Mar. 2025

2025

-

[5]

5G-advanced toward 6G: Past, present, and future,

W. Chenet al., “5G-advanced toward 6G: Past, present, and future,” IEEE Journal on Selected Areas in Communications, vol. 41, no. 6, pp. 1592–1619, Mar 2023

2023

-

[6]

A tale of two mobile generations: 5G-advanced and 6G in 3GPP release 20,

X. Lin, “A tale of two mobile generations: 5G-advanced and 6G in 3GPP release 20,”IEEE Communications Standards Magazine, pp. 1–9, Jun 2025

2025

-

[7]

Beam alignment in mmWave V2X communications: A survey,

J. Tanet al., “Beam alignment in mmWave V2X communications: A survey,”IEEE Communications Surveys & Tutorials, vol. 26, no. 3, pp. 1676–1709, Aug. 2024

2024

-

[8]

High-data-rate millimeter-wave radios,

D. Lockie and D. Peck, “High-data-rate millimeter-wave radios,”IEEE Microwave Magazine, vol. 10, no. 5, pp. 75–83, Aug 2009

2009

-

[9]

The impact of beamwidth on temporal channel variation in vehicular channels and its implications,

V . Va, J. Choi, and R. W. Heath, “The impact of beamwidth on temporal channel variation in vehicular channels and its implications,”IEEE Trans. Vehicular Technology, vol. 66, no. 6, pp. 5014–5029, Jun. 2017

2017

-

[10]

Vivisecting beam management in operational 5G mmWave networks,

Y . Fenget al., “Vivisecting beam management in operational 5G mmWave networks,”Proc. ACM CoNEXT, vol. 3, no. CoNEXT2, pp. 1–26, Jun 2025

2025

-

[11]

Wireless Insite 3D Wireless Prediction Software,

Remcom, “Wireless Insite 3D Wireless Prediction Software,” https://ww w.remcom.com/wireless-insite-propagation-software/, 2024

2024

-

[12]

Millimeter-wave V2X channels: Propagation statistics, beamforming, and blockage,

C. K. Anjinappa and I. Guvenc, “Millimeter-wave V2X channels: Propagation statistics, beamforming, and blockage,” inIEEE VTC-Fall, Aug 2018

2018

-

[13]

Millimeter wave communications: From point-to-point links to agile network connections,

O. Abariet al., “Millimeter wave communications: From point-to-point links to agile network connections,” inProc. ACM HotNets Workshop, Nov 2016

2016

-

[14]

KM learning for millimeter-wave beam alignment and tracking: Predictability and interpretability,

Q. Duan, T. Kim, and H. Ghauch, “KM learning for millimeter-wave beam alignment and tracking: Predictability and interpretability,”IEEE Access, vol. 9, pp. 117 204–117 216, Aug 2021

2021

-

[15]

A comparative measurement study of commercial 5G mmWave deployments,

A. Narayananet al., “A comparative measurement study of commercial 5G mmWave deployments,” inProc. IEEE INFOCOM, May 2022

2022

-

[16]

A close look at 5G in the wild: Unrealized potentials and implications,

Y . Liu and C. Peng, “A close look at 5G in the wild: Unrealized potentials and implications,” inProc. IEEE INFOCOM, May 2023

2023

-

[17]

Beam discovery using linear block codes for millimeter wave communication networks,

Y . Shabara, C. E. Koksal, and E. Ekici, “Beam discovery using linear block codes for millimeter wave communication networks,”IEEE/ACM Trans. on Networking, vol. 27, no. 4, pp. 1446–1459, May 2018

2018

-

[18]

A survey of beam management for mmWave and THz communications towards 6G,

Q. Xueet al., “A survey of beam management for mmWave and THz communications towards 6G,”IEEE Communications Surveys & Tutorials, vol. 26, no. 3, pp. 1520–1559, Feb 2024

2024

-

[19]

Machine learning on camera images for fast mmWave beamforming,

B. Salehiet al., “Machine learning on camera images for fast mmWave beamforming,” inProc. IEEE MASS, Dec 2020

2020

-

[20]

Millimeter wave base stations with cameras: Vision-aided beam and blockage prediction,

M. Alrabeiah, A. Hredzak, and A. Alkhateeb, “Millimeter wave base stations with cameras: Vision-aided beam and blockage prediction,” in Proc. IEEE VTC, Nov 2019

2019

-

[21]

Vision-aided 6G wireless communications: Blockage prediction and proactive handoff,

G. Charan, M. Alrabeiah, and A. Alkhateeb, “Vision-aided 6G wireless communications: Blockage prediction and proactive handoff,”IEEE Trans. Vehicular Technology, vol. 70, no. 10, Oct 2021

2021

-

[22]

Vision-position multi-modal beam prediction using real millimeter wave datasets,

G. Charanet al., “Vision-position multi-modal beam prediction using real millimeter wave datasets,” inProc. IEEE WCNC, Nov 2021, pp. 2727–2731

2021

-

[23]

Machine learning-based mmWave MIMO beam tracking in V2I scenarios: Algorithms and datasets,

A. Oliveiraet al., “Machine learning-based mmWave MIMO beam tracking in V2I scenarios: Algorithms and datasets,” inProc. IEEE LATINCOM, Dec 2024, pp. 1–5

2024

-

[24]

Police secretly monitored New Orleans with facial recognition cameras,

The Washington Post, “Police secretly monitored New Orleans with facial recognition cameras,” https://www.washingtonpost.com/busin ess/2025/05/19/live-facial-recognition-police-new-orleans/, May. 2025

2025

-

[25]

Advanced Driver Assistance Market Size, Share, & Analysis,

Markets and Markets, “Advanced Driver Assistance Market Size, Share, & Analysis,” https://www.marketsandmarkets.com/Market-Reports/dri ver-assistance-systems-market-1201.html/, May 2025

2025

-

[26]

Beam-forecast: Facilitating mobile 60 GHz networks via model-driven beam steering,

A. Zhou, X. Zhang, and H. Ma, “Beam-forecast: Facilitating mobile 60 GHz networks via model-driven beam steering,” inProc. IEEE INFOCOM, May 2017, pp. 1–9

2017

-

[27]

Computer vision aided beam tracking in a real-world millimeter wave deployment,

S. Jiang and A. Alkhateeb, “Computer vision aided beam tracking in a real-world millimeter wave deployment,” inProc. IEEE GLOBECOMM Workshops, Dec 2022

2022

-

[28]

Camera based mmWave beam prediction: Towards multi-candidate real-world scenarios,

G. Charanet al., “Camera based mmWave beam prediction: Towards multi-candidate real-world scenarios,”IEEE Transactions on Vehicular Technology, vol. 74, no. 4, pp. 5897–5913, Dec 2024

2024

-

[29]

Environment semantic aided communication: A real world demonstration for beam prediction,

S. Imran, G. Charan, and A. Alkhateeb, “Environment semantic aided communication: A real world demonstration for beam prediction,” in Proc. IEEE ICC Workshops, Jun 2023

2023

-

[30]

Vision-assisted digital twin creation for mmwave beam management,

M. Arnoldet al., “Vision-assisted digital twin creation for mmwave beam management,” inIEEE International Conference on Communica- tions, Jun 2024

2024

-

[31]

Multi-camera views based beam searching and BS selection with reduced training overhead,

B. Linet al., “Multi-camera views based beam searching and BS selection with reduced training overhead,”IEEE Trans. Communications, vol. 72, no. 5, pp. 2793–2805, Jan 2024

2024

-

[32]

Vision aided beam tracking and frequency handoff for mmWave communications,

T. Zhang, J. Liu, and F. Gao, “Vision aided beam tracking and frequency handoff for mmWave communications,” inProc. IEEE INFOCOM Workshops, Jul 2022

2022

-

[33]

Omni-CNN: A modality-agnostic neural network for mmwave beam selection,

B. Salehiet al., “Omni-CNN: A modality-agnostic neural network for mmwave beam selection,”IEEE Trans. Vehicular Technology, vol. 73, no. 6, pp. 8169–8183, Jan 2024

2024

-

[34]

FLASH-and-prune: Federated learning for automated selection of high-band mmwave sectors using model pruning,

B. Salehiet al., “FLASH-and-prune: Federated learning for automated selection of high-band mmwave sectors using model pruning,”IEEE Trans. Mobile Computing, vol. 23, no. 12, pp. 11 655–11 669, May 2024

2024

-

[35]

DeepSense 6G: A large-scale real-world multi- modal sensing and communication dataset,

A. Alkhateebet al., “DeepSense 6G: A large-scale real-world multi- modal sensing and communication dataset,”IEEE Communications Magazine., vol. 61, no. 9, pp. 122–128, Sep. 2023

2023

-

[36]

Radar aided 6G beam prediction: Deep learning algorithms and real-world demonstration,

U. Demirhan and A. Alkhateeb, “Radar aided 6G beam prediction: Deep learning algorithms and real-world demonstration,” inProc. IEEE WCNC, Apr 2022

2022

-

[37]

LiDAR aided future beam prediction in real-world millimeter wave V2I communications,

S. Jiang, G. Charan, and A. Alkhateeb, “LiDAR aided future beam prediction in real-world millimeter wave V2I communications,”IEEE Wireless Communication Letters, vol. 12, no. 2, pp. 212–216, May 2022

2022

-

[38]

AUTOMOTIVE CAMERA MARKET OVERVIEW,

M. M. G. Reports, “AUTOMOTIVE CAMERA MARKET OVERVIEW,” https://www.marketgrowthreports.com/market-reports/a utomotive-camera-market-100220#: ∼:text=AUTOMOTIVE%20CAME RA%20MARKET%20TRENDS,-the-art%20imaging%20technologies/, December 2025

2025

-

[39]

Channel estimation and hybrid precoding for millimeter wave cellular systems,

A. Alkhateebet al., “Channel estimation and hybrid precoding for millimeter wave cellular systems,”IEEE Journal of Selected Topics in Signal Processing, vol. 8, no. 5, pp. 831–846, Jul 2014

2014

-

[40]

Reliable vehicle pose estimation using vision and a single-track model,

J. Nilsson, J. Fredriksson, and A. C. ¨Odblom, “Reliable vehicle pose estimation using vision and a single-track model,”IEEE Trans. on Intelligent Transportation Systems, vol. 15, no. 6, pp. 2630–2643, May 2014

2014

-

[41]

Pinhole camera model,

P. Sturm, “Pinhole camera model,” inComputer Vision: A Reference Guide, R. Kimmel, M. M. Bronstein, and P. Favaro, Eds. Springer, Apr. 2021, pp. 983–986

2021

-

[42]

YOLOv11: An Overview of the Key Architectural Enhancements

M. Hussain and R. Khanam, “YOLOv11: An overview of the key architectural enhancements,” 2024. [Online]. Available: https: //arxiv.org/abs/2410.17725

work page internal anchor Pith review arXiv 2024

-

[43]

A Configurable 60GHz phased array platform for multi-link mmWave channel characterization,

A. Shkel, A. Mehrabani, and J. Kusuma, “A Configurable 60GHz phased array platform for multi-link mmWave channel characterization,” in IEEE ICC Workshops, Jun 2021

2021

-

[44]

oToGuard level 2+ all-in-one ADAS,

Otobrite, “oToGuard level 2+ all-in-one ADAS,” https://www.otobrite.c om/product/otoguard#: ∼:text=Level%200 ∼2+%20ADAS%20functions ,LCA%2C%20LKA%2C%20and%20more., 2024

2024

-

[45]

How drive agx, cuda and tensorrt achieve fast, accurate autonomous vehicle perception,

NVIDIA-Technical-Blog, “How drive agx, cuda and tensorrt achieve fast, accurate autonomous vehicle perception,” https://developer.nvidia.com/blog/how-drive-agx-cuda-and-tensorrt- achieve-fast-accurate-autonomous-vehicle-perception/, Oct 2019

2019

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.