Recognition: 2 theorem links

· Lean TheoremDo Joint Audio-Video Generation Models Understand Physics?

Pith reviewed 2026-05-11 02:08 UTC · model grok-4.3

The pith

Joint audio-video generation models generate plausible content but fail to maintain real-world physical consistency across transitions.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

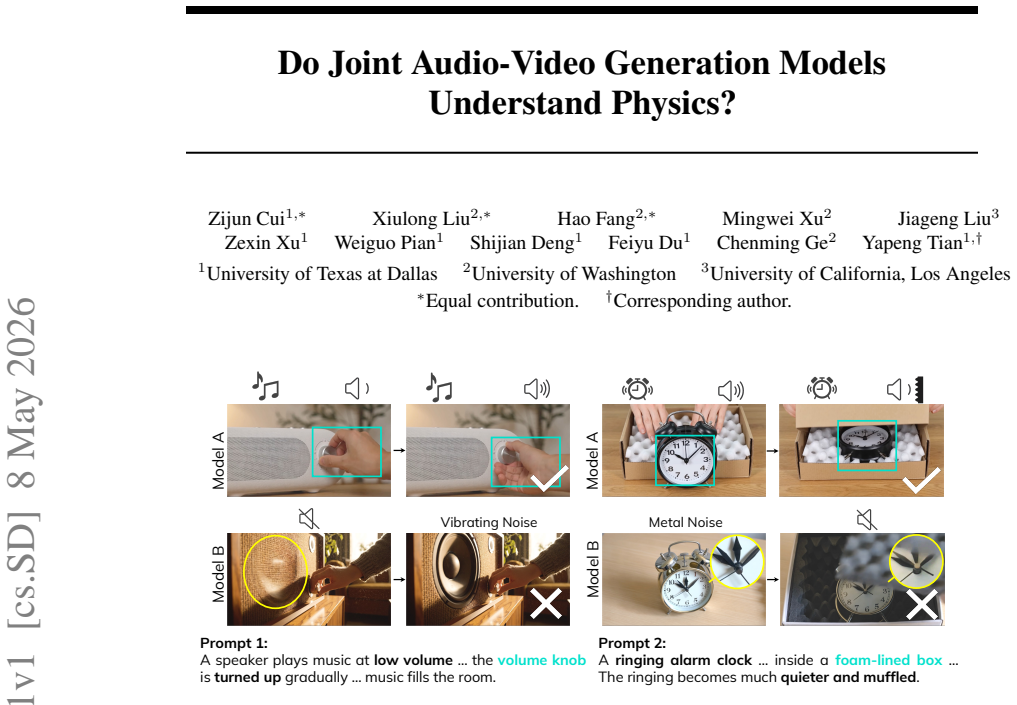

Joint audio-video generation models do not possess robust understanding of audio-visual physics. They perform adequately on steady-state scenes but degrade markedly on event-driven and environment-driven transitions. Leading proprietary and open-source systems all collapse when presented with Anti-AV-Physics prompts that request physically inconsistent audio-video pairings, indicating reliance on pattern matching rather than physical principles.

What carries the argument

AV-Phys Bench, a benchmark using three scene categories (Steady State, Event Transition, Environment Transition), Anti-AV-Physics prompts, and five evaluation dimensions of semantic adherence and physical commonsense.

Load-bearing premise

The five evaluation dimensions together give a sufficient and unbiased measure of physical understanding without needing further human validation or external physics simulators.

What would settle it

Running the top models on the Anti-AV-Physics prompts and finding that their generated audio and video both remain internally consistent with real physics, contrary to the benchmark scores, would challenge the conclusion that they lack robust physical understanding.

Figures

read the original abstract

Joint audio-video generation models are rapidly approaching professional production quality, raising a central question: do they understand audio-visual physics, or merely generate plausible sounds and frames that violate real-world consistency? We introduce AV-Phys Bench, a benchmark for evaluating physical commonsense in joint audio-video generation. AV-Phys Bench tests models across three scene categories: Steady State, Event Transition, and Environment Transition. It covers physics-grounded subcategories drawn from real-world scenes, plus Anti-AV-Physics prompts that deliberately request physically inconsistent audio-video behavior. Each generation is evaluated along five dimensions: visual semantic adherence, audio semantic adherence, visual physical commonsense, audio physical commonsense, and cross-modal physical commonsense. Across three proprietary and four open-source models, we find that Seedance 2.0 performs best overall, but all models remain far from robust physical understanding. Performance drops sharply on event-driven and environment-driven transitions, and even strong proprietary systems collapse on Anti-AV-Physics prompts. We further introduce AV-Phys Agent, a ReAct-style evaluator that combines a multimodal language model with deterministic acoustic measurement tools, producing rankings that closely align with human ratings. Our results identify cross-modal physical consistency and transition-driven scene dynamics as key open challenges for joint audio-video generation.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces AV-Phys Bench, a benchmark for evaluating physical commonsense in joint audio-video generation models. It categorizes scenes into Steady State, Event Transition, and Environment Transition, includes Anti-AV-Physics prompts, and scores generations along five dimensions (visual/audio semantic adherence, visual/audio physical commonsense, cross-modal physical commonsense) using the AV-Phys Agent (a ReAct-style multimodal LLM augmented with deterministic acoustic tools). Experiments on three proprietary and four open-source models show Seedance 2.0 performing best overall, with sharp drops on transition scenes and Anti-AV-Physics prompts, and report that agent rankings align with human ratings. The central claim is that current models lack robust audio-visual physical understanding.

Significance. If the benchmark and agent are shown to be reliable, the work would usefully identify cross-modal consistency and transition dynamics as open challenges for joint AV generation, providing a concrete evaluation framework that could steer future model training and architecture choices in multimodal synthesis.

major comments (4)

- [Section 3] Section 3 (Benchmark Construction): The description of prompt sourcing, subcategory selection from real-world scenes, and exact criteria for Anti-AV-Physics prompts lacks sufficient detail on curation process, diversity metrics, or potential biases, which directly affects the validity of the reported performance drops on Event/Environment Transitions.

- [Section 4.2] Section 4.2 (Evaluation Dimensions): The five dimensions are presented as jointly measuring physical understanding, yet no quantitative grounding (e.g., comparison of generated clips against physics simulators for trajectory conservation or wave propagation) or ablation is provided to show they are necessary and sufficient; this is load-bearing for the claim that all models 'remain far from robust physical understanding.'

- [Section 5] Section 5 (Results and Agent Validation): While agent-human alignment is claimed, no inter-rater agreement statistics, statistical significance tests on model differences, or confidence intervals are reported; the assertion that Seedance 2.0 is best overall and that proprietary models collapse on Anti-AV-Physics prompts therefore rests on unquantified differences.

- [Section 4.3] Section 4.3 (AV-Phys Agent): The ReAct-style agent relies on LLM judgments for visual/audio physical commonsense and cross-modal consistency without external physics-based verification (e.g., extracting 3D motion or acoustic spectra and checking against conservation equations), leaving open whether low scores reflect genuine violations or evaluator heuristics.

minor comments (2)

- [Section 4] Notation for the five evaluation dimensions is introduced without a clear summary table, making it hard to track which dimension drives the reported failures on transitions.

- [Abstract and Section 1] The abstract and introduction use 'robust physical understanding' without a precise operational definition tied to the benchmark, which should be clarified early.

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback on our manuscript. We address each major comment point by point below, providing clarifications and outlining planned revisions to enhance the rigor, transparency, and validity of the benchmark and evaluations.

read point-by-point responses

-

Referee: [Section 3] Section 3 (Benchmark Construction): The description of prompt sourcing, subcategory selection from real-world scenes, and exact criteria for Anti-AV-Physics prompts lacks sufficient detail on curation process, diversity metrics, or potential biases, which directly affects the validity of the reported performance drops on Event/Environment Transitions.

Authors: We agree that expanded details on curation will strengthen the paper's validity. In the revised manuscript, we will augment Section 3 with: (1) explicit sources for real-world scene subcategories (e.g., referenced physics video corpora and textbooks), (2) quantitative diversity metrics including counts of unique physics phenomena, scene complexity distributions, and subcategory balance, and (3) precise criteria for Anti-AV-Physics prompts (e.g., deliberate violation of conservation laws while preserving semantic coherence). We will also add a paragraph on bias mitigation strategies, such as prompt length balancing and expert review for over-representation of object categories. These changes directly support the reported drops on transition scenes. revision: yes

-

Referee: [Section 4.2] Section 4.2 (Evaluation Dimensions): The five dimensions are presented as jointly measuring physical understanding, yet no quantitative grounding (e.g., comparison of generated clips against physics simulators for trajectory conservation or wave propagation) or ablation is provided to show they are necessary and sufficient; this is load-bearing for the claim that all models 'remain far from robust physical understanding.'

Authors: We acknowledge the value of additional grounding. The dimensions were logically decomposed from physical commonsense literature into visual/audio/cross-modal components to enable fine-grained diagnosis. Full simulator comparisons are impractical for the benchmark's open-ended, diverse scenes. In revision, we will add to Section 4.2: explicit rationale with illustrative examples for each dimension, an ablation study on a representative subset demonstrating their individual contributions to aggregate scores, and an explicit limitations paragraph noting the absence of simulator-based verification. This will better substantiate the central claim while highlighting avenues for future work. revision: partial

-

Referee: [Section 5] Section 5 (Results and Agent Validation): While agent-human alignment is claimed, no inter-rater agreement statistics, statistical significance tests on model differences, or confidence intervals are reported; the assertion that Seedance 2.0 is best overall and that proprietary models collapse on Anti-AV-Physics prompts therefore rests on unquantified differences.

Authors: We agree that statistical quantification is essential for robust claims. In the revised Section 5, we will report: inter-rater agreement (e.g., Fleiss' kappa across human evaluators), statistical significance tests (e.g., Wilcoxon signed-rank tests with p-values) for all model pairwise differences, and 95% confidence intervals on the per-dimension and aggregate scores. These will be incorporated into the text, tables, and figures. This will provide quantitative backing for Seedance 2.0's ranking and the observed collapses on Anti-AV-Physics prompts. revision: yes

-

Referee: [Section 4.3] Section 4.3 (AV-Phys Agent): The ReAct-style agent relies on LLM judgments for visual/audio physical commonsense and cross-modal consistency without external physics-based verification (e.g., extracting 3D motion or acoustic spectra and checking against conservation equations), leaving open whether low scores reflect genuine violations or evaluator heuristics.

Authors: We appreciate this point on verification. The agent hybridizes multimodal LLM reasoning with deterministic acoustic tools (e.g., spectral analysis for wave propagation checks) to ground audio evaluations. Comprehensive external physics simulation (3D trajectories, conservation equations) is not scalable across the benchmark's varied creative scenes without per-scene custom simulators. In revision, we will expand Section 4.3 to: detail the acoustic tool implementations with examples, describe prompting strategies that explicitly reference physical principles, and include a dedicated limitations discussion on potential LLM heuristics alongside suggestions for simulator integration in future extensions. The human alignment results provide empirical support for the current approach. revision: partial

Circularity Check

No significant circularity in empirical benchmark evaluation

full rationale

The paper is an empirical benchmark study that introduces AV-Phys Bench and AV-Phys Agent to evaluate joint audio-video generation models on physical commonsense. There are no mathematical derivations, equations, fitted parameters, or first-principles predictions that reduce to self-defined inputs. Central claims rest on observed performance differences across models, scene categories, and prompt types, with the agent explicitly validated by alignment to human ratings. No load-bearing steps rely on self-citation chains, ansatzes, or renaming of known results; the work is self-contained as an experimental evaluation.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Physical commonsense in audio-video pairs can be decomposed into the five listed adherence and consistency dimensions

invented entities (2)

-

AV-Phys Bench

no independent evidence

-

AV-Phys Agent

no independent evidence

Lean theorems connected to this paper

-

IndisputableMonolith/Foundation/RealityFromDistinction.leanreality_from_one_distinction unclearAV-Phys Bench tests models across three scene categories: Steady State, Event Transition, and Environment Transition... five dimensions: visual semantic adherence, audio semantic adherence, visual physical commonsense, audio physical commonsense, and cross-modal physical commonsense.

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearA V-Phys Agent... pairs a multimodal language model with deterministic acoustic measurement tools (onset detection, pitch analysis, loudness metering, reverberation estimation...)

Reference graph

Works this paper leans on

-

[1]

Cosmos World Foundation Model Platform for Physical AI

Niket Agarwal, Arslan Ali, Maciej Bala, Yogesh Balaji, Erik Barker, Tiffany Cai, Prithvijit Chattopadhyay, Yongxin Chen, Yin Cui, Yifan Ding, et al. Cosmos world foundation model platform for physical ai.arXiv preprint arXiv:2501.03575, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[2]

Videophy: Evaluating physical commonsense for video generation

Hritik Bansal, Zongyu Lin, Tianyi Xie, Zeshun Zong, Michal Yarom, Yonatan Bitton, Chenfanfu Jiang, Yizhou Sun, Kai-Wei Chang, and Aditya Grover. Videophy: Evaluating physical commonsense for video generation.arXiv preprint arXiv:2406.03520, 2024

-

[3]

Hritik Bansal, Clark Peng, Yonatan Bitton, Roman Goldenberg, Aditya Grover, and Kai-Wei Chang. Videophy-2: A challenging action-centric physical commonsense evaluation in video generation.arXiv preprint arXiv:2503.06800, 2025

-

[4]

Video generation models as world simulators

Tim Brooks, Bill Peebles, Connor Holmes, Will DePue, Yufei Guo, Leo Jing, David Schnurr, Joe Taylor, Troy Luhman, Eric Luhman, et al. Video generation models as world simulators. OpenAI Blog, 1(8):1, 2024

work page 2024

-

[5]

Genie: Generative interactive environments

Jake Bruce, Michael D Dennis, Ashley Edwards, Jack Parker-Holder, Yuge Shi, Edward Hughes, Matthew Lai, Aditi Mavalankar, Richie Steigerwald, Chris Apps, et al. Genie: Generative interactive environments. InForty-first International Conference on Machine Learning, 2024

work page 2024

-

[6]

Zhe Cao, Tao Wang, Jiaming Wang, Yanghai Wang, Yuanxing Zhang, Jialu Chen, Miao Deng, Jiahao Wang, Yubin Guo, Chenxi Liao, et al. T2av-compass: Towards unified evaluation for text-to-audio-video generation.arXiv preprint arXiv:2512.21094, 2025

-

[7]

Changan Chen, Carl Schissler, Sanchit Garg, Philip Kobernik, Alexander Clegg, Paul Calamia, Dhruv Batra, Philip Robinson, and Kristen Grauman. Soundspaces 2.0: A simulation platform for visual-acoustic learning.Advances in Neural Information Processing Systems, 35:8896– 8911, 2022

work page 2022

-

[8]

Ethan Chern, Hansi Teng, Hanwen Sun, Hao Wang, Hong Pan, Hongyu Jia, Jiadi Su, Jin Li, Junjie Yu, Lijie Liu, et al. Speed by simplicity: A single-stream architecture for fast audio-video generative foundation model.arXiv preprint arXiv:2603.21986, 2026

-

[9]

Gheorghe Comanici, Eric Bieber, Mike Schaekermann, Ice Pasupat, Noveen Sachdeva, Inderjit Dhillon, Marcel Blistein, Ori Ram, Dan Zhang, Evan Rosen, et al. Gemini 2.5: Pushing the frontier with advanced reasoning, multimodality, long context, and next generation agentic capabilities.arXiv preprint arXiv:2507.06261, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[10]

Introducing Veo 3.1 and advanced capa- bilities in Flow

Jess Gallegos, Thomas Iljic, and Google DeepMind. Introducing Veo 3.1 and advanced capa- bilities in Flow. https://blog.google/technology/ai/veo-updates-flow/, October

-

[11]

Google Blog, October 15, 2025. Technical details inherited from the Veo 3 Tech Re- port,https://storage.googleapis.com/deepmind-media/veo/Veo-3-Tech-Report. pdf

work page 2025

-

[12]

Look, listen, and act: Towards audio-visual embodied navigation

Chuang Gan, Yiwei Zhang, Jiajun Wu, Boqing Gong, and Joshua B Tenenbaum. Look, listen, and act: Towards audio-visual embodied navigation. In2020 IEEE International Conference on Robotics and Automation (ICRA), pages 9701–9707. IEEE, 2020

work page 2020

-

[13]

Jing Gu, Xian Liu, Yu Zeng, Ashwin Nagarajan, Fangrui Zhu, Daniel Hong, Yue Fan, Qianqi Yan, Kaiwen Zhou, Ming-Yu Liu, et al. " phyworldbench": A comprehensive evaluation of physical realism in text-to-video models.arXiv preprint arXiv:2507.13428, 2025

-

[14]

Xuyang Guo, Jiayan Huo, Zhenmei Shi, Zhao Song, Jiahao Zhang, and Jiale Zhao. T2vphysbench: A first-principles benchmark for physical consistency in text-to-video gen- eration.arXiv preprint arXiv:2505.00337, 2025

-

[15]

LTX-2: Efficient Joint Audio-Visual Foundation Model

Yoav HaCohen, Benny Brazowski, Nisan Chiprut, Yaki Bitterman, Andrew Kvochko, Avishai Berkowitz, Daniel Shalem, Daphna Lifschitz, Dudu Moshe, Eitan Porat, et al. Ltx-2: Efficient joint audio-visual foundation model.arXiv preprint arXiv:2601.03233, 2026. 10

work page Pith review arXiv 2026

-

[16]

Videoscore: Building automatic metrics to simulate fine-grained human feedback for video generation

Xuan He, Dongfu Jiang, Ge Zhang, Max Ku, Achint Soni, Sherman Siu, Haonan Chen, Abhranil Chandra, Ziyan Jiang, Aaran Arulraj, et al. Videoscore: Building automatic metrics to simulate fine-grained human feedback for video generation. InProceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, pages 2105–2123, 2024

work page 2024

-

[17]

Clipscore: A reference-free evaluation metric for image captioning

Jack Hessel, Ari Holtzman, Maxwell Forbes, Ronan Le Bras, and Yejin Choi. Clipscore: A reference-free evaluation metric for image captioning. InProceedings of the 2021 conference on empirical methods in natural language processing, pages 7514–7528, 2021

work page 2021

-

[18]

VABench: A Comprehensive Benchmark for Audio-Video Generation

Daili Hua, Xizhi Wang, Bohan Zeng, Xinyi Huang, Hao Liang, Junbo Niu, Xinlong Chen, Quanqing Xu, and Wentao Zhang. Vabench: A comprehensive benchmark for audio-video generation.arXiv preprint arXiv:2512.09299, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[19]

Vbench: Comprehensive benchmark suite for video generative models

Ziqi Huang, Yinan He, Jiashuo Yu, Fan Zhang, Chenyang Si, Yuming Jiang, Yuanhan Zhang, Tianxing Wu, Qingyang Jin, Nattapol Chanpaisit, et al. Vbench: Comprehensive benchmark suite for video generative models. InProceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 21807–21818, 2024

work page 2024

-

[20]

A reference-free metric for evaluating music enhancement algorithms

K Kilgour, M Zuluaga, D Roblek, and M Sharifi. A reference-free metric for evaluating music enhancement algorithms. Interspeech, 2019

work page 2019

-

[21]

The measurement of observer agreement for categorical data.biometrics, pages 159–174, 1977

J Richard Landis and Gary G Koch. The measurement of observer agreement for categorical data.biometrics, pages 159–174, 1977

work page 1977

-

[22]

Video generation models: A survey of post-training and alignment

Chaoyu Li, Xiaoyi Gu, Yogesh Kulkarni, Eun Woo Im, Mohammadmahdi Honarmand, Zeyu Wang, Juntong Song, Fei Du, Xilin Jiang, Kexin Zheng, et al. Video generation models: A survey of post-training and alignment. 2026

work page 2026

-

[23]

Kai Liu, Wei Li, Lai Chen, Shengqiong Wu, Yanhao Zheng, Jiayi Ji, Fan Zhou, Jiebo Luo, Ziwei Liu, Hao Fei, et al. Javisdit: Joint audio-video diffusion transformer with hierarchical spatio-temporal prior synchronization.arXiv preprint arXiv:2503.23377, 2025

-

[24]

Kai Liu, Yanhao Zheng, Kai Wang, Shengqiong Wu, Rongjunchen Zhang, Jiebo Luo, Dimitrios Hatzinakos, Ziwei Liu, Hao Fei, and Tat-Seng Chua. Javisdit++: Unified modeling and optimization for joint audio-video generation.arXiv preprint arXiv:2602.19163, 2026

-

[25]

Caven: An embodied conversational agent for efficient audio-visual navigation in noisy environments

Xiulong Liu, Sudipta Paul, Moitreya Chatterjee, and Anoop Cherian. Caven: An embodied conversational agent for efficient audio-visual navigation in noisy environments. InProceedings of the AAAI conference on artificial intelligence, volume 38, pages 3765–3773, 2024

work page 2024

-

[26]

Ovi: Twin backbone cross-modal fusion for audio-video generation

Chetwin Low, Weimin Wang, and Calder Katyal. Ovi: Twin backbone cross-modal fusion for audio-video generation.arXiv preprint arXiv:2510.01284, 2025

-

[27]

Tavgbench: Benchmarking text to audible-video generation

Yuxin Mao, Xuyang Shen, Jing Zhang, Zhen Qin, Jinxing Zhou, Mochu Xiang, Yiran Zhong, and Yuchao Dai. Tavgbench: Benchmarking text to audible-video generation. InProceedings of the 32nd ACM International Conference on Multimedia, pages 6607–6616, 2024

work page 2024

-

[28]

Fanqing Meng, Jiaqi Liao, Xinyu Tan, Wenqi Shao, Quanfeng Lu, Kaipeng Zhang, Yu Cheng, Dianqi Li, Yu Qiao, and Ping Luo. Towards world simulator: Crafting physical commonsense- based benchmark for video generation.arXiv preprint arXiv:2410.05363, 2024

-

[29]

Saman Motamed, Laura Culp, Kevin Swersky, Priyank Jaini, and Robert Geirhos. Do gener- ative video models understand physical principles? InProceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, pages 948–958, 2026

work page 2026

- [30]

-

[31]

Seedance 2.0: Advancing Video Generation for World Complexity

Team Seedance, De Chen, Liyang Chen, Xin Chen, Ying Chen, Zhuo Chen, Zhuowei Chen, Feng Cheng, Tianheng Cheng, Yufeng Cheng, et al. Seedance 2.0: Advancing video generation for world complexity.arXiv preprint arXiv:2604.14148, 2026

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[32]

Savgbench: Benchmarking spatially aligned audio-video generation

Kazuki Shimada, Christian Simon, Takashi Shibuya, Shusuke Takahashi, and Yuki Mitsufuji. Savgbench: Benchmarking spatially aligned audio-video generation. InICASSP 2026-2026 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 11977–11981. IEEE, 2026. 11

work page 2026

-

[33]

Aaditya Singh, Adam Fry, Adam Perelman, Adam Tart, Adi Ganesh, Ahmed El-Kishky, Aidan McLaughlin, Aiden Low, AJ Ostrow, Akhila Ananthram, et al. Openai gpt-5 system card.arXiv preprint arXiv:2601.03267, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[34]

T2v- compbench: A comprehensive benchmark for compositional text-to-video generation

Kaiyue Sun, Kaiyi Huang, Xian Liu, Yue Wu, Zihan Xu, Zhenguo Li, and Xihui Liu. T2v- compbench: A comprehensive benchmark for compositional text-to-video generation. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 8406–8416, 2025

work page 2025

-

[35]

Yirong Sun, Yanjun Chen, Xin Qiu, Gang Zhang, Hongyu Chen, Daokuan Wu, Chengming Li, Min Yang, Dawei Zhu, Wei Zhang, et al. Sonicbench: Dissecting the physical perception bottleneck in large audio language models.arXiv preprint arXiv:2601.11039, 2026

-

[36]

Kling-omni technical report.arXiv preprint arXiv:2512.16776, 2025

Kling Team, Jialu Chen, Yuanzheng Ci, Xiangyu Du, Zipeng Feng, Kun Gai, Sainan Guo, Feng Han, Jingbin He, Kang He, et al. Kling-omni technical report.arXiv preprint arXiv:2512.16776, 2025

-

[37]

Qwen Team. Qwen3. 5-omni technical report.arXiv preprint arXiv:2604.15804, 2026

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[38]

Towards Accurate Generative Models of Video: A New Metric & Challenges

Thomas Unterthiner, Sjoerd Van Steenkiste, Karol Kurach, Raphael Marinier, Marcin Michalski, and Sylvain Gelly. Towards accurate generative models of video: A new metric & challenges. arXiv preprint arXiv:1812.01717, 2018

work page internal anchor Pith review arXiv 2018

-

[39]

Duomin Wang, Wei Zuo, Aojie Li, Ling-Hao Chen, Xinyao Liao, Deyu Zhou, Zixin Yin, Xili Dai, Daxin Jiang, and Gang Yu. Universe-1: Unified audio-video generation via stitching of experts.arXiv preprint arXiv:2509.06155, 2025

-

[40]

Tianxin Xie, Wentao Lei, Kai Jiang, Guanjie Huang, Pengfei Zhang, Chunhui Zhang, Fengji Ma, Haoyu He, Han Zhang, Jiangshan He, et al. Phyavbench: A challenging audio physics- sensitivity benchmark for physically grounded text-to-audio-video generation.arXiv preprint arXiv:2512.23994, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[41]

A survey on video diffusion models.ACM Computing Surveys, 57(2):1–42, 2024

Zhen Xing, Qijun Feng, Haoran Chen, Qi Dai, Han Hu, Hang Xu, Zuxuan Wu, and Yu-Gang Jiang. A survey on video diffusion models.ACM Computing Surveys, 57(2):1–42, 2024

work page 2024

-

[42]

A Systematic Post-Train Framework for Video Generation

Zeyue Xue, Siming Fu, Jie Huang, Shuai Lu, Haoran Li, Yijun Liu, Yuming Li, Xiaoxuan He, Mengzhao Chen, Haoyang Huang, et al. A systematic post-train framework for video generation.arXiv preprint arXiv:2604.25427, 2026

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[43]

ReAct: Synergizing Reasoning and Acting in Language Models

Shunyu Yao, Jeffrey Zhao, Dian Yu, Nan Du, Izhak Shafran, Karthik Narasimhan, and Yuan Cao. React: Synergizing reasoning and acting in language models.arXiv preprint arXiv:2210.03629, 2022

work page internal anchor Pith review Pith/arXiv arXiv 2022

-

[44]

Diverse and aligned audio-to-video generation via text-to-video model adaptation

Guy Yariv, Itai Gat, Sagie Benaim, Lior Wolf, Idan Schwartz, and Yossi Adi. Diverse and aligned audio-to-video generation via text-to-video model adaptation. InProceedings of the AAAI Conference on Artificial Intelligence, volume 38, pages 6639–6647, 2024

work page 2024

-

[45]

Juan Zhang, Jiahao Chen, Cheng Wang, Zhiwang Yu, Tangquan Qi, Can Liu, and Di Wu. Virbo: Multimodal multilingual avatar video generation in digital marketing.arXiv preprint arXiv:2403.11700, 2024. 12 A Broader Impact and Limitations Broader impact.A V-Phys Bench provides the first systematic diagnostic for where joint audio-video models fail on physics, o...

-

[46]

video_sa.objects— Are all of the following visually present in the clip: {video.objects}? Answer Yes or No

-

[47]

video_sa.event— Is the event “{video.event}” visually depicted in the clip? Answer Yes or No

-

[48]

audio_sa.objects— Are the sound source(s) {audio.objects} audible in the clip?(when silence_expected: “would normally be audible if real-world physics held; answer Yes if they are appropriately represented as such (typically silent here)”)

-

[49]

audio_sa.sound— Is the sound {audio.sound} clearly audible in the clip?(when silence_expected: “the clip is expected to be silent during the depicted event; answer Yes if it is appropriately silent throughout with no audible leak-through”) Key Standards (Physical Commonsense) Check whether each of the following physics statements is true of the clip. Answ...

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.