Recognition: 2 theorem links

· Lean TheoremStructured Recurrent Mixers for Massively Parallelized Sequence Generation

Pith reviewed 2026-05-12 01:24 UTC · model grok-4.3

The pith

The Structured Recurrent Mixer enables algebraic conversion between parallel training and recurrent inference representations.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

The Structured Recurrent Mixer is an architecture that allows for algebraic conversion between a sequence parallel representation at train time and a recurrent representation at inference, notably without the need for specialized kernels or device-specific memory management. This dual representation allows for greater training efficiency, higher input information capacity, and larger inference throughput and concurrency when compared to other linear complexity models. Mojo/MAX inference implementations of SRMs exhibit 12x the throughput and 170x the concurrency of similarly powerful Transformers inferenced on vLLM, increases characteristic of Pytorch implementations resulting in a 30%增加 in

What carries the argument

Structured Recurrent Mixer supporting algebraic conversion between sequence-parallel and recurrent representations

If this is right

- SRMs achieve greater training efficiency than other linear complexity models.

- They support higher input information capacity.

- Inference delivers larger throughput and concurrency, reaching 12x and 170x over vLLM Transformers.

- This produces a 30% increase in compute-constant GSM8k Pass@k.

- SRMs serve as effective candidates for reinforcement learning training.

Where Pith is reading between the lines

- If recurrent models scale better in the batch dimension than in sequence length, inference hardware could be redesigned around high-concurrency batch processing rather than long-context optimizations.

- The portable algebraic conversion without custom kernels could allow the same model weights to run efficiently on a wider range of standard hardware.

- The approach may extend to other sequence modeling domains where the training-inference efficiency trade-off is currently limiting.

Load-bearing premise

The algebraic conversion between parallel and recurrent representations preserves full model capacity and performance without information loss or the need for device-specific optimizations, and that experimental comparisons to other models are conducted under equivalent conditions.

What would settle it

Training an SRM, converting it to its recurrent form, and measuring a drop in GSM8k Pass@k relative to the parallel version or to a matched-capacity transformer would indicate loss of capacity or inequivalent conditions.

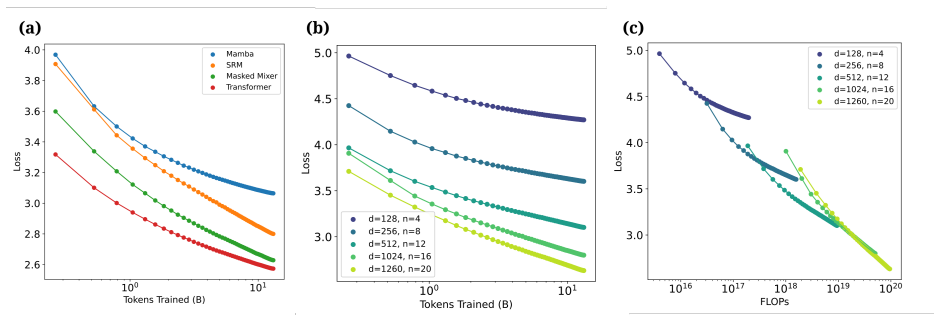

Figures

read the original abstract

Over the last two decades, language modeling has experienced a shift from predominantly recurrent architectures that process tokens sequentially during training and inference to non-recurrent models that process sequence elements in parallel during training, which results in greater training efficiency and stability at the expense of lower inference throughput. Here we introduce the Structured Recurrent Mixer, an architecture that allows for algebraic conversion between a sequence parallel representation at train time and a recurrent representation at inference, notably without the need for specialized kernels or device-specific memory management. We show experimentally that this dual representation allows for greater training efficiency, higher input information capacity, and larger inference throughput and concurrency when compared to other linear complexity models. We postulate that recurrent models are poorly suited to extended sequence length scaling for information-rich inputs typical of language, but are well suited to scaling in the sample (batch) dimension due to their constant memory per sample. We provide Mojo/MAX inference implementations of SRMs exhibiting 12x the throughput and 170x the concurrency of similarly powerful Transformers inferenced on vLLM, increases characteristic of Pytorch implementations resulting in a 30\% increase in compute-constant GSM8k Pass@k. We conclude by demonstrating that SRMs are effective reinforcement learning training candidates.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces the Structured Recurrent Mixer (SRM), an architecture supporting algebraic conversion between a sequence-parallel representation for training and a recurrent representation for inference, without requiring specialized kernels. The authors claim this duality yields greater training efficiency, higher input information capacity, and substantially larger inference throughput and concurrency than other linear-complexity models. They report Mojo/MAX implementations achieving 12× throughput and 170× concurrency relative to equivalently powerful Transformers run on vLLM, a 30% lift in compute-constant GSM8K Pass@k, and suitability as RL training candidates. The work also postulates that recurrent forms are better suited to batch-dimension scaling than to long-sequence scaling for language-like inputs.

Significance. If the algebraic mapping is exactly invertible and capacity-preserving, the SRM could meaningfully reconcile the training advantages of parallel models with the inference advantages of recurrent ones, especially in high-concurrency and batch-scaling regimes. The provision of concrete, runnable inference code is a concrete strength that would allow direct verification of the reported speedups.

major comments (3)

- [Abstract and §3 (conversion description)] The central claim that the parallel-to-recurrent conversion is exactly invertible and preserves full model capacity without information loss or device-specific approximations is asserted but not derived. No explicit algebraic mapping, invertibility proof, or analysis of state accumulation or norm growth appears in the abstract or early sections; the experimental numbers (12× throughput, 170× concurrency) are therefore not yet shown to follow from a purely algebraic property rather than implementation details.

- [Experimental results (§5) and Table 1] Table 1 and the GSM8K results paragraph: the 30% Pass@k improvement is reported under “compute-constant” conditions, yet no data-split details, baseline implementation descriptions, hyperparameter matching protocol, or error bars are supplied. Without these, it is impossible to determine whether the gain is attributable to the SRM architecture or to differences in training regime or evaluation.

- [Discussion and §4 (postulate)] The postulate that recurrent models are poorly suited to extended sequence lengths but well suited to batch scaling is stated without supporting scaling curves or theoretical argument. The manuscript therefore leaves open whether the reported concurrency gains generalize beyond the tested batch sizes or whether hidden assumptions (e.g., bounded hidden-state norms) are required for the conversion to remain exact at scale.

minor comments (2)

- [§3] Notation for the dual representations is introduced without a compact summary table; a single table listing the parallel and recurrent forms side-by-side would improve readability.

- [Appendix] The Mojo/MAX implementation details are referenced but not linked or summarized; a short appendix with pseudocode or kernel signatures would help readers reproduce the throughput numbers.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed feedback. We address each major comment below with clarifications drawn directly from the manuscript and indicate the revisions we will make to improve clarity and reproducibility.

read point-by-point responses

-

Referee: [Abstract and §3 (conversion description)] The central claim that the parallel-to-recurrent conversion is exactly invertible and preserves full model capacity without information loss or device-specific approximations is asserted but not derived. No explicit algebraic mapping, invertibility proof, or analysis of state accumulation or norm growth appears in the abstract or early sections; the experimental numbers (12× throughput, 170× concurrency) are therefore not yet shown to follow from a purely algebraic property rather than implementation details.

Authors: The explicit algebraic mapping, including the conversion matrices and proof of exact invertibility (via orthogonality ensuring no information loss or norm growth), is derived in Section 3. The abstract and early sections state the consequence of this duality but do not repeat the full derivation for brevity. To address the concern, we will revise the abstract to include a one-sentence reference to the algebraic invertibility and add a concise summary paragraph plus norm-growth analysis at the start of §3. This will make clear that the reported throughput and concurrency gains follow directly from the algebraic property, as independently verifiable with the provided Mojo/MAX inference code. revision: yes

-

Referee: [Experimental results (§5) and Table 1] Table 1 and the GSM8K results paragraph: the 30% Pass@k improvement is reported under “compute-constant” conditions, yet no data-split details, baseline implementation descriptions, hyperparameter matching protocol, or error bars are supplied. Without these, it is impossible to determine whether the gain is attributable to the SRM architecture or to differences in training regime or evaluation.

Authors: We agree that these details are essential for assessing the source of the improvement. The manuscript already specifies compute-constant training (equalized FLOPs and wall-clock time) and uses standard GSM8K splits, but we will expand §5 and Table 1 to explicitly list: the train/test split sizes, baseline model implementations with matched hyperparameters (learning rate, batch size, and optimizer settings taken from the original papers), the exact protocol used to equalize compute across models, and error bars from five independent runs with different random seeds. These additions will confirm that the 30% Pass@k gain is attributable to SRM's higher information capacity. revision: yes

-

Referee: [Discussion and §4 (postulate)] The postulate that recurrent models are poorly suited to extended sequence lengths but well suited to batch scaling is stated without supporting scaling curves or theoretical argument. The manuscript therefore leaves open whether the reported concurrency gains generalize beyond the tested batch sizes or whether hidden assumptions (e.g., bounded hidden-state norms) are required for the conversion to remain exact at scale.

Authors: The postulate follows from the constant per-sample memory of the recurrent form (enabling batch-dimension scaling) versus the fixed hidden-state size limiting capacity on long, information-dense sequences. We will add a short theoretical paragraph in the discussion section deriving this from the state-size invariance and confirming that our formulation maintains bounded norms under the algebraic conversion. The concurrency results already span a range of batch sizes; we will explicitly state the tested range and the bounded-norm assumption. No additional scaling curves are available in the current experiments, but the algebraic exactness holds independently of batch size within the analyzed regime. revision: partial

Circularity Check

No circularity: claims rest on experimental comparisons and asserted algebraic equivalence

full rationale

The paper's central contribution is the introduction of the Structured Recurrent Mixer with an algebraic (not fitted) conversion between parallel training and recurrent inference representations. All reported gains (training efficiency, information capacity, 12x throughput, 170x concurrency, 30% GSM8k lift) are presented as outcomes of external experimental benchmarks against other linear-complexity models and specific Mojo/MAX implementations. No equations, parameter fits, self-citations, or uniqueness theorems appear in the provided text that reduce any result to its own inputs by construction. The derivation chain is therefore self-contained against external validation.

Axiom & Free-Parameter Ledger

invented entities (1)

-

Structured Recurrent Mixer

no independent evidence

Lean theorems connected to this paper

-

IndisputableMonolith/Cost/FunctionalEquation.leanwashburn_uniqueness_aczel unclearalgebraic conversion between a sequence parallel representation at train time and a recurrent representation at inference, notably without the need for specialized kernels

-

IndisputableMonolith/Foundation/ArithmeticFromLogic.leanembed_strictMono_of_one_lt unclearrecurrent models are poorly suited to extended sequence length scaling ... but are well suited to scaling in the sample (batch) dimension due to their constant memory per sample

Reference graph

Works this paper leans on

-

[1]

Proceedings of the 29th symposium on operating systems principles , pages=

Efficient memory management for large language model serving with pagedattention , author=. Proceedings of the 29th symposium on operating systems principles , pages=

-

[3]

DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models , author=. 2024 , eprint=

work page 2024

-

[4]

Understanding R1-Zero-Like Training: A Critical Perspective , author=. 2025 , eprint=

work page 2025

- [5]

-

[6]

The Curious Case of Neural Text Degeneration , author=. 2020 , eprint=

work page 2020

-

[7]

Large Language Monkeys: Scaling Inference Compute with Repeated Sampling , author=. 2024 , eprint=

work page 2024

- [8]

-

[9]

Hungry Hungry Hippos: Towards Language Modeling with State Space Models , author=. 2023 , eprint=

work page 2023

-

[10]

Mamba: Linear-Time Sequence Modeling with Selective State Spaces , author=. 2024 , eprint=

work page 2024

-

[11]

Transformers are SSMs: Generalized Models and Efficient Algorithms Through Structured State Space Duality , author=. 2024 , eprint=

work page 2024

-

[12]

Long Short-Term Memory , year=

Hochreiter, Sepp and Schmidhuber, Jürgen , journal=. Long Short-Term Memory , year=

-

[13]

Masked Mixers for Language Generation and Retrieval , author=. 2025 , eprint=

work page 2025

-

[14]

Language Model Memory and Memory Models for Language , author=. 2026 , eprint=

work page 2026

-

[15]

RWKV: Reinventing RNNs for the Transformer Era , author=. 2023 , eprint=

work page 2023

-

[16]

Chain-of-Thought Prompting Elicits Reasoning in Large Language Models , author=. 2023 , eprint=

work page 2023

-

[17]

European conference on machine learning , pages=

Bandit based monte-carlo planning , author=. European conference on machine learning , pages=. 2006 , organization=

work page 2006

-

[18]

International conference on computers and games , pages=

Efficient selectivity and backup operators in Monte-Carlo tree search , author=. International conference on computers and games , pages=. 2006 , organization=

work page 2006

-

[19]

ReST-MCTS*: LLM Self-Training via Process Reward Guided Tree Search , author=. 2024 , eprint=

work page 2024

-

[20]

Think you have Solved Question Answering? Try ARC, the AI2 Reasoning Challenge , author=. ArXiv , year=

-

[21]

HellaSwag: Can a Machine Really Finish Your Sentence? , author=. 2019 , eprint=

work page 2019

-

[22]

The LAMBADA dataset: Word prediction requiring a broad discourse context , author=. 2016 , eprint=

work page 2016

-

[23]

SQuAD: 100,000+ Questions for Machine Comprehension of Text , author=. 2016 , eprint=

work page 2016

-

[26]

Instruction-Following Evaluation for Large Language Models , author=. 2023 , eprint=

work page 2023

-

[27]

Simple linear attention language models balance the recall-throughput tradeoff , author=. 2024 , eprint=

work page 2024

-

[28]

It's All in the Heads: Using Attention Heads as a Baseline for Cross-Lingual Transfer in Commonsense Reasoning , author=. 2021 , eprint=

work page 2021

-

[29]

doi:10.5281/zenodo.12608602 , url =

Gao, Leo and Tow, Jonathan and Abbasi, Baber and Biderman, Stella and Black, Sid and DiPofi, Anthony and Foster, Charles and Golding, Laurence and Hsu, Jeffrey and Le Noac'h, Alain and Li, Haonan and McDonell, Kyle and Muennighoff, Niklas and Ociepa, Chris and Phang, Jason and Reynolds, Laria and Schoelkopf, Hailey and Skowron, Aviya and Sutawika, Lintang...

-

[30]

Learning internal representations by error propagation , author=

-

[31]

Granite Code Models: A Family of Open Foundation Models for Code Intelligence , author=. 2024 , eprint=

work page 2024

-

[32]

NVIDIA Nemotron 3: Efficient and Open Intelligence , author=. 2025 , eprint=

work page 2025

-

[33]

Kimi Linear: An Expressive, Efficient Attention Architecture , author=. 2025 , eprint=

work page 2025

-

[34]

Transformers are RNNs: Fast Autoregressive Transformers with Linear Attention , author=. 2020 , eprint=

work page 2020

-

[35]

Zhuoran Shen and Mingyuan Zhang and Haiyu Zhao and Shuai Yi and Hongsheng Li , title =. CoRR , volume =. 2018 , url =

work page 2018

-

[36]

Hyena Hierarchy: Towards Larger Convolutional Language Models , author=. 2023 , eprint=

work page 2023

-

[37]

Programming massively parallel processors: a hands-on approach , author=. 2016 , publisher=

work page 2016

- [38]

- [39]

- [40]

- [41]

- [42]

-

[43]

Llama 2: Open Foundation and Fine-Tuned Chat Models , author=. 2023 , eprint=

work page 2023

-

[44]

von Werra, Leandro and Belkada, Younes and Tunstall, Lewis and Beeching, Edward and Thrush, Tristan and Lambert, Nathan and Huang, Shengyi and Rasul, Kashif and Gallouédec, Quentin , license =

-

[45]

ARC-AGI-3: A New Challenge for Frontier Agentic Intelligence , author=. 2026 , eprint=

work page 2026

-

[46]

Large Language Monkeys: Scaling Inference Compute with Repeated Sampling

Bradley Brown, Jordan Juravsky, Ryan Ehrlich, Ronald Clark, Quoc V. Le, Christopher Ré, and Azalia Mirhoseini. Large language monkeys: Scaling inference compute with repeated sampling, 2024. URL https://arxiv.org/abs/2407.21787

work page internal anchor Pith review arXiv 2024

-

[47]

Parallel scaling law for language models, 2025

Mouxiang Chen, Binyuan Hui, Zeyu Cui, Jiaxi Yang, Dayiheng Liu, Jianling Sun, Junyang Lin, and Zhongxin Liu. Parallel scaling law for language models, 2025. URL https://arxiv.org/abs/2505.10475

-

[48]

ARC-AGI-3: A New Challenge for Frontier Agentic Intelligence

ARC Prize Foundation. Arc-agi-3: A new challenge for frontier agentic intelligence, 2026. URL https://arxiv.org/abs/2603.24621

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[49]

Learning internal representations by error propagation

David E Rumelhart, Geoffrey E Hinton, and Ronald J Williams. Learning internal representations by error propagation. Technical report, 1985

work page 1985

-

[50]

Sepp Hochreiter and Jürgen Schmidhuber. Long short-term memory. Neural Computation, 9 0 (8): 0 1735--1780, 1997. doi:10.1162/neco.1997.9.8.1735

-

[51]

Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser, and Illia Polosukhin. Attention is all you need. 2023. URL https://arxiv.org/abs/1706.03762

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[52]

Cox, Ruchir Puri, and Rameswar Panda

Mayank Mishra, Matt Stallone, Gaoyuan Zhang, Yikang Shen, Aditya Prasad, Adriana Meza Soria, Michele Merler, Parameswaran Selvam, Saptha Surendran, Shivdeep Singh, Manish Sethi, Xuan-Hong Dang, Pengyuan Li, Kun-Lung Wu, Syed Zawad, Andrew Coleman, Matthew White, Mark Lewis, Raju Pavuluri, Yan Koyfman, Boris Lublinsky, Maximilien de Bayser, Ibrahim Abdelaz...

-

[53]

https://www.ibm.com/granite/docs/models/granite

G ranite 4.0 - I B M G ranite --- ibm.com. https://www.ibm.com/granite/docs/models/granite. [Accessed 16-04-2026]

work page 2026

-

[54]

Nvidia nemotron 3: Efficient and open intelligence, 2025

NVIDIA: Aaron Blakeman and Aaron Grattafiori et al. Nvidia nemotron 3: Efficient and open intelligence, 2025. URL https://arxiv.org/abs/2512.20856

-

[55]

Qwen3.5 : Towards native multimodal agents, February 2026

Qwen Team . Qwen3.5 : Towards native multimodal agents, February 2026. URL https://qwen.ai/blog?id=qwen3.5

work page 2026

-

[56]

Kimi Team, Yu Zhang, Zongyu Lin, Xingcheng Yao, Jiaxi Hu, Fanqing Meng, Chengyin Liu, Xin Men, Songlin Yang, Zhiyuan Li, Wentao Li, Enzhe Lu, Weizhou Liu, Yanru Chen, Weixin Xu, Longhui Yu, Yejie Wang, Yu Fan, Longguang Zhong, Enming Yuan, Dehao Zhang, Yizhi Zhang, T. Y. Liu, Haiming Wang, Shengjun Fang, Weiran He, Shaowei Liu, Yiwei Li, Jianlin Su, Jiezh...

work page internal anchor Pith review arXiv 2025

-

[57]

Scaling Laws for Neural Language Models

Jared Kaplan, Sam McCandlish, Tom Henighan, Tom B. Brown, Benjamin Chess, Rewon Child, Scott Gray, Alec Radford, Jeffrey Wu, and Dario Amodei. Scaling laws for neural language models, 2020. URL https://arxiv.org/abs/2001.08361

work page internal anchor Pith review Pith/arXiv arXiv 2020

-

[58]

Angelos Katharopoulos, Apoorv Vyas, Nikolaos Pappas, and François Fleuret. Transformers are rnns: Fast autoregressive transformers with linear attention, 2020. URL https://arxiv.org/abs/2006.16236

-

[59]

Efficient attention: Attention with linear complexities.arXiv preprint arXiv:1812.01243, 2018

Zhuoran Shen, Mingyuan Zhang, Haiyu Zhao, Shuai Yi, and Hongsheng Li. Efficient attention: Attention with linear complexities. CoRR, abs/1812.01243, 2018. URL http://arxiv.org/abs/1812.01243

-

[60]

Fu, Tri Dao, Stephen Baccus, Yoshua Bengio, Stefano Ermon, and Christopher Ré

Michael Poli, Stefano Massaroli, Eric Nguyen, Daniel Y. Fu, Tri Dao, Stephen Baccus, Yoshua Bengio, Stefano Ermon, and Christopher Ré. Hyena hierarchy: Towards larger convolutional language models, 2023. URL https://arxiv.org/abs/2302.10866

-

[61]

RWKV: Reinventing RNNs for the Transformer Era

Bo Peng, Eric Alcaide, Quentin Anthony, Alon Albalak, Samuel Arcadinho, Stella Biderman, Huanqi Cao, Xin Cheng, Michael Chung, Matteo Grella, Kranthi Kiran GV, Xuzheng He, Haowen Hou, Jiaju Lin, Przemyslaw Kazienko, Jan Kocon, Jiaming Kong, Bartlomiej Koptyra, Hayden Lau, Krishna Sri Ipsit Mantri, Ferdinand Mom, Atsushi Saito, Guangyu Song, Xiangru Tang, ...

work page internal anchor Pith review arXiv 2023

-

[62]

Daniel Y. Fu, Tri Dao, Khaled K. Saab, Armin W. Thomas, Atri Rudra, and Christopher Ré. Hungry hungry hippos: Towards language modeling with state space models, 2023. URL https://arxiv.org/abs/2212.14052

-

[63]

Mamba: Linear-Time Sequence Modeling with Selective State Spaces

Albert Gu and Tri Dao. Mamba: Linear-time sequence modeling with selective state spaces, 2024. URL https://arxiv.org/abs/2312.00752

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[64]

Tri Dao and Albert Gu. Transformers are ssms: Generalized models and efficient algorithms through structured state space duality, 2024. URL https://arxiv.org/abs/2405.21060

work page internal anchor Pith review Pith/arXiv arXiv 2024

- [65]

-

[66]

Accelerating toeplitz neural network with constant-time inference complexity

Zhen Qin and Yiran Zhong. Accelerating toeplitz neural network with constant-time inference complexity. In Houda Bouamor, Juan Pino, and Kalika Bali, editors, Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pages 12206--12215, Singapore, December 2023. Association for Computational Linguistics. doi:10.18653/v1/2023....

-

[67]

Llama 2: Open Foundation and Fine-Tuned Chat Models

Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, Dan Bikel, Lukas Blecher, Cristian Canton Ferrer, Moya Chen, Guillem Cucurull, David Esiobu, Jude Fernandes, Jeremy Fu, Wenyin Fu, Brian Fuller, Cynthia Gao, Vedanuj Goswami, Naman Goyal, Anthony Harts...

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[68]

Think you have Solved Question Answering? Try ARC, the AI2 Reasoning Challenge

Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. Think you have solved question answering? try arc, the ai2 reasoning challenge. ArXiv, abs/1803.05457, 2018

work page internal anchor Pith review Pith/arXiv arXiv 2018

-

[69]

HellaSwag: Can a Machine Really Finish Your Sentence?

Rowan Zellers, Ari Holtzman, Yonatan Bisk, Ali Farhadi, and Yejin Choi. Hellaswag: Can a machine really finish your sentence?, 2019. URL https://arxiv.org/abs/1905.07830

work page internal anchor Pith review Pith/arXiv arXiv 2019

-

[70]

arXiv preprint arXiv:1606.06031 , year=

Denis Paperno, Germán Kruszewski, Angeliki Lazaridou, Quan Ngoc Pham, Raffaella Bernardi, Sandro Pezzelle, Marco Baroni, Gemma Boleda, and Raquel Fernández. The lambada dataset: Word prediction requiring a broad discourse context, 2016. URL https://arxiv.org/abs/1606.06031

-

[71]

SQuAD: 100,000+ Questions for Machine Comprehension of Text

Pranav Rajpurkar, Jian Zhang, Konstantin Lopyrev, and Percy Liang. Squad: 100,000+ questions for machine comprehension of text, 2016. URL https://arxiv.org/abs/1606.05250

work page internal anchor Pith review arXiv 2016

-

[72]

Pranav Rajpurkar, Robin Jia, and Percy Liang. Know what you don ' t know: Unanswerable questions for SQ u AD . In Iryna Gurevych and Yusuke Miyao, editors, Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), pages 784--789, Melbourne, Australia, July 2018. Association for Computational Linguist...

-

[73]

Yushi Bai, Xin Lv, Jiajie Zhang, Hongchang Lyu, Jiankai Tang, Zhidian Huang, Zhengxiao Du, Xiao Liu, Aohan Zeng, Lei Hou, Yuxiao Dong, Jie Tang, and Juanzi Li. L ong B ench: A bilingual, multitask benchmark for long context understanding. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pa...

-

[74]

Instruction-Following Evaluation for Large Language Models

Jeffrey Zhou, Tianjian Lu, Swaroop Mishra, Siddhartha Brahma, Sujoy Basu, Yi Luan, Denny Zhou, and Le Hou. Instruction-following evaluation for large language models, 2023. URL https://arxiv.org/abs/2311.07911

work page internal anchor Pith review Pith/arXiv arXiv 2023

-

[75]

Simple linear attention language models balance the recall-throughput tradeoff, 2024

Simran Arora, Sabri Eyuboglu, Michael Zhang, Aman Timalsina, Silas Alberti, Dylan Zinsley, James Zou, Atri Rudra, and Christopher Ré. Simple linear attention language models balance the recall-throughput tradeoff, 2024

work page 2024

-

[76]

Alexey Tikhonov and Max Ryabinin. It's all in the heads: Using attention heads as a baseline for cross-lingual transfer in commonsense reasoning, 2021

work page 2021

-

[77]

Leo Gao, Jonathan Tow, Baber Abbasi, Stella Biderman, Sid Black, Anthony DiPofi, Charles Foster, Laurence Golding, Jeffrey Hsu, Alain Le Noac'h, Haonan Li, Kyle McDonell, Niklas Muennighoff, Chris Ociepa, Jason Phang, Laria Reynolds, Hailey Schoelkopf, Aviya Skowron, Lintang Sutawika, Eric Tang, Anish Thite, Ben Wang, Kevin Wang, and Andy Zou. The languag...

- [78]

-

[79]

Deepseek. Deepseek-v3 technical report. 2025. URL https://arxiv.org/abs/2412.19437

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[80]

M A X : A high-performance inference framework for A I --- modular.com

Modular. M A X : A high-performance inference framework for A I --- modular.com. https://www.modular.com/open-source/max. [Accessed 20-04-2026]

work page 2026

-

[81]

Efficient memory management for large language model serving with pagedattention

Woosuk Kwon, Zhuohan Li, Siyuan Zhuang, Ying Sheng, Lianmin Zheng, Cody Hao Yu, Joseph Gonzalez, Hao Zhang, and Ion Stoica. Efficient memory management for large language model serving with pagedattention. In Proceedings of the 29th symposium on operating systems principles, pages 611--626, 2023

work page 2023

-

[82]

Proximal Policy Optimization Algorithms

John Schulman, Filip Wolski, Prafulla Dhariwal, Alec Radford, and Oleg Klimov. Proximal policy optimization algorithms, 2017. URL https://arxiv.org/abs/1707.06347

work page internal anchor Pith review Pith/arXiv arXiv 2017

-

[83]

Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, Y. K. Li, Y. Wu, and Daya Guo. Deepseekmath: Pushing the limits of mathematical reasoning in open language models, 2024. URL https://arxiv.org/abs/2402.03300

work page internal anchor Pith review Pith/arXiv arXiv 2024

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.