Large Language Models for Causal Relations Extraction in Social Media: A Validation Framework for Disaster Intelligence

Pith reviewed 2026-05-13 02:46 UTC · model grok-4.3

The pith

Large language models can extract causal relations from disaster social media posts when validated against expert reference graphs.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

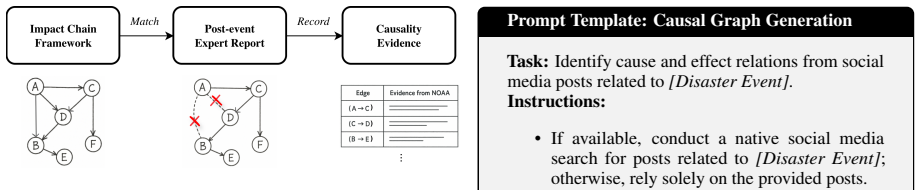

The authors propose an expert-grounded evaluation framework to assess large language models' ability to extract causal relations from disaster-related social media. By comparing LLM-generated causal graphs with reference graphs from disaster reports and verifying support from post-event evidence, they demonstrate the potential of LLMs for this task while highlighting risks of model priors influencing the outputs.

What carries the argument

The expert-grounded evaluation framework that compares LLM-generated causal graphs with reference graphs derived from disaster-specific reports.
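As a rough illustration of what such a comparison could look like, the sketch below treats each causal graph as a set of directed (cause, effect) edges and scores the LLM graph by edge-level precision, recall, and F1 against the reference. The summary above does not say which metric the authors use; exact matching on lowercased labels, the function names, and the example edges are all assumptions made for illustration.

```python
from typing import Iterable, Set, Tuple

Edge = Tuple[str, str]  # (cause, effect)


def normalize(edges: Iterable[Edge]) -> Set[Edge]:
    """Lowercase and strip labels so trivially different spellings match."""
    return {(c.strip().lower(), e.strip().lower()) for c, e in edges}


def edge_scores(llm_edges: Iterable[Edge], ref_edges: Iterable[Edge]) -> dict:
    """Edge-level precision, recall, and F1 of an LLM graph against a reference."""
    llm, ref = normalize(llm_edges), normalize(ref_edges)
    matched = llm & ref
    precision = len(matched) / len(llm) if llm else 0.0
    recall = len(matched) / len(ref) if ref else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if matched else 0.0
    return {
        "precision": precision,
        "recall": recall,
        "f1": f1,
        # Edges the model produced that the reference does not contain.
        "unsupported": sorted(llm - ref),
    }


# Hypothetical edges, not taken from the paper.
reference = [
    ("storm surge", "flooding"),
    ("flooding", "road closure"),
    ("high wind", "power outage"),
]
extracted = [
    ("Storm surge", "flooding"),
    ("flooding", "road closure"),
    ("power outage", "looting"),
]

scores = edge_scores(extracted, reference)
print(scores)
```

Exact string matching is the simplest possible choice here; a real comparison would likely need some form of label alignment, since posts and reports rarely name the same event in the same words.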

If this is right

- Causal extractions from social media can strengthen real-time situational awareness of casualties, damage, and infrastructure effects.

- Decision-support systems can use the framework to decide whether to trust LLM outputs or to filter model-prior effects.

- The same validation steps can flag when models introduce unsupported causal claims instead of grounding them in post content.

- Validated causal graphs could feed into broader disaster response tools once their reliability against reports is confirmed.

Where Pith is reading between the lines

- The framework could transfer to other noisy social-media domains such as public-health or supply-chain discussions.

- Running the same checks on live tweet streams rather than archived posts would test whether the method scales to ongoing events.

- Combining the extracted graphs with sensor or official report data streams could reduce reliance on any single source.

Load-bearing premise

Reference graphs derived from disaster reports accurately represent the true causal relations present in the corresponding social media posts.

What would settle it

A direct side-by-side comparison in which LLM graphs diverge substantially from reference graphs on the same posts, or in which many extracted relations lack supporting post-event evidence, would falsify the claim of effective extraction.

Original abstract

During disasters, extracting causal relations from social media can strengthen situational awareness by identifying factors linked to casualties, physical damage, infrastructure disruption, and cascading impacts. However, disaster-related posts are often informal, fragmented, and context-dependent, and they may describe personal experiences rather than explicit causal relations. In this work, we examine whether Large Language Models (LLMs) can effectively extract causal relations from disaster-related social media posts. To this end, we (1) propose an expert-grounded evaluation framework that compares LLM-generated causal graphs with reference graphs derived from disaster-specific reports and (2) assess whether the extracted relations are supported by post-event evidence or instead reflect model priors. Our findings highlight both the potential and risks of using LLMs for causal relation extraction in disaster decision-support systems.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper proposes an expert-grounded evaluation framework for assessing whether Large Language Models can extract causal relations from informal, fragmented disaster-related social media posts. The framework compares LLM-generated causal graphs to reference graphs derived from disaster-specific reports and evaluates whether extracted relations are supported by post-event evidence or instead reflect model priors, with the goal of informing the use of LLMs in disaster decision-support systems.

Significance. If the central methodological concern is resolved, the work could offer a structured approach to validating causal extraction from noisy social media data in high-stakes settings. This would be relevant for disaster intelligence applications where identifying factors linked to casualties, damage, and cascading effects is critical. The paper does not report machine-checked proofs, reproducible code, or parameter-free derivations.

Major comments (1)

- Section 3 (framework) and the evaluation protocol: reference graphs are constructed from disaster reports rather than from expert annotation of the specific social media posts. Because posts are described as informal and limited to personal experience while reports aggregate broader evidence, the two sources can contain non-overlapping relations. Any mismatch then conflates LLM failure to extract what is in the post with the reference containing information the post never stated. No verification step is described to ensure reference relations are entailed by the input posts, which is load-bearing for the claim that the framework reliably measures extraction performance.
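The confound can be made concrete with a small sketch. Assume, hypothetically, that annotators mark each reference relation as entailed by the posts or not. Recall computed against the full report-derived reference then understates extraction performance relative to recall against the post-entailed subset. All edges and the `entailed_by_posts` labels below are invented for illustration and do not come from the paper.

```python
def recall(extracted: set, reference: set) -> float:
    """Share of reference edges that the extraction recovered."""
    return len(extracted & reference) / len(reference) if reference else 0.0


# Hypothetical report-derived reference edges, each flagged by whether
# the input posts actually state or entail the relation.
entailed_by_posts = {
    ("flooding", "road closure"): True,
    ("high wind", "power outage"): True,
    ("levee failure", "flooding"): False,            # appears only in the report
    ("power outage", "hospital evacuation"): False,  # appears only in the report
}

# Hypothetical LLM output for the same posts.
extracted = {("flooding", "road closure"), ("high wind", "power outage")}

full_reference = set(entailed_by_posts)
post_grounded = {edge for edge, ok in entailed_by_posts.items() if ok}

# Penalizes the model for report-only edges it could not have seen.
recall_full = recall(extracted, full_reference)
# Measures extraction only against what the posts support.
recall_grounded = recall(extracted, post_grounded)
print(recall_full, recall_grounded)
```

In this toy case the model recovers everything the posts contain, yet scores only half against the unfiltered reference, which is the gap the referee is pointing at.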

Minor comments (1)

- The abstract says the findings highlight both the potential and the risks of LLM-based extraction, but it supplies no quantitative metrics, error analysis, data exclusion criteria, or concrete examples of extracted relations set against reference graphs.

Simulated Author's Rebuttal

We thank the referee for their constructive and detailed feedback. The major comment identifies a substantive issue in the evaluation protocol, which we address directly below. We have revised the manuscript to incorporate a verification step and clarify the framework's objectives.

Point-by-point responses

Referee: Section 3 (framework) and the evaluation protocol: reference graphs are constructed from disaster reports rather than from expert annotation of the specific social media posts. Because posts are described as informal and limited to personal experience while reports aggregate broader evidence, the two sources can contain non-overlapping relations. Any mismatch then conflates LLM failure to extract what is in the post with the reference containing information the post never stated. No verification step is described to ensure reference relations are entailed by the input posts, which is load-bearing for the claim that the framework reliably measures extraction performance.

Authors: We agree that this distinction is important and that the original manuscript description risks the conflation noted. Our framework is intentionally designed to validate LLM-extracted relations against post-event evidence from authoritative reports, as the practical goal in disaster intelligence is to surface causal links supported by broader evidence rather than to perform strict information extraction from implicit, personal posts. Nevertheless, we acknowledge that without an explicit check, mismatches could be misinterpreted as LLM shortcomings when the reference simply contains additional information. To address this, we will revise Section 3 to add an expert verification step: independent annotators will review each reference relation and confirm whether it is entailed by or reasonably inferable from the corresponding social media posts (with inter-annotator agreement reported). We will also discuss cases where report-derived relations extend beyond the posts. This addition strengthens the protocol while preserving the framework's focus on evidence-supported validation versus model priors. The revised manuscript reflects these changes.

Revision: partial
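The rebuttal promises that inter-annotator agreement will be reported but does not name a statistic. One common choice for two annotators is Cohen's kappa, and the sketch below computes it from scratch under that assumption. The annotator labels are hypothetical "entailed by the posts?" judgments, one per reference relation.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(a: Sequence[str], b: Sequence[str]) -> float:
    """Cohen's kappa for two annotators labeling the same items."""
    if len(a) != len(b) or not a:
        raise ValueError("annotations must be non-empty and of equal length")
    n = len(a)
    # Observed agreement: fraction of items with identical labels.
    observed = sum(x == y for x, y in zip(a, b)) / n
    # Chance agreement from each annotator's marginal label frequencies.
    count_a, count_b = Counter(a), Counter(b)
    labels = count_a.keys() | count_b.keys()
    expected = sum(count_a[label] * count_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical judgments: is each reference relation entailed by the posts?
annotator_1 = ["yes", "yes", "no", "no", "yes", "no", "yes", "no"]
annotator_2 = ["yes", "yes", "no", "yes", "yes", "no", "no", "no"]

kappa = cohens_kappa(annotator_1, annotator_2)
print(round(kappa, 3))
```

With more than two annotators, a multi-rater statistic would be needed instead; which one fits depends on details the rebuttal leaves open.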

Circularity Check

No circularity: evaluation uses independent external reference graphs from disaster reports

Full rationale

The paper's central evaluation compares LLM-extracted causal graphs against reference graphs derived from separate disaster-specific reports, which are external to the social media posts and LLM outputs. This setup does not reduce any result to its own inputs by definition, fitted parameters renamed as predictions, or self-citation chains. No equations, ansatzes, or uniqueness claims are presented that collapse the framework onto itself. The assessment of model priors versus post-event evidence is likewise grounded in the external reports rather than internal redefinition. The derivation chain remains self-contained against these benchmarks.

Axiom & Free-Parameter Ledger

Axioms (1)

- Domain assumption: Reference graphs derived from disaster-specific reports accurately represent the true causal relations in the social media posts under study.

