Recognition: unknown

FINER-SQL: Boosting Small Language Models for Text-to-SQL

Pith reviewed 2026-05-07 12:55 UTC · model grok-4.3

The pith

FINER-SQL uses dense rewards to let 3B language models match large LLMs on Text-to-SQL generation.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

FINER-SQL replaces sparse binary rewards with dense and interpretable rewards in a group relative policy optimization setup. It uses a memory reward to align reasoning with verified traces and an atomic reward to measure operation-level overlap, granting partial credit for structurally correct SQLs. This transforms discrete correctness into continuous learning, enabling stable optimization of small models without a critic.

What carries the argument

Memory reward for semantic stability through trace alignment and atomic reward for partial credit on operation overlap, both derived from execution feedback.

If this is right

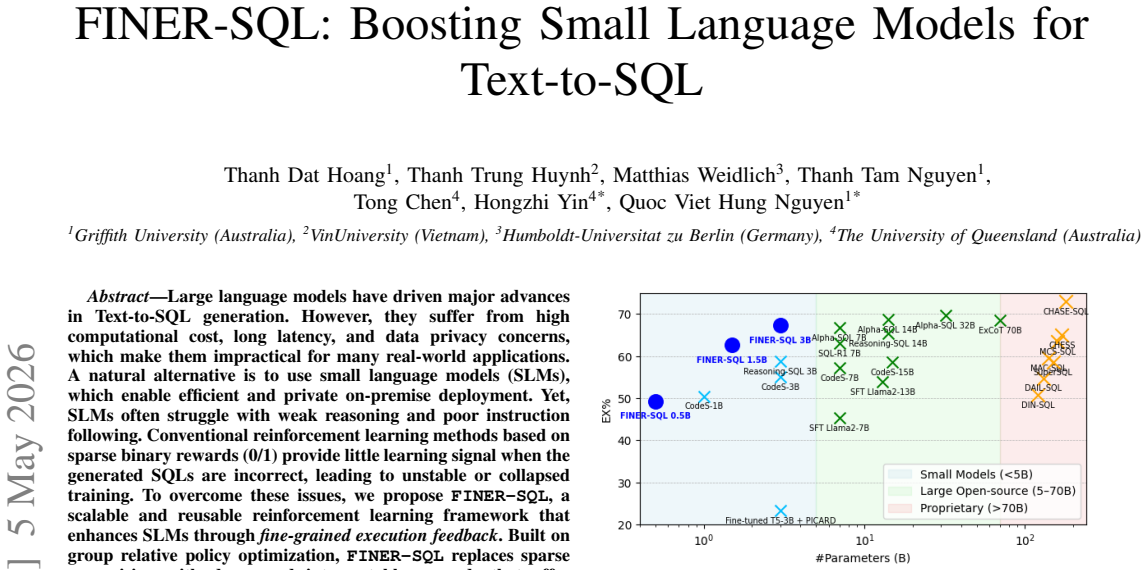

- A 3B parameter model reaches 67.73% execution accuracy on the BIRD benchmark.

- The same model attains 85% execution accuracy on the Spider benchmark.

- Inference time is reduced to 5.57 seconds per sample.

- The approach matches the performance of much larger language models while enabling on-premise deployment.

Where Pith is reading between the lines

- This reward design may extend to other generation tasks where outputs can be partially evaluated through execution or verification.

- Local deployment of such models could mitigate concerns over sending sensitive data to external API providers.

- Combining these rewards with self-consistency checks might further improve reliability on complex queries.

Load-bearing premise

The memory and atomic reward functions supply stable, unbiased learning signals that improve generalization rather than encouraging reward hacking or overfitting to the specific benchmarks used.

What would settle it

Running the trained 3B model on a newly collected Text-to-SQL dataset with unseen schemas and measuring if its execution accuracy stays competitive with larger models or drops sharply.

Figures

read the original abstract

Large language models have driven major advances in Text-to-SQL generation. However, they suffer from high computational cost, long latency, and data privacy concerns, which make them impractical for many real-world applications. A natural alternative is to use small language models (SLMs), which enable efficient and private on-premise deployment. Yet, SLMs often struggle with weak reasoning and poor instruction following. Conventional reinforcement learning methods based on sparse binary rewards (0/1) provide little learning signal when the generated SQLs are incorrect, leading to unstable or collapsed training. To overcome these issues, we propose FINER-SQL, a scalable and reusable reinforcement learning framework that enhances SLMs through fine-grained execution feedback. Built on group relative policy optimization, FINER-SQL replaces sparse supervision with dense and interpretable rewards that offer continuous feedback even for incorrect SQLs. It introduces two key reward functions: a memory reward, which aligns reasoning with verified traces for semantic stability, and an atomic reward, which measures operation-level overlap to grant partial credit for structurally correct but incomplete SQLs. This approach transforms discrete correctness into continuous learning, enabling stable, critic-free optimization. Experiments on the BIRD and Spider benchmarks show that FINER-SQL achieves up to 67.73\% and 85\% execution accuracy with a 3B model -- matching much larger LLMs while reducing inference latency to 5.57~s/sample. These results highlight a cost-efficient and privacy-preserving path toward high-performance Text-to-SQL generation. Our code is available at https://github.com/thanhdath/finer-sql.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript proposes FINER-SQL, a reinforcement learning framework based on group relative policy optimization for improving small language models on Text-to-SQL tasks. It replaces sparse binary rewards with two dense rewards: a memory reward that aligns reasoning steps to verified execution traces and an atomic reward that grants partial credit based on operation-level structural overlap. Experiments on the BIRD and Spider benchmarks report that a 3B-parameter model reaches execution accuracies of up to 67.73% and 85%, respectively, while achieving an inference latency of 5.57 s/sample.

Significance. If the performance claims hold under rigorous controls, the work would demonstrate a viable route to accurate, low-latency, and privacy-preserving Text-to-SQL systems using modest hardware. The dense-reward formulation could also inform RL methods for other structured generation problems where binary feedback yields insufficient learning signal.

major comments (3)

- [Abstract] Abstract: The execution-accuracy figures (67.73% on BIRD, 85% on Spider) are stated without any accompanying information on the baselines employed, ablation studies that isolate the memory and atomic reward components, number of random seeds, standard deviations, or statistical tests.

- [Reward Design] Reward functions (memory reward and atomic reward): Both rewards are derived from execution feedback on the identical BIRD and Spider splits used for final reporting. No ablation or out-of-distribution evaluation is described that would demonstrate the rewards improve general SQL reasoning rather than optimizing surrogate signals (trace matching and partial structural overlap) on the evaluation sets themselves.

- [Experiments] Experiments section: The claim that the 3B model matches much larger LLMs lacks specification of the exact model sizes and training regimes of the comparators, as well as any controls or diagnostics for reward hacking during GRPO optimization.

minor comments (2)

- The GitHub link is given, yet the manuscript would benefit from an explicit reproducibility statement detailing how the memory and atomic reward functions can be re-implemented from the provided code.

- Formal equations for the memory and atomic reward functions would improve clarity and allow readers to verify the claimed interpretability.

Simulated Author's Rebuttal

We thank the referee for their constructive feedback on our manuscript. We have carefully considered each comment and provide point-by-point responses below. Where appropriate, we will make revisions to improve the clarity and rigor of the paper.

read point-by-point responses

-

Referee: [Abstract] Abstract: The execution-accuracy figures (67.73% on BIRD, 85% on Spider) are stated without any accompanying information on the baselines employed, ablation studies that isolate the memory and atomic reward components, number of random seeds, standard deviations, or statistical tests.

Authors: We agree with this observation. The abstract is intended to be concise, but we will revise it to briefly reference the primary baselines used in our comparisons (such as fine-tuned 3B models without RL and larger LLMs) and indicate that detailed ablation studies isolating the memory and atomic rewards are presented in the Experiments section. We will also add information on the number of random seeds (3), report standard deviations, and mention that statistical tests were performed to validate the improvements. revision: yes

-

Referee: [Reward Design] Reward functions (memory reward and atomic reward): Both rewards are derived from execution feedback on the identical BIRD and Spider splits used for final reporting. No ablation or out-of-distribution evaluation is described that would demonstrate the rewards improve general SQL reasoning rather than optimizing surrogate signals (trace matching and partial structural overlap) on the evaluation sets themselves.

Authors: This concern about potential overfitting to the evaluation sets is valid and merits clarification. In our setup, the reward functions utilize execution feedback from the training and development portions of the datasets during the GRPO optimization process, while the reported accuracies are on the standard held-out test splits. To further demonstrate that the rewards promote general SQL reasoning, we will include additional ablation studies and evaluate on out-of-distribution Text-to-SQL examples in the revised manuscript. revision: yes

-

Referee: [Experiments] Experiments section: The claim that the 3B model matches much larger LLMs lacks specification of the exact model sizes and training regimes of the comparators, as well as any controls or diagnostics for reward hacking during GRPO optimization.

Authors: We will update the Experiments section to provide the exact specifications of the comparator models, including their parameter counts (e.g., 7B, 70B) and training regimes (e.g., prompting vs. fine-tuning). Additionally, we will incorporate diagnostics for reward hacking, such as tracking the correlation between reward values and actual execution accuracy throughout training, and qualitative analysis of generated queries to ensure no exploitation of the dense reward signals. revision: yes

Circularity Check

No significant circularity in derivation chain

full rationale

The paper's central claims rest on an empirical evaluation of a reinforcement learning framework (built on standard group relative policy optimization) using two reward functions derived from execution feedback on public benchmarks (BIRD, Spider). No equations, predictions, or first-principles results are shown to reduce by construction to fitted inputs, self-definitions, or author self-citations. The performance numbers (67.73% and 85% execution accuracy) are reported outcomes of training and testing on those benchmarks rather than tautological renamings or load-bearing self-references. The derivation is self-contained against external benchmarks and standard RL machinery.

Axiom & Free-Parameter Ledger

axioms (1)

- domain assumption Group relative policy optimization yields stable training for small language models on structured generation tasks

invented entities (2)

-

memory reward

no independent evidence

-

atomic reward

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Rat-sql: Relation-aware schema encoding and linking for text-to-sql parsers,

B. Wang, R. Shin, X. Liu, O. Polozov, and M. Richardson, “Rat-sql: Relation-aware schema encoding and linking for text-to-sql parsers,” in ACL, 2020, pp. 7567–7578

2020

-

[2]

Dr.spider: A diagnostic evaluation benchmark towards text-to-SQL robustness,

S. Chang, J. Wang, M. Dong, L. Pan, H. Zhuet al., “Dr.spider: A diagnostic evaluation benchmark towards text-to-SQL robustness,” in ICLR, 2023

2023

-

[3]

Exploring underexplored limitations of cross-domain text-to-SQL generalization,

Y . Gan, X. Chen, and M. Purver, “Exploring underexplored limitations of cross-domain text-to-SQL generalization,” inEMNLP, 2021, pp. 8926– 8931

2021

-

[4]

Handling probabilistic integrity constraints in pay-as- you-go reconciliation of data models,

N. Q. V . Hung, M. Weidlich, N. T. Tam, Z. Mikl ´os, K. Aberer, A. Gal, and B. Stantic, “Handling probabilistic integrity constraints in pay-as- you-go reconciliation of data models,”Information Systems, vol. 83, pp. 166–180, 2019

2019

-

[5]

Handling data sparsity and model poisoning attacks in federated sequential recommender systems,

M. H. Nguyen, T. T. Nguyen, J. Jo, D. A. Nguyen, H. Yin, and Q. V . H. Nguyen, “Handling data sparsity and model poisoning attacks in federated sequential recommender systems,”Knowledge-Based Systems, p. 115545, 2026

2026

-

[6]

Handling low homophily in recommender systems with partitioned graph transformer,

T. T. Nguyen, T. T. Nguyen, M. Weidlich, J. Jo, Q. V . H. Nguyen, H. Yin, and A. W.-C. Liew, “Handling low homophily in recommender systems with partitioned graph transformer,”IEEE Transactions on Knowledge and Data Engineering, 2024

2024

-

[7]

Spider: A large-scale human-labeled dataset for complex and cross-domain semantic parsing and text-to-SQL task,

T. Yu, R. Zhang, K. Yang, M. Yasunaga, D. Wang, Z. Li, J. Ma, I. Li, Q. Yao, S. Roman, Z. Zhang, and D. Radev, “Spider: A large-scale human-labeled dataset for complex and cross-domain semantic parsing and text-to-SQL task,” inEMNLP, 2018, pp. 3911–3921

2018

-

[8]

Can llm already serve as a database interface? a big bench for large-scale database grounded text-to-sqls,

J. Li, B. Hui, G. Qu, J. Yang, B. Li, B. Li, B. Wang, B. Qin, R. Geng, N. Huoet al., “Can llm already serve as a database interface? a big bench for large-scale database grounded text-to-sqls,”NeurIPS, vol. 36, 2024

2024

-

[9]

Codes: Towards building open-source language models for text-to-sql,

H. Li, J. Zhang, H. Liu, J. Fan, X. Zhang, J. Zhu, R. Wei, H. Pan, C. Li, and H. Chen, “Codes: Towards building open-source language models for text-to-sql,”SIGMOD, vol. 2, no. 3, pp. 1–28, 2024

2024

- [10]

-

[11]

Chase-SQL: Multi-path reasoning and preference optimized candidate selection in text-to-sql,

M. Pourreza, H. Li, R. Sun, Y . Chung, S. Talaei, G. T. Kakkar, Y . Gan, A. Saberi, F. Ozcan, and S. O. Arik, “Chase-sql: Multi-path reasoning and preference optimized candidate selection in text-to-sql,” arXiv preprint arXiv:2410.01943, 2025

-

[12]

An efficient and effective evaluator for text2sql models on unseen and unlabeled data,

K. T. Pham, T. T. Nguyen, V . Huynh, H. Yin, and Q. V . H. Nguyen, “An efficient and effective evaluator for text2sql models on unseen and unlabeled data,” in2026 IEEE 42nd International Conference on Data Engineering (ICDE). IEEE, 2026

2026

-

[13]

Prototype learning for interpretable respiratory sound analysis,

Z. Ren, T. T. Nguyen, and W. Nejdl, “Prototype learning for interpretable respiratory sound analysis,” inProc. ICASSP, 2022, pp. 9087–9091

2022

-

[14]

A dual benchmarking study of facial forgery and facial forensics,

M. T. Pham, T. T. Huynh, T. T. Nguyen, T. T. Nguyen, T. T. Nguyen, J. Jo, H. Yin, and Q. V . Hung Nguyen, “A dual benchmarking study of facial forgery and facial forensics,”CAAI Transactions on Intelligence Technology, vol. 9, no. 6, pp. 1377–1397, 2024

2024

-

[15]

Masksql: Safeguarding privacy for llm-based text-to-sql via abstraction,

S. Abedini, S. Mohapatra, D. Emerson, M. Shafieinejad, J. C. Cresswell, and X. He, “Masksql: Safeguarding privacy for llm-based text-to-sql via abstraction,”arXiv preprint arXiv:2509.23459, 2025

-

[16]

Privacy-R1: Privacy-Aware Multi-LLM Agent Collaboration via Reinforcement Learning

Z. Hui, Y . R. Dong, S. Sivapiromrat, E. Shareghi, and N. Collier, “Privacypad: A reinforcement learning framework for dynamic privacy- aware delegation,”arXiv preprint arXiv:2510.16054, 2025

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[17]

Privacy-preserving explainable ai: a survey,

T. T. Nguyen, T. T. Huynh, Z. Ren, T. T. Nguyen, P. L. Nguyen, H. Yin, and Q. V . H. Nguyen, “Privacy-preserving explainable ai: a survey,” Science China Information Sciences, vol. 68, no. 1, p. 111101, 2025

2025

-

[18]

Poisoning gnn-based recommender systems with generative surrogate-based attacks,

T. Nguyen Thanh, N. D. K. Quach, T. T. Nguyen, T. T. Huynh, V . H. Vu, P. L. Nguyen, J. Jo, and Q. V . H. Nguyen, “Poisoning gnn-based recommender systems with generative surrogate-based attacks,”ACM Transactions on Information Systems, vol. 41, no. 3, pp. 1–24, 2023

2023

-

[19]

Detecting rumours with latency guarantees using massive streaming data,

T. T. Nguyen, T. T. Huynh, H. Yin, M. Weidlich, T. T. Nguyen, T. S. Mai, and Q. V . H. Nguyen, “Detecting rumours with latency guarantees using massive streaming data,”The VLDB Journal, vol. 32, no. 2, pp. 369–387, 2023

2023

-

[20]

Is long context all you need? leveraging llm’s extended context for NL2SQL,

Y . Chung, G. T. Kakkar, Y . Gan, B. Milne, and F. Ozcan, “Is long context all you need? leveraging llm’s extended context for NL2SQL,” PVLDB, vol. 18, no. 8, pp. 2735–2747, 2025

2025

-

[21]

LLaMA: Open and Efficient Foundation Language Models

H. Touvron, T. Lavril, G. Izacard, X. Martinet, M.-A. Lachaux, T. Lacroix, B. Rozi `ere, N. Goyal, E. Hambro, F. Azharet al., “Llama: Open and efficient foundation language models,”arXiv preprint arXiv:2302.13971, 2023

work page internal anchor Pith review arXiv 2023

-

[22]

A. Q. Jiang, A. Sablayrolles, A. Menschet al., “Mistral 7b,”arXiv preprint arXiv:2310.06825, 2023

work page internal anchor Pith review arXiv 2023

-

[23]

Efficient integration of multi-order dynamics and internal dynamics in stock movement prediction,

T. T. Huynh, M. H. Nguyen, T. T. Nguyen, P. L. Nguyen, M. Weidlich, Q. V . H. Nguyen, and K. Aberer, “Efficient integration of multi-order dynamics and internal dynamics in stock movement prediction,” in Proceedings of the Sixteenth ACM International Conference on Web Search and Data Mining, 2023, pp. 850–858

2023

-

[24]

Efficient and effective multi-modal queries through heterogeneous network embedding,

C. T. Duong, T. T. Nguyen, H. Yin, M. Weidlich, T. S. Mai, K. Aberer, and Q. V . H. Nguyen, “Efficient and effective multi-modal queries through heterogeneous network embedding,”IEEE Transactions on Knowledge and Data Engineering, vol. 34, no. 11, pp. 5307–5320, 2022

2022

-

[25]

Portable graph-based rumour detection against multi-modal heterophily,

T. T. Nguyen, Z. Ren, T. T. Nguyen, J. Jo, Q. V . H. Nguyen, and H. Yin, “Portable graph-based rumour detection against multi-modal heterophily,”Knowledge-Based Systems, vol. 284, p. 111310, 2024

2024

-

[26]

Manipulating recommender systems: A survey of poisoning attacks and countermeasures,

T. T. Nguyen, N. Quoc Viet Hung, T. T. Nguyen, T. T. Huynh, T. T. Nguyen, M. Weidlich, and H. Yin, “Manipulating recommender systems: A survey of poisoning attacks and countermeasures,”ACM Computing Surveys, vol. 57, no. 1, pp. 1–39, 2024

2024

-

[27]

Smart: A tool for analyzing and reconciling schema matching networks,

Q. V . H. Nguyen, T. T. Nguyen, V . T. Chau, T. K. Wijaya, Z. Mikl ´os, K. Aberer, A. Gal, and M. Weidlich, “Smart: A tool for analyzing and reconciling schema matching networks,” inICDE, 2015, pp. 1488–1491

2015

-

[28]

Tag-based paper retrieval: minimizing user effort with diversity awareness,

Q. V . H. Nguyen, S. T. Do, T. T. Nguyen, and K. Aberer, “Tag-based paper retrieval: minimizing user effort with diversity awareness,” inIn- ternational Conference on Database Systems for Advanced Applications, 2015, pp. 510–528

2015

-

[29]

Proximal Policy Optimization Algorithms

J. Schulman, F. Wolski, P. Dhariwal, A. Radford, and O. Klimov, “Prox- imal policy optimization algorithms,”arXiv preprint arXiv:1707.06347, 2017

work page internal anchor Pith review arXiv 2017

-

[30]

DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models

Z. Shao, P. Wang, Q. Zhu, R. Xu, J. Song, X. Bi, H. Zhang, M. Zhang, Y . Li, Y . Wuet al., “Deepseekmath: Pushing the limits of mathematical reasoning in open language models,”arXiv preprint arXiv:2402.03300, 2024

work page internal anchor Pith review arXiv 2024

-

[31]

Arctic-text2sql-r1: Simple rewards, strong reasoning in text-to-sql,

Z. Yao, G. Sun, L. Borchmann, Z. Shen, M. Deng, B. Zhai, H. Zhang, A. Li, and Y . He, “Arctic-text2sql-r1: Simple rewards, strong reasoning in text-to-sql,”arXiv preprint arXiv:2505.20315, 2025

-

[32]

Excot: Optimizing reasoning for text-to-sql with execution feedback,

B. Zhai, C. Xu, Y . He, and Z. Yao, “Excot: Optimizing reasoning for text-to-sql with execution feedback,”arXiv preprint arXiv:2503.19988, 2025

-

[33]

Model-agnostic and diverse explanations for streaming rumour graphs,

T. T. Nguyen, T. C. Phan, M. H. Nguyen, M. Weidlich, H. Yin, J. Jo, and Q. V . H. Nguyen, “Model-agnostic and diverse explanations for streaming rumour graphs,”Knowledge-Based Systems, vol. 253, p. 109438, 2022

2022

-

[34]

Deep mincut: Learning node embeddings from detecting communities,

C. T. Duong, T. T. Nguyen, T.-D. Hoang, H. Yin, M. Weidlich, and Q. V . H. Nguyen, “Deep mincut: Learning node embeddings from detecting communities,”Pattern Recognition, p. 109126, 2022

2022

-

[35]

Boosting small language models for text-to- sql with fine-grained execution feedback and cost-efficient rewards,

T. D. Hoang, T. T. Huynh, M. Weidlich, T. T. Nguyen, T. Chen, H. Yin, and Q. V . H. Nguyen, “Boosting small language models for text-to- sql with fine-grained execution feedback and cost-efficient rewards,” in ICDE. IEEE, 2026

2026

-

[36]

DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning

D. Guo, D. Yang, H. Zhang, J. Song, R. Zhang, R. Xu, Q. Zhu, S. Ma, P. Wang, X. Biet al., “Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning,”arXiv preprint arXiv:2501.12948, 2025

work page internal anchor Pith review arXiv 2025

-

[37]

Training a Helpful and Harmless Assistant with Reinforcement Learning from Human Feedback

Y . Bai, A. Jones, K. Ndousse, A. Askell, A. Chen, N. DasSarma, D. Drain, S. Fort, D. Ganguli, T. Henighanet al., “Training a helpful and harmless assistant with reinforcement learning from human feedback,” arXiv preprint arXiv:2204.05862, 2022

work page internal anchor Pith review arXiv 2022

-

[38]

Reconciling schema matching networks through crowdsourcing,

Q. V . H. Nguyen, T. Nguyen Thanh, Z. Mikl ´os, and K. Aberer, “Reconciling schema matching networks through crowdsourcing,”EAI Endorsed Transactions on Collaborative Computing, vol. 1, no. 2, p. e2, 2014

2014

-

[39]

Isomorphic graph embedding for progressive maximal frequent subgraph mining,

T. T. Nguyen, T. T. Nguyen, T. H. Nguyen, H. Yin, T. T. Nguyen, J. Jo, and Q. V . H. Nguyen, “Isomorphic graph embedding for progressive maximal frequent subgraph mining,”ACM Transactions on Intelligent Systems and Technology, vol. 15, no. 1, pp. 1–26, 2023

2023

-

[40]

Direct preference optimization: Your language model is secretly a reward model,

R. Rafailov, A. Sharma, E. Mitchell, C. D. Manning, S. Ermon, and C. Finn, “Direct preference optimization: Your language model is secretly a reward model,”NeurIPS, vol. 36, pp. 53 728–53 741, 2023

2023

-

[41]

Orpo: Monolithic preference optimiza- tion without reference model,

J. Hong, N. Lee, and J. Thorne, “Orpo: Monolithic preference optimiza- tion without reference model,” inEMNLP, 2024, pp. 11 170–11 189

2024

-

[42]

Fast-fedul: A training-free federated unlearning with provable skew resilience,

T. T. Huynh, T. B. Nguyen, P. L. Nguyen, T. T. Nguyen, M. Weidlich, Q. V . H. Nguyen, and K. Aberer, “Fast-fedul: A training-free federated unlearning with provable skew resilience,” inJoint European Confer- ence on Machine Learning and Knowledge Discovery in Databases. Springer, 2024, pp. 55–72

2024

-

[43]

Eires: Efficient integration of remote data in event stream processing,

B. Zhao, H. van der Aa, T. T. Nguyen, Q. V . H. Nguyen, and M. Weidlich, “Eires: Efficient integration of remote data in event stream processing,” inProceedings of the 2021 International Conference on Management of Data, 2021, pp. 2128–2141

2021

-

[44]

Seq2SQL: Generating Structured Queries from Natural Language using Reinforcement Learning

V . Zhong, C. Xiong, and R. Socher, “Seq2sql: Generating structured queries from natural language using reinforcement learning,”arXiv preprint arXiv:1709.00103, 2017

work page internal anchor Pith review arXiv 2017

-

[45]

SQLNet: Generating Structured Queries From Natural Language Without Reinforcement Learning

X. Xu, C. Liu, and D. Song, “Sqlnet: Generating structured queries from natural language without reinforcement learning,”arXiv preprint arXiv:1711.04436, 2017

work page Pith review arXiv 2017

-

[46]

Towards complex text-to-sql in cross-domain database with intermedi- ate representation,

J. Guo, Z. Zhan, Y . Gao, Y . Xiao, J.-G. Lou, T. Liu, and D. Zhang, “Towards complex text-to-sql in cross-domain database with intermedi- ate representation,” inProceedings of the 57th Annual Meeting of the Association for Computational Linguistics, 2019, pp. 4524–4535

2019

-

[47]

SyntaxSQLNet: Syntax tree networks for complex and cross-domain text-to-SQL task,

T. Yu, M. Yasunaga, K. Yang, R. Zhang, D. Wang, Z. Li, and D. Radev, “SyntaxSQLNet: Syntax tree networks for complex and cross-domain text-to-SQL task,” inEMNLP, 2018, pp. 1653–1663

2018

-

[48]

Picard: Parsing incremen- tally for constrained auto-regressive decoding from language models,

T. Scholak, N. Schucher, and D. Bahdanau, “Picard: Parsing incremen- tally for constrained auto-regressive decoding from language models,” inEMNLP, 2021, pp. 9895–9901

2021

-

[49]

An evaluation of diversification techniques,

D. C. Thang, N. T. Tam, N. Q. V . Hung, and K. Aberer, “An evaluation of diversification techniques,” inInternational Conference on Database and Expert Systems Applications, 2015, pp. 215–231

2015

-

[50]

Factcatch: Incremental pay-as-you-go fact checking with minimal user effort,

T. T. Nguyen, M. Weidlich, H. Yin, B. Zheng, Q. H. Nguyen, and Q. V . H. Nguyen, “Factcatch: Incremental pay-as-you-go fact checking with minimal user effort,” inProceedings of the 43rd International ACM SIGIR Conference on Research and Development in Information Retrieval, 2020, pp. 2165–2168

2020

-

[51]

Sql-palm: Improved large language model adaptation for text-to-sql,

R. Sun, S. ¨O. Arik, H. Nakhost, H. Dai, R. Sinha, P. Yin, and T. Pfister, “Sql-palm: Improved large language model adaptation for text-to-sql,” CoRR, 2023

2023

-

[52]

Din-sql: Decomposed in-context learning of text-to-sql with self-correction,

M. Pourreza and D. Rafiei, “Din-sql: Decomposed in-context learning of text-to-sql with self-correction,” 2023

2023

-

[53]

On-device diagnostic recommendation with heterogeneous federated blocknets,

M. H. Nguyen, T. T. Huynh, T. T. Nguyen, P. L. Nguyen, H. T. Pham, J. Jo, and T. T. Nguyen, “On-device diagnostic recommendation with heterogeneous federated blocknets,”Science China Information Sciences, vol. 68, no. 4, p. 140102, 2025

2025

-

[54]

Multilingual text-to-sql: Benchmarking the limits of language models with collaborative language agents,

K. T. Pham, T. H. Nguyen, J. Jo, Q. V . H. Nguyen, and T. T. Nguyen, “Multilingual text-to-sql: Benchmarking the limits of language models with collaborative language agents,” inAustralasian Database Conference. Springer, 2025, pp. 108–123

2025

-

[55]

Multi-task learning of heterogeneous hypergraph representations in lbsns,

D. D. A. Nguyen, M. H. Nguyen, P. L. Nguyen, J. Jo, H. Yin, and T. T. Nguyen, “Multi-task learning of heterogeneous hypergraph representations in lbsns,” inInternational Conference on Advanced Data Mining and Applications. Springer, 2024, pp. 161–177

2024

-

[56]

Sql-o1: A self-reward heuristic dynamic search method for text- to-sql,

S. Lyu, H. Luo, R. Li, Z. Ou, J. Sun, Y . Qin, X. Shang, M. Song, and Y . Zhu, “Sql-o1: A self-reward heuristic dynamic search method for text-to-sql,”arXiv preprint arXiv:2502.11741, 2025

-

[57]

Nature vs. nurture: Feature vs. structure for graph neural networks,

D. C. Thang, H. T. Dat, N. T. Tam, J. Jo, N. Q. V . Hung, and K. Aberer, “Nature vs. nurture: Feature vs. structure for graph neural networks,” PRL, vol. 159, pp. 46–53, 2022

2022

-

[58]

Learning holistic interactions in lbsns with high-order, dynamic, and multi-role contexts,

H. T. Trung, T. Van Vinh, N. T. Tam, J. Jo, H. Yin, and N. Q. V . Hung, “Learning holistic interactions in lbsns with high-order, dynamic, and multi-role contexts,”IEEE Transactions on Knowledge and Data Engineering, vol. 35, no. 5, pp. 5002–5016, 2022

2022

-

[59]

Network alignment with holistic embeddings,

T. T. Huynh, C. T. Duong, T. T. Nguyen, V . T. Van, A. Sattar, H. Yin, and Q. V . H. Nguyen, “Network alignment with holistic embeddings,” TKDE, vol. 35, no. 2, pp. 1881–1894, 2021

2021

-

[60]

What-if analysis with conflicting goals: Rec- ommending data ranges for exploration,

Q. V . H. Nguyen, K. Zheng, M. Weidlich, B. Zheng, H. Yin, T. T. Nguyen, and B. Stantic, “What-if analysis with conflicting goals: Rec- ommending data ranges for exploration,” in2018 IEEE 34th Interna- tional Conference on Data Engineering (ICDE). IEEE, 2018, pp. 89– 100

2018

-

[61]

Diversifying group recommendation,

N. T. Toan, P. T. Cong, N. T. Tam, N. Q. V . Hung, and B. Stantic, “Diversifying group recommendation,”IEEE Access, vol. 6, pp. 17 776– 17 786, 2018

2018

-

[62]

Answer validation for generic crowdsourcing tasks with minimal efforts,

N. Q. V . Hung, D. C. Thang, N. T. Tam, M. Weidlich, K. Aberer, H. Yin, and X. Zhou, “Answer validation for generic crowdsourcing tasks with minimal efforts,”The VLDB Journal, vol. 26, pp. 855–880, 2017

2017

-

[63]

Argument discovery via crowdsourcing,

Q. V . H. Nguyen, C. T. Duong, T. T. Nguyen, M. Weidlich, K. Aberer, H. Yin, and X. Zhou, “Argument discovery via crowdsourcing,”The VLDB Journal, vol. 26, no. 4, pp. 511–535, 2017

2017

-

[64]

Example-based explanations for streaming fraud detection on graphs,

T. T. Nguyen, T. C. Phan, H. T. Pham, T. T. Nguyen, J. Jo, and Q. V . H. Nguyen, “Example-based explanations for streaming fraud detection on graphs,”Information Sciences, vol. 621, pp. 319–340, 2023

2023

-

[65]

Chain-of-thought prompting elicits reasoning in large language models,

J. Wei, X. Wang, D. Schuurmans, M. Bosma, F. Xia, E. Chi, Q. V . Le, D. Zhouet al., “Chain-of-thought prompting elicits reasoning in large language models,”NeurIPS, vol. 35, pp. 24 824–24 837, 2022

2022

-

[66]

The dawn of natural language to sql: Are we fully ready?

B. Li, Y . Luo, C. Chai, G. Li, and N. Tang, “The dawn of natural language to sql: Are we fully ready?”PVLDB, vol. 17, no. 11, pp. 3318–3331, 2024

2024

-

[67]

Evaluating the instruction-following abilities of language models using knowledge tasks,

R. Murthy, P. Kumar, P. Venkateswaran, and D. Contractor, “Evaluating the instruction-following abilities of language models using knowledge tasks,”arXiv preprint arXiv:2410.12972, 2024

-

[68]

InFoBench: Evaluating instruction following ability in large language models,

Y . Qin, K. Song, Y . Hu, W. Yao, S. Cho, X. Wang, X. Wu, F. Liu, P. Liu, and D. Yu, “InFoBench: Evaluating instruction following ability in large language models,” inACL, 2024, pp. 13 025–13 048

2024

-

[69]

Instruction-Following Evaluation for Large Language Models

J. Zhou, T. Lu, S. Mishra, S. Brahma, S. Basu, Y . Luan, D. Zhou, and L. Hou, “Instruction-following evaluation for large language models,” arXiv preprint arXiv:2311.07911, 2023

work page internal anchor Pith review arXiv 2023

-

[70]

Fine-tuning text-to- sql models with reinforcement-learning training objectives,

X.-B. Nguyen, X.-H. Phan, and M. Piccardi, “Fine-tuning text-to- sql models with reinforcement-learning training objectives,”Natural Language Processing Journal, vol. 10, p. 100135, 2025

2025

-

[71]

Let’s verify step by step,

H. Lightman, V . Kosaraju, Y . Burda, H. Edwards, B. Baker, T. Lee, J. Leike, J. Schulman, I. Sutskever, and K. Cobbe, “Let’s verify step by step,” inICLR, 2024

2024

-

[72]

Self-rewarding language models,

W. Yuan, R. Y . Pang, K. Cho, X. Li, S. Sukhbaatar, J. Xu, and J. E. Weston, “Self-rewarding language models,” inICML, 2024

2024

-

[73]

Autopsv: Automated process-supervised verifier,

J. Lu, Z. Dou, H. Wang, Z. Cao, J. Dai, Y . Feng, and Z. Guo, “Autopsv: Automated process-supervised verifier,”NeurIPS, vol. 37, pp. 79 935– 79 962, 2024

2024

-

[74]

Math-shepherd: Verify and reinforce llms step-by-step without human annotations,

P. Wang, L. Li, Z. Shao, R. Xu, D. Dai, Y . Li, D. Chen, Y . Wu, and Z. Sui, “Math-shepherd: Verify and reinforce llms step-by-step without human annotations,” inACL, 2024, pp. 9426–9439

2024

-

[75]

Alpha-sql: Zero-shot text-to-sql using monte carlo tree search,

B. Li, J. Zhang, J. Fan, Y . Xu, C. Chen, N. Tang, and Y . Luo, “Alpha-sql: Zero-shot text-to-sql using monte carlo tree search,” inICML, 2025

2025

-

[76]

M. Pourreza, S. Talaei, R. Sun, X. Wan, H. Li, A. Mirhoseini, A. Saberi, S. Ariket al., “Reasoning-sql: Reinforcement learning with sql tai- lored partial rewards for reasoning-enhanced text-to-sql,”arXiv preprint arXiv:2503.23157, 2025

-

[77]

Scaling text2sql via llm-efficient schema filtering with functional dependency graph rerankers,

T. D. Hoang, T. T. Nguyen, T. T. Huynh, H. Yin, and Q. V . H. Nguyen, “Scaling text2sql via llm-efficient schema filtering with functional dependency graph rerankers,”arXiv preprint arXiv:2512.16083, 2025

-

[78]

Y . Han, C. Liu, and P. Wang, “A comprehensive survey on vector database: Storage and retrieval technique, challenge,”arXiv preprint arXiv:2310.11703, 2023

-

[79]

Qwen3 Embedding: Advancing Text Embedding and Reranking Through Foundation Models

Y . Zhang, M. Li, D. Long, X. Zhang, H. Lin, B. Yang, P. Xie, A. Yang, D. Liu, J. Linet al., “Qwen3 embedding: Advancing text embedding and reranking through foundation models,”arXiv preprint arXiv:2506.05176, 2025

work page internal anchor Pith review arXiv 2025

-

[80]

Billion-scale similarity search with gpus,

J. Johnson, M. Douze, and H. J ´egou, “Billion-scale similarity search with gpus,”T-BD, vol. 7, no. 3, pp. 535–547, 2019

2019

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.