Recognition: unknown

EVE: Verifiable Self-Evolution of MLLMs via Executable Visual Transformations

Pith reviewed 2026-05-10 05:20 UTC · model grok-4.3

The pith

Multimodal models can self-improve continuously by generating their own training tasks through executable Python scripts that transform images and yield verifiable answers.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

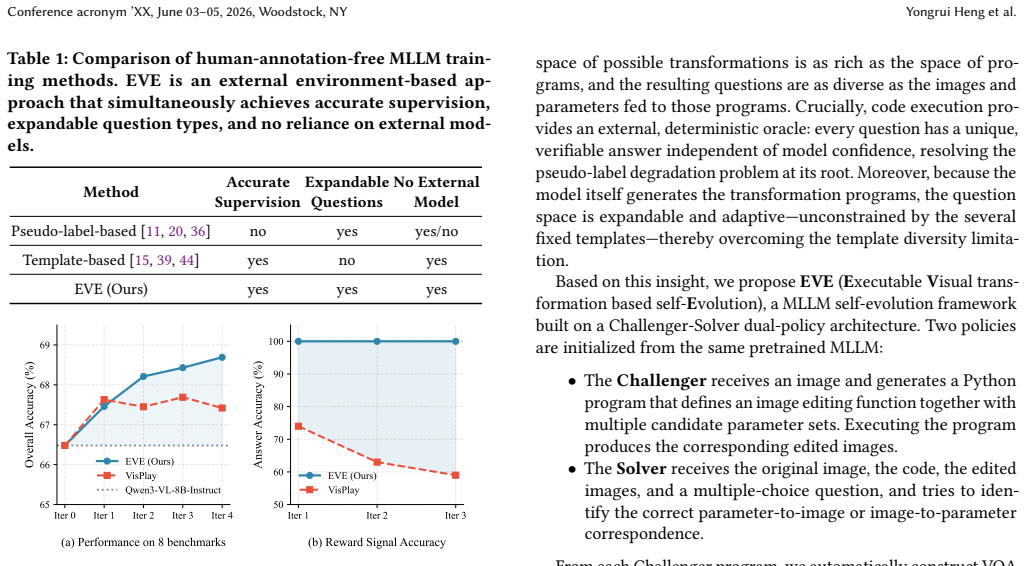

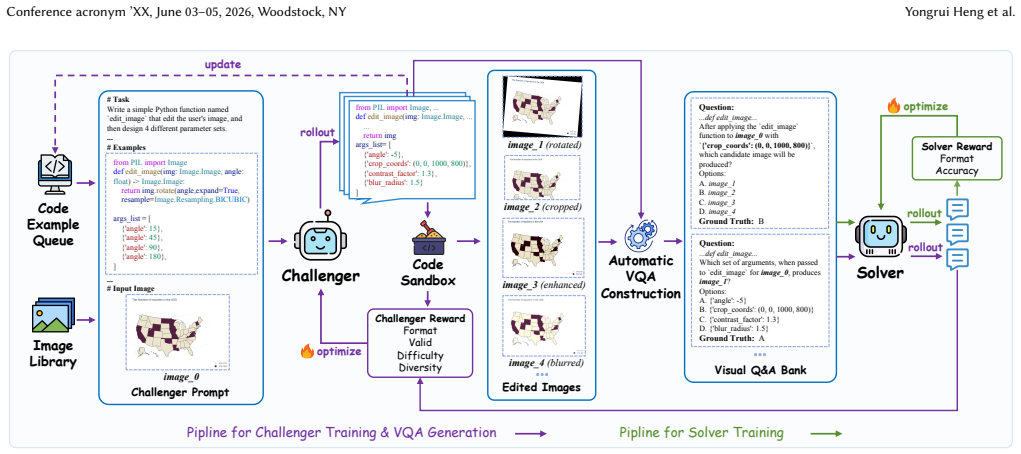

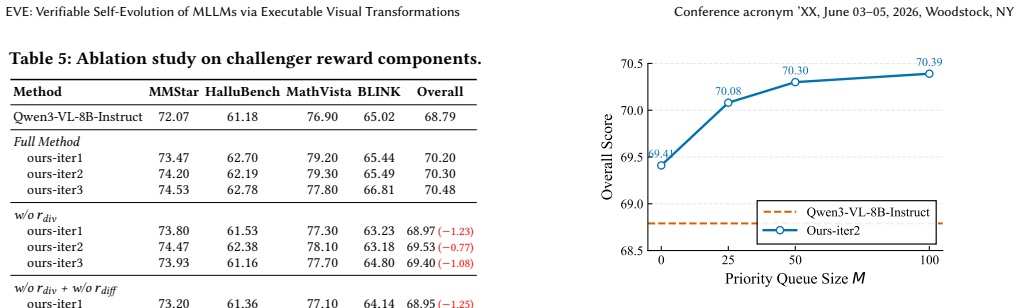

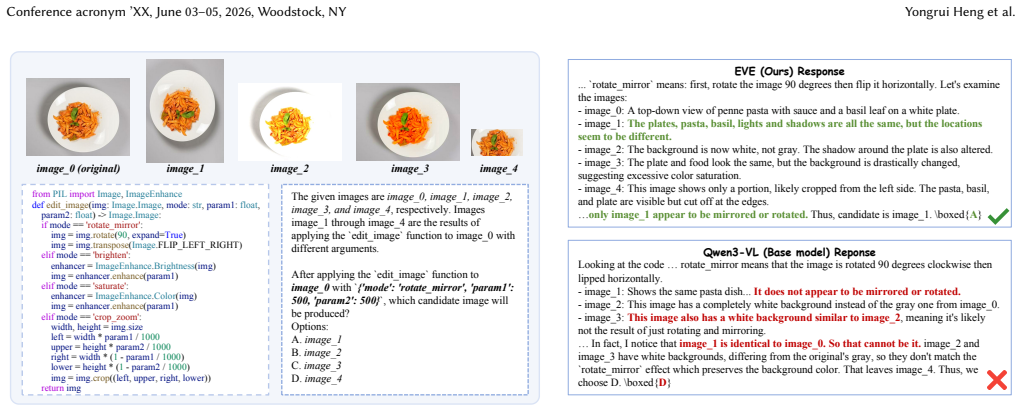

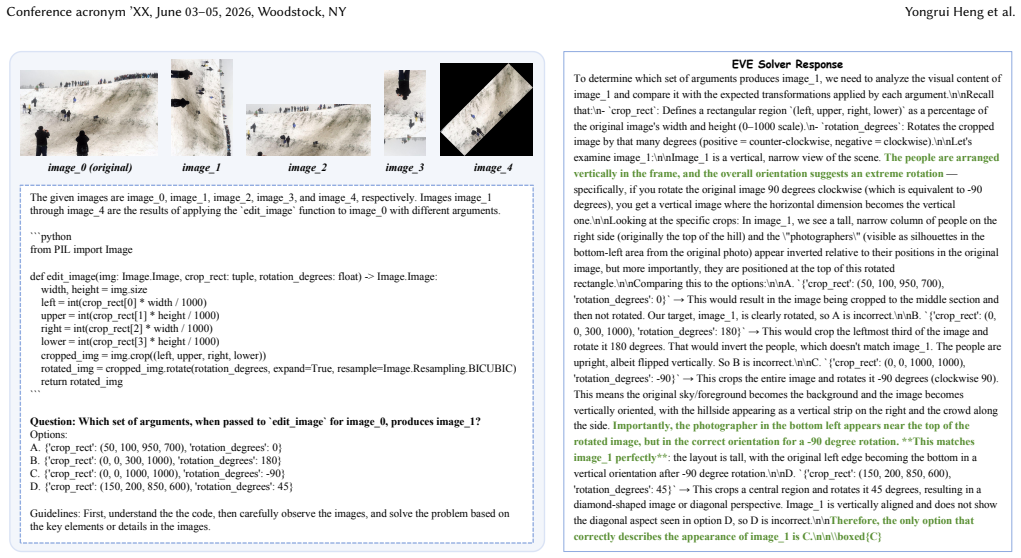

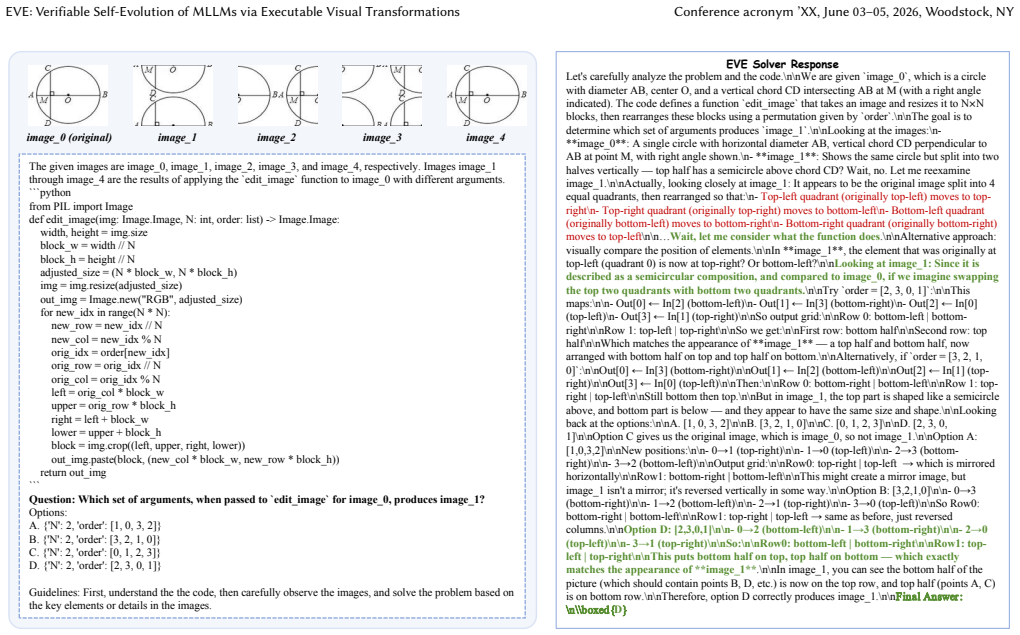

EVE is a dual-policy framework in which a Challenger maintains an expanding queue of visual transformation code examples and synthesizes fresh Python scripts; each script executes to produce a visual question-answering instance whose answer is determined exactly by the code itself. A multi-dimensional reward combining semantic diversity and dynamic difficulty calibration directs the Challenger to enlarge both the variety and hardness of the queue, enabling reciprocal improvement between Challenger and Solver without reliance on model-generated labels.

What carries the argument

The Challenger-Solver dual-policy architecture, in which the Challenger synthesizes and refines executable visual transformation scripts that are run to create VQA problems carrying absolute, code-verified ground-truth answers.

If this is right

- Training data can be expanded indefinitely while remaining grounded in executable verification rather than drifting predictions.

- Task difficulty and semantic variety increase automatically under the calibrated reward signals.

- The Solver policy receives supervision whose correctness is independent of its own current capabilities.

- The overall system surpasses prior self-evolution approaches that rely on either pseudo-labels or static templates.

Where Pith is reading between the lines

- The same executable-transformation idea could be tested on tasks beyond VQA, such as generating visual reasoning chains or image-editing instructions.

- If the code queue continues to grow, computational cost of sampling and executing scripts may become a practical bottleneck at large scale.

- The approach implicitly assumes access to a Python interpreter and image-processing libraries during training, which may limit deployment settings.

Load-bearing premise

The reward system will keep enlarging the library of transformation codes in both variety and difficulty without the Challenger falling into repetitive patterns, and every new script will produce valid questions whose answers are always correct when the code runs.

What would settle it

Execute multiple rounds of the EVE loop and check whether accuracy on a fixed held-out VQA benchmark plateaus or declines, or whether manual inspection finds a non-negligible fraction of generated questions whose code-derived answers are factually wrong.

Figures

read the original abstract

Self-evolution of multimodal large language models (MLLMs) remains a critical challenge: pseudo-label-based methods suffer from progressive quality degradation as model predictions drift, while template-based methods are confined to a static set of transformations that cannot adapt in difficulty or diversity. We contend that robust, continuous self-improvement requires not only deterministic external feedback independent of the model's internal certainty, but also a mechanism to perpetually diversify the training distribution. To this end, we introduce EVE (Executable Visual transformation-based self-Evolution), a novel framework that entirely bypasses pseudo-labels by harnessing executable visual transformations continuously enriched in both variety and complexity. EVE adopts a Challenger-Solver dual-policy architecture. The Challenger maintains and progressively expands a queue of visual transformation code examples, from which it synthesizes novel Python scripts to perform dynamic visual transformations. Executing these scripts yields VQA problems with absolute, execution-verified ground-truth answers, eliminating any reliance on model-generated supervision. A multi-dimensional reward system integrating semantic diversity and dynamic difficulty calibration steers the Challenger to enrich its code example queue while posing progressively more challenging tasks, preventing mode collapse and fostering reciprocal co-evolution between the two policies. Extensive experiments demonstrate that EVE consistently surpasses existing self-evolution methods, establishing a robust and scalable paradigm for verifiable MLLM self-evolution. The code is available at https://github.com/0001Henry/EVE .

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The paper introduces EVE, a Challenger-Solver dual-policy framework for verifiable self-evolution of MLLMs. The Challenger synthesizes and maintains a queue of executable Python scripts for visual transformations that generate VQA instances with absolute ground-truth answers obtained via code execution (bypassing pseudo-labels). A multi-dimensional reward combining semantic diversity and dynamic difficulty calibration is used to expand the queue in variety and complexity while co-evolving with the Solver policy. The authors claim that extensive experiments demonstrate consistent outperformance over existing self-evolution methods, establishing a robust scalable paradigm.

Significance. If the empirical results and the perpetual-expansion assumption hold, the work would be significant: it replaces model-dependent supervision with external deterministic verification through code execution, directly addressing pseudo-label drift. The open-sourced code and the dual-policy co-evolution mechanism are concrete strengths that could support reproducible follow-up work on verifiable MLLM training.

major comments (3)

- [Method (Challenger policy and reward formulation)] The central claim that the multi-dimensional reward (semantic diversity + dynamic difficulty) perpetually expands the transformation queue without mode collapse or invalid VQA outputs is load-bearing for the 'robust and scalable paradigm' assertion, yet the manuscript supplies neither a formal analysis of the reward dynamics nor an empirical trace of queue evolution (e.g., diversity metrics or failure rates over training steps).

- [Experiments] The abstract states that 'extensive experiments demonstrate that EVE consistently surpasses existing self-evolution methods,' but the provided text contains no details on the datasets, baselines, metrics, ablations, statistical significance tests, or number of runs; without these, the outperformance claim cannot be evaluated.

- [Reward system and VQA generation] The weakest assumption—that synthesized scripts always produce valid VQA problems with unambiguous, execution-verified ground truth after geometric or semantic transforms—is asserted but not supported by edge-case analysis or failure-mode statistics; a single counter-example of ambiguous references would undermine the verifiable advantage.

minor comments (2)

- [Method] Notation for the two policies (Challenger vs. Solver) and the reward components should be introduced with explicit equations rather than high-level prose to improve reproducibility.

- [Experiments] The GitHub link is provided, but the manuscript should include a brief reproducibility checklist (e.g., exact environment, seed values, and how the initial code-example queue is seeded).

Simulated Author's Rebuttal

We thank the referee for the constructive and detailed feedback. We address each major comment point by point below and indicate the planned revisions.

read point-by-point responses

-

Referee: [Method (Challenger policy and reward formulation)] The central claim that the multi-dimensional reward (semantic diversity + dynamic difficulty) perpetually expands the transformation queue without mode collapse or invalid VQA outputs is load-bearing for the 'robust and scalable paradigm' assertion, yet the manuscript supplies neither a formal analysis of the reward dynamics nor an empirical trace of queue evolution (e.g., diversity metrics or failure rates over training steps).

Authors: We agree that a formal analysis of the reward dynamics and empirical traces of queue evolution would strengthen the central claims. The revised manuscript will add a dedicated subsection with the mathematical formulation of the multi-dimensional reward and new figures showing queue evolution, including quantitative metrics for semantic diversity, dynamic difficulty, and failure rates (e.g., invalid script percentages) across training steps. These additions will explicitly demonstrate the absence of mode collapse. revision: yes

-

Referee: [Experiments] The abstract states that 'extensive experiments demonstrate that EVE consistently surpasses existing self-evolution methods,' but the provided text contains no details on the datasets, baselines, metrics, ablations, statistical significance tests, or number of runs; without these, the outperformance claim cannot be evaluated.

Authors: The full manuscript contains Section 4 (Experiments) specifying the datasets (VQA-v2, GQA, OKVQA), baselines, metrics, ablation studies, and results averaged over multiple runs. To address the concern that these details were not sufficiently prominent, we will add a concise summary table and statistical significance tests (e.g., paired t-tests with p-values) in the revised version. revision: partial

-

Referee: [Reward system and VQA generation] The weakest assumption—that synthesized scripts always produce valid VQA problems with unambiguous, execution-verified ground truth after geometric or semantic transforms—is asserted but not supported by edge-case analysis or failure-mode statistics; a single counter-example of ambiguous references would undermine the verifiable advantage.

Authors: We acknowledge the need for explicit validation of this assumption. The revised manuscript will include a new subsection on edge cases and failure modes, reporting empirical statistics on the rate of ambiguous or invalid VQA instances generated by the scripts, along with how the reward system and post-execution filtering mitigate them. This will provide concrete evidence supporting the verifiable advantage. revision: yes

Circularity Check

No significant circularity detected

full rationale

The paper's core mechanism uses external Python code execution to generate VQA instances with absolute, execution-verified ground truth, which is independent of model predictions and directly addresses pseudo-label drift. The Challenger's multi-dimensional reward (semantic diversity + dynamic difficulty) is presented conceptually without equations, fitted parameters, or reductions that would make synthesized scripts or queue expansions equivalent to the inputs by construction. No self-citations, uniqueness theorems, or ansatzes from prior author work are invoked as load-bearing steps in the derivation. The dual-policy co-evolution therefore rests on an externally verifiable process rather than self-referential fitting or renaming.

Axiom & Free-Parameter Ledger

free parameters (1)

- multi-dimensional reward weights

axioms (1)

- domain assumption Execution of synthesized Python scripts on images produces valid VQA problems whose ground-truth answers are absolute and independent of any model prediction.

invented entities (2)

-

Challenger policy

no independent evidence

-

Solver policy

no independent evidence

Reference graph

Works this paper leans on

-

[1]

Sanghwan Bae, Jiwoo Hong, Min Young Lee, Hanbyul Kim, JeongYeon Nam, and Donghyun Kwak. 2026. Online difficulty filtering for reasoning oriented reinforcement learning. InProceedings of the 19th Conference of the European Chapter of the Association for Computational Linguistics (Volume 1: Long Papers). 700–719

2026

-

[2]

Shuai Bai, Yuxuan Cai, Ruizhe Chen, Keqin Chen, Xionghui Chen, Zesen Cheng, Lianghao Deng, Wei Ding, Chang Gao, Chunjiang Ge, et al . 2025. Qwen3-vl technical report.arXiv preprint arXiv:2511.21631(2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[3]

Lin Chen, Jinsong Li, Xiaoyi Dong, Pan Zhang, Yuhang Zang, Zehui Chen, Haodong Duan, Jiaqi Wang, Yu Qiao, Dahua Lin, et al . 2024. Are we on the right way for evaluating large vision-language models?Advances in Neural Information Processing Systems37 (2024), 27056–27087

2024

- [4]

-

[5]

Shijian Deng, Kai Wang, Tianyu Yang, Harsh Singh, and Yapeng Tian. 2025. Self- Improvement in Multimodal Large Language Models: A Survey. InFindings of the Association for Computational Linguistics: EMNLP 2025. 1987–2006

2025

-

[6]

Haodong Duan, Junming Yang, Yuxuan Qiao, Xinyu Fang, Lin Chen, Yuan Liu, Xiaoyi Dong, Yuhang Zang, Pan Zhang, Jiaqi Wang, et al. 2024. Vlmevalkit: An open-source toolkit for evaluating large multi-modality models. InProceedings of the 32nd ACM International Conference on Multimedia. 11198–11201

2024

-

[7]

Xingyu Fu, Yushi Hu, Bangzheng Li, Yu Feng, Haoyu Wang, Xudong Lin, Dan Roth, Noah A Smith, Wei-Chiu Ma, and Ranjay Krishna. 2024. Blink: Multimodal large language models can see but not perceive. InEuropean Conference on Computer Vision. Springer, 148–166

2024

-

[8]

Tianrui Guan, Fuxiao Liu, Xiyang Wu, Ruiqi Xian, Zongxia Li, Xiaoyu Liu, Xijun Wang, Lichang Chen, Furong Huang, Yaser Yacoob, et al. 2024. Hallusionbench: an advanced diagnostic suite for entangled language hallucination and visual illusion in large vision-language models. InProceedings of the IEEE/CVF conference on computer vision and pattern recognitio...

2024

- [9]

- [10]

-

[11]

Yicheng He, Chengsong Huang, Zongxia Li, Jiaxin Huang, and Yonghui Yang

- [12]

- [13]

- [14]

-

[15]

Aaron Hurst, Adam Lerer, Adam P Goucher, Adam Perelman, Aditya Ramesh, Aidan Clark, AJ Ostrow, Akila Welihinda, Alan Hayes, Alec Radford, et al. 2024. Gpt-4o system card.arXiv preprint arXiv:2410.21276(2024)

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[16]

Ahmadreza Jeddi, Hakki Can Karaimer, Hue Nguyen, Zhongling Wang, Ke Zhao, Javad Rajabi, Ran Zhang, Raghav Goyal, Babak Taati, and Radek Grzeszczuk

- [17]

- [18]

-

[19]

Woosuk Kwon, Zhuohan Li, Siyuan Zhuang, Ying Sheng, Lianmin Zheng, Cody Hao Yu, Joseph Gonzalez, Hao Zhang, and Ion Stoica. 2023. Efficient memory management for large language model serving with pagedattention. In Proceedings of the 29th symposium on operating systems principles. 611–626

2023

-

[20]

Bo Li, Yuanhan Zhang, Dong Guo, Renrui Zhang, Feng Li, Hao Zhang, Kaichen Zhang, Peiyuan Zhang, Yanwei Li, Ziwei Liu, et al. 2024. Llava-onevision: Easy visual task transfer.arXiv preprint arXiv:2408.03326(2024)

work page Pith review arXiv 2024

-

[21]

Mingxin Li, Yanzhao Zhang, Dingkun Long, Chen Keqin, Sibo Song, Shuai Bai, Zhibo Yang, Pengjun Xie, An Yang, Dayiheng Liu, Jingren Zhou, and Junyang Lin. 2026. Qwen3-VL-Embedding and Qwen3-VL-Reranker: A Unified Frame- work for State-of-the-Art Multimodal Retrieval and Ranking.arXiv preprint arXiv:2601.04720(2026)

work page internal anchor Pith review arXiv 2026

- [22]

-

[23]

Zongxia Li, Wenhao Yu, Chengsong Huang, Rui Liu, Zhenwen Liang, Fux- iao Liu, Jingxi Che, Dian Yu, Jordan Boyd-Graber, Haitao Mi, and Dong Yu

-

[24]

Self-Rewarding Vision-Language Model via Reasoning Decomposition

Self-Rewarding Vision-Language Model via Reasoning Decomposition. arXiv:2508.19652 [cs.CV] https://arxiv.org/abs/2508.19652

work page internal anchor Pith review Pith/arXiv arXiv

-

[25]

Jacky Liang, Wenlong Huang, Fei Xia, Peng Xu, Karol Hausman, Brian Ichter, Pete Florence, and Andy Zeng. 2023. Code as policies: Language model programs for embodied control. In2023 IEEE International conference on robotics and automation (ICRA). IEEE, 9493–9500

2023

- [26]

- [27]

- [28]

-

[29]

Pan Lu, Hritik Bansal, Tony Xia, Jiacheng Liu, Chunyuan Li, Hannaneh Hajishirzi, Hao Cheng, Kai-Wei Chang, Michel Galley, and Jianfeng Gao. 2023. Mathvista: Evaluating Math Reasoning of foundation models in visual contexts.arXiv preprint arXiv:2310.02255(2023)

work page internal anchor Pith review arXiv 2023

-

[30]

Kishore Papineni, Salim Roukos, Todd Ward, and Wei-Jing Zhu. 2002. Bleu: a method for automatic evaluation of machine translation. InProceedings of the 40th annual meeting of the Association for Computational Linguistics. 311–318

2002

- [31]

-

[32]

Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, et al. 2021. Learning transferable visual models from natural language supervision. InInternational conference on machine learning. PmLR, 8748–8763

2021

-

[33]

Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Yang Wu, et al. 2024. Deepseekmath: Push- ing the limits of Math Reasoning in open language models.arXiv preprint arXiv:2402.03300(2024)

work page internal anchor Pith review Pith/arXiv arXiv 2024

-

[34]

Guangming Sheng, Chi Zhang, Zilingfeng Ye, Xibin Wu, Wang Zhang, Ru Zhang, Yanghua Peng, Haibin Lin, and Chuan Wu. 2024. HybridFlow: A Flexible and Efficient RLHF Framework.arXiv preprint arXiv: 2409.19256(2024)

work page internal anchor Pith review arXiv 2024

-

[35]

Aaditya Singh, Adam Fry, Adam Perelman, Adam Tart, Adi Ganesh, Ahmed El-Kishky, Aidan McLaughlin, Aiden Low, AJ Ostrow, Akhila Ananthram, et al

-

[36]

Openai gpt-5 system card.arXiv preprint arXiv:2601.03267(2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

- [37]

-

[38]

Meghana Sunil, Manikandarajan Venmathimaran, and Muthu Subash Kavitha

- [39]

-

[40]

Dídac Surís, Sachit Menon, and Carl Vondrick. 2023. Vipergpt: Visual inference via python execution for reasoning. InProceedings of the IEEE/CVF international conference on computer vision. 11888–11898

2023

- [41]

- [42]

- [43]

-

[44]

Blaschko

Zifu Wang, Junyi Zhu, Bo Tang, Zhiyu Li, Feiyu Xiong, Jiaqian Yu, and Matthew B. Blaschko. 2025. Jigsaw-R1: A Study of Rule-based Visual Reinforcement Learning with Jigsaw Puzzles

2025

- [45]

- [46]

-

[47]

Qiying Yu, Zheng Zhang, Ruofei Zhu, Yufeng Yuan, Xiaochen Zuo, Yu Yue, Weinan Dai, Tiantian Fan, Gaohong Liu, Lingjun Liu, et al. 2025. Dapo: An open- source llm reinforcement learning system at scale.arXiv preprint arXiv:2503.14476 (2025)

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[48]

Weihao Yu, Zhengyuan Yang, Linjie Li, Jianfeng Wang, Kevin Lin, Zicheng Liu, Xinchao Wang, and Lijuan Wang. 2023. Mm-vet: Evaluating large multimodal models for integrated capabilities.arXiv preprint arXiv:2308.02490(2023)

work page internal anchor Pith review arXiv 2023

-

[49]

Yu Zeng, Wenxuan Huang, Shiting Huang, Xikun Bao, Yukun Qi, Yiming Zhao, Qiuchen Wang, Lin Chen, Zehui Chen, Huaian Chen, et al. 2025. Agentic Jigsaw Conference acronym ’XX, June 03–05, 2026, Woodstock, NY Yongrui Heng et al. Interaction Learning for Enhancing Visual Perception and Reasoning in Vision- Language Models.arXiv preprint arXiv:2510.01304(2025)

- [50]

- [51]

-

[52]

Andrew Zhao, Yiran Wu, Yang Yue, Tong Wu, Quentin Xu, Matthieu Lin, Shen- zhi Wang, Qingyun Wu, Zilong Zheng, and Gao Huang. 2025. Absolute zero: Reinforced self-play reasoning with zero data.arXiv preprint arXiv:2505.03335 (2025)

work page internal anchor Pith review arXiv 2025

- [53]

- [54]

-

[55]

InternVL3: Exploring Advanced Training and Test-Time Recipes for Open-Source Multimodal Models

Jinguo Zhu, Weiyun Wang, Zhe Chen, Zhaoyang Liu, Shenglong Ye, Lixin Gu, Hao Tian, Yuchen Duan, Weijie Su, Jie Shao, et al. 2025. Internvl3: Exploring advanced training and test-time recipes for open-source multimodal models. arXiv preprint arXiv:2504.10479(2025). EVE: Verifiable Self-Evolution of MLLMs via Executable Visual Transformations Conference acr...

work page internal anchor Pith review arXiv 2025

-

[56]

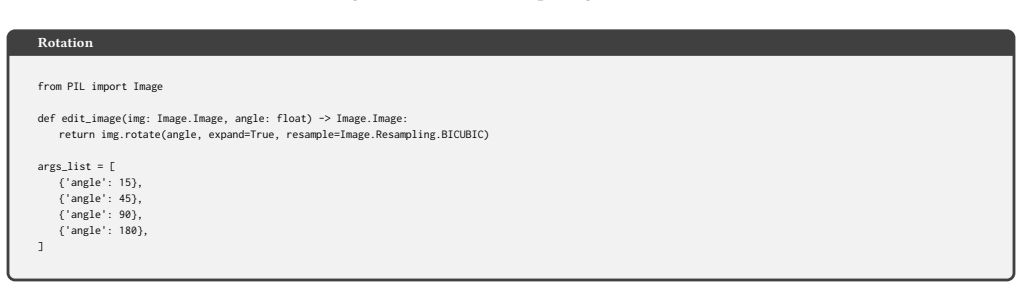

The`edit_image`function must accept a PIL Image object and specific parameters, returning a modified PIL Image object

-

[57]

The Python code must include necessary imports, the`edit_image`function, and a list of dictionaries named`args_list`

-

[58]

Ensure that the 4 sets of parameters in`args_list`produce 4 visually distinct editing results

-

[59]

No comments must be added to the`edit_image`function

-

[60]

photographers\

The examples below are for format reference only. The parameters must be designed according to the specific content of the user's image; do not copy them directly. # Examples ## Example 1 ```python {code_str1} ``` ## Example 2 ```python {code_str2} ``` Observe the given image, design the code with reference to the image content. Output your reasoning proc...

2026

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.