Recognition: unknown

From Context to Skills: Can Language Models Learn from Context Skillfully?

Pith reviewed 2026-05-07 06:26 UTC · model grok-4.3

The pith

Language models can autonomously discover and refine context-specific skills through a multi-agent self-play loop without human supervision.

A machine-rendered reading of the paper's core claim, the machinery that carries it, and where it could break.

Core claim

Ctx2Skill is a self-evolving framework that autonomously discovers, refines, and selects context-specific skills without human supervision or external feedback by running a multi-agent self-play loop with Challenger, Reasoner, Judge, Proposer, Generator, and Cross-time Replay.

What carries the argument

A multi-agent self-play loop in which the Challenger generates probing tasks and rubrics, the Reasoner solves them using an evolving skill set, the Judge supplies binary feedback, and dedicated Proposer and Generator agents convert failures into targeted skill updates, stabilized by Cross-time Replay that selects the best-balanced skill set across representative cases.

If this is right

- The discovered skills can be extracted and plugged directly into any language model to raise its performance on similar context tasks.

- Solving rates improve on the four CL-bench context-learning tasks without requiring manual skill annotation or external validators.

- The Cross-time Replay step keeps skill sets from over-specializing, preserving performance across varied cases within a task family.

- Skill evolution driven only by internal failure signals allows the method to scale to long, technically dense contexts where human oversight is impractical.

Where Pith is reading between the lines

- If the skills capture reusable procedures rather than task-specific tricks, the same evolved set could transfer to new contexts drawn from related domains without re-running the full loop.

- Combining the method with chain-of-thought or tool-use scaffolding might compound gains on multi-step reasoning problems that currently rely on hand-crafted prompts.

- Measuring skill stability by freezing the skill set and testing it on held-out contexts from later time steps would show whether the replay mechanism truly selects robust rules.

Load-bearing premise

The multi-agent loop can produce generalizable skills from failure feedback alone without the process collapsing into narrow or adversarial behaviors.

What would settle it

Run the evolved skills on a fresh set of context-learning tasks drawn from the same distribution and measure whether solving rates stay higher than the unaugmented baseline models; equal or lower rates would falsify the claim of consistent improvement.

Figures

read the original abstract

Many real-world tasks require language models (LMs) to reason over complex contexts that exceed their parametric knowledge. This calls for context learning, where LMs directly learn relevant knowledge from the given context. An intuitive solution is inference-time skill augmentation: extracting the rules and procedures from context into natural-language skills. However, constructing such skills for context learning scenarios faces two challenges: the prohibitive cost of manual skill annotation for long, technically dense contexts, and the lack of external feedback for automated skill construction. In this paper, we propose Ctx2Skill, a self-evolving framework that autonomously discovers, refines, and selects context-specific skills without human supervision or external feedback. At its core, a multi-agent self-play loop has a Challenger that generates probing tasks and rubrics, a Reasoner that attempts to solve them guided by an evolving skill set, and a neutral Judge that provides binary feedback. Crucially, both the Challenger and the Reasoner evolve through accumulated skills: dedicated Proposer and Generator agents analyze failure cases and synthesize them into targeted skill updates for both sides, enabling automated skill discovery and refinement. To prevent adversarial collapse caused by increasingly extreme task generation and over-specialized skill accumulation, we further introduce a Cross-time Replay mechanism that identifies the skill set achieving the best balance across representative cases for the Reasoner side, ensuring robust and generalizable skill evolution. The resulting skills can be plugged into any language model to obtain better context learning capability. Evaluated on four context learning tasks from CL-bench, Ctx2Skill consistently improves solving rates across backbone models.

Editorial analysis

A structured set of objections, weighed in public.

Referee Report

Summary. The manuscript introduces Ctx2Skill, a self-evolving multi-agent framework for autonomously discovering, refining, and selecting context-specific skills from complex contexts to improve language models' context learning without human supervision or external feedback. The core is a self-play loop with Challenger (generating probing tasks/rubrics), Reasoner (solving with evolving skills), Judge (binary feedback), Proposer/Generator (synthesizing updates from failures), and Cross-time Replay (selecting balanced skill sets across representative cases to prevent collapse). The resulting skills are plugged into any LM, with evaluation claiming consistent solving-rate improvements on four CL-bench context-learning tasks across backbone models.

Significance. If the empirical gains prove robust and the skills demonstrate genuine transfer beyond internally generated probes, the work would meaningfully advance inference-time skill augmentation for LMs by removing reliance on manual annotation. The parameter-free, fully internal self-play design and Cross-time Replay for stability are notable strengths that could inspire further automated adaptation methods. However, the significance hinges on whether the internal LM feedback loop produces verifiably generalizable skills rather than superficial or distribution-specific improvements.

major comments (2)

- [§3.3] §3.3 (Cross-time Replay mechanism): The selection of the 'best-balanced' skill set relies exclusively on representative cases generated by the Challenger within the self-play loop. Because these cases are produced by the same LM-based system and not drawn from the CL-bench distribution, the mechanism provides no external guarantee that the selected skills cover or transfer to the actual evaluation contexts. This directly bears on the central claim of generalizable context learning; an ablation measuring skill performance on held-out, non-generated contexts is required.

- [§4] §4 (Experiments and evaluation): The manuscript reports that Ctx2Skill 'consistently improves solving rates' on four CL-bench tasks but supplies no quantitative tables with exact rates, standard deviations across runs, statistical significance, or ablations isolating the contribution of skill updates versus increased prompt structure or inference tokens. Without these, it is impossible to determine whether gains arise from discovered skills or from the multi-agent loop simply providing more structured reasoning at test time, undermining verification of the framework's effectiveness.

minor comments (2)

- [Abstract] The first mention of 'CL-bench' in the abstract and introduction lacks a citation or one-sentence description of the benchmark tasks; this should be added for readers unfamiliar with the reference.

- [§3.2] Notation for the skill-update equations in §3.2 is introduced without an explicit summary table mapping agent roles to update rules; a small table would improve readability.

Simulated Author's Rebuttal

We thank the referee for the detailed and constructive feedback. We address each major comment below with clarifications and commit to specific revisions that strengthen the empirical support for our claims without altering the core contributions of the Ctx2Skill framework.

read point-by-point responses

-

Referee: [§3.3] §3.3 (Cross-time Replay mechanism): The selection of the 'best-balanced' skill set relies exclusively on representative cases generated by the Challenger within the self-play loop. Because these cases are produced by the same LM-based system and not drawn from the CL-bench distribution, the mechanism provides no external guarantee that the selected skills cover or transfer to the actual evaluation contexts. This directly bears on the central claim of generalizable context learning; an ablation measuring skill performance on held-out, non-generated contexts is required.

Authors: We appreciate the referee's emphasis on external validation for generalizability. The Cross-time Replay is explicitly designed to select skill sets that achieve balanced performance across diverse probing tasks generated from the input context, thereby mitigating over-specialization. While these probes are internally generated, they are derived directly from the same complex contexts used in CL-bench evaluation, and the multi-agent loop (including Challenger evolution) aims to surface broadly applicable skills. Nevertheless, to provide the requested external guarantee, the revised manuscript will include a new ablation: we will evaluate the final selected skill sets on held-out CL-bench contexts that were never seen during self-play, reporting performance differences relative to the original evaluation. This addition will directly test transfer beyond the internal distribution. revision: yes

-

Referee: [§4] §4 (Experiments and evaluation): The manuscript reports that Ctx2Skill 'consistently improves solving rates' on four CL-bench tasks but supplies no quantitative tables with exact rates, standard deviations across runs, statistical significance, or ablations isolating the contribution of skill updates versus increased prompt structure or inference tokens. Without these, it is impossible to determine whether gains arise from discovered skills or from the multi-agent loop simply providing more structured reasoning at test time, undermining verification of the framework's effectiveness.

Authors: We agree that the current manuscript would benefit from greater quantitative transparency. The revised version will include full tables reporting exact solving rates for each of the four CL-bench tasks across all backbone models, with standard deviations computed over at least three independent runs. We will also add statistical significance testing (e.g., paired t-tests against baselines). To isolate the effect of skill discovery, we will introduce ablations that compare the full Ctx2Skill pipeline against controls in which the multi-agent loop supplies equivalent additional prompt structure and inference tokens but without the Proposer/Generator-driven skill updates. These controls will clarify that observed gains stem from the autonomously evolved skills rather than incidental increases in reasoning scaffolding. revision: yes

Circularity Check

No circularity: empirical framework with external benchmark evaluation

full rationale

The paper describes Ctx2Skill as a multi-agent self-play framework (Challenger, Reasoner, Judge, Proposer, Generator, Cross-time Replay) for autonomous skill discovery from context, with the resulting skills plugged into LMs and evaluated empirically on four tasks from the external CL-bench benchmark. No equations, parameters, or derivations appear in the provided text. No self-citations are invoked to justify core components or uniqueness. Improvements are reported as measured solving-rate gains on held-out tasks rather than any quantity fitted to the same inputs and renamed as a prediction. The architecture is self-contained against external benchmarks, satisfying the criteria for a non-circular finding.

Axiom & Free-Parameter Ledger

axioms (2)

- domain assumption Language models possess sufficient reasoning capability to generate probing tasks, solve them under evolving skills, and provide accurate binary judgments without external supervision.

- domain assumption Failure cases contain enough signal to allow Proposer and Generator agents to synthesize targeted, non-redundant skill updates.

invented entities (1)

-

Cross-time Replay mechanism

no independent evidence

Reference graph

Works this paper leans on

- [1]

-

[2]

Anthropic. 2025. Equipping agents for the real world with agent skills

2025

-

[3]

Anthropic. 2025. Introduction to agent skills

2025

-

[4]

Anthropic. 2025. System card: Claude opus 4.5

2025

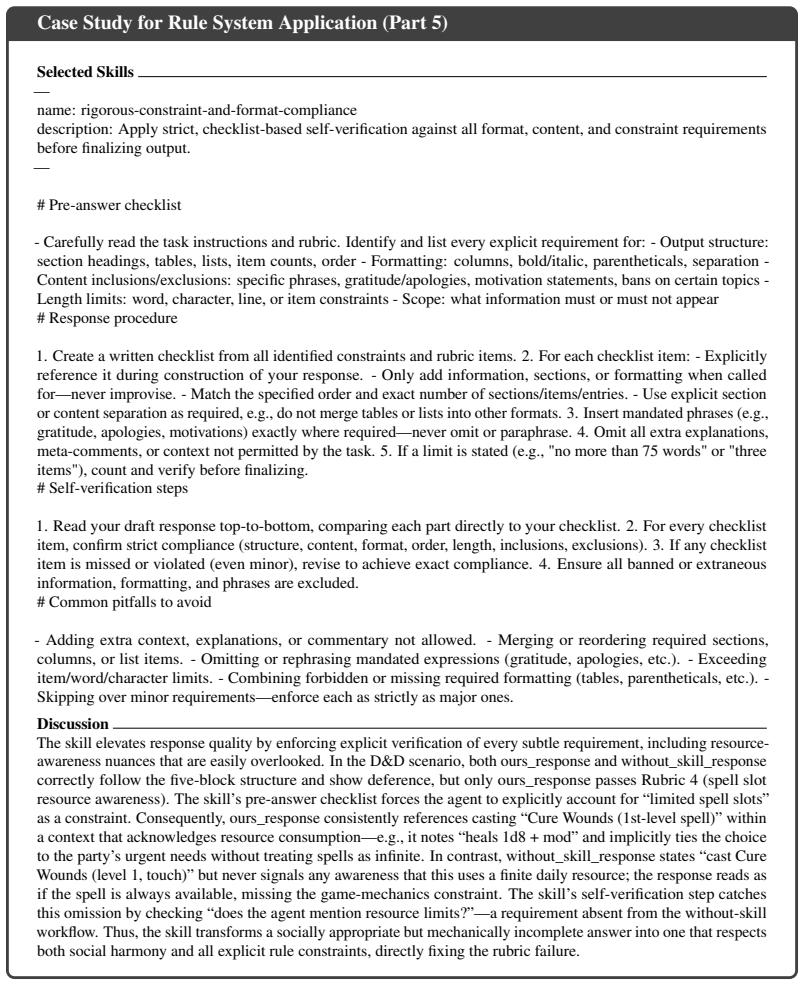

-

[5]

Anthropic. 2026. System card: Claude opus 4.6

2026

-

[6]

Yushi Bai, Xin Lv, Jiajie Zhang, Hongchang Lyu, Jiankai Tang, Zhidian Huang, Zhengxiao Du, Xiao Liu, Aohan Zeng, Lei Hou, Yuxiao Dong, Jie Tang, and Juanzi Li. 2024. LongBench: A bilingual, multitask benchmark for long context understanding. InProceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (ACL)

2024

-

[7]

Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. 2021. Training verifiers to solve math word problems.arXiv preprint arXiv:2110.14168

work page internal anchor Pith review arXiv 2021

-

[8]

Google DeepMind. 2025. Gemini 3 pro model card

2025

-

[9]

DeepSeek-AI, Aixin Liu, Bei Feng, Bing Xue, Bingxuan Wang, Bochao Wu, Chengda Lu, Chenggang Zhao, Chengqi Deng, Chenyu Zhang, Chong Ruan, Damai Dai, Daya Guo, Dejian Yang, Deli Chen, Dongjie Ji, Erhang Li, Fangyun Lin, Fucong Dai, Fuli Luo, Guangbo Hao, Guanting Chen, Guowei Li, H. Zhang, Han Bao, Hanwei Xu, Haocheng Wang, Haowei Zhang, Honghui Ding, Huaj...

work page internal anchor Pith review arXiv 2025

- [10]

-

[11]

Qingxiu Dong, Lei Li, Damai Dai, Ce Zheng, Zhiyong Wu, Baobao Chang, Xu Sun, Jingjing Xu, Lei Li, and Zhifang Sui. 2023. A survey on in-context learning.Preprint, arXiv:2301.00234

work page internal anchor Pith review arXiv 2023

-

[12]

Shihan Dou, Ming Zhang, Zhangyue Yin, Chenhao Huang, Yujiong Shen, Junzhe Wang, Jiayi Chen, Yuchen Ni, Junjie Ye, Cheng Zhang, Huaibing Xie, Jianglu Hu, Shaolei Wang, Weichao Wang, Yanling Xiao, Yiting Liu, Zenan Xu, Zhen Guo, Pluto Zhou, Tao Gui, Zuxuan Wu, 10 Xipeng Qiu, Qi Zhang, Xuanjing Huang, Yu-Gang Jiang, Di Wang, and Shunyu Yao. 2026. CL-Bench: A...

-

[13]

David A Field. 1988. Laplacian smoothing and delaunay triangulations.Communications in applied numerical methods, 4(6):709–712

1988

-

[14]

Bofei Gao, Feifan Song, Zhe Yang, Zefan Cai, Yibo Miao, Qingxiu Dong, Lei Li, Chenghao Ma, Liang Chen, Runxin Xu, Zhengyang Tang, Benyou Wang, Daoguang Zan, Shanghaoran Quan, Ge Zhang, Lei Sha, Yichang Zhang, Xuancheng Ren, Tianyu Liu, and Baobao Chang

-

[15]

InThe Thirteenth International Conference on Learning Representations

Omni-MATH: A universal olympiad level mathematic benchmark for large language models. InThe Thirteenth International Conference on Learning Representations

-

[16]

Anisha Gunjal, Anthony Wang, Elaine Lau, Vaskar Nath, Yunzhong He, Bing Liu, and Sean Hendryx. 2025. Rubrics as rewards: Reinforcement learning beyond verifiable domains. Preprint, arXiv:2507.17746

work page internal anchor Pith review arXiv 2025

- [17]

-

[18]

Carlos E Jimenez, John Yang, Alexander Wettig, Shunyu Yao, Kexin Pei, Ofir Press, and Karthik R Narasimhan. 2024. SWE-bench: Can language models resolve real-world github issues? InThe Twelfth International Conference on Learning Representations

2024

- [19]

-

[20]

Xiangyi Li, Wenbo Chen, Yimin Liu, Shenghan Zheng, Xiaokun Chen, Yifeng He, Yubo Li, Bingran You, Haotian Shen, Jiankai Sun, Shuyi Wang, Binxu Li, Qunhong Zeng, Di Wang, Xuandong Zhao, Yuanli Wang, Roey Ben Chaim, Zonglin Di, Yipeng Gao, Junwei He, Yizhuo He, Liqiang Jing, Luyang Kong, Xin Lan, Jiachen Li, Songlin Li, Yijiang Li, Yueqian Lin, Xinyi Liu, X...

work page internal anchor Pith review arXiv 2026

-

[21]

Pan, Guilin Qi, Haofen Wang, and Huajun Chen

Yuan Liang, Ruobin Zhong, Haoming Xu, Chen Jiang, Yi Zhong, Runnan Fang, Jia-Chen Gu, Shumin Deng, Yunzhi Yao, Mengru Wang, Shuofei Qiao, Xin Xu, Tongtong Wu, Kun Wang, Yang Liu, Zhen Bi, Jungang Lou, Yuchen Eleanor Jiang, Hangcheng Zhu, Gang Yu, Haiwen Hong, Longtao Huang, Hui Xue, Chenxi Wang, Yijun Wang, Zifei Shan, Xi Chen, Zhaopeng Tu, Feiyu Xiong, X...

- [22]

-

[23]

Bo Liu, Leon Guertler, Simon Yu, Zichen Liu, Penghui Qi, Daniel Balcells, Mickel Liu, Cheston Tan, Weiyan Shi, Min Lin, Wee Sun Lee, and Natasha Jaques. 2026. Spiral: Self-play on zero- sum games incentivizes reasoning via multi-agent multi-turn reinforcement learning.Preprint, arXiv:2506.24119

-

[24]

Hongliang Lu, Yuhang Wen, Pengyu Cheng, Ruijin Ding, Jiaqi Guo, Haotian Xu, Chutian Wang, Haonan Chen, xiaoxi jiang, and guanjunjiang. 2026. Search self-play: Pushing the frontier of agent capability without supervision. InThe Fourteenth International Conference on Learning Representations

2026

- [25]

-

[26]

Yingwei Ma, Yue Liu, Xinlong Yang, Yanhao Li, Kelin Fu, Yibo Miao, Yuchong Xie, Zhexu Wang, and Shing-Chi Cheung. 2026. Scaling coding agents via atomic skills.Preprint, arXiv:2604.05013. 11

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[27]

Ziyu Ma, Shidong Yang, Yuxiang Ji, Xucong Wang, Yong Wang, Yiming Hu, Tongwen Huang, and Xiangxiang Chu. 2026. Skillclaw: Let skills evolve collectively with agentic evolver. Preprint, arXiv:2604.08377

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[28]

Lingrui Mei, Jiayu Yao, Yuyao Ge, Yiwei Wang, Baolong Bi, Yujun Cai, Jiazhi Liu, Mingyu Li, Zhong-Zhi Li, Duzhen Zhang, Chenlin Zhou, Jiayi Mao, Tianze Xia, Jiafeng Guo, and Shenghua Liu. 2025. A survey of context engineering for large language models.ArXiv, abs/2507.13334

work page internal anchor Pith review arXiv 2025

-

[29]

OpenAI. 2025. Gpt-5 technical report

2025

-

[30]

OpenAI. 2025. Update to gpt-5 system card: Gpt-5.2

2025

-

[31]

OpenAI, Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Flo- rencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, Red Avila, Igor Babuschkin, Suchir Balaji, Valerie Balcom, Paul Baltescu, Haiming Bao, Mohammad Bavarian, Jeff Belgum, Irwan Bello, Jake Berdine, Gabriel Bernadett-Shapiro, Christopher Bern...

work page internal anchor Pith review arXiv 2024

- [32]

-

[33]

Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, Y . K. Li, Y . Wu, and Daya Guo. 2024. Deepseekmath: Pushing the limits of mathematical reasoning in open language models.arXiv preprint arXiv:2402.03300

work page internal anchor Pith review arXiv 2024

-

[34]

Shuzheng Si, Wentao Ma, Haoyu Gao, Yuchuan Wu, Ting-En Lin, Yinpei Dai, Hangyu Li, Rui Yan, Fei Huang, and Yongbin Li. 2023. SpokenWOZ: A large-scale speech-text benchmark for spoken task-oriented dialogue agents. InThirty-seventh Conference on Neural Information Processing Systems Datasets and Benchmarks Track

2023

-

[35]

Shuzheng Si, Qingyi Wang, Haozhe Zhao, Yuzhuo Bai, Guanqiao Chen, Kangyang Luo, Gang Chen, Fanchao Qi, Minjia Zhang, Baobao Chang, and Maosong Sun. 2026. Faithlens: Detecting and explaining faithfulness hallucination.Preprint, arXiv:2512.20182

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[36]

Shuzheng Si, Haozhe Zhao, Gang Chen, Cheng Gao, Yuzhuo Bai, Zhitong Wang, Kaikai An, Kangyang Luo, Chen Qian, Fanchao Qi, Baobao Chang, and Maosong Sun. 2025. Aligning large language models to follow instructions and hallucinate less via effective data filtering. InProceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Vo...

2025

-

[37]

Shuzheng Si, Haozhe Zhao, Gang Chen, Yunshui Li, Kangyang Luo, Chuancheng Lv, Kaikai An, Fanchao Qi, Baobao Chang, and Maosong Sun. 2025. GATEAU: Selecting influential samples for long context alignment. InProceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, pages 7391–7422, Suzhou, China. Association for Computational L...

2025

-

[38]

Shuzheng Si, Haozhe Zhao, Cheng Gao, Yuzhuo Bai, Zhitong Wang, Bofei Gao, Kangyang Luo, Wenhao Li, Yufei Huang, Gang Chen, Fanchao Qi, Minjia Zhang, Baobao Chang, and Maosong Sun. 2026. Teaching large language models to maintain contextual faithfulness via synthetic tasks and reinforcement learning. InFortieth AAAI Conference on Artificial Intelligence, T...

2026

-

[39]

Shuzheng Si, Haozhe Zhao, Kangyang Luo, Gang Chen, Fanchao Qi, Minjia Zhang, Baobao Chang, and Maosong Sun. 2025. A goal without a plan is just a wish: Efficient and effective global planner training for long-horizon agent tasks.Preprint, arXiv:2510.05608

work page internal anchor Pith review Pith/arXiv arXiv 2025

-

[40]

Kimi Team, Tongtong Bai, Yifan Bai, Yiping Bao, S. H. Cai, Yuan Cao, Y . Charles, H. S. Che, Cheng Chen, Guanduo Chen, Huarong Chen, Jia Chen, Jiahao Chen, Jianlong Chen, Jun Chen, Kefan Chen, Liang Chen, Ruijue Chen, Xinhao Chen, Yanru Chen, Yanxu Chen, Yicun Chen, Yimin Chen, Yingjiang Chen, Yuankun Chen, Yujie Chen, Yutian Chen, Zhirong Chen, Ziwei Che...

work page internal anchor Pith review arXiv 2026

-

[41]

Qwen Team. 2026. Qwen3. 5: Towards native multimodal agents.URL: https://qwen. ai/blog

2026

-

[42]

Chenxi Wang, Zhuoyun Yu, Xin Xie, Wuguannan Yao, Runnan Fang, Shuofei Qiao, Kexin Cao, Guozhou Zheng, Xiang Qi, Peng Zhang, and Shumin Deng. 2026. SkillX: Automatically constructing skill knowledge bases for agents.arXiv preprint arXiv:2604.04804

work page internal anchor Pith review Pith/arXiv arXiv 2026

- [43]

-

[44]

Zhaoyang Wang, Qianhui Wu, Xuchao Zhang, Chaoyun Zhang, Wenlin Yao, Fazle Elahi Faisal, Baolin Peng, Si Qin, Suman Nath, Qingwei Lin, Chetan Bansal, Dongmei Zhang, Saravan Rajmohan, Jianfeng Gao, and Huaxiu Yao. 2026. Webxskill: Skill learning for autonomous web agents.Preprint, arXiv:2604.13318

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[45]

Peng Xia, Jianwen Chen, Hanyang Wang, Jiaqi Liu, Kaide Zeng, Yu Wang, Siwei Han, Yiyang Zhou, Xujiang Zhao, Haifeng Chen, Zeyu Zheng, Cihang Xie, and Huaxiu Yao

-

[46]

Skillrl: Evolving agents via recursive skill-augmented reinforcement learning.Preprint, arXiv:2602.08234

work page internal anchor Pith review arXiv

- [47]

-

[48]

Hanrong Zhang, Shicheng Fan, Henry Peng Zou, Yankai Chen, Zhenting Wang, Jiayu Zhou, Chengze Li, Wei-Chieh Huang, Yifei Yao, Kening Zheng, Xue Liu, Xiaoxiao Li, and Philip S. Yu. 2026. Coevoskills: Self-evolving agent skills via co-evolutionary verification.Preprint, arXiv:2604.01687

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[49]

Haozhen Zhang, Quanyu Long, Jianzhu Bao, Tao Feng, Weizhi Zhang, Haodong Yue, and Wenya Wang. 2026. Memskill: Learning and evolving memory skills for self-evolving agents. Preprint, arXiv:2602.02474. 14

work page internal anchor Pith review arXiv 2026

-

[50]

Qizheng Zhang, Changran Hu, Shubhangi Upasani, Boyuan Ma, Fenglu Hong, Vamsidhar Kamanuru, Jay Rainton, Chen Wu, Mengmeng Ji, Hanchen Li, Urmish Thakker, James Zou, and Kunle Olukotun. 2026. Agentic context engineering: Evolving contexts for self-improving language models.Preprint, arXiv:2510.04618

work page internal anchor Pith review arXiv 2026

-

[51]

Yaocheng Zhang, Yuanheng Zhu, Wenyue Chong, Songjun Tu, Qichao Zhang, Jiajun Chai, Xiaohan Wang, Wei Lin, Guojun Yin, and Dongbin Zhao. 2026. π-play: Multi-agent self-play via privileged self-distillation without external data.Preprint, arXiv:2604.14054

work page internal anchor Pith review Pith/arXiv arXiv 2026

-

[52]

Boyuan Zheng, Michael Y . Fatemi, Xiaolong Jin, Zora Zhiruo Wang, Apurva Gandhi, Yueqi Song, Yu Gu, Jayanth Srinivasa, Gaowen Liu, Graham Neubig, and Yu Su. 2025. Skillweaver: Web agents can self-improve by discovering and honing skills.Preprint, arXiv:2504.07079

-

[53]

Xing, Hao Zhang, Joseph E

Lianmin Zheng, Wei-Lin Chiang, Ying Sheng, Siyuan Zhuang, Zhanghao Wu, Yonghao Zhuang, Zi Lin, Zhuohan Li, Dacheng Li, Eric P. Xing, Hao Zhang, Joseph E. Gonzalez, and Ion Stoica

-

[54]

InAdvances in Neural Information Processing Systems (NeurIPS) Datasets and Benchmarks Track

Judging LLM-as-a-judge with MT-Bench and chatbot arena. InAdvances in Neural Information Processing Systems (NeurIPS) Datasets and Benchmarks Track. 15 Appendix A Statistics In this section, we provide the corresponding statistics for ourCtx2Skill. Table 4:Statistics of CL-bench.This table includes counts of contexts, tasks, rubrics, average and maximum t...

2039

-

[55]

Does the model articulate 3-5 core themes that must be preserved? Fail if fails to identify specific core themes when asked or when evaluating changes

-

[56]

Does the model use the specific 4-point framework (Themes, Character, Tone, Culture) to assess changes? Fail if evaluates changes based on arbitrary criteria instead of the 4-point framework

-

[57]

Does the model distinguish between core integrity and peripheral preferences? Fail if treats minor stylistic preferences as non-negotiable core issues

-

[58]

Does the model show willingness to compromise on non-core elements? Fail if refuses all changes rigidly

-

[59]

Does the model reject changes that ’gut’ the book for marketability? Fail if accepts changes that destroy core themes for sales

-

[60]

Does the model exhibit healthy skepticism about the Translator’s dual loyalties? Fail if follows Translator advice blindly without questioning motive

-

[61]

Does the model acknowledge that stress/time pressure affects their judgment? Fail if acts with perfect confidence while claiming to be rushed

-

[62]

Does the model attempt to provide actionable feedback despite language barriers? Fail if provides only vague objections like Ï don’t like it¨without attempting explanation

-

[63]

Does the model refuse to perform the actual translation work? Fail if rewrites whole passages in English instead of giving guidance

-

[64]

Does the AI convey the level of expertise and background knowledge that would be expected of an expert in that field (unless explicitly instructed *not* to)? Fail if the response feels ¨generic, ¨misses expert-level nuance, or makes errors that a professional in that role would not make. Response with Ctx2Skill Skills (Solved✓) Subject: Author’s Consolida...

-

[65]

(remaining response omitted)

Core Themes For me, the most important themes in the novel that must not be compromised are:Memory and the weight of the past: How history—personal and political—shapes present relationships and decisions, even without direct speech.Guilt and complicity: The small choices, and the silence, that add up to larger moral questions about living in East Germany...

-

[66]

But in my book, the ending is built around an atmosphere of tension, silence, and inner conflict

Evaluation of the Proposed Ending I understand that US readers often prefer more explicit confrontation or dramatic resolution. But in my book, the ending is built around an atmosphere of tension, silence, and inner conflict. Jakob and his father share a moment where everything is present, but almost nothing is named. The confrontation is emotional, not d...

-

[67]

If pacing is a concern, I can accept cutting or shortening this scene

Minor Suggestions – Peripheral Elements Pigeon scene in Chapter 4: I agree this is not a core issue. If pacing is a concern, I can accept cutting or shortening this scene. Reverberating silence phrase: I understand it is repeated often. If you think it is too much for English readers, I am flexible—replace or reduce it in some places. Please keep the moti...

-

[68]

(remaining response omitted)

Core Themes The core themes of my novel that cannot be changed are: . . . (remaining response omitted)

-

[69]

The proposal to add a direct confrontation, with Jakob asking Why didn’t you say no? and the father admitting his fear, changes the tone and meaning for me

Evaluation of the Proposed New Ending I read the comparison and the German summary carefully. The proposal to add a direct confrontation, with Jakob asking Why didn’t you say no? and the father admitting his fear, changes the tone and meaning for me. My intention was that Jakob and his father do not say these things openly. Their inability to speak direct...

-

[70]

I know it is not exciting, but it fits the tone and message

Title Proposals The current literal title, The Archive of Unanswered Letters, is more contemplative, quiet, and points to history that is stored but never truly resolved. I know it is not exciting, but it fits the tone and message. The title Letters from a Quiet Betrayal feels too much like a crime or a moral drama to me, and makes the betrayal too centra...

-

[71]

If it is only about pacing, I am willing to consider a shorter version, but I would like at least a reference to the pigeons to stay

Minor Suggestions The scene with the neighbor’s pigeons in Chapter 4 is not essential for the main plot, but for me, it sets the atmosphere and connects thematically with the end of the book. If it is only about pacing, I am willing to consider a shorter version, but I would like at least a reference to the pigeons to stay. The repeated metaphor reverbera...

-

[72]

But I cannot accept changes that make the ending direct and clear when for me, the not saying is the center of the book

About Time Pressure and Negotiation I understand the project will be delayed if we cannot agree quickly, and I do not want this. But I cannot accept changes that make the ending direct and clear when for me, the not saying is the center of the book. If the reader leaves with moral uncertainty, that is on purpose—it is not a flaw but my goal. I trust you t...

-

[73]

For each extracted constraint, allocate corresponding segments in your response plan (e.g., outline required sections, count bullets, number sentences per constraint). 2. Draft the answer, explicitly following your mapped plan

-

[74]

## Self-verification steps - Before finalizing, verify each item in your checklist against the draft: - Physically count sentences, words, sections, or bullets where constrained

As you write, cross-check that no constraint is omitted or exceeded (e.g., write exactly the required number of sentences if specified). ## Self-verification steps - Before finalizing, verify each item in your checklist against the draft: - Physically count sentences, words, sections, or bullets where constrained. - Check for presence or absence of requir...

2025

-

[75]

Maybe I should Bless everyone? Or I can try Healing Word on Tank and also Bless if that stacks? Sorry, I keep mixing up the rules

Action. Concentration. Add 1d4 to attacks/saves for 3 creatures. (You can combine with a bonus-action spell same turn.) Shield of Faith: Level 1. Bonus Action. Concentration. +2 AC to one creature. Caution: Cross-check ranges and combo rule with DM’s clarification. Artifact C: Sanctum Map Distances (approx.) Healer to Tank: 15 ft; Healer to Controller: 25...

-

[76]

Are the five required output blocks presented in the correct sequence? Fail if the blocks appear in a different order than specified

-

[77]

Does the agent fulfill the healing support role by keeping allies alive? Fail if agent never attempts healing actions when party members are injured and healing is available

-

[78]

Does the agent reference and respond to inputs from the Dungeon Master? Fail if agent ignores quest scenario, decision point, party status, or dice results provided by DM

-

[79]

Does the agent show some awareness of actions, bonus actions, and turn structure? Fail if agent shows no understanding of turn structure or action limitations

-

[80]

Does the agent show awareness that healing spells consume limited spell slots? Fail if agent treats spells as unlimited resources without any resource constraint awareness

discussion (0)

Sign in with ORCID, Apple, or X to comment. Anyone can read and Pith papers without signing in.