Manipulation, Insider Information, and Regulation in Leveraged Event-Linked Markets

In event-linked markets the two-axis taxonomy shows linear scaling for price manipulation, threshold shifts for outcome manipulation, and a

abstract


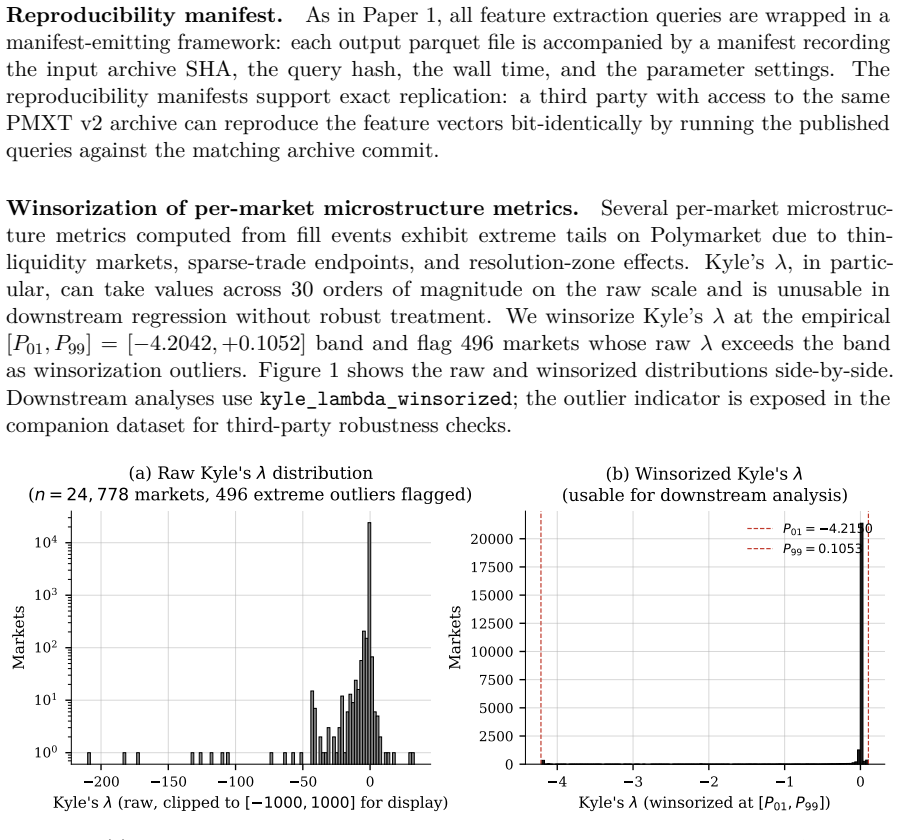

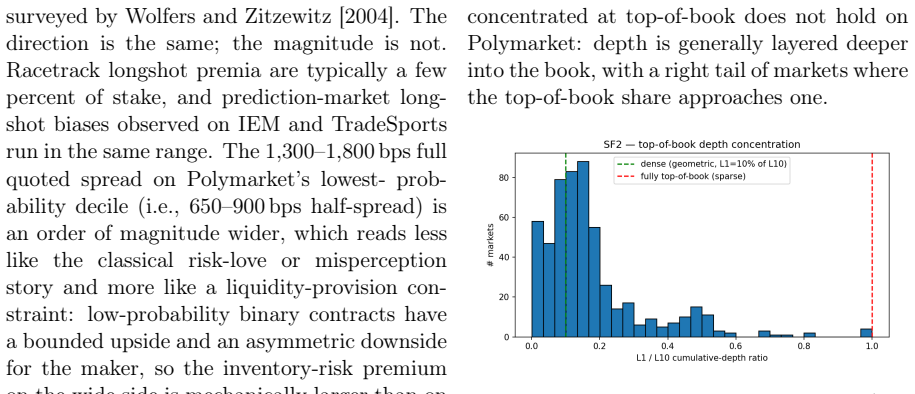

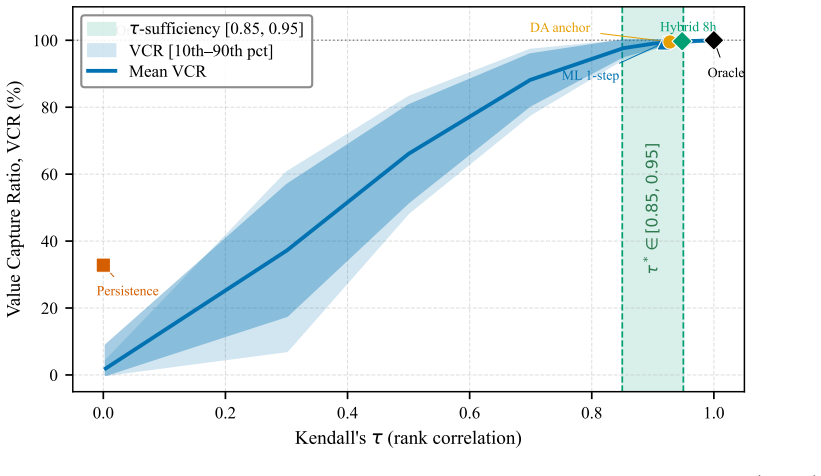

The introduction of leverage on prediction-market event contracts raises three structurally distinct questions that have not been addressed jointly: how leverage changes manipulation incentives, how it interacts with informed-trading rents, and how regulatory frameworks should respond. This paper develops a theoretical framework for the first two and a synthesis of the existing regulatory landscape for the third. The principal analytical move is a two-axis manipulation taxonomy distinguishing market-price manipulation from real-world outcome manipulation, where the manipulator affects the underlying event itself. Continuous-underlying derivative markets generally do not make outcome manipulation a venue-level payoff channel; event-linked markets do. Within this taxonomy, leverage plays asymmetric roles: it scales market-price manipulation linearly but shifts the cost-benefit threshold for outcome manipulation, and it scales informed-trading rents in three ways (direct multiplication, Sharpe-ratio preservation, detection-cost amortization). Section 7 connects Paper 1's pre-emption and halt-protocol findings (CC-007b, CC-008) to three manipulation channels: pre-emption introduced by the dynamic-margin engine, halt-arbitrage introduced by the resolution-zone halt protocol, and strategic bad-debt-shifting that no engine in Paper 1's framework family addresses. The framework's manipulation-resistance contribution is a re-allocation of attack surface, not a net reduction. The regulatory synthesis covers principal jurisdictions (US, EU, UK, Singapore, offshore) and identifies three regulatory-arbitrage pathways. The paper concludes with 14 recommendations for venue operators, regulatory bodies, and the research community, separated into framework-independent and framework-conditional categories.
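The asymmetry between the two manipulation axes can be illustrated with a toy calculation. The sketch below is not the paper's model: the `Position` class, the function names, and the payoff assumptions (a binary YES contract paying 1 at resolution, no price impact, no funding costs, no liquidation before resolution) are all hypothetical simplifications chosen for illustration. Under those assumptions, the gain from a given price move grows in proportion to leverage, while for outcome manipulation leverage moves the break-even real-world cost, which can flip a yes/no decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    """Hypothetical leveraged long position in a binary YES contract."""
    capital: float   # margin posted by the trader
    leverage: float  # notional / capital (1.0 means unleveraged)
    price: float     # entry price of a contract paying 1 if the event occurs

    @property
    def contracts(self) -> float:
        # Notional exposure divided by the per-contract entry price.
        return self.capital * self.leverage / self.price


def price_manipulation_gain(pos: Position, price_move: float) -> float:
    """Mark-to-market gain if the manipulator pushes the price by price_move.

    Linear in leverage because the contract count is linear in leverage.
    """
    return pos.contracts * price_move


def outcome_manipulation_threshold(pos: Position) -> float:
    """Largest real-world cost at which forcing a YES outcome breaks even.

    Assumes the position survives to resolution and every contract pays 1.
    """
    return pos.contracts * (1.0 - pos.price)


def outcome_manipulation_pays(pos: Position, real_world_cost: float) -> bool:
    """Discrete decision: manipulate only if the payoff exceeds the cost."""
    return outcome_manipulation_threshold(pos) > real_world_cost


if __name__ == "__main__":
    unlevered = Position(capital=1_000.0, leverage=1.0, price=0.5)
    levered = Position(capital=1_000.0, leverage=5.0, price=0.5)
    for pos in (unlevered, levered):
        print(
            pos.leverage,
            price_manipulation_gain(pos, 0.05),
            outcome_manipulation_threshold(pos),
            outcome_manipulation_pays(pos, 3_000.0),
        )
```

In this toy setting an outcome that costs 3,000 to force is unprofitable for the unleveraged trader but profitable at 5x leverage with the same capital, which is one way to read the claim that leverage shifts the cost-benefit threshold rather than merely scaling a payoff.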
