Right-hand side regularity fixes ODE solving difficulty

Each initial value problem is assigned to one precise stratum, ranging from polynomial-time solutions to transfinite computation.

Symbolic Computation

Roughly includes material in ACM Subject Class I.1.

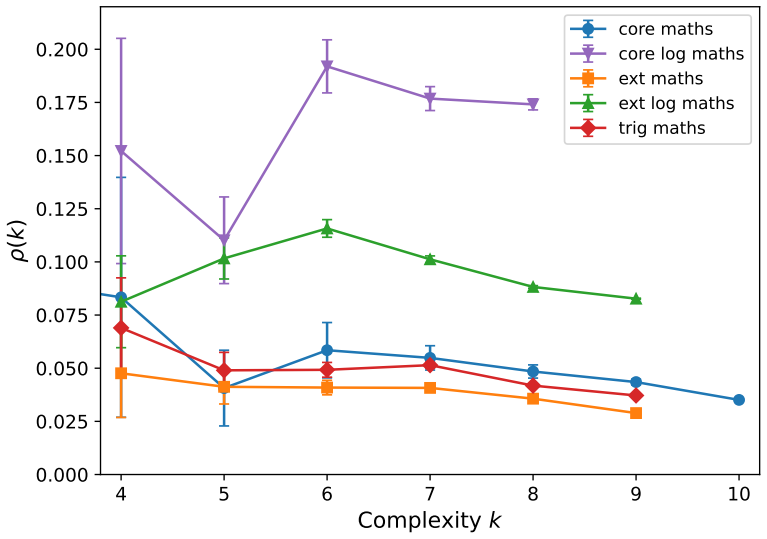

Exhaustive search up to given complexity shows integrability depends strongly on operator basis and uncovers integrals missed by major CAS

On Minimum CADs for Algebraic Sets in Dimension Three

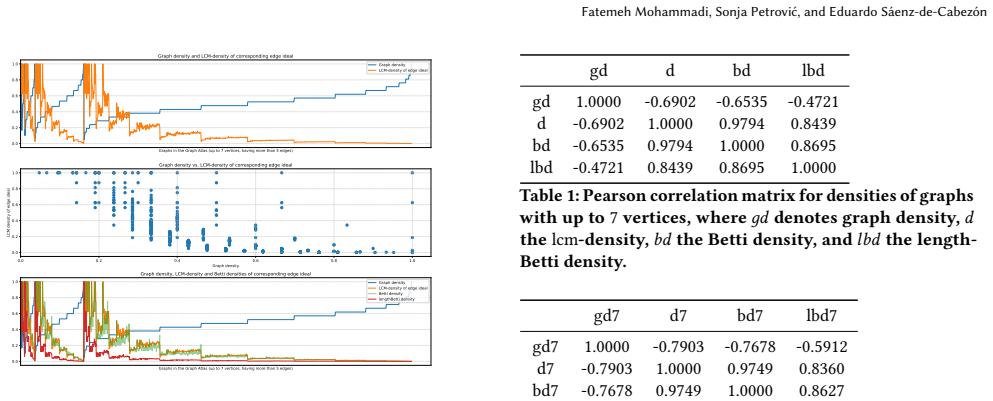

The class includes every algebraic set and gives the first existence result for a non-trivial collection beyond two dimensions

Asymptotic properties of random monomial ideals

Data separate low-density Taylor regimes from high-density redundant ones by narrow transition windows whose location depends on generator

A Generalisation of Goursat's Algorithm for Integration in Finite Terms

Two eigencomponents reduce to rational curves and integrate elementarily; the third stays on y³ = x(x-K) and is generically transcendental.

Pseudo-Complex Quantifier Elimination

Formulas over complexes with i, Re, Im and conjugates are first mapped to real problems, solved there, and then rewritten back into the rich

Arboretum.hs: Symbolic manipulation for algebras of graphs

Arboretum.hs follows mathematical definitions directly to enable flexible extensions and compile-time safety for algebraic combinatorics.

Equivalence Checking of Quantum Circuits via Path-Sum and Weighted Model Counting

Path-sum reductions plus weighted model counting decide equivalence up to global phase without full simulation.

Enhanced CAD-Based Quantifier Elimination With Multiple Equational Constraints

The method identifies regions with finite or infinite unknowns and drops more polynomials from the second projection than prior theory.

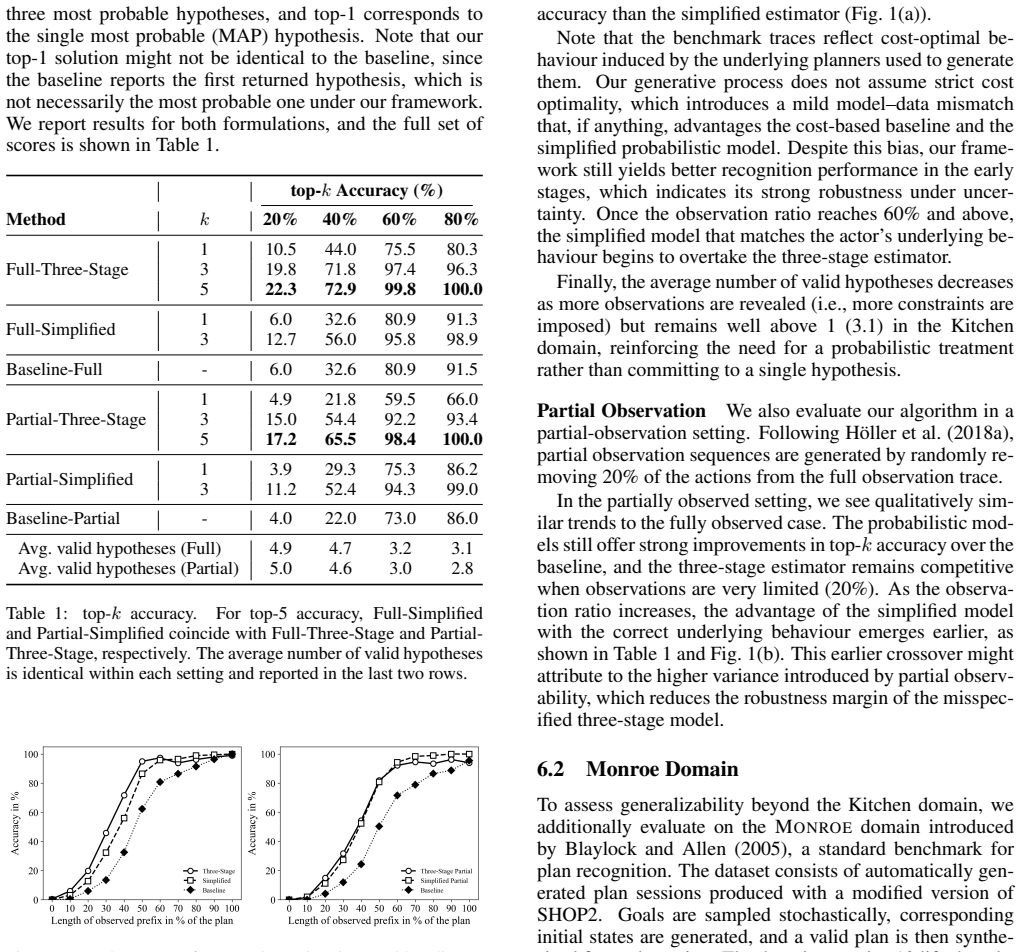

A Probabilistic Framework for Hierarchical Goal Recognition

Three-stage generative model with HTN planner yields posteriors over goals and outperforms prior HTN recognizers on benchmarks.

SignatureTensors.jl: A Package for Signature Tensors in Julia

SignatureTensors.jl supports exact calculations with OSCAR and numerical approximations for paths.